British banks and finance firms are quietly reshaping their hiring plans for 2026: the next wave of tech recruitment is not just for data scientists and MLOps engineers, but for behavioural scientists, psychologists, ethicists and legal specialists tasked with steering AI use inside organisations. The shift — signalled in recent industry surveys and accelerated by a high‑profile operational failure traced to an AI “hallucination” — marks a crucial moment for how financial services balance productivity gains from generative AI with model risk, human behaviour, and reputational exposure.

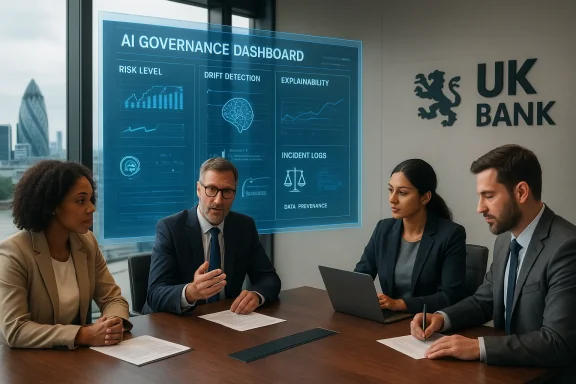

Background

The financial sector’s appetite for AI has moved beyond proof‑of‑concept pilots. After a difficult year for hiring across the industry, many firms are preparing a targeted recruitment push for 2026 that places ethical AI, human factors, and governance roles at the centre of technology hiring. Survey results circulated late in January show a majority of firms planning to increase headcount, with technology roles dominating the hiring plans and boardroom hiring prioritising AI capability. At the same time, regulators and inspectorates are sharpening scrutiny of the real‑world harms that can arise when AI output is accepted uncritically, a dynamic amplified after a policing intelligence report that relied on a fabricated fixture generated by an AI assistant helped justify a high‑impact decision.

Taken together, these developments are forcing banks and financial firms to treat AI not only as an engineering problem, but as a socio‑technical one that requires expertise from behavioural science, psychology, ethics and governance.

Why banks are hiring ethical AI experts now

From model performance to human behaviour

Banks began with quant and data science teams focused on model accuracy, latency and capacity. That technical stack remains essential, but the operational failure modes now in focus are human‑centred: misuse of assistants, complacency when staff over‑trust model output, prompt drift, and the organisational incentives that push workers to rely on generative tools without adequate checks.

- Banks face cost and efficiency pressures that encourage rapid AI adoption.

- Productivity gains can create perverse incentives: employees are rewarded for output rather than the reliability of decisions.

- Human supervision is fallible; oversight must be designed rather than assumed.

Reputational and regulatory pressure

Financial institutions operate in a high‑trust environment. One falsely generated claim or misapplied model can cascade into regulatory investigations, customer harm, and boardroom upheaval. Regulated markets are already seeing guidance and supervisory attention focused on AI governance, model risk, and the need for auditable decision trails.

- Boards are prioritising AI capability as a strategic imperative.

- Supervisors expect robust model risk frameworks and demonstrable controls for AI systems that materially affect customers or markets.

- Firms recognise that ethical AI hires can help translate regulatory expectations into operational policy and employee behaviour.

Competitive advantage through trust

Firms that build trustworthy AI practices gain customer and partner confidence. Ethical AI hires can lead the work of embedding fairness, explainability, and human oversight into product lifecycles, converting compliance into a market differentiator.

The roles banks are recruiting: what they look like

Banks are creating cross‑disciplinary roles that blend technical, social, and regulatory expertise. The following are the most visible job families emerging in 2026 hiring plans.

1. Behavioural scientist / human factors specialist

- Focus: How frontline staff and customers interact with AI; cognitive load; decision architecture.

- Tasks: design prompts and user interfaces that minimise bias and over‑reliance; run controlled experiments; craft training programmes that change behaviour.

- Value: reduces human error that converts model mistakes into operational incidents.

2. AI ethics lead / chief AI officer (non‑technical)

- Focus: policy, governance, stakeholder engagement.

- Tasks: draft AI use policies, chair cross‑functional governance boards, coordinate with legal and compliance.

- Value: translates ethical principles into implementable controls and middle‑management KPIs.

3. AI assurance and model risk manager

- Focus: validation, independent testing, performance monitoring.

- Tasks: perform red‑teaming, create model registries, implement drift detection and explainability checks.

- Value: ensures models meet regulatory, audit, and internal risk thresholds.

4. AI legal & regulatory specialist

- Focus: interpretation of evolving laws, contractual risk for third‑party models, incident reporting.

- Tasks: review supplier contracts for data provenance and IP, support incident response, map obligations to operations.

- Value: mitigates legal exposure when generative models are used.

5. Trust & safety / content governance analyst

- Focus: output safety for customer‑facing assistants and automated messaging.

- Tasks: content filtering rules, escalation pathways, false‑positive detection, incident logging.

- Value: prevents brand damage from inappropriate or misleading outputs.

6. Data governance & privacy specialist (AI‑focused)

- Focus: dataset provenance, bias mitigation, privacy preservation.

- Tasks: enforce anonymisation standards, ensure training data quality, maintain lineage and intellectual property controls.

- Value: protects customers and reduces model bias and regulatory risk.

The Maccabi Copilot incident: a warning shot

A recent operational review into a decision to ban away supporters at a football fixture uncovered a telling failure: an intelligence briefing cited a non‑existent past match, which investigators traced to an AI assistant’s fabricated claim. The error was sufficient to influence a safety classification and ultimately contributed to severe political and reputational consequences for the police force involved.

Key learnings for financial services:

- Generative AI hallucinations are operational risks. False statements can appear plausible and be mistaken for evidence unless verification procedures exist.

- Denial is an ineffective defence. Early denials about AI use gave way to admissions and inquiries — transparency and traceability are essential.

- Human oversight is necessary but insufficient. Asking staff to “just check” AI output without tooling and process changes is optimistic; human reviewers will miss errors when under workload pressure or when the AI output fits preconceptions.

- Failure to map AI usage flows causes blind spots. It’s not enough to know “we use Copilot”; organisations must inventory where and how LLMs influence decisions and documentation.

Operational responses banks should adopt now

Banks can adopt an integrated approach that blends technical, behavioural and governance controls. The most effective programmes combine five pillars:

- AI inventory and risk classification: catalogue every AI system, internal or third‑party, and classify by potential impact (customer outcomes, financial risk, market stability).

- Model risk and validation lifecycle: apply robust validation before deployment, including testing, stress scenarios, explainability checks and red‑team adversarial testing.

- Human‑in‑the‑loop design and escalation: define precise human oversight points, mandatory verification steps for high‑risk outputs, and clear escalation routes.

- Behavioural design and training: use behavioural interventions to prevent automation bias (e.g., checklists, uncertainty prompts, required attestations).

- Continuous monitoring and incident response: deploy drift detection, output logging, and a documented incident playbook for hallucinations and model failures.
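
The first pillar lends itself to a small data structure. The sketch below is illustrative only: the `AISystem` fields, the three `RiskTier` levels and the thresholds in `classify` are assumptions made for this example, not a regulatory standard or any firm's actual scheme. The system names in the sample inventory are hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class AISystem:
    name: str
    owner: str
    third_party: bool
    # The three impact dimensions named in the pillar above.
    affects_customer_outcomes: bool
    affects_financial_risk: bool
    affects_market_stability: bool


def classify(system: AISystem) -> RiskTier:
    """Assign a tier from the impact flags (thresholds are assumptions)."""
    impacts = sum([
        system.affects_customer_outcomes,
        system.affects_financial_risk,
        system.affects_market_stability,
    ])
    if system.affects_market_stability or impacts >= 2:
        return RiskTier.HIGH
    if impacts == 1:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def risk_map(inventory: list[AISystem]) -> dict[RiskTier, list[str]]:
    """Group system names by tier, highest risk first."""
    grouped: dict[RiskTier, list[str]] = {
        tier: [] for tier in sorted(RiskTier, reverse=True)
    }
    for system in inventory:
        grouped[classify(system)].append(system.name)
    return grouped


# Hypothetical inventory entries, for illustration only.
inventory = [
    AISystem("meeting-notes-assistant", "operations", True, False, False, False),
    AISystem("complaints-triage-llm", "customer-service", True, True, False, False),
    AISystem("credit-decision-model", "retail-lending", False, True, True, False),
]

for tier, names in risk_map(inventory).items():
    print(tier.name, names)
```

Keeping the classification rule in one function means the risk map can be regenerated whenever the inventory changes, and the rule itself can be reviewed and versioned like any other control.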

Talent and recruitment: building the right team

Recruiting for these roles is not as simple as copying a job description. Financial firms are competing in a tight labour market where talent with combined social science and technology experience is scarce.

Practical hiring steps:

- Define outcomes, not titles: frame roles by the measurable changes expected (e.g., reduce misinformation incidents by X%, implement model registry within 6 months).

- Use interdisciplinary assessments: evaluate candidates with scenario exercises that blend data tasks and stakeholder negotiation, not only technical tests.

- Partner with academia and professional bodies: create pipelines with psychology, behavioural economics, and ethics researchers who have industry experience.

- Invest in internal rotation and upskilling: retrain compliance lawyers and product owners with modular courses in AI risk and behavioural design.

- Compensate for scarcity with external advisors: use trusted consultants for rapid capability buildup while hiring permanent staff.

Governance: what boards and C‑suite must demand

Boards and senior executives must move beyond high‑level pledges and require a set of concrete deliverables:

- An AI inventory and risk map that is refreshed quarterly.

- A documented validation standard for any AI system that affects customers, credit decisions, or market positions.

- A policy defining acceptable use, including mandatory human verification levels tied to risk tiers.

- Regular reporting of near misses, hallucination incidents, and model drift metrics.

- Evidence of staff training and behavioural interventions, measured for adoption and effectiveness.
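
A policy that ties mandatory human verification to risk tiers can be expressed as a simple release gate. This is a minimal sketch under stated assumptions: the tier names, the `REQUIRED_VERIFICATIONS` table and the `AIOutput` structure are hypothetical and would need to reflect a firm's own policy.

```python
from dataclasses import dataclass

# Hypothetical policy table: risk tier -> number of distinct human
# reviewers who must verify an output before it can be used.
REQUIRED_VERIFICATIONS = {"low": 0, "medium": 1, "high": 2}


@dataclass(frozen=True)
class AIOutput:
    text: str
    risk_tier: str
    verified_by: tuple[str, ...] = ()


def can_release(output: AIOutput) -> bool:
    """Return True when enough distinct reviewers have signed off."""
    required = REQUIRED_VERIFICATIONS[output.risk_tier]
    # Count distinct reviewers so one person cannot sign off twice.
    return len(set(output.verified_by)) >= required


def release_or_escalate(output: AIOutput) -> str:
    """Route an output: release it, or escalate for further review."""
    return "release" if can_release(output) else "escalate"


draft = AIOutput("Summary of prior incidents ...", "high", ("analyst_a",))
print(release_or_escalate(draft))
```

Encoding the gate in software rather than in guidance alone makes the verification step hard to skip under workload pressure, which is the failure mode the article describes.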

Trade‑offs and risks to watch

Workplace practices that emphasise monitoring, measurement and behavioural control introduce their own risks.

- Surveillance creep and morale: tracking “how much AI an employee uses” can create a culture of distrust and reward gaming. Management must balance oversight with autonomy and clear purpose.

- Over‑engineering compliance: rigid controls can chill beneficial experimentation and slow time to market if not risk‑calibrated.

- Talent signalling: hiring lots of “ethics” roles without real decision power risks creating window dressing and reputational damage.

- Third‑party concentration: relying on a small set of large model providers concentrates supply‑chain risk and creates single points of failure.

- Regulatory fragmentation: global banks must navigate divergent regional rules (EU AI Act, UK guidance, other national rules); inconsistent compliance can be operationally burdensome.

Practical checklist for banks deploying generative AI

- Create an AI inventory and classify systems by impact and risk.

- Insist on provenance metadata for every output that influences decisions (who/what produced it, model version, prompt).

- Require an “explainability brief” for high‑impact models summarising limitations and known failure modes.

- Implement templates and checklists for human review to reduce cognitive bias.

- Log and retain AI outputs under a defined retention policy to support investigations.

- Run regular red‑team exercises and scenario drills simulating hallucination incidents.

- Ensure contracts with model providers guarantee data lineage, security controls and incident support.

- Build cross‑functional incident response playbooks with legal, communications and regulatory liaisons.

- Train frontline staff in critical consumption of AI outputs — how to test, validate, and escalate.

- Measure adoption and control effectiveness with quantifiable metrics, not just completion certificates.
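
The provenance item in the checklist (who or what produced an output, model version, prompt) can be captured as a small record stored alongside each output. The sketch below is one possible shape, not a prescribed format: the `ProvenanceRecord` fields, the `example-llm` model name and the sample values are all assumptions for illustration.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class ProvenanceRecord:
    produced_by: str      # user or service that invoked the model
    model_name: str
    model_version: str
    prompt: str
    output_sha256: str    # fingerprint of the exact text produced
    created_at: str       # UTC timestamp, ISO 8601


def _digest(text: str) -> str:
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def make_record(produced_by: str, model_name: str, model_version: str,
                prompt: str, output: str) -> ProvenanceRecord:
    """Build a provenance record for one model output."""
    return ProvenanceRecord(
        produced_by=produced_by,
        model_name=model_name,
        model_version=model_version,
        prompt=prompt,
        output_sha256=_digest(output),
        created_at=datetime.now(timezone.utc).isoformat(),
    )


def matches(record: ProvenanceRecord, text: str) -> bool:
    """Check whether a piece of text is the output this record describes."""
    return record.output_sha256 == _digest(text)


# Hypothetical values, for illustration only.
output_text = "No prior incidents were found for this counterparty."
record = make_record("analyst_a", "example-llm", "2026-01",
                     "Summarise prior incidents for counterparty X.",
                     output_text)

print(json.dumps(asdict(record), indent=2))
```

Storing a hash of the output lets an investigator later confirm whether a sentence in a briefing is the unedited model output, without relying on anyone's recollection of how the text was produced.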

What success looks like

Banks that move beyond checkbox compliance and embed behavioural design into AI governance will demonstrate three linked outcomes:

- Fewer operational incidents triggered by hallucinations or automation bias.

- Faster, safer rollout of value‑creating AI products because governance is practical and well integrated with product development.

- Stronger stakeholder confidence — from customers, regulators and investors — because the firm can show measurable controls and a culture of prudent innovation.

Final analysis: strength, weakness and the road ahead

The decision by UK banks and financial firms to prioritise behavioural science, psychology and ethical AI expertise is a pragmatic and necessary evolution. Its strengths are clear: it recognises that generative AI’s primary residual risks are social and organisational, not only mathematical. Hiring non‑technical experts signals a maturing approach that treats AI as a product of people and institutions, not just code.

However, meaningful change requires more than new titles. Risks remain:

- Superficial hires — bringing in ethics officers who lack integration with product and risk teams will not prevent incidents.

- Process bloat — excessive controls can kill agility if not carefully aligned with risk tiers.

- Regulatory mismatch — as supervisory expectations evolve, firms must avoid siloed compliance efforts that cannot scale across jurisdictions.

Banks that achieve this balance will not only reduce harm but will turn trustworthy AI into a genuine competitive advantage in a sector where reputation and reliability are everything.

Conclusion

The current moment offers banks a rare opportunity: to design AI adoption pathways that harness generative capabilities while acknowledging human limitations and social consequences. Ethical AI hiring — focused on behavioural science, psychology, governance and legal rigour — is neither a PR exercise nor an optional add‑on. It is an operational necessity for firms that want to scale AI without courting regulatory, financial or reputational shock. The challenge ahead is not merely recruiting smart people, but giving them the mandate, data, and cross‑functional authority to change how decisions are made inside financial institutions. The next wave of hires will determine whether the sector treats the Copilot hallucination as an outlier or as a systemic call to do AI differently.

Source: lbc.co.uk EMB 00:01 UK finance firms to boost hiring of ethical AI experts amid growing concerns over its misuse | LBC