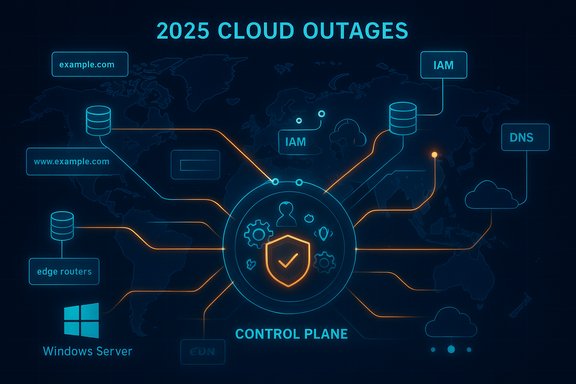

The internet’s backbone showed uncommon fragility in 2025 as a small number of control‑plane failures — DNS anomalies, configuration rollouts and authentication breakdowns — cascaded into outages that took millions of users, thousands of businesses and a chunk of the modern web offline. What began as localized failures in managed primitives repeatedly turned into global disruptions, underscoring how convenience and concentration in the public cloud can create systemic single points of failure.

Background

The pattern of 2025’s top cloud incidents is notable for what they had in common: the failing components were not always the big compute fleets or overloaded networks, but the glue that coordinates them, namely DNS records, edge routing fabrics, authentication services and automation that manages control‑plane state. Those primitives are small in surface area but critical in function; when they misbehave, large swaths of otherwise healthy infrastructure become effectively unreachable. This structural observation is supported by independent reconstructions and vendor post‑incident summaries that consistently point to control‑plane and DNS/edge failures as the proximate causes of the largest outages.

In 2025, five incidents captured public attention and framed industry conversations about resilience, vendor transparency and architectural trade‑offs:

- The December 25 gaming sign‑in meltdown that left thousands unable to play major multiplayer titles.

- Multiple Cloudflare incidents (notably November 18 and December 5) that affected content platforms, AI services and collaboration tools.

- A severe AWS US‑EAST‑1 outage on October 20 that exposed DNS automation failures linked to DynamoDB endpoint resolution.

- A Microsoft Azure disruption on October 29 caused by an inadvertent configuration change in Azure Front Door.

- A Google Cloud incident earlier in the year that highlighted the fragility of identity and quota subsystems.

The five major incidents of 2025 — what happened and why it mattered

1) Christmas gaming outage — authentication and attribution confusion

On December 25 many gamers found themselves unable to log into popular multiplayer titles, a high‑visibility, consumer‑facing outage that hit on a peak usage day. Initial public discussion suggested the problem was rooted in a hyperscaler, but follow‑up reporting and vendor statements indicated that it traced to an authentication failure inside Epic Online Services (EOS), which many games use for sign‑in and entitlement checks. The incident highlights a common pattern: a shared third‑party service used by many front‑end titles becomes a high‑impact central point of failure.

What to note:

- Shared authentication and session providers collapse login and entitlement flows across multiple games simultaneously, even if game servers remain healthy.

- Attribution is often messy in real time: initial signals (for example, elevated cloud provider error rates) can be misread; accurate root cause identification may lag by hours or days. Be cautious with early claims that pin blame on a particular vendor until official post‑incident statements are available.

2) Cloudflare incidents — bot mitigation and configuration mistakes

Cloudflare experienced two widely‑noticed disruptions late in the year. On November 18 a bug in a bot‑management subsystem produced a broad outage that hit platforms including large streaming, AI and social services. A shorter incident on December 5 traced to a firewall/configuration error and affected business‑critical apps and collaboration tools.

Why these mattered:

- Edge services like Cloudflare sit at the very first hop for millions of web requests; when their policy engines (bot management, WAFs, rate limiting) misclassify or inadvertently block traffic, the visible symptom for users is a simple inability to reach major services.

- Configuration and logic bugs in bot management or firewall modules are particularly hazardous because they can propagate rapidly across global points of presence and interact unpredictably with client IP diversity and CDN caches.

Operational takeaways:

- Test bot management and firewall changes in low‑risk canaries distributed across geographic PoPs before global rollouts.

- Maintain rapid rollback hooks and ensure that provider status pages expose meaningful, actionable information during incidents.

3) AWS — US‑EAST‑1 DNS automation bug (October 20)

The October 20 AWS disruption was the most consequential event of the year because of its breadth and because it exposed the brittle dependencies many services place on a single region and on a small set of managed primitives. In short: a bug in the automation that manages DNS entries for the DynamoDB regional endpoint produced empty or incorrect DNS responses in US‑EAST‑1. That DNS symptom prevented many clients and internal control‑plane components from resolving DynamoDB’s hostname, which cascaded into throttled EC2 launches, stalled control‑plane orchestration and broad second‑order failures across Lambda, API Gateway and dependent SaaS. Recovery was staged: DNS mitigation came first, then manual fixes, then throttles to allow backlogs to drain, followed by a long tail of residual errors as caches and queues reconciled.

Why DNS mattered more than compute:

- DNS is the internet’s address book; if the address book returns empty answers the client never reaches the service, even if the actual servers are healthy.

- Many managed control‑plane functions and metadata stores rely on DynamoDB or similar small‑state databases for session tokens, feature flags and leader election; when those small, high‑frequency operations fail, user‑facing features collapse.

Supporting evidence:

- Massive spikes in outage tracker reports and monitoring telemetry.

- Vendor status messages pointing to DNS resolution failures for the DynamoDB API hostname in US‑EAST‑1 as the proximate symptom.

- Industry analysis noting that concentration of control‑plane features in a single region amplifies systemic risk.

4) Microsoft Azure — Azure Front Door misconfiguration (October 29)

Less than two weeks after the AWS incident, Microsoft disclosed an eight‑hour global disruption caused by an inadvertent configuration change in Azure Front Door (AFD), its global edge routing and application delivery fabric. The configuration change propagated across PoPs and affected DNS routing, authentication flows and portal responsiveness, producing service degradation in Microsoft 365, Xbox Live, Minecraft and enterprise portals in aviation, telecom and retail.

Why this incident stood out:

- Edge routing fabrics are sensitive because they carry TLS termination, token issuance and initial request routing. A configuration error at that layer can block authentication and authorization flows, making the symptom identical to a backend outage even when origin services are healthy.

- Rollback strategies for global edge changes are complicated by cache and state convergence across PoPs; reversion does not always produce immediate global recovery.

Operational takeaways:

- Restrict global config changes and require staged rollouts with automated canarying.

- Maintain an alternate ingress path for critical admin and break‑glass operations that does not depend on the edge fabric being in a pristine global state.

5) Google Cloud — identity/quota/IAM‑related disruptions (mid‑2025)

Google Cloud suffered a separate, multi‑hour incident earlier in 2025 rooted in identity/quota/IAM subsystems that produced cross‑service authentication failures and quota enforcement anomalies. While this disruption differed technically from the DNS and control‑plane failures at AWS and Azure, it followed the same general pattern: a relatively narrow control‑plane fault translated into broad service interruption because many dependent services rely on centralized identity and quota primitives.

Cross‑cutting technical analysis — why small control‑plane failures cascade

The five incidents above are diverse in trigger but uniform in amplification path. The core technical themes include:

- Control‑plane primacy: Modern cloud systems put metadata, state and orchestration logic into small, managed services. Those services are on the critical path for many runtime operations; when they fail, the visible symptom is a broad outage even if compute resources remain healthy.

- DNS fragility: DNS caching, TTL semantics and automation complexity make DNS a deceptively fragile hinge. Empty answers or stale records can persist in resolver caches and prolong recovery after a control‑plane fix is applied.

- Automation risks: Automated rollouts and enactors speed operations but magnify the blast radius of software defects or stale plans. Several incidents involved automation interacting with a lagging enactor or an unintended configuration change that replicated globally.

- Edge fabrics as choke points: CDNs, WAFs and bot mitigation systems sit on the first hop; misconfigurations there block millions of users quickly.

- Opacity and delayed attribution: In the first hours of an outage, public signals are noisy. Initial attributions are often revised as telemetry and vendor post‑mortems emerge. This uncertainty complicates communications and incident response.
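
The DNS fragility point above can be made concrete with a toy model. The sketch below is not a real resolver: `TtlCache`, the hostname, the address and the 60-second TTL are all hypothetical, and real resolvers apply their own negative-caching rules. It only illustrates why an empty answer cached during an outage can keep being served after the upstream record is fixed.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class TtlCache:
    """Toy resolver cache (hypothetical). It stores whatever the upstream
    returned, including an empty answer, until the TTL expires."""
    lookup: Callable[[str], List[str]]   # stands in for the authoritative side
    ttl_seconds: float
    _entries: Dict[str, Tuple[float, List[str]]] = field(default_factory=dict)

    def resolve(self, name: str, now: float) -> List[str]:
        cached = self._entries.get(name)
        if cached is not None and now < cached[0]:
            return cached[1]             # still within TTL: serve cached answer
        answer = self.lookup(name)
        self._entries[name] = (now + self.ttl_seconds, answer)
        return answer


# Simulated upstream: the record is broken until someone flips "fixed".
state = {"fixed": False}


def upstream(name: str) -> List[str]:
    return ["192.0.2.10"] if state["fixed"] else []


cache = TtlCache(lookup=upstream, ttl_seconds=60)

during_outage = cache.resolve("db.example.internal", now=90)    # empty, cached until t=150
state["fixed"] = True                                            # upstream fixed at about t=100
just_after_fix = cache.resolve("db.example.internal", now=110)  # cache still serves the empty answer
after_ttl = cache.resolve("db.example.internal", now=151)       # TTL expired, fresh lookup succeeds
```

In this model the client-visible recovery lags the fix by up to one TTL per caching layer, which is one way to read the "recovery tail" described in the incident summaries.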

Business and regulatory fallout

Major cloud outages have consequences beyond engineering: boardroom scrutiny, procurement reassessments, and potential regulatory interest grow after these events. Large customers will press for more transparent SLAs, clearer incident timelines, and possibly contractual options for multi‑cloud architectures or compensation where business continuity was undermined. Policy conversations will likely continue around whether hyperscalers supplying critical public services should face stricter disclosure or resilience requirements.

Insurers and risk teams are revising loss models and scenario planning. Some analyses suggest the aggregate financial exposure from high‑impact cloud outages is material and will be more closely reflected in pricing and contractual obligations in future vendor negotiations.

Practical, actionable resilience playbook for WindowsForum readers

For IT managers, SREs and Windows admins responsible for production systems, the events of 2025 convert abstract best practices into urgent operational imperatives. Below is a pragmatic checklist to reduce blast radius and accelerate recovery.

1) Map dependencies and prioritize critical primitives

- Inventory mission‑critical components and list which managed primitives (DynamoDB, managed caches, identity services, feature‑flag stores) they depend on.

- Mark those primitives as high‑risk and design alternate paths.
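
As a minimal sketch of the inventory step, the snippet below inverts a component-to-primitive map to show which primitives would take down mission‑critical components. The component names, the primitive names and the `CRITICAL` set are invented for illustration; a real inventory would come from your own service catalog.

```python
from collections import defaultdict
from typing import Dict, List, Set

# Hypothetical inventory: component -> managed primitives it depends on.
DEPENDENCIES: Dict[str, Set[str]] = {
    "checkout-api": {"dynamodb", "identity", "dns"},
    "login-portal": {"identity", "dns"},
    "reporting-batch": {"object-storage"},
    "feature-flags-client": {"dynamodb"},
}

# Hypothetical set of components considered mission-critical.
CRITICAL: Set[str] = {"checkout-api", "login-portal"}


def blast_radius(deps: Dict[str, Set[str]]) -> Dict[str, List[str]]:
    """Invert the map: primitive -> sorted list of components that depend on it."""
    inverted = defaultdict(set)
    for component, primitives in deps.items():
        for primitive in primitives:
            inverted[primitive].add(component)
    return {p: sorted(c) for p, c in inverted.items()}


def high_risk_primitives(deps: Dict[str, Set[str]], critical: Set[str]) -> List[str]:
    """Primitives that at least one critical component depends on, ordered by
    how many components they would affect (ties broken alphabetically)."""
    radius = blast_radius(deps)
    risky = [p for p, comps in radius.items() if critical & set(comps)]
    return sorted(risky, key=lambda p: (-len(radius[p]), p))


if __name__ == "__main__":
    for primitive in high_risk_primitives(DEPENDENCIES, CRITICAL):
        print(primitive, "->", blast_radius(DEPENDENCIES)[primitive])
```

The output is only as good as the inventory behind it; the point of the exercise is to make hidden shared dependencies visible before an incident does.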

2) Harden DNS and resolver behavior

- Use multiple authoritative DNS providers for critical names.

- Test client resolver fallbacks and TTL behavior under failure conditions.

- Ensure clients handle empty DNS answers gracefully and fail fast to trigger alternative flows.
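
One way to read "handle empty DNS answers gracefully" is sketched below, under the assumption that the application has an ordered list of candidate hostnames (for example a primary and a secondary endpoint). The helper names `system_resolver` and `pick_endpoint` are hypothetical. The sketch treats both a resolver error and an empty answer as "try the next candidate" instead of letting the failure surface deep inside the request path.

```python
import socket
from typing import Callable, List, Optional, Sequence, Tuple

Resolver = Callable[[str], List[str]]


def system_resolver(host: str) -> List[str]:
    """Resolve via the OS resolver; return [] instead of raising on failure."""
    try:
        infos = socket.getaddrinfo(host, 443, type=socket.SOCK_STREAM)
    except socket.gaierror:
        return []
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr[0] is the IP.
    return sorted({info[4][0] for info in infos})


def pick_endpoint(
    candidates: Sequence[str],
    resolve: Resolver = system_resolver,
) -> Optional[Tuple[str, List[str]]]:
    """Return the first candidate that resolves to at least one address,
    or None so the caller can switch to a degraded mode."""
    for host in candidates:
        addresses = resolve(host)
        if addresses:
            return host, addresses
    return None
```

Note that the OS resolver call can itself block for several seconds; enforcing a strict time budget would need extra machinery (for example running the lookup in a worker thread), which this sketch leaves out.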

3) Architect for graceful degradation

- Implement reduced‑functionality fallbacks for core user journeys (login, read‑only content, cached assets) so a DNS or auth failure does not produce a blank screen.

- Prepackage static fallbacks (CDN caches, offline pages) for peak traffic moments.
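
The fallback idea can be sketched as a small wrapper that prefers live content, falls back to the last known good copy, and finally to a static page. `LastGoodCache`, the `mode` labels and the one-hour staleness limit are assumptions made for this example, not something prescribed by the incident reports.

```python
import time
from typing import Callable, Dict, Tuple


class LastGoodCache:
    """Serve live content when possible; on a connectivity error serve the
    last known good copy (if not too old), otherwise a static fallback."""

    def __init__(
        self,
        fetch: Callable[[str], str],
        static_fallback: str,
        max_stale_seconds: float = 3600.0,
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._fetch = fetch
        self._static = static_fallback
        self._max_stale = max_stale_seconds
        self._clock = clock
        self._store: Dict[str, Tuple[float, str]] = {}

    def get(self, key: str) -> Dict[str, str]:
        try:
            value = self._fetch(key)
        except OSError:  # covers ConnectionError, TimeoutError and socket errors
            cached = self._store.get(key)
            if cached is not None and self._clock() - cached[0] <= self._max_stale:
                return {"body": cached[1], "mode": "stale"}
            return {"body": self._static, "mode": "static"}
        self._store[key] = (self._clock(), value)
        return {"body": value, "mode": "live"}
```

Returning the `mode` alongside the body lets the UI show a "limited functionality" banner rather than a blank screen, and lets monitoring count how often degraded paths are exercised.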

4) Practice runbooks and failure rehearsals

- Regularly run chaos experiments and failure drills that simulate DNS failures, IAM unavailability and edge fabric misconfigurations.

- Validate communications playbooks (status updates, out‑of‑band notifications) and break‑glass admin channels.
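
A failure drill does not have to start with production traffic. As a hedged, in-process sketch, the context manager below makes name lookups fail for a chosen hostname suffix so that unit or integration tests can check how application code reacts. `dns_outage` and `can_resolve` are hypothetical helpers; the patch only affects Python code in the same process that calls `socket.getaddrinfo`, and it is not a substitute for network-level fault injection.

```python
import contextlib
import socket
from unittest import mock


@contextlib.contextmanager
def dns_outage(blocked_suffix: str):
    """Inside the with-block, lookups for names ending in blocked_suffix raise
    socket.gaierror; every other lookup goes to the real resolver."""
    real_getaddrinfo = socket.getaddrinfo

    def failing_getaddrinfo(host, *args, **kwargs):
        if isinstance(host, str) and host.endswith(blocked_suffix):
            raise socket.gaierror("simulated DNS failure")
        return real_getaddrinfo(host, *args, **kwargs)

    with mock.patch("socket.getaddrinfo", side_effect=failing_getaddrinfo):
        yield


def can_resolve(host: str) -> bool:
    """Example code under test: report whether a hostname currently resolves."""
    try:
        socket.getaddrinfo(host, 443)
        return True
    except socket.gaierror:
        return False


if __name__ == "__main__":
    with dns_outage(".example.internal"):
        print("resolves during drill:", can_resolve("db.example.internal"))
```

The same pattern can wrap a test that exercises a login or checkout path, asserting that the code switches to its fallback instead of hanging or crashing.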

5) Phased rollouts and canary changes

- For both your own infrastructure and when requesting provider edge changes, require staged, geographically distributed canaries before global deployment.

- Maintain immediate rollback automation and monitor canary health with strict abort thresholds.
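
The staged-rollout-with-abort idea can be sketched as a loop that applies a change one stage at a time and rolls everything back as soon as a stage breaches its error budget. The stage names, the `apply`, `rollback` and `error_rate` callables, and the 1% threshold are all placeholders; real deployments would plug in their own deployment tooling and health signals.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Sequence


@dataclass
class RolloutResult:
    applied: List[str]          # stages still in effect when the rollout ended
    aborted_at: Optional[str]   # stage whose health check tripped, if any


def staged_rollout(
    stages: Sequence[str],
    apply: Callable[[str], None],
    rollback: Callable[[str], None],
    error_rate: Callable[[str], float],
    max_error_rate: float = 0.01,
) -> RolloutResult:
    """Apply a change stage by stage; if a stage exceeds max_error_rate,
    roll back every applied stage in reverse order and stop."""
    applied: List[str] = []
    for stage in stages:
        apply(stage)
        applied.append(stage)
        if error_rate(stage) > max_error_rate:
            for done in reversed(applied):
                rollback(done)
            return RolloutResult(applied=[], aborted_at=stage)
    return RolloutResult(applied=applied, aborted_at=None)
```

A usage example with made-up stages: calling `staged_rollout(["canary-eu", "canary-us", "global"], ...)` with a health check that reports a 20% error rate for `canary-us` would roll back both canaries and never touch `global`. In practice the health check would also need a soak period before reading the error rate, which this sketch omits.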

6) Multi‑region and multi‑provider patterns — where it matters

- Selectively adopt multi‑region or multi‑cloud for the small set of services whose availability is essential to revenue or safety.

- Avoid the mental trap that “all services must be multi‑cloud”; instead, focus budget and engineering effort where the risk/reward is highest.

7) DNS, identity and session store fallbacks

- For authentication, maintain local admin break‑glass accounts that do not depend on external identity providers.

- Consider local, write‑through caches for session tokens and small‑state metadata so basic operations can continue if a remote store becomes inaccessible.
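
A write‑through session cache can be sketched as follows. `InMemoryRemoteStore` is a stand-in for whatever remote store is actually in use, and `WriteThroughSessionCache` is a hypothetical name; the sketch deliberately ignores token expiry and revocation, which a real implementation would have to honor before serving a locally cached token.

```python
from typing import Dict, Optional


class InMemoryRemoteStore:
    """Stand-in for a remote session store; `available` simulates an outage."""

    def __init__(self) -> None:
        self.available = True
        self._data: Dict[str, str] = {}

    def put(self, key: str, value: str) -> None:
        if not self.available:
            raise ConnectionError("remote store unreachable")
        self._data[key] = value

    def get(self, key: str) -> Optional[str]:
        if not self.available:
            raise ConnectionError("remote store unreachable")
        return self._data.get(key)


class WriteThroughSessionCache:
    """Writes go to the remote store first and are then mirrored locally;
    reads fall back to the local copy only when the remote is unreachable."""

    def __init__(self, remote: InMemoryRemoteStore) -> None:
        self._remote = remote
        self._local: Dict[str, str] = {}

    def put(self, session_id: str, token: str) -> None:
        self._remote.put(session_id, token)   # remote stays the source of truth
        self._local[session_id] = token

    def get(self, session_id: str) -> Optional[str]:
        try:
            token = self._remote.get(session_id)
        except ConnectionError:
            return self._local.get(session_id)  # degraded read from local copy
        if token is not None:
            self._local[session_id] = token
        return token
```

The design choice here is that writes fail loudly during an outage while reads degrade quietly, so existing sessions keep working but no new state is created that the remote store never saw.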

Strengths shown by providers — and persistent risks

Strengths observed

- Rapid mitigation: In several incidents, engineers moved quickly to identify proximate symptoms and apply mitigations (DNS fixes, rollbacks, throttles) that restored broad functionality within hours. These operational responses prevented longer outages.

- Increased transparency: Providers published status updates and, in some cases, committed to post‑incident reviews that will produce concrete engineering changes. That public timeline is valuable to customers when making procurement decisions.

Persistent risks

- Concentration of control‑plane functions in single regions or single providers remains the root structural vulnerability.

- Automation and staged enactors are efficient but can replicate mistakes at global scale when safeguards are insufficient.

- DNS and cache convergence create a recovery tail that is often longer than the initial remediation window; customers experience residual impact long after a provider reports mitigation.

What the industry should demand next

- Corporate customers should require clearer contractual visibility into the architecture of control‑plane services and timelines for post‑incident remediation plans.

- Providers should invest in independent, third‑party audits of critical control‑plane automation and publish measurable resilience targets (beyond generic SLA uptime figures).

- Regulators and sector‑specific authorities should consider risk classification frameworks for hyperscalers that host essential national and financial services. These frameworks should balance innovation with systemic reliability.

A measured verdict — resilience is a socio‑technical problem

The outages of 2025 are not proof that cloud is broken; they are a reminder that resilience is a design requirement, not an emergent property. Hyperscale providers deliver enormous value and enable innovation at speed and scale. But that economic and technical convenience concentrates systemic risk into a few control points (DNS, identity, edge routing and managed automation) that form a new kind of critical infrastructure.

Operational improvements are straightforward in concept but organizationally hard in execution: mapping dependencies, funding multi‑region fallbacks, and coordinating contractual and regulatory expectations. The immediate technical fixes are known; the institutional commitment to implement them broadly and measurably across millions of services is the test ahead.

Final, practical checklist (quick reference)

- Inventory mission‑critical managed primitives and assign a resilience owner.

- Add multiple authoritative DNS providers and test TTL/resolver behavior.

- Create static or reduced‑function fallbacks for core user paths.

- Require canaries and automated rollbacks for edge/config changes.

- Establish identity break‑glass accounts and local session caches.

- Run quarterly chaos exercises that simulate DNS, IAM and edge failures.

- Negotiate clearer visibility and remediation timelines with cloud vendors.

The outages that defined 2025 shone a stark light on a persistent truth: the internet is only as reliable as its smallest, most‑used primitives. Firms that treat DNS, identity and edge routing as peripheral will continue to be surprised when the next “minor” failure becomes a major business disruption. For platform architects and Windows administrators, the clear imperative is to plan, test and budget for that inevitability — because the next outage will almost certainly arrive on a day when users expect everything to just work.

Source: NDTV Profit, "AWS Outage And Top Cloud Disruptions Of 2025 That Brought Web To A Standstill"