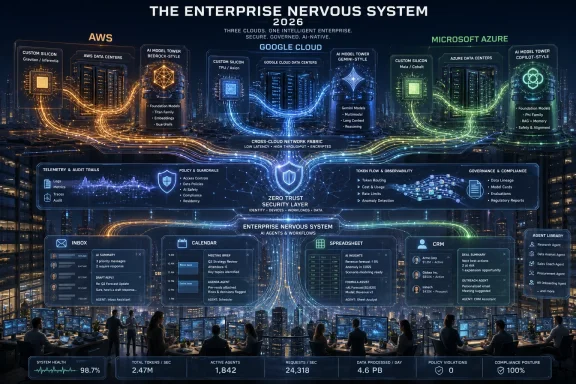

Amazon Web Services, Google Cloud, and Microsoft Azure are now competing in 2026 to own the entire enterprise AI stack, from chips and data centers to models, agents, business applications, workflow context, and the daily software surfaces where employees actually work. The race is no longer about renting faster GPUs by the hour. It is about turning AI into a vertically integrated operating model for the enterprise. That makes the next cloud decision less like choosing infrastructure and more like choosing a corporate nervous system.


But the price of convenience is architecture. If the first generation of enterprise AI projects is built entirely inside one provider’s abstractions, the second generation may inherit constraints no one consciously chose. Procurement teams will discover that the real cost is not the model call. It is the surrounding platform: data movement, vector storage, orchestration, monitoring, security, premium connectors, agent runtime, and workflow automation.

This is how cloud economics often work. The entry point is attractive. The integration gets deeper. The usage grows. Then the optimization discussion becomes a dependency discussion.

CIOs should not respond by avoiding hyperscaler AI platforms. That would be unrealistic and, in many cases, counterproductive. The smarter response is to decide where dependence is acceptable and where it must be limited. Not every workload deserves portability. Not every workflow should be provider-neutral. But the critical ones should be designed with exit costs visible from the beginning.

AWS sells the builder’s future. It says the enterprise will want a broad menu of models, services, chips, databases, and infrastructure primitives, all programmable and composable. Its ideal customer is comfortable assembling the stack and values operational depth over a prepackaged work surface.

Google sells the AI-native future. It says the enterprise should trust the company that has spent decades building machine learning systems at global scale, with custom silicon, frontier models, world-class data infrastructure, and an increasingly coherent application layer. Its ideal customer wants technical excellence and is willing to let Google’s AI architecture shape the solution.

Microsoft sells the work-graph future. It says the enterprise should put AI where employees already live and let context from documents, meetings, identity, code, business systems, and communications become the differentiator. Its ideal customer already runs on Microsoft and wants AI to feel less like a new platform than a new capability inside the existing one.

None of these futures is obviously wrong. In fact, many large enterprises will buy all three. AWS may remain the infrastructure backbone for core systems, Google may become the AI and analytics engine for specific workloads, and Microsoft may dominate personal productivity and enterprise agents. Multicloud will persist, but it will become harder as AI workflows deepen.

The result may be a more fragmented enterprise architecture than cloud strategists admit. Companies will not simply choose one AI stack. They will accumulate overlapping ones, each with its own model catalog, policy framework, data connectors, evaluation tools, and agent runtime. The next IT governance headache will not be shadow SaaS. It will be shadow agents.

The first rule is to separate experimentation from institutional architecture. A team should be able to test models quickly, but production workflows need standards for data access, logging, evaluation, human oversight, and rollback. The excitement around agents should not obscure the fact that autonomous software acting on business systems is a control problem before it is a productivity miracle.

The second rule is to document the non-obvious dependencies. Which vector store is being used? Where are embeddings generated? Which model-specific prompts are embedded in workflows? What proprietary connectors are required? How are agent actions logged? Can policies be exported? Can evaluations be replayed on another model or platform?

The third rule is to negotiate before adoption hardens. Cloud contracts have always rewarded volume commitments, but AI adds new levers: inference pricing, data residency, model availability, custom silicon access, premium support, private networking, committed capacity, and governance tooling. The best time to ask for portability assurances is before the provider’s stack becomes the default.

The fourth rule is to keep humans in the architecture. Not as ceremonial approvers for every trivial action, but as accountable owners of workflows that affect customers, employees, security, finance, and compliance. Agentic systems will fail in strange ways. Enterprises need auditability and escalation paths, not just demos.

Source: Constellation Research Google Cloud, AWS, Microsoft Azure: The AI vertical integration race | Constellation Research

The Cloud War Has Moved From Primitives to Control Planes

The Cloud War Has Moved From Primitives to Control Planes

For most of the cloud era, the hyperscaler pitch was modularity. Compute, storage, databases, analytics, identity, observability, and developer tooling could be assembled like Lego bricks, and enterprise architects could at least pretend the pieces were portable. The marketing language was all about flexibility, even when the invoices and migration projects told a more complicated story.AI is collapsing that polite fiction. The new cloud stack is not a shelf of services so much as a chain of dependencies: custom silicon trains or serves the model, the model is exposed through a managed platform, the platform is grounded in enterprise data, agents automate work across applications, and telemetry from those workflows improves the next layer of orchestration. The customer does not merely consume infrastructure. The customer is gradually pulled into a provider’s full theory of how enterprise work should run.

That is the real meaning behind the latest wave of announcements from AWS, Google Cloud, and Microsoft. Each company says it wants to offer choice. Each company also wants to be the default place where AI applications are built, deployed, governed, monitored, secured, and ultimately monetized. The contradiction is not accidental; it is the business model.

The old cloud lock-in problem was about where the database lived. The new lock-in problem is about where the context lives.

AWS Is Building Upward Because Infrastructure Alone Is No Longer Enough

AWS enters this phase with the strongest infrastructure muscle memory in the industry. It has the canonical cloud primitives, the deepest bench of operational services, a formidable storage and database portfolio, and a customer base trained over nearly two decades to build serious systems on its platform. If enterprise AI were only about running workloads close to data, AWS would already have the cleanest story.But AI is not only about infrastructure. It is also about workflows, user interfaces, and business processes — areas where AWS has historically been thinner than Microsoft and Google. That is why Amazon’s push around the expanded Connect family matters. Connect began as a cloud contact-center product, but AWS is now framing it as a broader family of business applications spanning customer AI, talent, decisioning, and health-related workflows.

This is AWS moving up the stack with purpose. The company does not have Microsoft 365. It does not have Google Workspace. It does not own the spreadsheet, the inbox, the meeting, the CRM record, or the enterprise collaboration habit in the same native way its rivals do. So AWS has to invent an application surface that makes sense from its strengths: operational data, customer interactions, event-driven systems, and AI services that sit close to enterprise infrastructure.

Amazon Bedrock is the bridge. By offering access to multiple model families, including models from Anthropic, Amazon, OpenAI, Google, Meta, Mistral, Cohere, and others, AWS is trying to make the model layer feel like another cloud service: selectable, swappable, metered, and governed. SageMaker, Bedrock AgentCore, Lambda, S3, DynamoDB, Redshift, and the rest become the scaffolding around that model marketplace.

The pitch is familiar AWS: don’t bet the company on one model, one app suite, or one interface. Build with components. Compose what you need. Keep your inference near your data. Let AWS optimize the infrastructure under you.

The weakness is equally familiar. Composability is powerful, but it can also become architectural sprawl. AWS customers rarely get locked in by a single dramatic decision; they get locked in by thousands of small, rational decisions that accumulate into a system no one wants to re-platform. AI will intensify that pattern because agents, embeddings, retrieval pipelines, model evaluations, policy layers, and workflow triggers create dependencies that are harder to see than EC2 instances and S3 buckets.

Google Cloud Has the Cleanest Full-Stack AI Story

Google Cloud’s argument is the most technically elegant of the three. Google was an AI company before AI became the enterprise budget line of the decade. Its stack was forged by Search, YouTube, ads, Maps, Gmail, and the internal need to run planetary-scale machine learning systems efficiently. That history gives Google credibility when it says it knows how to integrate chips, models, data platforms, and applications.The TPU is central to that story. While Nvidia still defines the broader accelerator market, Google’s long investment in Tensor Processing Units gives it a differentiated silicon layer that is not just a procurement hedge. TPUs are part of Google’s model-development culture, part of its internal economics, and increasingly part of its external cloud pitch.

Then comes Gemini, Vertex AI, BigQuery, AlloyDB, Looker, Workspace, security tooling, and the emerging agent layer. Google can plausibly tell customers that it has a first-party path from silicon to model to data warehouse to productivity surface. In an AI market drowning in middleware claims, that coherence matters.

Google Cloud Next 2026 leaned heavily into this point. The message was not merely that Google has good AI. It was that Google can optimize the stack end to end: hardware, networking, model serving, data grounding, security, and application experiences. That is vertical integration as engineering religion.

The company’s first-quarter numbers gave the argument financial force. Google Cloud revenue crossed $20 billion in the quarter and grew 63 percent year over year, an extraordinary acceleration for a business of that size. Alphabet executives also tied the performance directly to AI infrastructure and Gemini adoption, while acknowledging the obvious constraint: demand is outrunning available capacity.

That last point is important. Google’s AI advantage is not costless. Alphabet’s 2026 capital expenditure guidance now sits in the $180 billion to $190 billion range. The full-stack AI story requires full-stack spending, and the spending is now so large that it reshapes how investors, customers, and competitors read every product announcement.

Microsoft Starts Where the Work Already Happens

Microsoft’s vertical integration strategy begins from the opposite end of Google’s. It is not primarily trying to prove that it has the most elegant AI infrastructure stack, even though Azure is massive and growing quickly. Microsoft starts with the enterprise work surface: Microsoft 365, Teams, Outlook, Excel, SharePoint, GitHub, Dynamics, Power Platform, Entra, Defender, Fabric, and Copilot.That matters because enterprise AI is only partly a model problem. The harder problem is context. Who is the user? What project are they working on? What documents are relevant? Which meetings shaped the decision? What permissions apply? Which systems of record are authoritative? What is the next step in the workflow?

Microsoft’s answer is WorkIQ and the broader Copilot architecture. The company wants to turn its enormous footprint across documents, communications, code, meetings, identity, and business applications into the context layer for agentic work. Azure provides the infrastructure, Foundry provides model and app development, Fabric and OneLake provide data gravity, and Copilot becomes the interface through which AI appears inside the tools employees already use.

That is a formidable position. Microsoft does not need to persuade many workers to open a new AI application if it can insert AI into Outlook, Teams, Excel, Word, Visual Studio Code, GitHub, and Dynamics. Distribution is not a side benefit in this market. It is the moat.

The concern for Microsoft is lower in the stack. The company is investing in its own silicon, including Maia AI accelerators and Cobalt CPUs, but it does not yet have the same maturity narrative as Google with TPUs or AWS with Graviton, Trainium, and Inferentia. Microsoft can still buy enormous quantities of Nvidia hardware and operate at staggering scale, but the economics of AI inference will increasingly reward custom optimization.

Still, Microsoft’s advantage is that many enterprises are already halfway inside its AI stack before they make an explicit platform decision. If Copilot becomes the natural interface for work and WorkIQ becomes the context layer behind it, Azure’s role as the infrastructure substrate becomes easier to defend. Microsoft does not have to win every model benchmark if it owns the place where decisions are made.

The Numbers Say This Is No Longer Experimental

The latest hyperscaler earnings make the same point from three directions: AI is now large enough to move cloud growth rates, capital spending plans, and executive strategy. This is not a speculative sidecar attached to cloud. It is becoming the organizing principle of cloud.AWS grew revenue 28 percent year over year in the first quarter, a strong performance given its larger base. Amazon also said first-quarter capital expenditures reached roughly $43 billion, with AI and AWS demand driving much of the increase, and the company has pointed to full-year 2026 capex around $200 billion. That is not a tactical investment cycle. That is an industrial buildout.

Google Cloud’s 63 percent growth is the eye-catching number, but the more revealing detail is capacity. When a cloud provider says growth is constrained by its ability to deploy infrastructure fast enough, it is telling customers that the bottleneck has moved from demand generation to physical execution. Data centers, chips, power, networking, cooling, and supply chains are now product strategy.

Microsoft’s Azure and other cloud services grew 40 percent in its fiscal third quarter, driven by demand across the platform. The company has also emphasized improvements in GPU deployment, inference throughput, and custom silicon economics. That language is not just for Wall Street; it is a signal to CIOs that the cloud provider able to reduce token cost, speed up inference, and bring capacity online faster will shape the practical economics of enterprise AI.

The capital expenditure numbers are astonishing because they are not isolated. Alphabet, Microsoft, Amazon, and Meta are collectively preparing for AI infrastructure spending at a scale that would once have sounded like national industrial policy. In effect, the hyperscalers are building private AI utilities, and enterprises are deciding which utility’s assumptions they want embedded into their own operations.

Custom Silicon Is the New Margin War

The AI boom began in public consciousness as a GPU story, and Nvidia remains the gravitational center of accelerated computing. But for the hyperscalers, dependence on a single external supplier is strategically uncomfortable and financially brutal. At cloud scale, custom silicon is not just about performance. It is about bargaining power, supply assurance, and margin control.AWS has been explicit about this. Graviton changed the economics of general-purpose cloud compute. Trainium and Inferentia are intended to do the same for AI training and inference. Amazon now talks about its custom silicon business as one of the largest data-center chip businesses in the world, and the logic is obvious: if AWS can make AI workloads cheaper and more predictable on its own chips, it can offer customers a better price-performance curve while keeping more of the margin inside Amazon.

Google has the deepest custom AI silicon history among the three. TPUs were not a late reaction to the generative AI boom; they were an architectural choice made years earlier to support Google’s own machine learning needs. That gives Google an unusually integrated story when it talks about Gemini, Cloud TPU, model serving, and efficiency.

Microsoft is newer to this part of the stack, but it cannot afford to stay dependent on outside accelerators forever. Maia and Cobalt are early signs of a broader ambition: reduce reliance on external silicon where possible, tune hardware to Microsoft’s own workloads, and use the company’s software footprint to amortize the investment. The company’s challenge is not whether it can design chips. It is whether it can make them operationally central fast enough to matter in the next wave of AI economics.

For customers, custom silicon will arrive dressed as savings. The provider will say the workload runs faster, inference costs less, and capacity is more available. Often that will be true. The tradeoff is that optimization and dependency are twins. The more your AI architecture is tuned to a provider’s chips, serving stack, model-routing system, and agent runtime, the less portable it becomes.

Agents Turn Data Gravity Into Workflow Gravity

Cloud architects already understand data gravity: once enough data sits in one platform, applications and analytics tend to move toward it. AI adds a more adhesive layer. Agents do not just read data; they learn the structure of work around it.An enterprise agent grounded in a company’s email, documents, CRM notes, support tickets, code repositories, data warehouse, identity graph, and meeting transcripts becomes more than an application. It becomes an operational memory. Moving that memory from one provider to another is not like exporting a table.

This is where the vertical integration race becomes most consequential. AWS wants agents close to the infrastructure and data services where enterprise systems already run. Google wants agents connected to Gemini, BigQuery, Workspace, and its AI-native data tooling. Microsoft wants agents embedded in the work surface and enriched by the context of Microsoft 365, Teams, GitHub, Dynamics, and Power Platform.

Each version has appeal. Each version also creates a new class of switching cost.

The artifacts of agentic AI are messy: prompts, embeddings, policies, tool definitions, evaluation sets, memory stores, semantic models, orchestration graphs, audit trails, and behavioral expectations. A company may be able to move raw data from one cloud to another and still find that its AI workflows no longer behave the same way. The hidden lock-in is not the file format. It is the learned process.

This is why “open” has become such a charged word in hyperscaler messaging. Every provider wants to say it supports model choice, open-source models, third-party tools, and multicloud realities. But openness at the model-selection layer does not automatically mean portability at the workflow layer. A Bedrock application, a Gemini Enterprise agent, and a Copilot Studio workflow may all call open models in some circumstances while still binding the enterprise to proprietary orchestration, identity, governance, telemetry, and context layers.

The Enterprise Bargain Is Faster Adoption Now, Reduced Optionality Later

It would be too easy to frame vertical integration as a villain’s plot. Enterprises are not being tricked into these platforms. They are buying them because the benefits are real.

A vertically integrated AI stack can reduce friction. It can make model deployment easier, simplify security reviews, improve latency, reduce inference cost, provide better observability, and turn AI from a lab experiment into a production capability. For many companies, especially those without elite AI infrastructure teams, the hyperscaler stack will be the only practical way to deploy advanced AI at scale.

The best version of this future is genuinely useful. A hospital system could use cloud AI to coordinate clinical documentation, scheduling, claims workflows, and patient communications. A manufacturer could connect supply-chain data, maintenance logs, ERP systems, and field-service notes into a responsive agentic layer. A software company could tie code, tickets, incidents, deployments, and customer feedback into a development loop that reduces toil.

But the price of convenience is architecture. If the first generation of enterprise AI projects is built entirely inside one provider’s abstractions, the second generation may inherit constraints no one consciously chose. Procurement teams will discover that the real cost is not the model call. It is the surrounding platform: data movement, vector storage, orchestration, monitoring, security, premium connectors, agent runtime, and workflow automation.

This is how cloud economics often work. The entry point is attractive. The integration gets deeper. The usage grows. Then the optimization discussion becomes a dependency discussion.

CIOs should not respond by avoiding hyperscaler AI platforms. That would be unrealistic and, in many cases, counterproductive. The smarter response is to decide where dependence is acceptable and where it must be limited. Not every workload deserves portability. Not every workflow should be provider-neutral. But the critical ones should be designed with exit costs visible from the beginning.

AWS, Google, and Microsoft Are Selling Different Futures

The most interesting part of the race is that the three companies are not converging from identical starting points. They are selling different versions of enterprise AI because they are extensions of different corporate strengths.

AWS sells the builder’s future. It says the enterprise will want a broad menu of models, services, chips, databases, and infrastructure primitives, all programmable and composable. Its ideal customer is comfortable assembling the stack and values operational depth over a prepackaged work surface.

Google sells the AI-native future. It says the enterprise should trust the company that has spent decades building machine learning systems at global scale, with custom silicon, frontier models, world-class data infrastructure, and an increasingly coherent application layer. Its ideal customer wants technical excellence and is willing to let Google’s AI architecture shape the solution.

Microsoft sells the work-graph future. It says the enterprise should put AI where employees already live and let context from documents, meetings, identity, code, business systems, and communications become the differentiator. Its ideal customer already runs on Microsoft and wants AI to feel less like a new platform than a new capability inside the existing one.

None of these futures is obviously wrong. In fact, many large enterprises will buy all three. AWS may remain the infrastructure backbone for core systems, Google may become the AI and analytics engine for specific workloads, and Microsoft may dominate personal productivity and enterprise agents. Multicloud will persist, but it will become harder as AI workflows deepen.

The result may be a more fragmented enterprise architecture than cloud strategists admit. Companies will not simply choose one AI stack. They will accumulate overlapping ones, each with its own model catalog, policy framework, data connectors, evaluation tools, and agent runtime. The next IT governance headache will not be shadow SaaS. It will be shadow agents.

The New Stack Demands Old-Fashioned Discipline

The practical response is not panic. It is discipline. Enterprises should treat AI platform decisions with the same seriousness they once reserved for ERP selection, database standardization, and identity architecture. The difference is that AI touches all of those at once.

The first rule is to separate experimentation from institutional architecture. A team should be able to test models quickly, but production workflows need standards for data access, logging, evaluation, human oversight, and rollback. The excitement around agents should not obscure the fact that autonomous software acting on business systems is a control problem before it is a productivity miracle.

The second rule is to document the non-obvious dependencies. Which vector store is being used? Where are embeddings generated? Which model-specific prompts are embedded in workflows? What proprietary connectors are required? How are agent actions logged? Can policies be exported? Can evaluations be replayed on another model or platform?
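One way to make those questions concrete is a small dependency register that records, per component, whether its state can be exported and whether its evaluations can be replayed elsewhere. The sketch below is hypothetical: the `AIDependency` record, its fields, the `exit_risks` helper, and the example providers are illustrative assumptions, not any hyperscaler's schema or API.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class AIDependency:
    """One component a production AI workflow relies on (hypothetical schema)."""
    name: str          # what the component is, e.g. "vector store"
    provider: str      # who operates it, e.g. a cloud provider or "self-hosted"
    exportable: bool   # can its data, prompts, or policies be exported in usable form?
    replayable: bool   # can its evaluations be rerun on another model or platform?


def exit_risks(deps: list[AIDependency]) -> dict[str, list[str]]:
    """Group, by provider, the dependencies that fail either portability check."""
    risks: dict[str, list[str]] = defaultdict(list)
    for dep in deps:
        if not (dep.exportable and dep.replayable):
            risks[dep.provider].append(dep.name)
    return dict(risks)


if __name__ == "__main__":
    # Illustrative entries only; real answers come from reviewing each workflow.
    inventory = [
        AIDependency("vector store", "cloud-a", exportable=True, replayable=True),
        AIDependency("embedding model", "cloud-a", exportable=False, replayable=True),
        AIDependency("agent policies", "cloud-b", exportable=False, replayable=False),
        AIDependency("evaluation set", "self-hosted", exportable=True, replayable=True),
    ]
    for provider, names in exit_risks(inventory).items():
        print(f"{provider}: {', '.join(names)}")
```

Run as a script, this prints `cloud-a: embedding model` and `cloud-b: agent policies`. The register is deliberately a plain record filled in by people rather than an automated scanner, since dependencies such as model-specific prompts or proprietary connectors tend to surface only when someone asks the questions above.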

The third rule is to negotiate before adoption hardens. Cloud contracts have always rewarded volume commitments, but AI adds new levers: inference pricing, data residency, model availability, custom silicon access, premium support, private networking, committed capacity, and governance tooling. The best time to ask for portability assurances is before the provider’s stack becomes the default.

The fourth rule is to keep humans in the architecture. Not as ceremonial approvers for every trivial action, but as accountable owners of workflows that affect customers, employees, security, finance, and compliance. Agentic systems will fail in strange ways. Enterprises need auditability and escalation paths, not just demos.

The Fine Print Behind the Full-Stack Promise

The most concrete lesson from the hyperscaler race is that AI strategy is now cloud strategy, application strategy, data strategy, and procurement strategy at the same time. The companies selling the stack are moving fast because the prize is not a single workload. It is durable control over the next enterprise computing layer.

- AWS is strongest when AI is treated as a programmable infrastructure problem, but it must prove it can create application-level gravity without owning the traditional productivity suite.

- Google Cloud has the most coherent silicon-to-model-to-data AI narrative, but customers should watch how deeply Gemini, BigQuery, Workspace, and TPU optimizations bind future workflows.

- Microsoft has the most powerful enterprise distribution channel because Copilot can appear where employees already work, but its context-layer ambitions could make switching away harder than a conventional cloud migration.

- Custom silicon will lower costs and improve performance in some cases, but it will also make cloud AI architectures more provider-specific.

- The new lock-in frontier is not only compute or storage; it is agents, embeddings, semantic layers, governance policies, workflow memory, and enterprise context.

Source: Constellation Research, "Google Cloud, AWS, Microsoft Azure: The AI vertical integration race"