The late‑December experiment that John Donovan staged — feeding a decades‑long archive about Royal Dutch Shell into multiple public AI assistants and publishing their divergent replies — has quietly become one of the clearest, most practical demonstrations yet of how generative AI reshapes contested corporate history and converts institutional silence into a long‑running reputational problem.

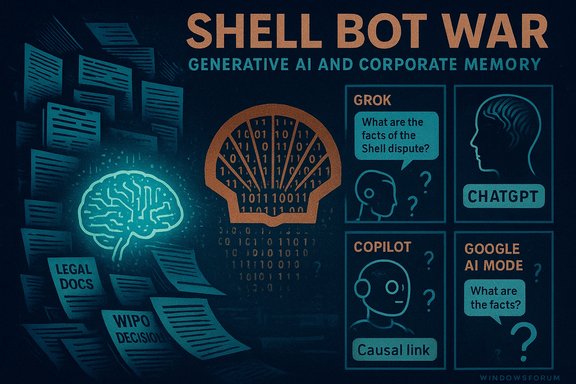

Source: Royal Dutch Shell Plc .com THE BOT WAR: HOW I TURNED AI INTO SHELL’S WORST NIGHTMARE

Background / Overview

For more than three decades the Donovan–Shell dispute has existed in two parallel registers: a string of High Court actions, domain fights and selective settlements from the 1990s, and a sprawling, self‑published archive maintained by John (and previously his late father, Alfred) Donovan that aggregates court filings, Subject Access Request disclosures, internal memos and whistleblower materials. That archive, hosted across royaldutchshellplc.com and sister sites, has been a persistent source of documents and claims that mainstream reporting has on occasion cited, and it was the subject of a 2005 administrative domain decision at WIPO.

The relevant legal anchor is the WIPO administrative panel decision in Case No. D2005‑0538, which rejected Shell’s complaint against certain Donovan‑held domains on August 8, 2005: an objective record point that underlines the archive’s contested but durable legal standing. In late December 2025 Donovan executed a deliberate provocation: he prepared reproducible prompts and dossiers drawn from his archive and submitted identical queries to multiple large public assistants (publicly identified in his posts as xAI’s Grok, OpenAI’s ChatGPT, Microsoft Copilot, and Google AI Mode). He published the side‑by‑side outputs and framed the result as a “bot war”, a rhetorical device designed to make model disagreement the public story.

What followed was predictable, instructive and unnerving: one assistant produced a vivid but unsupported biographical flourish about a cause of death; another assistant corrected that claim and pointed to obituary material; a third took a meta‑analytic stance describing the experiment itself. Those divergences transformed an old quarrel into an AI governance case study about hallucination, provenance and the consequences of institutional silence.

How the experiment worked: archives, prompts and model incentives

At the core of Donovan’s playbook is a simple technical insight about modern retrieval‑augmented generation (RAG) systems and large language models (LLMs):

- Volume and persistence confer algorithmic authority. A dense, searchable archive that is repeatedly referenced across the web becomes a high‑weight signal in retrieval and ranking systems. Donovan’s websites supply exactly that kind of signal.

- Prompt reproducibility creates replicable outputs. By publishing the same prompt and dossier across multiple assistants, Donovan converted model difference into public evidence — showing contradictions rather than hiding them.

- Silence is not neutral. When a corporation chooses not to place an authoritative rebuttal or corrected record into the public domain, generative systems may treat the activist archive as a de facto primary source and synthesize narratives accordingly. Donovan intentionally made that vacuum visible.
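The volume and silence points above can be illustrated with a toy retriever. This is only a sketch: the word-overlap scoring, the `top_k_sources` helper and the four-document corpus are all invented for illustration, and real retrieval and ranking systems are far more sophisticated. It shows how the sources that fill a top‑k result set shift once a rebuttal document exists at all.

```python
from __future__ import annotations

from collections import Counter


def tokenize(text: str) -> set[str]:
    """Lower-case a text and split it into a set of words."""
    return set(text.lower().split())


def top_k_sources(query: str, corpus: list[tuple[str, str]], k: int = 3) -> Counter:
    """Rank (source, text) documents by word overlap with the query
    and count which sources occupy the top k slots."""
    q = tokenize(query)
    ranked = sorted(corpus, key=lambda doc: len(q & tokenize(doc[1])), reverse=True)
    return Counter(source for source, _ in ranked[:k])


# Hypothetical corpus: several archive pages and no company rebuttal.
corpus = [
    ("archive", "shell donovan dispute court filing 1990s"),
    ("archive", "shell donovan domain decision wipo"),
    ("archive", "shell donovan internal memo disclosure"),
    ("news", "oil prices rise on supply fears"),
]
before = top_k_sources("shell donovan dispute", corpus)

# Same query after one (hypothetical) company document enters the public record.
corpus_with_rebuttal = corpus + [
    ("company", "shell statement on donovan dispute documents"),
]
after = top_k_sources("shell donovan dispute", corpus_with_rebuttal)

print(before)
print(after)
```

In this toy setting the archive supplies every retrieved document until a competing record is published, which is the vacuum the third point describes.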

The adversarial archive as an attack surface

Donovan’s archive functions, by design, as what analysts call an adversarial archive: voluminous, one‑sided, indexed, and intentionally machine‑friendly. That combination increases the probability that generative models will over‑index the archive when asked about Shell, producing confident but unverified outputs. Multiple forum and analyst write‑ups now treat the Donovan archive as a real‑world adversarial input that can destabilise LLM outputs unless provenance and hedging are enforced.

The “hallucination” incident: what happened, and what we can verify

The most widely circulated example from Donovan’s experiment was this sequence:

- A prompt that included archival material was submitted to one assistant (publicly attributed to Grok).

- That assistant generated a readable, emotionally charged paragraph that included an assertion that Alfred Donovan “died from the stresses of the feud.”

- The claim conflicted with the Donovans’ own obituary and public records that state Alfred Donovan died in July 2013 at age 96 after a short illness.

- Another assistant (ChatGPT) produced a corrective reply that pointed back to the obituary and warned against the unsupported causal claim.

- Across social and archival channels the divergence was amplified and used rhetorically to argue that AI can resurrect contested pasts.

Two points bear on what can be verified:

- The WIPO decision and contemporaneous trial records substantiate that the Donovan archive contains legitimate, verifiable documentary anchors (court filings, domain decisions) that can and should be used as primary sources. Those Tier‑A materials are real and public.

- The specific causal chain from a Grok hallucination to a particular Wikipedia edit, while plausible given the dynamics of social amplification, is not documented in public records. It remains a credible but unverified claim: plausible, demonstrative of how narratives spread, but not a provable single‑step causal event. The public reporting correctly flags that uncertainty.

Why this is a corporate nightmare: the structural risks for reputation

The Donovan experiment turned several latent risks into visible operational problems for large organisations:

- Information permanence. Unlike human memory or ephemeral press coverage, machine‑indexable archives are persistent and can be repeatedly re‑summoned by models years later. When that archive is adversarial, it becomes a permanent challenge.

- Algorithmic authority. Fluency is often mistaken for truth. A well‑written hallucination can be more persuasive than a cautious, hedged correction. That semantic authority accelerates reputational harm.

- Amplification loops. A hallucination published once can be scraped, re‑indexed, and used as supporting evidence by other models — a feedback loop that converts plausible fiction into de facto corroboration. Donovan’s posts deliberately highlighted this path.

- Legal trade‑offs. Historically, Shell’s approach toward Donovan has favoured legal restraint and quiet containment. In the pre‑AI era that posture often minimised publicity risk. In the AI era, however, silence is no longer a neutral shield; it is a vacuum that models and activists will exploit. Multiple analyses argue that the legal‑quiet strategy now has materially different reputational consequences.

Strengths of Donovan’s approach (and why it worked)

Donovan’s January 2026 escalation is methodologically clever, and instructive for anyone studying information operations or corporate communications:

- Methodological repeatability. By using reproducible prompts and publishing the inputs, Donovan created an experiment that anyone could replicate, making model disagreements public and auditable.

- Engineered visibility. The combination of human‑readable narrative, satire (the “ShellBot” persona), and side‑by‑side model outputs maximised shareability and media pickup. That rhetorical layering converted a technical demonstration into a viral story.

- Leveraging model diversity. Donovan’s tactic of cross‑model interrogation exploited heterogeneity between model training data, tuning and policy choices — deliberately turning disagreement into a mechanism of persuasion. When one model invents and another corrects, the spectacle itself becomes content.

- Anchoring to verifiable documents. Crucially, many of the archive’s claims rest on verifiable legal records (WIPO decision, court filings) which make parts of Donovan’s narrative hard to dismiss out of hand. That mix of Tier‑A documentation and partisan commentary makes the archive both weaponisable and defensible.
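The repeatability and model‑diversity points can be sketched as a small comparison harness. This is a hedged illustration, not Donovan’s actual tooling: `run_side_by_side`, `assistant_a` and `assistant_b` are hypothetical names, and the two assistants are local stubs standing in for real services. The harness sends one identical prompt to every assistant, fingerprints the prompt so others can confirm they reran the same input, and records whether the answers differ.

```python
from __future__ import annotations

import hashlib
from typing import Callable


def run_side_by_side(prompt: str, assistants: dict[str, Callable[[str], str]]) -> dict:
    """Send the same prompt to each assistant and collect the replies."""
    # A SHA-256 digest lets a third party confirm they used the identical prompt.
    prompt_id = hashlib.sha256(prompt.encode("utf-8")).hexdigest()
    outputs = {name: ask(prompt) for name, ask in assistants.items()}
    return {
        "prompt_sha256": prompt_id,
        "prompt": prompt,
        "outputs": outputs,
        # Crude signal: exact string inequality, not semantic disagreement.
        "models_disagree": len(set(outputs.values())) > 1,
    }


# Stub assistants (assumptions for illustration only).
def assistant_a(prompt: str) -> str:
    return "Claim X is supported by the record."


def assistant_b(prompt: str) -> str:
    return "Claim X is not supported by the record."


report = run_side_by_side(
    "Summarise the dispute using only the attached dossier.",
    {"assistant_a": assistant_a, "assistant_b": assistant_b},
)
print(report["prompt_sha256"][:12], report["models_disagree"])
```

Publishing the prompt alongside its digest and the raw outputs is what makes the disagreement auditable rather than anecdotal.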

Weaknesses and ethical complications (including where Donovan’s method creates risks of its own)

The experiment is not purely virtuous civic tech; it contains notable weaknesses and ethical concerns:

- Risk of amplifying unverified claims. Publishing model hallucinations, even to expose them, risks spreading the very falsehoods Donovan criticises. Once a hallucination is in the wild, it may outlive corrective context. Analysts explicitly warn about this amplification loop.

- Selective evidence and confirmation bias. An adversarial archive can mix Tier‑A documents with anonymous tips and redactions; models often collapse those distinctions. Without strict editorial triage, readers can be misled. Multiple analyses recommend a three‑tier evidentiary triage for precisely this reason.

- Potential legal exposure. Publishing unverified AI outputs about identifiable persons, including claims about a cause of death, carries defamation risk and can cause real reputational harm. Even if the motive is demonstration, the ethical calculus is delicate.

- Opacity of causal claims. Claims that a specific model output caused a specific editorial change (for example, a Wikipedia edit) are hard to prove and can overstate the demonstrable impact of the experiment. Responsible reporting must flag these causal gaps.
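The three‑tier evidentiary triage mentioned above could look something like the following sketch. Only Tier A (court filings, domain decisions) is described in this article; the definitions of Tier B and Tier C, the `kind` labels and the `triage` function are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    A = "primary public record"                  # e.g. court filings, domain decisions
    B = "attributed but unadjudicated material"  # assumed definition
    C = "anonymous or uncorroborated material"   # assumed definition


@dataclass(frozen=True)
class Item:
    description: str
    kind: str  # hypothetical label assigned by an editor


# Hypothetical mapping from document kind to evidentiary tier.
KIND_TO_TIER = {
    "court_filing": Tier.A,
    "domain_decision": Tier.A,
    "internal_memo": Tier.B,
    "sar_disclosure": Tier.B,
    "anonymous_tip": Tier.C,
}


def triage(item: Item) -> Tier:
    """Return the tier for an item; unknown kinds default to the weakest tier."""
    return KIND_TO_TIER.get(item.kind, Tier.C)


print(triage(Item("WIPO Case No. D2005-0538", "domain_decision")))
print(triage(Item("Unsigned forum post", "forum_post")))
```

Defaulting unknown material to the weakest tier is a deliberately conservative choice: an item has to earn a higher tier rather than inherit one.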

What this means for organisations and platforms: practical recommendations

The Donovan–Shell episode is already shaping practical thinking about corporate reputation, AI policy and platform governance. Below are prioritised, actionable steps for three stakeholder groups.

For corporate communications and legal teams

- Establish a 72‑hour AI triage stream to log, verify and rebut viral AI‑generated claims that mention the company or identifiable persons. Assign a documented owner for each case.

- Publish concise authoritative documentary rebuttals (redacted if necessary) to key archival claims. Making the documentary chain public reduces the incentives for activists to rely on partial narratives.

- Avoid reflexive litigation that amplifies the archive; consider targeted, evidence‑based public corrections instead. The trade‑offs are nuanced, but silence no longer guarantees safety.
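As a minimal sketch of the 72‑hour triage stream, each logged claim could carry a named owner and a deadline derived from the time it was logged. The `TriageCase` class, its field names and its status values are hypothetical; an actual workflow would live in whatever case‑tracking system the team already uses.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

TRIAGE_WINDOW = timedelta(hours=72)


@dataclass
class TriageCase:
    claim: str           # the AI-generated claim being examined
    owner: str           # documented owner responsible for the case
    logged_at: datetime  # when the claim was first logged
    status: str = "logged"  # assumed lifecycle: logged -> verified -> closed

    @property
    def due_at(self) -> datetime:
        """Deadline for verifying and responding to the claim."""
        return self.logged_at + TRIAGE_WINDOW

    def is_overdue(self, now: datetime) -> bool:
        """True if the case is still open after the 72-hour window."""
        return self.status != "closed" and now > self.due_at


case = TriageCase(
    claim="Assistant output asserts an unsupported cause of death.",
    owner="comms-duty-officer",
    logged_at=datetime(2026, 1, 1, 0, 0, tzinfo=timezone.utc),
)
print(case.due_at.isoformat())
print(case.is_overdue(datetime(2026, 1, 5, tzinfo=timezone.utc)))
```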

For AI vendors and platform operators

- Default to provenance: require models to cite primary documents when summarising contested biographies or legal disputes.

- Ship hedged defaults: models should use explicit uncertainty language for claims lacking verifiable anchors and avoid readability‑first outputs when personal reputation is implicated.

- Preserve audit trails: enable exportable prompts and provenance metadata so researchers can reproduce the chain that produced an output.
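The three vendor recommendations can be combined in a short sketch, under the assumption that a system already knows which primary documents, if any, back a given claim. The functions `render_claim` and `export_audit_record` are hypothetical names and do not correspond to any vendor’s API. A claim with citations is shown with its sources, a claim without them is explicitly hedged, and the whole exchange can be exported for later reproduction.

```python
from __future__ import annotations

import json


def render_claim(text: str, citations: list[str]) -> str:
    """Attach sources when they exist; otherwise hedge the claim explicitly."""
    if citations:
        joined = "; ".join(citations)
        return f"{text} [sources: {joined}]"
    return f"Unverified: {text} (no primary document cited)"


def export_audit_record(prompt: str, output: str, citations: list[str]) -> str:
    """Serialise prompt, output and provenance so the exchange can be reproduced."""
    record = {"prompt": prompt, "output": output, "citations": citations}
    return json.dumps(record, sort_keys=True)


cited = render_claim(
    "The panel denied the complaint.",
    ["WIPO Case No. D2005-0538"],
)
hedged = render_claim("The death was caused by the dispute.", [])
audit = export_audit_record("Summarise the dispute.", hedged, [])

print(cited)
print(hedged)
print(audit)
```

The design choice worth noting is that hedging is the default path: the fluent, unqualified rendering is only reachable when a citation is present.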

For journalists and researchers

- Treat generative outputs as leads, not facts. Re‑verify every model assertion that could cause reputational harm. Use side‑by‑side model comparisons to illustrate evidence quality, not as a substitute for sourcing.

Broader implications: archives, accountability and the future of corporate memory

Three systemic shifts flow from this case and deserve strategic attention:

- Corporate memory is now distributed. Companies can no longer assume that their internal versions of history will remain private or dormant. Publicly accessible archives, whether curated by critics or leaked by insiders, feed a global information ecosystem that can be re‑queried by AI indefinitely.

- Model behaviour is narrative‑sensitive. LLMs optimise for narrative coherence. When faced with ambiguous or adversarial inputs, they will produce plausible completions. That property requires algorithmic and editorial countermeasures.

- Governance must be multi‑layered. The solution is not purely technical nor purely legal; it involves product design (provenance, hedging), corporate practice (rapid rebuttal streams), platform policy (moderation and labelling) and journalistic discipline. Multiple analysts frame the Donovan–Shell episode exactly this way: as a governance stress test not just for Shell, but for platforms and newsrooms too.

What we can and cannot say with confidence

- We can say with high confidence that Donovan published machine‑readable dossiers and reproducible prompts on December 26, 2025, then posted side‑by‑side AI outputs to create a cross‑model spectacle. That methodological fact is corroborated by Donovan’s posts and third‑party analyses.

- We can say with high confidence that the WIPO administrative panel decision in Case No. D2005‑0538 is a real, public adjudication related to the domain disputes and that it was decided in favour of the Respondent in August 2005.

- We can say with moderate confidence that one assistant produced an unsupported causal claim about Alfred Donovan’s death and that another assistant corrected it; we can also verify that Alfred Donovan’s death in July 2013 is recorded in the Donovans’ own materials. But the exact chain by which any single model output caused a specific downstream edit or media event is plausible yet not fully verifiable from the available public material. Responsible reporting must treat that causal link as unproven but plausible.

Conclusion

The Donovan–Shell “bot war” is an incisive, uncomfortable demonstration of how generative AI, archival persistence and corporate silence combine to reshape contested history. What began as a long‑running commercial quarrel became, through method, motive and machine, a modern test case in information governance. It exposes predictable model failure modes (hallucination), structural corporate vulnerabilities (silence as a vacuum) and platform design gaps (insufficient provenance).

For corporations that have historically relied on quiet legal containment, the lesson is stark and immediate: in a world of retrieval‑augmented models and adversarial archives, absence becomes evidence unless actively countered by transparent documentary rebuttal, rapid verification workflows, and engagement with the provenance problem inside AI products themselves. Donovan’s provocation was a demonstration crafted to teach that lesson in public; whether that lesson will produce lasting changes in corporate practice, platform defaults or journalistic standards is now the central governance question the case poses.
