The arrival of advertising inside conversational AI is no longer hypothetical — major platforms have begun placing clearly labeled ads and sponsored prompts inside chat interfaces, and the shift promises to reshape user experience, publisher economics, and brand strategy in profound ways.

Background

Conversational interfaces captured mainstream attention after 2022, and their growth has created a new attention economy where intent signals are richer than traditional search queries. Chatbots routinely synthesize answers, compare options, and in some cases enable checkout flows, which are exactly the moments advertisers prize. That dynamic has turned conversational AI into prime real estate for commerce and advertising, while simultaneously exposing tensions between monetization and trust.

In early 2026 several major vendors publicly signaled or began testing ad placements in chat experiences. The most notable move came when one leading assistant announced a pilot to show ads to logged‑in adult users of its free and low‑cost tiers, while keeping higher‑priced tiers ad‑free, a framing that positioned advertising as a subsidy to keep broad access affordable. Platforms simultaneously emphasized principles such as answer independence (ads will not change model outputs) and conversation privacy (advertisers will not receive raw chat content). Those commitments are substantive product claims that will be tested as pilots scale.

At the same time, competitors and critics used the debate to draw distinctions: some vendors promoted ad‑free positioning as a trust differentiator, while others highlighted potential harms from placing commercial content inside what many users treat as a personal assistant. The clash established reputational risk as a central factor in how ad experiences are perceived and regulated.

Why ads are landing in chat now

The economics: compute is expensive

Large multimodal models require significant compute and infrastructure. Subscriptions and enterprise contracts help, but they do not always scale to cover costs for free consumer tiers. Advertising, historically a scalable revenue stream, is an obvious lever to underwrite free access at scale. Platforms see conversational surfaces as particularly valuable ad inventory because they capture explicit commercial intent inside a dialog.

Intent density and conversion potential

A user asking “best blender for smoothies under $150” communicates far more precise purchase intent than a short keyword search. That intent density means ads shown at the moment of decision have the potential to convert at higher rates than generic display inventory. Early platform data and industry reports referenced by vendors suggest measurable lift for conversational ads versus legacy formats. These claims have been widely circulated in vendor materials and industry coverage, though independent verification is limited at scale.

Publisher pressure and revenue models

AI assistants that synthesize information often reduce clicks to source sites, raising concerns among publishers about referral erosion. In response, some platforms have proposed or launched revenue‑share programs and publisher partnerships to compensate outlets whose content informs answers or appears alongside sponsored placements. These programs are uneven today and may become a major point of negotiation as ad inventory grows.

How ads are being implemented: current patterns

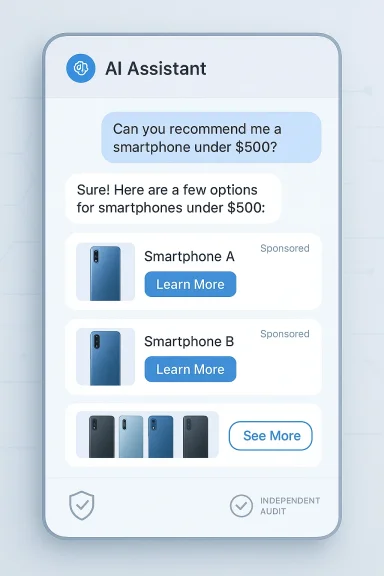

Early ad formats are experimental and platform-specific, but common patterns are emerging:

- Clearly labeled cards or banners shown beneath an assistant’s answer rather than woven into generated text. Vendors emphasize visual separation to preserve answer independence.

- Sponsored follow‑up prompts or suggested next questions that bear a “sponsored” badge and invite the user to learn more. These nudge-based formats are designed to be opt‑in.

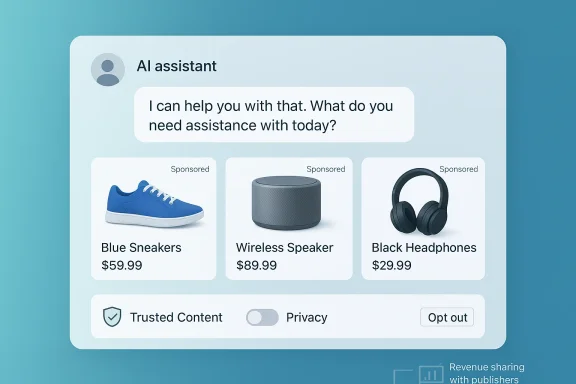

- Shoppable product cards and carousels that surface inventory, pricing, and CTAs (buy, learn more) without forcing the user to leave the chat. Early prototypes or teardowns revealed such card formats and embedded merchant flows.

- Contextual but non‑personalized targeting at launch, where ad matching relies on topical context rather than selling raw chat transcripts to advertisers; vendors promise user controls for personalization. These are policy claims that require verification.
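The contextual, non‑personalized matching described in the last bullet can be illustrated with a minimal sketch. Everything here is an assumption made for illustration: the `Ad` schema, the keyword-overlap scoring, the `min_overlap` threshold, and the card payload shape are hypothetical and do not describe any vendor's actual system. The point is only that the matcher reads the current query and nothing else, and that the ad is returned as a separate labeled card rather than merged into the answer text.

```python
import re
from dataclasses import dataclass
from typing import FrozenSet, List, Optional, Set


@dataclass(frozen=True)
class Ad:
    ad_id: str
    keywords: FrozenSet[str]  # topical keywords; hypothetical schema


def tokenize(text: str) -> Set[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def match_contextual_ad(query: str, ads: List[Ad], min_overlap: int = 2) -> Optional[Ad]:
    """Return the ad with the most keyword overlap with the current query.

    Only the query text is consulted: no user ID, history, or stored profile.
    Returns None when no ad reaches min_overlap (an assumed threshold).
    """
    tokens = tokenize(query)
    best: Optional[Ad] = None
    best_score = 0
    for ad in ads:
        score = len(tokens & ad.keywords)
        if score > best_score:
            best, best_score = ad, score
    return best if best_score >= min_overlap else None


def render_sponsored_card(ad: Ad) -> dict:
    """Build a card payload kept separate from the answer text and labeled."""
    return {"type": "sponsored_card", "label": "Sponsored", "ad_id": ad.ad_id}


# Illustrative inventory only.
ADS = [
    Ad("blender-001", frozenset({"blender", "smoothies", "kitchen"})),
    Ad("shoes-002", frozenset({"running", "shoes", "marathon"})),
]
```

With this inventory, the query “best blender for smoothies under $150” matches the blender ad, while a query with no commercial overlap returns no ad at all. A production matcher would likely use a topic classifier rather than raw keyword overlap; the design choice worth noting is that the function signature has no place to pass a user profile.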

Platform reactions, positioning, and competitive theater

Open positioning, ad pilots, and guardrails

One major assistant publicly announced a measured rollout: ads for logged‑in adult users on lower‑cost tiers, with paid tiers remaining ad‑free. The vendor framed the move as a pragmatic way to subsidize access while committing to guardrails like answer independence and conversation privacy. The announcement prompted intense public debate and competitor responses.

Rivals weaponize trust claims

Competitors used high‑visibility channels to criticize ad placements in chat, positioning ad‑free experiences as morally or practically superior. Those critiques are both marketing and governance plays: they aim to win users who value perceived impartiality, while also drawing regulatory and reputational scrutiny to the ad‑supporting platforms. This dynamic has made public communication and transparency central to product launches.

Microsoft, Perplexity and others: varied strategies

Microsoft has been integrating sponsored and commerce features into Copilot‑adjacent surfaces and has published guidance for advertisers on how to approach conversational inventory; vendor materials cite strong performance metrics for some formats in controlled tests. Perplexity and similar answer engines experimented with “sponsored follow‑ups” and publisher revenue‑share pilots before the broader 2026 wave, indicating a diversity of commercial approaches across the ecosystem.

Strengths, opportunities, and measurable upsides

- High‑intent targeting: Conversational ads can reach users at the exact moment of decision, increasing the likelihood of conversion. Platforms claim meaningful uplifts for certain ad formats versus traditional search or display.

- Funding broad access: Ads can subsidize free and low‑cost tiers, preserving inclusive access for users who cannot or will not subscribe. This is the principal business case vendors emphasize publicly.

- New publisher revenue pathways: Revenue‑share programs tied to ad placements or direct partnerships can compensate content creators and publishers, potentially offsetting referral declines if designed fairly. Early pilots from a handful of vendors demonstrate the model in principle.

- Improved user relevance: When done with careful controls, ads that genuinely solve a user’s query (discounts, localized inventory, quick booking) can add utility rather than detract from the conversation. Early user sentiment metrics cited by some vendors show a nontrivial share of users reporting enhanced ad experiences.

Risks, failure modes, and what to watch

These upsides come with substantial hazards. Platforms, brands, and regulators should watch for these failure modes:

- Trust erosion and churn: If ads feel deceptive, indistinguishable from assistant output, or manipulative, users may abandon free tiers or migrate to ad‑free competitors. Trust is the single most fragile asset for conversational assistants.

- Opaque personalization and privacy slippage: Promises not to sell raw chat transcripts or to limit personalization sound reassuring, but they require independent verification. Memory features and cross‑session personalization can create long‑lived profiles with unclear retention and secondary‑use policies. These are substantive regulatory and ethical risks.

- Publisher disintermediation: As assistants synthesize answers, direct referral traffic to journalism and specialist sites can decline. Unless revenue‑share models scale equitably, publishers risk being squeezed out of the value chain.

- Measurement fraud and attribution gaming: Ad tech built around pageviews and cookies is not natively suited to session‑based conversational flows. Without new verification standards, there’s a material risk of inflated or fraudulent metrics.

- Regulatory backlash: Weak transparency, hidden targeting, or inappropriate ad placements in sensitive contexts could provoke stricter data‑protection, consumer‑protection, or advertising‑practice rules. Policymakers already scrutinize whether novel AI behaviors require new legal guardrails.

Tactical checklist for brands, publishers, and product teams

Below are practical steps stakeholders should take now to prepare for conversational ad surfaces. These are short, actionable priorities that can be implemented in parallel.

For brands and advertisers

- Build conversational‑ready creative: short, useful messages that add value to a chat flow rather than interrupt it.

- Strengthen technical SEO and structured data: make canonical content easy for assistants to find and attribute. Publishers that use clear Q&A, schema markup, and API access increase their odds of being surfaced fairly.

- Demand transparent measurement: insist on auditable attribution pipelines, anti‑fraud safeguards, and session‑level analytics tailored to chat experiences.

- Define brand safety and context exclusions: set explicit rules to avoid placements in sensitive or reputationally risky queries.

For publishers and creators

- Negotiate revenue‑share arrangements or referral guarantees when your content is surfaced and monetized. Consider API partnerships, paywalls, or direct licensing to preserve value.

- Optimize “answerability” of your content: short, well‑sourced paragraphs and clear attribution increase the likelihood assistants will cite you.
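The structured-data and “answerability” advice above can be made concrete with a small sketch that emits schema.org `FAQPage` markup as JSON-LD. The helper name `build_faq_jsonld` and the example question and answer are placeholders invented for illustration. Whether any particular assistant reads or rewards this markup is an assumption, not something the source confirms.

```python
import json
from typing import List, Tuple


def build_faq_jsonld(qa_pairs: List[Tuple[str, str]]) -> str:
    """Serialize question/answer pairs as schema.org FAQPage JSON-LD."""
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(doc, indent=2, ensure_ascii=False)


# Placeholder content for illustration only.
EXAMPLE_JSONLD = build_faq_jsonld(
    [("Which blender suits smoothies under $150?",
      "See our tested picks and the methodology behind them.")]
)
```

The resulting string would typically be embedded in the page inside a `<script type="application/ld+json">` element, so that the short, well-sourced answer is machine-readable alongside the human-readable copy.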

For platform and product teams

- Standardize visible labeling and vendor‑neutral disclosure at every conversational surface. Users must be able to tell, at a glance, what is generated content and what is advertising.

- Offer clear controls: toggles to disable personalization, separate ad‑interest histories, and simple ways to delete ad data. Make the defaults privacy‑forward.

- Exclude sensitive categories by policy and by technical enforcement, and do not let ad revenue pressure erode those exclusions. Health, mental health, political and safety‑critical conversations are poor candidates for monetization.

- Sponsor independent audits of ad selection logic and privacy claims. Third‑party verification will be the clearest way to move from promises to credibility.
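As a rough illustration of the guardrails listed above, the sketch below shows one way a platform might gate an ad request in code before any ad is selected. All names here (`SENSITIVE_CATEGORIES`, `UserAdSettings`, `AdDecision`, `decide_ad`) are hypothetical, and the conversation category is assumed to come from an upstream classifier that is out of scope. It is a sketch of the pattern, not a description of how any vendor enforces policy.

```python
from dataclasses import dataclass
from typing import Optional

# Assumed category names, mirroring the sensitive areas named in the text.
SENSITIVE_CATEGORIES = frozenset(
    {"health", "mental_health", "political", "safety_critical"}
)


@dataclass(frozen=True)
class UserAdSettings:
    personalization_enabled: bool = False  # privacy-forward default: off


@dataclass(frozen=True)
class AdDecision:
    show_ad: bool
    reason: str
    label: Optional[str] = None
    personalized: bool = False


def decide_ad(conversation_category: str, settings: UserAdSettings) -> AdDecision:
    """Gate an ad request before any ad is selected.

    conversation_category is assumed to come from an upstream classifier.
    """
    if conversation_category in SENSITIVE_CATEGORIES:
        # Hard block: enforced in code, independent of any bid or revenue signal.
        return AdDecision(show_ad=False, reason="sensitive_category")
    return AdDecision(
        show_ad=True,
        reason="eligible",
        label="Sponsored",  # every served ad carries a visible label
        personalized=settings.personalization_enabled,
    )
```

The design choice is that the sensitive-category check runs first and takes no revenue input, so there is nothing for commercial pressure to tune. Each decision also records a `reason`, which gives an independent auditor something concrete to sample.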

Measurement and technical challenges

Conversational ad inventory requires new measurement primitives:

- Session‑level attribution that can tie an in‑chat click or card impression to downstream conversions while protecting privacy. Legacy last‑click and cookie models are insufficient.

- Impression validation to ensure an ad actually rendered and a real user saw it (not a test environment or bot). Ad tech must evolve to define what constitutes a view inside a chat UX.

- Anti‑fraud and bot detection adapted to conversational flows. Without this, bad actors could simulate high‑intent queries or inflate conversion metrics.
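To make these primitives less abstract, here is a minimal sketch that combines a pseudonymized session key, a simple impression validity check, and a windowed attribution rule. The constants `ATTRIBUTION_WINDOW_S` and `MIN_VISIBLE_MS`, the record fields, and the `bot_suspected` flag are all assumptions chosen for illustration; none of them is an industry standard. The attribution rule used here is deliberately simple (latest valid impression in the same session) and stands in for whatever model a real standard might define.

```python
import hashlib
import hmac
from dataclasses import dataclass
from typing import List, Optional

ATTRIBUTION_WINDOW_S = 24 * 3600  # assumed window; not an industry standard
MIN_VISIBLE_MS = 1000             # assumed viewability threshold for a chat card


def pseudonymize_session(session_id: str, secret: bytes) -> str:
    """Keyed hash so reports never carry the raw session identifier."""
    return hmac.new(secret, session_id.encode("utf-8"), hashlib.sha256).hexdigest()


@dataclass(frozen=True)
class Impression:
    session_key: str
    ad_id: str
    ts: float            # seconds since epoch
    visible_ms: int      # how long the card was on screen
    bot_suspected: bool  # assumed output of a separate bot-detection step


@dataclass(frozen=True)
class Conversion:
    session_key: str
    ad_id: str
    ts: float


def is_valid_impression(imp: Impression) -> bool:
    """Count an impression only if it was visible long enough and not bot-flagged."""
    return imp.visible_ms >= MIN_VISIBLE_MS and not imp.bot_suspected


def attribute(conv: Conversion, impressions: List[Impression]) -> Optional[Impression]:
    """Return the latest valid impression for the same session and ad that
    precedes the conversion within the attribution window, else None."""
    candidates = [
        imp for imp in impressions
        if imp.session_key == conv.session_key
        and imp.ad_id == conv.ad_id
        and is_valid_impression(imp)
        and 0 <= conv.ts - imp.ts <= ATTRIBUTION_WINDOW_S
    ]
    return max(candidates, key=lambda imp: imp.ts, default=None)
```

Because invalid impressions are filtered out before attribution, a card that flashed on screen briefly or was served to suspected automation cannot claim a conversion. That is the property an auditable pipeline would need to demonstrate to advertisers.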

Regulatory and policy landscape — what governments and watchdogs should demand

Regulators should consider the following minimal expectations for conversational advertising:

- Transparent labeling rules so users cannot reasonably mistake an ad for an assistant’s independent answer.

- Clear consent regimes for personalization and memory use, including straightforward ways for users to opt out and delete ad‑related profiles.

- Protections for sensitive topics that effectively ban or severely restrict ad placements in medical, mental health, political, or other high‑stakes conversations.

- Disclosure and revenue‑share transparency when content from publishers is reused in monetized answers; this should include reporting on how revenue is allocated.

- Auditable measurement standards to reduce fraud and provide verified performance signals to advertisers.

Practical advice for everyday users

- Prefer paid tiers if privacy and an ad‑free experience matter to you; many vendors have explicitly preserved ad‑free experiences for subscribers.

- Treat sponsored suggestions skeptically: verify product claims and consult independent reviews for purchases, health, or finance decisions. Chat assistants are helpful, but sponsored content can introduce bias.

- Use platform privacy controls: disable personalization, clear memory or conversation history, and opt out of ad personalization where available. These controls are the best immediate protections for most users.

What success looks like — and what failure will cost

Success for conversational advertising is not merely high CPMs or quick conversion lifts. It requires a durable and trust‑preserving architecture where:

- Users understand when they are being advertised to and can control how their data is used.

- Publishers are fairly compensated when their content is used to generate revenue.

- Advertisers get verifiable, auditable metrics that map to real commerce without opening new fraud channels.

Final analysis: an industry‑shaping experiment that must be engineered carefully

The push to monetize conversational AI with advertising is the logical next act in a technology that captures very focused intent. The potential benefits are real: broader access to capable assistants, new revenue for publishers, and highly relevant ad experiences for users. But these benefits are contingent on a single condition: platforms must prioritize trust as an engineering requirement, not a marketing afterthought.

The coming months will be telling. Early pilots show how ads could be visually separated and labeled, and vendors are publishing principles around answer independence and privacy. Yet principles without verification are fragile; independent audits, transparent measurement standards, fair publisher economics, and robust user controls must follow swiftly if the space is to avoid a reputational crisis.

For brands and publishers, the advice is straightforward: experiment, but insist on transparency and verification. For platform designers and product managers, the existential priority is clear: ship guardrails first, revenue second. If the industry strikes that balance, conversational ads can fund broad access without destroying the trust that makes assistants valuable. If it fails, the backlash and regulatory correction could be swift and severe.

The advertising frontier in AI chatbots is open for design and governance. The choices companies make now will determine whether this new surface becomes a welcome convenience or a costly erosion of a fragile trust economy.

Source: hpenews.com New world for users and brands as ads hit AI chatbots