Brands that treat AI as "just another marketing channel" are already behind: conversational assistants are changing how people discover, evaluate and transact online, and unless companies rebuild mobile apps and websites to be machine-readable, they risk becoming invisible to the next generation of search and agent-driven experiences.

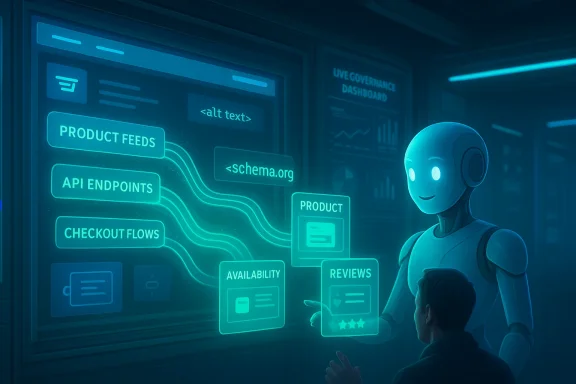

Source: Customer Experience Dive Why brands need to optimize their mobile apps and websites for AI

Background

The shift is simple in concept and messy in practice. Users increasingly rely on large language model (LLM)–powered assistants and agentic systems (ChatGPT, Google Gemini, Microsoft Copilot and a growing set of platform-specific copilots) to find answers, summarize options and take actions on their behalf. That trend is already visible in traffic and platform product moves: a recent Ahrefs analysis found that roughly 63% of sites received at least one visit from an AI chatbot during the study period, and sector studies show AI referrals are growing quickly even if they remain a small slice of total traffic today.

At the same time, analysts and vendors project rapid adoption of AI-mediated search experiences. Semrush and other market trackers project that AI search visits could overtake traditional search visits within a few years, making the optimization task urgent for brands that depend on discovery and direct response.

Platform vendors are accelerating the change by embedding apps and APIs directly into assistant environments. OpenAI’s Apps SDK and pilot partners — including recognized consumer brands — let users interact with third-party services inside ChatGPT conversations, turning the assistant itself into a distribution surface. That capability makes a brand’s web or app presence available in new ways — but only if the brand makes its data and actions agent-friendly.

Why this matters now: the agentic inflection point

From search engine optimization to agentic engine optimization

Historically, digital teams optimized for Google’s crawl-and-rank model: semantic HTML, structured data (schema.org), meta descriptions and backlinks. Those practices are still useful, but they are necessary rather than sufficient in an era when assistants synthesize answers from multiple sources and can act on users’ behalf (booking flights, reserving tables, completing transactions).

Industry playbooks now use new terms, namely Answer Engine Optimization (AEO), Generative Engine Optimization (GEO) and agentic engine optimization, to describe the broader task: make facts, availability and transactional surfaces machine-readable and safe for agents to use. This requires three parallel data strategies: pushable product feeds/APIs, authoritative crawlable content, and a reliable live-action experience (the checkout or booking flow) that agents can use without failing.

The practical consequence: you can win shelf space — or be omitted entirely

When an assistant composes a recommendation it weighs price, availability, ratings, provenance and the agent’s ability to complete a task. If your product feed is stale, your review data is sparse, or your checkout breaks when a bot interacts with it, an assistant will simply choose a competitor, and users will never see your site or app. Microsoft and other vendors explicitly warn that AI “doesn’t just read your site — it acts on it.” Preparing all three data highways is therefore not optional.

Where current mobile apps and websites fall short

- Built for humans, not agents. Most mobile and web experiences are optimized for visual layout and touch-driven flows, not for the programmatic, text- or API-first interactions assistants prefer.

- Fragile automation. Today’s agents often rely on brittle workarounds — screenshots, coordinate clicks or simulated DOM interactions — to navigate pages built for human use, leading to slow, error-prone automation and broken customer flows.

- Poor machine signals. Missing or inconsistent schema, incorrect HTML semantics, absent alt text, and stale metadata make it hard for models to find canonical answers they can cite confidently.

- Fragmented ownership. Marketing teams control canonical pages, product teams control APIs, and legal owns policy language; without coordinated governance, the signals an agent needs will be inconsistent or absent.

- Measurement gaps. Traditional last-click attribution fails in a “zero-click” world where assistants synthesize answers rather than sending raw clicks to websites. That undermines how marketing success is measured today.

What optimization actually means: practical tactics that work

Below are concrete, prioritized actions brands should implement now to be discoverable, actionable and safe for agentic assistants.

1) Improve your machine-readable fundamentals

- Use clean, semantic HTML (correct headings, paragraphs and list semantics). Agents parse structure to determine hierarchy, intent and relevance.

- Publish and maintain structured data (JSON-LD using schema.org types, plus OpenGraph tags): Product, Offer, AggregateRating, FAQPage and LocalBusiness where relevant. These are the canonical facts agents prefer to cite.

- Provide high-quality metadata: concise titles and descriptions, canonical URLs and explicit content freshness timestamps (dateModified). Freshness matters to agents that score content for currency.
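To make the structured-data bullet concrete, here is a minimal Python sketch that renders schema.org Product markup as JSON-LD. It assumes product data is available at page-render time; the helper names (`product_jsonld`, `as_script_tag`) and the example values are hypothetical, while the property names (`sku`, `gtin13`, `offers`, `priceCurrency`, `availability`, `aggregateRating`) are standard schema.org vocabulary.

```python
import json


def product_jsonld(name, sku, gtin13, price, currency, in_stock, rating, review_count):
    """Build schema.org Product markup with a nested Offer and AggregateRating (hypothetical helper)."""
    availability = "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "sku": sku,
        "gtin13": gtin13,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",  # fixed two-decimal string
            "priceCurrency": currency,  # ISO 4217 code, e.g. "USD"
            "availability": availability,
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating,
            "reviewCount": review_count,
        },
    }


def as_script_tag(data):
    """Wrap the JSON-LD in the script element that crawlers and agents look for."""
    return '<script type="application/ld+json">' + json.dumps(data, indent=2) + "</script>"


if __name__ == "__main__":
    # Illustrative values only.
    markup = product_jsonld("Trail Runner 2", "TR2-BLK-42", "0012345678905", 89.0, "USD", True, 4.6, 212)
    print(as_script_tag(markup))
```

Generating the markup from the same record that drives the visible page keeps the two from drifting apart.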

2) Expose authoritative feeds and APIs (the push layer)

- Publish product feeds/APIs that include price, availability, SKU/GTIN, promotion windows and timestamps. Match update frequency to how quickly each product changes (near real-time for fast-moving SKUs). Agents rely heavily on feeds to compare offers.

- Use standard data formats (e.g., structured product feeds, OpenAPI for REST endpoints) so vendor connectors can reliably consume your data. APIs are the most agent-friendly interface because they remove the ambiguity of HTML scraping.
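A feed only helps agents if it is fresh. The sketch below assumes a hypothetical in-house feed with a timezone-aware `updated_at` timestamp per SKU and a made-up velocity tier per product; it flags items whose last sync exceeds their freshness budget. The tiers and thresholds are illustrative, not recommendations.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical freshness budgets: fast-moving SKUs must be re-synced far more often.
MAX_AGE = {
    "fast": timedelta(minutes=15),
    "standard": timedelta(hours=6),
    "slow": timedelta(days=1),
}


@dataclass
class FeedItem:
    sku: str
    gtin: str
    price: float
    currency: str
    availability: str     # e.g. "in_stock", "out_of_stock"
    velocity: str         # key into MAX_AGE
    updated_at: datetime  # timezone-aware time of the last sync


def stale_items(items, now=None):
    """Return the SKUs whose last update is older than their freshness budget."""
    now = now or datetime.now(timezone.utc)
    return [item.sku for item in items if now - item.updated_at > MAX_AGE[item.velocity]]


if __name__ == "__main__":
    now = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
    feed = [
        FeedItem("A1", "0012345678905", 19.99, "USD", "in_stock", "fast", now - timedelta(minutes=40)),
        FeedItem("B2", "0012345678912", 249.00, "USD", "in_stock", "slow", now - timedelta(hours=3)),
    ]
    # A1 is 40 minutes old against a 15-minute budget; B2 is well within one day.
    print(stale_items(feed, now))
```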

3) Harden the action layer (agent-navigable flows)

- Ensure live workflows (add-to-cart, promotion application, saved payment completion, booking confirmation) are navigable by an agent and fail gracefully. A broken checkout is a hard stop to conversions even if you win the initial recommendation.

- Mark up reviews and ratings with Review/AggregateRating schema so assistants can quantify social proof, and add *verified purchase* flags and structured Q&A where possible.
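For an agent, failing gracefully mostly means returning a structured, documented outcome instead of an HTML error page. Here is a hypothetical checkout handler in Python; the `CheckoutResult` fields and the `OUT_OF_STOCK` code are assumptions for illustration, not any platform's API.

```python
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class CheckoutResult:
    status: str                # "confirmed" or "failed"
    order_id: Optional[str]    # tracking ID an agent can quote back to the user
    error_code: Optional[str]  # stable, documented code instead of an error page
    retryable: bool            # tells the agent whether retrying could succeed
    message: str               # short human-readable summary


def checkout(cart, inventory, next_order_id):
    """Hypothetical handler: validate all lines first, so a failure leaves stock untouched."""
    for sku, qty in cart.items():
        if inventory.get(sku, 0) < qty:
            return CheckoutResult("failed", None, "OUT_OF_STOCK", False, f"{sku} has insufficient stock")
    for sku, qty in cart.items():
        inventory[sku] -= qty
    return CheckoutResult("confirmed", next_order_id, None, False, "Order placed")


if __name__ == "__main__":
    stock = {"TR2-BLK-42": 1}
    print(json.dumps(asdict(checkout({"TR2-BLK-42": 2}, stock, "ORD-1001"))))
    print(json.dumps(asdict(checkout({"TR2-BLK-42": 1}, stock, "ORD-1001"))))
```

The same JSON shape can double as the machine-readable receipt on success.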

4) Signal access and control to models (AI.txt and provenance)

- Add an AI.txt (analogous to robots.txt, but for AI agents) to declare how your content may be used, whether it may be included in model training, and which endpoints are preferred for agent access. This is an emerging convention rather than a formal standard, but it gives brands one more way to signal how models should interact with their content.

- Surface provenance and authorship metadata in machine-readable form: publisher name, last-updated, brand verification tokens or digital signatures for canonical pages. Provenance reduces hallucination risk and improves citation confidence.
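One simple form of machine-readable provenance pairs publisher and freshness metadata with a content digest. This sketch assumes a hypothetical `contentSha256` field and hypothetical helper names. Note that a SHA-256 digest only shows the page was not altered; it does not prove who published it. Proving authorship would require signing the record with a private key, which Python's standard library does not provide.

```python
import hashlib


def provenance_record(publisher, url, date_modified, body):
    """Attach publisher, freshness and a SHA-256 content digest to a canonical page."""
    digest = hashlib.sha256(body.encode("utf-8")).hexdigest()
    return {
        "publisher": publisher,
        "url": url,
        "dateModified": date_modified,  # ISO 8601 timestamp
        "contentSha256": digest,        # hypothetical field name
    }


def matches(record, body):
    """Check that the page body an agent fetched is the one the record describes."""
    return hashlib.sha256(body.encode("utf-8")).hexdigest() == record["contentSha256"]


if __name__ == "__main__":
    page = "<main>Trail Runner 2: $89.00</main>"
    rec = provenance_record("Example Brand", "https://www.example.com/p/tr2", "2025-01-01T12:00:00Z", page)
    print(rec)
    print(matches(rec, page))
```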

5) Vectorize and prepare multimodal assets

- Provide machine-friendly image assets and, where relevant, vectorized representations; include descriptive alt text and transcripts for video and audio. Multimodal assistants use these cues when summarizing or executing tasks that involve your content.

6) Establish governance, telemetry and human-in-the-loop controls

- Build audit trails for agent actions, require least-privilege connectors for external agents, and set up human approval gates for high-risk actions (payments, account changes). Agents can execute tasks; governance must ensure they act correctly.

- Use server-side telemetry to measure session value (assistant sessions, successful handoffs) rather than relying solely on last-click. New experiment designs must compare cohorts with and without assistant referrals.
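Least-privilege scopes, approval gates for high-risk actions and an audit trail can be combined in a single gateway in front of agent connectors. This is a minimal sketch; the scope names, the `HIGH_RISK` set and the `AgentGateway` class are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical policy: actions that always need a human sign-off.
HIGH_RISK = {"payment", "account_change"}


@dataclass
class AgentGateway:
    granted_scopes: set  # least privilege: only what this connector needs
    audit_log: list = field(default_factory=list)

    def request(self, agent_id, action, approved_by=None):
        """Decide whether an agent action may run, and record every decision."""
        if action not in self.granted_scopes:
            decision = "denied_scope"
        elif action in HIGH_RISK and approved_by is None:
            decision = "pending_approval"  # human-in-the-loop gate
        else:
            decision = "allowed"
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "agent": agent_id,
            "action": action,
            "approved_by": approved_by,
            "decision": decision,
        })
        return decision


if __name__ == "__main__":
    gw = AgentGateway({"read_catalog", "payment"})
    print(gw.request("assistant-1", "payment"))
    print(gw.request("assistant-1", "payment", approved_by="ops@example.com"))
```

Denied and pending requests are logged too, so the trail shows what agents attempted, not only what they did.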

Implementation: a phased engineering plan

- Discovery (Weeks 0–4)

  - Inventory canonical pages, APIs, feeds and transactional flows.

  - Run a crawl that simulates agent visits to identify broken paths and missing schema.

- Clean-up (Weeks 4–12)

  - Apply semantic HTML, add missing schema types, fix broken meta tags and alt text.

  - Implement AI.txt and publish a canonical “brand facts” page for agents.

- API & Feed parity (Weeks 8–20)

  - Build or stabilize feeds/APIs for product, availability and booking data with timestamps.

  - Add versioning and status fields for dynamic attributes.

- Action-hardening (Weeks 12–28)

  - Make checkout and booking flows agent-testable; enable test-mode credentials for agent studios.

  - Add machine-readable confirmation messages, tracking IDs and structured receipts.

- Governance & measurement (Ongoing)

  - Establish agent IAM, logging, and human-in-the-loop approval policies.

  - Run controlled experiments to measure assistant-driven lift and re-evaluate priorities.
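The discovery-phase crawl can start small. This sketch uses Python's built-in `html.parser` to pull a few agentability signals from one page: the JSON-LD types present, images without alt text, and the number of `h1` headings. The `AgentabilityAudit` class is hypothetical; a real crawl would fetch every URL in the inventory and check many more signals.

```python
import json
from html.parser import HTMLParser


class AgentabilityAudit(HTMLParser):
    """Collect a few machine-readability signals from one HTML page (hypothetical helper)."""

    def __init__(self):
        super().__init__()
        self.jsonld_types = []
        self.images_missing_alt = 0
        self.h1_count = 0
        self._in_jsonld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "script" and attrs.get("type") == "application/ld+json":
            self._in_jsonld, self._buffer = True, []
        elif tag == "img" and not (attrs.get("alt") or "").strip():
            # Simplification: empty alt counts as missing, though decorative images may use alt="".
            self.images_missing_alt += 1
        elif tag == "h1":
            self.h1_count += 1

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.jsonld_types.append(json.loads("".join(self._buffer)).get("@type"))
            except (json.JSONDecodeError, AttributeError):
                self.jsonld_types.append("INVALID")  # unparseable or not a JSON object


if __name__ == "__main__":
    page = """<html><body><h1>Trail Runner 2</h1>
<img src="shoe.jpg"><img src="logo.png" alt="Brand logo">
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "Product"}</script>
</body></html>"""
    audit = AgentabilityAudit()
    audit.feed(page)
    audit.close()
    print(audit.jsonld_types, audit.images_missing_alt, audit.h1_count)  # ['Product'] 1 1
```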

The trust problem: hallucinations, stale data and privacy risks

AI-mediated answers can be fast and convenient, but they bring new failure modes:

- Hallucinations. Assistants may synthesize confident but incorrect answers when they lack authoritative signals. Brands must provide verifiable machine-readable facts and provenance to reduce hallucination risk.

- Stale information. If feeds are out of date, agents can recommend unavailable items or quote wrong prices. The result is poor customer experience and potential brand damage. Real-time sync and clear timestamps are essential.

- Data exposure and privacy. Agents that act on behalf of users require access to user accounts and payment details. Brands must apply least-privilege access, sandboxing and consent-first flows to prevent unauthorized actions.

- Monetization and manipulation. As conversational channels introduce new ad formats (sponsored follow-ups, paid placements), transparency and disclosure become critical to avoid eroding trust. Forrester and others urge disclosure of monetized recommendations.

Measurement in a zero-click world

Traditional metrics (SERP rank, organic sessions, last-click conversions) are inadequate in a zero-click world. Instead:

- Measure session value and assistant lift via server-side experiments that compare outcomes when assistant referrals are enabled vs disabled.

- Instrument agent interactions with evented telemetry and unique assistant tokens so attributions chain back to source signals the assistant used.

- Track business outcomes (conversion rates, churn, repeat orders) tied to assistant-driven sessions rather than raw clicks.
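Comparing cohorts with assistant referrals enabled and disabled comes down to comparing two conversion rates. This sketch assumes simple per-cohort session and conversion counts (the example numbers are invented) and uses a standard two-proportion z-test as one reasonable significance check; it is not the only valid experiment design.

```python
import math


def assistant_lift(conv_on, n_on, conv_off, n_off):
    """Relative lift and z statistic for referrals enabled (on) vs disabled (off)."""
    p_on, p_off = conv_on / n_on, conv_off / n_off
    lift = (p_on - p_off) / p_off
    # Two-proportion z-test using the pooled conversion rate under the null hypothesis.
    pooled = (conv_on + conv_off) / (n_on + n_off)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_on + 1 / n_off))
    z = (p_on - p_off) / se
    return lift, z


if __name__ == "__main__":
    lift, z = assistant_lift(600, 10_000, 500, 10_000)
    print(f"lift={lift:.1%} z={z:.2f}")
```

With 600 conversions from 10,000 sessions against 500 from 10,000, the relative lift is 20% and z is about 3.1, above the conventional 1.96 threshold for a two-sided test at the 5% level.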

Early wins and platform opportunities

- Local businesses can score quick wins by publishing clear LocalBusiness schema, up-to-date hours and booking APIs. Assistants rely on these canonical local facts for recommendations, and small updates can produce outsized improvements in visibility.

- Retailers should prioritize feed freshness: many assistant decisions are driven by price and inventory comparisons, and a fresh, complete feed dramatically increases the chance of being recommended.

- Brands with strong canonical facts pages, verified authorship and high-quality review data will be cited more often in generative answers, an important trust signal in the GEO world.

Organizational implications: governance, skills and procurement

- C-suite: treat AI as a new product endpoint. Procurement and legal teams must demand telemetry and contractual controls for third-party models to enforce non-training clauses and audit rights.

- Product: move from UI-first to data-first thinking. The user-visible UI remains important, but the canonical representation of facts and actions must be machine-readable.

- Engineering: add API-first patterns, real-time feeds and sandboxed agent credentials for safer integrations.

- Marketing & UX: design conversational marketing assets (short, utility-first content, verified product cards) that work inside assistant flows as well as on screens.

Risks to watch (and mitigation strategies)

- Centralization risk. Major stacks that combine models, connectors and identity may become dominant gatekeepers. Mitigation: integrate with multiple assistant destinations and demand contractual escape hatches.

- Regulatory scrutiny. As agents execute real-world actions, regulators will focus on transparency, liability and consumer protections. Brands in regulated sectors (finance, healthcare) must bake compliance review into agent integrations from the start.

- Content and IP leakage. Agents scraping or ingesting site content may inadvertently create training material for models. Mitigation: explicit AI.txt policies and contractual protections where appropriate.

Checklist for brand teams (quick reference)

- Audit: run an “agentability” crawl to find missing schema, broken flows and stale APIs.

- Fix: add semantic HTML, alt text, canonical facts pages and timestamps.

- Publish: expose authoritative feeds/APIs for products, booking and pricing.

- Harden: ensure checkouts and booking flows work when accessed programmatically; add machine-readable receipts.

- Govern: install least-privilege agent connectors, logging and human-in-the-loop checkpoints.

- Measure: design server-side experiments to capture assistant-driven lift and session value.

- Pilot: integrate with one assistant platform (ChatGPT/Apps, Gemini, Copilot) to learn connector requirements and measure ROI.

Conclusion: experiment fast, govern stronger, measure differently

AI assistants will not replace good product design or a well-built app, but they will become an essential discovery and action channel. Brands that prepare now by making their factual data machine-readable, by exposing authoritative feeds and by hardening action flows will occupy the assistant shortlist. Those that wait risk being excluded from decision paths users hand to agents.

The technical work (schema, feeds, APIs and agentable flows) is concrete and achievable. The cultural work (cross-team governance, measurement shifts and new procurement expectations) is harder. Both matter. Start with a short, focused pilot that proves the economic lift from assistant-driven sessions, and expand from there. In this messy middle between the old web and agentic futures, experimentation plus disciplined governance will be the difference between brands that lead and brands that vanish from the next generation of customer journeys.
