Microsoft and several leading vendors have pushed AI “agents” from lab concepts to production-grade features that automate threat detection, alert triage, and incident response across cloud, network, and endpoint systems. The result is faster, context-rich investigations, but security teams must build robust governance, identity controls, and auditability into the new workflows.

Background

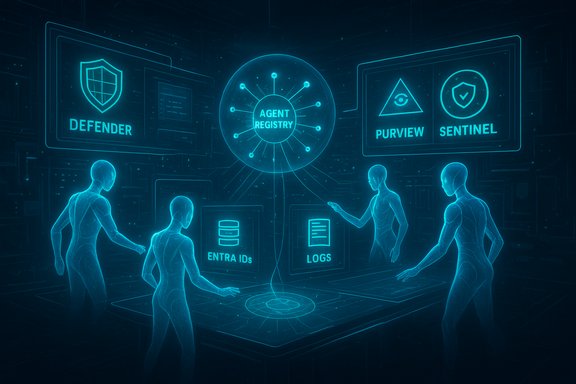

Microsoft’s Security Copilot has evolved from a conversational assistant into a platform of tenant‑scoped, purpose-built agents embedded across Defender, Entra, Intune, Purview and Sentinel. These agents are designed to perform repeatable SOC tasks (phishing triage, alert prioritization, conditional access tuning, and sensitive‑data remediation) while operating with auditable identities and lifecycle controls. Microsoft’s public announcements describe new governance tooling (Agent 365), a Security Store for third‑party agents, and an expanded agent portfolio included for Microsoft 365 E5 customers as a core outcome of Ignite and product rollouts.

At the same time, major security vendors, including Sophos, Tanium, CrowdStrike and Palo Alto Networks, have published agentic integrations or agent-focused products that bring external threat intelligence, real‑time endpoint telemetry, and runtime protections into Copilot-style workflows or their own agent frameworks. Sophos brought Sophos Intelix into Microsoft’s ecosystem as an MCP-capable agent; Tanium, CrowdStrike and other partners have built similar integrations that deliver live telemetry and remediation suggestions inside automated investigative flows.

This convergence marks an operational shift: instead of single, static tools, security teams are now deploying coordinated AI agents that pull live signals, enrich them with intelligence, create auditable recommendations (or actions), and integrate with ticketing, SOAR and remediation pipelines. The payoff is operational scale: machines handle high-volume enrichment while humans focus on higher‑risk decisions. But that payoff only materializes when governance, identity, data handling, and rollback mechanisms are built in from the start.

How agentic security works: the mechanics beneath the promise

Data, context, and a goal-driven loop

Agentic security tools operate as goal-oriented workflows: when given a defined objective (for example, "triage new phishing alerts"), an agent pulls telemetry from endpoints, cloud logs, email systems and network monitors. It enriches those signals with threat intelligence (reputation lookups, sandbox detonation) and historical case context, then classifies, prioritizes, and proposes or performs actions. Every decision and action is recorded so analysts can later reconstruct the chain of evidence.

Key components that make this practical:

- Scoped identity for agents (Entra Agent IDs and RBAC) so agent actions are auditable.

- Structured context retrieval (Model Context Protocol or equivalent) to request only the specific lookups an agent needs, rather than dumping raw tenant data into model prompts.

- Integration points to existing tools (Defender, Sentinel, SOAR, ticketing) to avoid console hopping and preserve existing investments.
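
The loop and components above can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's API: `ScopedAgent`, `triage`, the lookup name `url_reputation`, and the shape of the `sources` mapping are all hypothetical. It shows an agent acting under its own identity, requesting only pre-authorized lookups, and logging each step.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class ScopedAgent:
    """Hypothetical agent with its own identity and an allowlist of lookups."""
    agent_id: str
    allowed_lookups: Set[str]
    audit_log: List[dict] = field(default_factory=list)

    def record(self, step: str, **detail) -> None:
        # Every step is logged under the agent's identity.
        self.audit_log.append({"agent_id": self.agent_id, "step": step, **detail})

    def lookup(self, name: str, sources: Dict[str, Callable[[str], str]], key: str) -> str:
        # Structured retrieval: only named, pre-authorized lookups are allowed.
        if name not in self.allowed_lookups:
            self.record("lookup_denied", lookup=name)
            raise PermissionError(f"{self.agent_id} may not call {name}")
        result = sources[name](key)
        self.record("lookup", lookup=name, key=key, result=result)
        return result


def triage(agent: ScopedAgent, alert: dict, sources: Dict[str, Callable[[str], str]]) -> str:
    """Ingest an alert, enrich it with one lookup, and propose an action."""
    agent.record("ingest", alert_id=alert["id"])
    reputation = agent.lookup("url_reputation", sources, alert["url"])
    action = "block_url" if reputation == "malicious" else "close_alert"
    agent.record("propose", action=action)
    return action
```

Because the agent returns a proposal rather than executing it, the same loop works whether a human or a policy engine makes the final call, and the audit log lets an analyst replay the chain of evidence afterwards.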

Example workflow: phishing triage (typical agent play)

- Agent ingests a new email alert from Defender for Office 365.

- It runs reputation checks (URLs, domains, hashes) via an intelligence agent (e.g., Sophos Intelix) and detonation in a sandbox if needed.

- The agent synthesizes an evidence-backed classification and proposes containment actions (isolate host, block URL, reset credentials).

- Actions are staged—either suggested to a human analyst or executed under pre-approved, low‑risk policies—with full logs streamed to SIEM/SOAR.
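
The classification and staging steps of this workflow might look roughly like the sketch below. The verdict labels, the `Evidence` fields, and the `PRE_APPROVED` allowlist are assumptions made for illustration; a real deployment would take them from its own policy and the intelligence provider's response format.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical allowlist of low-risk actions an agent may run without sign-off.
PRE_APPROVED = {"block_url"}


@dataclass(frozen=True)
class Evidence:
    url_reputation: str                # assumed labels: "malicious", "suspicious", "clean"
    sandbox_malicious: Optional[bool]  # None means the sample was not detonated


def classify(e: Evidence) -> str:
    """Turn enrichment results into a verdict."""
    if e.url_reputation == "malicious" or e.sandbox_malicious:
        return "phishing"
    if e.url_reputation == "suspicious":
        return "needs_review"
    return "benign"


def propose_actions(verdict: str) -> List[str]:
    """Containment actions are only proposed for confirmed phishing."""
    if verdict == "phishing":
        return ["block_url", "reset_credentials", "isolate_host"]
    return []


def stage(actions: List[str]) -> Dict[str, List[str]]:
    """Split actions into auto-executable and analyst-approved buckets."""
    return {
        "auto_execute": [a for a in actions if a in PRE_APPROVED],
        "needs_analyst": [a for a in actions if a not in PRE_APPROVED],
    }
```

For example, `stage(propose_actions(classify(Evidence("malicious", None))))` auto-executes only the URL block and leaves the credential reset and host isolation for an analyst.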

Who’s building agentic security — and why they differ

Microsoft: platform, governance and scale

Microsoft’s approach marries native telemetry with a control plane. Security Copilot agents can access Defender telemetry, Entra signals, Purview classifications and Intune device posture, and Microsoft now exposes a central registry and governance surfaces (Agent 365) plus a Security Store for partner and custom agents. The strategic intent is to treat agents as first‑class entities with identities, auditable activities, and lifecycle controls, so enterprises can scale agent fleets without "shadow AI." Microsoft’s documentation details the Dynamic Threat Detection Agent (DTDA), which continuously hunts for missed behaviors and surfaces dynamic alerts in Defender queues.

Microsoft also published implementation and billing signals: preview experiences are free but will consume Security Compute Units (SCUs) at general availability, and included SCU allocations for M365 E5 customers were described in product messaging. These operational levers matter: compute metering, tenancy scoping, and Entra‑backed identities form the backbone of Microsoft’s agent governance story.

Sophos, Tanium and other integrators: authoritative telemetry and enrichment

Sophos has integrated Sophos Intelix as an MCP-capable agent, offering reputation lookups, detonation summaries and prevalence metrics directly into Copilot workflows. Public Sophos material reports the telemetry scale that fuels Intelix and positions the integration as “democratizing” SOC intelligence across Copilot surfaces. Tanium and other endpoint vendors offer live endpoint state and process-chain reconstructions to Copilot agents so recommendations are grounded in real-time evidence. These partners focus on data fidelity and synchronous context, areas where standalone LLMs often struggle.

CrowdStrike, Palo Alto and the AI defense arms race

Vendors beyond the Microsoft ecosystem are building their own agentic systems or protections for agentic workflows. Palo Alto Networks announced agent-style capabilities and a platform (Cortex AgentiX / Prisma AIRS updates) to create virtual agents and runtime protections; reporting notes these systems are trained on massive incident datasets and designed to keep humans in control. CrowdStrike’s activity (acquisitions and new AI-focused products) shows a parallel push to provide AI Detection & Response (AIDR) and model-level governance for agentic scenarios. These moves are part product innovation, part defensive posture: vendors want to secure and control AI-assisted defenses as attackers increasingly use AI themselves.

Real-world benefits: what early customers and pilots report

- Faster triage and reporting: Case studies and vendor-supplied pilot data show automated summarization and classification reducing the time from alert ingestion to a leadership-ready summary—allowing rapid handoffs to containment teams. One public case study described measurable reductions in investigation time using Copilot‑enabled workflows.

- Reduced analyst toil and improved focus: By offloading repetitive enrichment tasks—reputation lookups, sandbox queries, cross‑tool correlation—agents let analysts spend more time on complex hunts and containment decisions.

- Consolidated visibility and evidence trails: Agents that stitch together Defender, Purview and Entra context create single‑pane workflows where the chain of evidence is preserved and auditable, which improves incident briefings and compliance reporting.

- Democratized checks for non‑SOC teams: When threat intelligence is surfaced inside productivity tools (Teams, Copilot chat), IT admins and business users can validate suspicious links or attachments without immediate SOC escalation—reducing noise and enabling faster local remediation for low‑risk items.

Risks, attack surfaces, and governance imperatives

Where agentic automation introduces new danger

- Privilege escalation via agent identity: Agents with broad Entra Agent IDs and elevated rights can be misused if compromised. Treating agents as identities means they must be subject to the same lifecycle controls, access reviews, and conditional access as humans.

- Prompt‑injection and model abuse: Agents that accept freeform inputs or ingest document text risk prompt injection attacks that can coax undesired actions or data exfiltration. Runtime controls and DLP checks are essential. Partners like Check Point and specialist startups are implementing runtime guardrails that intercept planned agent actions and block policy-violating behaviors.

- Over-automation and mistaken remediation: Fully automated remediation (disabling accounts, deleting files, quarantining systems) can cause business disruption if the agent misclassifies. Progressive deployment modes—suggest-only, semi‑automated, then fully automated with strict guardrails—are advisable.

- Data leakage and compliance drift: Agents that access logs, transcripts or content could expose sensitive data to third‑party model endpoints unless routing and retention policies are tightly controlled. Purview‑level DLP for Copilot, policy-based scrubbing, and memory‑purge capabilities are necessary to limit exposure.
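
One way to picture a runtime guardrail is as a policy check that sits between the model's planned action and the tool that would carry it out. The sketch below is an assumption-laden illustration, not how any named vendor implements it: `PlannedCall`, `Policy`, and `check` are hypothetical names, and the policy covers only tool and destination allowlists.

```python
from dataclasses import dataclass
from typing import FrozenSet, Tuple


@dataclass(frozen=True)
class PlannedCall:
    """An action the agent intends to take, captured before execution."""
    tool: str
    target: str
    destination: str = "internal"  # where resulting data would be sent


@dataclass(frozen=True)
class Policy:
    allowed_tools: FrozenSet[str]
    allowed_destinations: FrozenSet[str]


def check(call: PlannedCall, policy: Policy) -> Tuple[bool, str]:
    """Approve or block a planned call based on policy alone.

    The decision ignores whatever text persuaded the model to plan the call,
    so an injected instruction cannot widen the agent's permissions.
    """
    if call.tool not in policy.allowed_tools:
        return False, f"tool '{call.tool}' is not permitted for this agent"
    if call.destination not in policy.allowed_destinations:
        return False, f"destination '{call.destination}' violates data-handling policy"
    return True, "ok"
```

A triage agent whose policy allows only `block_url` to `internal` destinations would have a planned `disable_account` call, or an upload to an external endpoint, rejected with a reason that can be written to the audit trail.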

Attackers will target agents

Agent identities, MCP servers, and the connectors that feed agents (email, endpoint telemetry, cloud logs) are high‑value targets. If an attacker learns how an agent makes decisions, they can craft inputs that cause the agent to ignore or misclassify malicious activity, or worse, to perform harmful actions. Agent‑specific incident response plans (revoke Agent ID, quarantine connectors, reset agent keys) must be part of production playbooks.

Best practices for safe adoption

Start small, iterate, and govern aggressively. The following checklist synthesizes vendor guidance and early customer experience into an operational playbook.

- Identify low-risk, high‑value tasks first:

  - Alert triage, enrichment, and report composition are strong starting use cases.

  - Prioritize tasks that yield clear time savings with limited scope for business impact.

- Enforce least‑privilege for every agent:

  - Assign minimal Entra Agent IDs, apply conditional access, and separate approval roles for agent execution vs. agent governance.

- Staged automation rollout:

  - Suggest‑only mode (agent proposes actions).

  - Semi‑automated mode (agent executes low‑impact tasks after automated checks).

  - Fully automated mode (only for vetted workflows with rollback capabilities).

- Integrate runtime guardrails and DLP:

  - Use inline runtime checks that must approve tool calls under tight latency SLAs; avoid permissive fallbacks on webhook timeouts.

- Preserve full audit trails and reversible actions:

  - Log inputs, model outputs, evidence artifacts, and the precise API calls agents make so you can reconstruct decisions and undo actions when needed.

- Test agent behavior against adversarial inputs:

  - Include prompt‑injection, data exfiltration and staged privilege misuse tests in red‑team cycles. Vendors recommend internal AI red teams for this purpose.

- Vendor-agnostic telemetry architecture:

  - Where possible, design detection and enrichment to accept multiple intelligence sources to avoid single‑vendor lock‑in while keeping deep integrations for speed and fidelity.
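
The staged rollout and the advice to avoid permissive fallbacks on timeouts can be combined into one small decision function. This is a sketch under stated assumptions: the `Mode` enum, the `"low"`/`"high"` impact labels, and the convention that `guardrail_ok=None` means the runtime check timed out are all invented for illustration.

```python
from enum import Enum
from typing import Optional


class Mode(Enum):
    SUGGEST_ONLY = 1
    SEMI_AUTOMATED = 2
    FULLY_AUTOMATED = 3


def decide(mode: Mode, impact: str, guardrail_ok: Optional[bool], has_rollback: bool) -> str:
    """Return "execute", "suggest", or "blocked" for one proposed action.

    guardrail_ok is True (approved), False (denied), or None (check timed out).
    """
    # Fail closed: a denial and a timeout are treated the same way.
    if guardrail_ok is not True:
        return "blocked"
    if mode is Mode.SUGGEST_ONLY:
        return "suggest"
    if mode is Mode.SEMI_AUTOMATED:
        # Only low-impact tasks run without a human.
        return "execute" if impact == "low" else "suggest"
    # Fully automated: still require a rollback path before acting.
    return "execute" if has_rollback else "suggest"
```

Treating a timeout as a denial costs some availability when the guardrail service is slow, but it keeps an outage from silently turning into unreviewed remediation.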

Verifying claims and numbers: what we checked

Several vendor claims that shape procurement decisions were cross‑checked against public documentation and vendor announcements:

- Microsoft’s inclusion of Security Copilot agents in Microsoft 365 E5, the Agent 365 governance plane, and the DTDA design are documented in Microsoft’s security blogs and product pages. These materials also describe preview-to-GA billing (SCUs) and the zero‑touch DTDA experience that surfaces dynamic alerts in Defender.

- Sophos’ telemetry figures (daily telemetry volume, detection counts, auto‑blocks) and the availability of Sophos Intelix for Microsoft Copilot/Copilot Studio are confirmed in Sophos press materials and product pages. Those materials articulate MCP as the runtime method for agent enrichment. These vendor‑reported telemetry numbers are useful for capacity planning but should be validated during procurement pilots.

- Palo Alto Networks’ announcements about AI agent platforms, Cortex AgentiX / Prisma AIRS expansions, and the claim of training on large incident datasets were reported by major outlets; these describe product roadmaps and strategic positioning rather than final, GA‑ready feature sets—expect details and pricing to firm up as products reach market.

Integration and operational checklist (practical steps for SOCs)

- Inventory current telemetry and connector coverage (endpoints, email, cloud logs).

- Map which existing playbooks can be safely automated and which require human judgement gates.

- Pilot an agent in suggest‑only mode in a narrow scope (e.g., phishing triage for a single business unit).

- Validate precision and recall: measure false positive rates, time saved per incident, and analyst satisfaction over 30–90 days.

- Build governance controls: Agent registry, access reviews, conditional access policies for agent identities, and runtime DLP integrations.

- Expand to semi‑automated actions with automatic rollback options and human sign‑offs for high‑impact remediation.

- Regularly audit agent decisions and retrain or retune models and rules where drift or bias appears.
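
For the pilot validation step, precision, recall, and false positive rate can be computed from analyst-reviewed verdicts. The function below is a minimal sketch; `pilot_metrics` and its argument names are hypothetical, and the counts are assumed to come from comparing agent classifications against analyst ground truth over the pilot window.

```python
from typing import Dict


def pilot_metrics(tp: int, fp: int, tn: int, fn: int) -> Dict[str, float]:
    """Compute triage quality metrics from confusion-matrix counts.

    tp: malicious items the agent flagged      fp: benign items the agent flagged
    tn: benign items the agent cleared         fn: malicious items the agent missed
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    false_positive_rate = fp / (fp + tn) if (fp + tn) else 0.0
    return {
        "precision": precision,
        "recall": recall,
        "false_positive_rate": false_positive_rate,
    }
```

With made-up counts of 40 true positives, 10 false positives, 140 true negatives, and 10 false negatives, precision and recall are both 0.8 and the false positive rate is 10/150, roughly 0.067. Tracking these alongside time saved per incident shows whether speed gains are coming at the cost of missed or spurious detections.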

The long view: where this is heading

Agentic AI is being woven into layered security stacks, and platform vendors are racing to combine automation, governance and marketplace ecosystems. Expect three parallel trends:

- Deeper integration and richer partner catalogs (agents as composable services) that reduce context switching and let organizations curate best‑of‑breed intelligence within single investigative flows.

- Stronger runtime guardrails and DLP enforcement that operate at sub‑second latencies to avoid permissive defaults, plus more sophisticated adversarial testing to protect agents from manipulation.

- A growing market for AI‑specific detection and response (AIDR) tools that watch agent behavior itself—monitoring for malicious or erroneous actions from compromised agents and providing an “IR playbook for agents.” CrowdStrike’s and Palo Alto’s moves illustrate this defensive specialization.

Conclusion

Agentic AI is shifting the balance of modern security: it can dramatically cut the time analysts spend on enrichment and routine triage while surfacing richer, multi‑source context for decisions. Microsoft’s Security Copilot and partner ecosystem demonstrate the immediate operational gains, and they also illustrate the governance and privacy obligations that come with granting AI systems agency inside enterprise controls. The practical path forward is disciplined: start with low‑risk automations, enforce least‑privilege identities for agents, require human oversight for high‑impact moves, instrument complete audit trails, and test agents against adversarial inputs. When deployed with caution and robust governance, AI agents become a force multiplier for defenders; deployed without those controls, they create new attack surfaces and managerial liabilities. The difference between success and failure will be the rigor of governance, not the sophistication of the models.

Source: Techgenyz, "AI Agents in Security Tools: Microsoft and Others Automate Threat Detection"