Tech-industry claims that generative AI and large language models (LLMs) are a decisive lever against climate change have moved rapidly from hopeful rhetoric to corporate orthodoxy — and a new, careful analysis shows much of that rhetoric is greenwashing in plain clothes.

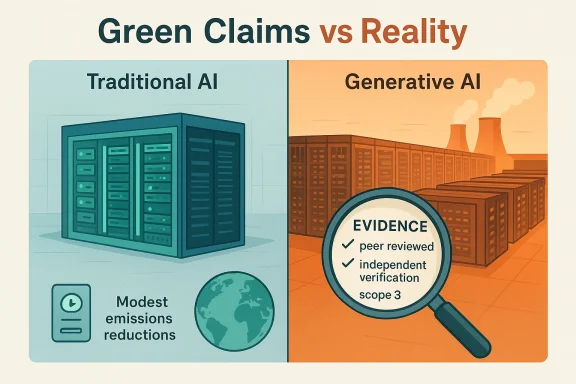

Background: what the new analysis found

An independent study commissioned by climate advocacy groups including Beyond Fossil Fuels and Climate Action Against Disinformation examined 154 public statements from major technology companies and international institutions about AI’s climate benefits. The headline finding is stark: the analysis found no examples where popular generative tools such as Google’s Gemini or Microsoft’s Copilot could be shown to deliver a “material, verifiable and substantial” reduction in greenhouse‑gas emissions. The report also found that only 26% of corporate claims cited published academic research, while 36% cited no evidence at all.

That finding is not an outlier; it sits amid mounting evidence that the sector’s electricity demand is surging and that messaging about AI’s climate upside often conflates distinct technologies (narrow, efficient machine‑learning models versus compute‑hungry generative models) in ways that amplify corporate marketing while obscuring real trade‑offs.

Overview: why this matters for readers and policymakers

The debate is consequential for three overlapping reasons:

- Scale: data‑centre electricity demand is growing fast. Independent forecasts show data centres are set to drive a major share of electricity‑demand growth in advanced economies over the coming decade. This makes claims about AI’s climate benefits a public‑policy issue, not just marketing.

- Evidence quality: many corporate numbers trace back to consultancies or company‑commissioned reports rather than independent, peer‑reviewed research. When big percentages get repeated in headlines and policy briefings, they can skew decisions that shape grid planning, investment, and regulation.

- Local impacts and externalities: data‑centre growth affects regional grids, water supplies, and land use. Without transparent, audited accounting, corporate sustainability claims can mask shifting burdens onto communities and utilities.

Disentangling the technologies: narrow AI vs generative AI

What companies usually mean when they say “AI for climate”

When technology vendors and some policymakers discuss “AI for climate,” the examples most easily verified are narrow, task‑specific applications: predictive maintenance in industry, demand forecasting for grids, route optimisation in logistics, and satellite image analysis for land management. These applications typically require less compute per decision and have documented, localised efficiency gains in case studies. They are real, useful, and, crucially, amenable to measurement.

Why generative AI is different

Generative and foundation models, including LLMs, are the systems that power chatbots, image and video generators, and many developer tools, and they are orders of magnitude more compute‑intensive at scale. Training and inference for large models require continual server‑hour investment, specialised accelerators, and increasingly expansive data‑centre footprints. That shift in workload profile changes the environmental math: the same company that deploys predictive analytics can simultaneously expand generative workloads that raise steady demand for electricity and cooling resources. The distinction matters because many corporate messages blur these categories, implying that the climate benefits achieved by narrow AI automatically scale to generative AI. The analysis flagged that semantic slippage as a core greenwashing tactic.

The provenance problem: where the “5–10% by 2030” claim comes from

One widely circulated figure, that AI could mitigate 5–10% of global greenhouse‑gas emissions by 2030, has become emblematic of the provenance problem. That number was publicised by Google in a 2023 blog post summarising a report produced with Boston Consulting Group (BCG). But the figure traces back to consultancy modelling and corporate narratives rather than a reproducible, peer‑reviewed global emissions model; independent analysts and the new study caution that the number has been recycled in public communications without the transparent assumptions needed to evaluate its real‑world validity. In short: the 5–10% figure is influential, but its methodological roots are corporate and consultancy reporting rather than independent science.

Data‑centre growth: projections and real grid impacts

Forecasts paint a clear upward trajectory

Multiple independent forecasters agree that data‑centre electricity demand is set to rise markedly this decade. The International Energy Agency (IEA) identifies AI and data centres as a major driver of electricity‑demand growth in advanced economies and projects that they will account for a substantial share of that growth in the coming years. BloombergNEF’s scenario work estimates that U.S. data centres could account for 8.6% of national electricity demand by 2035 in high‑growth scenarios, more than double the current share, and forecasts large absolute increases in capacity. Gartner and other analysts provide similar directional forecasts about rapid increases in AI‑related power usage. These are not trivial numbers: they imply significant grid planning, transmission upgrades, and potential reliance on dispatchable generation during peak periods.

Local and system‑level externalities

The effects aren’t only national. New hyperscale data centres cluster in specific regions, putting pressure on local transmission, resilience, and water resources (cooling). Communities often discover late in the permitting process that a major data‑centre customer can shift costs or water usage burdens onto municipal systems. The rapidity of AI expansion has made those impacts politically salient and technically urgent.

Evidence gaps and the nature of the greenwash

Weak evidence chains

The NGO‑commissioned analysis found that nearly three‑quarters of examined claims lacked robust, independently verifiable evidence. Only about a quarter linked to academic literature, and more than a third offered no evidence citation at all. That is a classic red flag for greenwashing: striking claims packaged without reproducible methods.

Common greenwashing patterns observed

- Category conflation: mixing narrow‑AI case studies with claims about generative AI’s global benefits.

- Consultancy echo chambers: recycling consultant estimates without publishing models or assumptions.

- Cherry picking successful pilots: promoting a handful of company customer stories as proof of scalable climate impact.

- Projection without boundary conditions: offering long‑range percentage reductions absent sensitivity analyses for rebound effects or grid intensity.
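
The last pattern can be made concrete with a small sensitivity sweep. The sketch below is illustrative only: the function names, the simple linear formula, and every number are assumptions introduced here, not figures or methods from the study. It shows how a single headline "avoided emissions" number can shrink, or flip sign, once a rebound fraction and the grid intensity of the supporting compute are varied.

```python
def net_avoided_tco2e(gross_avoided_tco2e, rebound_fraction,
                      compute_mwh, grid_intensity_t_per_mwh):
    """Net avoided emissions under a deliberately simple, assumed model.

    gross_avoided_tco2e      -- claimed savings before any adjustment
    rebound_fraction         -- share of savings eroded by extra activity (0..1)
    compute_mwh              -- electricity used by the AI workload itself
    grid_intensity_t_per_mwh -- tCO2e emitted per MWh on the supplying grid
    """
    if not 0.0 <= rebound_fraction <= 1.0:
        raise ValueError("rebound_fraction must be between 0 and 1")
    retained = gross_avoided_tco2e * (1.0 - rebound_fraction)
    compute_emissions = compute_mwh * grid_intensity_t_per_mwh
    return retained - compute_emissions


def sensitivity_grid(gross_avoided_tco2e, compute_mwh, rebounds, intensities):
    """Evaluate the net figure for every (rebound, grid intensity) pair."""
    return {
        (r, g): net_avoided_tco2e(gross_avoided_tco2e, r, compute_mwh, g)
        for r in rebounds
        for g in intensities
    }


# Hypothetical inputs: 1,000 tCO2e claimed, 1,000 MWh of compute.
grid = sensitivity_grid(1000.0, 1000.0,
                        rebounds=(0.0, 0.5),
                        intensities=(0.1, 0.6))
for (rebound, intensity), net in sorted(grid.items()):
    print(f"rebound={rebound:.1f} intensity={intensity:.1f} net={net:.0f} tCO2e")
```

With these made-up inputs, the most favourable corner of the grid keeps most of the claimed saving, while the least favourable corner turns the claim into a net increase. A credible projection would publish this kind of range rather than a single percentage.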

Why some of the claims aren’t obviously false

This criticism is not a blanket dismissal of AI’s potential. The IEA and several peer‑reviewed studies are clear that AI can deliver real emissions reductions in specific sectors, for example by optimizing grid dispatch, improving industrial processes, and accelerating materials discovery. The analytic caveat is that these gains are conditional: they require scale, policy alignment, and careful accounting for rebound effects. In other words, AI can be part of a climate strategy, but it is not a stand‑alone climate solution that automatically offsets the emissions cost of its own expansion.

Case studies: where AI has produced verifiable gains, and the limitations

Real, verifiable wins

- Data‑centre cooling optimisation: Reinforcement‑learning pilots have shown double‑digit percentage reductions in cooling energy for specific facilities when carefully implemented and audited.

- Route and logistics optimisation: Fuel savings from optimised routing are measurable and have credible customer‑level audits.

- Satellite and mapping tasks: Cloud‑scale processing of remote‑sensing imagery speeds conservation and monitoring tasks that historically were prohibitively slow.

Limitations and caveats

Each win is local and bounded. Scaling a pilot across thousands of facilities or across sectors encounters differing marginal returns, governance constraints, and operational hurdles. Most importantly, isolated efficiency pilots do not address the energy cost of running generative services for billions of users. The balance sheet can look very different once you add the compute cost of large‑scale generative workloads.

Corporate responses and the accountability gap

Major vendors publicly defend their sustainability methodologies and investments in renewables, efficiency, and carbon‑removal offsets. But the study and independent analysts point to persistent transparency gaps: uneven reporting boundaries, limited Scope‑3 disclosure, proprietary workload data, and reliance on long‑term renewable power purchase agreements (PPAs) that may not be tightly correlated with real‑time AI demand. That combination allows companies to claim progress while expanding fossil‑fuel‑intensive grid use in regions where new renewables or storage are not yet available. (theguardian.com)

Policy and corporate governance responses that should follow

The analysis concludes with practical governance demands. Below are prioritized recommendations for policymakers, corporate boards, and procurement teams that reflect both the evidence and the precaution that the study urges.

For policymakers

- Mandate standardised disclosures for compute‑intensive workloads that include kWh per inference/training and regional carbon intensity during peak load.

- Require third‑party verification for claims that firms make about emissions avoided through AI — analogous to financial audit standards for material claims.

- Consider grid‑aware permitting and impact fees where hyperscale compute risks local reliability or forces fossil backfill.

- Incentivise time‑aligned renewable procurement or storage procurement that matches AI demand patterns.

For corporate boards and C‑suites

- Treat sustainability metrics for AI as operational KPIs (kWh per successful business result; grams CO2e per inference).

- Insist on life‑cycle accounting for AI services that includes hardware manufacturing, water use, and supply‑chain emissions.

- Publish methodologies and sensitivity analyses when using consultancy models to support headline claims.

- Limit marketing claims to audited, reproducible outcomes and avoid extrapolating pilot results into global claims.
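
As a minimal sketch of how the two KPIs named above (kWh per successful business result and grams CO2e per inference) might be computed from metered data, consider the following. The `WorkloadSample` structure, its field names, and the sample values are hypothetical, and the calculation covers operational electricity only; the life‑cycle items listed above (hardware manufacturing, water, supply chain) would need separate accounting.

```python
from dataclasses import dataclass


@dataclass
class WorkloadSample:
    """One reporting period for a single AI service (all values hypothetical)."""
    energy_kwh: float                # metered electricity for the period
    inferences: int                  # total inference requests served
    successful_results: int          # requests that yielded a usable business result
    grid_intensity_g_per_kwh: float  # regional grid carbon intensity, gCO2e per kWh


def kwh_per_successful_result(sample: WorkloadSample) -> float:
    """Energy spent per outcome that actually delivered business value."""
    if sample.successful_results <= 0:
        raise ValueError("successful_results must be positive")
    return sample.energy_kwh / sample.successful_results


def grams_co2e_per_inference(sample: WorkloadSample) -> float:
    """Operational emissions per inference: energy times grid intensity."""
    if sample.inferences <= 0:
        raise ValueError("inferences must be positive")
    total_grams = sample.energy_kwh * sample.grid_intensity_g_per_kwh
    return total_grams / sample.inferences


# Made-up example period for illustration.
sample = WorkloadSample(
    energy_kwh=1200.0,
    inferences=4_000_000,
    successful_results=3_000_000,
    grid_intensity_g_per_kwh=400.0,
)
print(f"{kwh_per_successful_result(sample):.6f} kWh per successful result")
print(f"{grams_co2e_per_inference(sample):.3f} gCO2e per inference")
```

Dividing by successful results rather than raw requests is a design choice: it penalises retries and discarded outputs, which a per‑request figure would hide.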

For enterprise buyers and procurement

- Demand *contractual transparency*, carbon‑intensity hedging, and compute‑efficiency SLAs.

- Prioritise narrow AI use cases with demonstrable ROI in emissions reductions before wholesale rollout of generative capabilities.

- Require vendors to disclose the marginal emissions footprint of additional AI services provisioned for the buyer.

Strengths in the current ecosystem — and why they matter

It’s not all critique. Several systemic strengths make AI a tool for decarbonisation, but only if paired with stronger governance.

- Technical potential: AI’s data‑driven optimisation can cut waste in logistics, grid operations, and industrial control systems. These are real levers that scale when paired with policy and investment.

- Industry investment in efficiency: hyperscalers are investing in more efficient chips, architectural improvements, and cooling innovations. These investments lower per‑unit energy intensity, even as absolute demand rises.

- Civil society mobilisation: coordinated NGO pressure and independent audits are exposing weak claims, pushing for disclosure standards, and creating the political momentum for regulation. That is the same force that proved effective in other greenwashing fights.

The risks if we don’t change course

If the current trajectory continues, with energy‑hungry generative workloads expanding under the rhetoric of “AI for good” without robust measurement and policy safeguards, the following risks are probable:

- Grid stress and fossil backfill: regional power systems may lean on gas or coal to meet new, concentrated loads, undermining emissions targets.

- Regulatory blowback and litigation: greenwashing enforcement is becoming real and costly in other sectors; energy and sustainability regulators are likely to treat misleading AI claims similarly.

- Misallocated public policy: policymakers may rely on optimistic estimates to justify delayed action in harder sectors such as heavy industry and transport, resulting in missed climate targets.

- Social and environmental externalities: local communities face water stress, higher utility rates, and land‑use impacts from unchecked data‑centre expansion. These outcomes have political, ethical, and human costs.

How to tell a credible AI sustainability claim from spin

Here are practical heuristics for journalists, procurement teams, and civic actors to separate evidence from spin:

- Look for methods, not headlines. Credible claims include reproducible models, sensitivity tests, and peer‑reviewed or third‑party audits.

- Check the boundary definitions. Does the claim include lifecycle emissions (manufacturing, supply chain, water) or only operational energy?

- Distinguish narrow from generative AI. Does the evidence refer to lightweight predictive models or compute‑heavy foundation models? The two have very different environmental profiles.

- Demand time‑aligned accounting. Are renewable purchases matched to AI load on an hourly basis, or are they annual PPAs that do not reduce marginal grid emissions during peak AI demand?
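
The time‑aligned accounting point is easiest to see with numbers. The sketch below compares netting renewable purchases against load over a whole period with matching them hour by hour. The function names and the four‑hour toy profile are assumptions made for illustration; real accounting would use metered hourly data and the relevant certificate rules.

```python
def period_match_share(load_kwh, renewable_kwh):
    """Share of load 'covered' when totals are netted over the whole period,
    as with annual volume-based claims."""
    total_load = sum(load_kwh)
    if total_load <= 0:
        raise ValueError("total load must be positive")
    return min(sum(renewable_kwh), total_load) / total_load


def hourly_match_share(load_kwh, renewable_kwh):
    """Share of load covered when renewable output only counts
    in the hour it is generated."""
    if len(load_kwh) != len(renewable_kwh):
        raise ValueError("profiles must have the same number of hours")
    total_load = sum(load_kwh)
    if total_load <= 0:
        raise ValueError("total load must be positive")
    matched = sum(min(load, gen) for load, gen in zip(load_kwh, renewable_kwh))
    return matched / total_load


# Toy profile: flat AI load, solar-like supply concentrated in two hours.
load = [100.0, 100.0, 100.0, 100.0]
renewables = [0.0, 200.0, 200.0, 0.0]

print(period_match_share(load, renewables))  # 1.0: looks fully renewable
print(hourly_match_share(load, renewables))  # 0.5: half the load had no matching supply
```

In this toy case the same purchases support a "100% renewable" claim on a period basis while half of the consumption still falls in hours with no matched generation, which is the gap the heuristic asks readers to probe.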

Conclusion: a sober middle way

AI holds genuine potential to help decarbonise certain sectors, but that potential is conditional and must be evaluated with rigorous methods, disclosed assumptions, and institutional checks. The recent NGO‑commissioned analysis is a corrective: it does not say “AI is always bad,” but it does insist that marketing claims must meet the standards of evidence expected in other technical domains.

If governments, corporations, and civil society adopt transparent reporting standards, insist on third‑party verification, and align renewable procurement and grid planning with the realities of AI load, then society can harvest AI’s benefits without letting compute growth become an unexamined driver of emissions. Until then, sweeping corporate claims that generative AI is a climate cure are, at best, optimistic sales copy, and at worst an industry‑scale greenwash that distracts from the deeper, harder work of decarbonisation.

Source: trendingtopics.eu AI Climate Promises Are Often Greenwashing