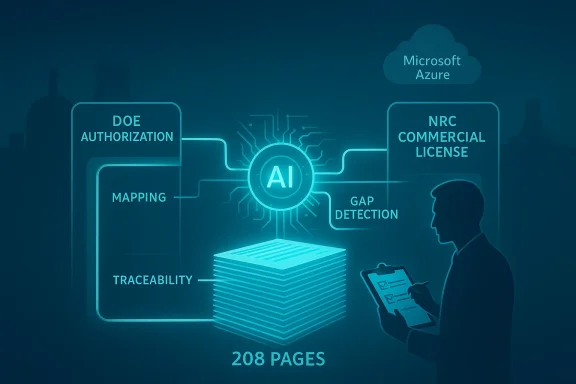

AI may be poised to trim one of the nuclear industry’s most stubborn bottlenecks: the paperwork-heavy path from a DOE-authorized demonstration reactor to an NRC commercial license. In a new demonstration involving the Department of Energy, Idaho National Laboratory, Argonne National Laboratory, Microsoft, and Everstar, a 208-page licensing document was generated in one day — a task DOE says typically takes a team four to six weeks. The result does not eliminate regulatory review, but it suggests that document production, information mapping, and gap detection can be accelerated in ways that may meaningfully shorten the front end of reactor deployment.

Background

For years, the nuclear industry has argued that the challenge is not only reactor engineering, but also the sheer complexity of licensing. Advanced reactors tend to use different coolants, fuels, geometries, and operating assumptions than the large light-water plants that shaped much of the existing regulatory framework. That mismatch has made document development, analysis, and iterative review especially labor-intensive, even as the U.S. has pushed to modernize licensing for new reactor types.

The latest demonstration sits inside a broader federal push to speed advanced nuclear deployment. DOE has already funded licensing support, launched reactor pilot programs, and worked with national laboratories to tighten the connection between demonstration testing and eventual commercialization. NRC, meanwhile, has been under pressure from the ADVANCE Act and recent executive actions to move faster, produce predictable schedules, and apply more flexible review approaches to advanced designs.

What makes this specific experiment notable is not just that AI produced a document quickly, but that it attempted to translate between two regulatory languages: DOE’s authorization pathway for a demonstration reactor and the NRC’s licensing structure for commercial deployment. That translation layer has long been a human-intensive task, because the same underlying reactor information can need to be reformatted, cross-referenced, and verified for different agencies with different assumptions and documentation conventions.

The DOE/INL/Everstar effort also reflects a shift in how the nuclear sector is thinking about AI. Early conversations focused on simulation, digital twins, and analytics. Now the emphasis is turning toward regulatory workflow automation — the unglamorous but crucial work of preparing safety analyses, mapping requirements, finding missing information, and preserving traceability across large technical packages.

That matters because in advanced nuclear, time is often lost long before a shovel hits the ground. If a software system can reliably reduce rework in licensing preparation, then the industry could see gains not only in schedule but also in cost certainty, document quality, and regulator readiness.

What DOE and INL Actually Demonstrated

The core demonstration was a conversion exercise. DOE said the team used AI mapping to convert a safety analysis document required under its authorization pathway for the National Reactor Innovation Center’s Generic High Temperature Gas Reactor into sections equivalent to an NRC license application. Everstar’s Gordian system, running on Microsoft Azure, handled the transformation, and DOE says the final output was evaluated by an expert for accuracy, missing information, consistency, grammar, and structure.

That distinction matters. This was not a fully autonomous licensing submission, nor was it a machine deciding compliance on its own. It was a document translation and augmentation workflow, with human expert review afterward. In other words, AI is being positioned as a force multiplier for regulatory authorship, not as a replacement for the regulator or the safety analyst.

The headline performance number is impressive: one day to generate a 208-page document, compared with four to six weeks for a traditional team effort. But the deeper implication is that AI found missing or incomplete information during the process. That is arguably more valuable than speed alone, because nuclear licensing depends on completeness, traceability, and consistency as much as on writing efficiency.

Why the document mapping matters

Licensing teams routinely spend huge amounts of time reconciling terminology, section structure, and evidence placement. A reactor project may already have most of the necessary technical content, but that content is scattered across design docs, safety cases, engineering drawings, analysis reports, and vendor records. AI mapping can help assemble the pieces into the right regulatory shape.

- It can align terminology across DOE and NRC frameworks.

- It can flag missing inputs before a formal submission is built.

- It can reduce clerical churn created by repeated reformatting.

- It can surface traceability gaps that humans might miss in a large package.

- It can support a more structured review process before regulator engagement.
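
The mapping and gap-flagging idea in the list above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a description of how Gordian works: the `CROSSWALK` table, the section labels, and the `map_sections` helper are all hypothetical, and the labels are placeholders rather than the real outline of any DOE or NRC document.

```python
from dataclasses import dataclass, field

# Hypothetical crosswalk: target (NRC-style) section -> source (DOE-style)
# sections that could supply its content. Labels are illustrative placeholders,
# not the real outline of either agency's documents.
CROSSWALK = {
    "general_description": ["facility_overview"],
    "site_characteristics": ["site_description"],
    "accident_analysis": ["hazard_evaluation", "accident_scenarios"],
    "quality_assurance": ["qa_program"],
}


@dataclass
class MappingResult:
    assembled: dict = field(default_factory=dict)  # target section -> merged text
    gaps: dict = field(default_factory=dict)       # target section -> missing sources


def map_sections(source_doc: dict) -> MappingResult:
    """Assemble target sections from source content and record what is missing."""
    result = MappingResult()
    for target, needed in CROSSWALK.items():
        # A source section counts as present only if it has non-blank text.
        present = [s for s in needed if source_doc.get(s, "").strip()]
        missing = [s for s in needed if s not in present]
        if present:
            result.assembled[target] = "\n\n".join(source_doc[s] for s in present)
        if missing:
            result.gaps[target] = missing
    return result


source_doc = {
    "facility_overview": "Overview text.",
    "site_description": "Site text.",
    "hazard_evaluation": "Hazard text.",
    # "accident_scenarios" and "qa_program" are left out on purpose.
}
result = map_sections(source_doc)
for target, missing in result.gaps.items():
    print(f"{target}: missing {', '.join(missing)}")
```

The point of keeping `gaps` separate from `assembled` is that a partially filled section is still reported as incomplete, so missing inputs surface before a formal submission is built rather than during review.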

Why Nuclear Licensing Has Been So Slow

Nuclear licensing has historically moved slowly because the stakes are unusually high. Every safety claim has to be backed by evidence, and every claim has to be traceable to design data, analysis methods, assumptions, and operational boundaries. That creates a paper trail that is both enormous and highly interdependent.

Traditional processes also grew up around reactors that were much more standardized than the wave of advanced designs now entering the pipeline. The modern landscape includes microreactors, molten salt concepts, sodium-cooled systems, high-temperature gas reactors, and other designs that do not fit neatly into legacy templates. That forces regulators and applicants to spend time explaining where a design resembles existing practice and where it does not.

NRC has already acknowledged the need for more predictable and efficient schedules, with recent actions under the ADVANCE Act and executive orders setting tighter review milestones for some categories of work. Even so, application quality, request-for-information cycles, and changes during review can still stretch timelines. The practical bottleneck is often not a single regulation, but the cumulative burden of preparing and checking the record.

The manual-work problem

A large licensing package is less like a normal corporate report and more like a living evidence chain. Every statement can trigger a supporting calculation, a standards citation, a configuration reference, or a design assumption. That makes it a terrible fit for hurried copy-and-paste methods, and a promising fit for structured AI assistance.

- Teams spend weeks on formatting and cross-walking.

- Reviewers spend time checking consistency across sections.

- Small omissions can cause large downstream delays.

- Revisions often require multiple document owners.

- A single missing traceability link can force rework across the package.
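
To make the "evidence chain" picture concrete, here is a small Python sketch of a traceability check. It assumes a simplified record in which each claim lists the evidence it cites; the claim and evidence IDs, and both helper functions, are invented for illustration and do not reflect any real licensing package or tool.

```python
# Hypothetical records: each claim cites evidence IDs, and the evidence
# register lists the items that actually exist. All IDs are invented.
claims = {
    "CLM-1": ["CALC-10", "DWG-3"],
    "CLM-2": ["CALC-11"],
    "CLM-3": [],
}
evidence = {"CALC-10", "DWG-3"}


def find_traceability_gaps(claims, evidence):
    """Return claims with no citations, and claims citing evidence not on record."""
    unsupported = sorted(c for c, refs in claims.items() if not refs)
    dangling = {
        c: sorted(r for r in refs if r not in evidence)
        for c, refs in claims.items()
        if any(r not in evidence for r in refs)
    }
    return unsupported, dangling


def claims_affected_by(changed_id, claims):
    """List claims that need rechecking when one evidence item is revised."""
    return sorted(c for c, refs in claims.items() if changed_id in refs)


unsupported, dangling = find_traceability_gaps(claims, evidence)
print("claims with no evidence:", unsupported)
print("claims citing missing evidence:", dangling)
print("recheck if CALC-10 changes:", claims_affected_by("CALC-10", claims))
```

Even this toy version shows why one missing link matters: a single revised calculation fans out to every claim that cites it, which is the rework pattern the list above describes.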

What Gordian Appears to Be Doing

Everstar describes Gordian as engineered for nuclear-grade technical work with physics and engineering tools, and DOE says the platform can understand and integrate data through semantic ontology mapping. That language suggests a system that is doing more than generic text generation. It is trying to connect concepts, sources, and regulatory categories in a structured way.

The phrase “computed and verified, not inferred” is especially significant. In nuclear work, an AI tool that merely sounds plausible is not enough. What matters is whether the output can be traced to underlying data, whether it avoids fabricating connections, and whether the result can be validated by domain experts.

That said, the claim should be read carefully. Any AI system that synthesizes complex technical text still depends on data quality, model design, validation rules, and oversight. The more precise the regulatory use case, the more valuable the output may be — but also the more unforgiving the environment if the system misses an edge case.

The role of semantic mapping

Semantic mapping is essentially a way of linking meaning across differently structured information. In this context, that means turning a DOE-style safety analysis into NRC-style application sections without losing the underlying technical intent.

- It can map equivalent concepts across frameworks.

- It can identify where content is reusable and where it is not.

- It can help preserve engineering traceability.

- It can support document assembly at scale.

- It can expose areas where human judgment is still required.

How This Fits the DOE-NRC Transition Path

The transition from DOE-authorized testing to NRC commercial deployment is often where promising reactor concepts slow down. A project can be well advanced technically, yet still require substantial effort to translate demonstration materials into commercial licensing materials. That translation is especially important for first-of-a-kind designs, where the documentation burden is not yet standardized by precedent.

DOE’s experiment points toward a future where the same data backbone serves multiple regulatory phases. If a reactor developer can maintain a structured digital record from concept through demonstration, then AI can potentially repurpose that record for later licensing packages rather than rebuilding the story from scratch.

This is one reason the demonstration matters beyond a single reactor design. It hints at a workflow architecture in which DOE authorization, national lab validation, and NRC licensing are no longer treated as isolated document universes. Instead, they become linked stages in a continuous regulatory information chain.

From demonstration to commercialization

The commercial significance is straightforward. Faster translation between DOE and NRC formats could reduce the lag between proving a concept and selling it commercially. For advanced reactor companies, that lag can be financially punishing because capital has to sit idle while paperwork catches up with engineering progress.

- Demonstration data can become licensing inputs more quickly.

- NRC submissions may require less manual reformatting.

- Teams can spend more time on technical corrections instead of clerical conversion.

- Investors may see lower schedule risk.

- Developers could reach commercial readiness with fewer document cycles.

Why Microsoft Azure Matters Here

Microsoft’s role is important because it signals that this is not a boutique software experiment running in isolation. The collaboration uses Azure as the cloud foundation, which gives the effort an enterprise-grade platform for scalability, security controls, and integration with other digital tools. That makes the demonstration more interesting to utilities, reactor vendors, and government organizations that care about reproducibility and procurement paths.

It also reflects a broader trend: the biggest industrial AI deployments are increasingly happening through cloud platforms rather than standalone software installs. In nuclear, that matters because firms need secure environments, access controls, and auditable workflows. They also need systems that can be adapted across projects without rebuilding the infrastructure every time.

For Microsoft, the nuclear use case is strategically attractive because it sits at the intersection of AI, regulated industry, and critical infrastructure. For DOE and INL, it provides a path to test whether commercial AI tooling can be adapted to one of the most demanding documentation environments in government.

Why cloud infrastructure changes the equation

Cloud-based AI can support repeatability, version control, and secure collaboration across geographically dispersed teams. That is valuable when project participants include labs, vendors, regulators, and contractors working from different systems and authorization levels.

- It supports shared access to structured data.

- It can help enforce role-based permissions.

- It can improve workflow reproducibility.

- It may accelerate tool updates and validation.

- It offers a path toward standardized deployment across projects.

What This Means for the NRC

The most important question is not whether DOE can generate a document in a day. It is whether the NRC can trust AI-assisted submissions enough to reduce its own review burden without lowering the safety bar. The answer is likely to be yes, but only gradually. NRC still requires evidence, consistency, and public accountability, and those obligations will not disappear because a model helped draft the paperwork.

Still, this demonstration aligns with NRC’s own direction of travel. The agency has been modernizing its licensing processes, setting shorter schedules in some cases, and trying to make reviews more efficient for advanced reactors. If AI-generated submissions are cleaner and more complete, the regulator may spend less time chasing missing information and more time on substantive safety questions.

That could be a meaningful shift. Regulatory time is often consumed not by disagreement over fundamental safety, but by iterative clarification and document cleanup. AI may help compress that middle layer, where much of the friction lives.

Review efficiency versus regulatory responsibility

The NRC is unlikely to accept AI output at face value, and that is appropriate. But it may become more willing to work with applicants who demonstrate high-confidence digital workflows and rigorous validation methods.

- Better submissions can reduce requests for additional information.

- More complete records can improve review predictability.

- Structured mappings can aid staff continuity.

- Fewer formatting errors can cut clerical back-and-forth.

- The agency can focus more on technical substance than document housekeeping.

The Competitive Landscape in Advanced Nuclear

AI-assisted licensing could become a differentiator in the increasingly crowded advanced reactor market. Developers are not only competing on reactor physics, fuel choice, and capital cost. They are also competing on how quickly they can clear regulatory hurdles and prove deployability. If one company can prepare a higher-quality application faster than another, that becomes a real strategic advantage.

This is especially relevant for smaller developers, which often have innovative designs but limited staff. A licensing team that can effectively multiply its output through AI may be able to do more with fewer people, at least during the document-heavy early phases. Larger firms may benefit as well, but they already have more established regulatory organizations.

The broader market implication is that AI could become part of the standard nuclear development stack, much like simulation software, digital twins, or configuration management tools. Once that happens, the competitive question may shift from “Should we use AI?” to “Which AI workflow has the best traceability, governance, and regulatory fit?”

Who stands to gain most

The biggest winners are likely to be projects with large documentation burdens and aggressive schedules.

- First-of-a-kind developers need help translating novel designs into formal language.

- Small teams need leverage to compete with better-funded rivals.

- Utilities may use AI to manage uprates, renewals, and new build packages.

- National labs can use it to standardize and compare technical inputs.

- Regulators may gain better-structured submissions, even if review remains human-led.

Enterprise Versus Consumer Impact

This story is overwhelmingly an enterprise story. There is no obvious consumer-facing product here, no direct household use case, and no near-term effect on retail electricity bills. The impact lands instead in engineering organizations, compliance teams, national labs, regulators, and reactor developers. In that sense, the real audience is the industrial backbone of nuclear deployment.

For enterprises, the appeal is clear: faster document generation, better traceability, and fewer repetitive tasks. Those gains can reduce non-technical labor cost, accelerate milestone delivery, and improve submission quality. For consumers, the benefit is indirect but potentially significant if it helps more advanced nuclear capacity come online faster and more reliably.

That indirect benefit should not be overstated. Nuclear project timelines are affected by financing, supply chain readiness, permitting, local politics, workforce availability, and construction risk. AI can help with one of those dimensions, but it cannot solve all of them.

Where the value shows up

The near-term value is mostly inside large organizations managing regulated complexity.

- Compliance teams can reduce manual drafting.

- Engineers can focus on design validation.

- Project managers can improve schedule control.

- Executives can track risk more accurately.

- Regulators may receive cleaner submissions.

Strengths and Opportunities

This demonstration has several strengths that make it more than a publicity exercise. It targets a real bottleneck, it uses a concrete workflow, and it is tied to a licensing outcome rather than an abstract AI benchmark. Just as important, it sits inside an ecosystem of DOE, INL, ANL, Microsoft, and Everstar that can potentially validate, refine, and scale the approach.

- It addresses a high-value bottleneck in nuclear deployment.

- It shows real document translation, not just chat-style summarization.

- It flags missing information early in the process.

- It could improve submission consistency across projects.

- It may reduce clerical rework for technical teams.

- It supports a more digital regulatory workflow.

- It gives the industry a path toward scalable licensing assistance.

Why the opportunity is bigger than one reactor

If the workflow proves robust, it could be adapted to other reactor types, fuel facilities, and even non-reactor nuclear infrastructure. That makes the commercial opportunity potentially much larger than the initial demonstration implies.

Risks and Concerns

The promise is real, but so are the risks. Nuclear licensing is not the kind of domain where a fast draft is automatically a good draft, and AI systems can fail in subtle ways that are hard to detect if oversight is weak. There is also a governance question: who is responsible when a model mis-maps a requirement or omits a key assumption?

- AI may generate plausible but incomplete regulatory text.

- Poor source data can lead to garbage-in, garbage-out problems.

- Overreliance on automation could reduce human scrutiny.

- Proprietary systems may limit independent verification.

- Cloud-based workflows raise security and access-control concerns.

- Regulators may need new validation standards for AI-assisted submissions.

- The industry could be tempted to treat speed as a substitute for readiness.

The validation problem

The most serious issue is not whether AI can draft pages quickly. It is whether the system can consistently produce a technically sound record across many edge cases, changing designs, and evolving guidance. That will require benchmarking, independent review, and a transparent confidence framework.

Looking Ahead

The next phase will be about proving durability, not just novelty. DOE said the team plans to strengthen and validate the approach, including a reviewing agent that checks AI-generated documents against NRC guidance and a benchmarking rubric that grades Gordian’s performance. Those are the right next steps, because nuclear licensing demands a system that can be audited, not merely admired.

INL’s interest in building its own in-house AI tools suggests the effort may expand beyond a single vendor or pilot. That matters because long-term adoption in critical infrastructure usually depends on institutional ownership, not just third-party software performance. If the labs can internalize parts of the workflow, the capability may spread more naturally across projects and agencies.

The broader market will watch for whether AI-assisted licensing can make a measurable difference in actual NRC outcomes, not just document production. If it helps reduce requests for additional information, keep schedules tighter, and improve submission quality, then it will have moved from an interesting demo to a meaningful industrial capability.

- Independent validation against NRC guidance.

- A formal confidence scoring method.

- More project data from real reactor programs.

- Evidence of reduced review cycles in practice.

- Expansion into related nuclear workflows, including fuel fabrication and other regulated facilities.
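
One way a benchmarking rubric or confidence score of this kind might be structured is as a weighted grade over the criteria the expert reviewer reportedly applied: accuracy, missing information (called `completeness` here), consistency, grammar, and structure. The Python sketch below is purely illustrative. DOE has not published the rubric, so the weights, the 0-to-5 scale, and the `grade` function are assumptions.

```python
# Criteria follow the expert review described in the article. The weights and
# the 0-to-5 scoring scale are assumptions chosen only for illustration.
WEIGHTS = {
    "accuracy": 0.35,
    "completeness": 0.25,
    "consistency": 0.20,
    "structure": 0.15,
    "grammar": 0.05,
}
MAX_SCORE = 5


def grade(scores: dict) -> float:
    """Return a weighted grade between 0 and 1 for one generated document."""
    missing = set(WEIGHTS) - set(scores)
    if missing:
        raise ValueError(f"unscored criteria: {sorted(missing)}")
    for name, value in scores.items():
        if not 0 <= value <= MAX_SCORE:
            raise ValueError(f"{name} score {value} is outside 0..{MAX_SCORE}")
    # Normalize each score to 0..1, then combine using the assumed weights.
    return sum(WEIGHTS[c] * scores[c] / MAX_SCORE for c in WEIGHTS)


reviewer_scores = {
    "accuracy": 4,
    "completeness": 3,
    "consistency": 5,
    "structure": 4,
    "grammar": 5,
}
print(f"weighted grade: {grade(reviewer_scores):.2f}")
```

Weighting accuracy and completeness most heavily reflects the article's argument that finding missing information matters more than polish, but any real rubric would need weights set and defended by licensing experts.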

The big takeaway is simple: the licensing process may not be getting easier, but it may soon get a lot smarter. If DOE, INL, NRC, and industry partners can keep the system disciplined, auditable, and evidence-driven, AI could become a genuine enabler of faster nuclear deployment rather than just another layer of hype.

Source: Power Engineering Can AI help the DOE reduce nuclear reactor licensing timelines?