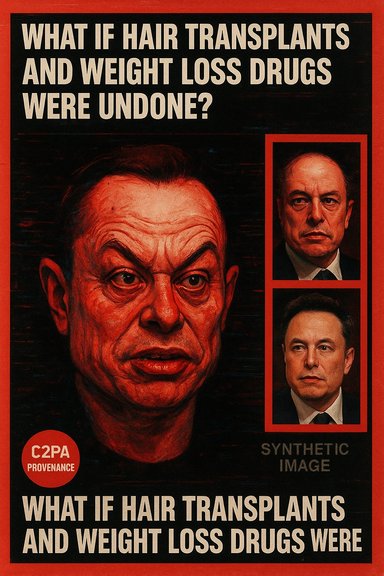

A bizarre, AI‑generated portrait that a tabloid produced by feeding a Joe Rogan screenshot into Microsoft Copilot has done more than provoke a few laughs — it has reopened urgent conversations about what mainstream generative tools can and should be used for, how easily synthetic imagery can be mistaken for evidence, and how press coverage of a prominent public figure’s body risks slipping into speculation about private medical matters. The Daily Mail’s experiment — uploading a still of Elon Musk from his latest appearance on the Joe Rogan Experience and asking Copilot to show “what Elon Musk would look like without hair transplants or weight‑loss drugs” — produced an exaggerated, grotesque result that quickly went viral and prompted medical commentary, renewed hair‑transplant speculation, and deeper questions about provenance, editorial standards, and legal exposure.

Source: Irish Star AI-generated image shows what Elon Musk would look like without hair transplants

Background

The two threads the image pulled on

Two public facts anchor the story. First, Elon Musk has openly discussed using GLP‑1 class medications for weight loss: a holiday social post jokingly labeled him “Ozempic Santa,” and he later clarified that the reference was to Mounjaro (tirzepatide). That admission is well documented in mainstream coverage and underpins the “weight‑loss drug” element of the tabloid’s prompt. Second, Musk’s hairline has long been the subject of public curiosity and cosmetic speculation. Photographic timelines show a clear change from a receding hairline in the late 1990s to a fuller hairline in subsequent years; cosmetic clinics and entertainment columnists routinely infer that surgical hair restoration (FUE/FUT) is the most plausible explanation. However, there is no public medical record or confirmed statement from Musk that explicitly verifies a hair transplant, so the hair‑transplant claim remains highly plausible but unverified. Responsible reporting must distinguish between photographic inference and documentary proof.

The immediate trigger: a Rogan appearance and a tabloid stunt

The proximate cause of the Copilot image was a widely watched Joe Rogan Experience interview in which some viewers and commentators described Musk as appearing fatigued and aged compared with earlier public appearances. A clinician quoted by the Daily Mail suggested stress may be contributing to an accelerated appearance of aging: a plausible general medical observation, but one that amounts to conjecture without clinical assessment. The tabloid captured a still from the interview, uploaded it to Microsoft’s Copilot/Designer imaging workflow, and asked for a “what‑if” reversal of cosmetic and pharmacological interventions; Copilot returned a stylized, almost caricatured portrait rather than a clinically plausible counterfactual.

How the Daily Mail / Copilot experiment worked, and what it did not

Reported workflow, reproducibility gap, and why that matters

Published accounts describe a simple workflow: capture a screenshot from the Joe Rogan interview, upload it into Microsoft Copilot or Designer, give a prompt to “remove” hair transplants and weight‑loss drugs, and publish the resulting image alongside the original as a visual gag. That sequence is consistent with how consumer multimodal assistants operate today: they accept uploaded images and apply edits or generate variants based on textual instructions.

Crucially, public reporting did not preserve or disclose the session metadata: the exact Copilot build or model version, the verbatim prompt, any internal safety settings, or whether a human designer post‑processed the output. Absent that provenance, the experiment is technically irreproducible and non‑auditable. That gap transforms what could have been a transparent demonstration into a single‑source stunt whose process cannot be independently verified. For newsrooms that publish synthetic media, that omission matters: provenance and prompt logs are the only way to audit decisions, check for policy compliance, and respond to downstream misuse.

The product reality: what Copilot/Designer actually promises

Microsoft’s consumer imaging features incorporate provenance technologies (Content Credentials / C2PA manifests) and safety classifiers intended to label or moderate synthetic content. Those mechanisms represent real technical progress, but they are not foolproof: metadata can be stripped during resharing, not all downstream platforms surface provenance, and product behavior varies across builds and distribution channels. In short, an editor publishing an AI edit must do more than click “generate”: they must archive the prompt, capture the model identifier, and apply a visible human‑readable label to the output.

What the generated image actually shows, and why stylized AI is a poor surrogate for medical or surgical claims

From statistical patterns to caricature

Generative image models do not have access to medical records, surgical histories, or personal clinical timelines. When asked to “undo” cosmetic interventions, they rely on learned statistical patterns from training data and on how similar edits were presented across millions of images. The result is often stylized inference rather than a medically grounded reconstruction: gaunter cheeks, exaggerated skin texture, or mismatched facial proportions that read as provocative but are not clinically informative. The Daily Mail / Copilot output leaned into shock value over plausibility: useful as satire, dangerous if treated as evidence.

Why a synthetic portrait cannot substitute for clinical assessment

Visual appearance is influenced by lighting, camera angle, transient fatigue, makeup, recent sleep, and dozens of uncontrolled variables. A single interview still is a noisy input for medical inference; a synthetic image that amplifies perceived differences compounds that noise. Medical professionals who comment publicly without examining a patient offer observational hypotheses, not diagnoses. Using AI‑altered imagery as a basis for health claims crosses an ethical line: it can mislead readers, stigmatize the individual, and degrade public trust in responsible health reporting.

The politics behind the optics: why Musk’s appearance became news

A broader context of political activity

Musk’s public role has shifted from CEO‑inventor to political player for many observers. A major review by NBC News mapped his international activity and concluded that his platform, statements, and private meetings had materially amplified right‑wing movements or leaders in many countries, a finding that generated widespread debate and political fallout. That political activity, and the backlash it sparked, is the backdrop that makes a Rogan appearance and an AI‑generated “what‑if” image feel more consequential than a simple celebrity caricature. Journalists covering appearance and health in this context must guard against letting political narratives morph into private medical speculation.

Stress, public life, and the dangers of over‑reading appearance

It is medically accepted that prolonged stress can contribute to adverse health outcomes (elevated cortisol, inflammatory changes, risk factors for cardiovascular disease). When commentators attribute a public figure’s tired look to “rapid aging,” they are often making a shorthand connection between stress and visible signs. That shorthand may be plausible; it is not proof. Responsible coverage separates clinical mechanisms (well documented in standard medical literature) from an unsupported diagnosis based solely on a single filmed appearance or a stylized AI image.

Ethics, law, and newsroom practice: what the stunt exposes

Reputation, defamation, and the slippery slope

AI‑generated images of real people live in a fraught legal and ethical space. Even public figures have privacy and reputational rights. A synthetic image that implies medical deficiency, surgical history, or other intimate facts can be defamatory if presented as factual without evidence. Newsrooms that publish such images without transparent methodology risk amplifying misinformation and exposing themselves to legal and reputational harm.

Provenance, labeling, and the editorial checklist

Best practice for publishers using generative tools should include the following mandatory steps:

- Archive the original upload, the full prompt, model/build identifier, and timestamps in a tamper‑evident audit log.

- Add a clear, visible provenance label on every AI‑generated image stating it is synthetic and explaining how it was created.

- Avoid making or implying medical claims from stylized AI outputs; consult clinicians and rely on direct medical sources for health reporting.

- Run a short harm assessment for potential defamation, invasion of privacy, or political misuse before publication.

- Require an editorial sign‑off process that includes legal and ethics review when images depict living people, especially public figures.
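
The first checklist item, a tamper‑evident audit log, can be approximated with a simple hash chain. The following is a minimal sketch, not a description of any newsroom's or vendor's actual system: the function names (`append_entry`, `verify_log`), the field names, and the idea of storing the log as a list of dictionaries are all assumptions made for illustration. Each entry records a hash of the image bytes, the verbatim prompt, the model identifier, a timestamp, and the hash of the previous entry, so that editing any earlier entry breaks verification.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import List, Optional

GENESIS_HASH = "0" * 64  # stand-in "previous hash" for the first entry


def _digest(body: dict) -> str:
    """SHA-256 over a canonical (sorted-key) JSON encoding of an entry body."""
    canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def append_entry(log: List[dict], image_bytes: bytes, prompt: str,
                 model_id: str, timestamp: Optional[str] = None) -> dict:
    """Append one record, chained to the previous entry's hash."""
    body = {
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
        "prompt": prompt,        # the verbatim prompt, never a paraphrase
        "model_id": model_id,    # model/build identifier as reported by the tool
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["entry_hash"] if log else GENESIS_HASH,
    }
    entry = {**body, "entry_hash": _digest(body)}
    log.append(entry)
    return entry


def verify_log(log: List[dict]) -> bool:
    """Return True only if every entry is unmodified and correctly chained."""
    prev = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "entry_hash"}
        if body["prev_hash"] != prev or _digest(body) != entry["entry_hash"]:
            return False
        prev = entry["entry_hash"]
    return True


# Hypothetical usage: placeholder bytes and strings, not real session data.
audit_log: List[dict] = []
append_entry(audit_log, b"original-upload-bytes", "verbatim prompt text", "model-build-id")
append_entry(audit_log, b"generated-output-bytes", "verbatim prompt text", "model-build-id")
print(verify_log(audit_log))
```

A chain like this only makes tampering evident if the most recent hash is also recorded somewhere the editor cannot silently rewrite (for example, a separate system or a signed record); otherwise the whole log could be regenerated.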

Platform responsibilities and technological fixes

Platforms and model vendors should continue to harden provenance mechanisms (durable C2PA manifests, visible labels in downstream embeds) and institute friction for photorealistic edits of real people, particularly in political or medical contexts. But technology alone will not solve the problem: durable editorial standards, legal frameworks, and platform enforcement are all necessary. The provenance metadata is useful, but if it gets stripped by resharing, that single technical fix cannot prevent the misinformation cascade.

A practical guide for a newsroom confronted with a “what‑if” AI image

- Label first, ask questions second: If an AI image reaches an editor, apply a synthetic media label immediately and withhold publication until provenance is archived.

- Demand the prompt archive: the exact text prompt and model version are journalistic records; preserve them.

- Consult domain experts: If the image touches on medical or surgical claims, contact qualified clinicians and hair‑restoration surgeons for context before publishing.

- Frame speculative content as such: Use “likely,” “plausible,” and “unverified” where appropriate. Do not present inferential claims as fact.

- Consider proportionality: The public interest in a tech titan’s appearance is real, but weigh it against privacy and the potential for harm when publishing startling synthetic imagery.
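
The guide above can also be expressed as a pre‑publication gate that lists what is still outstanding. This is only an illustrative sketch: the `SyntheticImageSubmission` class, its field names, and the `publication_blockers` function are hypothetical and do not correspond to any real CMS or newsroom tool. It is meant to show how the five checks combine, not to replace editorial judgment.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class SyntheticImageSubmission:
    """Hypothetical record of what an editor has confirmed about an AI image."""
    labeled_synthetic: bool
    prompt_archived: bool
    model_version_archived: bool
    touches_medical_claims: bool
    experts_consulted: bool
    speculation_flagged: bool
    proportionality_reviewed: bool


def publication_blockers(s: SyntheticImageSubmission) -> List[str]:
    """Return the outstanding steps; an empty list means the gate is clear."""
    blockers: List[str] = []
    if not s.labeled_synthetic:
        blockers.append("apply a synthetic media label")
    if not (s.prompt_archived and s.model_version_archived):
        blockers.append("archive the exact prompt and model version")
    # Expert consultation is only required when medical or surgical claims are involved.
    if s.touches_medical_claims and not s.experts_consulted:
        blockers.append("consult qualified clinicians")
    if not s.speculation_flagged:
        blockers.append("mark inferential claims as likely, plausible, or unverified")
    if not s.proportionality_reviewed:
        blockers.append("weigh public interest against privacy and potential harm")
    return blockers


# Hypothetical example: an unlabeled image with no archived provenance.
draft = SyntheticImageSubmission(
    labeled_synthetic=False,
    prompt_archived=False,
    model_version_archived=False,
    touches_medical_claims=True,
    experts_consulted=False,
    speculation_flagged=False,
    proportionality_reviewed=False,
)
for step in publication_blockers(draft):
    print("Blocked:", step)
```

Returning a list of outstanding steps rather than a single yes/no keeps the result readable for the editor who has to act on it.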

Critical analysis: strengths of the Daily Mail demonstration and the real risks it exposes

What the stunt did well

- It demonstrated how straightforward it is for a mainstream outlet to produce striking synthetic images with consumer tools. That transparency can educate readers and spur needed debate about the power and ease of these systems.

- It launched a useful cross‑disciplinary conversation that touches AI safety, health literacy, and newsroom ethics — all timely topics newsrooms are currently grappling with.

What the stunt got wrong — and why that matters

- Single‑source experiments without preserved metadata are not audits; they are demonstrations. Without reproducible logs, the editorial claim is weak.

- The output’s stylization turned a potentially informative exercise into a memeable image that can be stripped of context and recirculated as (false) evidence — a classic misinformation failure mode.

- Medical inferences drawn or amplified by the image and by armchair clinicians risk conflating appearance with diagnosis; that’s a dangerous habit for outlets to adopt in the rush to publish viral content.

Where this leaves platform policy, regulation, and public discourse

- Expect policy moves: Governments and industry groups will continue pushing for mandatory provenance standards, visible labeling, and limits on photorealistic manipulations of living people — especially in political contexts. Those moves will shape vendor behavior and newsroom obligations.

- Newsrooms must adapt: Editorial training, new workflows for synthetic content, and legal/ethics sign‑offs will become standard operating procedure for responsible outlets.

- Public literacy matters: Readers should treat striking AI images of living people as constructed until an outlet provides full, verifiable provenance; absence of such transparency is a red flag.

Conclusion

The Copilot portrait of Elon Musk “without” hair transplants or weight‑loss drugs is memorable precisely because it is vivid and provocative. But its value as a piece of evidence is near zero: the image is a stylized AI construction, the documented workflow is single‑sourced and unreproducible, and any medical or surgical inferences it invites are speculative at best. The episode is nevertheless consequential. It reveals how low the barrier is for the production of persuasive synthetic content, how vulnerable public discourse is to images that look convincing but carry no verifiable provenance, and how urgently newsrooms, platforms, and regulators must adapt to a landscape where a single prompt can reshape public perception overnight.

For journalists and editors, the lesson is simple and immediate: treat AI‑edited portraits as illustration, not diagnosis; preserve provenance; label synthetic images clearly; and always separate plausible inference from confirmed fact. For technologists and platforms, the lesson is equally stark: provide durable provenance, enforce conservative defaults for photorealistic edits of real people, and design features that make transparency the path of least resistance. The Daily Mail / Copilot vignette is less about one tech billionaire’s appearance and more about how society will negotiate the growing power of synthetic visual media, and whether institutions will build the guardrails before the next viral image does meaningful harm.
