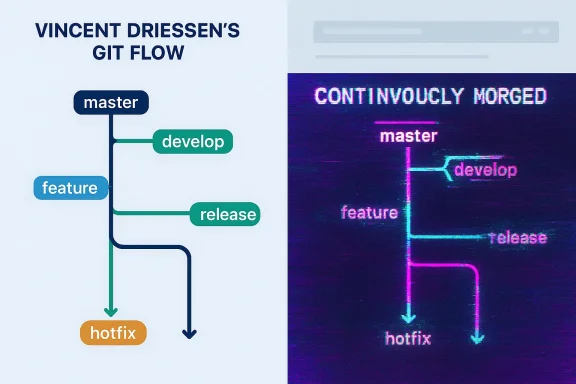

More than fifteen years after Vincent Driessen published the now‑canonical “Git Flow” diagram, a crude, typo‑ridden version of that same diagram briefly appeared on a Microsoft Learn page. Driessen says the reproduction was apparently run through an AI image generator; it included the viral mis‑rendering “continvoucly morged.” (nvie.com)

Source: PCMag Programmer: Microsoft Ran My Work Through AI, Inserted Embarrassing Typos

Background / Overview

Vincent Driessen’s 2010 essay, “A successful Git branching model,” introduced a branching strategy and a clear, visual diagram that helped many teams reason about release, feature, and hotfix branches. The original post and diagram were published openly and have been widely shared and adapted in books, talks, and team wikis for over a decade. (nvie.com)

Earlier this week, developers on social media noticed a version of that diagram on a Microsoft Learn page. Observers quickly pointed out multiple defects in Microsoft’s image: missing or misdirected arrows, scrambled labels, and a famously awkward typo in the caption, “continvoucly morged,” which strongly suggested the diagram was machine‑generated or otherwise corrupted. Driessen publicly called the image an “AI rip‑off” and asked Microsoft for simple attribution and an explanation. (nvie.com)

Microsoft subsequently removed the offending image from the Learn page while the company’s teams investigated what happened. Public reporting and community archives confirm the diagram was taken down; Microsoft did not provide detailed public attribution or a full explanation at the time of reporting. (techplanet.today)

What happened, in plain terms

- In 2010, Driessen published the Git Flow model and an accompanying diagram that visually maps branches across time. The post became a reference for many teams. (nvie.com)

- In February 2026, a similar diagram appeared on Microsoft Learn that resembled Driessen’s layout but contained multiple errors and the memorable typo “continvoucly morged.” (nvie.com)

- Community members (on platforms including Bluesky and Hacker News) flagged the image; Driessen confirmed the resemblance and called for attribution. (nvie.com)

- Microsoft removed the image, and an internal post‑mortem was reported to be underway, according to a public post by a Microsoft employee that community members shared. (news.ycombinator.com)
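The diagram at the center of this story encodes a small set of rules, which is why a misdirected arrow matters. As described in Driessen’s 2010 post, feature branches start from and merge back into develop, release branches start from develop and merge into both develop and master, and hotfix branches start from master and merge into both. The minimal Python sketch below encodes those rules as data and classifies arrows taken from a diagram under review. The `GIT_FLOW_RULES` table, the helper names, and the assumption that arrows are available as (source, target) pairs are all illustrative; the sketch also omits the exception in which a hotfix is merged into a live release branch instead of develop.

```python
# Git Flow rules as described in Driessen's 2010 post, simplified: the case
# where a hotfix merges into a live release branch instead of develop is omitted.
GIT_FLOW_RULES = {
    "feature": {"from": "develop", "into": {"develop"}},
    "release": {"from": "develop", "into": {"develop", "master"}},
    "hotfix": {"from": "master", "into": {"develop", "master"}},
}


def allowed_edges(rules: dict) -> set[tuple[str, str]]:
    """Return every (source, target) arrow the model permits."""
    edges = set()
    for branch, rule in rules.items():
        edges.add((rule["from"], branch))  # branch-off arrow
        for target in rule["into"]:
            edges.add((branch, target))  # merge-back arrow
    return edges


def check_arrows(diagram_edges, rules: dict = GIT_FLOW_RULES) -> dict:
    """Label each diagram arrow as 'ok', 'reversed', or 'unknown'."""
    allowed = allowed_edges(rules)
    report = {}
    for src, dst in diagram_edges:
        if (src, dst) in allowed:
            report[(src, dst)] = "ok"
        elif (dst, src) in allowed:
            report[(src, dst)] = "reversed"  # right branches, wrong direction
        else:
            report[(src, dst)] = "unknown"  # a connection the model never draws
    return report


# Hypothetical arrows read off a diagram under review.
print(check_arrows([("develop", "release"), ("master", "release"), ("feature", "master")]))
# → {('develop', 'release'): 'ok', ('master', 'release'): 'reversed', ('feature', 'master'): 'unknown'}
```

Because a feature branch both starts from and returns to develop, a reversed arrow between those two cannot be detected from branch names alone; a fuller check would also need the diagram’s time axis.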

Why this matters: craftsmanship, trust, and the costs of "AI slop"

At first glance the incident is comedic: a trillion‑dollar company publishing an image with an obviously garbled phrase. But the episode exposes deeper, systemic risks when large organizations automate content production without adequate human oversight.

A failure of quality assurance

Microsoft Learn is an official developer learning resource, and users expect technical accuracy and clarity from it. The published image instead contained:

- misaligned arrows (which can invert meaning in a process diagram),

- malformed labels (which reduce legibility and trust), and

- obvious typographical hallucinations like “continvoucly morged.”

Any one of these defects should have been caught by a basic editorial review before the page went live.
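Garbled labels like “continvoucly morged” (presumably a corruption of “continuously merged”) are the kind of defect a cheap automated check can flag ahead of human review. The following is a minimal sketch, assuming the diagram’s label text is already available as plain strings (for example, from its source file; no OCR is done here), that uses Python’s standard `difflib.get_close_matches` to suggest the nearest expected word. The `VOCABULARY` list and the `suspicious_words` helper are hypothetical.

```python
import difflib
import re

# Hypothetical vocabulary of words expected in this diagram's labels; in practice
# it might be built from the source document or a house style guide.
VOCABULARY = sorted({
    "feature", "branches", "develop", "release", "hotfixes", "master",
    "bugfixes", "from", "may", "be", "continuously", "merged", "back", "into",
})


def suspicious_words(label: str, vocabulary=VOCABULARY, cutoff: float = 0.8):
    """Return (word, suggestions) for each word of `label` not in the vocabulary."""
    findings = []
    for word in re.findall(r"[a-z]+", label.lower()):
        if word not in vocabulary:
            # Suggest the single closest expected word, if any is similar enough.
            suggestions = difflib.get_close_matches(word, vocabulary, n=1, cutoff=cutoff)
            findings.append((word, suggestions))
    return findings


print(suspicious_words("Bugfixes may be continvoucly morged back into develop"))
# → [('continvoucly', ['continuously']), ('morged', ['merged'])]
```

A check like this only narrows the search: a correctly spelled word that is wrong in context still needs a human reviewer who understands the diagram.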

The reputational cost

When a company prominent in AI development and deployment publishes shoddy AI‑generated content, it dilutes trust in that company’s brand and in AI as a tool for professionals. Microsoft has been a public champion of generative AI and Copilot products; a visible failure on an official learning page is a reputational mismatch between marketing and execution. This matters because enterprises and developers weigh vendor competence and reliability when adopting tooling and training resources. Observers called the image “AI slop,” and the meme spread quickly, but the underlying issue is erosion of confidence in technical documentation. (nvie.com)

Intellectual property and attribution

Driessen published the original Git Flow diagram under terms that allowed reuse with attribution. The ethical (and often legal) norm in technical communities is to credit sources, especially when derivative materials are created. Driessen asked for a simple link back and attribution, and his tone emphasized disappointment more than anger. Whether Microsoft’s image constitutes a license violation depends on how the original was used, whether it was substantially transformed, and how the source files were obtained. These are complex questions at the intersection of copyright, community norms, and the unsettled legal landscape around AI training and outputs. (nvie.com)

What we can verify, and what remains uncertain

The following statements are corroborated by primary sources and contemporary reporting:

- Vincent Driessen’s blog post titled “15+ years later, Microsoft morged my diagram” was published on February 18, 2026, and documents his account of the incident. (nvie.com)

- The original Git Flow post and diagram were published on January 5, 2010, by Driessen and remain widely referenced. (nvie.com)

- Community threads (Hacker News, Bluesky) captured Microsoft staff acknowledgement that the item looked like vendor content and that Microsoft would run a post‑mortem; a Microsoft employee’s post referencing a post‑mortem was circulated publicly. (news.ycombinator.com)

- The Microsoft Learn page in question removed the image after community pushback. Contemporary reporting and archival captures indicate the asset was taken down following the criticism. (techplanet.today)

What remains uncertain:

- Whether Microsoft intentionally fed Driessen’s diagram into a specific commercial or internal AI image generator, or whether the image came out of some other vendor workflow. Driessen’s post says the diagram was “apparently run through an AI image generator,” but Microsoft has not publicly confirmed the toolchain used to create it. At the time of reporting, that provenance had not been released, and the responsible parties had reportedly begun an internal review. (nvie.com)

Legal and policy context: corporate indemnities and the Copilot Copyright Commitment

This incident also sits inside a larger legal and commercial framework that Microsoft and other major cloud vendors have been shaping around generative AI.

Microsoft publicly announced a formal commitment (originally branded the “Copilot Copyright Commitment” and later expanded into the broader “Customer Copyright Commitment”) to indemnify certain commercial customers against copyright claims arising from outputs of Microsoft’s Copilot services, subject to guardrails and conditions. In short, Microsoft has said it will defend, and potentially cover adverse judgments for, commercial customers who follow the required safety and filtering guidance. The policy is intended to reduce legal exposure for enterprise Copilot adopters, but it does not remove the ethical obligation to credit original creators, nor does it relieve Microsoft of its QA responsibilities when publishing content on its own Learn portal.

Key points about that indemnity program:

- It is conditional: customers must use the guardrails, filters, and operational mitigations Microsoft specifies.

- It applies to paid, commercial Copilot offerings (the program has specific eligibility rules).

- The indemnity stance addresses customer‑facing legal risk, but it does not relieve a company from ethical duties to creators nor from obligations to conduct proper editorial review for official documentation.

The immediate aftermath: community reaction and Microsoft’s internal response

Developers mobilized quickly. The story moved from social posts to wider coverage within hours, a pattern familiar in developer communities: a visible error plus a prominent author produces rapid amplification.

- Community platforms captured and circulated side‑by‑side images of Driessen’s original diagram and the Microsoft version; the garbled phrase made the comparison obvious and memetic. (nvie.com)

- Microsoft staff publicly acknowledged the issue on social platforms and signaled a post‑mortem and removal. The quote “looks like a vendor, and we have a group now doing a post‑mortem trying to figure out how it happened” circulated in community captures, demonstrating Microsoft recognized the need to investigate. (news.ycombinator.com)

Deeper analysis: process failures that likely allowed this through

From a product and content governance perspective, a few plausible failure modes explain how a defective image could be published on an official Learn page:

- Vendor procurement without strict deliverable review. If Microsoft used a third‑party vendor or freelancer to produce diagrams or documentation, insufficient acceptance criteria or missing proofreading steps could have allowed the artifact through. Internal vendor workflows must require both subject‑matter review and copy editing for officially branded assets. The presence of broken arrows and a nonsensical label suggests that both technical and editorial oversight were absent or ineffective. (news.ycombinator.com)

- Overreliance on AI as a substitute for human designers and editors. Organizations experimenting with AI to scale content creation must avoid treating AI outputs as final. Generative image models are known to hallucinate text and misrender structured diagrams. When an official page carries the company logo, human review should be required by policy to catch hallucinations. (nvie.com)

- Diffusion of responsibility in large organizations. Microsoft employs hundreds of thousands of people and works with many vendors. If a vendor produced the material, and internal staff assumed vendor QA covered editorial accuracy, the result is a classic organizational handoff failure: everyone assumes someone else checked it. Public artifacts need a named owner and a sign‑off chain that includes subject matter experts. (news.ycombinator.com)
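One way to make the handoff failure described above harder is to encode the sign‑off chain as data rather than assumption. The Python sketch below assumes a hypothetical policy in which every published asset needs a named owner plus internal sign‑offs from a subject matter expert and an editor; vendor sign‑offs are recorded but never satisfy those roles, so vendor QA cannot silently substitute for the publisher’s own review. `Asset`, `SignOff`, `REQUIRED_ROLES`, and `publish_blockers` are illustrative names, not part of any Microsoft system.

```python
from dataclasses import dataclass, field

# Hypothetical policy: internal roles that must sign off before publication.
REQUIRED_ROLES = {"subject_matter_expert", "editor"}


@dataclass
class SignOff:
    reviewer: str
    role: str
    internal: bool  # False for vendor or contractor staff


@dataclass
class Asset:
    asset_id: str
    owner: str = ""  # named person accountable for the published asset
    sign_offs: list[SignOff] = field(default_factory=list)


def publish_blockers(asset: Asset, required: set[str] = REQUIRED_ROLES) -> list[str]:
    """Return the reasons an asset cannot be published yet (empty if none)."""
    blockers = []
    if not asset.owner:
        blockers.append("no named owner")
    # Only internal sign-offs count toward the required roles.
    covered = {s.role for s in asset.sign_offs if s.internal}
    for role in sorted(required - covered):
        blockers.append(f"missing internal sign-off: {role}")
    return blockers


# A vendor-produced diagram that only the vendor's own QA has reviewed.
diagram = Asset(
    "learn/branching-diagram",
    sign_offs=[SignOff("vendor-qa", "editor", internal=False)],
)
print(publish_blockers(diagram))
# → ['no named owner', 'missing internal sign-off: editor', 'missing internal sign-off: subject_matter_expert']
```

Returning a list of reasons, rather than a bare yes or no, gives the reviewer and any later post‑mortem a record of exactly which step in the chain was missing.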

Practical recommendations (for Microsoft and other organizations publishing technical content)

- Reintroduce a mandatory human sign‑off for all generative AI outputs that will be published under corporate branding. That sign‑off must include a subject matter expert and an editorial reviewer.

- Add staged content acceptance criteria for vendors that explicitly test for:

  - semantic accuracy of diagrams,

  - legibility of axis labels and text, and

  - fidelity of directional arrows and connectors in process flows.

- Maintain provenance logging: record whether an asset was human‑created, AI‑assisted, or AI‑generated; log the tools and prompts used; and attach the identity of the reviewer who signed off for publication. This creates auditable traces and speeds post‑mortem analysis.

- Respect attribution norms: when an asset is derived from a community resource, provide clear credit and, where applicable, link to original materials (and ensure license compliance). Even when legal obligations are unclear, attribution is a low‑cost ethical practice that reduces reputational harm.

- Educate internal teams on known failure modes of image generation — including garbled text and reversed arrowheads — so reviewers know what to look for.
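As a sketch of the provenance‑logging recommendation, the Python below models one provenance record per asset: the three origin categories named above, the tools and prompts used, a credit link for derived work, and the reviewer who signed off. The field names, the JSON serialization, and the `problems` checks are assumptions chosen for illustration, not an established schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Origin(str, Enum):
    HUMAN_CREATED = "human-created"
    AI_ASSISTED = "ai-assisted"
    AI_GENERATED = "ai-generated"


@dataclass
class ProvenanceRecord:
    asset_id: str
    origin: Origin
    tools: list[str] = field(default_factory=list)    # generators or editors used
    prompts: list[str] = field(default_factory=list)  # prompts, if any
    source_credit: str = ""  # link to the original work when the asset is derived
    reviewer: str = ""       # person who signed off for publication
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def problems(self) -> list[str]:
        """Flag gaps that would slow down a later post-mortem."""
        issues = []
        if self.origin is not Origin.HUMAN_CREATED and not self.tools:
            issues.append("AI involvement recorded without tool names")
        if not self.reviewer:
            issues.append("no reviewer sign-off")
        return issues

    def to_json(self) -> str:
        data = asdict(self)
        data["origin"] = self.origin.value  # store the plain string label
        return json.dumps(data, sort_keys=True)


record = ProvenanceRecord(
    asset_id="learn/branching-diagram",
    origin=Origin.AI_GENERATED,
    source_credit="https://nvie.com/posts/a-successful-git-branching-model/",
)
print(record.problems())
# → ['AI involvement recorded without tool names', 'no reviewer sign-off']
print(record.to_json())
```

Writing one JSON line per record keeps the log append‑only and easy to search during a post‑mortem; a real system would also need to decide where prompts are stored if they contain sensitive material.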

Broader implications: training data, feedback loops, and the contamination problem

One particularly worrying long‑term effect of sloppy AI content is the risk of a feedback loop: AI systems are trained or fine‑tuned on internet content, and when major publishers inadvertently create low‑quality AI artifacts, those artifacts can re‑enter the training data for future generations of models.

- Low‑quality, machine‑generated artifacts become training fodder for new models, which then replicate errors at scale.

- The result is a degradation of knowledge fidelity online: subtle errors and corrupted representations may become normalized in downstream educational content. (nvie.com)

Why attribution still matters (beyond copyright)

Driessen’s measured response underlines a cultural point: many community authors publish work to be useful. Attribution is a social contract that recognizes authorship, helps readers trace back to fuller explanations, and preserves the provenance of ideas.

- Attribution helps learners find the canonical explanation and the source files that may include editable diagrams or original SVGs.

- Attribution preserves historical record: Git Flow was conceived in a particular software era; link‑backs let readers understand the context and whether Git Flow is a good fit for their modern workflow. (nvie.com)

What Microsoft’s Copilot indemnity does — and doesn’t — change

Microsoft’s customer indemnity is a commercial hedge: it aims to reduce litigation risk for paying customers using Microsoft’s Copilot products. It is not a shield for poor editorial practice on official Microsoft content.

- The indemnity protects eligible commercial Copilot customers who follow guardrails; it is not a free pass to ignore attribution or to publish AI outputs without review.

- Microsoft, as a publisher of official learning assets, must meet higher editorial standards precisely because its materials carry authority and are used to teach professional skills.

What to watch next

- Will Microsoft publish a transparent post‑mortem that details whether the image was vendor‑created, which tools were used, and what procedural controls failed? Community trust depends on meaningful follow‑through, not just takedown. (news.ycombinator.com)

- Will Microsoft adjust Learn portal publishing policies to include explicit AI‑output flags, provenance, and mandatory review steps? This would be a practical public policy correction.

- Will other large publishers learn from this incident and require explicit attribution and provenance records when content is AI‑assisted? The incident can be a useful case study for policy design across the industry.

Conclusion

The Microsoft Learn “morged” diagram is more than an internet meme. It is a compact case study of the moment we are living through: major technology firms moving rapidly to integrate generative AI into content and product pipelines while governance, editorial controls, and attribution norms lag behind.

Vincent Driessen’s reaction (disappointment, and a request for attribution) captures the heart of the issue. He did not demand litigation; he requested a simple link back to his original explanation of a branching model he created for the benefit of the community. Microsoft’s rapid removal of the image was the right immediate step, but procedural transparency and policy fixes must follow.

For organizations that publish technical learning materials, the lessons are clear and low‑cost: treat AI outputs as drafts, require human domain and editorial review, log provenance, and credit original creators. For AI platform vendors and major publishers, the urgency is higher: your artifacts will seed future models and shape developer practice. The smarter, more responsible path is to pair creative automation with measurable editorial controls — otherwise we risk normalizing the very “AI slop” that undermines craftsmanship, trust, and the long tail of knowledge on which engineers rely. (nvie.com)