A new kind of civics lesson is unfolding in a fourth‑floor classroom in downtown Newark: instead of debating whether technology will replace jobs or rewrite the rules of the internet, seniors at North Star Academy Washington Park High School are being taught how to steer artificial intelligence so it doesn’t steer them.

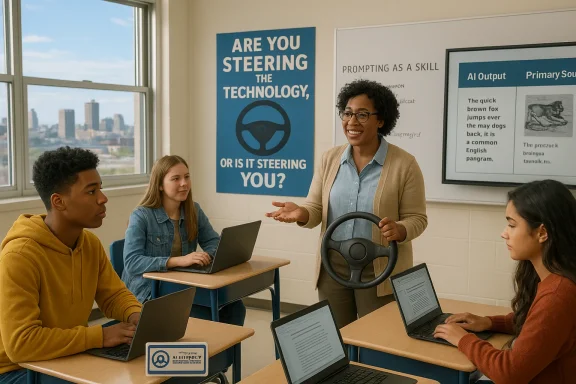

Source: San Juan Daily Star https://www.sanjuandailystar.com/po...cy-class-don-t-let-the-chatbot-think-for-you/

Background

Across the United States, schools are racing to add AI literacy to the curriculum, an effort that ranges from a single workshop on prompting to full semester‑long electives that treat generative models as tools with social, legal and pedagogical consequences. Educators describe these programs as a kind of “driver’s license” for AI: the goal is not to ban the technology, nor to hand students an autopilot, but to teach judgment about when to use a chatbot, when to rely on human reasoning, and how to spot the limits of polished, persuasive outputs.

The Newark course is a useful case study. Co‑taught by Mike Taubman and Scott Kern, teachers who have already folded AI tools into other classes, the elective uses a blend of practical exercises and classroom debate to do two things at once: train students in concrete skills such as prompt design and tool evaluation, and equip them to think about broader issues like authorship, intellectual property, and the civic rules that should govern AI deployment. The result is a curriculum that positions AI literacy as both a technical competency and a public responsibility.

Why AI literacy matters now

A technology that shapes decisions, quietly

Generative models and recommendation algorithms are no longer curiosities; they are scaffolding for how many teenagers research, write, create and socialize. Recent national surveys show a majority of U.S. teens have tried AI chatbots for schoolwork, entertainment or even emotional support. That rapid uptake creates both opportunity and risk: tools that can scaffold learning and expand curiosity can also shortcut the mental effort that builds deep understanding, and they can produce persuasive but incorrect answers.

Evidence from classroom experiments

Randomized classroom studies show the nuance. When students used large language models (LLMs) alone to digest reading passages, their retention and comprehension were measurably weaker than those of students who took notes by hand. A hybrid approach, note‑taking combined with LLM assistance, produced better outcomes than the LLM alone, suggesting these tools are most effective when integrated into active, evidence‑based study practices rather than relied upon as a one‑stop substitute for thinking.

Policy pressure and public programs

At the federal level, policymakers have signaled urgency: recent executive guidance has urged investment in foundational AI education for K‑12, and public‑private initiatives aimed at training teachers and funding classroom pilots are accelerating. Simultaneously, think tanks and research consortia warn that hasty, unregulated adoption could harm long‑term learning outcomes and worsen inequalities if access and safeguards aren’t prioritized.

Inside an AI literacy classroom

The driver’s‑license metaphor — practical, not perfect

At Washington Park, Taubman and Kern ask students a practical question: Are you steering the technology, or is it steering you? The metaphor works because it forces students to think about control, risk tolerance and rules of the road. Lessons begin with simple exercises, such as comparing passive content feeds to active searches and testing chatbot outputs against primary sources, and move quickly into higher‑order tasks: designing acceptable‑use policies for school, debating whether AI‑generated film scenes deserve human authorship credit, and prototyping safety guardrails.

Teaching prompt literacy and tool analysis

The class doesn’t treat chatbots like magical black boxes. Instead, students learn:

- How to write precise, context-rich prompts that reduce hallucinations and ambiguity.

- How to probe outputs for provenance: ask what evidence supports a claim and where the model might be making assumptions.

- To treat AI as an assistant for iterative thinking — for expanding questions, testing counterarguments, and suggesting research directions — rather than a final authority.
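
The first two habits in the list above can be made concrete with a small prompt template. The sketch below is illustrative only: `build_prompt` and its parameter names are hypothetical, not something the Newark class is reported to use. It assembles a prompt that states the context, the task and any constraints, and asks the model to name its evidence and flag assumptions.

```python
def build_prompt(task, context, constraints=(), ask_for_evidence=True):
    """Assemble a context-rich prompt from labeled parts (hypothetical helper)."""
    if not task.strip():
        raise ValueError("task must not be empty")
    # State who is asking and why before stating the task itself.
    lines = [f"Context: {context.strip()}", f"Task: {task.strip()}"]
    if constraints:
        lines.append("Constraints:")
        lines.extend(f"- {c}" for c in constraints)
    if ask_for_evidence:
        # Provenance probe: ask for supporting evidence and stated assumptions.
        lines.append(
            "For each claim, name the evidence behind it and flag any assumptions."
        )
    return "\n".join(lines)


prompt = build_prompt(
    task="Summarize the main argument of the assigned reading in 5 sentences.",
    context="I am a high school senior studying copyright and AI.",
    constraints=["Use plain language", "Say 'not sure' when unsure"],
)
print(prompt)
```

Keeping the parts labeled makes it easy for students to compare how a model's answer changes when one element, such as the constraints, is removed.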

Where the class draws the line

Teachers carve out AI‑free spaces intentionally. Initial critical thinking tasks, collaborative discussions, and first drafts remain human-first activities. The class uses AI for revision, for stress‑testing arguments, and for generating alternative perspectives, but not as a substitute for the student’s core thinking. This calibrated use reduces the risk of offloading mental effort while harnessing AI’s unique strengths: speed, synthesis, and ideation.

What the research says: benefits, limits and tradeoffs

Benefits when guided

When integrated thoughtfully, AI can:

- Lower entry barriers to complex topics by offering plain‑language explanations.

- Help students explore alternative perspectives and extend curiosity beyond classroom time.

- Scale individualized feedback for draft writing, where teacher bandwidth is limited.

Limits and harms

But the evidence also warns that:

- Overreliance weakens deep learning: students who substitute LLM summaries for note‑taking tend to remember and understand less.

- Hallucinations — confidently wrong outputs presented as facts — can mislead students unless cross‑checked against reliable sources.

- Academic integrity challenges arise when tools produce finished assignments that students pass off as their own thinking.

Legal and ethical headwinds that teachers must navigate

Authorship, copyright and the curriculum

The question of whether a human should get credit for AI‑generated movie scenes split the classroom. The debate mirrors a larger legal battleground: publishers and news organizations have sued AI developers over claims that models were trained on copyrighted works without permission. That litigation, along with evolving norms about disclosure and attribution, creates a layered compliance challenge for schools that use or teach AI.

Privacy, data and student safety

AI tools often depend on cloud services and third‑party models; schools must assess data flows, opt‑in consent, and whether student work or personal information could be used to fine‑tune external systems. Where models store conversational logs or retain memory across sessions, districts need clear policies to protect minors’ privacy.

Equity and access

AI’s promise is unequally distributed. Underfunded schools may struggle to afford premium tools or teacher training, widening the digital divide. Thoughtful programs must pair tools with professional development, curated curricula, and offline alternatives so that reliance on AI doesn’t become a proxy for resource gaps.

Practical classroom strategies: a starter playbook

Teachers and administrators should consider the following playbook when adding AI literacy:

- Define AI‑free and AI‑assisted moments. Reserve initial idea generation, debates and formative assessments for AI‑free work.

- Teach prompting as a skill. Use mini‑workshops where students practice iterative prompts, compare outputs, and critique model reasoning.

- Require source verification. Make cross‑checking model answers against primary documents an explicit rubric item.

- Use notes + AI as a twin track. Combine traditional note‑taking assignments with optional AI follow‑ups to check understanding.

- Deploy guardrails and consent-driven tools. Prefer platforms that allow local control, restrict data export, and provide teacher dashboards for oversight.

- Train teachers, not just students. Invest in professional learning communities where educators co‑design lesson plans and share tested apps.

- Build fairness checks into grading. Adjust rubrics to reward process and original reasoning, not just polished deliverables.
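
The grading item in this playbook, rewarding process over polish, comes down to simple weighted arithmetic. The sketch below assumes a hypothetical four-part rubric: the criteria names, the weights and the 0 to 4 rating scale are invented for illustration and do not come from any school's or district's policy.

```python
# Hypothetical weights (percentage points): process criteria outweigh polish.
RUBRIC_WEIGHTS = {
    "original_reasoning": 35,
    "source_verification": 25,
    "ai_use_reflection": 20,
    "final_polish": 20,
}


def rubric_score(ratings, weights=RUBRIC_WEIGHTS, scale_max=4):
    """Return a 0-100 score from per-criterion ratings on a 0..scale_max scale."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    for name in weights:
        if not 0 <= ratings[name] <= scale_max:
            raise ValueError(f"{name} must be between 0 and {scale_max}")
    # Weighted sum of ratings, normalized by the maximum achievable total.
    weighted = sum(weights[name] * ratings[name] for name in weights)
    return 100 * weighted / (scale_max * sum(weights.values()))


# A polished essay with little visible process ...
polished = {"original_reasoning": 1, "source_verification": 1,
            "ai_use_reflection": 0, "final_polish": 4}
# ... versus a rougher essay with well-documented thinking.
documented = {"original_reasoning": 4, "source_verification": 3,
              "ai_use_reflection": 4, "final_polish": 2}
print(rubric_score(polished), rubric_score(documented))  # prints 35.0 83.75
```

Under these assumed weights, the rougher but well-documented essay scores far higher than the polished one, which is the incentive the playbook item describes.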

Classroom culture: building habits that outlast tools

AI literacy is primarily about habits: skepticism, curiosity, and the habit of demanding sources. Teachers can embed these habits by:

- Making thinking visible: require students to submit a short reflection on how they used AI, what they verified, and what they changed as a result.

- Running red‑team days: intentionally attempt to break assignments using AI-generated shortcuts to illustrate failure modes.

- Encouraging metacognition: ask students not only what they learned but how they learned it and which tools helped or hindered their thinking.

Policy and system responses: beyond the classroom

Federal and philanthropic momentum

Recent federal guidance has encouraged foundational AI education for K‑12 and created incentive structures for public‑private partnerships that supply curricula and teacher training. Philanthropic grants and nonprofit toolkits have emerged to help districts pilot bespoke AI apps while retaining local control.

Research networks and evidence demands

A coordinated research agenda is essential. Short, high‑quality randomized classroom experiments, longitudinal studies on retention, and equity‑focused analyses will help answer crucial questions: does AI augment human learning over the long run, or does it simply create ephemeral gains for immediate tasks?

Local policy levers

Districts should adopt clear guidance:

- Acceptable‑use policies that define when and how AI may be used in coursework.

- Privacy impact assessments for vendor tools.

- Teacher training commitments and curricular integration timelines that avoid ad hoc, unregulated rollouts.

Strengths and potential risks — a critical assessment

Notable strengths

- Practical relevance: Teaching AI literacy prepares students for a labor market and civic life where algorithmic systems are omnipresent.

- Scalability of feedback: When used ethically, AI can give students iterative feedback otherwise impossible at scale.

- Engagement booster: AI can spark exploration and reduce initial barriers to complex topics, making learning more accessible.

Potential risks

- Shallow learning: Overreliance on model outputs can erode deep comprehension if schools fail to require active, human‑centered study practices.

- Misinformation amplification: Hallucinations and plausible fabrications can propagate misinformation to students seeking quick answers.

- Privacy and commercialization: Without strict controls, student interactions with third‑party AI may feed back into private datasets or commercial models, raising consent and surveillance questions.

- Legal uncertainty: Ongoing lawsuits and unsettled copyright precedents add a layer of risk for schools that reuse or redistribute AI‑generated content.

Recommendations for educators, districts and parents

- Prioritize teacher training before student rollout. Teachers are the gatekeepers who can translate research into classroom practice.

- Insist on transparent tools. Choose platforms that disclose data practices and allow local control over models when feasible.

- Institute process‑oriented grading. Reward students for visible reasoning, sources cited, and original drafts.

- Build an evidence review cadence. Establish a periodic review (every 6–12 months) of district AI policy informed by emerging research.

- Create accessible alternatives. Ensure students without regular device access can complete AI‑adjacent assignments without penalty.

- Engage students in policy design. Invite student voices into conversations about privacy, authorship and norms — they will be the primary users.

The larger civic lesson: technology as a subject of democracy

AI literacy classes do more than teach technical skills; they educate citizens. When students at Washington Park debate whether humans should be credited for AI‑generated film scenes or craft city‑level rules for AI use, they are practicing democracy. They are wrestling with the questions policymakers, technologists and legal scholars face: who owns creative labor, who is accountable when a model lies, and how do we align large systems with community values?

Teaching students to steer AI tools is, in that sense, an exercise in civic formation as much as it is a learning intervention. It trains young people to ask the right questions, demand transparency, and design equitable guardrails, all essential habits for a society reshaped by algorithmic influence.

Conclusion

The lesson emerging from early AI literacy pilots is deceptively simple: don’t let the chatbot think for you. That mantra captures a practical classroom ethic and a broader civic imperative. AI can extend curiosity, accelerate learning, and democratize access to complex knowledge, but only if schools teach students to use these tools deliberately, verify outputs systematically, and protect the social foundations of learning.

As districts scale AI literacy programs, success will not be measured by how many students can coax a perfect essay from a model, but by how many walk away with sharper instincts about when to trust, when to verify, and when to rely on the slow, messy process of reasoning that humans do best. The emerging curricula, like the one in Newark, offer a promising blueprint: blend hands‑on skills with ethical debate, pair AI with time‑tested study methods, and treat technological fluency as inseparable from civic competence.
