AI-powered search has already moved past “experimental” — it’s a commercial surface advertisers can buy into today, and the rules, formats, and measurement playbooks are changing faster than most marketing teams expect. In short: if you rely solely on classic keyword bids and exact-match strategies, you risk disappearing from the place many users now land when they want an answer, not a list of links. This guide explains what AI search engine advertising actually is, where you can buy inventory today, how the formats differ from traditional search ads, practical campaign tactics, measurement pitfalls, and the regulatory and brand-safety trade-offs every advertiser must manage right now.

Source: AOL.com Everything you need to know about advertising on AI search engines

Background: why AI answers became ad inventory

AI answer engines (generative summaries, conversational assistants, and “answer-first” interfaces) reframe discovery. Instead of serving a ranked list of links where the user decides next steps, these systems synthesize and deliver a single, conversational answer (often with citations), and then offer follow-ups or action options. That changes the unit of attention and the ad surface: there’s less page space and fewer link-clicks to capture, but higher-intent moments when a recommendation appears inside the conversation itself.

Platforms have reacted in two pragmatic ways:

- Reuse existing ad systems where possible (Google and Microsoft initially surfaced ads created via their existing ad platforms into AI responses).

- Test new ad placements designed for conversation (sponsored follow-up questions, “showroom” interactive units, and ad cards added at the bottom of answers).

What “AI search engine advertising” actually means

At its simplest, AI search engine advertising means buying paid placements inside AI-driven search or answer experiences; it does not mean using AI to write your ads. That distinction matters: this is about where the ad appears (within a synthesized answer or conversational thread) and how platforms choose to label and surface it, not about whether your creative used a generative tool.

Key characteristics that separate AI search ads from traditional search ads:

- Contextual relevance is judged at the conversation level, not single queries.

- Ads often include an explainable introduction or “ad voice” describing why the assistant is recommending the ad.

- Some formats preserve editorial independence by keeping the AI-generated answer separate from sponsored follow-ups; others integrate product cards into the response with clear “Sponsored” labels.

Who’s selling AI search ad inventory today

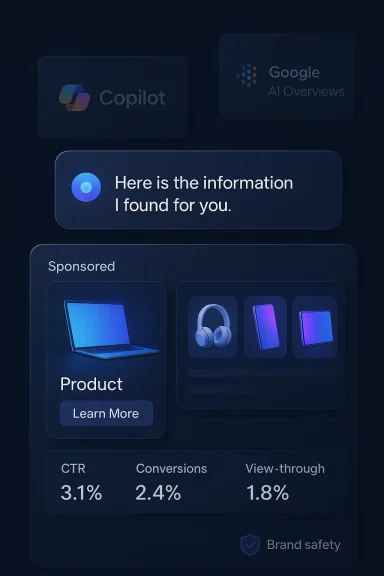

Right now there are three major surfaces advertisers can (or soon will) use:

- Microsoft Copilot: Copilot integrates advertising via Microsoft Advertising inventory. Ads typically appear below answers and are introduced with a contextual ad voice that explains relevance to the user’s conversation. Microsoft reports improved relevance and early performance boosts when ads are surfaced inside Copilot.

- Google AI Overviews / AI Mode: Google uses AI Overviews (synthesized summaries in Search and AI Mode) to surface product and service ads pulled from existing Search, Shopping, or Performance Max campaigns. Eligibility leans on automated, AI-friendly campaign setups (broad match keywords, AI Max for Search, Performance Max, and Dynamic Search Ads for certain placements). Google frames these ads as helpful and labels them “Sponsored.”

- Perplexity: Perplexity pioneered sponsored follow-up questions and related placements as a monetization test; the format is typically a clearly labeled “sponsored” follow-up that triggers an AI-generated answer when clicked. Perplexity has run invite-only pilots and bills primarily on CPM/awareness models rather than pure CPC. Multiple industry write-ups and platform statements indicate Perplexity opened limited ad tests in late 2024 and ran closed betas through 2025.

How the main platforms differ — format, eligibility, and measurement

Microsoft Copilot

- Placement & format: Ads usually appear below the assistant’s answer and may be interactive showrooms or multimedia cards. Copilot uses an “ad voice” to explain the recommendation, improving transparency and potentially trust.

- Eligibility: Microsoft leverages existing Microsoft Advertising inventory; in many cases existing responsive and multimedia ad types can serve into Copilot if contextual signals match the conversation.

- Performance signals: Microsoft’s promotional materials and decks cite higher CTRs and conversion lifts for Copilot placements versus traditional search (platform-specific internal numbers have been quoted widely by Microsoft and industry partners). These are platform-supplied metrics — valuable but inherently self‑reported.

Google AI Overviews / AI Max for Search

- Placement & format: Ads can appear above, below, or within the AI Overview. Within the summary, Google introduces sponsored products or solutions drawn from Search, Shopping, and Performance Max inventories.

- Eligibility: To appear in AI Overviews, advertisers typically need to use more automated/AI-ready campaign types: broad match keywords, AI Max for Search, Performance Max, or Dynamic Search Ads (DSA) for relevant content. Google’s guidance encourages automated bidding and signal-based targeting over exact-match discipline.

- Measurement: Google has said it will extend reporting over time but currently treats AI Overview placements as a top-of-page inventory bucket within existing reporting; granular, per-placement reporting for AI Overviews is limited today. Independent analysts recommend treating AI Overview traffic as part of broader Search/Performance Max channel analysis until separate reporting appears.
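The eligibility guidance above can be approximated as a quick audit over an exported campaign list. This is a minimal sketch that rests on several assumptions. The `Campaign` fields and the type and bid-strategy labels are hypothetical stand-ins for whatever your export contains. The rule itself (automated campaign types qualify; plain Search campaigns need broad match plus smart bidding) is a rough heuristic and does not reproduce Google's actual eligibility logic.

```python
from dataclasses import dataclass, field
from typing import List, Set, Tuple

# Hypothetical labels: map these to the values in your own campaign export.
AUTOMATED_TYPES = {"PERFORMANCE_MAX", "AI_MAX_SEARCH", "DSA"}
SMART_BIDDING = {"MAXIMIZE_CONVERSIONS", "MAXIMIZE_CONVERSION_VALUE", "TARGET_CPA", "TARGET_ROAS"}


@dataclass
class Campaign:
    name: str
    campaign_type: str                                  # e.g. "SEARCH", "PERFORMANCE_MAX"
    bid_strategy: str                                   # e.g. "MANUAL_CPC", "TARGET_CPA"
    match_types: Set[str] = field(default_factory=set)  # keyword match types in use


def likely_ai_eligible(c: Campaign) -> bool:
    """Heuristic: automated types qualify; Search needs broad match plus smart bidding."""
    if c.campaign_type in AUTOMATED_TYPES:
        return True
    return "BROAD" in c.match_types and c.bid_strategy in SMART_BIDDING


def audit(campaigns: List[Campaign]) -> Tuple[List[str], List[str]]:
    """Split campaign names into (likely eligible, needs changes)."""
    eligible = [c.name for c in campaigns if likely_ai_eligible(c)]
    review = [c.name for c in campaigns if not likely_ai_eligible(c)]
    return eligible, review
```

For example, `audit([Campaign("pmax-core", "PERFORMANCE_MAX", "TARGET_ROAS"), Campaign("search-exact", "SEARCH", "MANUAL_CPC", {"EXACT"})])` puts `pmax-core` in the first list and `search-exact` in the second. An exact-match, manual-CPC Search campaign is exactly the kind of setup the guidance above steers away from.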

Perplexity

- Placement & format: Sponsored follow-up questions in the “Related” section, sidebar display units, and invite-only test placements. When a user clicks a sponsored related question, they get an AI-generated answer inside Perplexity (the advertiser does not control answer text).

- Eligibility & pricing: Piloted with select advertisers and agencies; reported pricing is often CPM-focused and higher than typical display because the format prioritizes awareness in an answer-first environment. Conversion tracking is limited inside the platform; advertisers rely on post‑click journey unification and external measurement platforms to tie traffic back to outcomes.
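Because in-platform conversion tracking is limited, one common workaround is to tag each landing URL. Site analytics can then attribute the session to the AI placement. Below is a minimal sketch using Python's standard `urllib.parse` and the widely used `utm_*` parameter convention. The values `perplexity`, `ai_sponsored_followup`, and `q3_test` are illustrative names, not values any platform requires.

```python
from urllib.parse import parse_qsl, urlencode, urlparse, urlunparse


def tag_landing_url(url: str, source: str, medium: str, campaign: str) -> str:
    """Append UTM parameters to a landing URL, keeping any existing query params."""
    parts = urlparse(url)
    query = dict(parse_qsl(parts.query))  # note: repeated keys collapse to the last value
    query.update({"utm_source": source, "utm_medium": medium, "utm_campaign": campaign})
    return urlunparse(parts._replace(query=urlencode(query)))


def read_utm(url: str) -> dict:
    """Extract only the utm_* parameters from a URL, e.g. one found in a server log."""
    return {k: v for k, v in parse_qsl(urlparse(url).query) if k.startswith("utm_")}


tagged = tag_landing_url("https://example.com/pricing?ref=a",
                         "perplexity", "ai_sponsored_followup", "q3_test")
print(tagged)
print(read_utm(tagged))
```

Existing parameters such as `ref` are preserved ahead of the UTM fields. If your URLs repeat a query key, extend the sketch, since the dictionary keeps only the last value.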

What the early performance signals say — and why to treat them cautiously

Several platform and industry documents highlight sizable performance improvements for ads shown inside AI surfaces. Microsoft public materials and partner decks have quoted large percentage lifts in CTR and conversion when ads are shown in Copilot versus traditional search placements; Google has reported improved satisfaction when AI Overviews include helpful ads; Perplexity claims strong engagement with sponsored follow-ups in closed pilots.

Caveats:

- Platform-provided uplift figures are useful but are not independent audits. Treat them as directional, not definitive.

- Reporting granularity is still limited. Google and Microsoft currently aggregate AI placements into broader reporting channels; Perplexity’s limited conversion tracking forces reliance on external measurement.

- Early-adopter advantage carries novelty effects: high CTRs can reflect curiosity and novelty, not steady-state performance.

- Measurement leakage and attribution ambiguity are common: AI answers can shorten journeys, change click behaviour (less clicking, more on-platform engagement), and complicate multi-touch attribution.
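One way to separate novelty from steady-state performance is to compare pooled CTR in the first weeks of a test with pooled CTR in the most recent weeks. Below is a minimal sketch, assuming you can export weekly impressions and clicks for the AI placement. The two-week windows and the 20% decay threshold are arbitrary illustrative choices, not industry benchmarks.

```python
from typing import List, Tuple

Week = Tuple[int, int]  # (impressions, clicks) for one week


def window_ctr(weeks: List[Week], start: int, end: int) -> float:
    """Pooled CTR over weeks[start:end] (total clicks / total impressions)."""
    impressions = sum(w[0] for w in weeks[start:end])
    clicks = sum(w[1] for w in weeks[start:end])
    return clicks / impressions if impressions else 0.0


def novelty_decay(weeks: List[Week], window: int = 2, threshold: float = 0.20) -> Tuple[float, bool]:
    """Return (relative CTR drop from first to last window, flag if drop > threshold)."""
    if len(weeks) < 2 * window:
        raise ValueError("need at least two non-overlapping windows of data")
    early = window_ctr(weeks, 0, window)
    recent = window_ctr(weeks, len(weeks) - window, len(weeks))
    drop = (early - recent) / early if early else 0.0
    return drop, drop > threshold
```

Pooling clicks and impressions, rather than averaging weekly CTRs, keeps a low-volume week from skewing the comparison. A flagged drop is a prompt to extend the test before scaling, not proof that novelty caused the decline.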

Tactical playbook: how to test AI search ads without wrecking your budget

Treat early AI search campaigns like a lab. Here’s a practical sequence you can implement in the next 30–60 days.

- Inventory check (Day 1–3)

  - Audit current Search, Performance Max, and DSA campaigns.

  - Flag campaigns using automated bidding and broad match; these are more likely to be eligible for AI Overview inventory.

- Measurement foundation (Day 1–7)

  - Ensure conversion tracking is robust (server-side where possible), and map events to revenue or qualified-lead values.

  - Implement unified user-level measurement (identity stitching) to trace ad-originated journeys across sessions and devices; this is critical for Perplexity-like environments.

- Set a clear hypothesis and guardrails (Day 1)

  - Example hypothesis: “Performance Max campaigns with broad match will generate 15% more high-intent conversions through AI Overviews at similar CPA.”

  - Set upper and lower spend limits and a 2–4 week test window.

- Launch small, learn fast (Day 7–30)

  - Deploy a narrow set of campaigns into AI-eligible inventory (Performance Max with creative diversity; DSA for content-relevant pages).

  - For Microsoft Copilot, keep existing responsive and multimedia ad assets, then monitor conversational placements for contextual performance.

- Creative & UX guidance

  - Build concise, benefit-first headlines and images that work when read inside a conversational summary.

  - Prepare landing pages that complete the conversational promise: short answer, clear CTA, immediate value (download, booking, contact).

- Optimize & iterate (Week 4 onwards)

  - Monitor top-of-funnel signals (unique impressions, qualifying queries) and bottom-line conversion behavior.

  - Exclude poor-performing search terms and refine brand controls (Google’s AI Max provides brand controls to prevent unwanted brand associations).

- Scale only with transparency

  - Push spend up only when ROI is stable and reporting provides visibility into the customer journey. Expect to run concurrent experiments across Google, Microsoft, and Perplexity pilots to compare risk/reward.
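The hypothesis step can be checked with a standard two-proportion z-test plus a CPA comparison. Below is a minimal sketch, assuming you run a control cell alongside the AI-eligible test cell and export clicks, conversions, and spend for each. The sketch reads “15% more conversions” as a 15% higher click-to-conversion rate. The 10% CPA tolerance and the 0.05 significance level are illustrative guardrails, not recommendations from any platform.

```python
import math


def two_proportion_z(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rates (pooled variance).

    Returns (relative lift of B over A, z statistic, p-value).
    Assumes the control cell (A) has at least one conversion.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("no variance: both cells convert at 0% or 100%")
    z = (p_b - p_a) / se
    # Standard normal CDF via erf: Phi(x) = 0.5 * (1 + erf(x / sqrt(2))).
    p_value = 2 * (1 - 0.5 * (1 + math.erf(abs(z) / math.sqrt(2))))
    return (p_b - p_a) / p_a, z, p_value


def cpa(spend, conversions):
    """Cost per acquisition; infinite when there were no conversions."""
    return spend / conversions if conversions else math.inf


def evaluate(control, test, target_lift=0.15, cpa_tolerance=0.10, alpha=0.05):
    """Check the hypothesis for two cells given as dicts with clicks, conversions, spend.

    'Supported' means: observed lift >= target, significant at alpha, and test CPA
    no more than cpa_tolerance above control (the "similar CPA" guardrail).
    """
    lift, z, p = two_proportion_z(control["conversions"], control["clicks"],
                                  test["conversions"], test["clicks"])
    cpa_change = cpa(test["spend"], test["conversions"]) / cpa(control["spend"], control["conversions"]) - 1
    return {
        "lift": lift,
        "z": z,
        "p_value": p,
        "cpa_change": cpa_change,
        "supported": lift >= target_lift and p < alpha and cpa_change <= cpa_tolerance,
    }
```

Take a control cell with 400 conversions from 10,000 clicks and a test cell with 480 from 10,000. The observed lift is 20%, and the difference is significant at 0.05. Requiring the point estimate to beat the target is a simplification. A stricter design would size the test in advance or test against the 15% target directly.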

Creative, bidding, and targeting guidance

- Move away from micro-managed exact-match strategies. AI placements reward signal-based relevance (conversation context, assets) and smart bidding. Give automated campaigns room to learn, but maintain negative keyword and URL exclusions to prevent brand-safety issues.

- Use a diverse asset set: images, short videos, and multiple headlines perform better when platforms can assemble the most relevant combination (Performance Max and Microsoft multimedia formats benefit from asset diversity).

- Monitor search term and qualifying query reports (or the available equivalents) daily in test windows — automation can learn harmful associations quickly if unchecked.
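Part of the daily search-term review can be automated. Below is a minimal sketch, assuming a search-term report exported as rows with `term`, `clicks`, and `conversions`. The negative and sensitive word lists and the 50-click threshold are hypothetical placeholders. Matching is on single whole words only, so multi-word negatives would need extra handling.

```python
import re
from typing import Dict, Iterable, List, Set, Tuple

# Hypothetical word lists: replace with your own negatives and sensitive topics.
NEGATIVE_TERMS = {"free", "jobs", "lawsuit"}
SENSITIVE_TERMS = {"diagnosis", "election", "depression"}


def tokens(query: str) -> Set[str]:
    """Lowercase word tokens of a search term."""
    return set(re.findall(r"[a-z0-9']+", query.lower()))


def screen_terms(rows: Iterable[Dict], min_clicks_without_conversion: int = 50) -> List[Tuple[str, str]]:
    """Return (term, reason) pairs for rows that need human review, in report order."""
    flagged = []
    for row in rows:
        words = tokens(row["term"])
        if words & SENSITIVE_TERMS:
            flagged.append((row["term"], "sensitive topic"))
        elif words & NEGATIVE_TERMS:
            flagged.append((row["term"], "matches negative keyword"))
        elif row["clicks"] >= min_clicks_without_conversion and row["conversions"] == 0:
            flagged.append((row["term"], "clicks without conversions"))
    return flagged
```

Sensitive-topic matches are checked first so they are never masked by a more routine reason. The output is a review queue; the decision to add an exclusion stays with a person.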

Measurement and attribution — the new weak point

Expect measurement headaches and plan for them.

- Perplexity often charges on CPM and lacks native conversion tracking; tie performance back to site analytics and session-stitching tools. Use first-party signals (email capture, micro-conversions) to link AI clicks to outcomes.

- Google and Microsoft currently fold AI placements into existing report channels — tag experiments and use campaign-level comparisons rather than assuming a separate “AI placements” report exists yet.

- For any ad visible inside a synthesized answer, expect longer on-platform engagement, fewer immediate clicks, and a more diffuse downstream conversion path. Design KPIs to capture that nuance (assisted conversions, view-through conversions, lifetime value).
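Linking AI-originated clicks to later conversions through a first-party key is one way to surface the assisted conversions described above. Below is a minimal sketch with three assumptions. First, sessions and conversions share a hashed, normalized email captured on your own forms. Second, each session's source comes from your own URL tagging. Third, the source names (`perplexity`, `copilot`) and the 30-day lookback are illustrative. This last-touch versus assist split is a simple illustration, not any platform's attribution model.

```python
import hashlib
from datetime import datetime, timedelta
from typing import Dict, Iterable, Set, Tuple

Session = Tuple[str, datetime, str]       # (user key, timestamp, traffic source)
Conversion = Tuple[str, datetime, float]  # (user key, timestamp, value)


def user_key(email: str) -> str:
    """SHA-256 of a trimmed, lowercased email, used as a first-party join key."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def credit_ai_touches(sessions: Iterable[Session], conversions: Iterable[Conversion],
                      ai_sources: Set[str], lookback_days: int = 30) -> Dict[str, float]:
    """Split conversions into AI last-touch vs. AI-assisted, with counts and values."""
    sessions = list(sessions)
    window = timedelta(days=lookback_days)
    totals = {"last_touch": 0, "last_touch_value": 0.0, "assisted": 0, "assisted_value": 0.0}
    for key, conv_time, value in conversions:
        # This user's sessions inside the lookback window, oldest first.
        path = sorted((t, src) for k, t, src in sessions
                      if k == key and conv_time - window <= t <= conv_time)
        if not path:
            continue  # no stitched journey for this conversion
        sources = [src for _, src in path]
        if sources[-1] in ai_sources:
            totals["last_touch"] += 1
            totals["last_touch_value"] += value
        elif any(src in ai_sources for src in sources[:-1]):
            totals["assisted"] += 1
            totals["assisted_value"] += value
    return totals
```

A user who first arrives from a sponsored follow-up and converts days later via organic search counts as "assisted". In a last-click report that conversion would not be credited to the AI placement.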

Brand safety, user trust, and regulatory risk

Advertising inside answer surfaces raises unique risks:

- Perception and trust: Users expect neutral answers. Platforms mitigate this with “ad voice” explanations and clear “Sponsored” labels; advertisers should not try to blur the line between answer and ad. Microsoft and Google both emphasize labeling and ad voice as trust-preserving mechanisms.

- Sensitive queries: OpenAI has explicitly excluded sensitive categories (health, politics, mental health) from ad eligibility in early ChatGPT tests; advertisers should avoid bidding into queries that touch regulated or sensitive topics.

- Legal/regulatory: Expect increased scrutiny around disclosure, consumer protection, and political or health ads in AI contexts. Finance, legal, medical, and political advertisers should consult counsel and create pre-approval workflows before participating.

Pricing and budgeting expectations

- Early AI placements (Perplexity’s closed beta examples) often command premium CPMs because these placements are novel, high-attention, and brand-centered. Reports indicate CPMs significantly higher than standard display; early Perplexity pilots reportedly ran $50+/CPM in some tests. Expect to allocate a premium test budget and focus on awareness + assisted-conversion measurement initially.

- Google and Microsoft will price via their existing auction mechanics when AI placements are surfaced from Search/Shopping/Performance Max. The cost signal will look like search (CPC) in those systems, with variations based on competition and creative relevance.
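To compare a CPM-priced placement with CPC-priced search inventory, it helps to convert both to a cost per click and a cost per conversion. Below is a small sketch of that arithmetic. The $50 CPM echoes the pilot figure reported above. The 1% CTR, 4% conversion rate, and $2.50 search CPC are purely illustrative assumptions.

```python
def effective_cpc(cpm: float, ctr: float) -> float:
    """Cost per click implied by a CPM price: CPM / (1000 * CTR)."""
    return cpm / (1000 * ctr)


def effective_cpa(cost_per_click: float, conversion_rate: float) -> float:
    """Cost per conversion given a cost per click and a click-to-conversion rate."""
    return cost_per_click / conversion_rate


def breakeven_ctr(cpm: float, target_cpc: float) -> float:
    """CTR at which a CPM buy costs the same per click as a target CPC."""
    return cpm / (1000 * target_cpc)


# Illustrative inputs only: $50 CPM, 1% CTR, 4% conversion rate, $2.50 search CPC.
cpc = effective_cpc(50, 0.01)
print(f"effective CPC ${cpc:.2f}, effective CPA ${effective_cpa(cpc, 0.04):.2f}")
print(f"CTR needed to match a $2.50 CPC: {breakeven_ctr(50, 2.50):.1%}")
```

With these inputs, a $50 CPM at 1% CTR works out to $5 per click. At a 4% conversion rate that is $125 per conversion. The same buy would need a 2% CTR to match a $2.50 search CPC. This is why CPM-priced tests are usually judged on awareness and assisted conversions rather than direct CPA.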

Practical checklist before you buy

- Audit existing creative assets for conversational use.

- Verify that server-side and client-side conversion tracking are both firing and reconciled into one deduplicated count.

- Establish a 4–6 week test window, a defined hypothesis, and a max spend cap.

- Prepare negative keyword lists, URL exclusions, and brand safety controls.

- Build post-click experiences to complete the conversational promise (short answers, clear CTAs).

- Legal & compliance: get pre-approvals for verticals that might be restricted.
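The checklist can also live as a small pre-launch gate in whatever tooling your team already uses. Below is a minimal sketch. The `LaunchPlan` class and its fields are hypothetical, and the 4–6 week window mirrors the checklist item above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class LaunchPlan:
    """Hypothetical pre-launch record mirroring the checklist items."""
    hypothesis: str
    window_weeks: int
    max_spend: float
    negative_keywords: List[str] = field(default_factory=list)
    conversion_tracking_verified: bool = False
    legal_approved: bool = False  # set True once compliance signs off (or confirms none is needed)

    def problems(self) -> List[str]:
        """Return the checklist items this plan does not yet satisfy."""
        issues = []
        if not self.hypothesis.strip():
            issues.append("missing hypothesis")
        if not 4 <= self.window_weeks <= 6:
            issues.append("test window outside 4-6 weeks")
        if self.max_spend <= 0:
            issues.append("no spend cap")
        if not self.negative_keywords:
            issues.append("empty negative keyword list")
        if not self.conversion_tracking_verified:
            issues.append("conversion tracking not verified")
        if not self.legal_approved:
            issues.append("awaiting legal/compliance approval")
        return issues
```

A plan is ready to launch when `problems()` returns an empty list. The defaults are deliberately strict, so a team has to assert tracking and compliance status explicitly rather than inherit it.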

What the near future looks like (and how to prepare)

- Expect more self-serve ad access to appear across platforms during 2026, with OpenAI and Perplexity expanding partner programs and Google and Microsoft refining AI-specific reporting.

- Programmatic ad stacks will likely build adapters for answer surfaces; ad tech vendors are already integrating AI placements into their roadmap.

- SEO teams must evolve into Answer Engine Optimization (AEO) teams — structured content, authoritative citations, and API-driven product data will determine whether a brand appears in synthesized answers and AI citations.
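Structured product data is one concrete starting point for that work. Below is a minimal sketch that emits schema.org `Product` markup as JSON-LD using Python's standard `json` module. The product values are placeholders. The sketch makes no claim about how any particular answer engine reads or weights such markup.

```python
import json


def product_jsonld(name: str, description: str, sku: str, brand: str,
                   price: float, currency: str, url: str, in_stock: bool = True) -> str:
    """Build schema.org Product markup (with a single Offer) as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "sku": sku,
        "brand": {"@type": "Brand", "name": brand},
        "offers": {
            "@type": "Offer",
            "url": url,
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock",
        },
    }
    return json.dumps(data, indent=2)


def script_tag(jsonld: str) -> str:
    """Wrap JSON-LD in the script element embedded in the product page's HTML."""
    return f'<script type="application/ld+json">\n{jsonld}\n</script>'


print(script_tag(product_jsonld("Acme Trail Shoe", "Lightweight trail running shoe",
                                "ACME-TS-01", "Acme", 89.5, "USD",
                                "https://example.com/trail-shoe")))
```

Generating the markup from the same product feed that powers Shopping or Performance Max campaigns keeps prices and availability consistent between paid placements and the page content that answer engines may cite.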

Final recommendations — a conservative, high-value approach

- Start small and instrument heavily. Use controlled experiments over big-budget, unmeasured bets.

- Prioritize business outcomes, not novelty metrics. Track revenue, lead quality, and post-click behavior beyond the immediate AI interaction.

- Keep brand safety and disclosure front-and-center: insist on explicit “Sponsored” labels and ad voice transparency in creative briefs.

- Invest in content that earns citations — being referenced organically in AI answers will often outperform paid placements over the long term.