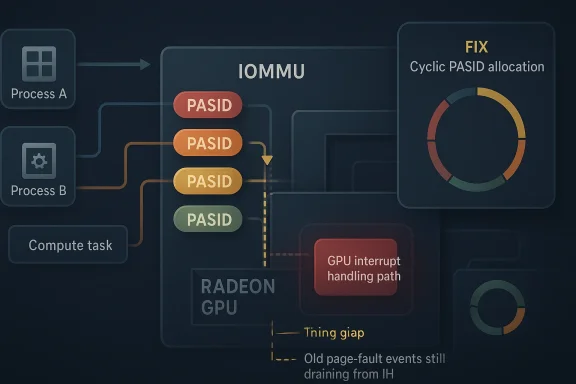

In the Linux graphics stack, CVE-2026-31462 is a reminder that even a small ordering bug in an advanced driver can ripple into visible instability, especially when the GPU is juggling multiple compute contexts in rapid succession. The flaw in drm/amdgpu centers on PASID reuse, where a newly launched process can inherit a hardware state that is still being unwound from a previous process that used the same PASID. The kernel fix changes allocation behavior so PASIDs are handed out cyclically, reducing the chance of immediate reuse while old page-fault activity may still be draining from the interrupt handler ring buffer.

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center

Background

The AMDGPU driver is one of the Linux kernel’s most complex graphics subsystems because it must coordinate display, compute, memory management, fault handling, and process isolation across a wide range of Radeon hardware. In that environment, PASID (Process Address Space ID) is the label that lets the GPU and IOMMU track which process owns a given virtual address context. When PASID handling is correct, the driver can safely multiplex GPU work across applications and containers; when it is not, the boundary between one process’s work and another’s can become blurry in exactly the wrong way.

The vulnerability description says the problem appears when a process exits while page faults are still pending in the IH ring buffer, then frees its PASID before the hardware has fully finished processing those fault events. If the next process receives the same PASID immediately, the GPU can be pushed into a hardware state that still reflects the prior owner. That is less a classic memory corruption story and more a synchronization and lifecycle issue, but in a kernel driver those distinctions matter less than the consequence: unpredictable behavior in the GPU’s fault and interrupt machinery.

Kernel maintainers resolved the issue by switching PASID allocation to an idr cyclic allocator, mirroring the behavior used for kernel PIDs. That design choice is significant because it avoids reissuing the same identifier immediately after release, which gives in-flight hardware activity time to settle before the label can be reused. In other words, the fix does not pretend the GPU can be made instantly quiescent; instead, it works around the race by making reuse less likely at the precise moment reuse would be dangerous.

The CVE was published by kernel.org and surfaced in the NVD dataset on April 22, 2026, with references to stable kernel commits that backported the fix. That timing matters because Linux security advisories often land in waves: first upstream, then into stable trees, then into downstream vendor advisories, and finally into distribution updates that users actually install. For AMDGPU issues, that path is especially important because the kernel driver is usually the first place the bug is corrected, while the broader ecosystem may lag behind by days or weeks.

What PASID Actually Does

PASID is easy to gloss over as a low-level acronym, but it is central to how modern GPU compute sessions stay isolated. In practical terms, PASID lets the GPU tag transactions with a process identity so the IOMMU and driver can map them to the correct virtual memory context. Without that tag, GPU work from different processes would be much harder to disentangle, especially once page faults, retries, and recovery code enter the picture.

This is why the bug is subtle rather than spectacular. The GPU is not simply “using the wrong process”; it is receiving a new process with the same identifier while stale fault notifications may still be propagating. The result can be an interrupt-handling mismatch that looks, from the outside, like a flaky GPU, a transient hang, or an error that appears only under heavy process churn. That kind of failure mode is exactly what makes driver bugs so painful to triage.
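To make the tagging idea concrete, here is a minimal sketch of a PASID-keyed lookup. It is a toy model only: the class names `AddressSpace` and `PasidTable`, the process names, and the page numbers are all hypothetical and do not correspond to amdgpu or IOMMU data structures.

```python
from dataclasses import dataclass, field


@dataclass
class AddressSpace:
    """Hypothetical stand-in for one process's GPU-visible page table."""
    owner: str
    pages: dict = field(default_factory=dict)  # virtual page -> physical page


class PasidTable:
    """Toy PASID -> address-space lookup (an illustration, not driver code)."""

    def __init__(self):
        self._spaces = {}

    def bind(self, pasid, space):
        self._spaces[pasid] = space

    def unbind(self, pasid):
        self._spaces.pop(pasid, None)

    def translate(self, pasid, virt_page):
        """Return (owner, physical page), or None to signal a page fault."""
        space = self._spaces.get(pasid)
        if space is None or virt_page not in space.pages:
            return None
        return space.owner, space.pages[virt_page]


table = PasidTable()
table.bind(1, AddressSpace("proc-a", {0x10: 0xA0}))
table.bind(2, AddressSpace("proc-b", {0x10: 0xB0}))

# The same virtual page resolves differently depending on the PASID tag.
print(table.translate(1, 0x10))
print(table.translate(2, 0x10))
```

The point of the sketch is that the PASID is the only thing distinguishing the two translations, which is why handing the same number to a new owner too early can confuse whatever is still keyed on it.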

Why immediate reuse is risky

Immediate reuse is risky because hardware and software do not always retire state at the same speed. A process can exit in microseconds, while its last GPU fault record may still be sitting in the ring buffer or being processed by a deferred handler. If the same PASID is handed out again before that cleanup completes, the driver may misattribute the remaining events.

- Old fault events can outlive the process that caused them.

- Hardware interrupts may arrive after the PASID was freed.

- New work can inherit misleading state if the identifier is reused too quickly.

- Recovery paths often become harder to reason about when identity is recycled aggressively.
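The sequence in the list above can be walked through with a small simulation. This is a sketch under simplifying assumptions: a plain queue stands in for the IH ring buffer, a dictionary stands in for PASID ownership, and the names `ih_ring`, `owner_of`, `raise_fault`, and `drain_ring` are invented for illustration.

```python
from collections import deque

ih_ring = deque()   # pending fault events, oldest first (toy ring buffer)
owner_of = {}       # PASID -> name of the process currently holding it


def raise_fault(pasid, addr):
    """Queue a fault event tagged with the faulting PASID."""
    ih_ring.append((pasid, addr))


def drain_ring():
    """Attribute each pending fault to whoever holds the PASID at drain time."""
    attributed = []
    while ih_ring:
        pasid, addr = ih_ring.popleft()
        attributed.append((owner_of.get(pasid, "<no owner>"), addr))
    return attributed


# Process A holds PASID 5 and faults just before exiting.
owner_of[5] = "proc-a"
raise_fault(5, 0x1000)
del owner_of[5]              # A exits; PASID freed while the fault is queued

# Immediate reuse: process B gets the same PASID before the ring drains.
owner_of[5] = "proc-b"
misattributed = drain_ring()

# Delayed reuse: same sequence, but B is given a different PASID.
owner_of.clear()
owner_of[5] = "proc-a"
raise_fault(5, 0x1000)
del owner_of[5]
owner_of[6] = "proc-b"
harmless = drain_ring()

print(misattributed)  # the stale fault lands on proc-b
print(harmless)       # the stale fault finds no owner and can be dropped
```

In the first run the leftover fault is charged to the new process; in the second it matches no live owner, which is the outcome that delayed reuse is meant to make more likely.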

How the Vulnerability Emerged

The published description is concise, but it points to a familiar class of bug in kernel code: a resource is freed on one path while asynchronous work involving that resource still exists on another path. In this case, the freed resource is the PASID, and the asynchronous work is fault handling tied to the GPU’s interrupt queue. Once the identifier can be reused immediately, the driver loses the small safety window it needs to keep process lifetimes separated.

The upstream fix appears to have been cherry-picked from commit 8f1de51f49be692de137c8525106e0fce2d1912d, and stable references point to a backported version in kernel.org’s stable tree. That pattern is important because it tells administrators this is not merely theoretical code cleanup; it is the kind of patch maintainers considered fit for production kernels. Stable-tree adoption is often the best signal that a bug has real operational impact.

The role of the IH ring buffer

The IH (interrupt handler) ring buffer is the queue where the GPU and driver exchange interrupt-related events. If a page fault arrives late, after the owning process has already gone away, it can still be processed against a now-defunct identity. That is the narrow timing window this CVE addresses.

Why the patch uses cyclic allocation

The cyclic allocator is a deliberately conservative choice. Rather than reusing the lowest available identifier right away, it walks forward, which statistically pushes reuse farther away in time. That does not eliminate all possible ordering hazards, but it sharply reduces the probability that the next process inherits the very same identifier while the hardware is still unwinding the previous one.

Technical Impact on AMDGPU

From a technical standpoint, the immediate consequence of this bug is not necessarily code execution or data theft. The description points to interrupt issues and a hardware state mismatch, which usually translates into instability, failed submissions, GPU faults, or recovery behavior that does not line up cleanly with the process that triggered it. In driver land, that can be enough to break a desktop session or poison a compute workload.

The wider significance lies in how the flaw touches the driver’s process isolation model. GPU compute users, containerized ML workloads, and desktop applications all depend on the driver assigning and reclaiming context identifiers in a predictable way. If one context can inadvertently overlap with another because of a too-early identifier reuse, then the system’s fault-handling assumptions weaken.

Potential symptoms in the field

Symptoms will likely vary depending on workload and timing, which makes this class of bug frustrating for users. One system may show a single page fault and recover cleanly, while another sees repeated GPU hangs after rapid process churn. The same underlying race can look benign on one workstation and catastrophic on another.

- Sporadic GPU page-fault messages

- Interrupt handling anomalies

- Context recovery delays

- Occasional application hangs under rapid process restarts

- Hard-to-reproduce instability in compute-heavy sessions

Enterprise and Workstation Relevance

For enterprises, the most important angle is reliability under load. GPU-accelerated virtualization, rendering farms, scientific computing, and AI inference stacks often create and destroy GPU-bound processes quickly, which is exactly the pattern that stresses PASID lifecycle handling. Even if the vulnerability does not map to a straightforward confidentiality breach, a driver bug that destabilizes a shared workstation or compute node can still become a meaningful availability issue.

For consumer desktops, the effect is subtler but still serious. A single gaming or content-creation machine may not constantly churn processes fast enough to trigger the worst-case sequence, yet when it does happen, the user sees the symptoms as freezes, resets, or unexplained crashes rather than as an obvious security event. That distinction matters because many users will never connect a kernel CVE to what looks like ordinary GPU flakiness.

Where the risk concentrates

The risk concentrates where process turnover and GPU interrupts are both frequent. That includes container hosts, developer machines, and systems running multiple GPU-aware applications in succession. It also includes environments where recovery paths are already stressed, because those systems are less tolerant of any additional state confusion.

- GPU compute servers with rapid job turnover

- Workstations running many short-lived GPU tasks

- Virtualized or containerized graphics stacks

- Continuous integration rigs using accelerated test workloads

- Multi-user systems that share the same driver path

The Fix and Why It Matters

The fix is elegant in the way kernel fixes often are: it changes identifier allocation policy rather than trying to force the hardware to behave faster than it can. By using a cyclic IDR allocator, the driver avoids immediate PASID reuse and buys time for pending events to clear. That design is practical because it is low-risk, easy to backport, and aligned with long-standing kernel patterns for other identifiers.

That approach also reveals something about kernel engineering culture. When a bug involves asynchronous cleanup, the best answer is not always a more aggressive free operation. Sometimes the safest answer is to stop reusing the name too soon. This is the same intuition that underlies delayed reclamation in many parts of the kernel: reduce identity collisions first, then worry about optimization later.
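The difference between the two allocation policies can be sketched in a few lines. This is a simplified userspace model, not the driver's code: the class names, the tiny ID range, and the `churn` helper are assumptions made for illustration, and the kernel's IDR cyclic allocator (`idr_alloc_cyclic()`) differs in its details.

```python
class LowestFreeAllocator:
    """Always hands out the smallest free ID, so a freed ID comes straight back."""

    def __init__(self, first, limit):
        self.first, self.limit = first, limit   # IDs are first .. limit-1
        self.in_use = set()

    def alloc(self):
        for i in range(self.first, self.limit):
            if i not in self.in_use:
                self.in_use.add(i)
                return i
        raise RuntimeError("ID space exhausted")

    def free(self, i):
        self.in_use.discard(i)


class CyclicAllocator(LowestFreeAllocator):
    """Resumes the search after the last ID handed out, wrapping at the limit."""

    def __init__(self, first, limit):
        super().__init__(first, limit)
        self.next = first

    def alloc(self):
        span = self.limit - self.first
        for step in range(span):
            i = self.first + (self.next - self.first + step) % span
            if i not in self.in_use:
                self.in_use.add(i)
                self.next = i + 1 if i + 1 < self.limit else self.first
                return i
        raise RuntimeError("ID space exhausted")


def churn(allocator, rounds):
    """Model rapid process churn: allocate an ID, free it at once, repeat."""
    seen = []
    for _ in range(rounds):
        i = allocator.alloc()
        seen.append(i)
        allocator.free(i)
    return seen


lowest = churn(LowestFreeAllocator(1, 5), 6)
cyclic = churn(CyclicAllocator(1, 5), 6)
print(lowest)  # [1, 1, 1, 1, 1, 1]
print(cyclic)  # [1, 2, 3, 4, 1, 2]
```

With the lowest-free policy, every short-lived process in the churn loop gets the identifier the previous one just released. With the cyclic policy, an identifier only comes back after the allocator has walked the rest of the range, so the reuse distance grows with the size of the ID space.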

Why cyclic allocation is a good fit

A cyclic allocator is a good fit because it preserves simplicity while dramatically lowering the odds of temporal collision. The driver does not need to track a complex quarantine list, and it does not need to infer the exact moment every interrupt has drained. It just needs to avoid handing the same number back immediately.

Why this is not a silver bullet

It is not a silver bullet, because cyclic reuse only reduces risk rather than eliminating the underlying timing relationship. If the system is under extreme load or the driver has another race elsewhere, this patch will not solve all GPU-fault pathologies. Still, it meaningfully narrows the failure window, and that is often the right tradeoff for a stable backport. Kernel security work is frequently about shrinking danger, not erasing it.

Competitive and Ecosystem Implications

This CVE also highlights the broader pressure on Linux GPU drivers to be both fast and predictable. AMDGPU competes not just with proprietary graphics stacks, but with the expectation that upstream kernel code must service desktops, workstations, and cloud workloads with minimal special casing. A small PASID allocator change may seem mundane, yet it signals how much complexity is now concentrated in the open-source GPU path.

For rivals and adjacent ecosystems, the lesson is the same: identifier management is not a boring implementation detail when hardware state is asynchronous. Drivers for other accelerators, especially those used for inference and virtualization, face analogous lifecycle problems even if the names differ. The more GPU and accelerator workloads move into multi-tenant environments, the more these corner cases matter.

Why open-source maintenance matters

Open-source maintenance matters because this kind of bug can be fixed upstream, backported, and audited in public. The stable-tree references and CVE publication trail provide administrators with a paper trail that proprietary stacks sometimes lack. That transparency does not prevent bugs, but it improves the odds that downstream vendors can react quickly and consistently.

- Public review helps validate the root cause

- Stable backports shorten the time to remediation

- Shared code paths make the fix broadly useful

- Downstream distributors can align on one corrective behavior

- Administrators get clearer evidence for patch planning

Strengths and Opportunities

The upside of this fix is that it is small, targeted, and likely low-risk to deploy across supported kernels. Because it changes allocation behavior rather than the underlying GPU protocol, it should be relatively straightforward for vendors to backport and for administrators to verify through routine updates. That makes it a good example of a narrowly scoped security fix with broad reliability benefit.

- Low implementation complexity compared with deeper driver redesigns

- Backport-friendly for stable kernels and vendor trees

- Improves reliability for rapidly recycled GPU contexts

- Reduces interrupt confusion in fault-heavy workloads

- Aligns with existing kernel practices for PID-like identifiers

- Helpful for multi-user and compute environments

- Likely transparent to most end users once patched

Risks and Concerns

The main concern is that the bug sits in a timing-sensitive path, which means reproducers may be inconsistent and operators may underestimate it. Another concern is that a PASID reuse bug can masquerade as generic GPU instability, delaying attribution and patching. That kind of ambiguity is dangerous because it turns a fixable kernel defect into a chronic support headache.

- Hard to reproduce in controlled testing

- Symptoms may look like ordinary GPU crashes

- Potential impact on availability in shared systems

- Patch timing may vary across vendor kernels

- Adjacent races could remain hidden behind the same symptoms

- Users may ignore the update if they do not see an obvious security headline

- Compute-heavy deployments may be more exposed than desktops

Looking Ahead

The immediate next step is straightforward: watch for downstream kernel and distribution updates that carry the backported fix, especially in enterprise and long-term-support builds. Because the issue has already moved through the kernel.org and NVD pipeline, the question is less whether it will be patched than how quickly each vendor aligns its maintenance branches. In practical terms, administrators should treat this as a reliability patch first and a security patch second, even though it is now formally tracked as a CVE.

Longer term, the more interesting question is whether this fix remains the final word. Kernel maintainers have already discussed related PASID-management changes in the AMDGPU mailing lists, which suggests the identifier lifecycle is still an active area of refinement. That is not unusual for a subsystem this large; it is simply evidence that modern GPU drivers are evolving under constant pressure from new workloads, new hardware, and new recovery expectations.

- Monitor vendor advisories for backported kernel updates

- Validate patched kernels in compute and desktop testbeds

- Watch for related PASID or amdgpu allocator follow-up fixes

- Correlate unexplained GPU faults with rapid process churn

- Prioritize rollout on multi-user and accelerator-heavy systems
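For the correlation step in the checklist above, one rough starting point is to count fault messages per PASID in a saved kernel log. The sketch below rests on a loud assumption: that fault messages contain the words "page fault" and a `pasid:<number>` field. The sample lines are hypothetical, and real amdgpu messages may be formatted differently on a given kernel, so the pattern should be checked against actual logs before relying on it.

```python
import re
from collections import Counter

# Assumed message shape: "... page fault ... pasid:<digits> ..."
PASID_FIELD = re.compile(r"page fault.*?pasid:(\d+)")


def faults_per_pasid(lines):
    """Count fault-looking log lines per PASID value."""
    counts = Counter()
    for line in lines:
        match = PASID_FIELD.search(line)
        if match:
            counts[int(match.group(1))] += 1
    return counts


# Hypothetical sample lines, for illustration only.
sample = [
    "amdgpu: [gfxhub] page fault (src_id:0 ring:24 vmid:3 pasid:32770)",
    "amdgpu: [gfxhub] page fault (src_id:0 ring:24 vmid:3 pasid:32770)",
    "amdgpu: [gfxhub] page fault (src_id:0 ring:8 vmid:5 pasid:32771)",
    "usb 1-1: new high-speed USB device number 4",
]

counts = faults_per_pasid(sample)
for pasid, n in counts.most_common():
    print(pasid, n)
```

A PASID that keeps reappearing in fault messages across unrelated, short-lived jobs is a hint worth comparing against process start and exit times, though by itself it does not prove this particular race.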
