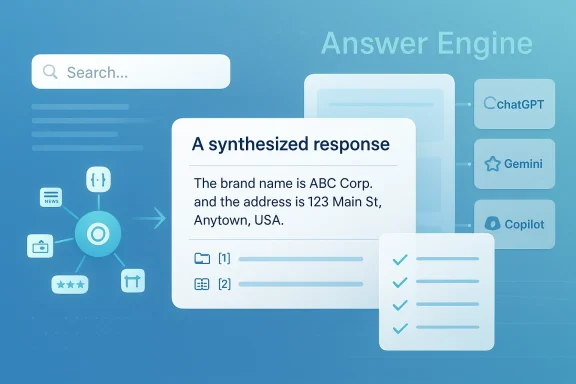

AI Search Engineers’ newly announced Answer Engine Optimization framework lands at a moment when many businesses are realizing that classic SEO is no longer the whole game. The press release argues that brands must now compete not just for clicks, but for inclusion inside AI-generated answers from systems like ChatGPT, Gemini, and Copilot. That claim is directionally plausible, but the broader story is more nuanced: AI answer engines are changing how people discover information, while the practical path to visibility still depends on trusted sources, structured data, and clear entity signals. OpenAI and Microsoft both describe their answer systems as grounded in web sources and citations, and Google continues to emphasize structured data as a way to help its systems understand content.

Source: newswire.com AI Search Engineers Introduces "Answer Engine Optimization" Framework to Help Businesses Get Recommended by ChatGPT and Gemini

Overview

The release from AI Search Engineers is best understood as part of the accelerating shift from link-centric search to answer-centric discovery. Traditional search engines built their value on ranking pages, while modern AI interfaces increasingly synthesize information into a single response. That changes the competitive goal for businesses: the issue is no longer simply “How do we rank?” but “How do we become a source that the model trusts enough to mention?” OpenAI’s ChatGPT search and Microsoft’s Copilot Search both explicitly present cited, source-backed answers, reinforcing the importance of being a recognized and retrievable entity.

The press release frames this as a new discipline called AEO, or Answer Engine Optimization. Its premise is that businesses need to shape the signals AI systems use to identify credible entities, including structured data, third-party mentions, and consistent brand information. That is not a wild idea. Google’s documentation still says structured data helps search systems understand content and show richer results, and Microsoft’s Copilot guidance says grounded responses depend on relevant accessible sources, with citations used to verify answers.

At the same time, the term AEO is not a formal standard from Google, OpenAI, or Microsoft. It is a market-facing label that agencies are using to package a real strategic shift. That matters because buyers should separate the underlying technical reality from the branding around it. In other words, the framework may be useful, but the category name itself is marketing language, not an official product or certification from the platforms mentioned in the release.

What makes the announcement notable is the way it reflects a broader industry scramble. Agencies, consultants, and SEO shops are rebranding around AI visibility because clients are asking where they appear when a chatbot answers a question. That’s a real business need, especially for local services, B2B vendors, and niche experts. Yet it also raises an uncomfortable question: if AI systems already depend on the web’s existing authority graph, then AEO may be less a new invention than a new label for a set of familiar best practices, repackaged for a new interface layer.

Background

The rise of answer engines did not happen overnight. Search engines have been steadily reducing the distance between query and answer for years, first through featured snippets and knowledge panels, and now through generative responses. The major shift is that users increasingly receive a synthesized summary before they ever visit a website. OpenAI’s search product and Microsoft’s Copilot Search both explicitly highlight this experience by showing answers with cited sources, which means the brand visibility game is evolving from page ranking to source selection.

That transition is especially disruptive for businesses that built their digital strategy around old-school keyword optimization. In the classic model, a company could chase a phrase, earn a top ten position, and capture traffic. In the new model, an AI system may cite a smaller set of sources or summarize an answer without sending the user anywhere else. That makes authority engineering, the practice of proving that a business is a trustworthy entity, more important than merely stuffing pages with search terms. Google’s structured data documentation supports the idea that machine-readable markup helps systems understand what a page is about.

The mechanics behind AI visibility

AI answer systems do not “understand” brands in a human sense. They infer relevance and credibility from source material, metadata, internal retrieval systems, and external citations. ChatGPT search says it searches the web when a question benefits from current information and presents inline citations, while Copilot Search describes a source list and linked evidence behind its answers. That means businesses need to think about how their identity looks across the web, not just on their own site.

This is why the press release’s emphasis on structured data and cross-platform consistency is worth taking seriously. If a business name, address, category, founder, and service description are inconsistent across web properties, the model’s confidence can drop. If trusted third-party references are thin, the entity may be harder to surface. The problem is not magic; it is recognition. And recognition is built from repeated, machine-readable, corroborated signals.
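The consistency point can be made concrete with a small check. What follows is a minimal sketch, not a description of how any AI platform evaluates entities: it assumes a business has already collected its profile fields from a few sources, and the `profiles` data, the `normalize` rule, and the `find_inconsistencies` helper are all hypothetical names chosen for illustration.

```python
import re
from collections import Counter


def normalize(value):
    """Lowercase, drop punctuation, and collapse whitespace so that
    trivial formatting differences are not reported as mismatches."""
    cleaned = re.sub(r"[^\w\s]", "", value.lower())
    return re.sub(r"\s+", " ", cleaned).strip()


def find_inconsistencies(profiles):
    """For each field, return the sources whose normalized value differs
    from the most common normalized value across all sources."""
    issues = {}
    fields = {field for profile in profiles.values() for field in profile}
    for field in sorted(fields):
        normalized = {
            source: normalize(profile.get(field, ""))
            for source, profile in profiles.items()
        }
        majority, _count = Counter(normalized.values()).most_common(1)[0]
        outliers = {
            source: profiles[source].get(field, "")
            for source, value in normalized.items()
            if value != majority
        }
        if outliers:
            issues[field] = outliers
    return issues


# Hypothetical data: the same business as listed on three web properties.
profiles = {
    "website": {"name": "Acme Plumbing, LLC", "address": "12 Main St., Springfield"},
    "directory": {"name": "Acme Plumbing LLC", "address": "12 Main St, Springfield"},
    "review_site": {"name": "ACME Plumbing & Heating", "address": "12 Main St, Springfield"},
}

print(find_inconsistencies(profiles))
```

In this sample, punctuation and capitalization differences are ignored, so only the review-site name is flagged. A real audit would likely need looser matching (abbreviations, suite numbers, phone formats), which this sketch deliberately leaves out.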

Why agencies are packaging this now

The timing reflects a market opportunity as much as a technical one. Vendors know that executives are nervous about being absent from AI answers, and they are offering a framework that sounds both practical and strategic. That is a normal pattern in digital marketing: once a platform changes the user experience, a new optimization discipline gets named, sold, and standardized. The question is whether the discipline becomes durable or just becomes a temporary consulting buzzword.

Still, there is a strong case that the underlying need is real. Microsoft says Copilot can provide grounded answers and citations when it can find relevant accessible sources, and OpenAI says ChatGPT search uses web sources to enrich responses. In practice, that rewards businesses that invest in clarity, credibility, and accessible content. The release’s message is therefore less a revelation than a redefinition of what visibility means in 2026.

What the AEO Framework Is Claiming

The press release says the framework centers on Authority Engineering, AI Visibility Audits, Structured Data Implementation, Citation Signal Development, and multi-platform optimization. Taken together, those pillars read like an attempt to operationalize entity SEO for an AI-first discovery layer. That is sensible, because visibility in answer engines is unlikely to hinge on a single trick; it will depend on a stack of signals that reinforce one another.

The agency’s internal logic is straightforward: make the business easy to identify, easy to verify, and easy to trust. The more consistent the data, the stronger the chance that a model will treat the business as a legitimate candidate when answering a query. That fits what we know about Copilot Search, ChatGPT search, and Google’s documentation around structured data and source-backed answers.

Core components in plain English

There is no mystery in the individual parts. Authority Engineering means building a strong entity footprint across the web. AI Visibility Audits are essentially gap analyses that look for missing schema, inconsistent profiles, or weak citations. Citation Signal Development tries to increase the odds that reputable third parties mention the brand in credible contexts.

The “multi-platform” part matters because ChatGPT, Gemini, and Copilot are not identical. They may all use sources, but they do not surface information the same way. That means a company may show up in one environment and disappear in another. Uniform optimization is therefore less effective than platform-aware optimization.

What is new, and what is not

What is new is the prominence of generative answers in the user journey. What is not new is the underlying importance of authoritative content, entity consistency, and technical markup. Google has long said structured data helps systems understand content and produce richer search results, so AEO is less a replacement for SEO than a reorientation toward machine-readable trust.

That distinction matters because businesses should not throw away SEO fundamentals. A good page architecture, crawlable content, and consistent brand data still underpin discoverability. AEO may be the newer label, but in practice it sits on top of the same foundations that have always mattered: clarity, credibility, and relevance.
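For readers who have not seen the technical markup in question, here is a minimal sketch of what it can look like in practice. It builds a JSON-LD block using the schema.org vocabulary (`LocalBusiness`, `PostalAddress`, and `sameAs` are real schema.org terms). The business details are placeholders, and the function names `build_local_business_jsonld` and `to_script_tag` are hypothetical helpers, not part of any framework named in the release.

```python
import json


def build_local_business_jsonld(name, url, description, street, city, same_as):
    """Return a JSON-LD string describing a business with schema.org terms."""
    data = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": name,
        "url": url,
        "description": description,
        "address": {
            "@type": "PostalAddress",
            "streetAddress": street,
            "addressLocality": city,
        },
        # sameAs links the entity to its profiles on other sites.
        "sameAs": list(same_as),
    }
    return json.dumps(data, indent=2)


def to_script_tag(jsonld):
    """Wrap the JSON-LD string in the script element used to embed it in a page."""
    return '<script type="application/ld+json">\n' + jsonld + "\n</script>"


# Placeholder values for illustration only.
markup = build_local_business_jsonld(
    name="Acme Plumbing LLC",
    url="https://www.example.com",
    description="Residential plumbing repair and installation.",
    street="12 Main St",
    city="Springfield",
    same_as=["https://www.example.org/profiles/acme-plumbing"],
)

print(to_script_tag(markup))
```

The value of markup like this depends on it matching what the rest of the web says about the business; markup alone does not guarantee richer results or inclusion in any AI answer.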

Why Businesses Are Worried About AI Visibility

The anxiety behind the press release is easy to understand. When users ask ChatGPT or Copilot a question, they may accept the answer without ever visiting the source pages. That means a business can become influential while still losing direct traffic. The value chain shifts from clicks to mentions, and that is a very different kind of digital presence.

For consumer brands, this might affect product discovery, comparisons, and local recommendations. For enterprise vendors, it could influence procurement research, shortlist generation, and market perception. In both cases, being omitted from an AI answer can become a competitive disadvantage even if the underlying brand is strong. Visibility now means being part of the model’s output, not just the search results page.

The practical pain points

The press release identifies a few recurring problems: inconsistent business information, lack of schema markup, weak presence on trusted sources, and thin topical authority. Those are plausible barriers because AI systems need stable signals to connect a query to a real-world entity. If the web footprint is messy, the system has less confidence in the brand’s identity.

The strongest challenge is that many companies still treat their website as a standalone asset. AI answer engines do not. They inspect the broader ecosystem: directories, review sites, news mentions, knowledge bases, support documentation, and other corroborating material. That makes reputation management and content strategy more tightly coupled than they were in the old blue-link era.

- Inconsistent brand names weaken entity recognition.

- Missing schema can reduce machine readability.

- Sparse third-party mentions can limit trust.

- Thin topical depth can reduce relevance.

- Broken or outdated pages can undermine confidence.
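Of the pain points listed above, missing schema is the easiest to check mechanically. The sketch below uses only Python's standard library to pull JSON-LD blocks out of a page's HTML and report which schema.org types are declared. It is an illustrative audit step under simple assumptions (markup is embedded as JSON-LD, not microdata or RDFa), and `JsonLdExtractor` and `schema_types` are hypothetical names.

```python
import json
from html.parser import HTMLParser


class JsonLdExtractor(HTMLParser):
    """Collect the parsed contents of <script type="application/ld+json"> elements."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                self.blocks.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                # Malformed markup is skipped here; an audit might flag it instead.
                pass


def schema_types(html):
    """Return the @type values declared in the page's JSON-LD blocks."""
    parser = JsonLdExtractor()
    parser.feed(html)
    parser.close()
    types = []
    for block in parser.blocks:
        items = block if isinstance(block, list) else [block]
        for item in items:
            if isinstance(item, dict) and "@type" in item:
                types.append(item["@type"])
    return types


# Hypothetical pages: one with markup, one without.
page_with_schema = (
    '<html><head><script type="application/ld+json">'
    '{"@context": "https://schema.org", "@type": "LocalBusiness", "name": "Acme"}'
    "</script></head><body><p>Welcome</p></body></html>"
)
page_without_schema = "<html><body><p>No markup here</p></body></html>"

print(schema_types(page_with_schema))
print(schema_types(page_without_schema))
```

An empty result for a key page is a candidate gap, not proof of a visibility problem; whether adding markup changes how any answer engine treats the page is a separate question that needs testing.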

Enterprise versus consumer impact

Enterprises may care most about solution selection, vendor comparisons, and knowledge work. If AI assistants increasingly act as research layers, then being excluded from those answers may reduce top-of-funnel influence before a prospect ever reaches a salesperson. Consumer businesses, by contrast, may feel the pain in local discovery and recommendation queries, where being surfaced in a conversational answer can drive immediate action.

The difference is strategic. Enterprises may invest in thought leadership, technical documentation, and analyst coverage. Consumer businesses may focus more on local schema, reviews, and clean business profiles. Either way, the principle is the same: if the model cannot confidently connect the business to the user’s intent, it will recommend someone else.

How AI Platforms Actually Surface Answers

The press release implies that AI platforms “select trusted entities” rather than simply rank pages. That is broadly consistent with how answer engines present information, but the mechanics differ by product. ChatGPT search says it can search the web when a prompt benefits from it and includes citations, while Copilot Search highlights sources and links used to generate the answer.

Google’s documentation remains more conservative in wording, focusing on structured data that helps search understand content and display rich results. That is important because businesses should not assume all AI systems use the same sourcing logic. Some emphasize citations, some emphasize retrieval, and some emphasize structured interpretation. In a sense, AEO is really a bundle of tactics for multiple answer pipelines.

ChatGPT, Gemini, and Copilot are not interchangeable

A practical mistake would be to optimize for a single model as though it represented the entire market. ChatGPT search and Copilot Search explicitly expose sources, but they are still different products with different user experiences and different ecosystem ties. Gemini, meanwhile, sits inside Google’s broader search and knowledge infrastructure, where structured data and content clarity remain central.

That means the smartest strategy is portfolio thinking. Businesses should build a broad authority base that can support multiple systems, rather than chasing one narrow ranking tactic. If one platform changes its retrieval behavior, the business’s visibility should not collapse with it.

The role of citations and grounding

Citations are not just a user-interface flourish; they are a trust signal. Microsoft’s Copilot documentation says grounding improves accuracy and relevance, and ChatGPT’s search experience lets users inspect sources directly. That makes source quality part of the product experience, which in turn makes source quality part of brand strategy.

This is why businesses increasingly need content that can be cited cleanly. Long-winded promotional copy is less useful than well-structured, fact-rich pages with clear entities and corroborating references. The model does not reward fluff; it rewards material that can survive verification. That is the new editorial test.

The Opportunity for Agencies and Brands

There is a real service opportunity here for agencies that can translate AI visibility into measurable work. Many companies know they need to “show up in AI,” but they do not know where to begin. A framework like this gives them a process: audit the entity footprint, normalize the data, improve citations, and track whether the brand appears in answer systems.

For agencies, the appeal is obvious. This kind of work sits at the intersection of SEO, PR, schema engineering, and content strategy, which means it can command premium retainers if it produces business outcomes. It also creates a recurring opportunity, because AI platforms and search behaviors keep changing. The market is not static, and neither is the optimization problem.

Where the work actually happens

Most of the value is likely to come from unglamorous tasks. That includes cleaning business profiles, fixing inconsistent citations, adding schema, improving about pages, strengthening service pages, and building references on credible external sites. In other words, the winning strategy may look a lot like disciplined digital hygiene.

It also includes editorial and reputation work. If an AI system draws confidence from third-party mentions, then brands will need more than self-published content. They will need genuine visibility in the places the models already treat as trustworthy.

Competitive implications

The competitive effect could be significant for smaller firms that move early. A business with clean entity signals and credible citations may punch above its weight in AI answers even if it lacks the raw domain authority of larger rivals. Conversely, big brands can no longer assume that size alone guarantees inclusion if their data is messy or their content is stale.

That creates a more dynamic market than classic SEO. The winners may be the companies that invest in precision rather than just volume. Quality of representation could matter more than sheer content output.

- Better entity hygiene can improve discoverability.

- Strong citations can strengthen trust.

- Structured data can reduce ambiguity.

- Thought leadership can reinforce topical authority.

- Cross-platform consistency can stabilize visibility.

Skepticism and What the Release Leaves Out

The biggest missing piece in the press release is proof. It makes strong claims about how AI systems choose recommendations, but it does not provide transparent methodology, public datasets, or platform-specific evidence. That means readers should treat the framework as a commercial offering, not as established science.

It is also important not to overstate control. Businesses can improve their odds of being surfaced, but they cannot guarantee placement in a given AI answer. These systems are probabilistic, not contractual. A company may do everything “right” and still be omitted for reasons that are opaque to outsiders. That uncertainty is a core feature, not a bug.

The risk of overselling AEO

There is a real danger that vendors will sell AEO as if it were a shortcut to guaranteed chatbot placement. That would be misleading. Even official platform documentation frames source selection and grounding as dynamic processes that depend on query context, accessible sources, and system behavior.

Another risk is that companies may chase AI visibility at the expense of broader brand health. If your own site is weak, your review profile is poor, and your support documentation is outdated, then no amount of optimization jargon will help for long. The best answer-engine strategy is still good business fundamentals made machine-readable.

What buyers should ask before paying for it

Businesses evaluating an AEO provider should ask for more than promises. They should want a baseline audit, a documented methodology, and a clear explanation of what changed after implementation. They should also want to know how results will be measured across platforms, because “appearing in AI” is too vague to be a meaningful KPI.

A smart buyer will also ask whether the work improves the company’s broader digital footprint. If the answer is yes, then AEO is probably a good investment. If the answer is only “it might get you mentioned by one model on one query,” then the business case is much weaker. Usefulness should outlast the buzz.

Strengths and Opportunities

The strongest part of the framework is that it reflects a real market transition rather than a made-up problem. AI answer systems are now mainstream enough that businesses need a strategy for them, and the press release correctly identifies entity consistency, structured data, and citations as foundational elements. That gives the offer practical relevance, even if the branding is ambitious.

The second opportunity is operational. Many businesses genuinely do have weak metadata, inconsistent profiles, and thin authority footprints, which means there is low-hanging fruit. AEO work can produce gains that are visible not only in AI responses, but also in search quality, reputation management, and conversion readiness.

- Structured data improves machine readability.

- Consistent entity data reduces ambiguity.

- Third-party citations strengthen trust.

- Cross-platform optimization widens reach.

- AI visibility audits can uncover fast wins.

- Authority engineering aligns SEO, PR, and content.

- Citation-ready content helps across multiple systems.

Why the timing is favorable

The timing is especially good because buyers already understand the risk of being invisible in AI answers. That creates a receptive market for education, audit services, and implementation work. Companies that move early may build an advantage before the category becomes crowded.

There is also strategic upside in aligning with platform behavior rather than fighting it. If the major AI products continue to reward clarity and source quality, then businesses that invest now may enjoy compounding benefits. Early discipline can become durable visibility.

Risks and Concerns

The main concern is that the term AEO may encourage overconfidence. Businesses might believe that a checklist can force inclusion in AI responses, when in reality platform behavior remains fluid and partly opaque. If vendors sell certainty where none exists, the backlash could be severe.

A second concern is measurement. It is easy to claim that a brand “appears in AI answers,” but harder to isolate causation or measure business impact. Without rigorous baselines and platform-specific testing, optimization can become anecdotal. That makes transparent reporting essential.

- Results may vary by platform and query.

- Visibility may not translate into clicks.

- AI systems can change behavior without warning.

- Attribution can be difficult to prove.

- Agencies may overpromise outcomes.

- Buyers may confuse brand mentions with revenue impact.

- Heavy dependence on one platform can be risky.
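The measurement concern becomes clearer with a worked example. The sketch below assumes someone has manually logged, for a fixed set of test queries, whether the brand was mentioned in each platform's answer before and after the optimization work. The observation format, the period labels `"baseline"` and `"after"`, and the function names are assumptions made for illustration, not a method described in the release.

```python
from collections import defaultdict


def mention_rates(observations):
    """Compute the share of logged answers that mentioned the brand,
    grouped by (platform, period).

    observations: iterable of (platform, period, mentioned) tuples.
    """
    counts = defaultdict(lambda: [0, 0])  # (platform, period) -> [mentions, total]
    for platform, period, mentioned in observations:
        bucket = counts[(platform, period)]
        bucket[0] += int(mentioned)
        bucket[1] += 1
    return {key: mentions / total for key, (mentions, total) in counts.items()}


def rate_changes(observations):
    """Return after-minus-baseline mention rate per platform, for platforms
    that have observations in both periods."""
    rates = mention_rates(observations)
    changes = {}
    for platform in sorted({platform for platform, _period in rates}):
        before = rates.get((platform, "baseline"))
        after = rates.get((platform, "after"))
        if before is not None and after is not None:
            changes[platform] = after - before
    return changes


# Hypothetical log: four test queries per platform per period.
observations = [
    ("chatgpt", "baseline", True), ("chatgpt", "baseline", False),
    ("chatgpt", "baseline", False), ("chatgpt", "baseline", False),
    ("chatgpt", "after", True), ("chatgpt", "after", True),
    ("chatgpt", "after", False), ("chatgpt", "after", False),
    ("copilot", "baseline", True), ("copilot", "baseline", True),
    ("copilot", "baseline", False), ("copilot", "baseline", False),
    ("copilot", "after", True), ("copilot", "after", False),
    ("copilot", "after", True), ("copilot", "after", False),
]

print(rate_changes(observations))
```

A change in mention rate computed this way is descriptive only. With a handful of queries and systems whose output varies from run to run, it cannot isolate causation, which is exactly why baselines, repeated sampling, and per-platform reporting matter.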

The enterprise risk

For enterprises, the risk is organizational confusion. Different teams may optimize different assets without a unified entity strategy, which can make the overall footprint inconsistent. In large companies, AI visibility work should be tied to brand governance, content governance, and technical SEO, not treated as a side project.

There is also a reputational dimension. If a company tries to game AI systems with shallow or manipulative tactics, it may end up harming the very trust signals it needs. The safest approach is still the least flashy one: accurate, durable, well-cited information. That is not glamorous, but it is resilient.

Looking Ahead

The bigger story is not this one company’s launch, but the normalization of answer-engine competition. As more users ask questions in natural language, the brands that win will be the ones that can be confidently described by machines. That favors organizations with clean data, strong editorial discipline, and external validation.

Over the next year, expect more agencies to package similar services under different names: AEO, GEO, AI visibility, entity optimization, or answer optimization. The terminology may vary, but the work underneath will likely converge around the same fundamentals. Businesses should be careful not to chase labels and instead focus on whether the service improves discoverability in real systems.

What to monitor

- Whether ChatGPT, Gemini, and Copilot continue expanding cited answers.

- How much structured data influences visibility in practice.

- Whether AI answer systems favor brands with strong third-party coverage.

- How agencies prove ROI for AI visibility work.

- Whether a common AEO methodology emerges across the market.

In the end, AI Search Engineers is selling a message that many marketers already feel in their bones: the web is no longer just a place where people search, it is a place where machines answer. The businesses that thrive in that world will not be the loudest ones, but the clearest ones. And clarity, in the age of AI answers, is becoming a competitive asset all its own.
