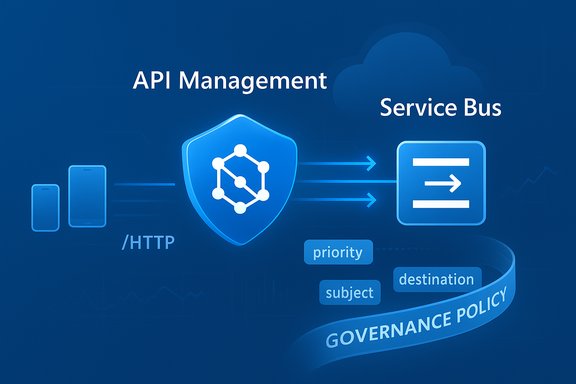

Microsoft’s API Management (APIM) now includes a built‑in policy that can publish messages directly to Azure Service Bus, turning API calls into first‑class events without the glue code or adapter services that teams have used until now.

Source: infoq.com Azure APIM Simplifies Event-Driven Architecture with Native Service Bus Policy

Background

Azure API Management is a gateway and policy engine that sits between clients and backend services to provide security, governance, transformation, and observability for APIs. Azure Service Bus is Microsoft’s fully managed enterprise messaging service designed for decoupling, async processing, and publish/subscribe patterns. Historically, when an HTTP client needed to trigger asynchronous backend work on Service Bus, teams inserted a small channel adapter (an Azure Function, Logic App, or other lightweight middleware) to accept the HTTP request, transform or enrich the payload, and publish it to Service Bus.

In late 2025 Microsoft introduced a preview APIM policy named send-service-bus-message that removes that intermediary by letting APIM forward payloads (and optional metadata) directly into Service Bus queues or topics. The feature is available in preview and is accompanied by documentation and samples showing how to configure the policy, how to use managed identities for authentication, and what operational caveats to expect in the preview phase.

This development closes a practical gap for teams that already rely on APIM for security, rate limiting, and auditing and want to use the same control plane to safely expose message publishing capabilities to internal and external clients.

Overview: what the policy does and where it runs

The core capability exposed by APIM’s new policy is simple and powerful: when a request hits an APIM operation, you can execute a policy element that constructs a Service Bus message (payload + properties) and sends it to a named queue or topic in a given Service Bus namespace. Key characteristics:

- The policy is implemented as a declarative APIM policy statement and runs on the APIM gateway (managed gateway/classic gateway scenarios).

- Authentication between APIM and Service Bus is primarily handled by managed identities (system‑assigned or user‑assigned). APIM’s identity is given the Azure role Azure Service Bus Data Sender scoped to the target queue/topic or namespace.

- The policy supports both queues and topics and allows injecting message metadata via message properties.

- The policy is currently designated as preview and the official docs list usage notes and preview limitations (for example: the feature focuses on sending messages only; receive/consume is outside APIM’s remit).

- The policy can be scoped at global, product, API, or operation level in APIM, letting you choose granular or broad message publishing behavior.

Policy syntax (example)

The policy is an XML fragment placed into APIM’s policy configuration. A typical inbound policy that sends the request body to a queue and returns immediately might look like:

Code:

```xml
<policies>
    <inbound>
        <!-- Publish the request body to the "orders" queue with some request metadata -->
        <send-service-bus-message queue-name="orders" namespace="my-sb-namespace.servicebus.windows.net">
            <message-properties>
                <message-property name="ApiName">@(context.Api?.Name)</message-property>
                <message-property name="Operation">@(context.Operation?.Name)</message-property>
                <message-property name="CallerIp">@(context.Request.IpAddress)</message-property>
                <message-property name="TimestampUtc">@(DateTime.UtcNow.ToString("o"))</message-property>
            </message-properties>
            <payload>@(context.Request.Body.As<string>(preserveContent: true))</payload>
        </send-service-bus-message>
        <!-- Respond to the caller immediately instead of forwarding to a backend -->
        <return-response>
            <set-status code="201" reason="Message Created" />
        </return-response>
    </inbound>
    <backend />
    <outbound />
    <on-error>
        <base />
    </on-error>
</policies>
```

This example sends a message and, in the same policy, controls the HTTP response returned to the caller, which is a common “fire-and-forget” pattern.

Why this matters: immediate benefits

This native integration between APIM and Service Bus produces clear operational and architectural benefits:

- Less glue code: teams no longer need a dedicated adapter (Function/Logic App) just to forward messages from HTTP to Service Bus. That reduces maintenance load, runtime costs, and potential attack surface.

- Centralized governance: existing APIM policies (authentication, quotas, throttling, transformation, validation) can now be applied to requests that are routed into event-driven systems, ensuring the same governance model across sync and async backends.

- Simpler partner/third‑party integration: external systems that speak HTTP can publish to Service Bus using APIM as a secure front door — with the advantage of APIM observability (logs, tracing, analytics) and policy enforcement.

- Security posture improvement: by leveraging managed identities and RBAC to grant APIM the limited Service Bus Data Sender permission, credentials and secrets are avoided.

- Operational observability: APIM provides API-level logs and traces for each message published; Service Bus provides messaging metrics — together these enable end-to-end monitoring.

Technical details and constraints

Understanding the policy’s capabilities requires attention to several technical details and platform limits.

Authentication and roles

- APIM uses a managed identity (system or user‑assigned) to authenticate to Service Bus. The identity must be assigned the Azure Service Bus Data Sender role on the queue/topic or namespace.

- Choose user‑assigned identities when you need to share the same identity between resources or to avoid changing the system identity if service instances are re-created.

Policy attributes and elements

- Attributes: queue-name, topic-name (one of these must be provided), namespace (FQDN of the Service Bus namespace), client-id (for a user-assigned identity).

- Elements: <message-properties> to attach custom properties (useful for routing and metadata), and <payload>, which takes a string expression, typically the request body.
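
As a rough illustration of the attributes and elements listed above, the fragment below publishes to a topic using a user-assigned identity. The topic name order-events, the property name EventType, and the named value {{sb-sender-client-id}} are hypothetical placeholders; the namespace is reused from the earlier example. Treat it as a sketch to check against the preview documentation, not a definitive configuration.

```xml
<!-- Sketch only: topic name, property name, and named value are illustrative assumptions -->
<send-service-bus-message topic-name="order-events"
                          namespace="my-sb-namespace.servicebus.windows.net"
                          client-id="{{sb-sender-client-id}}">
    <message-properties>
        <!-- Custom property that subscription filters could match on -->
        <message-property name="EventType">OrderCreated</message-property>
    </message-properties>
    <payload>@(context.Request.Body.As<string>(preserveContent: true))</payload>
</send-service-bus-message>
```

Leaving out client-id is expected to fall back to the system-assigned identity, but that behavior should be confirmed against the current documentation.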

Message size and modes

- Service Bus message size depends on tier and protocol:

- Standard: max ~256 KB per message (including headers).

- Premium: larger messages supported — AMQP allows up to 100 MB (with caveats), while HTTP/SBMP remain constrained to about 1 MB. Large message support typically requires the Premium tier and AMQP.

- When using the APIM policy you must be mindful of these limits; if a client submits a body larger than what Service Bus supports under the selected protocol and tier, the send operation will be rejected.
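
One way to fail fast on oversize bodies is to check the request size in the gateway before attempting the send. The sketch below assumes a Standard tier target and uses a 200 KB (204800 byte) threshold, an arbitrary safety margin chosen here to leave room for headers and properties under the ~256 KB limit; the 413 response is likewise an illustrative choice.

```xml
<inbound>
    <base />
    <choose>
        <!-- 204800 bytes (200 KB) is an assumed margin below the Standard tier's ~256 KB limit -->
        <when condition="@((context.Request.Body?.As<byte[]>(preserveContent: true)?.Length ?? 0) > 204800)">
            <return-response>
                <set-status code="413" reason="Payload Too Large" />
            </return-response>
        </when>
    </choose>
    <!-- send-service-bus-message would follow here for requests that pass the check -->
</inbound>
```

Reading the body with preserveContent: true keeps it available for the later payload expression.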

Supported scopes and operational placement

- The policy can be used in inbound, outbound, and on‑error sections and applied at global, product, API, or operation scope.

- The public documentation indicates that the feature is in preview; product behavior, availability across gateways and workspaces, and supported SKU combinations may change as the feature evolves.

Preview caveats

- Preview features can change and may be limited in regions or not supported in certain APIM workspace types. Production-grade SLAs may not apply during preview.

- The current preview documentation notes the feature focuses on sending messages; APIM is not a message consumer. If your use case requires request/response via Service Bus, you will still need a backend consumer.

SLA, reliability and composite availability

Two points often raised when combining services are SLA and compounded availability.

- Service Bus SLA (queues/topics) is typically in the neighborhood of 99.9% for supported tiers.

- API Management SLA varies by tier and deployment topology; modern SLA summaries indicate single‑region deployments commonly target 99.95%, with multi‑region Premium deployments capable of 99.99%.

Flag: exact SLA values for each service and tier change over time and can differ across regions and SKUs; verify the current SLA for your chosen tiers before making production commitments.
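
To make the compounding concrete: if both services sit in series on the request path and are assumed to fail independently, the composite availability is roughly the product of the individual figures. Using the illustrative numbers quoted above (not verified SLA commitments):

```latex
A_{\text{composite}} = A_{\text{APIM}} \times A_{\text{Service Bus}}
                     = 0.9995 \times 0.999
                     \approx 0.9985 \quad (99.85\%)
```

That works out to roughly 13 hours of potential downtime per year (0.0015 × 8760 h ≈ 13.1 h), compared with about 4.4 hours for 99.95% on its own.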

Use cases and architectural patterns

This policy unlocks several real-world patterns:

- Event publication at the edge: API calls that represent domain events (order created, device telemetry, user action) can be forwarded directly into the enterprise event bus for asynchronous processing.

- Partner onboarding and B2B: offer REST endpoints to external partners that securely publish events to Service Bus, while APIM enforces quotas, OAuth/JWT validation, and IP filtering.

- Fan‑out via topics: APIM can publish to a topic; multiple subscriptions process the event concurrently for billing, fulfillment, analytics, or third‑party notifications.

- Fire‑and‑forget front‑ends: user‑facing paths can immediately return success while backend systems process messages for long‑running tasks.

- Migration simplification: legacy systems that only speak HTTP can be modernized by using APIM + Service Bus as a bridge to event-driven microservices, minimizing code changes.

Patterns to avoid or treat carefully

- Using APIM as a synchronous adapter for large message transfer: the policy is designed to send messages; do not rely on APIM to broker large transfers or to guarantee large message delivery semantics — prefer direct Service Bus clients on Premium tier for large AMQP messages.

- Overloading message payloads with sensitive data: while messages are encrypted at rest and in transit under platform defaults, sensitive fields should be handled via tokenization/encryption strategies if regulatory requirements demand.

Implementation checklist: step‑by‑step

- Pre‑create the target Service Bus queue or topic in the correct tier (Standard/Premium) and choose partitioning or max message size as required.

- Enable a managed identity on the APIM instance (system‑assigned or user‑assigned).

- Grant the identity the Azure Service Bus Data Sender role scoped to the target queue/topic or namespace.

- Add the <send-service-bus-message> policy to the APIM API operation in the inbound or outbound policy section.

- Define message properties to carry routing and observability metadata (api name, operation, source IP, timestamp).

- Decide whether to forward the original request to a backend service or to return an immediate response (use <return-response> where appropriate).

- Implement error handling in <on-error> to handle message send failures (retries, dead-letter fallback, alternative storage).

- Configure APIM diagnostics and Service Bus metrics/alerts in Azure Monitor for end-to-end visibility.
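
The error-handling step in the checklist could be sketched as follows. This assumes a failed send surfaces in the on-error section like other policy failures, and that context.LastError.Source reports the policy name send-service-bus-message; both are assumptions to confirm with request tracing. The 503 status, the 30-second Retry-After value, and the response body shape are illustrative choices.

```xml
<on-error>
    <base />
    <choose>
        <!-- Assumption: LastError.Source carries the name of the failing policy -->
        <when condition="@(context.LastError != null && context.LastError.Source == "send-service-bus-message")">
            <return-response>
                <set-status code="503" reason="Service Unavailable" />
                <set-header name="Retry-After" exists-action="override">
                    <value>30</value>
                </set-header>
                <set-body>@{
                    // Give the caller an id they can quote when reporting the failure
                    return new JObject(
                        new JProperty("error", "Message could not be queued"),
                        new JProperty("requestId", context.RequestId.ToString())
                    ).ToString();
                }</set-body>
            </return-response>
        </when>
    </choose>
</on-error>
```

Telling the client to retry keeps the gateway stateless; persisting the payload elsewhere for later replay would need an additional policy or a backend.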

Observability, monitoring, and debugging

Observability is key for any asynchronous system. Recommended practices:

- Enable APIM diagnostics to capture request traces, policy evaluation logs, and correlation identifiers.

- Propagate a unique correlation id from the HTTP request into the Service Bus message properties so consumers can trace processing across systems.

- Monitor Service Bus metrics (incoming requests, successful sends, throttling, dead‑lettered messages) and set alerts for error spikes.

- Use APIM analytics to track call volume, throttled requests, and policy execution latency — this is especially important because APIM will add some latency to the request path during message serialization and sending.

- Test error scenarios (Service Bus throttling, network errors, auth issues) and verify APIM’s retry and on‑error behavior.
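
Correlation id propagation might look like the sketch below. It reuses the queue and namespace from the earlier example and assumes callers may send an x-correlation-id header; when they do not, the APIM request id is used instead. The variable name correlationId, the message property name correlation-id, and the 202 response are illustrative. The value is attached as a custom message property, which is not necessarily the same thing as the broker-level correlation id.

```xml
<inbound>
    <base />
    <!-- Use the caller's header if present, otherwise fall back to the APIM request id -->
    <set-variable name="correlationId"
                  value="@(context.Request.Headers.GetValueOrDefault("x-correlation-id", context.RequestId.ToString()))" />
    <send-service-bus-message queue-name="orders" namespace="my-sb-namespace.servicebus.windows.net">
        <message-properties>
            <message-property name="correlation-id">@((string)context.Variables["correlationId"])</message-property>
        </message-properties>
        <payload>@(context.Request.Body.As<string>(preserveContent: true))</payload>
    </send-service-bus-message>
    <return-response>
        <set-status code="202" reason="Accepted" />
        <!-- Echo the id so the caller can quote it in support requests -->
        <set-header name="x-correlation-id" exists-action="override">
            <value>@((string)context.Variables["correlationId"])</value>
        </set-header>
    </return-response>
</inbound>
```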

Security considerations

Security controls are central to this pattern:

- Prefer managed identities and RBAC over secrets. Grant the minimal permission set (Service Bus Data Sender) with the narrowest scope possible.

- Protect the APIM front door with OAuth/JWT, client certificates, mutual TLS (where required), and IP restrictions to prevent abuse of message publishing.

- If you require private networking, consider APIM private endpoints, virtual network injection (subject to tier constraints), and Service Bus private endpoints to limit data plane exposure to Microsoft backbone or your VNet.

- Implement message property validation and size checks in APIM policies to avoid accidental message floods or oversize payloads.

- Ensure that compliance controls for data at rest (Service Bus encryption) and data in transit (TLS/AMQP) match your regulatory requirements; consider customer‑managed keys if needed.
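
A minimal sketch of front-door protection placed ahead of the send, using the existing validate-jwt and rate-limit-by-key policies. The named value {{tenant-id}}, the audience api://orders-api, and the limit of 100 calls per 60 seconds are hypothetical; rate-limit-by-key is also not available on every APIM tier, so check it against your SKU.

```xml
<inbound>
    <base />
    <!-- Reject callers without a valid token before anything is published -->
    <validate-jwt header-name="Authorization"
                  failed-validation-httpcode="401"
                  failed-validation-error-message="Unauthorized">
        <openid-config url="https://login.microsoftonline.com/{{tenant-id}}/v2.0/.well-known/openid-configuration" />
        <audiences>
            <audience>api://orders-api</audience>
        </audiences>
    </validate-jwt>
    <!-- Cap each subscription (or caller IP as a fallback) at 100 calls per 60 seconds -->
    <rate-limit-by-key calls="100" renewal-period="60"
                       counter-key="@(context.Subscription?.Id ?? context.Request.IpAddress)" />
    <!-- send-service-bus-message would follow here -->
</inbound>
```

Ordering matters: validation and throttling run first so that rejected requests never reach Service Bus.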

Operational risks and limitations

- Preview status: the policy is marked preview — behavior, availability, and capabilities may change. Relying on preview functionality for critical production flows requires careful risk assessment and fallback plans.

- Protocol and size constraints: HTTP and SBMP protocol limits and Service Bus tier constraints mean large payload scenarios may require Premium AMQP or alternative designs.

- Compounded availability: because two independent platform services are in the critical path, plan for degraded modes (local persistent buffer, alternate ingestion path) if either APIM or Service Bus experiences an outage.

- Error semantics: sending a message is best‑effort from APIM’s perspective. If a message send fails, your policy must decide whether to block the client, retry, or persist the payload elsewhere for later reprocessing.

- Cost considerations: APIM execution of policies and Service Bus operations are billable. High‑volume scenarios could shift costs from simple adapters to the gateway layer; model costs before migrating.

Best practices and hardening tips

- Use idempotency: include deduplication identifiers in message properties and configure consumers to be idempotent.

- Keep messages small: even if Premium allows large messages, keep payloads compact (store blobs separately and pass references when necessary).

- Use topics for broadcast: choose topics + subscriptions when multiple independent consumers must process events.

- Leverage APIM rate limits and quotas to protect Service Bus from abuse or sudden spikes from partners or mobile clients.

- Add correlation headers: propagate an x-correlation-id and capture it in Service Bus message properties and downstream logs.

- Test failover: simulate Service Bus throttling and APIM failures to verify retry/backoff and dead-letter handling behavior.

- Audit RBAC changes: manage and periodically review the identities with Service Bus Data Sender assigned; use least privilege.

Practical example scenarios

- Order intake API: an e‑commerce checkout API accepts a request and publishes an order created event to a Service Bus topic. Downstream subscriptions handle inventory reservation, billing, and email notifications independently.

- Partner ingestion: a partner POSTs telemetry to an APIM‑exposed endpoint that validates tokens, enforces quotas, and publishes messages to Service Bus for downstream analytics pipelines.

- Telemetry gateway: edge devices that can only do HTTP publish telemetry to APIM; APIM forwards messages to Service Bus, which buffers and feeds stream processors or batch consumers.

Final assessment: strengths vs. risks

Strengths

- Simplicity: removes routine integration code and reduces operational surface area.

- Governance: reuses APIM’s rich policy model for security, quotas, and transformation.

- Security: managed identities and RBAC minimize secret sprawl and improve safety.

- Speed to market: teams can expose REST endpoints that directly publish events to the enterprise bus without deploying adapter services.

Risks

- Preview maturity: feature behavior and supportability can change while in preview; do not assume GA SLAs or feature parity across regions/tiers.

- Availability coupling: combining APIM and Service Bus introduces compound availability that must be mitigated via architecture and retry/fallback patterns.

- Operational cost and throttling: high message volumes routed through APIM may have throughput and cost implications; sizing and tier choices (APIM SKU and Service Bus tier) matter.

- Message size/protocol constraints: large message scenarios may still require direct AMQP clients or Premium tier configuration.

Conclusion

The new APIM built-in Service Bus publishing policy is a pragmatic, high-impact addition for teams building event-driven systems on Azure. It streamlines an often-boring integration task, forwarding HTTP payloads into a message bus, and brings the power of APIM governance, authentication, and observability directly into asynchronous workflows. For many organizations that have already standardized on APIM, this reduces operational complexity and accelerates partner and internal integrations.

That said, it is a preview feature and should be adopted with thoughtful engineering: validate message size and throughput constraints, plan for error and retry modes, and consider SLA and availability implications when this policy becomes part of critical paths. When used with standard messaging best practices (idempotency, correlation ids, monitoring, and minimally privileged identities), the policy promises to make API-to-event integrations simpler, safer, and easier to govern.
