Chinese researchers have released a new AI-driven imaging pipeline, ASTERIS, that the team says pushes the detection limits of astronomical imaging by roughly one magnitude and surfaces hundreds of candidate galaxies from the Universe’s earliest epoch — a result the authors report as published in Science and which, if reproduced, would materially change how survey teams turn raw telescope frames into scientific catalogs. (arxiv.org)

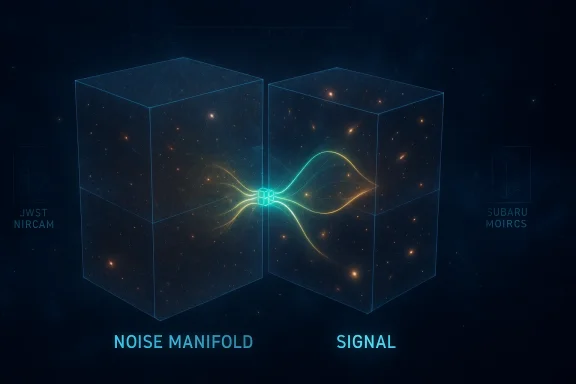

Source: The News International Chinese scientists unveil advanced AI model to support deep-space exploration

Background

Astronomical imaging at the faintest limits is fundamentally a signal‑vs‑noise problem: photons from ultra‑distant galaxies arrive at Earth as tiny flux increments buried beneath time‑varying sky background, detector systematics, thermal emission, and minute optical aberrations. Traditional pipelines rely heavily on stacking, background modeling, and matched‑filter detection; these methods assume a degree of stationarity in the noise and often require long integrated exposure times to push sensitivities deeper. ASTERIS (Astronomical Spatiotemporal Enhancement and Reconstruction for Image Synthesis) reframes the data as a three‑dimensional spatiotemporal volume and applies a self‑supervised transformer‑based denoising strategy to learn and remove structured, non‑stationary noise patterns directly from the observations. (arxiv.org)

The result reported by the Tsinghua University team is striking on paper: a claimed improvement of approximately 1.0 magnitude at high completeness and purity, validated on both synthetic injection tests and real observational datasets (notably JWST and Subaru), and the identification of more than 160 candidate high‑redshift galaxies from “Cosmic Dawn” fields, about three times the yield obtained by earlier methods in those same data. The authors and affiliated press materials state the work appears as a long article in Science and that code and pre‑trained models have been released to the community. (arxiv.org)

What ASTERIS claims to deliver

Core capabilities (in plain terms)

- Self‑supervised spatiotemporal denoising: the model learns noise structure across spatial pixels and across multiple exposures without requiring hand‑labeled “clean” images.

- Photometric adaptive screening: a mechanism to separate tiny, fluctuating noise artifacts from persistent astrophysical flux, designed to preserve real low‑SNR structure.

- Cross‑instrument generalization: the team reports successful application to space‑based JWST NIRCam data and ground‑based Subaru MOIRCS data, spanning ~500 nm to ~5 μm. (arxiv.org)
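The self‑supervised idea in the first bullet can be illustrated with a deliberately simple stand‑in. The sketch below is not ASTERIS’s transformer: it swaps in a fixed leave‑one‑exposure‑out predictor (the mean of the other exposures, optionally Gaussian‑blurred) and uses a self‑supervised loss, in the spirit of blind‑spot or Noise2Self‑style training, to choose the blur width without any clean image. The toy data, function names (`self_supervised_loss`, `denoise`), and blur widths are hypothetical, and noise is assumed independent between exposures.

```python
import numpy as np

def gaussian_blur(img, sigma):
    """Gaussian blur with periodic boundaries via FFT; sigma in pixels (0 = no blur)."""
    if sigma == 0:
        return img
    fy = np.fft.fftfreq(img.shape[0])[:, None]
    fx = np.fft.fftfreq(img.shape[1])[None, :]
    transfer = np.exp(-2.0 * np.pi**2 * sigma**2 * (fy**2 + fx**2))
    return np.real(np.fft.ifft2(np.fft.fft2(img) * transfer))

def self_supervised_loss(volume, sigma):
    """Predict each exposure only from the OTHER exposures and score the prediction
    against the held-out noisy exposure; no clean image is involved."""
    losses = []
    for t in range(volume.shape[0]):
        context = np.delete(volume, t, axis=0).mean(axis=0)
        losses.append(np.mean((gaussian_blur(context, sigma) - volume[t]) ** 2))
    return float(np.mean(losses))

def denoise(volume, sigmas=(0.0, 0.5, 1.0, 2.0, 4.0)):
    """Choose the blur width that minimises the self-supervised loss, then apply it."""
    best = min(sigmas, key=lambda s: self_supervised_loss(volume, s))
    return gaussian_blur(volume.mean(axis=0), best), best

# Toy (t, y, x) volume: one faint static source plus independent unit noise per exposure.
rng = np.random.default_rng(0)
n_exp, size = 8, 64
yy, xx = np.mgrid[:size, :size]
truth = 0.5 * np.exp(-((xx - 32) ** 2 + (yy - 32) ** 2) / (2 * 3.0**2))
volume = truth[None] + rng.normal(0.0, 1.0, size=(n_exp, size, size))

denoised, best_sigma = denoise(volume)
mse_stack = np.mean((volume.mean(axis=0) - truth) ** 2)
mse_denoised = np.mean((denoised - truth) ** 2)
print(f"chosen blur: {best_sigma} px, MSE stack: {mse_stack:.4f}, MSE denoised: {mse_denoised:.4f}")
```

Because each prediction never sees the exposure it is scored against, the expected loss equals the true error plus a constant noise term, so minimising it requires no ground truth. The chosen blur also softens the source profile slightly, a small‑scale version of the over‑suppression risk that any real screening stage has to guard against.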

Reported headline numbers

- Detection limit improvement: ~1.0 magnitude at 90% completeness and purity in benchmarking. (arxiv.org)

- Faint‑object yield: >160 candidate high‑redshift galaxies (z in the Cosmic Dawn window, ~200–500 Myr post‑Big Bang) detected in re‑analysed deep JWST images — roughly three times previous yields for comparable fields. (arxiv.org)

- Wavelength reach: effective denoising and utility reported from ~500 nm up to 5 μm, extending the method’s effective use from optical wavelengths well into the near‑infrared on those datasets. (arxiv.org)

How ASTERIS works (technical overview)

Spatiotemporal volume modeling

ASTERIS treats a sequence of frames as a 3D tensor (x, y, t) rather than a set of independent 2D images. Many sources of noise are structured: they correlate across neighboring pixels and drift slowly or sporadically between exposures. By building models that can learn these spatiotemporal correlations, ASTERIS aims to remove patterns that would otherwise masquerade as or drown out faint astrophysical signal. The authors implement a transformer‑style architecture adapted for volumetric inputs and train it in a self‑supervised way: the network learns to predict withheld pixels or exposures from their context, thereby learning the noise manifold without needing simulated "ground truth" skies. (arxiv.org)

Photometric adaptive screening

A central engineering risk for any denoising algorithm is over‑aggressive suppression: you can remove noise but also wash out real faint structure or bias fluxes. ASTERIS introduces a photometric screening stage that tests whether a candidate low‑SNR structure behaves photometrically in a way consistent with astronomical sources across exposures and filters. This mechanism is described as adaptive (thresholds and priors depend on local background statistics and detector telemetry) and is designed to reduce false positives while preserving real objects. The team presents injection‑and‑recovery tests to argue for preserved point‑spread functions (PSFs) and photometric fidelity. (arxiv.org)

Self‑supervision & domain transfer

The model’s self‑supervised training avoids the need for large simulated training sets that may bias networks toward unrealistic morphologies. The authors additionally report pre‑trained weights for JWST NIRCam and Subaru MOIRCS; these artifacts are being published for community use, lowering the barrier for other teams to trial the software on their data.

Independent validation and the publication trail

Multiple news outlets and a Tsinghua University release report the Science article and summarize the key results. The arXiv author preprint for “Deeper detection limits in astronomical imaging using self‑supervised spatiotemporal denoising” is available and lists the ASTERIS architecture, benchmarks, and observational tests; the arXiv entry itself carries a related DOI pointing to Science. The university’s announcement echoes the paper’s core claims and reproduces example deep‑field images and detection maps. These parallel sources are consistent with one another in reporting the magnitude improvement, the JWST/Subaru validation, and the reported catalogue increase. (arxiv.org)

That said, press summaries sometimes conflate the arXiv/author version and the final journal formatting; readers should inspect the accepted manuscript (or the publisher’s version) for exact methodological details, parameter settings, and supplementary validation materials. The arXiv posting explicitly states it is the author’s version and references the Science DOI. (arxiv.org)

Why this matters: strengths and immediate impacts

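- Telescope efficiency: A 1.0 magnitude gain corresponds to detecting sources ≈2.5× fainter (10^0.4 ≈ 2.51) for the same exposure time. Put the other way, if observations are background‑limited, so that depth improves only as the square root of integration time, and if the gain carries over to shorter exposures, the previous depth could be reached in roughly one‑sixth of the original observing time. For time‑constrained facilities such as JWST and future flagship observatories, the implications for survey planning and cost are profound. (arxiv.org)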

- Access to Cosmic Dawn: Increasing completeness at the faint end tightens constraints on early galaxy luminosity functions, reionization history, and the timeline of early star formation. These are central unknowns in cosmology, and current samples are small enough that Poisson (counting) uncertainties dominate. The reported tripling of candidate z ≳ 9 objects in tested fields would materially improve statistical power for these questions if confirmed spectroscopically. (arxiv.org)

- Cross‑platform utility: Demonstrated application to both JWST and Subaru suggests the modeling choices capture general instrumental noise modes, not just quirks of a single detector. If the pre‑trained models and training recipes generalize, smaller teams could re‑process archived datasets to mine previously hidden signals.

- Preserved photometry and morphology: The authors emphasize preservation of PSF shape and measured fluxes in injection‑recovery tests. If robust, this means downstream science (e.g., SED fitting, size measurements, lensing) remains trustworthy after denoising. The paper presents quantitative tests claiming minimal bias for typical faint sources. (arxiv.org)
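The arithmetic behind the first two bullets is short enough to check directly. The helper names below are illustrative; the time factor assumes background‑limited imaging, where the limiting flux falls as one over the square root of exposure time, and the baseline sample size of 50 is a hypothetical number chosen only to show the scaling.

```python
import math

def flux_ratio(delta_mag: float) -> float:
    """Flux ratio for a magnitude difference (Pogson: m1 - m2 = -2.5 log10(F1 / F2))."""
    return 10 ** (0.4 * delta_mag)

def equivalent_time_factor(delta_mag: float) -> float:
    """How many times longer a background-limited exposure must be to go delta_mag deeper.
    Assumes S/N grows as sqrt(t), so the limiting flux falls as 1/sqrt(t)."""
    return flux_ratio(delta_mag) ** 2

def poisson_fractional_error(n: int) -> float:
    """Fractional 1-sigma Poisson (counting) uncertainty for n objects."""
    return 1.0 / math.sqrt(n)

gain = 1.0  # reported detection-limit improvement, in magnitudes
print(f"{flux_ratio(gain):.2f}x fainter")                    # 2.51x fainter
print(f"{equivalent_time_factor(gain):.1f}x exposure time")  # 6.3x exposure time
n = 50  # hypothetical baseline sample size, for illustration only
print(f"{poisson_fractional_error(n) / poisson_fractional_error(3 * n):.2f}x tighter")  # 1.73x tighter
```

Under these assumptions a one‑magnitude gain is worth roughly a factor of six in integration time, while tripling a candidate sample tightens counting statistics by about 1.7×, not 3×.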

Risks, caveats, and open questions

No single method is a panacea. ASTERIS’s advertised gains are compelling, but they come with measurable caveats researchers must treat carefully.

- False positives and confirmation bias: Deep‑learning denoisers tuned to maximize detection completeness can increase the false discovery rate. The paper shows purity metrics in benchmarking, but catalog yields must be followed by independent confirmation: photometric redshift fitting with different pipelines, spectroscopic follow‑up where possible, and cross‑matching against other bands/surveys. Until a subset of the 160+ candidates is spectroscopically confirmed, treat the catalogue as provisional. (arxiv.org)

- Photometric biases in science measurements: Even small multiplicative or additive photometric biases at low SNR propagate through luminosity functions, mass estimates, and cosmic star‑formation history reconstructions. The authors report PSF and photometric fidelity tests, but full cosmological analyses will need careful re‑calibration and error propagation that includes model‑inference uncertainties. (arxiv.org)

- Model hallucination and morphology distortion: Generative and denoising models can sometimes create plausible but nonexistent structure — e.g., spurious low‑surface‑brightness features that correlate with detector artifacts. The team’s injection tests mitigate this risk in controlled scenarios, but heterogeneous real‑world systematics (stray light, moving objects, cosmic rays) require exhaustive stress testing. Independent pipelines must reproduce claimed structures to build confidence. (arxiv.org)

- Reproducibility & transparency: The scientific community must have access to code, pre‑trained weights, configuration files, and the seed/initialization details used for published runs. The authors have deposited pre‑trained models and related artifacts on repositories; however, full reproducibility also needs the raw input frames, calibration reference files, and detailed processing logs. The paper and institutional release indicate artifacts are being shared, but community uptake will depend on how complete those packages are.

- Computational cost: 3D volumetric transformer models are memory‑ and compute‑intensive. For large mosaics or multi‑visit surveys, ASTERIS‑scale processing will demand GPU clusters, specialized I/O strategies, and chunking schemes. Smaller groups and survey pipelines will need guidance and lightweight deployment options to adopt the method at scale. The authors discuss runtime and memory tradeoffs, but operational cost is a practical limitation for broad adoption. (arxiv.org)
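The chunking schemes mentioned in the last bullet commonly follow a halo (overlap‑and‑crop) pattern. A minimal sketch, assuming a hypothetical `fn` that maps a `(t, y, x)` sub‑volume to a 2‑D image and whose spatial footprint is no wider than `halo` pixels; nothing here reflects how ASTERIS itself tiles its inputs, and `stack_and_box3` is only a stand‑in for a model.

```python
import numpy as np

def process_in_tiles(volume, fn, core=256, halo=32):
    """Apply fn tile by tile to a (t, y, x) volume, keeping only each tile's core.

    Each tile is read with `halo` extra pixels of context on every side (clipped at the
    mosaic edge); only the central core of fn's 2-D output is written back, so seams
    vanish as long as fn's spatial footprint is no wider than `halo`."""
    _, ny, nx = volume.shape
    out = np.empty((ny, nx), dtype=np.float64)
    for y0 in range(0, ny, core):
        for x0 in range(0, nx, core):
            y1, x1 = min(y0 + core, ny), min(x0 + core, nx)
            ry0, rx0 = max(y0 - halo, 0), max(x0 - halo, 0)
            ry1, rx1 = min(y1 + halo, ny), min(x1 + halo, nx)
            result = fn(volume[:, ry0:ry1, rx0:rx1])
            out[y0:y1, x0:x1] = result[y0 - ry0 : y1 - ry0, x0 - rx0 : x1 - rx0]
    return out

def stack_and_box3(sub_volume):
    """Example fn: average the exposures, then apply a 3x3 box filter (edge-padded)."""
    img = sub_volume.mean(axis=0)
    padded = np.pad(img, 1, mode="edge")
    ny, nx = img.shape
    return sum(padded[dy : dy + ny, dx : dx + nx] for dy in range(3) for dx in range(3)) / 9.0

rng = np.random.default_rng(1)
volume = rng.normal(size=(4, 300, 200))
tiled = process_in_tiles(volume, stack_and_box3, core=64, halo=2)
full = stack_and_box3(volume)
print(np.allclose(tiled, full))  # True
```

In practice `halo` would need to cover the network’s spatial receptive field and `core` would be set by GPU memory; the values above are arbitrary. This tiles only in (y, x); a long exposure sequence could also be chunked along t, at the cost of giving the model less temporal context.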

Practical recommendations for astronomers, pipeline engineers, and survey teams

If you run a survey or maintain a data reduction pipeline, here’s a practical checklist to evaluate ASTERIS for your workflow:

- Start small: apply ASTERIS to a limited set of calibration fields with well‑characterized sources (stars, known faint galaxies) and run injection‑and‑recovery experiments that match your instrument’s noise and PSF properties.

- Verify photometry: compare measured fluxes and sizes for bright and faint sources before and after denoising; quantify multiplicative biases and additive offsets.

- Cross‑validate detection catalogs: run a secondary detection method on both raw and ASTERIS‑processed frames to produce overlapping candidate lists; flag unique detections for targeted follow‑up.

- Track metadata & provenance: log model version, weights, training date, GPU/CPU specs, input calibration files, and random seeds; reproducibility is essential for publication‑grade science.

- Plan spectroscopic follow‑up: prioritize a subset of ASTERIS‑unique high‑redshift candidates for spectroscopy (or narrow‑band imaging) to establish a ground truth for yield and contamination rates.

- Budget compute: design chunked processing and I/O pipelines; evaluate cloud vs on‑prem GPU clusters and estimate cost per square degree for your survey. (arxiv.org)

Broader implications for astronomy and instrumentation

ASTERIS typifies a broader shift: AI is moving from post‑hoc catalog analysis to front‑line data conditioning. That shift has system‑level consequences.

- Redistribution of telescope value: If algorithms can extract more signal from existing exposures, archival data become more valuable; observatory time allocation strategies may adapt to this new effective gain per exposure. (arxiv.org)

- Pipeline modularity: Teams will begin to treat denoising/enhancement as a modular front‑end that feeds classical measurements (photometry, astrometry, shape measurement) rather than as an end in itself. This requires standardized interfaces, comparators, and validation suites.

- Community standards: The field will need standards for benchmarking denoisers: agreed injection sets, evaluation metrics for completeness/purity vs. magnitude, and a public leaderboard to avoid over‑fitting to a narrow set of fields or instruments. The ASTERIS paper sets a strong precedent by providing injection‑based benchmarks and pre‑trained models; the next step is broader community stress‑testing. (arxiv.org)
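A shared benchmark starts with agreed metric definitions. One common convention, sketched below with hypothetical inputs and function name, bins completeness by injected magnitude and estimates purity from detections on the sign‑flipped input, a false‑positive proxy that assumes noise symmetric about zero and real sources contributing only positive flux. It is one possible definition, not a proposal from the ASTERIS paper, and the numbers are illustrative only.

```python
import numpy as np

def completeness_purity(inj_mag, recovered, det_mag, neg_det_mag, bins):
    """Per-bin completeness and purity as functions of magnitude.

    completeness: fraction of injected sources in each bin that were recovered.
    purity: 1 - (false detections / detections), with false detections estimated from
    a detection run on the sign-flipped input (assumes noise symmetric about zero)."""
    inj_mag = np.asarray(inj_mag, dtype=float)
    recovered = np.asarray(recovered, dtype=bool)
    n_inj, _ = np.histogram(inj_mag, bins)
    n_rec, _ = np.histogram(inj_mag[recovered], bins)
    n_det, _ = np.histogram(np.asarray(det_mag, dtype=float), bins)
    n_false, _ = np.histogram(np.asarray(neg_det_mag, dtype=float), bins)
    with np.errstate(divide="ignore", invalid="ignore"):
        completeness = np.where(n_inj > 0, n_rec / n_inj, np.nan)
        purity = np.where(n_det > 0, 1.0 - np.minimum(n_false, n_det) / n_det, np.nan)
    return completeness, purity

# Illustrative numbers only (not from the paper): three one-magnitude bins.
completeness, purity = completeness_purity(
    inj_mag=[25.0, 25.5, 26.5, 26.8, 27.5, 27.9],
    recovered=[True, True, True, False, False, False],
    det_mag=[25.2, 25.8, 26.1, 26.4, 27.2],
    neg_det_mag=[26.3, 27.1],
    bins=[25.0, 26.0, 27.0, 28.0],
)
print(completeness, purity)
```

Curves like these, per instrument and per field, are what a limit such as "90% completeness and purity" is read from, and common definitions are what would make such limits comparable across denoisers. For a nonlinear denoiser, the sign flip should be applied to the raw frames before processing rather than to the output.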

What to watch next

- Independent replications: expect other groups to run ASTERIS (or comparable methods) on JWST deep fields, HST archival mosaics, and ground‑based surveys. Independent verification — especially spectroscopic confirmation of a representative subset of ASTERIS‑unique detections — will be decisive. (arxiv.org)

- Tooling and packaging: look for official packages, Docker/Conda builds, and lightweight inference modes. Community adoption accelerates when models ship with clear deployment recipes and resource‑sensitive options.

- Integration with upcoming facilities: teams building the Rubin Observatory LSST, Roman Space Telescope, and next‑generation Extremely Large Telescopes will evaluate similar denoising approaches as part of their science pipelines; early engagement could help embed robust validation standards into those projects. (arxiv.org)

Conclusion

ASTERIS represents a meaningful advance in the application of self‑supervised AI to one of astronomy’s oldest problems: teasing faint cosmic light from a sea of noise. The reported gain of about one magnitude and the dramatic increase in candidate early‑universe detections make this an immediate story for survey planners, archive miners, and cosmologists focused on reionization and early galaxy assembly. At the same time, the community must apply rigorous independent validation: injection‑recovery across instrument modes, photometric bias quantification, and, above all, spectroscopic confirmation of new high‑redshift candidates.

The responsible path forward is collaborative: reproduce, stress‑test, and integrate. If ASTERIS’s claims hold under community scrutiny, we will have a powerful, generalizable tool that multiplies the scientific return from existing photon budgets and unlocks fainter chapters of the cosmic story, but careful validation is the price of turning promising algorithmic gains into robust scientific knowledge. (arxiv.org)
