AT&T’s latest push at Mobile World Congress marks a deliberate move to reframe the carrier not just as a connectivity provider, but as a systems integrator for cloud-native AI — embedding last‑mile access into AWS workflows while pairing Azure-powered edge services and Nvidia-accelerated industrial AI to sell a single, telco-managed path from sensors and machines to generative AI insights.

Background

AT&T’s announcements at MWC build on an accelerating industry trend: hyperscalers and network operators are converging at the edges of enterprise IT and physical operations. For years, cloud providers have pushed enterprises to modernize applications and shift heavy workloads into their data centres; now they are asking carriers to make the network behave like a native cloud resource: faster to provision, more predictable in performance, and simpler to manage alongside compute and storage. AT&T’s twin plays (a managed last‑mile service that plugs into AWS provisioning workflows, and a suite of Azure-based edge products for physical locations and factories) try to answer that ask by collapsing operational boundaries between on‑site devices, the network, and the cloud.

What AT&T announced at MWC

AWS Interconnect – last mile: turning access into a cloud-native resource

AT&T unveiled a preview of AWS Interconnect – last mile, a product that embeds AT&T’s fibre and 5G fixed‑wireless access (FWA) directly into AWS environments so enterprises can provision and manage the last‑mile link inside the AWS console. The offering is explicitly engineered to reduce round‑trip latency, simplify ordering and lifecycle management, and increase the resiliency of premises‑to‑cloud paths, all traits hyperscalers view as essential for real-time AI inference, streaming analytics, and agentic workloads. AT&T says qualifying customers will be able to join an early preview in Q2.

Why this matters technically: by letting an enterprise treat the access link as a cloud resource, AWS Interconnect – last mile promises faster time‑to‑deploy (days or minutes versus weeks), programmatic bandwidth selection (1 Gbps–100 Gbps on demand), and integration with cloud orchestration. For AI workloads, where repeated large transfers and chatty telemetry can be bottlenecked by suboptimal last‑mile routes, reducing jitter and hops between on‑prem devices and cloud GPUs can materially improve real‑time performance and cost efficiency. Early messaging also emphasizes metro‑level resiliency engineering, a nod to enterprise SLAs and multi‑path redundancy.
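To put the quoted bandwidth range in perspective, a back-of-the-envelope sketch can estimate how long a bulk transfer takes at different link rates. The 1 Gbps and 100 Gbps endpoints come from the range above; the 10 Gbps midpoint, the 5 TB dataset size, the 80% link-efficiency factor and the function name are illustrative assumptions, not AT&T or AWS figures.

```python
def transfer_time_seconds(size_bytes: float, link_gbps: float,
                          efficiency: float = 0.8) -> float:
    """Estimate the seconds needed to move size_bytes over a link.

    efficiency is an ASSUMED fraction of line rate left for payload after
    protocol overhead and contention; 0.8 is illustrative, not measured.
    """
    if size_bytes < 0:
        raise ValueError("size_bytes must be non-negative")
    if link_gbps <= 0:
        raise ValueError("link_gbps must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    usable_bits_per_second = link_gbps * 1e9 * efficiency
    return size_bytes * 8 / usable_bits_per_second


DATASET_BYTES = 5e12  # hypothetical 5 TB batch of video or telemetry

for gbps in (1, 10, 100):
    hours = transfer_time_seconds(DATASET_BYTES, gbps) / 3600
    print(f"{gbps:>3} Gbps: {hours:.2f} h")
```

Under these assumptions the 1 Gbps tier needs roughly 14 hours for the batch, while the 100 Gbps tier needs under 10 minutes. That gap is why on-demand bandwidth selection matters for workloads that repeatedly move large datasets.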

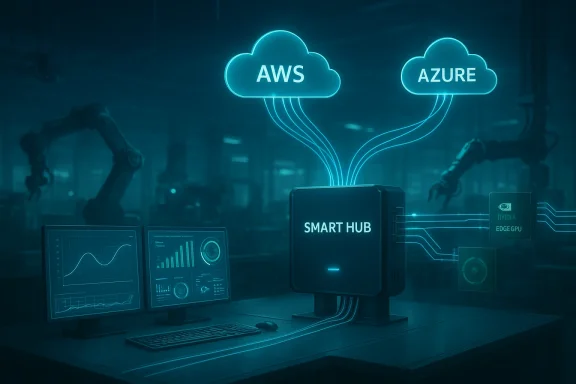

Connected Spaces for Enterprise: Azure-powered, sensor‑to‑insight platform

Running on Microsoft Azure, AT&T’s Connected Spaces for Enterprise is an integrated edge and connectivity platform that centralizes data from sensors, cameras and IoT gear into a managed gateway (a “SmartHub”), then funnels normalized telemetry and processed events into Azure analytics and Azure OpenAI services for near‑real‑time decisions. The initial go‑to‑market focus is retail and hospitality (convenience chains and quick‑service restaurants that want to reduce shrink, speed operations, and improve customer experience), but the architecture is broadly applicable to any distributed physical footprint. AT&T describes the offering as a secure, centrally managed stack that reduces the work of device onboarding, edge compute orchestration, and cloud analytics integration.

Connected AI for Manufacturing: GenAI at the industrial edge

The third pillar is Connected AI for Manufacturing, developed with Nvidia, Microsoft and edge‑AI specialist MicroAI. This package pairs AT&T’s low‑latency communications and edge compute with Nvidia accelerated inference and Azure generative models to deliver use cases such as pre‑failure detection, video analytics, conversational operator interfaces, and knowledge management at the machine level. In pilot tests AT&T reports striking results: up to a 70% reduction in waste on an injection‑molding line, 35% improvement in fulfilment centre efficiency, and 2.5–4 hours of lead time for pre‑failure fault detection. Those pilot claims are presented as proof points for fast time‑to‑value in controlled environments.

Why AT&T’s approach is strategically coherent

1) Packaging connectivity as a managed cloud resource

AT&T is reframing connectivity from a utility you buy and manage separately into an embedded, cloud‑orchestrated capability. For enterprise CIOs and cloud architects juggling hybrid estates, that model promises simpler operations: one API, one console, one provisioning model. That friction reduction is meaningful when projects span tens or thousands of sites and require repeatable, automated provisioning.

2) Hyperscaler partnerships that cover complementary planes

By aligning with both AWS (last‑mile cloud access) and Microsoft (edge analytics, Azure OpenAI), AT&T is deliberately hedging dependency while offering interlocking products. The AWS offering reduces the access friction to AWS regions and services; the Azure-based Connected Spaces and Azure OpenAI integrations provide on‑prem and near‑edge GenAI capabilities. Nvidia’s inclusion for inferencing ensures edge workloads can be accelerated without sending every frame or telemetry stream to the cloud. This tripartite model addresses compute, orchestration, and connectivity simultaneously.

3) Moving the needle from connectivity revenue to higher‑margin services

AT&T’s investor narrative has been consistent: promote fiber and 5G as a springboard to higher‑value managed services and edge solutions. The company reported Business Wireline revenues down 7.5% year‑over‑year, partly offset by a 6.8% increase in fiber and advanced connectivity, a trend AT&T expects to continue as it shifts its revenue mix toward converged, AI‑adjacent offerings. That arithmetic underpins AT&T’s commercial reasoning for bundling network, edge compute, and AI software.

Technical and operational strengths

- Lower latency, fewer hops: Direct last‑mile access into AWS and local edge inferencing with Nvidia reduce end‑to‑end latency — critical for closed‑loop industrial control, video analytics, and conversational operator UIs.

- Simplified lifecycle: Integration into cloud consoles promises faster provisioning and more predictable operations for large distributed deployments.

- Edge + GenAI: Running generative models at the edge for operator assistance and knowledge retrieval minimizes data egress and brings AI inference closer to the point of action.

- Pre‑validated stacks: Pre‑integration with Azure, Nvidia, and MicroAI reduces integration risk for customers who would otherwise assemble these components themselves.

- Commercial flexibility: AT&T’s pitch includes POCs to multi‑plant rollouts, suggesting modular procurement paths rather than one‑size‑fits‑all contracts.

Risks, limits and unanswered questions

No single announcement eliminates the hard engineering, security, and commercial tradeoffs enterprises face. Here are the key concerns IT leaders should weigh.

1) Gated preview and limited ecosystem partners

AWS Interconnect – last mile is launching as a gated preview, and early deployments typically involve a narrow set of network partners and geographies. Historically, AWS’s “Interconnect” pilots have been jointly delivered with network providers such as Lumen, Megaport and others; that means enterprise availability and choice may be constrained in the near term, and procurement teams should account for provider coverage gaps and multi‑vendor timelines.

2) Potential for vendor lock‑in across cloud and telco stacks

The convenience of provisioning last‑mile access inside AWS or relying on AT&T‑managed Azure edge stacks creates a blend of cloud‑telco integration that can increase switching friction. If an enterprise becomes dependent on AT&T’s managed edge gateway and cloud integration patterns, moving to alternative network operators, cloud providers, or edge vendors could become costly. Multicloud strategies will need robust abstraction layers to avoid being boxed into one provider’s “convenience tax.”

3) Security, privacy and governance at the edge

Centralizing camera feeds, POS signals, and sensor telemetry into a managed edge gateway raises questions about encryption at rest and in transit, identity and access management, data retention and deletion, and compliance with regional privacy laws. AT&T’s materials cite enterprise‑grade security, but customers will need full transparency: what keys do they control, where does sensitive data persist, and what auditing/forensics capabilities are provided?

4) Operational complexity and systems integration

The promise of “turnkey” edge‑to‑cloud solutions belies the messy reality of industrial environments: diverse PLCs, brownfield machines, variable network quality inside plants, and bespoke control logic. Realizing pilot results (70% waste reduction, 35% efficiency gain) in controlled environments is encouraging, but replicating those gains across different factories requires deep systems integration, process change management, and sustained cross‑functional programs.

5) Economics and cost transparency

Embedding high‑capacity fiber or 5G FWA into cloud provisioning changes cost profiles. While improved performance can lower indirect costs (reduced scrap, faster throughput), enterprises need predictable pricing for on‑demand bandwidth, edge GPU cycles, and managed application services. Contracts and SLAs must be transparent about bursts, overages, and the cost of geo‑redundancy.

How enterprises should evaluate these offers

When evaluating AT&T’s bundled services, IT and OT leaders should treat the proposition as both a network procurement and a strategic application modernization project. A pragmatic checklist:

- Define the business outcome first (e.g., reduce scrap by X%, detect faults Y hours earlier), then map which parts of the AT&T stack deliver those outcomes.

- Validate coverage: confirm AT&T/AWS last‑mile availability at each site and check alternate routes or providers for failover.

- Ask for measurable pilot success criteria and insist on a staged proof‑of‑value that replicates key operational environments, not just lab tests.

- Negotiate clear SLAs for latency, availability and mean time to repair — and ensure they align with application tolerances.

- Clarify data governance: who owns and controls model inputs, outputs, and logs; where are backups kept; and how is data purged?
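The SLA item in the checklist can be turned into a quick arithmetic check: an availability percentage translates into a downtime budget, which can then be compared with what the application tolerates. The sketch below assumes a 30-day month; the 99.9% and 99.99% tiers, the 10-minute tolerance and the function names are hypothetical examples, not terms from any AT&T or AWS contract.

```python
MINUTES_PER_30_DAY_MONTH = 30 * 24 * 60  # 43,200 minutes


def allowed_downtime_minutes(availability_pct: float,
                             period_minutes: float = MINUTES_PER_30_DAY_MONTH) -> float:
    """Downtime budget implied by an availability percentage over a period."""
    if not 0 <= availability_pct <= 100:
        raise ValueError("availability_pct must be between 0 and 100")
    return (1 - availability_pct / 100) * period_minutes


def sla_meets_tolerance(availability_pct: float,
                        max_tolerable_downtime_minutes: float) -> bool:
    """True if the SLA's monthly downtime budget fits the application's tolerance."""
    return allowed_downtime_minutes(availability_pct) <= max_tolerable_downtime_minutes


# Hypothetical: a production line that tolerates at most 10 minutes of outage a month.
TOLERANCE_MINUTES = 10

for sla in (99.9, 99.99):
    budget = allowed_downtime_minutes(sla)
    verdict = "fits" if sla_meets_tolerance(sla, TOLERANCE_MINUTES) else "exceeds"
    print(f"{sla}% availability: {budget:.2f} min/month, {verdict} tolerance")
```

In this example a 99.9% commitment permits about 43 minutes of downtime a month, more than the assumed tolerance, while 99.99% permits a little over 4 minutes. The same comparison should be made for latency and mean time to repair.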

Broader industry implications

AT&T’s moves are part of a larger tectonic shift: networks are being reprogrammed to be first‑class cloud citizens, and telcos that can operate at the intersection of connectivity, edge compute, and industry software will command higher margins than those that sell raw bandwidth alone. Hyperscalers want predictable, low‑latency funnels into their regions; enterprises want managed experiences that compress time‑to‑value. Carriers that can package both will attract workloads that previously sat in private data centres or in fragmented on‑prem stacks.

Two industry dynamics to watch:

- Hyperscaler-led managed interconnects (AWS Interconnect – last mile, multicloud interconnects) will accelerate demand for programmable last‑mile provisioning and drive partnerships with global network providers.

- Verticalized, outcome‑oriented bundles (e.g., retail loss prevention, manufacturing OEE optimization) will proliferate. Enterprises will choose between assembling best‑of‑breed stacks or buying integrated outcomes from trusted telco‑hyperscaler duos.

Realistic timeframes and what customers can expect next

- Q2 (preview): AWS Interconnect – last mile will be available to qualifying customers in a gated preview. Early adopters should expect documentation, limited geographies, and partner‑specific handoffs.

- Near term: Connected Spaces for Enterprise is being positioned as generally available for U.S. customers with initial retail/QSR PoCs; enterprises in those sectors should be able to pilot quickly if coverage and device integration align.

- Immediately available: Connected AI for Manufacturing is being marketed with pilot results already in hand and packaged engagement models from POCs to multi‑plant rollouts; manufacturing IT teams should plan for multi‑disciplinary pilots that include OT, cybersecurity, and process engineering.

Practical recommendations for IT leaders and procurement teams

- Start with outcomes, not technology: craft a measurable KPI set and require vendors to commit to pilot success criteria.

- Insist on multi‑vendor portability: demand APIs and exportable configurations so you can migrate workloads if commercial terms or performance deteriorate.

- Treat the edge as a security domain: require network isolation, zero‑trust access controls, and signed model artifacts that can be audited.

- Plan for hybrid redundancy: even with last‑mile cloud‑integrated access, maintain diverse network paths and local fallback modes for safety‑critical systems.

- Build internal skills: managing converged telco-cloud stacks requires new skills — cloud networking, edge orchestration, and model lifecycle management at scale.
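The case for diverse network paths in the list above can be illustrated with the standard parallel-availability formula: if path failures are independent, the chance that at least one path is up is one minus the product of the individual unavailabilities. The availability figures below are hypothetical, and the independence assumption is optimistic whenever paths share a conduit, a power feed or an upstream provider.

```python
from math import prod
from typing import Iterable


def combined_availability(path_availabilities: Iterable[float]) -> float:
    """Probability that at least one path is up, ASSUMING independent failures.

    Each availability is a fraction between 0 and 1 (e.g. 0.999 for 99.9%).
    """
    paths = list(path_availabilities)
    if not paths:
        raise ValueError("at least one path is required")
    if any(not 0 <= a <= 1 for a in paths):
        raise ValueError("availabilities must be between 0 and 1")
    # All paths are down at once with probability prod(1 - a_i).
    return 1 - prod(1 - a for a in paths)


# Hypothetical site: a primary fibre link plus a fixed-wireless backup.
primary, backup = 0.999, 0.99
print(f"primary only:     {combined_availability([primary]):.5f}")
print(f"primary + backup: {combined_availability([primary, backup]):.5f}")
```

With these illustrative numbers, adding a 99% backup path lifts a 99.9% primary to roughly 99.999% on paper. Real-world gains are smaller when the two paths can fail together, which is why physically diverse routes matter.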

Final assessment: pragmatic optimism, but due diligence required

AT&T’s announcements constitute a credible commercial play that aligns with where enterprises are headed: closer coupling of the network, edge compute, and cloud AI to shorten the path from data to action. The combination of AWS‑native last‑mile access and Azure‑based edge offerings with Nvidia acceleration creates a pragmatic toolkit for latency‑sensitive and data‑intensive workloads. If the services work as promised (seamless provisioning, robust security, transparent economics, and reproducible pilot outcomes), they could materially speed enterprise AI projects that today stall on integration complexity.

That upside comes with tangible caveats: gated availability, potential vendor lock‑in, integration complexity in diverse operational environments, and the need for clear SLAs and data governance. Enterprises should test rigorously, negotiate hard on visibility and portability, and align pilots to real operational KPIs before committing to widespread rollouts.

In short, AT&T is betting that the next wave of enterprise value will be captured by companies that can stitch hyperscaler compute, edge AI and secure, low‑latency connectivity into a single, managed offering. For organizations willing to do the homework and run disciplined pilots, the promise is real; for those expecting plug‑and‑play magic, the operational and governance challenges will quickly clarify what “managed” really means.

Source: Telecoms AT&T upgrades enterprise AI with help from AWS and Microsoft