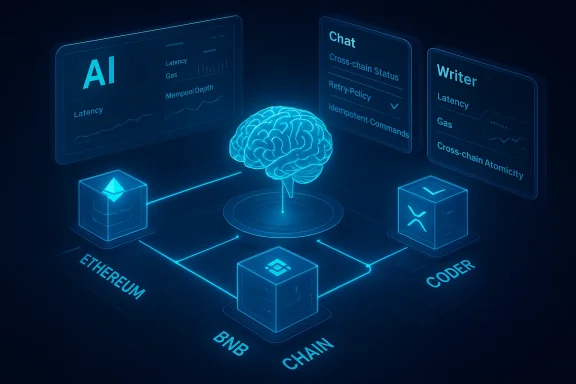

Atua AI’s newest release promises to stitch AI-driven orchestration into the seams of Web3, delivering what the company calls real-time coordination systems aimed at predictable, low-latency execution across multiple blockchains — specifically Ethereum, BNB Chain, and the XRP Ledger — and positioning itself as an infrastructure layer for scalable decentralized automation.

Source: isStories, “Atua AI Advances Scalable Operations for Decentralized Systems with Real-Time Coordination Technology”

Background

Atua AI is the on-chain AI and automation project backed and publicized through KaJ Labs, a Seattle-rooted research and incubation organization. Over the last year the team has publicly pushed a sequence of upgrades and product claims: modular microservices and AI-assisted smart contract tooling, followed by a steady stream of coordination-focused announcements that culminate in the current “real-time coordination” messaging.

The core pitch is straightforward: decentralized applications and automation workflows increasingly require deterministic, low-latency coordination across heterogeneous ledgers and distributed services. Atua AI says its systems continuously track distributed state, monitor chain conditions, and dynamically tune execution workloads so automation does not degrade when networks experience congestion, reorgs, or node variance. The release highlights integrated modules (Chat, Writer, and Coder) that are described as working “in harmony” with the coordination layer so automation logic can adapt in real time.

This announcement follows prior product statements from the same organization around AI-smart contracts, microservices architecture and modular AI tooling, which together frame the current coordination capability as the next step in a larger platform roadmap.

What “real-time coordination” actually aims to solve

The core problem: distributed nondeterminism

Decentralized systems are inherently nondeterministic across several axes. Transaction propagation timing, gas price dynamics, block finality, mempool ordering, RPC node health, and cross-chain messaging latency all produce an environment where automation, if naïvely designed, will be brittle.

Atua AI’s stated objective is to convert that noisy environment into a predictable substrate for higher-level automation. Practically, that means:

- Continuous monitoring of network health indicators (block times, pending transaction queues, RPC response times).

- State-awareness across multiple chains so cross-chain workflows can make informed decisions about timing, retries, and atomicity.

- Dynamic workload tuning so that execution paths, batching strategies, and off-chain compute allocation adapt to current conditions.

- Orchestration that enforces ordering, idempotency, and predictable retries for automated actions.
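To make the idempotency and predictable-retry requirements concrete, here is a minimal sketch, assuming a hypothetical `submit` callable that returns a transaction identifier and an in-memory result store. The names `IdempotentExecutor` and `TransientError` are illustrative, not Atua AI's API; a production version would persist the store durably. (On EVM chains, resubmitting with the same nonce gives similar protection at the protocol level.)

```python
import random
import time
from typing import Callable, Dict


class TransientError(Exception):
    """Retryable failure raised by a submit callable (e.g. an RPC timeout)."""


class IdempotentExecutor:
    """Hypothetical executor: every action carries an idempotency key, so a
    retried or duplicated request never submits the same action twice."""

    def __init__(self, max_attempts: int = 4, base_delay_s: float = 0.5,
                 sleep: Callable[[float], None] = time.sleep, seed: int = 0):
        if max_attempts < 1:
            raise ValueError("max_attempts must be at least 1")
        self.max_attempts = max_attempts
        self.base_delay_s = base_delay_s
        self.sleep = sleep
        self.rng = random.Random(seed)        # seeded so retry timing is reproducible
        self.completed: Dict[str, str] = {}   # idempotency key -> result (e.g. tx hash)

    def run(self, key: str, submit: Callable[[], str]) -> str:
        if key in self.completed:             # duplicate request: return recorded result
            return self.completed[key]
        for attempt in range(self.max_attempts):
            try:
                result = submit()
            except TransientError:
                if attempt == self.max_attempts - 1:
                    raise                     # retries exhausted: surface the error
                # Exponential backoff with jitter: base * 2^attempt * [0.5, 1.0)
                self.sleep(self.base_delay_s * 2 ** attempt * (0.5 + self.rng.random() / 2))
                continue
            self.completed[key] = result
            return result
        raise AssertionError("unreachable")
```

Injecting `sleep` and seeding the jitter keeps the retry schedule deterministic under test, which matters when certifying automation behavior.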

How this differs from traditional orchestration

Cloud orchestration systems (Kubernetes, serverless workflows, or agent managers) are built for centralized control planes with trusted network links and relatively stable resource abstractions. Decentralized orchestration has to account for:

- Non-uniform finality models (e.g., on Ethereum a block is economically final only once its checkpoint is finalized, normally after roughly two epochs or about 13 minutes, whereas the XRP Ledger reaches deterministic finality within seconds when a ledger is validated).

- On-chain cost sensitivity — every on-chain operation carries a fee that influences the chosen coordination strategy.

- Adversarial actors — you must plan for front-running, MEV, and malicious relayers.

- Trust boundaries — relying on third-party nodes, relayers, or oracles introduces new threat models.
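The non-uniform finality point can be sketched as a per-chain settlement policy that the orchestrator consults before treating an action as done. This is a simplified model under stated assumptions: `FinalityModel`, `SettlementPolicy`, `ChainView`, and the `POLICIES` table are hypothetical, and the BNB Chain confirmation depth of 15 is a placeholder; check each chain's current finality mechanism before choosing a rule.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Dict


class FinalityModel(Enum):
    CONFIRMATION_DEPTH = "depth"          # probabilistic: settled after N blocks
    FINALIZED_CHECKPOINT = "finalized"    # settled once at/below the finalized height
    VALIDATED_LEDGER = "validated"        # XRPL-style: settled once in a validated ledger


@dataclass(frozen=True)
class SettlementPolicy:
    model: FinalityModel
    min_depth: int = 0                    # used only by CONFIRMATION_DEPTH


@dataclass(frozen=True)
class ChainView:
    head: int     # latest block or ledger index observed
    final: int    # latest finalized block (EVM) or validated ledger (XRPL)


def is_settled(policy: SettlementPolicy, included_at: int, view: ChainView) -> bool:
    """True if an action included at height `included_at` can be treated as settled."""
    if included_at > view.head:
        return False
    if policy.model is FinalityModel.CONFIRMATION_DEPTH:
        # The inclusion block itself counts as the first confirmation.
        return view.head - included_at + 1 >= policy.min_depth
    # Checkpoint finality and XRPL validation both reduce to a height comparison here.
    return included_at <= view.final


# Hypothetical per-chain table; the depth value is a placeholder, not a recommendation.
POLICIES: Dict[str, SettlementPolicy] = {
    "ethereum": SettlementPolicy(FinalityModel.FINALIZED_CHECKPOINT),
    "bnb_chain": SettlementPolicy(FinalityModel.CONFIRMATION_DEPTH, min_depth=15),
    "xrpl": SettlementPolicy(FinalityModel.VALIDATED_LEDGER),
}
```

Keeping finality as data rather than scattering chain-specific checks through workflow code makes the assumption reviewable in one place.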

Technical anatomy: what to expect under the hood

Modular microservices + agentic coordination

Atua AI emphasizes a modular architecture, where AI-powered modules like Chat, Writer, and Coder are componentized. A modular approach makes sense for scale: each service can be horizontally scaled, independently upgraded, and instrumented.

Key technical building blocks likely used in a system like this include:

- Telemetry collection (block metrics, mempool depth, RPC latencies, gas price spreads).

- Semantic state models that translate on-chain artifacts into a normalized representation usable by orchestration logic.

- Decision agents — small, task-focused AI agents or controllers that decide whether to execute, delay, or reroute actions based on policy and current conditions.

- Message buses and event channels for pub/sub distribution of state and orchestration commands.

- Workload shapers that batch, prioritize, or throttle transactions to limit cost and reduce reorg exposure.
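A decision agent of the kind listed above can be approximated, for illustration, by a rule-based controller over a telemetry snapshot. This is a stand-in, not Atua AI's AI agent: `Telemetry`, `Policy`, `decide`, and every threshold are hypothetical. Returning a reason string alongside each decision is one simple way to keep decisioning auditable.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Decision(Enum):
    EXECUTE = "execute"
    DELAY = "delay"
    REROUTE = "reroute"


@dataclass(frozen=True)
class Telemetry:
    rpc_latency_ms: float    # observed RPC round-trip time
    pending_txs: int         # mempool / queue depth
    gas_price_gwei: float    # current fee level
    rpc_healthy: bool        # health-check result for the primary RPC node


@dataclass(frozen=True)
class Policy:
    max_rpc_latency_ms: float = 800.0   # hypothetical thresholds
    max_pending_txs: int = 50_000
    max_gas_price_gwei: float = 60.0


def decide(t: Telemetry, p: Policy) -> Tuple[Decision, str]:
    """Return a decision plus a human-readable reason for the audit log."""
    if not t.rpc_healthy or t.rpc_latency_ms > p.max_rpc_latency_ms:
        return Decision.REROUTE, "primary RPC unhealthy or slow; switch provider"
    if t.gas_price_gwei > p.max_gas_price_gwei:
        return Decision.DELAY, f"gas {t.gas_price_gwei} gwei above cap {p.max_gas_price_gwei}"
    if t.pending_txs > p.max_pending_txs:
        return Decision.DELAY, "mempool congested"
    return Decision.EXECUTE, "within policy"
```

Rule order encodes priority: an unreachable node is handled before fee or congestion checks, since those readings may be stale.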

Real-time versus near-real-time

“Real-time” is a marketing shorthand many vendors use; in distributed ledgers it’s important to separate application-perceptible real-time (sub-second responsiveness across the control plane) from hard real-time (bounded latency guarantees). In practice, what’s achievable depends heavily on:

- The slowest chain in the orchestration path (e.g., cross-chain hops can add seconds to minutes).

- Whether critical actions are executed off-chain with on-chain settlement later, or whether the system must wait for on-chain confirmation.

- The granularity of the coordination (per-transaction, per-batch, or per-workflow).
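The “slowest chain” point can be made concrete with a simple latency budget: hops inside one stage run in parallel, so the stage waits for its slowest hop, and stages run one after another. A minimal sketch; `workflow_latency`, `bottleneck`, and all latency figures in the usage note are hypothetical placeholders, not measurements.

```python
from typing import Dict, List


def workflow_latency(stages: List[Dict[str, float]]) -> float:
    """Estimate end-to-end latency (seconds) of a workflow.

    Each stage maps hop name -> expected latency. Hops within a stage run in
    parallel (the stage waits for the slowest); stages run sequentially.
    """
    return sum(max(stage.values()) for stage in stages if stage)


def bottleneck(stages: List[Dict[str, float]]) -> str:
    """Name of the single slowest hop across all stages."""
    hops = [(name, s) for stage in stages for name, s in stage.items()]
    return max(hops, key=lambda hop: hop[1])[0]
```

With placeholder stages `[{"offchain_decision": 0.2}, {"evm_inclusion": 12.0, "xrpl_validation": 4.0}, {"bridge_attestation": 60.0}]`, the bridge hop dominates the budget; sub-second control-plane decisions cannot make such a workflow real-time end to end.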

Multichain considerations: Ethereum, BNB Chain, and XRP Ledger

Atua AI specifically names Ethereum, BNB Chain, and XRP Ledger as supported targets. Those chains differ in topology and semantics, which creates operational complexity.

Ethereum and EVM chains (BNB Chain family)

- Ethereum and BNB Chain are EVM-compatible, which simplifies transaction construction and cross-deployment of smart contracts.

- They share common challenges: gas fee volatility, mempool contention, and MEV risks from ordering.

- Coordination strategies for EVM chains often include gas price estimation, transaction bundling, and using private relays or Flashbots-style submission to reduce front-running.
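Gas price estimation on an EIP-1559 fee market can be sketched from the protocol's own rule: after a full block the base fee rises by `base_fee // 8` (at least 1 wei), i.e. at most 12.5% per block. The sketch below assumes an EIP-1559 market as on Ethereum mainnet (verify whether and how the target chain implements it); `fee_caps`, the six-block headroom default, and the helper names are hypothetical choices.

```python
from typing import Dict


def max_base_fee_after(base_fee_wei: int, blocks: int) -> int:
    """Upper bound on the base fee after `blocks` consecutive full blocks:
    each full block adds base_fee // 8, with a minimum increase of 1 wei."""
    fee = base_fee_wei
    for _ in range(blocks):
        fee += max(fee // 8, 1)
    return fee


def fee_caps(base_fee_wei: int, priority_fee_wei: int, headroom_blocks: int = 6) -> Dict[str, int]:
    """maxFeePerGas that stays valid through `headroom_blocks` of worst-case growth."""
    max_fee = max_base_fee_after(base_fee_wei, headroom_blocks) + priority_fee_wei
    return {"maxFeePerGas": max_fee, "maxPriorityFeePerGas": priority_fee_wei}


def effective_gas_price(max_fee: int, priority_fee: int, base_fee: int) -> int:
    """Price actually paid per gas: base fee plus tip, capped at maxFeePerGas."""
    if max_fee < base_fee:
        raise ValueError("maxFeePerGas below current base fee; transaction not includable")
    return min(max_fee, base_fee + priority_fee)
```

Six blocks of maximal growth multiply the base fee by about 1.125^6 ≈ 2.03, so the cap is generous without being unbounded; the sender still pays only base fee plus tip at inclusion time.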

XRP Ledger

- XRP Ledger uses a fundamentally different consensus approach with deterministic finality on a per-ledger basis.

- Typical concerns for the XRP Ledger include distinct transaction formats, fee mechanisms, and different replay and settlement models.

- Cross-chain orchestration with XRP requires careful translation: transaction semantics and finality guarantees mean workflow designers must treat XRP confirmations differently than probabilistic finality chains.
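One XRP-specific difference is how a submitter learns a transaction's final outcome. On the XRP Ledger, a transaction can set `LastLedgerSequence`; once a validated ledger is past that index without the transaction, it can never be included. The sketch below assumes the caller has already looked up the transaction in validated ledgers (and that the node's ledger history has no gaps); `xrpl_outcome`, `Outcome`, and `xrp_to_drops` are hypothetical helpers. Amounts are expressed in drops, where 1 XRP = 1,000,000 drops.

```python
from decimal import Decimal
from enum import Enum
from typing import Optional


class Outcome(Enum):
    PENDING = "pending"
    SUCCEEDED = "succeeded"
    FAILED = "failed"


def xrpl_outcome(validated_ledger_index: int, last_ledger_sequence: int,
                 validated_result: Optional[str]) -> Outcome:
    """Classify a submitted XRPL transaction.

    validated_result is the result code if the tx was found in a validated
    ledger (e.g. "tesSUCCESS"), or None if it has not appeared in one.
    """
    if validated_result is not None:
        # A validated result is final; only tesSUCCESS means it applied as intended.
        return Outcome.SUCCEEDED if validated_result == "tesSUCCESS" else Outcome.FAILED
    if validated_ledger_index > last_ledger_sequence:
        # Validated ledgers have moved past the expiry index without the tx.
        return Outcome.FAILED
    return Outcome.PENDING


def xrp_to_drops(xrp: str) -> str:
    """Convert a decimal XRP amount to a drops string (1 XRP = 1,000,000 drops)."""
    drops = Decimal(xrp) * 1_000_000
    if drops != drops.to_integral_value():
        raise ValueError("XRP amounts have at most 6 decimal places")
    return str(int(drops))
```

Unlike a depth-based rule, this gives the workflow a definitive “failed” state it can act on (for example, by rebuilding the transaction), rather than waiting indefinitely.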

Cross-chain state and atomicity

Supporting multichain workflows implies solving cross-chain state visibility and the risk of partial execution. Established strategies include:

- Using bridges/relayers that notarize events across ledgers.

- Implementing watchers that monitor state and trigger compensating transactions when necessary.

- Using HTLC-like constructs or cross-chain escrow to enforce atomicity where available.
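Where true atomicity is unavailable, the compensating-transaction strategy above is commonly structured as a saga: run steps in order and, if one fails, undo the completed steps in reverse. A minimal sketch; `Step`, `SagaResult`, and `run_saga` are hypothetical, and the actions stand in for real chain calls. A production version would also need to retry failed compensations and alert operators.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    name: str
    action: Callable[[], None]       # e.g. lock funds on chain A (hypothetical)
    compensate: Callable[[], None]   # e.g. release that lock / refund


@dataclass
class SagaResult:
    ok: bool
    completed: List[str] = field(default_factory=list)
    compensated: List[str] = field(default_factory=list)


def run_saga(steps: List[Step]) -> SagaResult:
    """Execute steps in order; on failure, compensate completed steps in reverse."""
    result = SagaResult(ok=True)
    done: List[Step] = []
    for step in steps:
        try:
            step.action()
        except Exception:
            result.ok = False
            for prev in reversed(done):
                prev.compensate()
                result.compensated.append(prev.name)
            return result
        done.append(step)
        result.completed.append(step.name)
    return result
```

Reverse order matters: later steps usually depend on earlier ones, so they are unwound first.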

Developer experience: the promise and the traps

Developer-facing modules: Chat, Writer, Coder

Atua AI’s product-suite framing (Chat, Writer, Coder) targets the developer productivity angle: conversational interfaces, content generation, and code scaffolding integrated with orchestration. That can lower the barrier to building automation, provided:

- Generated code meets production-quality and security standards.

- The tooling exposes configuration for retry policies, gas optimization, and deterministic testing.

- Integrations are open and auditable rather than black-box managed services.

Benefits

- Faster prototyping: integrated AI assistants can produce boilerplate for contract interactions, event listeners, and test harnesses.

- Unified control plane: having observability and orchestration in one platform reduces integration overhead.

- Adaptive automation: AI-driven tuning can reduce manual intervention during network events.

Risks and traps

- Toolchain lock-in: if the platform encodes orchestration policies in proprietary formats or closed agents, switching costs rise quickly.

- Overreliance on generated code: AI-generated smart contract interactions need human review — a small bug can have catastrophic financial consequences.

- Opaque decisioning: AI agents that decide on-chain actions must expose their decision logic and risk thresholds; otherwise teams cannot certify behavior.

Security, trust, and governance

Attack surfaces introduced by coordination layers

Every additional coordination layer introduces new attack vectors:

- Compromised control plane: if orchestration servers or agents are compromised, attackers can reorder or censor transactions.

- Data poisoning: AI modules trained or tuned on noisy inputs can misclassify conditions, triggering unsafe actions.

- Relay and oracle risks: cross-chain watchers and relayers require integrity guarantees; a malicious relayer can create false state perceptions.

- Centralization points: if key services are centralized (e.g., hosted orchestrators, proprietary relayers), the system becomes dependent on trust assumptions that Web3 often tries to minimize.
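One common mitigation for the relayer and centralization risks above is to accept a cross-chain fact only when several independent observers (different RPC providers, watchers, or relayers) agree on it. A minimal k-of-n sketch; `quorum_view` is hypothetical, and it only helps if the observers are genuinely operated independently. It is not a substitute for cryptographic proofs where a chain offers them.

```python
from collections import Counter
from typing import Dict, Optional


def quorum_view(observations: Dict[str, Optional[str]], k: int) -> Optional[str]:
    """Return the value (e.g. an event or block hash) reported by at least k
    observers, or None if no single value reaches quorum.

    observations maps observer id -> reported value (None = no answer).
    """
    if k < 1:
        raise ValueError("k must be at least 1")
    counts = Counter(v for v in observations.values() if v is not None)
    agreed = [value for value, votes in counts.items() if votes >= k]
    # Two conflicting values both reaching k (possible when k <= n/2) is treated as no quorum.
    return agreed[0] if len(agreed) == 1 else None
```

Choosing k greater than half the observers rules out conflicting quorums entirely; the explicit check covers weaker settings.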

MEV and front-running exposure

The announcement hints at “reduced latency” and “predictable execution.” In practice, any system that optimizes transaction submission must explicitly account for MEV: better latency or sophisticated submission channels can reduce slippage but can also be used to extract value. Effective coordination must either integrate protected submission channels or design for MEV-resistant settlement patterns.

Auditing and transparency

For enterprise and DeFi usage, the following are non-negotiables:

- Publicly verifiable specs for coordination protocols: how state is observed, how decisions are made, and the guarantees offered.

- Audits of both smart contracts and critical orchestration components.

- Fail-safe modes — automatic fallback to safe, non-automated behavior in the event of anomalies.

- Clear data retention and privacy policies for telemetry, since coordination requires collecting potentially sensitive operational metadata.
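The fail-safe requirement can be sketched as a circuit breaker: after a burst of anomalies within a sliding window, automation trips into manual mode and stays there until a human resets it. `FailSafe`, its defaults, and the injected clock are hypothetical; what counts as an anomaly is left to the caller.

```python
import time
from typing import Callable, List


class FailSafe:
    """Trip to manual mode after `threshold` anomalies within `window_s` seconds."""

    def __init__(self, threshold: int = 3, window_s: float = 60.0,
                 clock: Callable[[], float] = time.monotonic):
        self.threshold = threshold
        self.window_s = window_s
        self.clock = clock
        self.anomalies: List[float] = []
        self.manual_mode = False

    def record_anomaly(self) -> None:
        now = self.clock()
        # Keep only anomalies inside the sliding window, then add this one.
        self.anomalies = [t for t in self.anomalies if now - t <= self.window_s]
        self.anomalies.append(now)
        if len(self.anomalies) >= self.threshold:
            self.manual_mode = True   # latched: does not clear on its own

    def may_automate(self) -> bool:
        return not self.manual_mode

    def human_reset(self) -> None:
        self.anomalies.clear()
        self.manual_mode = False
```

Latching the tripped state, rather than letting it expire with the window, ensures a person reviews the incident before automation resumes.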

Real-world use cases and their operational nuances

DeFi automation

- Liquidity rebalancing, automated market-making adjustments, and cross-chain arbitrage are natural use cases.

- These use cases are extremely latency-sensitive and expose users to MEV and slippage. Coordination systems can help, but must also provide protected execution channels and economic cost modeling.
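Two basic building blocks of the “economic cost modeling” mentioned above are a minimum-output bound on swaps (the role played by, for example, the `amountOutMin` argument of Uniswap V2 router swap functions) and a check that the expected gain clears gas costs. A minimal sketch in integer base units; `min_amount_out`, `worth_executing`, and the 20% safety margin are hypothetical. A minimum-output bound caps the damage from sandwich attacks but does not prevent MEV.

```python
def min_amount_out(quoted_out: int, slippage_bps: int) -> int:
    """Smallest acceptable swap output given a slippage tolerance in basis
    points (50 bps = 0.5%). Rounds up so the bound never exceeds the tolerance."""
    if not 0 <= slippage_bps < 10_000:
        raise ValueError("slippage_bps must be in [0, 10000)")
    return -(-quoted_out * (10_000 - slippage_bps) // 10_000)   # ceiling division


def worth_executing(expected_gain_wei: int, gas_units: int, gas_price_wei: int,
                    safety_margin_bps: int = 2_000) -> bool:
    """Execute only if expected gain exceeds gas cost plus a safety margin."""
    cost = gas_units * gas_price_wei
    return expected_gain_wei * 10_000 > cost * (10_000 + safety_margin_bps)
```

All arithmetic stays in integers, matching how on-chain amounts are represented and avoiding float rounding in money calculations.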

DAO coordination

- DAOs often require cross-contract governance actions, multisig coordination, and off-chain quorum checks.

- Real-time coordination helps coordinate staging, voting windows, and execution sequencing — but DAOs usually require full audit trails and human override paths.

Cross-chain orchestration

- Token flows, messaging, and atomic swap orchestration are prime examples where state-tracking across chains is essential.

- Practical deployments must handle partial failures gracefully and provide compensating transaction patterns when atomicity is impossible.

On-chain monitoring and incident response

- Continuous monitoring and AI-assisted incident triage can reduce time-to-response for exploits or chain-level anomalies.

- However, automated countermeasures (e.g., pausing contracts or rolling back state via compensatory actions) must be governed and ideally require human confirmation for high-risk interventions.
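The human-confirmation requirement for high-risk interventions (and the human override paths DAOs expect) can be sketched as a risk-tiered gate: small, reversible actions run automatically, while anything irreversible or above a value limit waits for a named approver. `Intervention`, `gate`, and the $10,000 limit are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Gate(Enum):
    AUTO = "auto"
    APPROVED = "approved"
    NEEDS_APPROVAL = "needs_approval"


@dataclass(frozen=True)
class Intervention:
    kind: str                 # e.g. "pause_contract", "compensating_transfer"
    value_at_risk_usd: float
    reversible: bool


def gate(action: Intervention, auto_limit_usd: float = 10_000.0,
         approver: Optional[str] = None) -> Gate:
    """Low-risk reversible actions run automatically; others need a named human."""
    if action.reversible and action.value_at_risk_usd <= auto_limit_usd:
        return Gate.AUTO
    return Gate.APPROVED if approver else Gate.NEEDS_APPROVAL
```

Recording the approver's identity with each high-risk action also supplies the audit trail that governance reviews require.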

Operational recommendations for adopters

If you are evaluating Atua AI’s coordination offering, consider a staged adoption path with measurable gates:

- Proof of concept in a testnet or sandbox that mirrors real traffic patterns.

- Security review of any generated code and orchestration policies by independent third parties.

- Load and chaos testing to validate behavior during reorgs, RPC outages, and fee spikes.

- Observability and SLOs: require measurable service level objectives (and, where possible, contractual SLAs) for latency, success rate, and time-to-detect.

- Failover policies: ensure local/manual control is available if the coordination plane becomes unavailable or behaves unexpectedly.
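A measurable SLO gate for the staged rollout above can be as simple as checking p95 latency and success rate against targets. A minimal sketch using the nearest-rank percentile method; `percentile`, `meets_slo`, and any targets passed to them are hypothetical.

```python
import math
from typing import List


def percentile(values: List[float], q: float) -> float:
    """Nearest-rank percentile for q in (0, 100]."""
    if not values or not 0 < q <= 100:
        raise ValueError("need values and 0 < q <= 100")
    ordered = sorted(values)
    rank = math.ceil(q * len(ordered) / 100)
    return ordered[rank - 1]


def meets_slo(latencies_s: List[float], successes: int, attempts: int,
              p95_target_s: float, success_target: float) -> bool:
    """True if p95 latency and success rate both meet their targets."""
    p95 = percentile(latencies_s, 95)
    success_rate = successes / attempts if attempts else 0.0
    return p95 <= p95_target_s and success_rate >= success_target
```

Evaluating the gate on the same data collected during load and chaos testing makes “measurable gates” a pass/fail check rather than a judgment call.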

Market implications and vendor assessment

Atua AI’s approach reflects a broader industry trend: integrating AI with decentralized infrastructure to make Web3 systems more predictable and developer-friendly. The market response will hinge on several factors:

- Technical transparency: vendors that publish protocols, reference implementations, and benchmarks will earn faster adoption.

- Security posture: third-party audits and bug bounty programs will be critical for credibility.

- Interoperability: solutions that embrace open standards and make it easy to plug in alternative relayers, oracles, and node providers will be more attractive to enterprises.

Strengths and potential risks — a balanced view

Strengths

- Addresses a real pain point: predictable, multichain automation is a practical requirement for complex decentralized systems.

- Modular design philosophy: microservices and componentized AI tools support incremental rollout and scalability.

- End-to-end developer tooling: integrated assistants for chat, content, and code can materially shorten development cycles when paired with strong governance.

Risks and unknowns

- Opaque implementation details: marketing language is clear on intent but light on specifics about consensus interaction, submission channels, and fallback behavior.

- Operational centralization: coordination services can become central points of control if not designed for decentralization.

- Security and MEV exposure: any submission optimization must be balanced with MEV resistance and anti-front-running measures.

- Economic friction: orchestration that increases on-chain activity can raise costs unless gas optimization is baked into the decision logic.
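The economic-friction point can be illustrated with gas arithmetic. Every Ethereum transaction pays an intrinsic 21,000 gas, so sending many small calls through one batching (multicall-style) transaction pays that once instead of per call. The sketch simplifies heavily: `batch_overhead_per_call` is a hypothetical allowance for dispatch and encoding, and it ignores calldata and storage-access differences between standalone and batched calls.

```python
TX_BASE_GAS = 21_000  # intrinsic gas charged once per Ethereum transaction


def individual_cost(n_calls: int, gas_per_call: int) -> int:
    """Total gas if each call is sent as its own transaction."""
    return n_calls * (TX_BASE_GAS + gas_per_call)


def batched_cost(n_calls: int, gas_per_call: int, batch_overhead_per_call: int) -> int:
    """Total gas if all calls share one batching transaction."""
    return TX_BASE_GAS + n_calls * (gas_per_call + batch_overhead_per_call)
```

Under these assumptions batching wins whenever `(n_calls - 1) * 21_000` exceeds the total per-call overhead; for a single call it only adds cost, so the decision logic should compare both before choosing.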

Final analysis and recommendations

Atua AI’s announcement of real-time coordination systems is a credible and relevant step in the evolution of Web3 infrastructure. The problems the company claims to solve (cross-chain nondeterminism, automation brittleness, and unpredictable execution) are genuine and materially affect builders today.

That said, the success of such a platform depends on transparent engineering, auditable decision logic, and careful operational controls. For enterprises and DeFi teams, the following checklist encapsulates prudent next steps:

- Require public documentation that explains coordination semantics, failure modes, and security assumptions.

- Insist on independent code and system audits prior to production rollouts.

- Conduct staged testing in realistic traffic conditions and simulated adversarial scenarios.

- Preserve manual override and human-in-the-loop controls for high-value actions.

- Monitor and evaluate economic impacts (gas and transaction costs) introduced by the coordination layer.

In short: the promise is significant, the technical direction is sensible, but enterprise adoption will hinge on demonstrable guarantees — not just marketing claims.
