AWS’ new managed Network Firewall proxy arrives as a pragmatic answer to a persistent problem: organizations tired of owning and operating large fleets of proxy servers can now offload proxy management while keeping the policy controls that matter for egress security.

Source: infoq.com AWS Launches Network Firewall Proxy in Preview to Simplify Managed Egress Security

Background

Amazon’s new managed proxy is offered as a preview capability that sits inside a Virtual Private Cloud (VPC) and integrates with the VPC’s NAT Gateway. It exposes a proxy endpoint reachable via PrivateLink interface endpoints and requires workloads to be explicitly proxy-aware. The service evaluates outbound web traffic using a three-phase inspection model, can optionally perform TLS interception by issuing destination-mirroring certificates, and supports both distributed (per-VPC) and centralized (multi-VPC) architectures. During the preview the service is constrained to a single region and a small set of resource limits, and AWS makes it available free of charge for the preview period.

This announcement matters because it converts a traditionally heavy operational problem (running, scaling, patching and securing Squid, HAProxy or other proxy fleets) into a managed capability that focuses engineering attention back onto security policy rather than infrastructure plumbing.

What the Network Firewall proxy actually is

A managed explicit forward proxy

- The new service is an explicit forward proxy: applications must be configured to send HTTP/HTTPS traffic to the proxy (for example, via HTTP_PROXY/HTTPS_PROXY environment variables or application proxy settings).

- The proxy handles HTTP CONNECT requests (and absolute-form HTTP for plaintext HTTP), establishes connections to destination servers on behalf of the client, and evaluates traffic at defined policy stages.

- It is not a transparent inline network firewall for arbitrary TCP/UDP traffic; it is purpose-built for web traffic — HTTP and HTTPS.
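As a minimal sketch of what "proxy-aware" means in practice, the Python snippet below builds the environment variables a workload would export and the CONNECT request a client sends to open a tunnel for an HTTPS destination. The proxy hostname, port and bypass list are placeholders chosen for illustration, not values from the announcement.

```python
def proxy_env(proxy_host: str, proxy_port: int, no_proxy: tuple = ()) -> dict:
    """Environment variables that many HTTP clients honor for an explicit proxy."""
    url = f"http://{proxy_host}:{proxy_port}"
    env = {"HTTP_PROXY": url, "HTTPS_PROXY": url}
    if no_proxy:
        # Destinations that should be reached directly, never via the proxy.
        env["NO_PROXY"] = ",".join(no_proxy)
    return env


def connect_request(dest_host: str, dest_port: int = 443) -> bytes:
    """First bytes an explicit-proxy client sends to tunnel HTTPS traffic."""
    target = f"{dest_host}:{dest_port}"
    return f"CONNECT {target} HTTP/1.1\r\nHost: {target}\r\n\r\n".encode("ascii")


# Hypothetical endpoint name and port, for illustration only.
env = proxy_env(
    "proxy.example.internal",
    3128,
    no_proxy=("169.254.169.254", "localhost"),
)
print(env["HTTPS_PROXY"])
print(connect_request("api.example.com").decode("ascii").splitlines()[0])
```

Some tools read only the lowercase forms (`http_proxy`, `https_proxy`, `no_proxy`), so exporting both spellings is a common precaution.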

How it plugs into your VPC

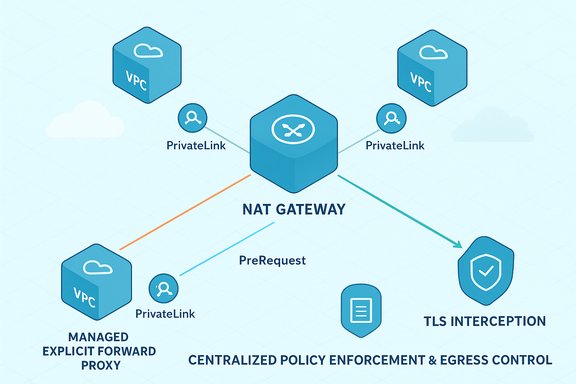

- The proxy is attached to a NAT Gateway in a VPC. When you create a proxy, the service associates it with a NAT Gateway and creates a PrivateLink interface endpoint so clients (in the same or other VPCs) can reach the proxy via private DNS.

- For workloads in the same VPC as the proxy, no extra endpoint is required. For remote VPCs that cannot route to the proxy directly, PrivateLink endpoints are created in each application VPC to offer a local access point.

- The service supports both distributed and centralized deployment models:

  - Distributed: a proxy per VPC (isolation, per-VPC control).

  - Centralized: a single proxy in a security/egress VPC, reached from other VPCs through proxy endpoints or through Transit Gateway / Cloud WAN routing.

Inspection model: the three phases

The proxy evaluates traffic sequentially across three ordered stages. Rules are processed in order of priority inside each phase; a deny in an earlier phase terminates the flow and prevents later phases from running.

- PreDNS: executed before the proxy resolves the destination domain name. Useful for blocking domains by name without resolving them first (mitigates DNS exfiltration attempts by denying suspicious domain patterns early).

- PreRequest: executed before the proxy sends the HTTP request to the destination. At this phase, the proxy can apply access rules based on request headers, method, path, and other HTTP attributes (for HTTPS, these attributes are visible only when TLS interception is enabled and the traffic is decrypted).

- PostResponse: executed after the destination responds but before the response is forwarded to the client. This phase allows filtering based on response headers, content type, content length, and status codes.
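The ordering described above can be modeled in a few lines of Python. This is a conceptual sketch only, not the service's rule syntax or API: the `Rule` class, the flow dictionary keys, the "lower number runs first" priority convention, the "first match settles the phase" behavior and the default-allow verdict are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional

PHASES = ("PreDNS", "PreRequest", "PostResponse")


@dataclass(frozen=True)
class Rule:
    phase: str
    priority: int                     # assumption: lower number is evaluated first
    action: str                       # "allow" or "deny"
    matches: Callable[[dict], bool]   # predicate over a hypothetical flow record


def evaluate(rules: list, flow: dict, default: str = "allow") -> tuple:
    """Return (verdict, phase). A deny ends evaluation; later phases never run."""
    for phase in PHASES:
        in_phase = sorted((r for r in rules if r.phase == phase), key=lambda r: r.priority)
        for rule in in_phase:
            if rule.matches(flow):
                if rule.action == "deny":
                    return "deny", phase
                break  # assumption: the first matching allow settles this phase
    no_phase: Optional[str] = None
    return default, no_phase


rules = [
    Rule("PreDNS", 10, "deny", lambda f: f["host"].endswith(".badexample.test")),
    Rule("PreRequest", 10, "deny", lambda f: f.get("method") == "PUT"),
    Rule("PostResponse", 10, "deny",
         lambda f: f.get("content_type") == "application/x-msdownload"),
]

print(evaluate(rules, {"host": "cdn.badexample.test", "method": "GET"}))
print(evaluate(rules, {"host": "api.example.com", "method": "PUT"}))
print(evaluate(rules, {"host": "api.example.com", "method": "GET",
                       "content_type": "text/html"}))
```

The first flow is denied in PreDNS, so its method is never examined; the second passes PreDNS and is denied in PreRequest; the third matches no deny rule and receives the default verdict.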

TLS interception: benefits and responsibilities

- The proxy can perform TLS interception (a man-in-the-middle for inspection). In this mode the proxy terminates the TLS session from the workload, inspects HTTP-layer data, then opens a new TLS session to the destination.

- To make interception work, the proxy generates a certificate on behalf of the destination and presents it to the client. For clients to accept that certificate the enterprise must ensure the proxy’s certificate authority is trusted by the workload. That trust can be established by:

  - Using an enterprise CA and importing the root/subordinate CA into the client trust store, or

  - Using a subordinate CA managed via a private certificate authority service and distributing its root into client trust stores.

- When TLS interception is disabled, the proxy establishes two independent TCP connections, but it does not decrypt payloads. Policy enforcement is then limited to unencrypted metadata like DNS answers, destination IPs, and SNI (Server Name Indication).

- The operational complexity of TLS interception is non-trivial: certificate distribution, application-level trust anchors, and potential compliance/privacy implications must be planned carefully.
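To give a sense of what certificate distribution involves on a single workload, the sketch below collects environment variables that several common runtimes read to locate a CA bundle, plus a Python `ssl` context built from the same file. The bundle path is a placeholder, and which variables matter depends on the clients actually in use; treat this as a starting checklist rather than a complete trust rollout.

```python
import ssl


def ca_trust_env(ca_bundle: str) -> dict:
    """Environment variables that common clients read to find a CA bundle."""
    return {
        "SSL_CERT_FILE": ca_bundle,        # OpenSSL defaults, including Python's ssl module
        "REQUESTS_CA_BUNDLE": ca_bundle,   # Python requests library
        "AWS_CA_BUNDLE": ca_bundle,        # AWS CLI and SDKs
        "NODE_EXTRA_CA_CERTS": ca_bundle,  # Node.js; added to its built-in roots
    }


def client_context(ca_bundle: str) -> ssl.SSLContext:
    """TLS context that trusts only the certificates in ca_bundle.

    Passing cafile means the system default roots are not loaded, so the
    bundle must contain every root the client still needs.
    """
    return ssl.create_default_context(cafile=ca_bundle)


# Hypothetical path, for illustration only.
env = ca_trust_env("/etc/pki/enterprise-bundle.pem")
for name, value in env.items():
    print(f"export {name}={value}")
```

The first three variables replace the default bundle rather than extend it, so the file they point to generally needs the public roots as well as the enterprise CA if any destination is reached without interception.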

Technical constraints and preview limits

During the public preview the service has explicit limitations that organizations must account for:

- Region availability: the preview is limited to a single region (a US East region at launch).

- Per-account limits: for example, one proxy per account during the preview; proxy configurations and rule group counts are capped (tens to hundreds depending on the item). These limits are intentionally conservative during preview.

- Protocol support: the proxy preview supports HTTP/1.1 only. HTTP/2 and HTTP/3 traffic will not be proxied; such connections may be dropped or time out when routed through the proxy. Applications that default to HTTP/2 will require configuration or an intermediary layer to speak HTTP/1.1.

- Addressing: preview behavior is IPv4-focused; cross-region and IPv6 scenarios require additional configuration or may be unsupported in preview.

- Explicit proxy requirement: applications must be proxy-aware. There is no transparent interception mode for arbitrary client traffic in this offering.
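For the HTTP/1.1 constraint, one client-side option is to stop offering HTTP/2 during the TLS handshake. The sketch below does this with Python's standard `ssl` module by advertising only `http/1.1` via ALPN. Whether this is sufficient depends on the client library; it is shown as one possible approach, not as guidance from AWS.

```python
import ssl


def http11_only_context() -> ssl.SSLContext:
    """TLS client context that advertises only HTTP/1.1 via ALPN."""
    ctx = ssl.create_default_context()
    # Without "h2" in the ALPN list, a server cannot select HTTP/2 on this connection.
    ctx.set_alpn_protocols(["http/1.1"])
    return ctx


ctx = http11_only_context()
print(type(ctx).__name__)
```

Python's built-in `http.client` speaks only HTTP/1.1 already; the context matters for libraries that can negotiate HTTP/2. For command-line checks, curl's `--http1.1` flag has a similar effect.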

Why a managed proxy is attractive

- Operational cost reduction: no more managing, patching, and scaling Squid or other proxy fleets on EC2/containers. Patching is handled by the provider.

- Centralized policy management: rule groups and proxy configurations let security teams create sharable, prioritized rule sets.

- Simplified scaling: by detaching the proxy from a customer-managed fleet and integrating it with a NAT Gateway and PrivateLink endpoints, AWS takes on scaling and availability responsibilities.

- Visibility and logging: the service supports comprehensive logging pipelines and can push logs to standard telemetry sinks for auditing and detection workflows.

Key trade-offs and risks

Privacy, legal and compliance considerations

- TLS interception means access to plaintext data. That capability is powerful for DLP and malware scanning but also elevates insider risk and regulatory scrutiny. Organizations must document who can access decrypted payloads, where logs are stored, and how data retention and redaction are handled.

- Trust management: distributing the proxy CA to endpoints (especially across mixed OS fleets, containers, serverless functions) is a governance burden. Misconfiguration risks client trust issues or exposure if the CA is compromised.

Single point and centralization risks

- Centralizing egress through a single proxy endpoint reduces administrative overhead but concentrates risk. During preview there is a single-proxy-per-account constraint, which for production would be a severe availability and blast-radius concern unless the provider explicitly designs HA/failover at scale.

- In centralized topologies that use Transit Gateway or Cloud WAN, additional routing complexity and potential NAT hairpinning semantics must be considered.

Bypass and network design pitfalls

- The proxy is endpoint-accessed — traffic must reach the proxy endpoint to be inspected. If clients have a route to the NAT Gateway that bypasses the endpoint, proxy policies will not apply.

- Security groups, route tables, and NACLs must be carefully configured to prevent inadvertent bypasses. Implicit trust in the network can create stealth bypass paths.
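A simple audit can surface candidate bypass paths. The sketch below scans route table data shaped like the EC2 `DescribeRouteTables` response (`Routes`, `Associations`, `NatGatewayId`) and lists subnets whose default route points straight at a NAT Gateway. The sample data and IDs are invented, and the function works on plain dictionaries so it can run without AWS credentials; whether a flagged subnet is actually a problem depends on the topology.

```python
def subnets_with_direct_nat_route(route_tables: list) -> list:
    """Return subnet IDs whose route table sends 0.0.0.0/0 to a NAT Gateway."""
    flagged = []
    for table in route_tables:
        has_nat_default = any(
            route.get("DestinationCidrBlock") == "0.0.0.0/0" and route.get("NatGatewayId")
            for route in table.get("Routes", [])
        )
        if has_nat_default:
            flagged.extend(
                assoc["SubnetId"]
                for assoc in table.get("Associations", [])
                if "SubnetId" in assoc
            )
    return sorted(flagged)


# Invented sample data in the shape of a DescribeRouteTables response.
sample = [
    {
        "RouteTableId": "rtb-aaa",
        "Routes": [
            {"DestinationCidrBlock": "10.0.0.0/16", "GatewayId": "local"},
            {"DestinationCidrBlock": "0.0.0.0/0", "NatGatewayId": "nat-111"},
        ],
        "Associations": [{"SubnetId": "subnet-app-1"}, {"SubnetId": "subnet-app-2"}],
    },
    {
        "RouteTableId": "rtb-bbb",
        "Routes": [{"DestinationCidrBlock": "10.0.0.0/16", "GatewayId": "local"}],
        "Associations": [{"SubnetId": "subnet-db-1"}],
    },
]

print(subnets_with_direct_nat_route(sample))
```

Subnets that appear in the output can send traffic to the internet without touching the proxy endpoint, so they are the ones to review first.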

Protocol and application compatibility

- Not supporting HTTP/2 or HTTP/3 in preview can cause silent failures for modern clients or API calls that prefer H2/H3. Testing and application-compatibility checks are essential.

- Some cloud-managed services or internal platform components may rely on client IP preservation; when the proxy NATs traffic, the original client IP may be lost. Policies that rely on original client IPs must be adjusted.

How this compares to self-hosted proxies and gateway firewalls

- Self-hosted proxies (e.g., Squid on EC2, containerized proxies) give you total control over TLS handling, logging, and certificate management but require engineering effort to patch, scale, and maintain.

- Gateway-mode firewalls (traditional next-gen firewalls attached to routed paths) protect arbitrary TCP/UDP traffic and can be transparent. The managed proxy is specialized: it is intentionally focused on HTTP/HTTPS and designed to complement, not replace, broader gateway firewalls for non-HTTP protocols.

- Operationally, moving from self-hosted fleets to a managed proxy:

  - Reduces patching and scaling burden.

  - Moves trust and capability decisions to a provider-controlled service boundary (requires governance).

  - Can simplify centralization but introduces dependency on provider design choices (protocol support, feature roadmap).

Practical migration and implementation guidance

- Inventory HTTP-based clients and services and verify whether they default to HTTP/1.1 or HTTP/2/3. If they prefer H2/H3, plan to reconfigure or add an intermediary translation layer.

- Decide on distributed vs centralized deployment:

  - Distributed works for strict per-VPC isolation requirements.

  - Centralized reduces management overhead but needs strong HA and routing design.

- Test TLS interception policy in a controlled environment:

  - Create a test subordinate CA and install its root on representative clients.

  - Validate application behavior (certificate pinning, custom trust anchors, SDKs).

- Harden endpoint reachability:

  - Lock down security groups and route tables to prevent direct NAT Gateway egress bypass.

  - Ensure endpoint Private DNS configuration is in place for each client VPC.

- Validate logging and telemetry:

  - Configure log sinks to central SIEM or monitoring stack.

  - Review log retention and encryption policies for compliance.

- Prepare a rollback plan:

  - Because the proxy is explicit, clients that cannot reach it should have a fallback route or a documented mitigation to restore outbound connectivity quickly.
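Part of the reachability step can be automated with a small pre-flight check run from each client VPC: resolve the proxy's DNS name and confirm that every answer is a private address, which is what a correctly configured Private DNS setup would be expected to return. The sketch below uses only the Python standard library; the addresses in the example calls are invented, and `resolve` is included for completeness but not called because the hostname is deployment-specific.

```python
import ipaddress
import socket


def resolve(host: str, port: int) -> list:
    """Resolve host to a sorted list of unique IP address strings."""
    infos = socket.getaddrinfo(host, port, type=socket.SOCK_STREAM)
    return sorted({info[4][0] for info in infos})


def all_private(addresses: list) -> bool:
    """True only if there is at least one address and all are private."""
    return bool(addresses) and all(
        ipaddress.ip_address(addr).is_private for addr in addresses
    )


# Invented addresses standing in for DNS answers.
print(all_private(["10.0.1.25", "10.0.2.25"]))
print(all_private(["10.0.1.25", "8.8.8.8"]))
```

A mixed or public result suggests the client is not resolving the endpoint through Private DNS and is worth investigating before rollout.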

Observability and auditing

The managed proxy offers logging to enterprise telemetry sinks and the ability to alert on denied flows or suspicious content. Teams should:

- Treat logs as sensitive: decrypted payloads, headers and URIs may contain PII or credentials.

- Apply filtering, redaction or tokenization as part of the log pipeline before long-term storage.

- Correlate proxy logs with host telemetry and DNS logs for robust incident detection.
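A redaction step in the log pipeline might look like the following sketch. The record layout (`url` and `headers` keys) and the lists of sensitive names are assumptions for illustration; the service's actual log schema is not described in the source and should be checked before adapting this.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed lists; extend to match the credentials your applications actually send.
SENSITIVE_HEADERS = {"authorization", "proxy-authorization", "cookie", "set-cookie"}
SENSITIVE_PARAMS = {"token", "access_token", "api_key", "password", "signature"}


def redact_url(url: str) -> str:
    """Replace the values of sensitive query parameters with REDACTED."""
    parts = urlsplit(url)
    query = [
        (key, "REDACTED" if key.lower() in SENSITIVE_PARAMS else value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
    ]
    return urlunsplit(parts._replace(query=urlencode(query)))


def redact_record(record: dict) -> dict:
    """Return a copy of a hypothetical log record with secrets masked."""
    clean = dict(record)
    if "url" in clean:
        clean["url"] = redact_url(clean["url"])
    clean["headers"] = {
        name: ("REDACTED" if name.lower() in SENSITIVE_HEADERS else value)
        for name, value in record.get("headers", {}).items()
    }
    return clean


record = {
    "url": "https://api.example.com/v1/items?id=7&token=abc123",
    "headers": {"Authorization": "Bearer abc123", "Accept": "application/json"},
}
print(redact_record(record))
```

Running redaction before long-term storage keeps the raw values out of the SIEM while preserving enough structure (hosts, paths, parameter names) for detection and correlation.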

Where the service is most useful today

- Organizations that want to stop managing proxy fleets but still require granular egress controls for web traffic.

- Security teams focused on egress policy enforcement, DLP and app-layer threat detection for HTTP(S).

- Environments with mature client management and the ability to roll out enterprise CA trust to all endpoints.

- Cloud environments where centralized egress via Transit Gateway or Cloud WAN is already in use and can be augmented with per-VPC PrivateLink endpoints.

Where the service is not a fit (yet)

- Environments requiring transparent inspection of arbitrary non-HTTP traffic (DNS, custom TCP, UDP-based protocols).

- Scenarios where strict client IP preservation is mandatory for policy decisions.

- Workloads that rely heavily on HTTP/2 or HTTP/3 and cannot be migrated to HTTP/1.1 or a compatible intermediary.

- Organizations that cannot roll out enterprise CA trust to their clients or have numerous unmanaged endpoints (e.g., BYOD without MDM coverage).

Cost and availability considerations

- During the preview the proxy is offered free for testing. There is no firm published GA pricing or global availability timetable at preview launch.

- Preview limits (single proxy per account, rule group and configuration quotas) are intentionally conservative; production-grade scale and HA behavior will only be clear post-GA.

- For planning purposes, assume that pricing and quotas will change at GA, and build proof-of-concept tests that measure performance and throughput for your traffic profile rather than extrapolating preview experience to future scale claims.

Security checklist before enabling TLS inspection

- Confirm legal/compliance acceptability for decrypting and logging traffic; update privacy notices and internal policies accordingly.

- Deploy an internal process for certificate lifecycle management (issue, rotate, revoke subordinate CAs).

- Ensure appropriate role-based access controls around who can view decrypted traffic.

- Implement log redaction and retention policies to meet regulatory requirements.

- Validate that certificate pinning patterns (in mobile apps, some clients) are managed or exceptions are documented.

Final assessment: practical, not revolutionary

The managed Network Firewall proxy is a practical engineering move that takes a widely repeated operational burden, managing proxy fleets, and turns it into a cloud-native capability focused on policy. For many enterprises the appeal will be immediate: consistent policy management, central rule sets, and elimination of a patching/scale problem.

However, the offering is deliberately specialized rather than universal. It targets HTTP/HTTPS egress policy and inspection, leaving broader non-HTTP protections and transparent gateway inspection to other solutions. The preview-phase limits (single-proxy-per-account, HTTP/1.1-only, IPv4 emphasis) and the operational responsibilities that come with TLS interception (certificate distribution, compliance, logging) mean teams must plan carefully.

For security and platform engineering teams, the practical approach is clear:

- Use the preview to validate end-to-end compatibility (especially TLS behavior and protocol versions).

- Test architectures that combine the managed proxy for HTTP with existing gateway-mode firewalls for non‑HTTP traffic.

- Build governance and auditing procedures for any decrypted traffic and certificate management.

- Avoid assuming preview scale or pricing characteristics will hold at GA.
