AI cost optimization is no longer a niche FinOps concern; it is becoming a core business discipline for enterprises that want to scale Azure AI without letting spend outrun value. Microsoft’s new Cloud Cost Optimization blog frames the challenge clearly: AI adoption is accelerating, workloads are consumption-based, and leaders now need a more rigorous way to connect every dollar of AI spend to measurable business outcomes. That shift matters because the winning organizations will not be the ones that simply deploy the most models, but the ones that build a durable operating model for ROI, governance, and efficiency.

Background

For years, cloud cost conversations centered on familiar ideas: rightsize virtual machines, reduce idle resources, and pick the best pricing model for steady-state workloads. AI changes that picture in a profound way because model training, inference, experimentation, and retrieval pipelines behave differently from traditional application hosting. Microsoft’s Azure cost-optimization materials now explicitly tie cloud savings to AI investments, signaling that the company sees AI economics as part of the broader cloud optimization story rather than a separate niche.

That distinction is important because AI workloads tend to be iterative, variable, and highly sensitive to architectural choices. A team can run several model variants, tweak prompts, expand context windows, or change deployment modes, and each of those decisions affects the bill in ways that are not always obvious upfront. In Microsoft’s own framing, the answer is not simply “spend less,” but “spend more efficiently in pursuit of measurable business outcomes.”

The blog post also arrives at a moment when Microsoft is pushing a broader message around sustainable AI adoption. The company has been connecting Azure AI, Cost Management, Advisor, and its solution center content into one narrative: AI needs operational guardrails if it is going to become a repeatable business capability. That is a notable evolution from the early AI-era conversation, which often focused mostly on capability, model quality, and novelty.

There is a competitive subtext here as well. Cloud providers increasingly market AI not just as a performance story, but as a value story, and that means cost visibility is now a product differentiator. Microsoft’s positioning suggests it wants organizations to think of Azure as a place where AI can be deployed, measured, governed, and optimized within the same ecosystem, reducing the need to stitch together separate tools and control planes.

Why the timing matters

The timing is especially relevant because many organizations are moving from AI pilots to production deployments. That transition usually exposes hidden costs: more traffic, more tokens, more retraining, more logging, more data movement, and more governance overhead. Microsoft’s emphasis on lifecycle-based ROI reflects the reality that the real cost curve often shows up after the demo succeeds.

- AI value is increasingly measured in production, not in proofs of concept.

- Consumption pricing makes cost behavior more dynamic than in traditional infrastructure.

- Governance and measurement now need to be built into the AI operating model.

- The market is shifting from “Can we do this?” to “Can we do this sustainably?”

- Cloud cost management is becoming a board-level issue, not just an engineering concern.

What Microsoft Is Really Saying

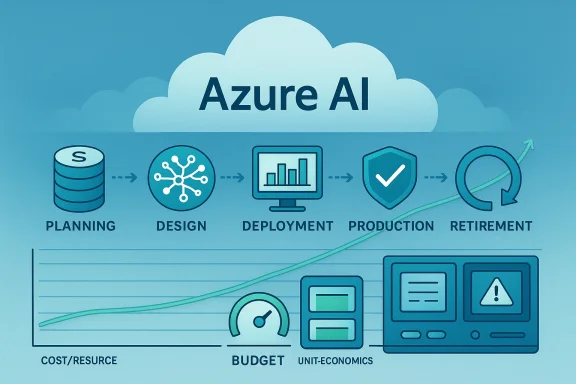

The core message of the blog is that ROI from AI should be managed as a lifecycle discipline. Microsoft argues that AI cost management begins before deployment, continues during design, and remains active throughout production and retirement. That is a sensible view because the biggest budget mistakes are often made early, when teams choose architectures that are easy to prototype but expensive to scale.

In practical terms, the blog encourages organizations to evaluate AI use cases by business value, not by technical novelty alone. That means asking whether a workload improves productivity, boosts customer satisfaction, increases revenue, or reduces operational friction. It also means understanding that AI cost optimization is not a standalone finance exercise; it is a management framework for deciding which AI initiatives deserve more investment and which should be reworked.

The shift from cost cutting to value creation

This is the most important strategic nuance in the article. Microsoft is not saying that low cost is the goal; it is saying that efficient spend is the goal, and efficiency has to be judged against business outcomes. That is a much more mature position because some of the most valuable AI workloads are intentionally expensive if they deliver meaningful revenue or productivity gains.

A narrow cost-cutting mindset can backfire by discouraging experimentation or forcing teams to choose smaller, less capable models too early. In AI, the cheapest option is not always the best option, especially when latency, answer quality, or user trust are on the line. Microsoft’s framing acknowledges that over-optimization can slow innovation as much as under-governance can inflate spend.

- AI spend should be compared against business outcomes, not just other IT costs.

- Early-stage experimentation should be governed differently from production.

- Efficiency matters more than raw thrift when AI drives revenue or productivity.

- Architectural decisions can create long-term cost advantages or liabilities.

- Teams need to optimize for sustainable value, not just a lower invoice.

How AI Costs Differ from Traditional Cloud Costs

Traditional cloud optimization often works well when demand is relatively stable and resource usage is predictable. AI workloads break that assumption because they can spike unpredictably during development, testing, and production inference. Microsoft’s blog calls out variable usage patterns, specialized infrastructure, and multi-team lifecycle complexity as the main drivers of AI spend.

The economic model also changes. A conventional application might be sized around CPU, memory, and storage, but an AI application can depend on model choice, prompt length, token throughput, vector search, retraining cadence, and service tier. That creates a cost profile that is not just larger, but qualitatively more complex.

Why predictability is harder in AI

AI development tends to be exploratory by design. Teams test prompts, tune system instructions, compare model families, and run repeated evaluations before they ever reach production. That exploratory behavior is healthy, but it also means finance teams cannot rely on the same forecasting patterns they may have used for ordinary web apps or databases.

Once production begins, the workload can scale faster than expected if adoption is successful. In customer-facing scenarios, every incremental interaction can drive inference cost, and the total bill depends heavily on traffic volume, response length, and how often the model is called. That makes observability and unit-cost measurement essential, not optional.

- AI costs vary by development, validation, and production phases.

- Model behavior can be influenced by prompt design and token usage.

- Training and inference may have very different cost structures.

- Specialized infrastructure can increase baseline spend.

- Visibility into usage is critical for forecasting and accountability.
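To make the dependence on traffic volume, prompt length, and response length concrete, here is a minimal Python sketch of a token-based monthly cost estimate. The per-token prices, the `TokenPricing` class, and the `monthly_inference_cost` function are illustrative assumptions, not actual Azure rates or APIs; real prices vary by model, region, and deployment type.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPricing:
    """Hypothetical USD prices per 1,000 tokens (assumed, not real Azure rates)."""
    input_per_1k: float
    output_per_1k: float


def monthly_inference_cost(
    requests_per_day: int,
    avg_input_tokens: int,
    avg_output_tokens: int,
    pricing: TokenPricing,
    days: int = 30,
) -> float:
    """Estimate monthly spend from traffic volume and average token counts."""
    cost_per_request = (
        (avg_input_tokens / 1000) * pricing.input_per_1k
        + (avg_output_tokens / 1000) * pricing.output_per_1k
    )
    return requests_per_day * days * cost_per_request


# Assumed example prices, chosen only to keep the arithmetic easy to follow.
pricing = TokenPricing(input_per_1k=0.002, output_per_1k=0.006)

# Same traffic, but the second scenario doubles the prompt (input) length.
baseline = monthly_inference_cost(10_000, 1_000, 200, pricing)
longer_prompt = monthly_inference_cost(10_000, 2_000, 200, pricing)

print(f"baseline:      ${baseline:,.2f} per month")
print(f"longer prompt: ${longer_prompt:,.2f} per month")
```

Under these assumed numbers, doubling the prompt length raises the estimate by well over half even though traffic is unchanged, which is the kind of sensitivity a forecast needs to surface early.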

Planning for ROI Before the First Deployment

One of Microsoft’s most practical messages is that ROI should be planned from the start. That sounds obvious, but in many organizations, AI is still treated as a lab project first and a business program later. The result is that teams build impressive demos with no clear mechanism for knowing whether the workload is actually creating value.

A stronger approach is to define use cases with clear value hypotheses. For example, a customer service copilot should be tied to deflection rates, handle-time reduction, or satisfaction improvements; an internal knowledge assistant should be tied to time saved per employee; and an AI analytics workflow should be tied to faster decisions or lower manual effort. Without that linkage, cost management becomes detached from the actual reason the project exists.

A practical planning framework

A good planning process should identify the expected outcome, the cost drivers, the usage assumptions, and the success metrics before any production spend starts. That makes it easier to compare opportunities and avoid overcommitting to workloads that are interesting but not material. It also creates a common language for business, finance, and engineering teams.

A useful way to think about this is to ask whether the AI use case is a must-win, a nice-to-have, or merely an experiment. Must-win workloads deserve deeper investment and stronger governance because their value case is clearer. Experimental workloads still matter, but they should be capped, reviewed, and designed to learn efficiently rather than scale prematurely.

- Define the business outcome first.

- Estimate the workload’s likely usage pattern.

- Map the main cost drivers, including compute and data.

- Choose success metrics that matter to the business.

- Set review points before production scale-up.

- Planning reduces the risk of expensive dead ends.

- Clear hypotheses make ROI easier to prove.

- Cost assumptions should be tied to real adoption scenarios.

- Business stakeholders need a voice before architecture is locked in.

- Experimentation should be budgeted, not left open-ended.

Designing AI Solutions for Efficiency

Microsoft is also pushing an architectural message: cost efficiency is not something you bolt on later. The design stage largely determines how much AI will cost over time, because which models you choose, how you deploy them, and how frequently you call them all matter. In other words, architecture is economics.

That is particularly true in Azure AI scenarios where deployment choices can alter latency, throughput, and spend. The company’s Azure OpenAI materials emphasize multiple deployment types and pricing models, which means teams need to match technical requirements to the right economic model rather than assuming one size fits all. This is where well-designed systems create lasting savings without sacrificing performance.

Architectural choices that shape the bill

Model selection is one of the biggest levers. Larger or more capable models may provide better responses, but they also typically cost more to run, especially if they are used too broadly for low-value tasks. Teams should reserve premium models for premium use cases and use smaller or more specialized models where appropriate.

Deployment strategy matters too. If a workload requires consistent high throughput and low latency, the wrong deployment mode can cause either performance issues or unnecessary spend. A smart architecture balances response quality, reliability, and cost rather than treating them as separate optimization problems.

- Match model capability to business importance.

- Use the smallest effective model for the task.

- Design for predictable scaling where possible.

- Avoid unnecessary data movement and redundant calls.

- Consider latency and throughput as cost factors, not just technical metrics.
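The idea of using the smallest effective model can be sketched as a simple selection rule. The Python below is a hypothetical illustration: the model names, the three-level capability scale, and the prices are all assumptions made for the example, and a real system would base the capability requirement on evaluation results rather than a hand-assigned tier.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str                  # hypothetical label, not a real deployment name
    capability_tier: int       # assumed scale: 1 = basic, 3 = most capable
    cost_per_1k_tokens: float  # hypothetical blended USD price


def cheapest_adequate_model(options: list[ModelOption], required_tier: int) -> ModelOption:
    """Return the lowest-cost model whose capability meets the task requirement."""
    adequate = [m for m in options if m.capability_tier >= required_tier]
    if not adequate:
        raise ValueError(f"no model satisfies capability tier {required_tier}")
    return min(adequate, key=lambda m: m.cost_per_1k_tokens)


# Illustrative catalog with assumed prices.
CATALOG = [
    ModelOption("small", capability_tier=1, cost_per_1k_tokens=0.0005),
    ModelOption("medium", capability_tier=2, cost_per_1k_tokens=0.003),
    ModelOption("large", capability_tier=3, cost_per_1k_tokens=0.03),
]

# A routine FAQ lookup needs only basic capability; a contract review needs the top tier.
faq_model = cheapest_adequate_model(CATALOG, required_tier=1)
contract_model = cheapest_adequate_model(CATALOG, required_tier=3)

print(faq_model.name, contract_model.name)
```

The rule encodes the tradeoff described above: capability is treated as a constraint to satisfy, and cost is what gets minimized once that constraint is met.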

The hidden costs of iteration

AI systems often incur costs beyond the obvious model calls. Logging, retrieval infrastructure, data preparation, experimentation, evaluation pipelines, and governance tooling can all contribute to the total spend. If those costs are not tracked as part of the solution design, teams can underestimate the true economics of the program.

This is why the architecture conversation needs to involve more than developers. Product owners, finance partners, and platform teams all have a role in deciding which tradeoffs are acceptable. In mature organizations, this becomes a design review culture where cost efficiency is considered alongside security, reliability, and performance.

Operating and Optimizing AI in Production

Once AI is live, the cost story changes again. Production workloads generate real usage data, which means organizations finally have the raw material needed for meaningful optimization. Microsoft’s advice is to monitor usage, evaluate performance, and continuously adjust resources so the system stays aligned with business demand.

This is where many teams fail, because they treat go-live as the finish line. In reality, the biggest opportunity to improve ROI often begins after deployment, when actual patterns replace assumptions. That is also the point where governance must become routine rather than exceptional.

Continuous optimization is a discipline

A mature production model should review consumption regularly, compare actual usage against forecasts, and identify waste or underused capacity. It should also watch for changes in traffic patterns, feature adoption, and workload behavior that might call for different model choices or service tiers. The best organizations manage AI like a living portfolio, not a static asset.

Microsoft’s broader cloud-cost resources reinforce this point with tools such as Cost Management, Advisor, and pricing guidance. That ecosystem matters because AI optimization is not just about one service; it is about creating visibility across the broader application stack that supports the AI experience. The more complex the stack, the more important continuous measurement becomes.

- Track actual usage against original assumptions.

- Watch for expensive features that deliver low value.

- Revisit deployment modes as adoption grows.

- Use governance to prevent drift and sprawl.

- Treat optimization as ongoing operations, not a one-time project.
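Tracking actual usage against original assumptions can start as a very small check. The following Python sketch flags workloads whose spend drifts from forecast by more than a chosen tolerance. The workload names, the dollar figures, and the 20% threshold are assumptions for illustration; in practice the inputs would come from a cost export rather than hard-coded dictionaries.

```python
def flag_forecast_variance(
    forecast: dict[str, float],
    actual: dict[str, float],
    tolerance: float = 0.20,
) -> dict[str, float]:
    """Return relative variance for workloads outside the tolerance band.

    Positive values mean overspend; negative values mean spend came in
    under forecast, which can indicate underused capacity or low adoption.
    """
    flagged = {}
    for workload, planned in forecast.items():
        if planned <= 0:
            # No meaningful baseline to compare against; skipped in this sketch.
            continue
        spent = actual.get(workload, 0.0)
        variance = (spent - planned) / planned
        if abs(variance) > tolerance:
            flagged[workload] = round(variance, 2)
    return flagged


# Hypothetical monthly figures in USD.
forecast = {"support-copilot": 1000.0, "knowledge-assistant": 500.0, "analytics": 800.0}
actual = {"support-copilot": 1500.0, "knowledge-assistant": 520.0, "analytics": 400.0}

flagged = flag_forecast_variance(forecast, actual)
print(flagged)
```

Flagging both directions is deliberate: overspend calls for a cost review, while a large shortfall may mean the forecasted adoption, and therefore the forecasted value, has not materialized.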

FinOps meets AI operations

The article implicitly aligns AI management with FinOps thinking, even though it uses more business-friendly language. That is significant because AI programs need the same cross-functional discipline that cloud spending has required for years: accountability, transparency, and continuous improvement. What changes is the unit of analysis, which now includes tokens, model calls, and business outcomes rather than just VM hours or storage capacity.

For enterprises, that means the finance team can no longer operate on the sidelines. They need to partner with product and engineering teams to understand how AI spend maps to business value. For consumers of AI inside the enterprise, the payoff is better user experiences delivered with less waste, rather than blunt quotas that slow everything down.

Microsoft’s Platform Strategy

Microsoft’s broader platform pitch is that it can help organizations build, deploy, and govern AI in one ecosystem. That matters because tool sprawl is expensive: the more separate systems a company uses to manage models, spend, policy, and observability, the harder it becomes to maintain both performance and cost control. Microsoft positions Azure as an environment where those pieces can be managed together.

The company also appears to be leaning into a solution hub approach. The Maximize ROI from AI page aggregates guidance, optimization best practices, and value-measurement resources, which suggests Microsoft wants customers to see cost optimization as an ongoing journey rather than a one-off project. That kind of centralization can be valuable for organizations that are still building maturity around AI governance.

Why the ecosystem approach matters

The advantage of an integrated platform is not just convenience. It can reduce integration overhead, simplify governance, and make it easier to connect spend to outcomes. In the AI era, that connection is crucial because the organizations that win will be the ones that can answer a simple question with confidence: what are we getting for what we spend?

At the same time, platform concentration has tradeoffs. A unified approach may be efficient, but it can also create dependence on the provider’s tooling and economics. The best enterprise buyers will appreciate the simplification while still maintaining enough architectural discipline to avoid lock-in by default.

- Integrated tooling can reduce operational friction.

- Centralized guidance helps teams mature faster.

- Unified governance makes ROI easier to measure.

- Platform simplicity can lower coordination costs.

- Enterprises should still preserve architectural flexibility.

Enterprise vs. Consumer Impact

For enterprises, the implications are immediate. AI cost optimization affects procurement, budget planning, architecture reviews, governance boards, and executive reporting. Once AI becomes embedded in customer support, sales enablement, internal knowledge systems, or workflow automation, the cost of ignoring usage trends can compound quickly.

For consumers, the benefits are less visible but still meaningful. Efficient AI operations can translate into lower latency, more reliable products, and fewer interruptions caused by runaway spend or poorly managed scaling. In that sense, cost discipline is not just a finance story; it is a product-quality story.

Where the goals differ

Enterprises care about portfolio-level ROI, unit economics, compliance, and long-term sustainability. Consumer-facing teams care about speed, trust, responsiveness, and user satisfaction. Both groups need cost optimization, but they apply it differently: enterprises ask whether a program should scale, while product teams ask how to preserve experience quality while scaling efficiently.

That distinction is useful because it prevents a simplistic “lower cost at all costs” mentality. In a consumer product, a slightly more expensive workflow may still be the right choice if it materially improves experience quality. In an enterprise workflow, a cheaper option may be unacceptable if it introduces risk or reduces accuracy in a mission-critical process.

- Enterprises evaluate AI as a portfolio investment.

- Consumer teams evaluate AI as a product experience.

- Cost control must be balanced with quality and trust.

- Different workloads justify different optimization thresholds.

- Governance needs to reflect the value of the use case.

Competitive Implications for Cloud Buyers

Microsoft’s messaging reflects a broader battle among cloud vendors to own the economics of AI. If AI becomes the center of enterprise digital transformation, then the cloud provider that makes cost understandable and manageable will have a meaningful advantage. In that respect, the Azure blog is not just educational content; it is also part of a platform strategy.

The competitive angle is especially visible in the way Microsoft emphasizes choice, governance, and visibility. Rather than framing AI as a black-box service, the company is trying to show that customers can control deployment types, analyze spend, and apply optimization guidance within the same environment. That is a persuasive message for buyers who are worried that AI could become an open-ended cost center.

What rivals must answer

Other cloud providers will need to answer the same question: how do they help customers scale AI without creating financial surprises? The winning answer will likely combine observability, pricing flexibility, governance tooling, and clear ROI measurement. If a provider cannot show that, enterprise customers will be less willing to expand spend aggressively.

Microsoft’s emphasis on sustainable value also frames the competitive conversation in a favorable way. It suggests that the real differentiator is not who has the flashiest demo, but who can help customers turn AI into a managed business capability. That is a subtle but important shift in the cloud market.

- Cloud vendors now compete on AI economics, not just model access.

- Visibility and governance are becoming buying criteria.

- Flexible deployment options are a market differentiator.

- ROI tooling can influence enterprise platform selection.

- The provider that simplifies AI cost control gains leverage.

Strengths and Opportunities

Microsoft’s approach has several strengths. It reframes AI cost management as a business strategy, not a technical burden, and that will resonate with executives who need a clearer line of sight from investment to outcome. It also gives organizations a practical reason to formalize governance early, which is where many AI programs are most vulnerable to waste.

The biggest opportunity is that this thinking can help organizations move from scattered pilots to managed AI portfolios. If implemented well, the same discipline can improve forecasting, sharpen prioritization, and create stronger alignment between business leaders and engineering teams.

- Lifecycle thinking improves cost discipline from planning through production.

- Value-based ROI encourages better prioritization of AI use cases.

- Integrated governance can reduce tool sprawl and operational friction.

- Production visibility helps organizations optimize after launch.

- Cross-functional alignment makes AI spending easier to justify.

- Flexible deployment choices allow teams to match cost to workload needs.

- Centralized guidance lowers the learning curve for newer AI teams.

Risks and Concerns

The biggest risk is that organizations may interpret cost optimization too narrowly and suppress useful experimentation. AI is still an innovation cycle for many teams, and if governance becomes overly restrictive, it can slow learning and create a culture of caution that is out of step with strategic goals. That is why balance is such an important word in this conversation.

Another concern is measurement. ROI in AI is notoriously difficult to quantify cleanly, especially when benefits are indirect, cumulative, or distributed across teams. If organizations do not define good metrics early, they may either underinvest in useful workloads or continue funding projects that look promising but never become materially valuable.

- Over-optimization can choke innovation before it reaches scale.

- Weak metrics can make ROI claims unreliable.

- Hidden infrastructure costs can distort the real economics.

- Vendor concentration may increase platform dependence.

- Forecasting errors are easy when usage patterns are still evolving.

- Governance overhead can become costly if not designed efficiently.

- Experience tradeoffs may emerge if teams optimize for cost alone.

What to Watch Next

The next phase of this conversation will likely focus on tooling, benchmarks, and operational maturity. Microsoft has signaled that the Cloud Cost Optimization series will continue, which suggests more guidance is coming on how to plan, design, and manage AI investments in a repeatable way. The key question is whether organizations can translate that guidance into measurable business practice rather than leaving it as a set of best practices on paper.

Watch for more explicit links between AI spend, Cost Management, and workload-level optimization. Also watch for more emphasis on unit economics, because that is where AI finance is heading: not just total spend, but the cost per conversation, cost per workflow, and cost per business outcome. Those metrics will matter more as AI shifts from novelty to utility.
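As a rough illustration of unit economics, the Python sketch below derives cost per conversation and cost per business outcome from monthly totals. The `MonthlyUsage` fields, the choice of "resolved without an agent" as the outcome, and every figure are hypothetical assumptions; each organization would substitute the outcome its own value hypothesis names.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class MonthlyUsage:
    total_cost_usd: float        # all AI-related spend attributed to the workload
    conversations: int           # total conversations handled
    resolved_without_agent: int  # assumed business outcome for this example


def unit_costs(usage: MonthlyUsage) -> dict[str, Optional[float]]:
    """Compute cost per conversation and cost per business outcome."""
    if usage.conversations <= 0:
        raise ValueError("conversations must be positive")
    per_conversation = usage.total_cost_usd / usage.conversations
    # If no outcomes were achieved, cost per outcome is undefined rather than zero.
    per_outcome = (
        usage.total_cost_usd / usage.resolved_without_agent
        if usage.resolved_without_agent > 0
        else None
    )
    return {"per_conversation": per_conversation, "per_outcome": per_outcome}


# Hypothetical month: $1,200 of spend, 40,000 conversations, 8,000 resolved without an agent.
usage = MonthlyUsage(total_cost_usd=1200.0, conversations=40_000, resolved_without_agent=8_000)
metrics = unit_costs(usage)

print(f"cost per conversation: ${metrics['per_conversation']:.3f}")
print(f"cost per resolved case: ${metrics['per_outcome']:.3f}")
```

The gap between the two numbers is the useful part: a workload can look cheap per conversation and still be expensive per outcome if only a small share of conversations deliver the intended result.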

Key signals to monitor

- Expanded guidance on AI unit economics and cost attribution.

- New optimization patterns for Azure AI and related services.

- Better integration between governance and financial reporting.

- More case studies showing measurable ROI from production AI.

- Greater emphasis on lifecycle management and retirement planning.

AI will keep getting cheaper in some areas and more expensive in others, but the organizations that come out ahead will not be the ones that wait for perfect pricing. They will be the ones that build discipline, visibility, and accountability into the AI lifecycle from the start, so every model, every deployment choice, and every production decision is tied back to real business value.

Source: azure.microsoft.com Cloud Cost Optimization: How to maximize ROI from AI, manage costs, and unlock real business value | Microsoft Azure Blog