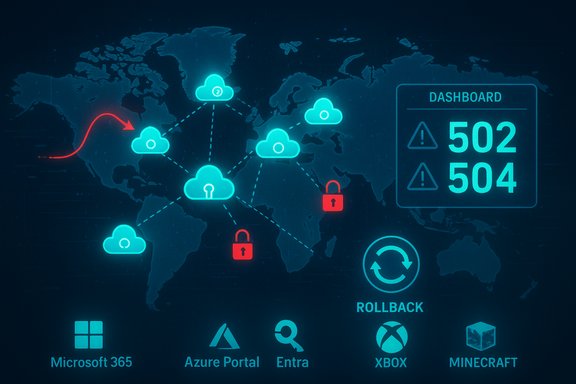

On October 29 a broad Microsoft service disruption left Microsoft 365, Azure management surfaces, Xbox/Minecraft authentication and thousands of Azure‑fronted customer sites offline or intermittently unreliable. Microsoft traced the incident to an inadvertent configuration change in Azure Front Door that propagated through its global edge fabric.

Source: Ayr Advertiser All the sites affected by the Microsoft outage as thousands report issues

Background / Overview

Microsoft’s cloud and consumer stacks depend on a global edge fabric called Azure Front Door (AFD) for TLS termination, Layer‑7 routing, web application firewall enforcement and DNS‑level traffic steering. Because AFD sits in front of many Microsoft first‑party services and thousands of customer workloads, faults in its control plane can present as broad, simultaneous outages across otherwise healthy back ends. On October 29 Microsoft acknowledged the outage and said its investigation pointed to an inadvertent configuration change in the AFD control plane as the proximate trigger. The public signal was fast and visible: outage trackers and social platforms registered tens of thousands of user reports within minutes of the initial failures, and some feeds recorded six‑figure peaks depending on sampling windows. Those public counts are useful indicators of scale and geographic spread but are not telemetry‑level counts of affected tenants; Microsoft’s internal post‑incident accounting will be the authoritative record.

What failed (concise technical summary)

The proximate trigger

Microsoft’s status updates described the trigger as an inadvertent configuration change applied to the Azure Front Door control plane. That change produced inconsistent or incorrect routing and DNS behavior across AFD Points‑of‑Presence (PoPs), which in turn caused widespread DNS, TLS and HTTP gateway failures and token‑exchange timeouts for identity services such as Microsoft Entra (Azure AD). Microsoft halted further AFD configuration rollouts and deployed a rollback to a validated “last known good” configuration as the primary containment action.

Why an AFD configuration error has high blast radius

AFD operates as an edge control plane and data plane, combining responsibilities that include:

- Global HTTP(S) routing and host‑header validation

- TLS termination and certificate mapping

- DNS‑level routing and anycast steering

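- Rate limiting, WAF rules and origin failover

Because these functions are driven by one globally distributed configuration that fronts many otherwise independent services, a single faulty change can break all of them at once, even while the back ends behind the edge remain healthy.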
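A toy model helps show how a single shared configuration couples every hostname an edge fronts, and how a pre‑deployment check could estimate a change's blast radius before it ships. This is a minimal Python sketch under stated assumptions: `EdgeConfig`, `broken_hosts`, `blast_radius`, `validate_change`, the example hostnames and the 1% threshold are all hypothetical illustrations, not Azure Front Door's actual configuration schema or validation logic.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class EdgeConfig:
    """Hypothetical, heavily simplified model of one shared edge configuration."""
    routes: dict[str, str]              # hostname -> origin address
    certificates: dict[str, str]        # hostname -> certificate used for TLS termination
    waf_policy: str | None = "default"  # a single policy shared by every hostname


def broken_hosts(cfg: EdgeConfig) -> set[str]:
    """Return the hostnames that could not be served under `cfg`."""
    broken: set[str] = set()
    for host, origin in cfg.routes.items():
        if not origin:                      # no origin to forward to
            broken.add(host)
        elif host not in cfg.certificates:  # TLS cannot be terminated
            broken.add(host)
        elif cfg.waf_policy is None:        # shared field: breaks every host at once
            broken.add(host)
    return broken


def blast_radius(old: EdgeConfig, new: EdgeConfig) -> float:
    """Fraction of hostnames that a proposed change would newly break."""
    newly_broken = broken_hosts(new) - broken_hosts(old)
    return len(newly_broken) / max(len(new.routes), 1)


def validate_change(old: EdgeConfig, new: EdgeConfig, max_radius: float = 0.01) -> None:
    """Reject a change whose estimated blast radius exceeds `max_radius`."""
    radius = blast_radius(old, new)
    if radius > max_radius:
        raise ValueError(f"change would break {radius:.1%} of hostnames; rejected")


# Usage: 1,000 hypothetical hostnames share one configuration.
hosts = [f"site{i}.example.com" for i in range(1000)]
good = EdgeConfig(routes={h: "origin.example.net" for h in hosts},
                  certificates={h: f"cert-{h}" for h in hosts})
one_route_removed = EdgeConfig(routes={**good.routes, hosts[0]: ""},
                               certificates=dict(good.certificates))
shared_field_cleared = EdgeConfig(routes=dict(good.routes),
                                  certificates=dict(good.certificates),
                                  waf_policy=None)

validate_change(good, one_route_removed)         # 0.1% of hostnames: accepted
try:
    validate_change(good, shared_field_cleared)  # 100% of hostnames: rejected
except ValueError as err:
    print(err)
```

The point of the model is the asymmetry: a per‑host mistake touches one hostname, while a mistake in a shared field touches all of them, which is why a blast‑radius gate belongs in front of global configuration pushes.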

Services and sites affected

Reported impacts spanned a remarkably broad set of Microsoft first‑party products and downstream customer properties. The core Microsoft services reported as affected included:

- Microsoft 365 (Outlook on the web, Teams, Microsoft 365 admin center) — admin console and sign‑in failures.

- Azure Portal — blank or partially rendered blades, management UI access problems.

- Microsoft Entra / Azure AD — token issuance and SSO flows degraded, causing sign‑in failures across services.

- Microsoft Copilot and integrated AI features — degraded or inaccessible where front‑door routing was affected.

- Xbox Live / Microsoft Store / Game Pass — authentication, storefront and cloud‑gaming disruptions.

- Minecraft — launcher and Realms authentication and matchmaking issues.

Timeline: detection to mitigation

- Approximately 16:00 UTC (12:00 PM ET) — monitoring systems and external outage trackers began registering elevated packet loss, TLS handshake timeouts and HTTP gateway errors for AFD‑fronted endpoints. Microsoft posted an initial investigation notice.

- Minutes after detection — Downdetector and social platforms spiked with user reports describing sign‑in failures, blank admin portals and 502/504 errors across gaming and web storefronts; public feeds recorded tens of thousands of complaints in short order. These crowdsourced counts are directional but underscore the rapid global reach.

- Immediate containment — Microsoft blocked further Azure Front Door configuration changes to stop propagation and began deploying a rollback to a previously validated configuration. Engineers also failed the Azure Portal away from AFD where possible so administrators could regain management‑plane access. Microsoft advised affected customers to use programmatic methods (PowerShell/CLI) when the portal was unavailable.

- Recovery — the rollback completed and Microsoft started recovering edge nodes and re‑routing traffic through healthy PoPs. The company later reported AFD operating above 98% availability as rebalancing progressed; residual, tenant‑specific issues lingered due to DNS TTLs and CDN caches.

- Post‑incident actions announced — Microsoft committed to a post‑incident review and stated it would strengthen safeguards and validation controls to reduce the likelihood of a similar control‑plane regression in the future.
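The containment steps in this timeline (freezing configuration changes, rolling back to a last known good state) and the promised validation controls correspond to a familiar pattern: a staged rollout with a health gate. A minimal Python sketch, assuming PoPs can be grouped into waves and probed with a synthetic health check; `StagedRollout`, the wave layout, the PoP names and the 99% threshold are hypothetical and do not describe Microsoft's actual deployment pipeline.

```python
from __future__ import annotations

from typing import Callable


class StagedRollout:
    """Toy staged rollout: push a config wave by wave, halt and roll back on failure.

    Wave layout, health threshold and callbacks are hypothetical placeholders.
    """

    def __init__(self, waves: list[list[str]], apply: Callable[[str, dict], None],
                 healthy: Callable[[str], bool], last_known_good: dict,
                 min_healthy: float = 0.99) -> None:
        self.waves = waves                  # e.g. [[canary PoPs], [one region], [the rest]]
        self.apply = apply                  # pushes a config to a single PoP
        self.healthy = healthy              # synthetic health probe for a single PoP
        self.last_known_good = last_known_good
        self.min_healthy = min_healthy
        self.frozen = False                 # set on failure; an operator clears it

    def deploy(self, new_config: dict) -> bool:
        """Return True if every wave passed its health gate, False if rolled back."""
        if self.frozen:
            raise RuntimeError("configuration changes are frozen")
        touched: list[str] = []
        for wave in self.waves:
            for pop in wave:
                self.apply(pop, new_config)
                touched.append(pop)
            passing = sum(self.healthy(pop) for pop in wave) / len(wave)
            if passing < self.min_healthy:
                self.frozen = True          # stop further propagation
                for pop in touched:         # restore every PoP changed so far
                    self.apply(pop, self.last_known_good)
                return False
        self.last_known_good = new_config   # promote only after all waves pass
        return True


# Usage with an in-memory stand-in for edge PoPs (all names are hypothetical).
state: dict[str, dict] = {}
waves = [["pop-canary"], ["pop-eu-1", "pop-eu-2"], ["pop-us-1", "pop-us-2"]]
v1 = {"version": 1, "valid": True}
for pop in (p for wave in waves for p in wave):
    state[pop] = v1


def push_config(pop: str, cfg: dict) -> None:
    state[pop] = cfg


def probe(pop: str) -> bool:
    return bool(state[pop].get("valid", False))


rollout = StagedRollout(waves, apply=push_config, healthy=probe, last_known_good=v1)
bad = {"version": 2, "valid": False}
print(rollout.deploy(bad))                                  # False: canary failed its gate
print({pop: cfg["version"] for pop, cfg in state.items()})  # every PoP back on version 1
```

In the usage example the canary fails its probe, so the bad configuration never reaches the later waves, the canary is restored to version 1, and the freeze flag rejects further deployments until an operator clears it.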

Why recovery can appear slow even after a rollback

Two distributed‑system realities prolong apparent outages after a root cause is corrected:

- DNS and CDN caching: DNS TTLs, ISP caches and CDN edge caches can direct client requests to previously misconfigured PoPs for minutes or hours after the fix is applied. That creates a visible “tail” of failures despite the control plane being corrected.

- Anycast and routing convergence: Anycast routing and BGP convergence mean client paths to nearby PoPs may change slowly as traffic is rebalanced, producing regional variability in observable recovery. This explains why some users and tenants report errors long after the global metrics return to normal.
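The caching tail can be estimated with a simple model. Assuming each cache fetched the bad answer at a uniformly random moment within one TTL before the fix and then honours that TTL exactly, the share of caches still serving the stale answer falls linearly from 1 to 0 over one TTL. The Python functions below are an illustrative sketch of that assumption only; resolvers that clamp or extend TTLs, or CDN layers that add their own cache lifetimes, would lengthen the tail beyond this estimate.

```python
def stale_fraction(t: float, ttl: float) -> float:
    """Share of caches still serving the pre-fix answer t seconds after the fix.

    Model assumption: each cache fetched the bad answer at a uniformly random
    moment during the TTL window before the fix and evicts it exactly when the
    TTL expires, so the stale share falls linearly from 1 to 0 over one TTL.
    """
    if ttl <= 0:
        return 0.0
    return min(1.0, max(0.0, 1.0 - t / ttl))


def seconds_until(target: float, ttl: float) -> float:
    """Seconds after the fix until at most `target` (between 0 and 1) of caches are stale."""
    target = min(1.0, max(0.0, target))
    return ttl * (1.0 - target)


# Compare a short and a long TTL on the same critical record.
for ttl in (300, 3600):
    print(f"TTL {ttl}s: {stale_fraction(60, ttl):.0%} stale after 1 min, "
          f"<=1% stale after {seconds_until(0.01, ttl):.0f}s, clear after {ttl}s")
```

Under this model the worst‑case tail equals one TTL, so cutting a critical record's TTL from an hour to five minutes shrinks it twelvefold; that arithmetic is the rationale behind the shorter‑TTL recommendations in this piece.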

The visible human and business impact

The outage produced immediate, practical disruptions:

- Knowledge workers were unable to access Microsoft 365 admin consoles, Outlook Web, Teams sessions and collaboration tools, interrupting meetings, eroding productivity and delaying time‑sensitive workflows.

- Gamers and consumers experienced sign‑in failures, matchmaking interruptions and inability to access storefronts for digital purchases or Game Pass content. The launch of major titles scheduled for that day was materially affected.

- Retail and hospitality: Mobile ordering, loyalty lookups and storefront checkout flows for major chains showed errors or timed out, impacting revenue and customer experience.

- Transportation and public services: Airlines and airports reported check‑in and boarding pass disruptions; some governmental bodies deferred electronic processes in response to authentication failures. These operational impacts show the second‑order risk when critical citizen services depend on a single cloud provider’s edge fabric.

Strengths in Microsoft’s response — what went right

- Rapid detection and containment playbook: Microsoft quickly identified the suspected change, halted AFD configuration rollouts, and initiated a rollback—standard containment steps for a control‑plane regression. Those actions limited additional propagation of faulty state.

- Failover of management surfaces: Engineers forced the Azure Portal away from the troubled front door where possible, restoring GUI access for many administrators and enabling a faster operational response in some regions.

- Transparent status updates: Microsoft used its status pages and social channels to post rolling updates, and committed to a post‑incident review. While imperfect during the incident, the communication provided a canonical timeline and technical framing that customers could use for triage.

Risks and persistent concerns

While the immediate response followed standard containment playbooks, the outage exposes structural and operational risks that deserve attention.

1. Architectural concentration at the edge

AFD combines CDN, WAF, DNS and routing responsibilities for a very large surface area. That centralization means a single control‑plane misconfiguration can affect many otherwise independent services. Designing for the convenience of a unified edge can therefore increase systemic risk.

2. Insufficient guardrails in the deployment pipeline

Microsoft’s statement that safeguards and validation checks failed to prevent the faulty change points toward a deployment‑pipeline or validation gap. Large distributed systems require automated, multi‑layered validation and staged rollout mechanisms that can detect anomalous propagation early and safely halt rollouts.

3. Operational visibility and admin plane fragility

When the management portals themselves are affected, administrators lose their primary triage tools. This “admin plane fragility” forces reliance on preconfigured programmatic runbooks, out‑of‑band management channels and retained manual processes, capabilities that not all organizations maintain at scale.

4. Business continuity and vendor concentration

Companies whose checkout, billing or critical citizen services route through a single cloud provider’s edge are vulnerable to cascading outages that they cannot control directly. Multi‑vendor or hybrid architectures can reduce single‑point‑of‑failure exposure but carry cost, complexity and engineering tradeoffs.

Practical recommendations for enterprises and IT teams

Enterprises that rely on Azure (or any hyperscaler) should review their resilience posture across these concrete dimensions:

- Design for graceful degradation

  - Avoid placing single, globally critical entry points behind a single managed edge unless compensated by alternative paths.

  - Use multi‑region fronting and independent DNS records with shorter TTLs for critical subdomains.

- Harden the admin plane

  - Maintain programmatic access runbooks (PowerShell, Azure CLI) and service principals that work when the portal is unavailable.

  - Keep a verified out‑of‑band admin contact and escalation chain for cloud provider incidents.

- Test and document failover playbooks

  - Regularly exercise failover and rollback procedures for your own front ends in non‑production environments.

  - Maintain checklists for switching to backup authentication providers or temporary guest‑account flows when SSO is impacted.

- Reduce vendor concentration where feasible

  - Evaluate which customer‑facing subsystems must be cloud‑native and which can be hosted with multi‑cloud or secondary providers for critical flows (payments, check‑in, emergency notifications).

  - Adopt API and abstraction layers so backend provider changes have minimal upstream impact.

- Monitor provider health and automate mitigation

  - Integrate provider status feeds, outage trackers, and synthetic transactions into runbooks to trigger automated mitigation steps (feature toggles, circuit breakers, degraded UX modes).

- Establish a short, hardened incident playbook and practice it quarterly.

- Validate your ability to operate in a portal‑less state.

- Shorten DNS TTLs for critical endpoints where application constraints permit.
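The circuit‑breaker and degraded‑UX recommendations above can be combined into a small client‑side pattern: stop sending traffic to a failing primary path after repeated errors, fall back to an independently fronted secondary path, and serve a degraded response if both fail. This is a minimal Python sketch under assumptions: the `primary` and `secondary` callables stand in for, say, an AFD‑fronted hostname and one fronted by a different provider, and `CircuitBreaker`, `fetch_with_fallback`, the threshold, the cooldown and the `"degraded-mode"` value are hypothetical names and settings.

```python
from __future__ import annotations

import time
from typing import Callable, Optional


class CircuitBreaker:
    """Minimal circuit breaker.

    After `threshold` consecutive failures the breaker opens and the primary
    path is skipped; once `cooldown` seconds pass, one trial request is allowed.
    """

    def __init__(self, threshold: int = 3, cooldown: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.threshold = threshold
        self.cooldown = cooldown
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def allow(self) -> bool:
        if self.opened_at is None:
            return True                                        # closed: use the primary path
        return self.clock() - self.opened_at >= self.cooldown  # half-open after cooldown

    def record(self, ok: bool) -> None:
        if ok:
            self.failures = 0
            self.opened_at = None                  # success closes the breaker
        else:
            self.failures += 1
            if self.failures >= self.threshold:
                self.opened_at = self.clock()      # open, or re-open after a failed trial


def fetch_with_fallback(primary: Callable[[], str], secondary: Callable[[], str],
                        breaker: CircuitBreaker, degraded: str = "degraded-mode") -> str:
    """Try the primary edge path, then a secondary path, then a degraded response."""
    if breaker.allow():
        try:
            result = primary()
        except Exception:
            breaker.record(False)
        else:
            breaker.record(True)
            return result
    try:
        return secondary()
    except Exception:
        return degraded


# Usage with a fake clock so the cooldown can be stepped deterministically.
now = [0.0]
breaker = CircuitBreaker(threshold=3, cooldown=30.0, clock=lambda: now[0])
calls = {"primary": 0}


def primary() -> str:
    calls["primary"] += 1
    raise ConnectionError("edge returned 504")  # simulated front-door failure


def secondary() -> str:
    return "served via secondary front end"


for _ in range(5):
    fetch_with_fallback(primary, secondary, breaker)
print(calls["primary"])  # 3: the breaker opened after the third failure
now[0] = 31.0
fetch_with_fallback(primary, secondary, breaker)
print(calls["primary"])  # 4: one trial request after the cooldown
```

Injecting the clock makes the cooldown testable; outside tests the default `time.monotonic` is used so wall‑clock adjustments cannot open or close the breaker unexpectedly.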

What to watch next (and what remains to be verified)

Microsoft has committed to a Post Incident Review (PIR) and follow‑up remediation. Key items to verify after the PIR is published:

- Whether the inadvertent configuration change was the result of human error, a software defect in the deployment pipeline, a tenant change, or a combination. Microsoft’s public phrasing has been purposefully measured; the deeper causal chain will be in the PIR.

- Exact counts of affected tenants, mail delivery delays and financial or SLA‑related impacts. Public outage trackers provide useful surface measurements but are not a substitute for provider telemetry.

- The concrete engineering and validation controls Microsoft will adopt to avoid a similar regression. These changes will matter to customers designing forward‑looking resilience models.

Broader lessons for cloud ecosystems

The October 29 AFD incident underscores a repeating theme in hyperscaler outages: architectural convenience can create systemic fragility. The same edge fabrics that accelerate and secure traffic also centralize risk when control‑plane validation or deployment processes are imperfect.

Policymakers and enterprise architects should therefore balance the benefits of integrated edge services against concentrated failure modes. For mission‑critical national infrastructure and payment flows, architectures that avoid single‑provider choke points will be more resilient over time.

At the same time, the incident demonstrates that modern cloud operators can detect, roll back and restore complex distributed systems at scale—evidence that mature incident playbooks and global operational teams remain effective buffers against permanent service loss. Microsoft’s rollback and rebalancing restored most services within hours, demonstrating the strength of practiced containment strategies even as the incident raises fresh governance questions.

Conclusion

The October 29 disruption was a stark reminder of how tightly modern digital life is woven into a small set of hyperscale infrastructure providers. An inadvertent configuration change in Azure Front Door cascaded through DNS, TLS and identity flows and briefly denied millions of users access to productivity tools, gaming services and critical customer interfaces. Microsoft’s containment (blocking further AFD changes, rolling back to a known‑good configuration and failing administrative traffic away from AFD) was effective and ultimately restored broad availability, but the event also reopened urgent conversations about edge centralization, deployment guardrails and the need for resilient operational playbooks.

For organizations and IT leaders the takeaway is direct: assume the edge can fail, prepare your admin and customer‑facing failovers now, and design critical flows with multi‑path resilience rather than single‑path efficiency. The immediate disruption has passed, but the structural lessons it highlights must inform the next wave of cloud architecture and governance decisions.
