Microsoft’s recent push to expand Azure Monitor’s edge-to-cloud observability surface adds three capabilities to the Azure Monitor pipeline in public preview: TLS/mTLS secure ingestion, pod placement controls, and data transformations with automated schema standardization. Together, these features aim to improve security, performance, and cost-efficiency for high-scale telemetry pipelines.


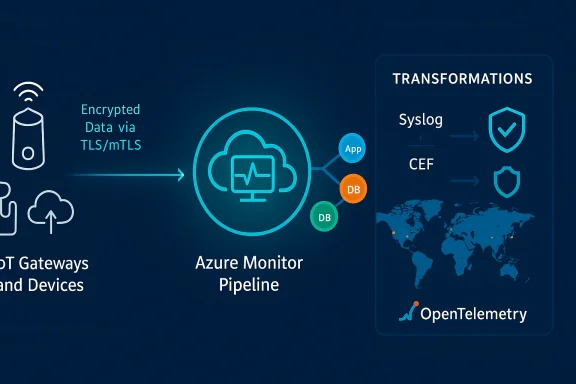

Source: Redmondmag.com, "Azure Monitor Pipeline Adds New Public Preview Capabilities to Enhance Observability"

Background / Overview


Observability is no longer limited to cloud-hosted services; production systems span on‑premises datacenters, branch offices, IoT/edge sites, and Arc‑enabled Kubernetes clusters. That distribution creates consistent problems at scale: insecure or unreliable ingestion boundaries, poor scheduling of pipeline workloads on shared Kubernetes clusters, and noisy or inconsistent telemetry that drives up ingestion and query costs.

Azure Monitor pipeline is Microsoft’s response: a containerized, OpenTelemetry‑based pipeline that can run on Arc‑enabled Kubernetes clusters, buffer and cache telemetry locally, and route telemetry into Azure Monitor. The recent public preview updates focus on three operational pressures that matter to enterprise SRE, security, and networking teams: encryption and authentication at the ingestion edge, deterministic placement of pipeline instances in Kubernetes, and pre‑ingest transformations to normalize and reduce telemetry volume.

These capabilities are designed to be used together: secure ingestion ensures telemetry trust at the perimeter; placement controls let you run the pipeline where it won’t impact tenant workloads; transformations reduce cost and accelerate analytics by delivering cleaner, schema‑validated records to Azure tables. Microsoft’s community blog posts and documentation provide the feature details and configuration guidance now in public preview.

What’s new — feature deep dives

Secure ingestion: TLS and mutual TLS (mTLS)

Azure Monitor pipeline now supports TLS and mutual TLS (mTLS) for TCP‑based ingestion endpoints in public preview. This moves the pipeline from a basic ingestion forwarder to a hardened telemetry gateway capable of meeting regulatory and enterprise security requirements.

Why this matters

- Telemetry often carries sensitive metadata and operational context; encrypting in‑flight telemetry prevents network snooping.

- Mutual authentication (mTLS) ensures only trusted senders can push data and lets the receiving pipeline verify senders against certificates managed by the organization.

- In regulated environments or multi‑tenant clusters, authenticating senders at the ingestion boundary is a key compliance control, and a more robust one than relying on network segmentation alone.

- TLS encryption of TCP ingestion streams and optional mTLS where both client and server present certificates.

- The ability to use organization‑managed certificates — enabling integration with existing PKI or certificate management systems.

- Enforcement of authentication and encryption at the ingestion boundary, preventing unauthenticated clients from sending telemetry.

- Certificate lifecycle and rotation become part of observability operations; you'll need automation to avoid expired certs interrupting telemetry flow.

- For devices or appliances that cannot do TLS/mTLS natively, organizations will still need secure gateways or proxies; the pipeline’s support reduces — but does not always eliminate — the need for custom edge proxies.

- The Microsoft community announcement describes configuration and the preview status; confirm production readiness against your compliance and SLA requirements before enabling in sensitive environments.
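To make the client side of this concrete, here is a minimal sketch of a sender that presents a client certificate and pushes one syslog message over TLS, using only Python's standard library. The file paths, host, port, and the choice of octet-counting framing (RFC 6587) are assumptions for illustration; the source does not specify which framing the pipeline's TCP endpoint expects, so check the official configuration guidance before relying on it.

```python
import socket
import ssl
from typing import Optional


def build_mtls_context(
    ca_file: Optional[str] = None,
    client_cert: Optional[str] = None,
    client_key: Optional[str] = None,
) -> ssl.SSLContext:
    """Build a client-side TLS context; a client certificate makes it mutual."""
    # The default context verifies the server certificate and its hostname.
    ctx = ssl.create_default_context(ssl.Purpose.SERVER_AUTH, cafile=ca_file)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if client_cert:
        # Hypothetical paths to a certificate issued by the organization's PKI.
        ctx.load_cert_chain(certfile=client_cert, keyfile=client_key)
    return ctx


def frame_syslog(message: str) -> bytes:
    """Octet-counting framing (assumed here): b'<byte length> <message>'."""
    payload = message.encode("utf-8")
    return str(len(payload)).encode("ascii") + b" " + payload


def send_syslog(host: str, port: int, message: str, ctx: ssl.SSLContext) -> None:
    """Open a TLS connection to the ingestion endpoint and send one message."""
    with socket.create_connection((host, port), timeout=5) as raw:
        with ctx.wrap_socket(raw, server_hostname=host) as tls:
            tls.sendall(frame_syslog(message))
```

A device agent would call `build_mtls_context` once with its CA bundle and client certificate, then reuse the context for each connection. If the server requires mTLS and the client omits `client_cert`, the handshake should fail, which is the enforcement behavior described above.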

Pod placement controls: executionPlacement for Kubernetes

Large Kubernetes clusters are multi‑purpose and multi‑tenant. The pipeline’s new executionPlacement configuration gives administrators fine‑grained control over where pipeline instances run. That control is essential for avoiding node resource exhaustion and enforcing isolation policies.

Capabilities provided

- Target pipeline pods to specific nodes or node pools using labels and placement rules.

- Control distribution patterns (spread across zones, permit only one instance per node, etc.) to improve resiliency and avoid port exhaustion or noisy‑neighbor effects.

- Validate placement at deployment time — if cluster topology cannot meet the requirements, the pipeline refuses to deploy rather than silently fail or contend for resources.

- Under the hood, these placement controls map to the Kubernetes concepts of affinity/anti‑affinity, taints/tolerations, and pod topology spread constraints (topologySpreadConstraints), but the pipeline exposes a higher‑level, validated configuration that is applied per pipeline group.

- This reduces operator error (for example, accidentally placing a CPU‑intensive telemetry processor on low‑capacity nodes) and helps enforce governance policies across multiple teams sharing the same cluster.

- Predictable performance for telemetry processing jobs.

- Better capacity planning and avoidance of port exhaustion on shared nodes.

- Easier segregation for security zones or compliance boundaries within a single physical cluster.

- Strong placement constraints can cause deployment failures if the cluster is temporarily undersized; integrate deployment pipelines with capacity checks.

- Relying on cluster labels and node characteristics requires consistent cluster ops practices; drift or inconsistent labeling across clusters will undermine placement controls.
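The sketch below illustrates the underlying Kubernetes concepts and the idea of deploy-time validation. It does not reproduce the pipeline's executionPlacement schema, which the source does not show. The dictionaries use standard Kubernetes pod spec field names; the `app` label, the node label keys, and the `validate_placement` helper are hypothetical.

```python
from typing import Dict, List


def spread_constraint(app_label: str, topology_key: str, max_skew: int = 1) -> dict:
    """A topologySpreadConstraints entry that spreads matching pods evenly."""
    return {
        "maxSkew": max_skew,
        "topologyKey": topology_key,  # e.g. "topology.kubernetes.io/zone"
        "whenUnsatisfiable": "DoNotSchedule",
        "labelSelector": {"matchLabels": {"app": app_label}},
    }


def one_per_node_anti_affinity(app_label: str) -> dict:
    """Pod anti-affinity that forbids two matching pods on the same node."""
    return {
        "podAntiAffinity": {
            "requiredDuringSchedulingIgnoredDuringExecution": [
                {
                    "labelSelector": {"matchLabels": {"app": app_label}},
                    "topologyKey": "kubernetes.io/hostname",
                }
            ]
        }
    }


def validate_placement(
    nodes: List[Dict[str, str]],
    node_selector: Dict[str, str],
    replicas: int,
    one_per_node: bool,
) -> List[str]:
    """Return reasons the request cannot be met; an empty list means OK.

    `nodes` is a list of node label dictionaries (hypothetical input shape).
    """
    eligible = [
        labels for labels in nodes
        if all(labels.get(k) == v for k, v in node_selector.items())
    ]
    errors = []
    if not eligible:
        errors.append(f"no nodes match selector {node_selector}")
    elif one_per_node and replicas > len(eligible):
        errors.append(
            f"{replicas} replicas requested but only {len(eligible)} eligible nodes"
        )
    return errors
```

Running a check like `validate_placement` in a deployment pipeline before applying strict constraints is one way to surface capacity or labeling problems early, instead of discovering them as a refused deployment.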

Transformations and automated schema standardization

Possibly the most operationally impactful capability is pipeline transformations, which let teams reshape, filter, aggregate, and normalize telemetry before ingestion into Azure Monitor. When done correctly, pre‑ingest transformations reduce ingestion volume, simplify queries, and accelerate insights. This feature is already in public preview and ships with Kusto‑flavored template support and built‑in schema validation for common telemetry formats such as Syslog and CEF.

Key transformation features

- Filtering and advanced drop rules based on attributes (e.g., facility, process name, severity), enabling the removal of noisy or irrelevant events earlier in the pipeline.

- Aggregation for high‑frequency logs (for example, summarizing events by source/destination IP over 1‑minute intervals), allowing your pipeline to keep signals while dramatically reducing the raw ingestion footprint.

- Automatic schema standardization for Syslog and CEF, which maps raw, vendor‑specific messages into standardized columns suitable for Azure Monitor’s built‑in tables.

- Prebuilt KQL templates (and support for KQL operations like summarize, where, extend, project) so teams can adopt common transformations without writing complex queries from scratch.

- Ingestion costs scale with data volume. Filtering and aggregation at the edge translate directly into lower costs.

- Delivering pre‑schematized data into standard tables simplifies dashboarding and saves the engineering time normally spent on ETL pipelines.

- Schema validation and “check KQL syntax” capabilities reduce the risk of breaking downstream queries or dashboards after a schema change.

- Microsoft’s documentation and preview posts explicitly list supported KQL functions and schemas; consult the official pipeline transformations docs before adopting aggressive transformations.

- Some transformation logic can be compute‑intensive; test CPU and memory impact at expected events‑per‑second (EPS) rates. Microsoft cites throughput design targets, but real‑world performance depends on cluster sizing and transformation complexity.
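To show what filtering plus 1‑minute aggregation does to a stream, the following sketch simulates the logic in plain Python. It is not pipeline code: in the pipeline itself this would be expressed as a KQL transformation using operators such as where and summarize. The event field names, output column names, and the drop rule are hypothetical.

```python
from collections import Counter
from datetime import datetime, timezone
from typing import Iterable, List

# Hypothetical drop rule: discard debug-level events before aggregation.
DROP_SEVERITIES = {"debug"}


def minute_bucket(ts: datetime) -> datetime:
    """Truncate a timestamp to the start of its minute."""
    return ts.replace(second=0, microsecond=0)


def transform(events: Iterable[dict]) -> List[dict]:
    """Filter noisy events, then count events per (minute, source, destination).

    Rough KQL analogue, with assumed column names:
      source
      | where Severity != "debug"
      | summarize EventCount = count() by bin(TimeGenerated, 1m), SrcIp, DstIp
    """
    counts: Counter = Counter()
    for event in events:
        if event["severity"] in DROP_SEVERITIES:
            continue
        key = (minute_bucket(event["time"]), event["src_ip"], event["dst_ip"])
        counts[key] += 1
    return [
        {"TimeGenerated": t, "SrcIp": src, "DstIp": dst, "EventCount": n}
        for (t, src, dst), n in sorted(counts.items())
    ]
```

Replaying a captured sample of production traffic through logic like this gives a quick estimate of how many rows a rule set would emit compared with the raw event count, before the equivalent transformation is enabled in the pipeline.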

How these pieces work together: an operational scenario

Imagine a regional manufacturing company that collects telemetry from 5,000 network devices, several on‑prem control centers, and an Arc‑enabled Kubernetes cluster at each site.

- Devices send syslog to the nearest pipeline ingestion endpoint.

- The pipeline endpoint enforces mTLS so only authentic, certified devices can push logs.

- Placement rules ensure that pipeline pods run on dedicated, high‑capacity nodes that are labelled for telemetry processing (not on nodes running production control plane workloads).

- Transformations aggregate frequent sensor heartbeats into 1‑minute summaries, drop debug‑level events, and map heterogeneous CEF messages into a standardized schema before the data arrives in the Log Analytics workspace.
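The schema-mapping step in this scenario can be pictured with a small CEF parser. This is a simplified sketch of the idea, not the pipeline's built-in parser: it splits the pipe-delimited CEF header into named columns and collects the key=value extension. The output column names are illustrative assumptions, and edge cases such as escaped backslashes or escaped equals signs are not handled.

```python
import re
from typing import Dict

# Illustrative column names for the six CEF header fields after the version.
HEADER_FIELDS = [
    "DeviceVendor", "DeviceProduct", "DeviceVersion",
    "DeviceEventClassID", "Activity", "LogSeverity",
]
_PIPE = re.compile(r"(?<!\\)\|")                # a pipe not preceded by a backslash
_EXT = re.compile(r"(\w+)=(.*?)(?=\s+\w+=|$)")  # key=value, value may contain spaces


def parse_cef(line: str) -> Dict[str, object]:
    """Map one CEF message (optionally syslog-prefixed) to named columns."""
    start = line.find("CEF:")
    if start < 0:
        raise ValueError("not a CEF message")
    parts = _PIPE.split(line[start:], maxsplit=7)
    if len(parts) < 7:
        raise ValueError("incomplete CEF header")
    record: Dict[str, object] = {"CefVersion": parts[0][len("CEF:"):]}
    for name, value in zip(HEADER_FIELDS, parts[1:7]):
        record[name] = value.replace("\\|", "|")  # unescape literal pipes
    extension = parts[7] if len(parts) > 7 else ""
    record["Extensions"] = {k: v.strip() for k, v in _EXT.findall(extension)}
    return record
```

Messages from different vendors end up with the same column set, which is what lets a single query or dashboard work across heterogeneous devices.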

Integration and compatibility: OpenTelemetry, Arc, and Azure Monitor

Azure Monitor pipeline is built on OpenTelemetry Collector principles and integrates with the OpenTelemetry ecosystem. That means it can collect OTLP logs and expose familiar configuration approaches to teams already using OpenTelemetry SDKs and exporters. The pipeline is designed to be deployed on Arc‑enabled Kubernetes clusters to support edge and multicloud topologies.

What this implies

- If you already use OpenTelemetry, adopting Azure Monitor pipeline is a natural extension because the pipeline speaks OTLP and can plug into existing instrumentation.

- For non‑OpenTelemetry sources (e.g., raw Syslog, CEF), the pipeline includes parsers and automatic schematization to bring those formats into the same analytic fabric as OTLP telemetry.

- Confirm that your Arc and Kubernetes versions meet the pipeline’s deployment prerequisites.

- Validate OpenTelemetry exporter versions and OTLP configurations in test clusters before rolling out to production.

- If you rely on third‑party telemetry collectors today, plan for a transition window where both systems run in parallel to verify parity in collected metrics and logs.
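For the parallel-run window, a simple parity check can compare per-source record counts from the existing collector and the new pipeline. The sketch below assumes you can export such counts from both systems for the same time window; the function name, input shape, and 1% tolerance are hypothetical.

```python
from typing import Dict


def parity_report(
    baseline: Dict[str, int],
    candidate: Dict[str, int],
    tolerance: float = 0.01,
) -> Dict[str, float]:
    """Return sources whose relative count difference exceeds the tolerance.

    `baseline` holds counts from the existing collector and `candidate`
    holds counts from the new pipeline, keyed by source name.
    """
    drift: Dict[str, float] = {}
    for source in sorted(set(baseline) | set(candidate)):
        b = baseline.get(source, 0)
        c = candidate.get(source, 0)
        relative = abs(b - c) / max(b, c, 1)  # max(..., 1) avoids dividing by zero
        if relative > tolerance:
            drift[source] = round(relative, 4)
    return drift
```

A check like this is most meaningful before filtering or aggregation rules are enabled, since transformations change record counts by design.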

Migration checklist: how to adopt the preview safely

If you want to trial these features in your environment, consider the following recommended steps:

- Prepare a dedicated, non‑production Arc‑enabled Kubernetes cluster to run the pipeline and replicate production‑like traffic.

- Test TLS/mTLS with a subset of clients and automate certificate issuance and rotation (ACME or your PKI integration).

- Create conservative executionPlacement rules and run capacity tests to avoid accidental pod starvation or conflict with existing workloads.

- Pilot transformations with small rule sets: start by filtering known‑noisy events, then add aggregation and schema mapping after validating correctness in downstream dashboards.

- Monitor pipeline resource usage and throughput under load, and validate that transformed records maintain the fields your alerting and correlation logic requires.

- Perform end‑to‑end validation of alerts, queries, and dashboards after transformations to ensure no critical signals are dropped.

- After successful tests, progressively expand the rollout and set up operational runbooks for cert rotation, capacity onboarding, and rollback plans.
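Certificate rotation runbooks usually start with an expiry check. The following sketch computes the days remaining from a certificate's notAfter string, in the format Python's `ssl` module reports it, and flags certificates inside a rotation window. The 30-day threshold is an arbitrary assumption, not a Microsoft recommendation.

```python
import ssl
import time
from typing import Optional


def days_until_expiry(not_after: str, now: Optional[float] = None) -> float:
    """Days until a certificate expires.

    `not_after` uses the form 'Jan 31 00:00:00 2030 GMT', as found in the
    'notAfter' field returned by SSLSocket.getpeercert().
    """
    expires = ssl.cert_time_to_seconds(not_after)
    current = time.time() if now is None else now
    return (expires - current) / 86400


def needs_rotation(
    not_after: str,
    threshold_days: float = 30,
    now: Optional[float] = None,
) -> bool:
    """True when the certificate expires within the rotation window."""
    return days_until_expiry(not_after, now) <= threshold_days
```

A scheduled job could run this against each ingestion endpoint and client certificate and raise an alert, or trigger re-issuance, before telemetry flow is interrupted.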

Benefits: speed, security, and cost control

- Security: mTLS closes the ingestion authentication gap and aligns telemetry boundaries with enterprise PKI and compliance controls.

- Predictability: Pod placement rules reduce noisy neighbor effects and make pipeline behavior deterministic in multi‑tenant clusters.

- Cost reduction: Pre‑ingest transformations reduce raw ingestion volume and storage, which directly lowers Azure Monitor ingestion and retention charges. Microsoft explicitly highlights lower ingestion costs as a driver of the transformations preview.

- Improved analytics: Schema standardization and KQL templates shorten time to insight and reduce ETL complexity for downstream teams.
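The cost argument is simple arithmetic: savings are proportional to the volume removed before ingestion. The sketch below makes that explicit. The price per GB is a placeholder, not an Azure list price, and the reduction ratio is whatever your pilot actually measures.

```python
def estimated_monthly_saving(
    raw_gb_per_day: float,
    reduction_ratio: float,
    price_per_gb: float,
    days: int = 30,
) -> float:
    """Estimate ingestion savings from pre-ingest filtering and aggregation.

    reduction_ratio is the fraction of raw volume removed (0.0 to 1.0).
    price_per_gb is a hypothetical placeholder; use your own contract rate.
    """
    if not 0.0 <= reduction_ratio <= 1.0:
        raise ValueError("reduction_ratio must be between 0 and 1")
    return raw_gb_per_day * reduction_ratio * price_per_gb * days
```

For example, under these assumptions, 100 GB/day of raw telemetry with a 40% reduction at a placeholder rate of 2.00 per GB works out to 100 × 0.4 × 2.00 × 30 = 2,400 per month in avoided ingestion charges.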

Risks, limits, and governance concerns

While the preview is a positive step, organizations must weigh operational tradeoffs.

- Preview maturity and SLAs: Public preview features typically have limited SLAs and may change behavior before GA. Do not treat preview capabilities as production‑grade without validating against your availability and compliance needs. Microsoft notes the public preview status; evaluate for staging and pilot use first.

- Certificate management burden: Using mTLS means certificate issuance, distribution, and rotation become critical operations tasks. Enterprises should invest in automation and policy to prevent outages from expired or misconfigured certs.

- Complex transformation logic: Overly aggressive transformations (dropping fields or sampling prematurely) can remove signals that are later required for incident post‑mortems. Implement conservative policies and schema‑based validations to mitigate this risk.

- Cluster resource contention: Placement rules are powerful but can backfire if cluster capacity is inadequate; deployments can fail if strict constraints are unmet — integrate capacity checks and autoscaling where appropriate.

- Vendor and feature lock‑in concerns: Pushing substantial normalization logic into Azure’s pipeline simplifies operations but increases dependency on Azure’s transformation semantics and KQL. Maintain exportable transformation definitions and version control for rules to keep portability options open.

What to watch next

- GA timelines: Microsoft has published these capabilities as public preview; watch the Azure Monitor docs and product updates for GA announcements and any changes in feature scope or SLA. The community blog posts and Azure Learn pages are the primary places Microsoft is publishing updates today.

- Expanded telemetry types: Current previews emphasize Syslog, CEF, and OTLP logs — organizations should track announcements for traces, metrics, and Windows‑centric telemetry support in the pipeline.

- Managed configuration and governance tooling: Look for richer role‑based controls, deploy‑time validations, and policy enforcement that make it easier for large orgs to safely apply transformations and placement rules at scale. Microsoft’s ongoing observability work includes broader governance features introduced at Build and subsequent updates.

Practical recommendations (quick summary)

- Treat the preview as an opportunity to reduce ingestion costs and improve security, but run it first in a controlled pilot cluster.

- Automate certificate management before enabling mTLS in any production path.

- Start transformations conservatively: filter low‑value noise, then add aggregation and schema mapping iteratively.

- Use placement controls to safeguard production node pools and to bound pipeline resource consumption.

- Keep transformation definitions in source control, and maintain a rollback plan if schema changes break dashboards or alerts.

Conclusion

Azure Monitor pipeline’s public preview updates are a concrete, operationally focused evolution of Azure’s observability stack. By adding mTLS ingestion, deterministic pod placement, and pre‑ingest transformations with schema validation, Microsoft addresses three of the most common pain points for large, distributed telemetry architectures: trust at the ingestion boundary, predictable runtime placement in shared clusters, and signal‑first data shaping that lowers cost and speeds analysis. These are pragmatic improvements that will matter to SREs, security teams, and platform engineers who run telemetry at scale, provided they pilot carefully, automate certificate and capacity management, and treat preview features as controlled rollouts rather than immediate production dependencies.