Microsoft’s Azure cloud platform suffered a prolonged, multi-stage outage that began at 19:46 UTC on Monday and was not fully resolved until 06:05 UTC the following morning, leaving customers worldwide unable to perform routine virtual machine lifecycle operations and—after a mitigation step—temporarily unable to acquire managed identity tokens in two U.S. regions. The root trigger was a policy change unintentionally applied to a subset of Microsoft‑managed storage accounts that host virtual machine extension packages; that simple change blocked public read access and cascaded into widespread provisioning and scaling failures, and a rushed mitigation produced a follow‑on overload of the managed identity platform. Microsoft’s incident posts and multiple independent reports document the timeline, affected services, and the company’s containment actions.

Background / Overview

Azure virtual machine (VM) operations, including provisioning, scaling, extension installation and other lifecycle tasks, rely on a set of shared platform primitives that include Microsoft‑hosted extension packages and the managed identity/token issuance backplane. Extension packages (agents, installers, configuration bundles) are published by Microsoft and downloaded directly from Microsoft‑managed Azure Storage endpoints (blob.core.windows.net) during installation and updates unless a customer configures private endpoints. When public access to those storage blobs is blocked, VM orchestration and node provisioning can fail because the VM Agent cannot retrieve required artifacts. Microsoft’s documentation makes that dependency explicit.

On February 2–3, 2026, Azure experienced two connected failure windows:

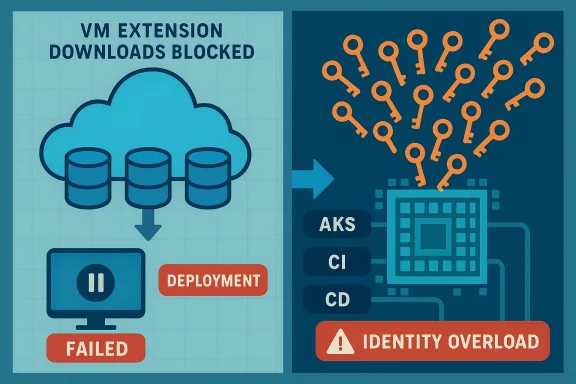

- A Virtual Machines management incident (Tracking ID FNJ8‑VQZ) beginning at 19:46 UTC on February 2 and mitigated by 06:05 UTC on February 3, during which customers saw errors for VM create/update/scale and related platform operations.

- A Managed Identities platform issue (Tracking ID M5B‑9RZ) that ran from roughly 00:10–06:05 UTC on February 3 in the East US and West US regions. That outage prevented customers from creating, updating, or deleting resources that required managed identity token acquisition and delayed identity‑dependent operations while engineers stabilized the identity service.

Timeline: what happened, when

The timeline below consolidates Microsoft’s public incident history and independent reporting into a concise, verifiable sequence.

- 19:46 UTC (Feb 2): Microsoft logged an active Virtual Machines service incident (FNJ8‑VQZ) after customers reported errors when performing VM management operations (create, update, scale, start and stop) across multiple regions. Microsoft identified an unintended policy change that affected public read access to a set of Microsoft‑managed storage accounts used for extension packages.

- 20:00–22:00 UTC: Engineers applied a regional mitigation that restored access for some regions. Community reports and vendor status pages confirmed partial recovery in validated regions while the fix was being rolled out globally. Some workloads (AKS node provisioning, VM scale sets, Azure DevOps and GitHub Actions pipelines invoking extensions) continued to fail where downloads were blocked.

- ~00:10 UTC (Feb 3): After the initial mitigation, a surge of traffic and queued operations overwhelmed Microsoft’s Managed Identities platform in East US and West US. The managed identity service began returning failures for token requests and identity operations (tracking ID M5B‑9RZ). This created a second, related outage window for identity‑dependent services.

- 04:20–06:05 UTC: Microsoft reports that infrastructure nodes recovered and engineers ramped traffic back to the identity service slowly, allowing backlogged token operations to complete while preventing further overloads. Service was declared restored at 06:05 UTC after backlog processing completed.
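As a quick check on the durations implied by the timeline, the sketch below computes each window from the UTC timestamps above. The ~00:10 start of the Managed Identities incident is approximate, so that duration is approximate too.

```python
from datetime import datetime, timezone
from typing import Tuple

# Timestamps from the incident timeline (all UTC).
VM_START = datetime(2026, 2, 2, 19, 46, tzinfo=timezone.utc)
IDENTITY_START = datetime(2026, 2, 3, 0, 10, tzinfo=timezone.utc)  # "~00:10", approximate
RESTORED = datetime(2026, 2, 3, 6, 5, tzinfo=timezone.utc)


def hours_minutes(start: datetime, end: datetime) -> Tuple[int, int]:
    """Return the elapsed time between two timestamps as (hours, minutes)."""
    total_minutes = int((end - start).total_seconds() // 60)
    hours, minutes = divmod(total_minutes, 60)
    return hours, minutes


vm_window = hours_minutes(VM_START, RESTORED)
identity_window = hours_minutes(IDENTITY_START, RESTORED)
lead_time = hours_minutes(VM_START, IDENTITY_START)

print(f"VM management incident: {vm_window[0]}h {vm_window[1]}m")  # → 10h 19m
print(f"Managed Identities incident: {identity_window[0]}h {identity_window[1]}m")  # → 5h 55m
print(f"Time before identity overload: {lead_time[0]}h {lead_time[1]}m")  # → 4h 24m
```

The VM incident therefore lasted just over ten hours, and the identity overload began roughly four and a half hours into it.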

The technical anatomy: how a simple policy change cascaded

At first glance, a change that blocks public read access on some storage accounts sounds narrowly scoped. In practice, the storage accounts in question are the distribution points for VM extension packages used ubiquitously during VM creation and configuration. A VM provisioning flow commonly includes these steps:

- The compute control plane initiates a VM creation or scale operation.

- The VM Agent on the compute instance (or the VM orchestration service on scale sets/AKS nodes) requests one or more extension packages from Microsoft‑hosted storage during provisioning.

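- The packages are downloaded and verified against a signed catalog, and the agent then runs the installation tasks that finalize the VM’s configuration.

Because the second step depends on public reads from Microsoft‑managed storage, blocking public read access stopped provisioning before any installation could run. The visible consequences were:

- VM creates and scales failed with provisioning errors.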

- Virtual Machine Scale Sets could not add or configure instances.

- AKS node provisioning and extension installs failed, causing cluster scale‑out attempts to stall.

- CI/CD systems that rely on hosted runners or extension‑driven agents—Azure DevOps, GitHub Actions—saw pipelines fail when extension artifacts could not be retrieved.

Microsoft’s incident post explicitly ties the FNJ8‑VQZ Virtual Machines incident to “a recent configuration change that affected public access to certain Microsoft‑managed storage accounts, used to host extension packages.” That sentence explains why a narrow permission change appeared as a broad VM management failure across regions.
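A deliberately simplified model makes the coupling concrete. This is not Azure’s implementation: the hypothetical `ExtensionStore` class stands in for the Microsoft‑managed storage accounts, the hypothetical `provision` function performs the download step, and the extension and workload names are invented. Flipping one flag, the analogue of the policy change, fails every operation that needs any package from the shared store.

```python
from dataclasses import dataclass
from typing import Dict, List


class ArtifactUnavailable(Exception):
    """Raised when an extension package cannot be downloaded."""


@dataclass
class ExtensionStore:
    """Toy stand-in for a Microsoft-managed storage account hosting extension packages."""
    packages: Dict[str, bytes]
    public_read: bool = True

    def fetch(self, name: str) -> bytes:
        # Blocking public read rejects every download,
        # whichever extension is being requested.
        if not self.public_read:
            raise ArtifactUnavailable(f"public read blocked for {name}")
        return self.packages[name]


@dataclass
class ProvisioningResult:
    vm: str
    ok: bool
    error: str = ""


def provision(vm: str, extensions: List[str], store: ExtensionStore) -> ProvisioningResult:
    """Download every required extension; a single failed download fails the operation."""
    for name in extensions:
        try:
            store.fetch(name)  # signature verification and installation omitted
        except ArtifactUnavailable as exc:
            return ProvisioningResult(vm, ok=False, error=str(exc))
    return ProvisioningResult(vm, ok=True)


store = ExtensionStore({"agent-ext": b"...", "monitoring-ext": b"..."})
workloads = {  # hypothetical workloads that all share the same store
    "web-vm-1": ["agent-ext"],
    "aks-node-7": ["agent-ext", "monitoring-ext"],
    "ci-runner-3": ["monitoring-ext"],
}
before = [provision(vm, exts, store) for vm, exts in workloads.items()]
store.public_read = False  # the unintended policy change
after = [provision(vm, exts, store) for vm, exts in workloads.items()]
print(sum(r.ok for r in before), "of", len(before), "succeeded before the change")
print(sum(r.ok for r in after), "of", len(after), "succeeded after the change")
```

Every workload succeeds before the flag flips and none succeed afterward, even though the only thing that changed was a read permission on one shared store.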

Which services and customers were affected

The outage touched a mix of platform‑level capabilities and customer workloads because of shared dependencies. Documented, Microsoft‑named impacts included:

- Azure Virtual Machines and Virtual Machine Scale Sets: provisioning, scaling and lifecycle errors.

- Azure Kubernetes Service (AKS): node provisioning and extension installation failures.

- Azure DevOps and GitHub Actions: pipeline failures where tasks required VM extensions or packages hosted by Microsoft‑managed storage. External reports and vendor status pages corroborated GitHub Actions runner failures.

- Managed Identities for Azure Resources (East US, West US): failures to create/update/delete resources or acquire tokens for managed identities, affecting many downstream services such as Synapse, Databricks, Stream Analytics, AKS, Copilot Studio and others listed in Microsoft’s incident text.
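One way to reason about why so many products appear on this list is to treat the platform as a dependency graph and compute everything transitively downstream of a failed primitive. The edges below are a hypothetical, simplified reading of the impacts listed above, not an official Azure dependency map, and the model assumes a failed upstream fully breaks its dependents, whereas real impact was partial and regional.

```python
from collections import deque
from typing import Dict, List, Set

# Hypothetical edges: each key maps to the services that depend on it.
DEPENDENTS: Dict[str, List[str]] = {
    "extension-storage": ["vm-provisioning"],
    "vm-provisioning": ["vm-scale-sets", "aks-node-pools", "hosted-ci-runners"],
    "hosted-ci-runners": ["azure-devops-pipelines", "github-actions-jobs"],
    "managed-identity": ["aks-node-pools", "synapse", "databricks", "stream-analytics"],
}


def blast_radius(failed: str) -> Set[str]:
    """Breadth-first walk returning every service transitively downstream of `failed`."""
    impacted: Set[str] = set()
    queue = deque([failed])
    while queue:
        node = queue.popleft()
        for dependent in DEPENDENTS.get(node, []):
            if dependent not in impacted:
                impacted.add(dependent)
                queue.append(dependent)
    return impacted


print(sorted(blast_radius("extension-storage")))
print(sorted(blast_radius("managed-identity")))
```

Even in this small model, a single storage permission reaches six downstream services, two of which (the CI/CD pipelines) never touch the storage account directly.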

Why this incident matters: systemic risk in shared primitives

There are three structural lessons here that go beyond the particular bug:

- Centralized artifact hosting creates operational coupling. Many VM lifecycle tasks implicitly assume Microsoft‑hosted extension artifacts are available via public endpoints. That assumption simplifies operations but creates a single point of failure for many provisioning and management flows.

- Control‑plane and platform primitives have systemic blast radius. When a policy or configuration error affects a shared platform surface—extension storage, edge routing, identity token issuance—the visible failures span management consoles, developer workflows and production workloads. Previous Azure incidents driven by control‑plane misconfigurations (for example, outages tied to Azure Front Door configuration changes) have produced similar broad impacts and are a useful comparison for how concentrated control‑plane failures escalate.

- Mitigations can generate second‑order failures. A regional fix that allows queued or retried operations to replay can unintentionally create a surge against another shared platform (here, managed identity), producing an overload and a second outage window. That interplay between mitigation and load is predictable and requires tactical throttles and staged rollouts to avoid regenerative failure.
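A toy discrete‑time model shows why replaying a backlog all at once is dangerous and how a staged, exponentially ramped replay avoids it. All numbers are hypothetical, a "tick" is an abstract time unit, and the function names are illustrative; this is a sketch of the general technique, not a description of Azure’s retry machinery.

```python
from typing import List


def immediate_replay(backlog: int, steady: int) -> List[int]:
    """Every queued operation is retried in the same tick the upstream fix lands."""
    return [steady + backlog]


def staged_replay(backlog: int, steady: int, capacity: int, first_batch: int = 10) -> List[int]:
    """Drain the backlog in batches that double each tick, capped at the spare capacity."""
    headroom = capacity - steady
    if headroom <= 0 or first_batch <= 0:
        raise ValueError("need spare capacity and a positive first batch")
    loads: List[int] = []
    batch, remaining = first_batch, backlog
    while remaining > 0:
        release = min(batch, headroom, remaining)
        loads.append(steady + release)  # total load offered to the downstream service this tick
        remaining -= release
        batch *= 2  # exponential ramp
    return loads


# Hypothetical figures, in requests per tick.
CAPACITY, STEADY, BACKLOG = 1_000, 600, 5_000
burst = immediate_replay(BACKLOG, STEADY)
staged = staged_replay(BACKLOG, STEADY, CAPACITY)
print(f"immediate: peak {max(burst)} over {len(burst)} tick")  # → immediate: peak 5600 over 1 tick
print(f"staged: peak {max(staged)} over {len(staged)} ticks")  # → staged: peak 1000 over 17 ticks
```

The trade‑off is explicit: the staged replay takes 17 ticks instead of one, but the downstream service never sees more than its capacity, so the mitigation does not create a second outage.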

What Microsoft did and what they said

Microsoft followed a standard containment and recovery pattern:

- Identified the proximate cause (policy change blocking public read access to Microsoft‑managed storage accounts) and applied a configuration update (mitigation) to restore access where validated.

- When the mitigation produced a surge against Managed Identities, Microsoft removed or isolated traffic from the affected identity infrastructure and brought nodes back to a healthy state, then gradually ramped traffic to allow the backlog to complete.

- Microsoft committed to an internal retrospective and a forthcoming post‑incident review (PIR) that will explain how the configuration change was introduced, why preflight validation or deployment gates didn’t block it, and what process or tooling changes will reduce recurrence risk. Microsoft typically publishes PIRs on its Azure status history page within days to a few weeks of an incident.
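Microsoft has not published how its traffic ramp works. The sketch below shows one common pattern for the gradual ramp described above: admit a growing share of traffic while a health signal stays within budget, and step back when it does not. The ramp percentages, the 1% error budget and the readings are all hypothetical.

```python
from typing import List

RAMP_STEPS = [5, 10, 25, 50, 75, 100]  # hypothetical percentages of traffic admitted


def next_step(index: int, error_rate: float, max_error_rate: float = 0.01) -> int:
    """Advance one ramp step while healthy; fall back one step when errors exceed the budget."""
    if error_rate > max_error_rate:
        return max(index - 1, 0)
    return min(index + 1, len(RAMP_STEPS) - 1)


def run_ramp(observed_error_rates: List[float]) -> List[int]:
    """Return the admitted-traffic percentage in force after each health check."""
    index = 0
    history: List[int] = []
    for rate in observed_error_rates:
        index = next_step(index, rate)
        history.append(RAMP_STEPS[index])
    return history


# Hypothetical health-check readings: an error spike at the third check forces a step back.
print(run_ramp([0.002, 0.004, 0.03, 0.005, 0.001, 0.0, 0.0]))  # → [10, 25, 10, 25, 50, 75, 100]
```

Stepping back one level, rather than dropping to zero, keeps draining the backlog while giving the recovering nodes room to stabilize.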

Practical guidance for administrators and DevOps teams

This incident is a stark reminder that shared cloud primitives will sometimes fail. The practical steps below can reduce blast radius and shorten recovery windows.

Short‑term (immediate, low friction)

- Cache critical extension packages or host copies in customer‑controlled storage. If you operate fleets or automation that depend on extension packages at scale, consider replicating copies into a private, regionally colocated storage account or an internal artifact repository. This removes an implicit public‑blob dependency during provisioning.

- Use private endpoints and service endpoints for critical agent and extension flows where feasible; this can allow controlled proxied access and reduce exposure to public‑blob environmental changes.

- Harden CI/CD jobs and runners: add error‑class handling that treats extension download failures as retriable but with exponential backoff and limited retries; add a fallback path to skip optional extension steps during scale events so long as this doesn’t compromise security posture.

- Alert on identity token errors and rate spikes, which can signal upstream identity platform strain; add alerts that correlate token errors with orchestration queue depth to detect replay storms before they become service outages.

Medium‑term (architecture and process)

- Decouple critical provisioning flows from single hosted artifacts. Maintain a mirrored artifact repository under your control and integrate the mirror into bootstrapping templates and images.

- Regularly exercise failover runs: practice provisioning and scale workflows in simulated degraded artifact and identity conditions to validate runbooks and fallbacks.

- Implement throttles and circuit breakers in orchestration engines to prevent mass replay of operations after an upstream mitigation. Staged replays with exponential ramps reduce the risk of overloading secondary platforms.

Strategic (contracts and governance)

- For high‑availability, mission‑critical workloads, demand more explicit availability SLAs and operational commitments in vendor contracts covering artifact distribution and identity services.

- Plan multi‑cloud or multi‑region patterns for critical management and authentication surfaces when regulatory and business requirements justify the added complexity.

- Advocate for vendor transparency: ask cloud vendors to publish more granular impact and recovery metrics in PIRs, and to document artifact distribution designs and expected recovery behaviors when their control plane is in flux.
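The mirroring, retry and fallback advice above can be combined in one bootstrap helper. The sketch is hypothetical: `fetch_with_fallback`, the fetcher callables and the pinned SHA‑256 digest are illustrative and not an Azure API. In practice each fetcher would wrap a download from your own storage account or artifact repository, and the digest pin is a simpler stand‑in for Microsoft’s signed‑catalog verification. For simplicity every `OSError` (including `PermissionError`) is treated as retriable.

```python
import hashlib
import time
from typing import Callable, List

Fetcher = Callable[[str], bytes]


class FetchFailed(Exception):
    """Raised when no source produced a package with the expected digest."""


def fetch_with_fallback(
    name: str,
    sources: List[Fetcher],
    expected_sha256: str,
    retries: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> bytes:
    """Try each source in order (customer-controlled mirror first), retrying I/O errors
    with exponential backoff, and accept only content matching the pinned digest."""
    errors: List[str] = []
    for source in sources:
        for attempt in range(retries):
            try:
                data = source(name)
            except OSError as exc:  # ConnectionError, TimeoutError, PermissionError, ...
                errors.append(f"{source.__name__} attempt {attempt + 1}: {exc}")
                if attempt + 1 < retries:
                    sleep(base_delay * 2 ** attempt)  # 0.5 s, 1 s, 2 s, ...
                continue
            if hashlib.sha256(data).hexdigest() == expected_sha256:
                return data
            errors.append(f"{source.__name__}: checksum mismatch")
            break  # wrong content will not fix itself; try the next source
    raise FetchFailed(f"{name}: " + "; ".join(errors))


PACKAGE = b"hypothetical extension payload"
DIGEST = hashlib.sha256(PACKAGE).hexdigest()
mirror_calls: List[str] = []


def private_mirror(name: str) -> bytes:
    # Fails once with a transient error, then serves the cached copy.
    mirror_calls.append(name)
    if len(mirror_calls) == 1:
        raise ConnectionError("mirror temporarily unreachable")
    return PACKAGE


def public_blob(name: str) -> bytes:
    # Simulates the incident: public reads are blocked.
    raise PermissionError(f"public read blocked for {name}")


delays: List[float] = []
data = fetch_with_fallback("example-ext", [private_mirror, public_blob], DIGEST, sleep=delays.append)
print(len(mirror_calls), delays)  # → 2 [0.5]
```

Injecting `sleep` keeps the helper testable without real waits; putting the mirror first means a blocked public endpoint only matters if your own copy is also unavailable.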

The broader pattern: a recurring theme in hyperscaler outages

This event fits a recurring pattern in cloud outages: a small change or control‑plane bug in a heavily shared surface produces outsized, cross‑product disruptions. Earlier incidents, such as configuration regressions in global edge fabrics or accidental policy rollouts, have produced similar failures across identity, portal access and developer tooling. Those historical incidents illustrate that scale and centralization deliver both economic value and concentrated operational risk. Several independent post‑mortems and community reconstructions of past events document comparable mechanics and recovery playbooks, reinforcing that this isn’t a one‑off phenomenon.

Risks, unanswered questions, and what to watch in Microsoft’s PIR

Microsoft’s immediate posts describe the proximate technical facts and the mitigation sequence. The community and enterprise customers should expect the PIR to answer several crucial questions:

- How did the policy change pass deployment validation and reach production? Which tooling or human process allowed the change to propagate?

- Why did the identity platform lack sufficient throttling or burst protection to withstand a predictable replay of queued operations? What capacity and autoscale changes will be adopted to avoid similar overloads?

- Did Microsoft’s mitigation controls (regional fixes, staged rollouts) adhere to preapproved playbooks, and if not, what changes to the playbooks will be implemented?

Conclusion: resilience is a choice, not a default

The February 2–3 Azure disruption is an operational cautionary tale for every cloud consumer and operator. A seemingly innocuous policy change to Microsoft‑managed storage accounts blocked extension downloads and halted routine VM operations; an earnest but hurried mitigation then produced a second outage by overwhelming the Managed Identities platform. The episode underlines three lessons for cloud architects and SRE teams:

- Shared platform primitives concentrate risk and can behave as single points of failure; treat them as such in architecture, contracts and runbooks.

- Protect critical artifact flows with mirrors, private endpoints, and fallback images so provisioning does not depend on a single external repository.

- Design mitigations with staged ramps and automated throttles—mitigations that remove the problem without generating new ones.

Source: InfoWorld, “Azure outage disrupts VMs and identity services for over 10 hours”