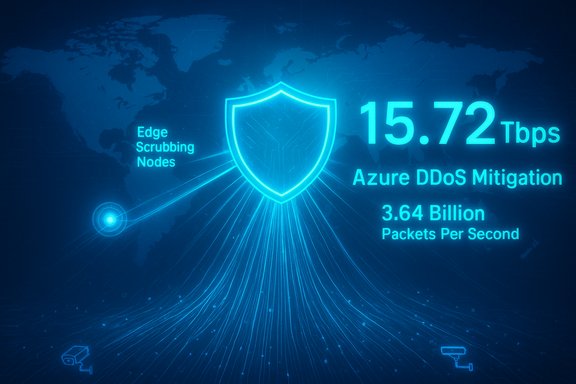

Microsoft’s Azure platform successfully detected and neutralized a record-breaking distributed denial-of-service (DDoS) attack in late October, a multi-vector assault that peaked at 15.72 terabits per second (Tbps) and nearly 3.64 billion packets per second (pps) — the largest single cloud-based DDoS event Microsoft has observed to date. The company traced the attack to the Aisuru family of TurboMirai-class IoT botnets, and said the assault was launched from more than 500,000 source IP addresses and targeted a single public endpoint in Australia; Azure’s globally distributed DDoS Protection network filtered and redirected the malicious traffic so customer workloads remained available without interruption.


Background


The accelerating arms race in volumetric DDoS attacks

DDoS attacks have steadily grown in size and sophistication over the past decade, but 2024–2025 saw a marked acceleration: Mirai-derived botnets and their modern derivatives (commonly grouped under labels such as TurboMirai) have demonstrated the ability to aggregate massive bandwidth by commandeering residential routers, cameras, and other IoT devices. These botnets increasingly produce both extreme throughput (measured in Tbps) and extreme packet rates (measured in gpps, billions of packets per second), creating attacks that stress link capacity and packet processing simultaneously. Netscout’s ASERT group documented a wave of “demonstration attacks” in October that exceeded 20 Tbps and reached multiple billions of packets per second, underlining a new scale of threat to network operators and cloud providers.

Why this incident matters

This Azure incident is noteworthy for three interrelated reasons: the absolute scale (15.72 Tbps / 3.64 Bpps), the source distribution (hundreds of thousands of compromised consumer devices), and the target (a single cloud endpoint). Together those factors demonstrate how compromised edge devices plus ever-increasing residential broadband speeds turn what were formerly “small” botnets into potential infrastructure-level threats. Microsoft’s public mitigation of the event is an important proof point that modern cloud-scale DDoS defenses can still protect tenant workloads when engineered and deployed at global scale.

Anatomy of the attack

Multi-vector, high-rate floods

Microsoft described the October 24 attack as a multi-vector assault that included extremely high-rate UDP floods aimed at a single public IP. The peak metrics reported (15.72 Tbps and ~3.64 billion pps) place this event among the largest volumetric and packet-rate attacks publicly disclosed. Those numbers represent a combination of sheer byte-volume pressure (the terabit metric) and packet-churning intensity (the pps metric), which together can overwhelm both peering links and the packet-processing capacity of routers, firewalls, and load balancers.

Source profile: consumer devices and Aisuru/TurboMirai family

The attack was attributed to the Aisuru botnet, described by Microsoft and others as a TurboMirai-class IoT botnet that commonly compromises consumer routers, IP cameras, and other internet-connected home devices. Netscout’s ASERT analysis positions Aisuru within a broader family of Mirai derivatives that have evolved to generate multi-Tbps volumes and multi-gpps packet rates. Attack traffic was observed originating from over 500,000 source IPs spanning multiple regions, with substantial representation from residential ISPs in the United States. Because many CPE (customer-premises equipment) devices have fast upstream links today, compromised home endpoints can each contribute sizeable throughput to a coordinated attack.

Attack characteristics that complicate mitigation

Several characteristics made this event particularly hazardous:

- High packet rate (billions of pps) that stresses router line cards and middlebox CPU cycles.

- Medium-sized randomized packets and pseudo-randomized ports/flags, complicating simple signature-based filtering.

- Direct-path traffic from many real, non-spoofed source IPs, which is effective for generating volume even though the absence of spoofing makes the sources easier for defenders to trace.

- Distribution across hundreds of thousands of residential networks, making takedown or remediation of sources operationally and legally complex.
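As a rough sanity check on the headline figures reported above, the peak bit rate and packet rate can be combined to estimate the average packet size and the average load per source address. This is a back-of-the-envelope sketch only: it assumes both peaks occurred at the same moment and that traffic was spread evenly over exactly 500,000 sources, neither of which the public reporting confirms. The function names are illustrative.

```python
# Back-of-the-envelope figures derived from the publicly reported peaks.
# Assumptions (not confirmed by the disclosure): both peaks coincide, and
# traffic is spread evenly across exactly 500,000 source IPs.

PEAK_BITS_PER_SEC = 15.72e12     # 15.72 Tbps
PEAK_PACKETS_PER_SEC = 3.64e9    # ~3.64 billion pps
SOURCE_IPS = 500_000             # reported as "more than 500,000"


def average_packet_bytes(bits_per_sec: float, packets_per_sec: float) -> float:
    """Average packet size in bytes implied by a bit rate and a packet rate."""
    return bits_per_sec / packets_per_sec / 8


def per_source_mbps(bits_per_sec: float, sources: int) -> float:
    """Average bit rate per source, in megabits per second."""
    return bits_per_sec / sources / 1e6


def per_source_pps(packets_per_sec: float, sources: int) -> float:
    """Average packet rate per source, in packets per second."""
    return packets_per_sec / sources


if __name__ == "__main__":
    size = average_packet_bytes(PEAK_BITS_PER_SEC, PEAK_PACKETS_PER_SEC)
    mbps = per_source_mbps(PEAK_BITS_PER_SEC, SOURCE_IPS)
    pps = per_source_pps(PEAK_PACKETS_PER_SEC, SOURCE_IPS)
    print(f"average packet size: {size:.0f} bytes")
    print(f"per-source rate:     {mbps:.1f} Mbps, {pps:.0f} pps")
```

Under these assumptions the average packet works out to roughly 540 bytes, which is consistent with the medium-sized packets described above, and each source averages about 31 Mbps and 7,280 pps. Because the reported source count is a lower bound, the true per-source averages would be somewhat lower.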

How Azure’s DDoS Protection stopped the attack

Layered, global mitigation fabric

Microsoft credits its success to Azure’s globally distributed DDoS Protection infrastructure and continuous detection capabilities. That infrastructure operates as a layered mitigation fabric: detection systems rapidly identify volumetric anomalies, automated scrubbing and filtering nodes scale to absorb and separate malicious traffic, and traffic that must be preserved for legitimate clients is routed away from overloaded paths. In this incident, Azure’s automated systems “filtered and redirected” malicious flows so customer-facing workloads experienced no visible downtime.

Automation and telemetry: the operational advantage

A key practical advantage for hyperscale cloud providers is automation. Manual mitigation cannot match the speed and scale required for Tbps-scale events. Azure’s DDoS platform uses telemetry and behavioral analysis to automatically adjust mitigations in real time. That eliminates human-in-the-loop delays and enables scrubbing capacity to be applied to affected regions or tenants as needed. Microsoft reports that these automated measures were initiated on detection and were sufficient to neutralize the attack.

Why cloud-scale mitigation succeeds where standalone appliances fail

Traditional perimeter appliances (firewalls, NGFWs, and on-premises scrubbing devices) have finite packet-processing limits. Packet-rate-driven attacks can exhaust CPU and ASIC resources even when link capacity remains available. Cloud DDoS mitigation relies on distributed scrubbing at scale, absorbing traffic across many geographically dispersed locations and using backbone capacity to dissipate the attack volume. That model is not infallible, but in this case it prevented service impact to Azure customers.

Broader operational implications

Residential broadband and IoT are changing the baseline

Microsoft’s messaging highlights a simple, consequential fact: attackers are scaling with the internet itself. As fiber-to-the-home rolls out and consumer upstreams increase, the per-device attack contribution grows. Combined with increasingly capable IoT devices, the baseline for possible attack volume rises in lockstep. This means that network operators, cloud providers, and governments must assume the potential for larger volumetric and gpps events going forward.

The ISP and CPE angle: inbound vs. outbound responsibilities

Netscout’s analysis emphasizes that these botnets cannot spoof source IPs, which is a mixed blessing. It means ISPs can trace and correlate compromised CPE to subscribers, enabling remediation and quarantine. But it also means upstream network operators must prioritize outbound suppression (egress filtering, rate-limiting) and work with access-network operators to mitigate outbound floods that destabilize upstream infrastructure. Access networks have a new onus: stop traffic leaving residential networks that could be used to attack the broader internet.

Hardware stress and collateral impacts

High pps attacks do more than consume transit capacity: they can cause chassis or line-card failures in routers and break stateful devices that aren’t engineered for extreme packet churn. Netscout reported real-world impairments where line cards and routing gear suffered operational impact under the stress of outbound device-driven floods. This elevates the risk that a single large DDoS event could produce cascading outages in service provider networks if not properly contained.

What this means for enterprises and service operators

Practical steps for enterprises (ordered actions)

- Ensure DDoS protection is enabled for public-facing assets — prefer cloud-native or multi-provider scrubbing solutions that can scale beyond local appliance limits.

- Implement rate limits and behavioral detection on ingress where possible; use Anycast-distributed services to spread volumetric load.

- Maintain up-to-date BGP routing and failover plans; advertise null routes (blackholing) only as a last resort and for very small windows.

- Harden exposed services (e.g., gaming endpoints, APIs) with application-layer protections and require strong authentication where appropriate.

- Conduct DDoS tabletop exercises and ensure runbooks are in place for high-volume incidents.
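The ingress rate-limiting step in the list above is commonly implemented with a token bucket. The following is a minimal single-process sketch, not a production limiter: the class name `TokenBucket`, its parameters, and the injectable clock are illustrative assumptions. Real deployments usually enforce limits in network hardware or at a proxy tier rather than in application code, and application-level limits like this do nothing against volumetric floods that saturate the link upstream.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow `rate` requests per second on average, with bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate              # tokens added per second
        self.capacity = capacity      # maximum tokens the bucket can hold
        self._clock = clock           # injectable so the limiter can be tested
        self._tokens = capacity       # start full to permit an initial burst
        self._last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        """Return True and spend `cost` tokens if enough are available."""
        now = self._clock()
        elapsed = now - self._last
        self._last = now
        # Refill in proportion to elapsed time, never above capacity.
        self._tokens = min(self.capacity, self._tokens + elapsed * self.rate)
        if self._tokens >= cost:
            self._tokens -= cost
            return True
        return False
```

A caller would typically keep one bucket per client key (for example per source IP or API token) and reject or queue requests when `allow()` returns `False`.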

What ISPs and access providers should prioritize

- Deploy egress filtering and enforce BCP38/BCP84-style anti-spoofing and rate-limiting at the network edge.

- Instrument CPE telemetry to detect abnormal outbound flows originating from subscriber devices.

- Cooperate with cloud providers and national CERTs to identify and remediate compromised devices at speed.

- Offer subscribers managed device hygiene: automated updates, default password enforcement, and notifications when devices are compromised.
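The CPE telemetry item in the list above amounts to flagging subscribers whose outbound packet rate departs sharply from their own history. One simple way to sketch that idea is a per-subscriber exponentially weighted moving average (EWMA) baseline. The class name, the smoothing factor, the multiplier, and the minimum-rate floor below are all illustrative assumptions, not values taken from Netscout or any ISP.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class OutboundMonitor:
    alpha: float = 0.2         # EWMA smoothing factor (assumed value)
    multiplier: float = 5.0    # flag samples above multiplier * baseline (assumed)
    floor_pps: float = 500.0   # never flag rates below this floor (assumed)
    _baseline: Dict[str, float] = field(default_factory=dict)

    def observe(self, subscriber_id: str, outbound_pps: float) -> bool:
        """Record one outbound-rate sample; return True if it looks anomalous."""
        baseline = self._baseline.get(subscriber_id)
        if baseline is None:
            # The first sample only seeds the baseline and is never flagged.
            self._baseline[subscriber_id] = outbound_pps
            return False
        anomalous = (outbound_pps > self.floor_pps
                     and outbound_pps > self.multiplier * baseline)
        if not anomalous:
            # Learn only from normal samples so a flood cannot raise the baseline.
            self._baseline[subscriber_id] = (
                self.alpha * outbound_pps + (1 - self.alpha) * baseline
            )
        return anomalous
```

A device that is already flooding when monitoring begins would seed an inflated baseline, so a real system would likely combine a relative check like this with absolute thresholds and longer-term history.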

Policy, OEM responsibility, and the internet hygiene problem

Device manufacturers must be part of the solution

The root cause for TurboMirai-class botnets remains largely the insecure default posture of many IoT devices: unchanged factory credentials, embedded vulnerabilities, and weak or absent update mechanisms. OEMs should adopt secure-by-default designs, implement mandatory update pathways, and work with regulators and ISPs to enable remote remediation when devices are compromised. These are engineering and commercial choices that will materially reduce botnet potential if widely adopted.

Regulatory and marketplace levers

Governments and standards bodies can impose minimum security baselines for consumer devices (unique default credentials, automatic secure updates, vulnerability disclosure requirements). Marketplace pressure, such as liability or certification programs for “secure” IoT, can shift manufacturer behavior faster than voluntary approaches alone. Both public policy and consumer education are required to reduce the sheer number of commoditized insecure devices in circulation.

The Cloudflare incident and what it reveals about Internet centralization

An initial DDoS suspicion, then an internal misconfiguration

In the days following Microsoft’s disclosure, Cloudflare experienced a major global outage that briefly disrupted numerous high-profile services. Cloudflare initially considered the possibility of a hyper-scale DDoS attack but later concluded the outage was caused by an internal change to a database query and an associated Bot Management configuration file; the oversized propagated file triggered failures in core proxy systems. While not caused by malicious traffic, the outage highlighted the risk that a single provider’s internal failure can have wide-reaching, internet-scale effects.

Lessons from the outage

- Centralization of routing and security functionality into a few large providers increases systemic risk.

- Failure modes are not only malicious attacks — benign operational changes can cascade across dependent services.

- Providers must invest in robust configuration governance, failover, and global kill-switch mechanisms to avoid broad disruption resulting from routine internal changes.

Risks and caveats — what to watch next

Larger attacks are both more expensive to fight and more impactful

The Azure mitigation demonstrates capability, but the industry should not conflate a single successful mitigation with long-term safety. Attackers and botnet operators evolve rapidly; demonstrated “proof-of-capacity” events (multiple >20 Tbps demonstrations in October) are signals that attackers can and will keep pushing limits. Defenders must plan for sustained escalation in both Tbps and gpps metrics.

Hardware and supply-chain risk

As packet rates grow, the frontier of defense shifts toward hardware resiliency: line-card throughput, TCAM capacity, and specialized DDoS mitigation ASICs. Upgrading infrastructure is capital-intensive and takes time, which leaves a gap attackers can exploit. Providers should prioritize investments in packet-processing resilience and redundant routing capacity.

Legal and incident-response complexity

With source IPs mapping to end-user subscribers across jurisdictions, coordinated remediation requires cross-organizational workflows and legal clarity. Tracing and notifying hundreds of thousands of infected endpoints poses privacy and operational challenges; ISPs, cloud providers, and national CERTs must work collaboratively to streamline notifications and quarantines while respecting subscriber rights.

Practical recommendations (for boards, CISOs, and network teams)

- Treat DDoS as a strategic risk: include it in risk registers and incident tabletop exercises.

- Contract for scalable DDoS mitigation with multiple providers or a cloud provider that has demonstrated Tbps/hyper-scale capacity.

- Harden public endpoints: apply rate-limiting, CDN fronting, and application-layer defenses for critical APIs and services.

- Partner proactively with your upstream ISPs for coordinated response playbooks and escalation contacts.

- Monitor device fleets and customer devices (for ISPs or managed providers) for unusual outbound behavior; enforce patching and secure defaults on CPE where possible.

Final analysis: what this event tells us about cloud security maturity

Microsoft’s mitigation of a 15.72 Tbps / ~3.64 Bpps attack demonstrates that cloud-scale DDoS protection, when architected and automated, can be effective even against unprecedented volumetric events. That is an encouraging sign: the architecture of distributed mitigation, telemetry-driven automation, and global scrubbing capacity works in practice.

However, the raw facts that enabled the attack (massive numbers of insecure consumer devices, higher consumer upstream speeds, and economically cheap bandwidth) have not changed. The Internet’s “baseline attack surface” has shifted upward. That means defenders must continue evolving: better telemetry, faster cross-stakeholder remediation, secure-by-default IoT design, and investment in packet-processing resiliency.

A connected internet offers enormous value, but it also provides greater amplification for attackers. The only durable path forward is coordinated improvement across the technology stack: OEMs hardening devices, ISPs enforcing outbound suppression, cloud providers scaling and automating mitigation, and policymakers setting minimum device security standards. The Azure incident is both a wake-up call and, because it was mitigated, a validation of the investments required to keep cloud services reliable in an era of rapidly growing DDoS capability.

Microsoft’s public disclosure and Netscout’s analysis together provide a clear picture: the threat has grown, the tools to fight it are scaling, and systemic changes are required to prevent constant escalation. The technical community, industry leaders, and regulators all have roles to play in ensuring the internet remains robust against future record-breaking attacks.

Source: Cybersecurity Dive, “Record-breaking DDoS attack against Microsoft Azure mitigated”