AI assistants are already reshaping how people find, evaluate and act on products and services — and brands that do not make their mobile apps and websites machine-readable risk being sidelined by the next generation of discovery.

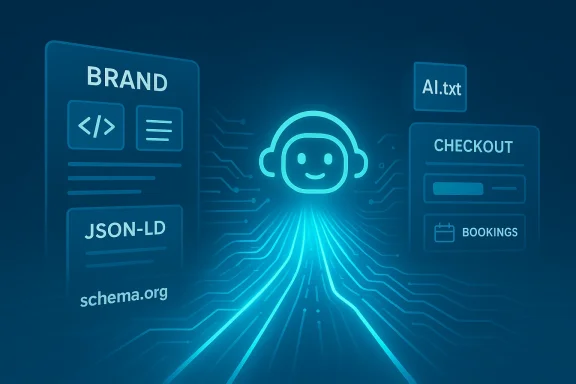


Source: Marketing Dive Why brands need to optimize their mobile apps and websites for AI

Background / Overview

The consumer path-to-purchase is fragmenting away from traditional search and direct visits toward conversational assistants and agentic systems that synthesize information and, increasingly, take actions on users’ behalf. Analysts and practitioners are watching three converging trends: rising AI-driven referrals to websites, major platform vendors embedding third‑party apps inside assistant environments, and the growing use of autonomous agents that can complete transactions. These changes are not theoretical: multiple studies show AI traffic is already measurable across the web, and platform moves make integration a practical priority for brands.

This isn’t simply an evolution of SEO. It’s a structural shift in the interfaces and protocols that mediate discovery. The new discipline goes by several names: Answer Engine Optimization (AEO), Generative Engine Optimization (GEO) or Agentic Engine Optimization (AGX). Whereas search‑engine optimization aimed to rank a human‑readable page, this work focuses on ensuring that canonical facts, product feeds and action endpoints are reliably accessible to models and agents that operate programmatically. The technical and organizational implications are broad: content hygiene, API readiness, transactional robustness, legal guardrails and new performance metrics all matter.

Why this matters now

- AI referrals are not a curiosity. Recent large-sample studies find a majority of websites already receive measurable visits from AI chatbots, even if the share of overall traffic remains modest today. Those visits are growing and concentrated among a handful of powerful assistants.

- Market forecasts project AI‑mediated results could overtake traditional organic search traffic within a few years, making early adaptation strategic rather than experimental. Semrush’s visibility research projects AI results may surpass organic search visits by 2028 and highlights higher conversion rates among AI‑driven users.

- Platform vendors are converting assistants into distribution surfaces. OpenAI’s Apps initiative and SDK let third‑party services run inside ChatGPT, and a similar pattern is emerging at other vendors. That shifts discovery away from a click-first webpage toward in‑chat, API‑driven actions. Brands that are not agent‑ready will not just lose clicks; they may lose the chance to be invoked at all.

How AI systems interact with sites and apps today

Machine-first vs. human-first interfaces

Modern websites and mobile apps are designed for humans: visual layout, touch interactions, client-side rendering, and complex JavaScript-driven flows. AI assistants, by contrast, prefer structured, programmatic interfaces: short canonical facts, machine-readable metadata and stable APIs. When assistants must interact with human-first UIs they often fall back to brittle workarounds (screenshots, simulated clicks, DOM scraping), which are slow and error-prone. Brands must bridge that gap by exposing clear machine workflows.

The three data highways assistants use

- Crawlable canonical content (authoritative pages and structured data).

- Pushable feeds and APIs (product catalogs, pricing, availability, booking endpoints).

- Live action surfaces (bookings, checkouts, reservations that an agent can execute reliably).

Practical technical checklist: what brands should implement now

Below are prioritized, concrete actions engineering and product teams can take to make apps and sites agent‑ready.

1) Improve digital hygiene and page-level semantics

- Use semantic HTML: correct heading hierarchy (H1..H6), proper lists, paragraphs and landmark roles so parsers can infer structure and intent.

- Provide clean, canonical URLs and explicit freshness timestamps (dateModified) where content changes.

- Include high-quality alt text and descriptive labels for images and interactive controls to help multimodal agents interpret visual content.
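A minimal sketch of the kind of page-level check described above, using only Python's standard-library `html.parser`. It flags skipped heading levels (for example an H1 followed directly by an H3) and `<img>` tags with no `alt` attribute. The class and function names are illustrative, not part of any existing tool, and the parser only sees server-delivered HTML, so client-side-rendered content would need a rendering step first.

```python
from html.parser import HTMLParser

HEADING_TAGS = {"h1", "h2", "h3", "h4", "h5", "h6"}


class PageSemanticsAudit(HTMLParser):
    """Collects heading levels and images that lack an alt attribute."""

    def __init__(self) -> None:
        super().__init__()
        self.heading_levels: list[int] = []
        self.images_missing_alt: list[str] = []

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag in HEADING_TAGS:
            self.heading_levels.append(int(tag[1]))
        elif tag == "img" and attr_map.get("alt") is None:
            # alt="" is allowed (decorative images); only a missing alt is flagged
            self.images_missing_alt.append(attr_map.get("src") or "<no src>")


def audit_page(html: str) -> dict:
    parser = PageSemanticsAudit()
    parser.feed(html)
    parser.close()
    levels = parser.heading_levels
    # A jump of more than one level (h1 -> h3) breaks the outline parsers infer
    skipped = [(a, b) for a, b in zip(levels, levels[1:]) if b > a + 1]
    return {
        "h1_count": levels.count(1),
        "skipped_levels": skipped,
        "images_missing_alt": parser.images_missing_alt,
    }


if __name__ == "__main__":
    page = "<h1>Trail shoes</h1><h3>Sizes</h3><img src='a.jpg'><img src='b.jpg' alt='Red trail shoe'>"
    print(audit_page(page))
```

An empty `alt=""` is deliberately not flagged, because it is the accepted way to mark purely decorative images.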

2) Publish structured data and canonical facts

- Maintain robust JSON‑LD / schema.org markup for Product, Offer, AggregateRating, FAQPage, LocalBusiness and Event where relevant.

- Ensure metadata contains SKU/GTIN, price, availability, promotion windows and timestamps.

- Treat structured data as the canonical machine-readable truth, not as an afterthought.
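A minimal sketch, in Python, of generating JSON-LD for a product page with the fields listed above. The property names (`sku`, `gtin13`, `offers`, `availability`, `validFrom`, `priceValidUntil`, `dateModified`) come from the schema.org vocabulary; the function name and the product values are hypothetical. Because `dateModified` is defined on creative works rather than on `Product`, the sketch attaches it to a `WebPage` whose `mainEntity` is the product, which is one reasonable nesting rather than the only valid one.

```python
import json
from datetime import date, datetime, timezone


def product_page_jsonld(
    name: str,
    sku: str,
    gtin13: str,
    price: str,
    currency: str,
    in_stock: bool,
    promo_start: date,
    promo_end: date,
    modified: datetime,
) -> str:
    """Return a schema.org JSON-LD string for a single product page."""
    offer = {
        "@type": "Offer",
        "price": price,  # decimal string avoids float rounding surprises
        "priceCurrency": currency,  # ISO 4217 code, e.g. "USD"
        "availability": (
            "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
        ),
        # Promotion window during which this price applies
        "validFrom": promo_start.isoformat(),
        "priceValidUntil": promo_end.isoformat(),
    }
    doc = {
        "@context": "https://schema.org",
        "@type": "WebPage",
        # dateModified belongs to the page (a CreativeWork), not to the Product
        "dateModified": modified.isoformat(),
        "mainEntity": {
            "@type": "Product",
            "name": name,
            "sku": sku,
            "gtin13": gtin13,
            "offers": offer,
        },
    }
    return json.dumps(doc, indent=2)


if __name__ == "__main__":
    snippet = product_page_jsonld(
        "Trail Runner", "TR-42-RED", "0123456789012",  # hypothetical identifiers
        "89.00", "USD", True,
        date(2025, 1, 1), date(2025, 1, 31),
        datetime(2025, 1, 2, 9, 30, tzinfo=timezone.utc),
    )
    print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```

The output is meant to be embedded in the page inside a `<script type="application/ld+json">` element, as the usage block prints.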

3) Expose APIs and feeds (the push layer)

- Provide a well-documented, authenticated API or push feed for product catalogs, booking availability, pricing and inventory that supports near‑real‑time freshness for fast-moving items.

- Use standard authentication patterns and sandboxed agent credentials for third‑party integrations.

- Deliver machine‑readable receipts, order tokens and status endpoints so agents can confirm and reconcile actions programmatically.
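A minimal sketch of a push-feed record plus a freshness check, assuming a hypothetical `FeedItem` shape (SKU, price, currency, stock flag, last-confirmed timestamp) and a freshness budget you choose per category. It is not tied to any platform's feed specification; assistant partners will define their own required fields.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class FeedItem:
    """One row of a hypothetical product push feed."""

    sku: str
    price: str  # decimal string, e.g. "89.00", to avoid float rounding
    currency: str  # ISO 4217 code
    in_stock: bool
    updated_at: datetime  # timezone-aware time price/stock were last confirmed


def to_feed_record(item: FeedItem) -> dict:
    """Serialize an item with an explicit timestamp so consumers can judge freshness."""
    return {
        "sku": item.sku,
        "price": item.price,
        "currency": item.currency,
        "availability": "in_stock" if item.in_stock else "out_of_stock",
        "updated_at": item.updated_at.isoformat(),
    }


def stale_skus(items: list[FeedItem], max_age: timedelta, now: datetime) -> list[str]:
    """Return SKUs whose last confirmation is older than the freshness budget."""
    return [item.sku for item in items if now - item.updated_at > max_age]


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    feed = [
        FeedItem("TR-42-RED", "89.00", "USD", True, now - timedelta(minutes=5)),
        FeedItem("RAIN-JACKET", "120.00", "USD", False, now - timedelta(hours=6)),
    ]
    print([to_feed_record(i) for i in feed])
    print("stale:", stale_skus(feed, timedelta(minutes=15), now))
```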

4) Adopt machine-readable AI governance (ai.txt / ai.json)

- Publish a machine-readable AI policy manifest (commonly surfaced as /.well-known/ai.txt or ai.json) that declares whether your content may be crawled, indexed, or used for model training, and where to reach your data‑use contacts.

- No single standard has been universally adopted yet, but several industry proposals and live implementations already use ai.txt manifests to communicate policy and provenance. Treat this as an emerging best practice and a defensive control against unwanted training or reuse.
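There is no universal schema for ai.txt or ai.json, so the manifest fields below (`crawling`, `indexing`, `model_training`, `contact`) are hypothetical placeholders to adapt to whichever proposal you adopt. The sketch pairs the manifest with robots.txt rules for crawler tokens that operators have published (`GPTBot` from OpenAI, `Google-Extended` from Google) and checks those rules with Python's standard `urllib.robotparser`. Both mechanisms are advisory: they state a policy but do not technically block access.

```python
import json
from urllib.robotparser import RobotFileParser

# HYPOTHETICAL manifest fields: no universal ai.json schema exists yet.
AI_POLICY = {
    "version": "0.1",
    "crawling": "allowed",
    "indexing": "allowed",
    "model_training": "disallowed",
    "contact": "mailto:ai-policy@example.com",  # hypothetical address
}

# robots.txt rules: opt out of two published AI-related tokens, allow everyone else.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""


def robots_allows(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check whether the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


if __name__ == "__main__":
    # Serve this at the manifest location you choose, e.g. /.well-known/ai.json
    print(json.dumps(AI_POLICY, indent=2))
    for agent in ("GPTBot", "Google-Extended", "Mozilla/5.0"):
        print(agent, robots_allows(ROBOTS_TXT, agent, "https://www.example.com/products"))
```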

5) Harden programmatic action flows

- Test checkouts and booking flows under programmatic access (APIs and agent connectors) rather than only via UI testing. Programmatic failures are often invisible to manual QA.

- Implement idempotency tokens, transactional rollbacks and throttling rules tailored for agent-driven concurrency.

- Add human‑in‑the‑loop checkpoints for high‑risk transactions (payments, cancellations, identity‑sensitive actions).
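A minimal in-memory sketch of two controls named above: idempotency keys, so an agent that retries a request does not create a duplicate order, and a human-review checkpoint for high-value orders. The `OrderService` class, the $500 threshold and the status strings are hypothetical; a real service would persist keys in a shared store, expire them, and run each order inside a database transaction.

```python
import uuid
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Receipt:
    """Machine-readable receipt an agent can store and reconcile later."""

    order_token: str
    status: str  # "confirmed" or "pending_human_review"
    amount_cents: int


@dataclass
class OrderService:
    """In-memory sketch of an agent-facing order endpoint (hypothetical)."""

    review_threshold_cents: int = 50_000  # hypothetical: orders over $500 need a person
    _receipts: dict = field(default_factory=dict, init=False, repr=False)

    def place_order(self, idempotency_key: str, amount_cents: int) -> Receipt:
        previous = self._receipts.get(idempotency_key)
        if previous is not None:
            if previous.amount_cents != amount_cents:
                # Same key, different payload: a client bug, not a retry.
                raise ValueError("idempotency key reused with a different request")
            # Same key, same payload: a retry, so return the original receipt.
            return previous
        status = (
            "pending_human_review"
            if amount_cents > self.review_threshold_cents
            else "confirmed"
        )
        receipt = Receipt(str(uuid.uuid4()), status, amount_cents)
        self._receipts[idempotency_key] = receipt
        return receipt


if __name__ == "__main__":
    svc = OrderService()
    first = svc.place_order("agent-req-001", 12_000)
    retry = svc.place_order("agent-req-001", 12_000)
    print(first == retry, first.status)
    print(svc.place_order("agent-req-002", 80_000).status)
```

Rejecting a reused key that arrives with a different amount is a deliberate choice: silently returning the old receipt would hide a client error from both the agent and the brand.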

6) Vectorize assets and metadata for multimodal agents

- Provide vector embeddings for product descriptions and images where appropriate to enable semantic retrieval by assistants that use embedding indexes.

- Ensure images are accessible, captioned, and have machine‑readable thumbnails and metadata. This reduces the need for assistants to screenshot and heuristically interpret visuals.
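A minimal sketch of semantic retrieval over published embeddings, using cosine similarity in plain Python. The three-dimensional vectors and SKUs are toy values invented for illustration; real embeddings come from an embedding model, have hundreds or thousands of dimensions, and are usually served from a vector index rather than a linear scan.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: list[float], index: dict[str, list[float]], k: int = 3) -> list[str]:
    """Linear-scan retrieval: rank catalog items by similarity to the query vector."""
    ranked = sorted(index, key=lambda sku: cosine_similarity(query, index[sku]), reverse=True)
    return ranked[:k]


# Toy 3-dimensional "embeddings" (hypothetical values, not from a real model)
CATALOG = {
    "TR-42-RED": [0.9, 0.1, 0.0],
    "CITY-SANDAL": [0.1, 0.9, 0.2],
    "RAIN-JACKET": [0.0, 0.2, 0.9],
}

if __name__ == "__main__":
    query = [0.8, 0.2, 0.1]  # stands in for the embedding of "lightweight running shoe"
    print(top_k(query, CATALOG, k=2))
```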

Organizational changes: governance, measurement and procurement

Governance

- Create cross-functional governance: product owns data models, engineering owns APIs and security, marketing owns canonical pages and copy, legal controls training and licensing.

- Procure agent connectors and model access with contractual controls (non‑training clauses, audit telemetry, data provenance and deletion rights).

Measurement

- Move beyond last‑click attribution. Design server-side experiments that attribute value to synthesized answers and completed agent actions.

- Instrument agent-driven sessions with session tokens, qualitative feedback (e.g., “How did you hear about us?”) and revenue-level tracking to capture lift from assistants.
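A minimal sketch of server-side attribution with opaque session tokens, assuming you can tag a session's origin (for example, an assistant connector versus the website) when it starts. `SessionRegistry` and the channel labels are hypothetical; in production the token-to-source mapping would live in a database and be joined with order records.

```python
import secrets
from collections import defaultdict


class SessionRegistry:
    """Maps opaque session tokens to the channel that started the session."""

    def __init__(self) -> None:
        self._source_by_token: dict[str, str] = {}

    def start_session(self, source: str) -> str:
        # Unguessable token; the source label stays server-side, not in the token
        token = secrets.token_urlsafe(16)
        self._source_by_token[token] = source
        return token

    def revenue_by_source(self, orders: list[tuple[str, int]]) -> dict[str, int]:
        """Sum order revenue (in cents) per source; unknown tokens are 'unattributed'."""
        totals: dict[str, int] = defaultdict(int)
        for token, cents in orders:
            totals[self._source_by_token.get(token, "unattributed")] += cents
        return dict(totals)


if __name__ == "__main__":
    registry = SessionRegistry()
    assistant = registry.start_session("assistant")  # hypothetical channel label
    web = registry.start_session("web")
    orders = [(assistant, 5_000), (assistant, 2_500), (web, 1_000)]
    print(registry.revenue_by_source(orders))
```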

Skills and procurement

- Hire or retrain teams to think “data-first” rather than UI-first. Skills in schema design, API productization, and conversational UX are now as important as front-end polish.

- Demand telemetry and sandbox access from assistant platforms to understand how your brand is invoked and cited.

Concrete examples and early wins

Several pilot partners launched inside ChatGPT’s app ecosystem and demonstrate the new distribution model: travel and listing platforms, streaming and discovery services, and marketplaces are particularly well positioned to benefit from agent integration when they expose prices, availability and booking APIs. Integrations with major assistants let users ask the assistant to do things (book, reserve, assemble lists) without landing on a brand’s web pages first. OpenAI’s Apps SDK pilots included companies such as Booking.com, Expedia, Spotify and Zillow, signaling that the model is practical and already in market.

Local businesses can pick up fast wins by publishing LocalBusiness schema, current hours and booking APIs; retailers can boost visibility by improving feed freshness because price and inventory consistency are key signals assistants use. Even small, accurate changes can produce outsized visibility improvements in assistant responses.
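A minimal sketch of LocalBusiness markup with opening hours and a reservation entry point, built in Python and serialized to JSON-LD. The types and properties (`OpeningHoursSpecification`, `potentialAction`, `ReserveAction`, `EntryPoint`, `urlTemplate`) are schema.org vocabulary; the business details and the booking URL are hypothetical, and whether a given assistant reads `potentialAction` is platform-specific.

```python
import json

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday", "Friday"]


def hours(days: list[str], opens: str, closes: str) -> dict:
    """One schema.org OpeningHoursSpecification entry (24-hour HH:MM times)."""
    return {"@type": "OpeningHoursSpecification", "dayOfWeek": days, "opens": opens, "closes": closes}


def local_business_jsonld() -> str:
    doc = {
        "@context": "https://schema.org",
        "@type": "LocalBusiness",
        "name": "Example Bakery",  # hypothetical business
        "url": "https://www.example.com/",
        "telephone": "+1-555-0100",
        "address": {
            "@type": "PostalAddress",
            "streetAddress": "1 Main St",
            "addressLocality": "Springfield",
            "addressCountry": "US",
        },
        "openingHoursSpecification": [
            hours(WEEKDAYS, "07:00", "18:00"),
            hours(["Saturday"], "08:00", "14:00"),
        ],
        # Hypothetical booking endpoint exposed as a reservation action
        "potentialAction": {
            "@type": "ReserveAction",
            "target": {"@type": "EntryPoint", "urlTemplate": "https://www.example.com/book"},
        },
    }
    return json.dumps(doc, indent=2)


if __name__ == "__main__":
    print(f'<script type="application/ld+json">\n{local_business_jsonld()}\n</script>')
```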

Risks and mitigation

1) Centralization and gatekeeper risk

Major assistant platforms can become dominant distribution channels and may favor integrated partners. Brands should pursue dual strategies: integrate with assistants where valuable, and concurrently invest in direct‑to‑consumer channels and first‑party experiences. Maintain contractual flexibility and exportable data feeds to avoid lock‑in.

2) Privacy, regulatory and liability exposure

Agentic actions create new liability vectors. If an assistant completes a purchase, who is responsible for disclosures, refunds, or fraud mitigation? Regulated industries (healthcare, finance) face elevated compliance risk when agents execute real‑world actions. Mitigation: human‑in‑the‑loop checkpoints, clear policy manifests, and legal reviews of agent permissions.

3) IP leakage and inadvertent training

Public content scraped by crawlers can become training data for models, raising IP and brand‑control concerns. Publishing ai.txt manifests and negotiating contractual protections with major model providers (non‑training clauses, takedown processes) are defensive controls. Flag any unverifiable or proprietary claims in your content to limit hallucination risk when models synthesize answers.

4) Measurement and attribution blindness

A zero‑click world undermines traditional measurement. Brands should instrument server‑side events, assign unique assistant session tokens, and treat agent interactions as a first‑class source of demand in measurement frameworks. Design experiments that capture conversions completed entirely within an assistant flow.

A prioritized 90‑day roadmap for product, engineering and marketing teams

- Audit (Days 1–14)

  - Run an “agentability” crawl: check schema presence, robots/ai.txt, sitemap correctness, and API availability.

  - Identify fragile flows (checkout, booking, account creation) and pages with missing canonical metadata.

- Quick fixes (Days 15–45)

  - Apply semantic HTML fixes, add missing schema.org JSON‑LD, correct alt text, and add dateModified timestamps.

  - Publish a simple ai.txt manifest that states crawl and training policies; add contact details for AI use inquiries.

- API and feed work (Days 30–75)

  - Build or harden product/booking APIs with clear tokenized access and sandbox credentials for assistant partners.

  - Publish machine‑readable feeds with price, inventory and timestamp fields and validate freshness.

- Pilot integration (Days 60–90)

  - Integrate with one assistant platform or app SDK (for example, a ChatGPT app or a partner program) to learn connector requirements and measure ROI.

  - Capture baseline and experiment metrics (assistant impressions, conversions, revenue per session).
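A minimal sketch of two checks from the audit phase, using only the Python standard library: extracting the schema.org `@type` values declared in a page's JSON-LD, and listing sitemap URLs that lack a `<lastmod>` date. The function names are hypothetical. The sketch reads only top-level JSON-LD objects (not `@graph` contents); a full agentability crawl would also fetch robots.txt and any ai.txt manifest, render JavaScript-heavy pages, and probe the APIs themselves.

```python
import json
import xml.etree.ElementTree as ET
from html.parser import HTMLParser

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


class JsonLdExtractor(HTMLParser):
    """Collects the raw text of <script type="application/ld+json"> blocks."""

    def __init__(self) -> None:
        super().__init__()
        self.blocks: list[str] = []
        self._in_jsonld = False
        self._parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and (dict(attrs).get("type") or "").lower() == "application/ld+json":
            self._in_jsonld = True
            self._parts = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._parts.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._parts))
            self._in_jsonld = False


def declared_schema_types(html: str) -> list[str]:
    """Return the @type of each top-level JSON-LD object found in the page."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    extractor.close()
    types: list[str] = []
    for raw in extractor.blocks:
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            types.append("<invalid JSON-LD>")  # report unparseable markup instead of skipping it
            continue
        for item in data if isinstance(data, list) else [data]:
            if isinstance(item, dict) and "@type" in item:
                types.append(str(item["@type"]))
    return types


def sitemap_urls_missing_lastmod(sitemap_xml: str) -> list[str]:
    """List <loc> values of sitemap entries that carry no <lastmod> date."""
    root = ET.fromstring(sitemap_xml)
    missing = []
    for url in root.findall("sm:url", SITEMAP_NS):
        loc = url.findtext("sm:loc", default="", namespaces=SITEMAP_NS).strip()
        lastmod = url.findtext("sm:lastmod", default="", namespaces=SITEMAP_NS).strip()
        if not lastmod:
            missing.append(loc)
    return missing
```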

Measurement: what to track differently

- Assistant impressions and citation share (how often the assistant cites your canonical pages or feed).

- Assistant-driven conversions (tracked via server tokens, receipts and assistant session IDs).

- Feed freshness and API error rates (instability here can reduce how often assistants include your products).

- Assisted revenue per session and downstream retention (agents may drive different user quality).
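A minimal sketch of computing three of these metrics from period totals. The `AssistantPeriodStats` fields are hypothetical, and citation share in particular assumes you already have a way to sample assistant answers for your category, for instance through periodic test prompts or third-party monitoring.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssistantPeriodStats:
    """Hypothetical totals for one reporting period."""

    answers_sampled: int  # assistant answers sampled for your category
    answers_citing_you: int  # of those, answers citing your pages or feed
    api_requests: int
    api_errors: int
    assistant_sessions: int
    assistant_revenue_cents: int


def summarize(s: AssistantPeriodStats) -> dict[str, float]:
    def ratio(num: int, den: int) -> float:
        # Guard against empty periods instead of raising ZeroDivisionError
        return num / den if den else 0.0

    return {
        "citation_share": ratio(s.answers_citing_you, s.answers_sampled),
        "api_error_rate": ratio(s.api_errors, s.api_requests),
        "revenue_per_session_cents": ratio(s.assistant_revenue_cents, s.assistant_sessions),
    }


if __name__ == "__main__":
    print(summarize(AssistantPeriodStats(200, 50, 10_000, 25, 400, 1_000_000)))
```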

Final analysis: strengths, opportunities and real threats

- Strengths: Brands that already have clean data layers, mature APIs and strong content governance will find the technical lift manageable. They can convert product truth into machine‑readable assets and be first movers inside assistant ecosystems. Structured facts and authoritative feeds will increase citation likelihood and, critically, support higher‑confidence assistant actions.

- Opportunities: Assistants can act as distribution multipliers. Integration reduces friction for transactions and gives brands direct access to users who might never open an app or webpage. For categories where timeliness and availability matter (travel, local services, retail), being agent‑ready is a competitive advantage.

- Threats: Centralized gatekeeping, legal exposure from agent‑executed actions, intellectual property leakage and measurement blind spots are real and immediate. Brands must balance platform participation with governance, contractual protections and strong instrumentation. Not taking proactive steps risks becoming invisible in the very places customers increasingly discover and transact.

The near-term operating principle: be discoverable, be actionable, be governable

Being discoverable means publishing canonical machine-readable facts and feeds that assistants can cite with confidence. Being actionable means exposing robust APIs and programmatic action flows that agents can use to complete tasks without breaking. Being governable means having legal, procurement and telemetry controls so integrations preserve brand safety, customer privacy and regulatory compliance.

AI assistants will not replace a well-designed app or a thoughtful product experience. But they will become an essential distribution and action layer. Brands that treat AI readiness as a data‑product problem, and move quickly to bridge the human‑first and machine‑first worlds, will win privileged placement in how customers discover, choose and transact in the coming decade.

Quick checklist (for rapid copy/paste to product teams)

- Run an agentability crawl (schema, ai.txt, sitemap, robots).

- Add/mend semantic HTML and schema.org JSON‑LD.

- Publish ai.txt/.well‑known manifests declaring training/indexing policy.

- Expose pushable feeds/APIs for price, availability and bookings.

- Harden programmatic checkouts and add human checkpoints for high‑risk actions.

- Instrument assistant sessions and design server‑side experiments to capture real value.
