BeyondID and Nexera are betting that the next big enterprise AI battleground is not model quality alone, but the control plane around AI: identity, governance, monitoring, and operational discipline. Their newly announced partnership aims to package those capabilities into a production-ready offer for companies that want AI agents and copilots in the real world, not just in pilots. The timing is notable. As enterprises rush to deploy systems from Anthropic, Microsoft Copilot, Google Gemini, and OpenAI, the industry is also waking up to the fact that non-human identities, agent permissions, and machine-to-machine trust are becoming a serious security problem.

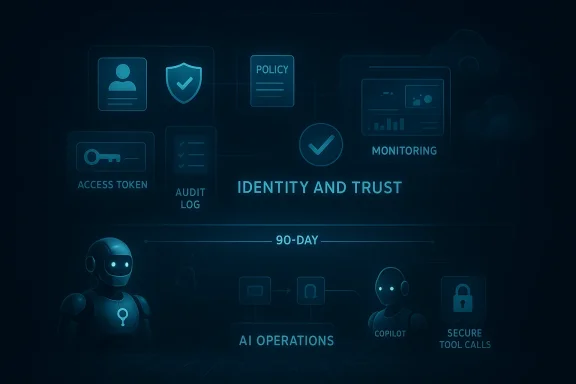

Source: StreetInsider BeyondID and Nexera Announce Strategic Partnership to Deliver Secure, Production-Ready AI for the Enterprise

Background

The enterprise AI market has moved quickly from experimentation to execution, but the supporting security model has lagged behind. For decades, identity and access management was built around people: employees, contractors, and partners with recognizable roles and revocable credentials. AI agents change that formula because they can act autonomously, call tools, access data, and chain workflows without a human being in the loop for every request. Microsoft’s recent documentation reflects this shift by introducing agent identities and treating AI agents as a first-class identity category rather than just another application object.

That matters because non-human identities are now multiplying across enterprise environments. Service accounts, automation scripts, bots, and AI agents can all become high-value targets if they are overprivileged or poorly governed. Microsoft’s own guidance warns that agent identity availability is becoming foundational to new product experiences, while also noting that preview and governance features can affect how agents are created, tracked, and controlled. In other words, the market is moving from “can we build the agent?” to “can we prove who it is, what it can do, and whether it should still be allowed to do it?”

Why identity is now an AI architecture problem

The security conversation has shifted from securing prompts and model outputs to securing the entire lifecycle of an AI system. That includes onboarding, secrets management, access policy, data segmentation, monitoring, drift detection, and auditability. Microsoft’s 2025-2026 documentation and feature rollouts around managed security for Copilot Studio and Entra agent identities show how quickly identity governance is becoming part of the AI stack itself.

This creates a market opening for specialists. Traditional systems integrators can help with AI strategy and platform rollouts, but many enterprises still need a partner that understands both AI operations and identity governance in the same engagement. That is the gap BeyondID and Nexera are trying to fill with an integrated model that combines the Intelligence Layer with the Identity and Trust Layer. According to the announcement, the partnership is designed to take organizations from strategy to secure production in about 90 days. That promise is aggressive, but it fits the current demand curve.

The broader market context

The wider industry backdrop is one of accelerating AI adoption and increasing control pressure. Microsoft, for example, has moved to add governance features for agents, while other vendors and researchers are emphasizing the need for auditable access, policy constraints, and explicit authorization. That suggests a converging consensus: enterprise AI cannot be treated like a consumer chatbot feature if it is connected to core business data and operational systems.

For vendors, this is no longer a niche concern. It is a competitive wedge. Companies that can bundle AI creation, deployment, and identity governance may win deals that would otherwise get fragmented across security, cloud, and consulting budgets. The BeyondID-Nexera partnership is therefore more than a press release; it is a signal that the enterprise AI market is shifting toward operational trust as a buying criterion.

What the Partnership Is Actually Offering

At a high level, the deal pairs Nexera’s AI systems and managed operations with BeyondID’s identity-first security and governance capabilities. Nexera is positioned as the builder and operator of production-grade AI systems, while BeyondID provides the secure identity layer that governs agents, models, workflows, and access rights. That division of labor is important because it reflects how enterprise AI deployments actually fail in practice: not because a model cannot answer questions, but because the surrounding systems are too loosely controlled.

The partnership announcement lays out four integrated go-to-market offerings. These include an AI Identity Readiness Sprint, a 90-Day Secure Agent Launch, Enterprise AI Platform Hardening, and AI Operations + Identity Monitoring. Each offer is designed to move customers from planning to deployment and then into ongoing managed oversight. The architecture is clear: assess, secure, launch, harden, and monitor.

The four offerings in plain English

The AI Identity Readiness Sprint is framed as a 30- to 45-day assessment that inventories use cases, evaluates platforms, maps risk, and builds a 90-day execution roadmap. That is a sensible first step because many organizations still do not know where their AI is already embedded, let alone which identities it uses. A sprint like this is less about theory and more about surfacing hidden exposure quickly.

The 90-Day Secure Agent Launch is the centerpiece. It promises production-grade agent deployment with identity architecture embedded at build time, including access controls, secrets management, monitoring, and compliance validation. The underlying message is that security should not be a retrofit; it should be part of the birth certificate of the system. That is one of the stronger arguments in the announcement.

The Enterprise AI Platform Hardening package is aimed at organizations already using or evaluating platforms such as Anthropic, Microsoft Copilot, Google Gemini, and OpenAI. It adds shadow AI detection, privilege tiering, data segmentation, and regulatory alignment. That combination suggests the companies are targeting the real-world messiness of enterprise adoption, where multiple AI tools often appear before any formal governance framework does.

The managed service layer, AI Operations + Identity Monitoring, extends the value proposition beyond launch. It includes drift and model monitoring, identity anomaly detection, agent recertification, and continuous governance optimization. That is likely where the partnership can become sticky, because enterprises rarely want a one-time AI deployment; they want a support model that keeps pace with changing policy, data, and risk.

Why this bundle is strategically different

This is not just a security add-on. It is a packaged operating model for enterprise AI. The idea is to close the gap between innovation teams pushing for speed and security teams demanding control, while giving procurement a single point of accountability. That matters because the more fragmented the stack becomes, the harder it is to assign ownership when something goes wrong.

- It reduces the friction between AI delivery and security review.

- It gives enterprises a structured way to inventory hidden AI exposure.

- It treats AI agents as governed workloads, not experimental toys.

- It supports both launch and long-term operations.

- It creates a clear service wrapper around otherwise complex tooling.

Why Non-Human Identity Is the New Enterprise Blind Spot

The partnership’s strongest underlying thesis is that non-human identities are becoming the new attack surface. AI agents, automated workflows, service accounts, and integrated tools can all accumulate privileges over time, often without the same review discipline applied to human users. That makes them attractive targets for abuse, misconfiguration, and lateral movement.

This is not a theoretical problem. Microsoft’s documentation explicitly distinguishes agent identities from classic applications and service principals, and also explains that different governance paths apply depending on how an agent is created. That alone reveals the complexity organizations now face. If a company cannot reliably answer whether an AI workload is an app, a service principal, or a formal agent identity, it is already behind.

Identity sprawl and the agent problem

AI agents are not just chat interfaces. They can authenticate, access APIs, read documents, initiate tasks, and trigger downstream systems. When that activity is loosely controlled, the agent can become an internal superuser with ambiguous accountability. That is why identity-first architecture is more than a slogan here; it is the mechanism that makes auditability possible.

The market is also beginning to recognize that the old assumptions of IAM do not fully hold for agents. Human users have predictable patterns, consent boundaries, and lifecycle events. Agents may be ephemeral, delegated, or cloned. They may also outlive the project that created them, which means governance has to include expiration, sponsorship, and recertification. Microsoft’s Entra materials now reference exactly those kinds of controls.
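To make the lifecycle point concrete, here is a minimal Python sketch of what checking expiration, sponsorship, and recertification might look like. It is illustrative only: `AgentIdentity`, `governance_findings`, and the 180-day recertification interval are hypothetical names and values chosen for this example. They are not taken from the announcement or from any Microsoft Entra API.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class AgentIdentity:
    """Hypothetical record for one AI agent's identity (illustrative only)."""
    agent_id: str
    sponsor: Optional[str]   # accountable human owner; None if orphaned
    expires_on: date         # date on which the identity should stop working
    last_recertified: date   # last time a human re-approved its access


def governance_findings(
    agent: AgentIdentity,
    today: date,
    recert_interval: timedelta = timedelta(days=180),  # assumed policy value
) -> List[str]:
    """Return the lifecycle problems found for one agent, in a fixed order."""
    findings = []
    if agent.sponsor is None:
        findings.append("no sponsor")
    if today >= agent.expires_on:
        findings.append("expired")
    if today - agent.last_recertified > recert_interval:
        findings.append("recertification overdue")
    return findings


# An agent that outlived its project: nobody owns it and nobody has
# re-approved its access in roughly eight months.
orphan = AgentIdentity("invoice-bot", None, date(2026, 6, 30), date(2025, 9, 1))
print(governance_findings(orphan, date(2026, 5, 1)))
# prints ['no sponsor', 'recertification overdue']
```

In a real deployment these attributes would come from whatever identity platform the organization uses. The point is only that each control named above reduces to a checkable condition.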

What security teams care about most

Security teams are likely to focus on a handful of issues. First, whether each agent has a unique identity. Second, whether its privileges are scoped narrowly enough to avoid accidental damage. Third, whether secrets are persisted in ways that create unnecessary risk. And fourth, whether monitoring can detect drift, anomalous behavior, or policy violations in time to matter. These are the practical questions that turn AI governance from marketing language into operational reality.

The partnership’s value proposition maps neatly onto those concerns. BeyondID brings the identity controls; Nexera brings the system delivery and operational layer. Together, they are essentially arguing that an AI deployment without identity governance is not enterprise-ready at all, regardless of how impressive the demo looks. That is a strong, defensible position in 2026.
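The four questions above can be expressed as a simple review pass over an agent inventory. The Python sketch below is a hedged illustration, not the partners' tooling: `AgentRecord`, `review`, and every field name are hypothetical, and it assumes the inventory already records which scopes each agent actually needs.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Dict, FrozenSet, List


@dataclass(frozen=True)
class AgentRecord:
    """Hypothetical inventory entry for one agent (illustrative only)."""
    name: str                        # assumed unique per agent
    identity_id: str                 # identity the agent authenticates as
    granted_scopes: FrozenSet[str]   # permissions it currently holds
    needed_scopes: FrozenSet[str]    # permissions its task requires
    stores_long_lived_secret: bool   # e.g. a static key kept in config
    monitoring_enabled: bool


def review(agents: List[AgentRecord]) -> Dict[str, List[str]]:
    """Map each agent name to the issues found against the four questions."""
    id_counts = Counter(a.identity_id for a in agents)
    report: Dict[str, List[str]] = {}
    for a in agents:
        issues = []
        if id_counts[a.identity_id] > 1:      # 1. unique identity?
            issues.append("shared identity")
        extra = a.granted_scopes - a.needed_scopes
        if extra:                             # 2. narrowly scoped?
            issues.append("excess scopes: " + ", ".join(sorted(extra)))
        if a.stores_long_lived_secret:        # 3. risky secret handling?
            issues.append("long-lived secret persisted")
        if not a.monitoring_enabled:          # 4. monitored?
            issues.append("no monitoring")
        report[a.name] = issues
    return report
```

An agent with an empty issue list passes all four checks. Anything else gives the security team a concrete item to remediate, which is the kind of evidence an audit would ask for.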

Production Readiness as a Buying Signal

One of the more interesting aspects of the announcement is the repeated emphasis on production-grade and production-ready AI. That language is carefully chosen. It separates real enterprise deployments from proofs of concept, internal experiments, and demo-driven AI theater. In procurement terms, “production-ready” means the vendor is willing to own uptime, access controls, compliance, and ongoing operations.

The 90-day promise is especially telling. Enterprises often get stuck in long AI discovery cycles that never quite reach launch because governance, integration, and data access are unresolved. A structured 90-day window gives buyers a concrete frame of reference and forces the partner to be opinionated about scope. That can be valuable if the organization wants momentum instead of indefinite analysis.

Why speed matters, but only with controls

The partnership quotes captured in the announcement make the tradeoff explicit: speed without governance is a liability. That is not a new idea, but it is becoming more urgent because AI systems can act at machine speed across many systems at once. The faster the system moves, the less room there is for weak controls or manual approval processes.

This is where managed services become strategically important. Many enterprises do not have the internal staff to continuously validate permissions, monitor agent activity, and recertify access across a growing AI estate. A managed model allows governance to scale with adoption rather than collapsing under it. That may sound mundane, but in enterprise security, boring often wins.

The enterprise versus consumer divide

Consumer AI tools can tolerate a fair amount of improvisation. Enterprise AI cannot. A missed prompt policy in a consumer app may be inconvenient; a mis-scoped autonomous agent in a regulated workflow can become a legal and financial problem. That is why the partnership’s focus on compliance validation and identity monitoring is so important. It acknowledges that enterprise AI needs a different standard of evidence.

The implication is that AI buying criteria are maturing. Customers are no longer asking only which model is best. They are asking which model can be deployed safely, which identity system can govern it, and which partner can keep it operational after the launch party ends.

How This Could Reshape the Competitive Landscape

The BeyondID-Nexera alliance sits at the intersection of two crowded markets: AI deployment and identity governance. On one side are systems integrators and consulting firms that help enterprises plan AI initiatives. On the other are security vendors and identity platforms that help control access. The partnership tries to straddle both categories with a single, execution-oriented package.

That creates a competitive challenge for larger firms that have breadth but not always deep specialization. If the customer wants a partner that can move quickly, speak both AI and IAM fluently, and stay involved after rollout, the smaller specialized alliance may be more attractive than a large generalist. The partnership is effectively turning focus into a strategic asset.

Where rivals may feel pressure

Traditional consulting shops may need to tighten their story around AI governance or risk being viewed as strategy-only vendors. Security vendors, meanwhile, may need to prove that their identity controls are usable in real AI workflows rather than just well documented. The market is moving toward integrated outcomes, not separate line items.

There is also pressure on cloud and model platforms to improve native governance. Microsoft’s own agent identity and governance features suggest that major vendors are already moving in this direction. The more native controls vendors add, the more service partners will need to differentiate on implementation quality, customization, and multi-platform support. That means this partnership is not just competing with security firms; it is competing with the platforms themselves.

Potential go-to-market advantage

If the joint offering works as advertised, the companies may be able to sell into organizations that are stuck between ambition and caution. Those customers want AI value quickly, but they are also tired of hearing that governance will come later. A packaged readiness sprint and a 90-day secure launch offer a middle path that can feel more concrete than open-ended advisory.

- It may appeal to regulated industries first.

- It could fit mid-market enterprises that lack deep internal AI security teams.

- It gives boards a more credible governance story.

- It may accelerate procurement by reducing vendor fragmentation.

- It could pressure rivals to bundle identity into AI services more aggressively.

Enterprise Use Cases That Fit This Model

Not every AI project needs the same level of identity rigor, but some absolutely do. The partnership’s packaging suggests a focus on workloads where the AI system will touch sensitive data, transact across systems, or trigger business actions. That includes customer support automation, IT service workflows, knowledge retrieval, compliance workflows, and back-office agent orchestration.

The mention of Anthropic, Microsoft Copilot, Google Gemini, and OpenAI is also revealing. Those are mainstream platforms with broad enterprise interest, which means the companies are not targeting a niche experimental stack. They are aiming at the AI tools that most organizations are already evaluating or piloting in some form. That broad applicability improves commercial reach, even if each deployment still needs careful customization.

Best-fit scenarios

The best-fit scenarios are usually where AI can make a measurable operational difference but also create meaningful risk if misused. Think of environments where access control, data lineage, audit logging, and human approval boundaries matter as much as output quality. In those settings, identity governance is not an add-on; it is the foundation that makes automation acceptable.

The partnership could also appeal to organizations with multiple AI initiatives already underway but little central coordination. Shadow AI is a real governance problem because teams often adopt tools before security has a chance to standardize controls. The “platform hardening” offer looks designed precisely for that scenario.

The regulated-industry angle

Financial services, healthcare, public sector, and critical infrastructure are likely to find the messaging especially relevant. Those sectors need strong evidence around access, monitoring, and policy enforcement, and they are already used to identity-centric control frameworks. A service that can bridge AI acceleration with security rigor may fit their procurement language unusually well.

A likely secondary benefit is alignment with audit and compliance workflows. If an AI agent can be tied to a clear identity, granted narrow privileges, and continuously monitored, then the organization has a more defensible story for internal audit and external review. That may not sound glamorous, but it is often what gets the deal signed.

Strengths and Opportunities

The partnership has several clear strengths. It addresses a real and growing problem, uses language that enterprise buyers understand, and offers a structured path from assessment to production. Just as importantly, it frames security and AI delivery as one continuous service rather than two disconnected projects.

- Identity-first architecture is a strong answer to the non-human identity problem.

- The 90-day secure launch model gives buyers a concrete timeline.

- Managed operations create recurring value and stickiness.

- The offer spans both platform hardening and ongoing governance.

- It is well aligned with the direction of major platforms like Microsoft.

- The partnership can appeal to regulated industries and security-conscious buyers.

- It may help enterprises standardize fragmented AI adoption.

Why the market may reward this approach

The most important opportunity is that the market may now be ready for packaged AI trust services. Enterprises are tired of stitching together advice from consultants, controls from security vendors, and implementation from systems integrators. A combined offer can reduce complexity and shorten decision cycles, which is often what the buyer wants most.

There is also a credibility opportunity. By emphasizing monitoring, recertification, and drift management, the partnership can position itself as a long-term operational ally rather than a one-off deployment shop. In the AI era, that distinction is huge.

Risks and Concerns

The announcement is compelling, but there are also real risks. Any partnership that promises rapid deployment and strong governance has to prove that it can actually deliver both under customer pressure. The biggest danger is overpromising on how quickly complex enterprise environments can be made secure and production-ready.

- The 90-day timeline may be difficult for highly complex organizations.

- Integration with existing identity stacks could be more complicated than the pitch suggests.

- The offer may require significant customer maturity to be effective.

- Native controls from major platforms could reduce differentiation over time.

- Success depends on the quality of continuous monitoring, not just the launch.

- The security posture may vary widely by deployment scenario.

- Buyers may struggle to define ownership across AI, security, and IT teams.

The implementation challenge

Security controls are only as good as the discipline behind them. If a customer treats the sprint as a box-checking exercise, the result could be a polished framework without real operational enforcement. That risk is especially relevant when AI systems cross multiple business units, each with its own data and policy exceptions.

Another concern is vendor dependency. A managed approach can be a strength, but it also means the client may become reliant on external expertise for key governance functions. Enterprises will want to know whether they are building internal capability or simply outsourcing a new layer of complexity.

The platform-native threat

The other structural risk is that major AI and identity platforms continue adding native governance features. Microsoft is already moving in that direction with agent identities, governance controls, and managed security features. If those controls become robust enough, specialist partners will need to prove that they add value beyond configuration and policy tuning.

That does not make the partnership weak; it makes the market dynamic. But it does mean the companies will need to keep evolving their differentiators. Identity governance is becoming mainstream, and that can compress margins for early specialists if they do not expand their operational value.

Looking Ahead

The most important question is whether this partnership becomes a model for a new class of enterprise AI service: one built around secure deployment, governed autonomy, and ongoing identity operations. If it does, then the market may start treating AI trust as a product category rather than a consulting concern. That would be a meaningful shift for Windows-centric enterprises and the broader IT ecosystem alike.

The next stage will likely be defined by proof, not promises. Buyers will want to see reference architectures, deployment evidence, measurable risk reduction, and repeatable outcomes across different AI platforms. They will also want to know how the joint offering handles policy changes, new model releases, and the messy reality of hybrid environments.

What to watch next

- Whether the companies publish concrete customer case studies.

- Whether the 90-day model holds up across regulated industries.

- Whether Microsoft and other platforms expand native agent governance further.

- Whether identity-first AI becomes a standard enterprise buying requirement.

- Whether rivals respond with similar bundled AI-security offerings.
