Microsoft Bing is reportedly testing a subtle but consequential change to Copilot Search: making only the small citation marker at the end of an AI-generated line clickable, rather than turning the full cited sentence into a link. On paper, that sounds like a minor interface experiment; in practice, it touches the biggest unresolved question in AI search: whether answer engines are helping users reach the open web or quietly replacing it. The test, spotted by search observer Sachin Patel and reported by Search Engine Roundtable, could affect publishers, SEO teams, advertisers, and ordinary users who rely on citations to verify AI-generated answers.

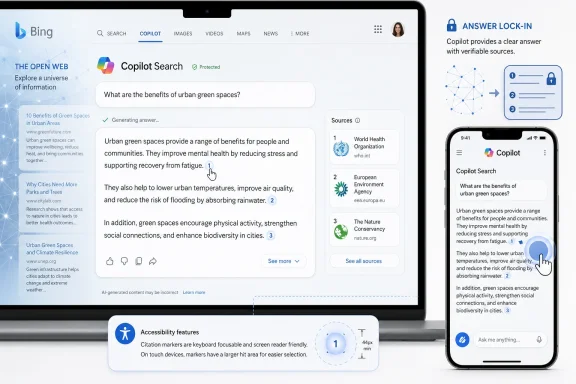

This creates a new kind of inequality in search. Large publishers may benefit from authority signals even when clicks decline, while independent sites lose both visibility and monetization. If AI answers concentrate citations among a narrow set of sources, the long tail of the web could suffer.

Bing has an opportunity to avoid that outcome. By making links prominent, diverse, and easy to open, Microsoft can support a healthier discovery layer. By shrinking the clickable surface, it risks contributing to the same consolidation pressures already worrying publishers across the industry.

If Copilot Search answers more questions directly, some commercial clicks may decline. But AI answers can also create new ad opportunities, such as sponsored suggestions, shopping comparisons, travel planning prompts, and conversational recommendations. The tension is that monetization must not undermine trust.

Citation design intersects with advertising because every search results page has limited attention. If source links become less prominent while AI answers and commercial modules become more prominent, publishers will see a familiar pattern: their content helps generate the page, but paid placements capture the value.

Microsoft must avoid the perception that organic source visibility is being reduced to make room for monetizable interactions. Even if that is not the intent, interface changes are judged by outcomes.

If Bing becomes known as the AI search engine that gives clear answers and still sends meaningful traffic, it could win goodwill from SEO communities, media companies, educators, researchers, and power users. That goodwill may not instantly transform market share, but it can influence defaults, recommendations, and professional usage.

A healthier link strategy could support:

Microsoft should test larger citation zones, source chips, hover previews, publisher logos, grouped references, and optional expanded source views. It should also publish more detail about how Copilot Search affects outbound traffic, especially if it wants publishers to believe that AI answers support a healthy web ecosystem.

Key things to watch include:

For Windows users, IT professionals, publishers, and everyday searchers, the principle should be simple. AI can summarize the web, but it should not make the web harder to reach. If Copilot Search wants to be trusted as a serious research and discovery tool, its citations must be more than small marks at the end of generated sentences.

Microsoft has the technical talent, distribution, and incentive to build an AI search experience that respects both convenience and attribution. The reported less-clickable citation test may be just one experiment, and many experiments never ship. But the reaction to it should be taken seriously, because the future of search will be shaped not only by model quality, but by the tiny interface choices that decide whether users still click through to the open web.

Source: Search Engine Roundtable, “Microsoft Bing Testing Less Clickable Links In Copilot Search Results”

Overview

Microsoft has spent the past three years trying to turn Bing from a distant Google rival into the most visible consumer showcase for generative AI. The company moved early in February 2023 with the launch of the new Bing, built around chat-style answers, web grounding, and source citations. That launch gave Microsoft a rare moment of search-market momentum, even if Google retained overwhelming global share.

Since then, Bing’s AI search experience has evolved through multiple names and layouts. Bing Chat became part of the broader Copilot brand, while Microsoft continued to test how AI summaries, traditional blue links, side panels, source cards, and follow-up prompts should coexist. Copilot Search in Bing now sits somewhere between a search engine, a chatbot, and a research assistant.

The latest reported test matters because Microsoft has previously emphasized that generative search should include sources and clickable links. Bing’s own public messaging has described Copilot Search as a way to provide summarized answers with cited sources and opportunities for deeper exploration. If a new layout reduces the practical click area, even without removing citations, it changes how easily users can leave the AI answer and visit the underlying source.

This is not just a Bing story. It lands in the middle of a wider industry fight over AI Overviews, AI Mode, publisher traffic, zero-click searches, and the future economics of the web. Microsoft may be testing a small design variation, but the debate around it is anything but small.

Why Clickability Is More Than a Design Detail

The size of the target changes behavior

A clickable sentence and a clickable citation marker are not the same experience. When an entire line of text acts as a link, users can click almost anywhere in the cited claim and land on the source. When only a tiny citation marker is clickable, the action becomes more precise, less obvious, and more likely to be skipped.

That matters because search behavior is driven by friction. A user who might casually click a full sentence may not bother aiming at a small superscript-style marker, especially on touch screens, smaller laptops, accessibility tools, or high-DPI displays. The difference is measured in pixels, but the effect may be measured in traffic.

For publishers, the concern is straightforward: AI search already answers many queries before the user reaches a website. If the remaining route to a source becomes less prominent, the citation can become more decorative than functional. In that scenario, links remain present in a technical sense but weakened in a behavioral sense.

Key implications of a smaller click target include:

- Lower source click-through rates if users do not recognize the marker as interactive.

- Reduced verification behavior because checking sources requires more effort.

- More zero-click sessions when the AI summary feels complete enough.

- Greater publisher anxiety over whether citations meaningfully compensate content creators.

- Potential accessibility concerns for users with motor, vision, or assistive-technology needs.
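To make the "measured in pixels" point concrete, here is a rough sketch comparing the clickable area of a full cited line with that of a small citation marker. The dimensions are hypothetical, chosen only for illustration; they are not measurements of the reported Copilot Search test.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ClickTarget:
    """A rectangular clickable region, in CSS pixels."""
    width: float
    height: float

    @property
    def area(self) -> float:
        return self.width * self.height


# Hypothetical dimensions, not taken from any real Bing layout.
full_line = ClickTarget(width=600, height=20)  # an entire cited sentence
marker = ClickTarget(width=16, height=16)      # a small citation marker

ratio = full_line.area / marker.area
print(f"Full line: {full_line.area:.0f} square px")
print(f"Marker:    {marker.area:.0f} square px")
print(f"The full line is roughly {ratio:.0f}x larger as a target.")
```

Under these assumed sizes, the marker offers only a small fraction of the clickable surface, which is the friction the list above describes.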

Links are part of trust, not just traffic

In AI search, links do double duty. They send users to publishers, but they also signal that an answer is grounded in retrievable material. That is crucial because generative AI systems can present uncertain, incomplete, or synthesized information with confidence.

A citation marker that is visible but harder to activate may still provide a veneer of transparency. Yet the practical value of that transparency depends on whether users can quickly inspect the source. If verification feels like extra work, many users will simply trust the summary.

That creates a delicate tension for Microsoft. A cleaner interface may make Copilot Search feel more polished, but a less clickable answer could weaken one of Bing’s strongest differentiators against pure chatbot tools: direct connection to the web.

From Bing Chat to Copilot Search

Microsoft’s early AI-search advantage

Microsoft’s early move with AI search was strategically important. By integrating large language models into Bing and Edge, the company positioned itself as the bold challenger willing to rethink search before Google fully committed to AI-generated answers. For a brief period, Bing became the place where mainstream users could test a search engine that talked back.

That strategy was never only about search share. It was also about making Microsoft’s AI stack visible across consumer and enterprise products. Bing powered web grounding, Edge provided distribution, Azure supplied infrastructure, and Copilot became the umbrella brand for AI assistance across Windows, Microsoft 365, GitHub, security, and search.

Copilot Search is the latest expression of that strategy. It promises concise answers, cited sources, suggestions for further exploration, and follow-up interaction. In theory, it blends the speed of an AI answer with the credibility of the web.

The reported link test is therefore notable because it appears to pull in the opposite direction from Microsoft’s earlier public framing. Bing’s generative search messaging has highlighted clickable links, references, and retained traditional results as part of a healthy web ecosystem. A design that makes links less prominent may be seen by publishers as a retreat from that promise.

The branding shift matters

The move from Bing Chat to Copilot was more than a name change. Copilot has become Microsoft’s primary AI identity, and that gives search a new role inside the company’s broader product story. Bing is no longer only competing with Google Search; it is also feeding and validating Copilot experiences across Microsoft’s ecosystem.

That means every Copilot Search interface decision has strategic weight. The layout is not merely a search page; it is a template for how Microsoft believes AI-generated knowledge should be presented. If the answer becomes the central product and source links become secondary controls, that philosophy may ripple across other Microsoft AI experiences.

Microsoft has to balance several goals at once:

- Speed, because users expect AI answers immediately.

- Clarity, because long link-heavy answers can feel cluttered.

- Trust, because citations must be easy to inspect.

- Publisher value, because the open web supplies much of the information.

- Ad monetization, because search remains a commercial business.

- Competitive differentiation, because Bing needs reasons to stand apart from Google.

The Publisher Economy Behind AI Answers

The web’s bargain is under pressure

For decades, the web operated on a rough bargain: publishers made content crawlable, search engines indexed it, users clicked results, and publishers earned revenue through ads, subscriptions, commerce, or brand value. That bargain was imperfect, but it gave content creators a reason to remain visible in search.

Generative AI complicates the exchange. If an AI system reads, summarizes, and synthesizes publisher content directly on the results page, the user may get enough information without clicking. The search engine still benefits from content availability, but the publisher may receive fewer visits.

This is why citation design has become economically significant. A citation is not merely a scholarly nicety; it is one of the remaining conversion points between an AI answer and the source that helped produce it. If citations become harder to click, publishers may argue that the bargain is being weakened further.

The issue is especially acute for categories such as:

- News, where freshness and reporting costs are high.

- Reviews, where affiliate revenue depends on referral traffic.

- Health information, where source authority and context matter.

- Technology explainers, where detailed troubleshooting often lives on publisher pages.

- Local information, where small businesses depend on discovery.

- Research content, where nuance is often lost in short summaries.

Traffic quality versus traffic quantity

Search companies increasingly argue that AI-generated answers may send fewer but more qualified clicks. The idea is that users who do click after reading an AI summary are more engaged, better informed, and more likely to spend time on the destination site. That may be true in some contexts, particularly for complex research queries.

But publishers cannot pay reporters, editors, developers, hosting bills, and legal costs with theoretical engagement alone. They need measurable visits, conversions, subscribers, and advertising impressions. If AI summaries reduce overall sessions, “higher quality” clicks may not offset the loss.

Microsoft’s position has generally been more publisher-friendly in tone than some competitors, partly because Bing needs the web ecosystem to view it as a viable alternative. The company has said it monitors traffic impacts and has described its generative search as retaining traditional results and increasing clickable links. That is why this reported test is likely to face scrutiny from SEO professionals and publishers.

The central question is not whether citations exist. The question is whether they are useful enough to preserve the economic loop that keeps the web alive.

User Experience and Trust

Cleaner pages can hide important affordances

There is a legitimate design reason Microsoft might test a smaller click target. AI answer pages can become visually noisy when every cited phrase, card, source block, image, and follow-up prompt competes for attention. A cleaner interface may improve readability, especially for users who simply want a quick answer.

But search is not a reading app. It is also a navigation system, a verification layer, and a gateway to competing sources. If visual simplicity comes at the expense of discoverability, the interface may feel smoother while becoming less transparent.

This tradeoff is familiar to anyone who has watched search results evolve from ten blue links into rich snippets, knowledge panels, shopping units, maps, videos, forums, ads, and AI answers. Each feature can improve convenience, but each also changes who gets visibility and who gets the click.

For AI search, the risk is sharper because the generated answer often appears authoritative. Users may not realize that the answer is a synthesis of sources with possible omissions, conflicts, or interpretation errors. A prominent link helps remind the reader that the answer has a provenance.

Verification should be a first-class action

A strong AI-search design should make verification effortless. Users should be able to see where a claim came from, understand why a source was used, compare alternative sources, and open the original context without hunting through a secondary panel. That is especially important for factual, medical, legal, financial, or technical queries.

A small citation marker can work if it is visually obvious, accessible, and supported by source previews. It works less well if it becomes a tiny token that only experienced users understand. A citation that users do not click is functionally closer to a footnote than a pathway.

A good AI search citation experience should provide:

- Large, accessible click targets for both mouse and touch users.

- Clear visual cues that show which statements are sourced.

- Source previews that identify the publisher before the click.

- Multiple source options when a claim is synthesized from several pages.

- Fast loading behavior so verification does not feel costly.

- Consistent interaction patterns across desktop and mobile.
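One way to reason about "large, accessible click targets" is to compare a target's dimensions against the pointer target sizes in WCAG 2.2: 24 by 24 CSS pixels for Target Size (Minimum) and 44 by 44 for Target Size (Enhanced). The sketch below is a rough heuristic, not a conformance test: the success criteria carry exceptions (inline targets within a sentence among them), and the sample dimensions and element names are hypothetical.

```python
# Thresholds from WCAG 2.2; both criteria have exceptions, so treat the
# result as a rough signal rather than a pass/fail audit.
WCAG_MIN_PX = 24       # SC 2.5.8 Target Size (Minimum), level AA
WCAG_ENHANCED_PX = 44  # SC 2.5.5 Target Size (Enhanced), level AAA


def classify_target(width_px: float, height_px: float) -> str:
    """Classify a rectangular target by its smaller dimension."""
    smallest = min(width_px, height_px)
    if smallest >= WCAG_ENHANCED_PX:
        return "enhanced"
    if smallest >= WCAG_MIN_PX:
        return "minimum"
    return "below minimum"


# Hypothetical elements and sizes, for illustration only.
samples = {
    "citation marker": (16, 16),
    "source chip": (120, 28),
    "source card": (280, 64),
}

for name, (width, height) in samples.items():
    print(f"{name}: {classify_target(width, height)}")
```

Classifying by the smaller dimension is a deliberate simplification: a wide but very short link can still be hard to hit with a thumb.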

Competitive Pressure From Google and AI Rivals

Google’s shadow looms over every Bing decision

Bing’s AI search experiments cannot be separated from Google’s moves. Google has expanded AI Overviews and built AI Mode as a more conversational search experience. It has also adjusted how links appear in AI-generated answers, including tests and rollouts designed to make sources more visible or easier to inspect.

Google’s challenge is different from Microsoft’s. Because Google dominates search, any change it makes can immediately reshape publisher traffic at enormous scale. Microsoft, with a smaller but commercially meaningful search share, has more room to experiment but less margin for alienating the ecosystem it hopes to court.

That creates an opening for Bing. If Google is perceived as reducing publisher traffic, Microsoft can position Bing as the more open, source-friendly AI search engine. But that positioning requires interface choices that visibly favor citation access. A less clickable citation design undercuts that potential advantage.

Bing also competes with a growing set of answer engines and AI assistants:

- Google Search, which controls the largest search audience.

- ChatGPT, which is increasingly used as a research and answer tool.

- Perplexity, which has made citations core to its identity.

- Claude, which is used for document-heavy reasoning and analysis.

- Gemini, which benefits from Google’s ecosystem and Search grounding.

- DuckDuckGo, which has leaned into user choice and AI-light or AI-free options.

The citation arms race

The next phase of AI search competition may not be about who can generate the longest answer. It may be about who can make answers verifiable, attributable, and useful without overwhelming the user. In that race, citation design becomes a product differentiator.

Perplexity built much of its brand around visible sources. Google has been under pressure to show more meaningful links in AI features. Microsoft has often highlighted cited sources as part of Copilot Search’s value proposition. These companies understand that trust is the Achilles’ heel of generative search.

If Microsoft makes links feel less clickable, rivals may use that as a contrast point. A competitor can say, in effect, “Our sources are easier to open.” That message may resonate with journalists, researchers, students, developers, and professionals who care about provenance.

The irony is that Bing’s smaller market share could make trust a stronger competitive weapon. Microsoft does not need to beat Google overnight; it needs to win specific groups of users who are dissatisfied with current search. Transparent linking is one of the clearest ways to do that.

Enterprise Impact: Compliance, Research, and Knowledge Work

Business users need auditability

For enterprise users, source access is not optional polish. It is a compliance and productivity requirement. A financial analyst, legal researcher, IT administrator, procurement manager, or security professional cannot rely on an AI answer without understanding where the information came from.

Microsoft sells Copilot across workplaces where auditability, governance, and responsible AI are central selling points. That creates a higher bar for interface patterns. If consumer Copilot Search trains users to accept answer-first layouts with less prominent source navigation, that may clash with enterprise expectations.

In corporate environments, citations serve several functions:

- They let workers validate claims before acting.

- They reduce the risk of hallucinated or stale information.

- They support documentation and internal review.

- They help distinguish official sources from commentary.

- They preserve confidence in regulated workflows.

Windows and IT audiences should pay attention

For WindowsForum readers, the concern is practical. Many users already search Bing or Copilot for Windows error messages, driver problems, PowerShell commands, registry tweaks, update failures, and Microsoft support articles. In those cases, the source is often as important as the answer.

A Copilot summary might correctly describe a Windows update issue but omit a known limitation, version boundary, or rollback condition. The user needs to reach the Microsoft Learn page, support article, forum thread, or vendor note quickly. If the link is harder to spot or click, troubleshooting becomes more error-prone.

This is particularly important for:

- Windows update failures, where build numbers and KB identifiers matter.

- Security mitigations, where incomplete instructions can create risk.

- Driver conflicts, where vendor-specific documentation may be decisive.

- PowerShell examples, where context and permissions matter.

- Registry changes, where one mistaken value can cause serious problems.

Consumer Impact: Convenience Versus Control

Most users will not notice until something goes wrong

For everyday users, a less clickable citation area may initially feel irrelevant. If Copilot Search answers a simple question about movie times, recipes, definitions, travel ideas, or product comparisons, many people will read the summary and move on. That is exactly why the design matters: small changes can reshape behavior without users consciously noticing.

The problem appears when the answer is incomplete, controversial, outdated, or misleading. At that moment, the user needs an easy path to the source. If the interface has trained them to treat the AI response as the final answer, they may not verify anything.

Consumers also differ widely in digital literacy. Some understand citation numbers, source cards, and hover states. Others do not. A full-line link is more forgiving because it turns the cited claim itself into the pathway. A tiny marker assumes the user understands the grammar of AI search.

Mobile makes the issue sharper

Although the reported example appears to involve a specific test that not all users can reproduce, the broader design issue is especially important on mobile. Touch targets need to be large enough for thumbs, and source links must compete with scrolling, ads, cards, keyboards, and browser controls.

If Microsoft applies similar thinking to mobile layouts, the practical click-through effect could be larger. Users are less likely to perform precise taps while moving, multitasking, or scanning quickly. A small marker may be technically tappable but behaviorally invisible.
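
One way to make "large enough for thumbs" concrete is to compare a link's rendered size with the target-size thresholds in WCAG 2.2: 24 by 24 CSS pixels for Target Size (Minimum) and 44 by 44 for Target Size (Enhanced). The sketch below is a size-only check under stated assumptions. It ignores the exceptions WCAG allows (for example, for inline links or adequately spaced targets), and the `TapTarget` type and both sets of measurements are hypothetical, not taken from Bing's actual layout.

```python
from dataclasses import dataclass

# WCAG 2.2 target-size thresholds, in CSS pixels.
WCAG_MINIMUM_PX = 24   # SC 2.5.8 Target Size (Minimum), Level AA
WCAG_ENHANCED_PX = 44  # SC 2.5.5 Target Size (Enhanced), Level AAA


@dataclass(frozen=True)
class TapTarget:
    """Rendered size of a clickable element, in CSS pixels (hypothetical type)."""
    label: str
    width: float
    height: float


def target_size_level(target: TapTarget) -> str:
    """Return the strictest size threshold that the element's box meets."""
    smallest_side = min(target.width, target.height)
    if smallest_side >= WCAG_ENHANCED_PX:
        return "enhanced"
    if smallest_side >= WCAG_MINIMUM_PX:
        return "minimum"
    return "below-minimum"


# Hypothetical measurements for illustration only.
citation_marker = TapTarget("citation marker", width=16, height=16)
full_line_link = TapTarget("full-line link", width=320, height=48)

for target in (citation_marker, full_line_link):
    print(f"{target.label}: {target_size_level(target)}")
```

A check like this says nothing about whether users notice the marker in the first place; it only flags targets that are physically hard to hit.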

Consumer-friendly AI search should preserve:

- Immediate answers for simple questions.

- Obvious source paths for verification.

- Readable layouts that do not bury organic results.

- User control over whether to continue in AI mode or standard search.

- Clear distinction between generated text and publisher content.

SEO and Content Strategy Implications

Search optimization is becoming answer optimization

For SEO professionals, the rise of AI search has already changed the job. Ranking in traditional results is still important, but it no longer guarantees visibility in AI-generated answers. Content teams now track whether their pages are cited, summarized, paraphrased, or excluded from answer engines.

A less clickable citation design complicates that measurement. A site may appear as a source but receive fewer visits. That means visibility inside Copilot Search could become less valuable unless it produces measurable downstream behavior. The industry will need to distinguish between citation presence and citation performance.

Traditional SEO metrics such as impressions, average position, and organic sessions may not fully capture AI-search dynamics. Publishers and marketers will increasingly need to monitor AI citation frequency, referral quality, branded search lift, and conversion paths that begin with an AI answer but do not produce an immediate click.
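
The gap between citation presence and citation performance can be expressed as a simple ratio: visits received per citation shown. The sketch below assumes a publisher can somehow obtain both counts; no reporting tool mentioned in this article is known to expose them, so `CitationStats`, its fields, and the sample numbers are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class CitationStats:
    """Hypothetical per-page counts; not fields from any real reporting API."""
    page: str
    times_cited: int      # AI answers in which the page appeared as a source
    referral_visits: int  # visits that arrived from those answers


def citation_click_rate(stats: CitationStats) -> Optional[float]:
    """Visits per citation, or None when the page was never cited."""
    if stats.times_cited == 0:
        return None
    return stats.referral_visits / stats.times_cited


# Made-up sample data: the first page is cited far more often,
# yet the second page earns more visits per citation.
pages = [
    CitationStats("/windows-update-guide", times_cited=400, referral_visits=20),
    CitationStats("/driver-rollback-howto", times_cited=50, referral_visits=10),
    CitationStats("/uncited-page", times_cited=0, referral_visits=0),
]

for stats in pages:
    rate = citation_click_rate(stats)
    shown = "n/a" if rate is None else f"{rate:.1%}"
    print(f"{stats.page}: cited {stats.times_cited}x, click rate {shown}")
```

Tracking the ratio alongside the raw citation count shows whether a layout change is eroding visits even while presence holds steady.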

Practical adjustments include:

- Create concise, sourceable passages that AI systems can understand.

- Use clear headings that map to common user questions.

- Publish original data that gives answer engines a reason to cite you.

- Maintain technical crawlability so pages remain eligible for source inclusion.

- Track AI referrals separately from traditional organic search.

- Strengthen direct audiences through newsletters, communities, and apps.
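
Tracking AI referrals separately usually starts with bucketing visits by referrer host. The sketch below is a minimal illustration, and the hostname sets in it are assumptions rather than a verified list. In particular, Copilot Search runs inside Bing, so its visits may arrive with an ordinary Bing referrer and be indistinguishable from organic clicks by hostname alone.

```python
from collections import Counter
from urllib.parse import urlparse

# Assumed hostname-to-channel mapping, for illustration only. Check which
# referrer hosts actually appear in your own logs before relying on it.
AI_ANSWER_HOSTS = {"copilot.microsoft.com"}
SEARCH_HOSTS = {"www.bing.com", "www.google.com", "duckduckgo.com"}


def classify_referrer(referrer: str) -> str:
    """Bucket a raw Referer header value into a coarse traffic channel."""
    host = (urlparse(referrer).hostname or "").lower()
    if not host:
        return "direct-or-unknown"
    if host in AI_ANSWER_HOSTS:
        return "ai-answer"
    if host in SEARCH_HOSTS:
        return "organic-search"
    return "other"


# Hypothetical log sample.
sample_referrers = [
    "https://copilot.microsoft.com/",
    "https://www.bing.com/search?q=windows+update+error",
    "https://www.google.com/",
    "",
    "https://example.com/some-thread",
]

channel_counts = Counter(classify_referrer(r) for r in sample_referrers)
for channel, visits in sorted(channel_counts.items()):
    print(f"{channel}: {visits}")
```

Where the hostname cannot separate the two channels, landing-page URL parameters or platform-provided reports, if available, would have to carry the distinction instead.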

Being cited is not the same as being rewarded

The hard truth for publishers is that citation alone may not be enough. If users see a brand name in a source panel but do not click, the publisher receives awareness but not necessarily revenue. That may help major brands, but it does little for smaller sites that depend on traffic.

This creates a new kind of inequality in search. Large publishers may benefit from authority signals even when clicks decline, while independent sites lose both visibility and monetization. If AI answers concentrate citations among a narrow set of sources, the long tail of the web could suffer.

Bing has an opportunity to avoid that outcome. By making links prominent, diverse, and easy to open, Microsoft can support a healthier discovery layer. By shrinking the clickable surface, it risks contributing to the same consolidation pressures already worrying publishers across the industry.

Advertising and Monetization Stakes

Search revenue depends on user journeys

Microsoft’s search and news advertising business remains financially important, even if it is smaller than Google’s. Search ads are valuable because they appear near intent: users are actively looking for products, services, answers, or decisions. AI search changes the shape of that intent funnel.

If Copilot Search answers more questions directly, some commercial clicks may decline. But AI answers can also create new ad opportunities, such as sponsored suggestions, shopping comparisons, travel planning prompts, and conversational recommendations. The tension is that monetization must not undermine trust.

Citation design intersects with advertising because every search results page has limited attention. If source links become less prominent while AI answers and commercial modules become more prominent, publishers will see a familiar pattern: their content helps generate the page, but paid placements capture the value.

Microsoft must avoid the perception that organic source visibility is being reduced to make room for monetizable interactions. Even if that is not the intent, interface changes are judged by outcomes.

The business case for better links

There is also a strong business argument for keeping links highly clickable. Bing’s best chance to grow is not simply to mimic Google’s AI strategy. It is to offer a search experience that users and publishers trust more.

If Bing becomes known as the AI search engine that gives clear answers and still sends meaningful traffic, it could win goodwill from SEO communities, media companies, educators, researchers, and power users. That goodwill may not instantly transform market share, but it can influence defaults, recommendations, and professional usage.

A healthier link strategy could support:

- More publisher cooperation with Bing crawling and indexing.

- Higher user confidence in AI-generated answers.

- Better differentiation from closed chatbot experiences.

- Improved advertiser trust in the search ecosystem.

- More durable long-term content supply for AI grounding.

Strengths and Opportunities

Microsoft still has a strong opportunity to turn this moment into a product advantage. The company can use the reported test as feedback, refine the layout, and demonstrate that Copilot Search can be both clean and source-forward. If Bing handles citations better than rivals, it can build trust in a market that increasingly worries about AI opacity.

- Bing can differentiate through transparent citations that are easier to click than competitors’ implementations.

- Copilot Search can appeal to researchers and power users who want AI summaries without losing access to the web.

- Microsoft can strengthen publisher relationships by prioritizing meaningful outbound traffic.

- Better source previews can improve trust without cluttering the answer page.

- Accessibility-focused link design can become a competitive advantage across desktop and mobile.

- Enterprise credibility can benefit if consumer Copilot Search models strong verification habits.

- SEO professionals may reward Bing with attention if it proves more source-friendly than Google’s AI surfaces.

Risks and Concerns

The downside is equally clear. If the test rolls out broadly and materially reduces clicks to sources, it will reinforce fears that AI search engines want publisher content without publisher visits. The reputational risk may outweigh any marginal interface gain.

- Publisher traffic could decline if users overlook or avoid smaller citation targets.

- User trust could weaken if citations appear symbolic rather than functional.

- Accessibility issues may emerge if clickable areas are too small or poorly labeled.

- SEO reporting may become murkier as citations rise but referrals fall.

- Regulatory scrutiny could intensify around platform power and content use.

- Bing could lose a chance to position itself as the web-friendly AI search alternative.

- Technical users may become frustrated if source verification takes extra effort during troubleshooting.

Looking Ahead

What Microsoft should test next

The best outcome would not be a reflexive return to old blue-link layouts. AI search needs new design patterns. But those patterns should make sources more understandable, not less reachable.

Microsoft should test larger citation zones, source chips, hover previews, publisher logos, grouped references, and optional expanded source views. It should also publish more detail about how Copilot Search affects outbound traffic, especially if it wants publishers to believe that AI answers support a healthy web ecosystem.

Key things to watch include:

- Whether the smaller clickable citation test expands beyond a limited experiment.

- Whether Microsoft changes source-link behavior on mobile devices.

- Whether publishers report measurable traffic shifts from Copilot Search.

- Whether Bing introduces more prominent source cards or previews.

- Whether Google’s own AI link changes pressure Microsoft to respond.

The bigger search reset

This episode is another reminder that search is being rebuilt in real time. The old results page was already crowded, but generative AI changes the hierarchy: the answer now competes directly with the sources that made the answer possible. That makes every interface decision a policy decision, even when it looks like design.

For Windows users, IT professionals, publishers, and everyday searchers, the principle should be simple. AI can summarize the web, but it should not make the web harder to reach. If Copilot Search wants to be trusted as a serious research and discovery tool, its citations must be more than small marks at the end of generated sentences.

Microsoft has the technical talent, distribution, and incentive to build an AI search experience that respects both convenience and attribution. The reported less-clickable citation test may be just one experiment, and many experiments never ship. But the reaction to it should be taken seriously, because the future of search will be shaped not only by model quality, but by the tiny interface choices that decide whether users still click through to the open web.

Source: Search Engine Roundtable Microsoft Bing Testing Less Clickable Links In Copilot Search Results