A hobbyist has reportedly managed to do something Intel and Microsoft never intended: boot a Bartlett Lake embedded processor on a consumer motherboard and push Windows 11 onto hardware that is not officially supported. The story matters less as a practical how-to than as a vivid demonstration of where modern firmware engineering, community experimentation, and AI-assisted reverse engineering are headed. It also underlines a familiar truth in the PC world: where manufacturers draw a line, enthusiasts often treat it as a challenge.

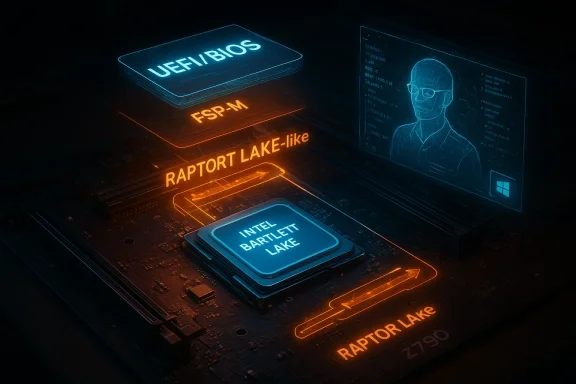

Source: Research Snipers Overclocker cracks Intel's CPU lock with AI help – Research Snipers

Overview

The processor at the center of the report is the Intel Core 9 273PQE, a Bartlett Lake chip positioned for embedded and industrial systems rather than ordinary desktop builds. Intel’s own product page lists it as an embedded part with 12 performance cores, 24 threads, and no efficient cores, confirming that it belongs to the all-P-core Bartlett Lake family rather than the hybrid desktop designs most buyers know. Intel’s recent Bartlett Lake materials also emphasize LGA1700 compatibility and embedded-oriented software support, including Windows 11 IoT Enterprise LTSC variants and Intel’s own firmware stack.

The interesting twist is that the modder, identified in the report as “kryptonfly,” did not simply force Windows installation media onto an unsupported PC. Instead, according to the coverage, he first persuaded an Asus Z790 motherboard to accept the Bartlett Lake CPU at the firmware level, then altered the Intel firmware initialization path so the system would treat the chip more like a mainstream Raptor Lake desktop processor. That is a much deeper intervention than a standard Windows compatibility bypass.

In other words, the stunt sits in the middle of a larger trend: embedded silicon that is electrically similar to consumer parts, firmware checks that enforce product segmentation, and hobbyists willing to rewrite enough code to blur the distinction. The report also suggests the enthusiast had help from Claude, which adds an AI angle that is likely to attract attention well beyond overclocking forums. The broader lesson is not that AI can magically hack a machine open, but that it can accelerate the kind of tedious, detail-heavy firmware work that used to demand days or weeks of manual trial and error.

Background

Intel has spent the last few years splitting its processor portfolio into more sharply defined categories. Consumer desktop chips, mobile chips, workstation parts, and embedded SKUs increasingly share architectural DNA, but they are not marketed, validated, or supported in the same way. That segmentation is about more than branding. It reflects different thermal envelopes, qualification standards, long-term availability commitments, and support expectations for enterprises that need stability instead of excitement.

Bartlett Lake is especially interesting because it is not a radically new architecture in the public imagination. It is more of a platform strategy: a family of chips that preserve the familiar LGA1700 mechanical interface while targeting embedded and edge deployments. Intel’s embedded product materials make clear that these parts are meant to fit into systems that value long availability, controlled configurations, and validated OS stacks more than retail-box simplicity. That makes them attractive to industrial OEMs, but it also makes them irresistible to enthusiasts who see “embedded” as a fancy label on otherwise usable silicon.

For Windows users, the story lands in a familiar place. Microsoft’s Windows 11 requirements remain stricter than many hobbyists would like, especially on CPU support and platform trust features. Microsoft’s own documentation continues to draw a line between systems that can technically be made to boot and systems that are officially supported for the full Windows 11 experience. That distinction matters because many modders are perfectly comfortable living outside the support matrix, but most consumers are not.

The report’s significance is therefore twofold. First, it shows that a modern desktop motherboard can sometimes be convinced to accept an embedded CPU if the firmware checks are loosened or rewritten. Second, it shows that the OS boot path may still be blocked by platform initialization logic before Windows even gets a chance to complain. That makes the case far more interesting than a routine registry tweak or installer flag change. It is firmware archaeology, not just installation wizard trickery.

Why Bartlett Lake stands out

Bartlett Lake’s all-P-core design is central to why this hack is feasible. Intel’s published product data for the 273PQE shows 12 P-cores, 24 threads, and zero E-cores, which means the chip behaves more like a classic high-performance desktop processor than a hybrid scheduling puzzle. That simplicity likely reduces the number of compatibility edge cases inside the firmware and operating-system initialization flow.

Because the chip is mechanically compatible with the LGA1700 socket, the remaining barriers are mostly logical rather than physical. That is the key difference between “won’t fit” and “won’t initialize.” The former stops hobbyists cold. The latter invites a long night in UEFI setup, FSP configuration, and repeated reboot cycles.

- Socket compatibility lowers the physical barrier to experimentation.

- All-P-core topology simplifies scheduling compared with hybrid designs.

- Embedded positioning means the software stack is intentionally narrower.

- Firmware checks become the real enforcement mechanism.

- Community knowledge is often enough to probe those checks.

The Firmware Barrier

The first hurdle in the report was not Windows at all. It was getting the board to recognize the processor well enough to start. That is where the role of the BIOS, UEFI setup code, and Intel’s platform initialization stack becomes decisive. If the firmware refuses to identify the CPU as valid, nothing downstream matters.

According to the account, Claude helped identify missing or incompatible firmware components and rewrite code accordingly. That claim is notable because firmware work is normally painful in a very specific way: terse errors, fragile dependencies, and binary-level behavior that often has to be inferred rather than documented. A large language model can’t replace the engineering judgment required here, but it can accelerate pattern recognition and help translate scattered clues into plausible changes.

BIOS as a gatekeeper

On modern boards, BIOS and UEFI do more than present a settings menu. They enforce CPU family checks, microcode selection, memory training behavior, power-management logic, and compatibility assumptions baked into the board vendor’s validation process. If a CPU was never meant for the consumer product stack, the board may simply refuse to move forward until the relevant identifiers match what the firmware expects.

That is why the overclocker’s first success is impressive. Getting the system to POST with a nonstandard CPU is already a major victory, because it means the motherboard’s early boot logic has been altered enough to proceed. Once that happens, the project shifts from “can it be recognized?” to “can it be initialized correctly?” and the difficulty rises sharply. That is where most compatibility experiments die.

The underlying lesson is that consumer and embedded boundaries are increasingly software-defined. The socket is the easy part. The code is the wall.
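As a rough illustration of the kind of CPU family check described in this subsection, the sketch below decodes a CPUID leaf 1 signature into family, model, and stepping, then compares it against an allowlist. The bit layout follows the standard x86 CPUID convention; the allowlist, the function names, and the sample signature values are assumptions for illustration and are not taken from the report or from any vendor firmware.

```python
# Illustrative sketch only: real firmware performs this check in C or
# assembly inside UEFI modules, not in Python.

def decode_cpuid_signature(eax: int) -> dict:
    """Split a CPUID leaf 1 EAX value into family, model, and stepping."""
    stepping = eax & 0xF
    base_model = (eax >> 4) & 0xF
    base_family = (eax >> 8) & 0xF
    ext_model = (eax >> 16) & 0xF
    ext_family = (eax >> 20) & 0xFF

    # The extended family is added only when the base family is 0xF.
    family = base_family + ext_family if base_family == 0xF else base_family
    # The extended model is prepended only for base family 0x6 or 0xF.
    if base_family in (0x6, 0xF):
        model = (ext_model << 4) | base_model
    else:
        model = base_model
    return {"family": family, "model": model, "stepping": stepping}


# Hypothetical allowlist of (family, model) pairs a board firmware accepts.
SUPPORTED_FAMILY_MODELS = {(0x6, 0xB7)}


def firmware_accepts(eax: int, allowlist=SUPPORTED_FAMILY_MODELS) -> bool:
    """Return True if the decoded (family, model) pair is on the allowlist."""
    info = decode_cpuid_signature(eax)
    return (info["family"], info["model"]) in allowlist


# 0xB0671 is an example signature that decodes to family 6, model 0xB7.
# 0xB06F1 is an arbitrary placeholder for a part missing from the allowlist.
print(decode_cpuid_signature(0xB0671))
print(firmware_accepts(0xB0671), firmware_accepts(0xB06F1))
```

A check like this is pure policy: nothing about the second signature says the chip cannot run, only that the firmware was never told to accept it.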

The Windows 11 Boot Path

The second hurdle was more subtle and more revealing. The report says the bottleneck involved Intel’s Firmware Support Package, specifically FSP-M, which handles memory initialization during boot. If that component rejects the Bartlett platform in consumer mode, the CPU can be physically present and still never reach a usable Windows boot state.

The reported workaround was to make the FSP-M treat Bartlett Lake as though it were a Raptor Lake part. That is a clever form of impersonation, because Raptor Lake is close enough in family lineage to share enough assumptions for memory and system-agent initialization to proceed. In practical terms, the system is being told to initialize a chip using a more familiar desktop personality. That is not the same thing as changing the silicon, but it can be enough to move past the firmware’s refusal point.
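The impersonation idea can be sketched in a few lines. This is a conceptual model only, not the modder's actual patch: the identifiers, the profile table, and both function names are hypothetical, and real FSP-M code works on binary CPU signatures rather than strings.

```python
class UnsupportedPlatform(Exception):
    """Raised when the init routine does not recognize the reported CPU."""


# Hypothetical table of platforms the memory-init routine knows how to handle.
KNOWN_INIT_PROFILES = {
    "desktop-raptor-lake": {"memory_training": "desktop-default"},
}

# Hypothetical alias table: unknown part -> known part it should pose as.
ALIASES = {"embedded-bartlett-lake": "desktop-raptor-lake"}


def memory_init(reported_id: str) -> dict:
    """Stand-in for a memory-init stage that refuses unknown platforms."""
    try:
        profile = KNOWN_INIT_PROFILES[reported_id]
    except KeyError:
        raise UnsupportedPlatform(reported_id) from None
    return {"cpu": reported_id, "profile": profile["memory_training"], "ok": True}


def memory_init_with_alias(actual_id: str, aliases=ALIASES) -> dict:
    """Report an aliased identity to memory_init, but remember the real one."""
    reported_id = aliases.get(actual_id, actual_id)
    result = memory_init(reported_id)
    result["actual_cpu"] = actual_id
    return result


try:
    memory_init("embedded-bartlett-lake")
except UnsupportedPlatform as exc:
    print("rejected:", exc)

print(memory_init_with_alias("embedded-bartlett-lake"))
```

The silicon is untouched in this model. Only the identity presented to the init routine changes, which is why the trick depends on the two parts behaving alike once initialization starts.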

Why memory initialization matters

A lot of users think “booting Windows” begins when the logo appears. In reality, the boot chain starts much earlier, with memory training, platform initialization, and device enumeration. If the firmware mishandles those steps, Windows never gets a fair shot. That makes FSP-level work especially important, because it governs whether the system even reaches the handoff point where the operating system can take over.

The report’s description of the System Agent fix is also meaningful. The system agent is part of the platform logic that coordinates memory, I/O, and other early initialization tasks. If that layer is stabilized, the remaining boot sequence becomes much more ordinary. In a sense, that is the moment the hack stops being a stunt and starts resembling a normal PC boot process with an unusual CPU buried underneath.

- FSP-M is critical because it initializes memory early in boot.

- System Agent behavior can determine whether Windows ever loads.

- CPU masquerading is a firmware trick, not a hardware conversion.

- Raptor Lake similarity likely made the impersonation feasible.

- Windows 11 can run once the platform reaches the OS handoff cleanly.
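The points above describe a chain of stages in which any early failure prevents the operating system from ever starting. A minimal sketch of that ordering follows, assuming a heavily simplified four-stage chain; the stage names are illustrative labels, not actual UEFI or FSP phase names.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[], bool]]


def run_boot_chain(stages: List[Stage]) -> Tuple[bool, List[str]]:
    """Run stages in order and stop at the first one that fails.

    Returns (reached_end, names_of_completed_stages).
    """
    completed: List[str] = []
    for name, step in stages:
        if not step():
            return False, completed
        completed.append(name)
    return True, completed


def make_chain(memory_init_ok: bool) -> List[Stage]:
    """Build a simplified chain where only memory init may fail."""
    return [
        ("cpu_identification", lambda: True),
        ("memory_init", lambda: memory_init_ok),
        ("device_enumeration", lambda: True),
        ("os_handoff", lambda: True),
    ]


# With memory init failing, the chain never reaches the OS handoff.
print(run_boot_chain(make_chain(memory_init_ok=False)))
# With memory init succeeding, every stage completes.
print(run_boot_chain(make_chain(memory_init_ok=True)))
```

In this model the operating system is simply the last stage, so it has no say over a refusal that happens two steps earlier.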

Claude and the AI Angle

The most modern part of the story is not the CPU, the socket, or the motherboard. It is the reported use of Claude to help navigate the firmware changes. That detail will inevitably trigger debate about what AI actually contributed. Was it genuinely code assistance, debugging support, or merely an informed sounding board? The answer probably depends on the person doing the work.

What seems clear is that AI is becoming a practical tool for expert-adjacent technical projects. Firmware and low-level platform work often involve reading messy code, searching for the right assumptions, and comparing behavior across closely related platforms. A model can help surface possibilities faster than a forum thread, especially when the user already knows what to ask and how to verify the answer.

AI as a force multiplier

This is the important nuance: AI does not lower the skill bar so much as reduce the time cost of clearing it. The user still had to understand BIOS behavior, platform identification, and the implications of altering FSP initialization. But the model may have shortened the route from “something is failing” to “this is the component most likely responsible.” That is very different from one-click hacking, and readers should not confuse the two.

There is also an emerging social dimension. Enthusiast communities historically relied on packet captures, spreadsheet notes, and long back-and-forth forum exchanges. AI now sits between those old methods and the actual code, turning scattered clues into hypotheses quickly. For hardware tinkerers, that can mean more experiments, faster iteration, and a greater appetite for taking on projects that previously looked too obscure or too time-consuming.

The risk, of course, is that AI can confidently suggest bad changes. In firmware work, a wrong assumption can produce a no-boot system rather than a useful machine. So the AI story here should be read as assistive tooling, not automatic engineering.

Intel’s Segmentation Strategy

Intel’s product stack increasingly reflects a deliberate separation between consumer, workstation, and embedded markets. The Bartlett Lake family is a textbook example of that approach: the silicon may be mechanically compatible with common desktops, but the validated software and platform targets are much narrower. Intel’s product briefs emphasize Windows 11 IoT Enterprise LTSC, Linux, and embedded deployment scenarios rather than standard DIY desktop builds.

That segmentation protects Intel’s business model in several ways. It lets the company sell parts with different life-cycle promises, power targets, and pricing structures. It also ensures that OEMs and industrial customers get platform behavior that has been tested in a controlled context rather than a broad consumer ecosystem with every imaginable combination of motherboard, memory kit, and GPU.

Why manufacturers care

From Intel’s perspective, unsupported cross-usage creates support ambiguity. If a Bartlett Lake chip boots on an Asus Z790 board after firmware modification, who owns the behavior when something goes wrong? The motherboard vendor? Intel? The user? In practice, the answer is usually “none of the above,” which is exactly why such experiments remain off the official path.

But the enthusiast community sees the same reality as an opportunity. If a socket is the same and the architecture is close enough, the refusal to support becomes a puzzle, not a prohibition. That tension has existed for decades in PC hardware, and the only thing that changes is the complexity of the barrier. Today, that barrier is firmware logic rather than pin compatibility or chipset limits. The game has moved up the stack.

- Consumer segmentation protects official support boundaries.

- Embedded validation prioritizes reliability over experimentation.

- Life-cycle promises are part of the product’s value proposition.

- Unsupported use cases create accountability gray zones.

- Enthusiast culture turns those gray zones into challenges.

Windows 11 on Unsupported Hardware

The report’s most eye-catching claim is that the system could then start Windows 11 on unsupported hardware. That should not be mistaken for Microsoft suddenly blessing the setup. Microsoft’s own materials still distinguish between what can be installed and what is officially supported, and the company continues to document stricter CPU and platform requirements than many older PCs meet.

The practical reality is simpler: Windows can often be made to run on hardware outside the official list, but stability, update reliability, and support status are separate questions. Microsoft has long acknowledged that unsupported installs may work, while still warning that they are outside the guaranteed compatibility matrix. That distinction matters far more in enterprise environments than in hobbyist labs.

Consumer versus enterprise impact

For consumers, a booting Windows 11 desktop on exotic hardware is mostly a curiosity and a bragging right. For enterprises, it is a red flag unless the hardware is explicitly validated. Support contracts, fleet management, compliance, and security posture all depend on predictable behavior, and unsupported platform hacks undermine that predictability immediately.

That said, the case is still useful as a proof of concept. It shows how much of the OS/hardware boundary is enforced by firmware policy rather than hard electrical impossibility. It also highlights how close modern Windows builds are to one another underneath the user interface, with the major differences often living in enablement, licensing, and system initialization layers rather than the kernel itself. That is why the hack is plausible.

A caution is important here: this is not something ordinary readers should attempt on their main machine. A failed firmware experiment can leave a board unbootable, require external flashing hardware, or invalidate support in ways that are hard to undo. The fact that it worked once in a forum post does not make it repeatable or advisable in the real world.

Hardware and Software Convergence

What makes this story compelling is that it sits at the intersection of silicon design, firmware engineering, and operating-system policy. The CPU is physically compatible with the socket. The motherboard is technically capable of powering up the platform. The operating system is not the main obstacle once the earlier layers are massaged into agreement. That means each layer is acting as a gate, and each gate can potentially be rewritten.

This is increasingly how modern PCs work. The old image of a motherboard as a mostly dumb electrical board is obsolete. Today’s boards are software platforms with persistent code, board-specific rules, secure boot logic, and vendor-curated compatibility assumptions. Once you accept that, the Bartlett Lake hack feels less like magic and more like the inevitable result of treating firmware as modifiable software.

The role of similarity

The hack likely worked because Bartlett Lake is close enough to Raptor Lake in relevant platform behavior to fool the initialization stack. That closeness matters. If the silicon were materially different in the early boot path, the impersonation would have failed far earlier. Instead, the reported method suggests that the platform differences were narrow enough for a skilled modifier to bridge them.

This also explains why such experiments don’t automatically generalize. A fix for one embedded SKU on one motherboard with one BIOS revision can be completely useless on another board, another stepping, or another firmware branch. Enthusiast success stories are often specific triumphs, not universal methods. Readers should keep that distinction in mind.

- Layered boot logic creates multiple checkpoints for enforcement.

- Firmware is software and can be modified, but not casually.

- Platform similarity can make spoofing possible.

- Board revision matters as much as CPU family.

- Success is highly specific and rarely portable.

What This Means for Enthusiasts

For the overclocking and modding community, the case is another reminder that the frontier has shifted. The exciting battles are no longer just about clocks, voltages, and cooling. They are about firmware, initialization paths, and persuading complex boards to accept hardware outside the certified matrix. That is a more technical kind of hobbyism, but it is also more rewarding for those who enjoy digging.

It also suggests that the combination of community knowledge and AI assistance may accelerate a new wave of low-level experimentation. Enthusiasts who once relied on a handful of experts with niche knowledge may now get quicker feedback loops from models that can help frame the problem. That does not democratize the skill entirely, but it does compress the learning curve for people already willing to do the hard work.

Practical limits

Still, the appeal of the story should not obscure its narrow usefulness. A Bartlett Lake embedded CPU on a consumer Z790 board is not a bargain desktop upgrade path. It is a curiosity, a proof of concept, and perhaps a test bed for firmware researchers. For everyone else, supported hardware remains the sane choice.

There is also an ethical line worth noting. Reverse engineering for personal learning sits in a different category from commercial misuse or support fraud. Enthusiast communities generally understand that line intuitively, even if outsiders sometimes do not. That is why the tone of posts like this often blends pride, caution, and a healthy dose of “don’t try this at home.”

Strengths and Opportunities

The broader significance of the Bartlett Lake experiment is that it reveals a real, practical convergence between firmware hacking and AI-assisted troubleshooting. The case is not just about one CPU booting on one board; it is about how quickly enthusiasts can now explore the boundaries manufacturers try to enforce. That creates both technical opportunities and cultural momentum for the DIY hardware world.

- AI-assisted debugging can shorten firmware research cycles.

- Embedded CPUs with desktop-like sockets are ripe for experimentation.

- Firmware modding is becoming more accessible to skilled enthusiasts.

- Community documentation can spread low-level knowledge faster.

- Cross-platform compatibility may reveal reuse opportunities in hardware labs.

- All-P-core designs simplify some scheduler and initialization issues.

- Forum-based experimentation still uncovers real platform behavior.

Risks and Concerns

As clever as the hack is, it is also the kind of experiment that can go wrong in expensive and unrecoverable ways. Firmware changes can brick a board, destabilize memory behavior, or introduce subtle issues that only appear under load. And because the platform is outside official support, users are largely on their own when things break.

- Bricking risk is real when modifying BIOS or FSP behavior.

- Memory training failures can cause unstable or non-booting systems.

- Unsupported Windows installs may complicate updates or recovery.

- Warranty and support are likely void once firmware is altered.

- AI suggestions can be misleading if not verified carefully.

- Generalization risk is high; one working setup may not reproduce.

- Security assumptions may be weakened by undocumented changes.

Looking Ahead

The most likely near-term outcome is not a flood of Bartlett Lake desktop builds. It is a slow increase in the sophistication of enthusiast firmware experiments, particularly where AI can help users map problems faster than traditional forum-only research. That could produce more boot successes, more board-specific patches, and more stories that blur the line between engineering and tinkering. It could also encourage hardware makers to harden their firmware checks further. Both things can be true at once.

The bigger industry question is whether segmentation will keep moving deeper into software. If the physical socket becomes less important than the boot policy, then the real battleground shifts to firmware signatures, platform whitelists, and more aggressively locked initialization chains. Enthusiasts will keep probing those boundaries, and vendors will keep deciding whether to tolerate, ignore, or close them.

- More AI-assisted firmware work is likely in enthusiast circles.

- Vendors may tighten checks if modding becomes easier.

- Embedded-to-consumer crossover will remain a niche but persistent theme.

- Forum discoveries will continue to expose hidden platform similarities.

- Low-level experimentation will become more visible as tools improve.
