Background

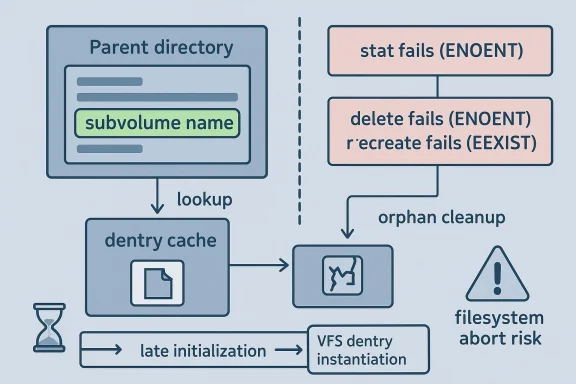

A newly published Linux kernel CVE is drawing attention to a subtle but very real Btrfs failure mode: subvolumes can wind up with broken dentries, making them appear present to the VFS while behaving like dead entries underneath. In the reported scenario, `ls` shows a subvolume name in the parent directory, but `stat` fails, deletion returns `ENOENT`, and fresh create attempts can trip `EEXIST` or even abort the filesystem. The upstream fix is narrowly framed as setting `BTRFS_ROOT_ORPHAN_CLEANUP` during subvolume creation, but the operational consequences are broader than that short commit message suggests.

This is the kind of kernel issue that looks deceptively small until you trace the state machine all the way through lookup, dentry instantiation, orphan cleanup, and delayed inode teardown. The CVE record says the bug was newly published on April 22, 2026 and comes from a kernel.org fix that was already pushed into stable trees through multiple backport references. That timing matters, because it means the issue is not hypothetical or academic; it is part of the live maintenance stream that Linux distributions and vendors are expected to ingest.

The heart of the problem is a mismatch between what the dentry cache believes and what the subvolume actually is. Btrfs lookup into a subvolume can invoke orphan cleanup, and if that path returns `-ENOENT`, the VFS can end up splicing in a negative dentry for something that is in fact a valid subvolume root. Once that happens, the filesystem’s metadata and the name cache stop telling the same story, and recovery becomes messy in the way only storage bugs can be.

The new CVE also fits a familiar Linux kernel pattern: a bug that is not obviously a memory corruption flaw, but still has security relevance because it can trigger denial of service, metadata inconsistency, or forced filesystem aborts. Kernel.org’s CVE process explicitly ties CVE assignment to fixes that have already landed in stable trees, which reinforces the practical orientation of this disclosure. In other words, the existence of a CVE here is not ceremonial; it signals that maintainers considered the bug worthy of downstream remediation.

Overview

Btrfs is a copy-on-write filesystem with a lot of moving parts: subvolumes, snapshots, reference counting, orphan items, delayed inodes, and a dentry model that has to stay coherent across all of it. The flaw described in CVE-2026-31519 arises because the first meaningful call to `btrfs_orphan_cleanup` can occur long after a subvolume has been created, yet the subvolume creation path itself did not mark that root as already eligible for orphan cleanup. That creates a race window where a later lookup can behave as if cleanup is happening for the first time, even though the object has already been visible to the filesystem for some time.

The report is unusually detailed, and that detail is useful because it shows how the bug becomes observable. A broken subvolume dentry is not just a cosmetic nuisance. It interferes with deletion, blocks reuse of the name, and can even provoke an abort if the filesystem receives a conflicting create request over the same path. Those symptoms point to a lookup-time invariant failing in a place where the VFS expects a clean yes-or-no answer.

The report also explains why the issue can survive for some time before becoming visible. Most eviction paths keep references alive through parent dentries, but delayed iputs can loosen that protection. If a child dentry disappears while the inode still has outstanding references, the subvolume dentry can become evictable in a way that reopens the orphan-cleanup race. That is a textbook example of why filesystem bugs are rarely about one line of code; they are about timing, object lifetime, and assumptions that only line up under pressure.

Why this is Btrfs-specific

This is not generic VFS weirdness. It is rooted in the Btrfs root object’s internal state and the fact that the root-level orphan cleanup bit was not being set early enough during subvolume creation. Once the lookup path later tries to run cleanup and finds nothing to clean, it returns `-ENOENT`, and the VFS then treats the result as a missing object instead of a healthy one. That difference is enough to poison the dentry cache entry for the subvolume.
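The poisoning step can be illustrated with a toy name cache. This is plain Python, not kernel code: `NameCache`, `make_flaky_backend`, and the one-shot failure are hypothetical stand-ins for the dentry cache and for a lookup whose cleanup step wrongly returns `ENOENT` once. It only shows why a single bad answer keeps being served until the cache is dropped.

```python
import errno


class NameCache:
    """Toy stand-in for a dentry cache: name -> inode number, or None if negative."""

    def __init__(self, backend_lookup):
        self._backend_lookup = backend_lookup  # hypothetical filesystem lookup
        self._entries = {}

    def lookup(self, name):
        if name in self._entries:
            # A cached answer wins, even when it is a cached "does not exist".
            return self._entries[name]
        try:
            inode = self._backend_lookup(name)
        except OSError as exc:
            if exc.errno != errno.ENOENT:
                raise
            inode = None  # ENOENT is turned into a negative entry
        self._entries[name] = inode
        return inode

    def drop(self):
        """Forget everything, loosely analogous to dropping the dentry cache."""
        self._entries.clear()


def make_flaky_backend(inode_number):
    """Build a backend that wrongly reports ENOENT on its first call only."""
    state = {"calls": 0}

    def backend_lookup(name):
        state["calls"] += 1
        if state["calls"] == 1:
            raise OSError(errno.ENOENT, "cleanup failed during lookup", name)
        return inode_number

    return backend_lookup


cache = NameCache(make_flaky_backend(inode_number=256))
first = cache.lookup("subvol")   # backend fails once, negative entry is cached
second = cache.lookup("subvol")  # served from the cache, backend is not asked
cache.drop()
third = cache.lookup("subvol")   # cache rebuilt from the now-healthy backend
print(first, second, third)      # prints: None None 256
```

The object never stopped existing in the backend; only the cached belief about it was wrong, which is why clearing the cache changes the answer.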

Why the negative dentry matters

A negative dentry is normally just an absence marker; in this context, it is a lie with consequences. Once the subvolume root is represented as “missing,” subsequent operations can misfire, including deletion attempts and new creates over the same name. That is why the record says the bug can be mitigated by dropping the dentry cache: you are not fixing the underlying state, but you are forcing the kernel to rebuild its belief about that path from scratch.

What the fix changes

The fix is conceptually simple: ensure `BTRFS_ROOT_ORPHAN_CLEANUP` is set during subvolume creation so the root cannot later be treated as if orphan cleanup has never been considered. That way, the first lookup into the subvolume does not accidentally enter a cleanup path that was never meant to run against a healthy, fully created root. Simple fixes are often the strongest ones in kernel code, because they tighten the invariant at the point where the invariant is born.

How the Bug Manifests

The observed failure mode is strikingly user-visible. In the example from the report, a parent directory lists a subvolume entry that looks present, but `stat` fails and the entry is effectively unusable. That means the issue straddles two layers at once: the namespace still advertises the object, while the inode and root lookup logic no longer agree on how to interpret it.

That kind of split-brain behavior is especially painful for administrators because it breaks the normal recovery playbook. A subvolume that cannot be deleted, but also cannot be overwritten safely, is a maintenance trap. The report says the condition can be worked around by dropping the dentry cache, which is a clue that the bad state is largely in-memory and cache-mediated, not necessarily an on-disk structural catastrophe. That distinction matters because it tells operators the issue is recoverable, but not cleanly recoverable.
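A read-only check for this "listed but not stat-able" symptom can be sketched in a few lines. The function name `find_phantom_entries` and the injectable `stat_fn` parameter are illustrative choices, not part of any Btrfs tooling; the sketch only uses `os.listdir` and `os.lstat` and never modifies the filesystem.

```python
import errno
import os


def find_phantom_entries(parent, stat_fn=os.lstat):
    """Return names that a directory listing reports but that fail to stat with ENOENT."""
    phantoms = []
    for name in sorted(os.listdir(parent)):
        path = os.path.join(parent, name)
        try:
            stat_fn(path)
        except OSError as exc:
            if exc.errno == errno.ENOENT:
                phantoms.append(name)
            # Other errors (EACCES, EIO, ...) indicate a different problem
            # and are deliberately not reported by this check.
    return phantoms
```

A hit is only a hint: an entry removed between the listing and the `lstat` call produces the same result, so a name is worth investigating only if it keeps showing up on repeated runs.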

The dmesg symptom is equally revealing: "could not do orphan cleanup -2", with -2 meaning `ENOENT`. The stack trace leads through `btrfs_lookup_dentry` into `btrfs_orphan_cleanup`, which means the filesystem is trying to reconcile a subvolume root that should be healthy with orphan logic that expects a different lifecycle state. When that reconciliation fails, the lookup machinery decides the root is negative, and the rest of the failure cascades from there.

Why ENOENT is misleading here

The important nuance is that `ENOENT` is not proving the subvolume is gone. Instead, it is the error returned by a cleanup path that should not have been the gatekeeper for existence in the first place. That is why the bug is so confusing to users: a path can be physically there and logically alive, yet still be reported as missing because one internal helper returned the wrong answer at the wrong time.

Why EEXIST shows up next

Because the cached dentry is negative while the parent directory still carries an entry for the subvolume, the next create operation under that same path can return `EEXIST`: the name looks free to the cache but is still occupied in the filesystem’s own metadata. That is not the kernel being arbitrary; it is the kernel being internally consistent with its own poisoned cache entry. The bad part is that the consistency is built on a false premise, so the filesystem can end up both denying deletion and denying recreation.

Why an abort is so serious

A transaction abort or filesystem abort turns a narrow metadata bug into an availability incident. Even if the underlying data is intact, an aborted filesystem can force service interruption and time-consuming remediation. In enterprise terms, that is enough to justify treating the CVE as operationally significant even if it is not a remote-code-execution issue.

- The bug can present as a live name in the parent directory.

- `stat` can fail even though the subvolume appears visible.

- Deletion may fail with `ENOENT`.

- Recreate attempts can fail with `EEXIST`.

- The filesystem can abort if the state is pushed far enough.
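The kernel-log symptom quoted above can be picked out mechanically. The sketch below assumes only the message text from the report ("could not do orphan cleanup" followed by a negative errno); the timestamp and device prefix in the sample lines are made up for illustration, and `scan_kernel_log` is a hypothetical helper name.

```python
import errno
import os
import re

# Message text quoted in the report; the trailing number is a negative errno.
ORPHAN_CLEANUP_RE = re.compile(r"could not do orphan cleanup (-?\d+)")


def scan_kernel_log(lines):
    """Yield (line, errno_name, description) for each orphan-cleanup failure."""
    for line in lines:
        match = ORPHAN_CLEANUP_RE.search(line)
        if match is None:
            continue
        code = abs(int(match.group(1)))
        name = errno.errorcode.get(code, f"errno {code}")
        yield line.rstrip("\n"), name, os.strerror(code)


# Illustrative input: only the quoted message comes from the report.
sample = [
    "[  100.000000] BTRFS info (device sdX): unrelated message\n",
    "[  101.000000] BTRFS error (device sdX): could not do orphan cleanup -2\n",
]
hits = list(scan_kernel_log(sample))
for line, name, description in hits:
    print(f"{name} ({description}): {line}")
```

In practice the lines would come from `dmesg` or the kernel journal rather than a hard-coded list; decoding the number through `errno.errorcode` avoids having to remember that 2 is `ENOENT`.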

Root Cause Analysis

The interesting part of this CVE is not simply that orphan cleanup can fail. It is that the cleanup path is being used as a state transition guard for a root that should already be marked as participating in that lifecycle. In the report, the maintainers explicitly note that the meaningful first `btrfs_orphan_cleanup` call, the one that sets `BTRFS_ROOT_ORPHAN_CLEANUP`, happens much later than subvolume creation. That delay creates the possibility that the lookup path becomes the first real consumer of a state bit that should have been initialized earlier.

That is a classic kernel bug shape: late initialization of a stateful flag. The code works in the common case because many operations never need to revisit the exact boundary where the flag matters. But once the dentry cache is dropped or delayed iputs shift the object lifetime, the system revisits that boundary and trips over the missing bit. These are the bugs that are hardest to smoke out in testing because they depend on a very specific sequence of visibility, eviction, and cleanup.

The report also shows that the subvolume was “otherwise healthy looking,” meaning there was no partial delete or explicit drop progress to blame. That is important because it narrows the problem away from corruption and toward lifecycle bookkeeping. In plain terms, the filesystem did not lose the subvolume; it lost the ability to explain to itself how that subvolume should be treated during lookup.

The role of the dentry cache

The dentry cache is central here because it caches the name-to-inode relationship. If the first lookup after creation enters orphan cleanup in a state where cleanup is not appropriate, then the cache can hold on to a bad negative result. From that point on, the kernel and user space may be arguing about a path that each side thinks is settled, even though the cached entry is actually wrong.

The delayed-iput corner

The delayed-iput corner case is the most nuanced piece of the report. Ordered extent creation can grab inode references, and if a file is unlinked and closed while those references still exist, the inode can remain alive after the child dentry is freed. That can make the subvolume dentry evictable while the underlying inode is still around, which is precisely the sort of timing gap that allows a latent state bug to surface.

Why the race is narrow but real

The report says there are effectively two races, though only the second and third diagrams are true races. That is a good sign that the maintainers were thinking carefully about the exact interleavings, not just waving at “a race condition” in the abstract. Narrow races often matter more than broad ones because they are harder to reproduce, more likely to survive review, and more likely to show up under stress.

- The root is healthy, not half-deleted.

- The orphan-cleanup bit is set too late.

- Lookup can become the first real cleanup path.

- Cache eviction can expose the race window.

- Delayed iputs complicate inode lifetime.
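The delayed-iput corner is easier to follow as reference-count bookkeeping. The model below is a deliberately small sketch: `Inode`, `Dentry`, and `kill_dentry` are hypothetical Python classes that only mimic the counting described in the report (a child dentry pins its parent, and an extra inode reference outlives the child dentry). It does not reproduce real kernel data structures or locking.

```python
class Inode:
    def __init__(self):
        self.i_count = 0  # number of references keeping the inode alive

    @property
    def alive(self):
        return self.i_count > 0


class Dentry:
    def __init__(self, name, parent=None, inode=None):
        self.name = name
        self.parent = parent
        self.inode = inode
        self.refcount = 0
        if parent is not None:
            parent.refcount += 1  # a child pins its parent dentry
        if inode is not None:
            inode.i_count += 1    # the dentry holds one inode reference

    @property
    def evictable(self):
        return self.refcount == 0


def kill_dentry(dentry):
    """Free a child dentry: drop its inode reference and its pin on the parent."""
    dentry.inode.i_count -= 1
    dentry.parent.refcount -= 1


subvol = Dentry("subvol")
file_inode = Inode()
child = Dentry("file", parent=subvol, inode=file_inode)

file_inode.i_count += 1  # extra reference, e.g. one taken for an ordered extent

kill_dentry(child)       # the file is unlinked and closed
print(file_inode.alive, subvol.evictable)  # prints: True True
```

The final state is the awkward one: the inode is still alive because of the extra reference, yet nothing pins the subvolume dentry any more, so it can be evicted and later looked up again from scratch.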

Why the Fix Is Surgical

The proposed fix is attractive because it does not rewrite Btrfs subvolume handling wholesale. Instead, it moves the point at which the filesystem declares, “this root is subject to orphan cleanup,” to creation time, so the lookup path is no longer forced to infer that state later. That is the kind of patch maintainers like: it aligns the flag with the object lifecycle instead of compensating after the fact.

There is also an important practical point: the change appears small enough to be backported. Kernel fixes live or die on whether they can be transplanted into older trees without pulling in a giant dependency chain. The presence of multiple stable commit references in the CVE record strongly suggests this fix was considered suitable for that exact path.

The deeper engineering lesson is that initialization time matters just as much as cleanup time. Setting a state bit too late is not much better than never setting it at all if the first consumer of that bit makes a decision before the flag is established. In this case, the cleanup bit is not a formality; it is part of the root’s contract with the rest of the filesystem.
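The effect of setting the bit at creation time can be shown with a very small state model. Everything here is a simplification under stated assumptions: `Root`, `orphan_cleanup`, and `lookup` are hypothetical Python stand-ins, the flag is modelled as a test-and-set so that cleanup does real work at most once per root, and the `teardown_in_flight` parameter collapses the whole race into a single boolean.

```python
import errno


class Root:
    """Toy subvolume root with one 'orphan cleanup already accounted for' flag."""

    def __init__(self, set_flag_at_create):
        self.orphan_cleanup_flag = set_flag_at_create


def orphan_cleanup(root, teardown_in_flight):
    """Do cleanup work at most once per root, using a test-and-set on the flag."""
    already_set = root.orphan_cleanup_flag
    root.orphan_cleanup_flag = True
    if already_set:
        return 0  # flag was set earlier, so no cleanup work is attempted
    if teardown_in_flight:
        # Simplified failure: the first real cleanup loses a race with teardown.
        return -errno.ENOENT
    return 0


def lookup(root, teardown_in_flight):
    """Return which kind of dentry a lookup into this root would produce."""
    ret = orphan_cleanup(root, teardown_in_flight)
    return "negative" if ret == -errno.ENOENT else "positive"


before_fix = lookup(Root(set_flag_at_create=False), teardown_in_flight=True)
after_fix = lookup(Root(set_flag_at_create=True), teardown_in_flight=True)
print(before_fix, after_fix)  # prints: negative positive
```

With the flag established when the root is created, a later lookup never becomes the first caller to do cleanup work, so the unlucky timing has nothing to act on.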

Why this is preferable to a lookup workaround

A workaround in lookup would only mask the symptom. It might avoid negative dentries in the broken case, but it would leave the lifecycle ambiguity intact. By setting the bit during subvolume creation, the fix moves correctness earlier in the pipeline and reduces the chance of future code paths accidentally reintroducing the same bug through another caller. That is the more durable fix, even if it is less flashy.

Why the state bit should travel with the root

The root object is the right place to remember cleanup state because the root already owns the semantics of its own lifetime. Putting that knowledge elsewhere would make the code harder to reason about, not easier. Kernel bugs often arise when the “truth” of an object is split across multiple call sites; consolidating that truth into the root’s creation path is the cleanest response.

Why stable maintainers care

Stable maintainers tend to prefer fixes that are easy to explain in one sentence and easy to verify in one test. This one qualifies. The bug is narrow, the symptom is clear, and the fix is a simple invariant correction. That combination usually translates well to vendor kernels, which is where most enterprises actually consume their Linux updates.

- The fix is narrow and targeted.

- It restores the invariant at creation time.

- It is more durable than a lookup-only workaround.

- It is suitable for stable backports.

- It reduces future lifecycle drift.

Operational Impact

For administrators, the most important question is not “can an attacker get code execution?” but “can this bug disrupt storage operations in a way that hurts production?” On that measure, the answer is clearly yes. A broken subvolume dentry can block deletion, block recreation, and in some cases cause the filesystem to abort when it encounters conflicting state. Those are all bad outcomes in environments that rely on Btrfs for snapshots, rollback, or container storage.

Consumer systems are likely to see this less often simply because the specific subvolume and delayed-iput patterns needed to trigger the issue are less common there. Enterprise fleets, by contrast, generate the long-running, concurrent, and repetitive workloads that expose filesystem lifecycle bugs. That asymmetry is why “rare” kernel bugs often become very real in larger deployments.

The issue also complicates incident response. A storage admin faced with a broken-looking subvolume may first assume on-disk corruption, when in fact the underlying state can sometimes be repaired by dropping the dentry cache. That is not a fix you want to discover under pressure, because it blurs the line between metadata inconsistency and cache poisoning. Clearer diagnostics would help, but the safest course is still to apply the fix once it lands in the distro kernel.

Enterprise vs consumer exposure

Enterprises are more exposed because they run more instances, keep them alive longer, and place greater trust in rollback and snapshot semantics. Consumers may never notice the bug unless they happen to hit the exact sequence of creation, cleanup, and cache eviction. The operational risk therefore scales less with feature usage in the abstract and more with the density of Btrfs-managed state in the fleet.

Why snapshot-heavy setups should care

Snapshot-heavy setups increase the importance of root lifecycle correctness because they rely on subvolume metadata being trustworthy. If a subvolume root can fall into an inconsistent dentry state, the snapshot management layer may inherit that confusion during automation. That is a particularly nasty class of failure because it can break the assumptions of backup tooling even when the data itself still exists.

Why this is an availability bug first

This CVE is best understood as an availability and integrity problem rather than an exploit primitive. The bug can prevent expected maintenance actions and can cause the filesystem to reject perfectly plausible operations. In security terms, that is still serious, because availability is one of the core properties defenders are obligated to protect.

- Deletion can fail unexpectedly.

- Recreation can fail unexpectedly.

- Filesystem aborts raise outage risk.

- Enterprise fleets are more likely to hit the race.

- Snapshot and rollback workflows are the most exposed.

Competitive and Ecosystem Implications

Btrfs has always lived under a microscope because it promises advanced features while asking operators to trust complex metadata behavior. A bug like this does not mean Btrfs is uniquely unstable, but it does remind the market that sophisticated filesystem features come with sophisticated correctness demands. Every visibility bug in a filesystem strengthens the conservative bias toward the better-understood alternatives in some workloads, even if those alternatives have their own separate trade-offs.

The broader Linux ecosystem also benefits from these disclosures because the upstream review process remains visible. The report’s detail, combined with stable backport references, shows the maintenance pipeline working: bug found, root cause analyzed, fix applied, and downstream consumers given a clear target. That kind of transparency is one reason kernel bugs can be handled systematically rather than left to drift.

From a competitive standpoint, Btrfs does not need this bug to define its reputation. But high-variance lifecycle bugs are exactly the kind of issues that make storage teams cautious about adopting a filesystem as a default. The real battlefield is trust, and trust is built not just by shipping features, but by proving that edge cases will not collapse under load.

What rivals gain indirectly

Competing filesystems gain a marketing advantage when Btrfs has to publicly explain a race involving broken dentries and orphan cleanup. Operators often read such reports as evidence that complexity must be weighed against operational familiarity. That does not make the alternatives perfect, but it does make them look safer in procurement conversations, which is often enough to matter.

Why transparency helps Btrfs too

At the same time, a clear upstream fix is healthier than a hidden failure mode. The report shows maintainers were able to reason about the issue, isolate the state transition, and land a targeted correction. That is an argument in favor of the ecosystem’s maturity, not against it. Mature software is often the software that exposes its scars in public and then patches them.

Why the CVE matters beyond Linux

Microsoft’s security portal including this CVE is a reminder that Linux kernel issues now show up in mixed-platform vulnerability management workflows. Even organizations that do not think of themselves as “Linux shops” increasingly have Linux in containers, appliance images, edge devices, and storage backends. That makes Btrfs bugs relevant to broader patch governance than they once were.

- Trust is as important as feature depth.

- Public fixes help downstream validation.

- Complex filesystems carry complex edge cases.

- Visibility can be a competitive disadvantage and a maintenance strength.

- Mixed-platform vulnerability tools make Linux bugs more visible. (nvd.nist.gov)

Strengths and Opportunities

There is also a real opportunity here for operators to tighten monitoring around Btrfs subvolume lifecycle events. If a path starts behaving like a missing entry when the object should still be valid, that is exactly the sort of condition worth surfacing early in logs or health checks. The earlier such anomalies are noticed, the easier they are to diagnose before they become filesystem-level incidents.

For vendors, the bug is a good candidate for quick backporting because the fix is small and the behavior change is narrow. That lowers the risk of collateral regressions, which means support teams can ship it with more confidence. In a world where kernel change management is often driven by caution, that matters as much as the technical merit of the patch itself.

- Clear root cause and narrow fix surface.

- Good backport characteristics.

- Useful signal for storage-health monitoring.

- Strong relevance to snapshot-heavy systems.

- Better understanding of Btrfs lifecycle semantics.

Risks and Concerns

The obvious risk is that the bug is subtle enough to evade casual testing but disruptive enough to hurt in production. These are the worst kinds of filesystem defects, because they do not fail loudly and immediately; they fail inconsistently, under particular timing conditions, and often when the system is already under stress. That makes the incident both harder to reproduce and harder to explain.

Another concern is patch lag. The fix may be in upstream and stable trees, but many production systems consume vendor kernels on a delayed schedule. Until fixes arrive in shipping builds, the exposure window remains open, especially in appliance-style deployments that do not track mainline closely. (docs.kernel.org, Backporting and conflict resolution)

There is also the risk of misclassification. Because this does not read as a classic exploit, teams may downgrade it in priority despite the fact that it can abort a filesystem or block crucial administrative actions. That would be a mistake. In storage, reliability failures are security failures when they put data availability and recovery guarantees at risk.

- Hard to reproduce in labs.

- Patch lag can extend real exposure. (docs.kernel.org, Backporting and conflict resolution, The Linux Kernel documentation)

- Diagnostic confusion can mislead responders.

- File-system aborts can amplify impact.

- The bug may be underestimated because it is not RCE.

- Snapshot and rollback users bear the highest pain.

Looking Ahead

The next thing to watch is how quickly downstream distributions fold the fix into their kernel streams. The CVE record already points to multiple stable references, which is encouraging, but the real-world answer depends on how fast vendors translate those commits into shipped updates. For most organizations, the practical difference between “fixed upstream” and “fixed in the image you actually run” is what determines risk.

It is also worth watching whether the bug triggers follow-on cleanups in nearby code paths. Whenever a filesystem bug turns on a late-set lifecycle bit, maintainers often review similar code for other roots or teardown paths that might have inherited the same assumption. That kind of audit tends to produce more small fixes, which is usually a sign of healthy maintenance rather than broader instability.

For operators, the practical checklist is straightforward: confirm kernel provenance, verify whether your Btrfs deployments are affected, and prioritize patching on systems that rely on subvolumes for snapshots or rollback. In the meantime, if a subvolume begins behaving like a phantom entry, treat that as a real filesystem anomaly rather than an oddity to ignore. Kernel cache state and durable filesystem state are not the same thing, and this CVE is a reminder of how dangerous it can be when they drift apart.

- Confirm whether your kernel already contains the backport.

- Prioritize systems that use Btrfs subvolumes heavily.

- Watch for parent-directory entries that cannot be `stat`ed.

- Treat `ENOENT` plus `EEXIST` on the same name as a red flag.

- Track vendor advisories, not just upstream commits.
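The "ENOENT plus EEXIST on the same name" rule from the checklist can be applied to failures that tooling has already recorded. The event format below, a tuple of path, operation, and errno value, is an assumption made for this sketch, and `red_flag_paths` is a hypothetical helper. It only classifies past failures; deliberately retrying a create on a suspect name is not advisable, since the report notes that such attempts can abort the filesystem.

```python
import errno
from collections import defaultdict


def red_flag_paths(events):
    """Return paths where a delete failed with ENOENT and a create failed with EEXIST.

    `events` is an iterable of (path, operation, errno_value) tuples describing
    failures that were already observed; nothing is executed against the filesystem.
    """
    failures_by_path = defaultdict(set)
    for path, operation, err in events:
        failures_by_path[path].add((operation, err))
    return sorted(
        path
        for path, failures in failures_by_path.items()
        if ("delete", errno.ENOENT) in failures
        and ("create", errno.EEXIST) in failures
    )


# Hypothetical failure records, e.g. collected from automation logs.
observed = [
    ("/pool/snapshots/a", "delete", errno.ENOENT),
    ("/pool/snapshots/a", "create", errno.EEXIST),
    ("/pool/snapshots/b", "delete", errno.ENOENT),
]
print(red_flag_paths(observed))  # prints: ['/pool/snapshots/a']
```

Either error alone is ordinary; it is the combination on one name that contradicts itself and deserves a closer look.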

Source: NVD / Linux Kernel Security Update Guide - Microsoft Security Response Center