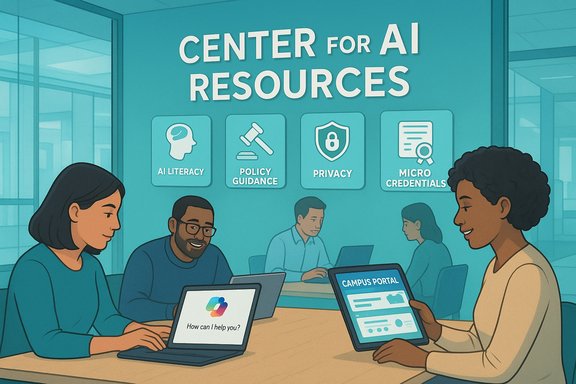

University of Phoenix has launched a centralized Center for AI Resources aimed at helping working adult learners, faculty and staff navigate generative AI responsibly, build practical AI literacy, and use institution-provisioned tools such as Microsoft Copilot within a governed learning environment. The new hub consolidates policy-aligned guidance, how-to prompting material, privacy and safety guidance, and course-facing expectations — and is available inside campus portals so students encounter AI guidance where they study.

Source: StreetInsider University of Phoenix launches Center for AI Resources to help students use generative AI responsibly and effectively

Background / Overview

University of Phoenix’s Center for AI Resources is positioned as a student-first portal that combines foundational AI literacy with practical, course-level expectations and tools. The Center highlights the university’s policy stance on generative AI in coursework, provides step-by-step orientation to institutionally available tools, and emphasizes responsible-use scenarios tied to academic integrity and privacy. The University’s public materials also confirm that students receive Microsoft 365 accounts through which they can access Microsoft Copilot, and that a Generative AI elective and related learning badges are part of the institution’s skills-aligned curriculum.

This launch follows a broader higher-education trend: universities are increasingly moving from rhetoric to operational programs that pair managed tool access (tenant-contained or enterprise-grade Copilot-like services), explicit pedagogy and faculty development, and governance controls such as data classification and DLP. Peer institutional pilots and playbooks emphasize the same priorities (equity of access, faculty readiness, privacy controls, and assessment redesign), all of which appear reflected in the University of Phoenix announcement and its student-facing pages.

What the Center delivers: features and immediate value

The Center for AI Resources is explicitly practical and course-minded. Key features called out by the University include:

- Foundational AI literacy: plain-language explanations of what generative AI is, how it works, and why it matters in workplaces and coursework.

- Coursework expectations: an institutional philosophy and policy framework that clarifies when AI is allowed, how to disclose use, and how faculty will evaluate AI-assisted work.

- Tool orientation and prompting guidance: step-by-step directions for safely using campus-provisioned tools (for University of Phoenix this includes Microsoft Copilot via student Microsoft 365 accounts) plus practical prompting basics.

- Safety, privacy and data hygiene guidance: what counts as sensitive content, how to avoid exposing protected information to external models, and how enterprise protections apply when using institution-managed accounts.

- Benefits and limitations: balanced sections that encourage students to use AI for ideation, productivity and iterative drafting while warning about hallucinations, bias, and the need for verification.

Why this matters for working adult learners

University of Phoenix frames the Center within its broader career-focused, skills-aligned ecosystem. That ecosystem emphasizes:

- Mapping career-relevant skills across degree programs,

- Offering micro-credentials and skills badging,

- Aligning assessments with workplace-relevant tasks, and

- Evaluating real work experience for credit.

Sector context: how University of Phoenix’s move aligns with peers

The University’s approach mirrors a broader, emergent best-practice pattern in higher education:

- Provision enterprise-grade or tenant-contained AI tools to ensure equitable access and enforceable governance.

- Pair platform access with mandatory or well-scaffolded literacy/training modules for both faculty and students.

- Redesign assessments toward process, provenance and demonstrable skills (staged submissions, oral defenses, annotated AI logs).

- Implement technical guardrails — DLP, role-based access control, tenant-level logging — before broad deployment.

Critical analysis — strengths

- Centralized, student-focused guidance

The Center puts practical, course-level guidance where students learn, reducing confusion from conflicting course-by-course rules. Embedding AI literacy inside platforms such as MyPhoenix and the Virtual Student Union increases discoverability and lowers the activation cost for learners.

- Alignment with enterprise tooling (Microsoft Copilot)

By provisioning Microsoft 365 accounts and enabling Copilot, the University gives students hands-on experience with workplace-grade assistants, a real upside for employability. The institutional framing around encryption, enterprise protections and guidance mitigates some privacy risk when compared to students using consumer accounts.

- Career- and skills-aligned pedagogy

Integrating AI literacy into existing skills frameworks and micro-credentials helps make AI competency demonstrable to employers and more than a passing novelty. The decision to tie generative AI guidance to skills mapping and badges is pedagogically smart for adult learners who prioritize direct career value.

- Continuous improvement and feedback loops

The Center’s built-in feedback mechanisms and phased enhancements (video explainers, infographics, expanded topics) show an operational commitment to iterate — which is essential given how rapidly tool capabilities and vendor terms change.

Critical analysis — risks and gaps

- Data governance and vendor contractual guarantees

Even when institutions provide Copilot through Microsoft 365, the promise of “private” or “enterprise-protected” use depends on contract terms, telemetry practices, and retention policies. Institutional marketing language can overstate guarantees if procurement does not secure explicit deletion rights, auditability and non-training clauses with vendors. This is a sector-wide concern and requires documentation and transparency beyond marketing.

- Academic integrity and assessment design

Clear policies alone are insufficient if assessment design still rewards polished final products over process. Without redesigned assessments that make student process visible (iterative drafts, oral defenses, annotated prompt logs), widespread Copilot access could enable sophisticated shortcutting rather than deeper learning. The Center must be paired with faculty development and course-level rubric changes to hold weight.

- Equity and access beyond licensing

Providing Microsoft Copilot via M365 reduces paywall disparities, but access inequities can persist: device constraints, poor connectivity, or differential familiarity with AI tools. The institution must monitor and mitigate these gaps (device lending, low-bandwidth guides, asynchronous training paths).

- Hallucinations, bias and over-reliance

Generative models can produce fluent but incorrect content. Students must be taught verification workflows and citation hygiene. Center content that emphasizes citation, provenance and skepticism is necessary but not sufficient; assessment and grading must require and reward independent verification.

- Vendor lock-in and curriculum conditioning

Deep curricular integration with a single tool or vendor can create future switching costs and condition student skills to vendor-specific interfaces. Promoting vendor-neutral AI literacy (concepts, verification, model-agnostic prompting techniques) preserves portability of graduate competencies.

- Transparency and enforceability of policy

Announced policies must be operationalized: how will faculty enforce disclosure rules? What evidence will suffice to verify AI use? Will AI-usage logs be retained and used in disputes? These procedural questions should be answered in public-facing governance documents and faculty handbooks.

Practical recommendations and tactical checklist

The following actionable checklist synthesizes the University’s stated approach with sector best practices and risk mitigations:

- Governance and procurement

  - Require auditable contract clauses: non-training assurances, deletion/retention schedules, and vendor telemetry visibility.

  - Publish a governance summary for students and faculty that explains what data is logged, how long it is retained, and how to request deletion.

- Technical controls

  - Enforce role-based access control and integrate M365/Entra SSO before broad Copilot-enabled workflows.

  - Apply DLP and Purview sensitivity labels for data types that must never be sent to general-purpose models.

  - Create a sandbox environment for experimentation that isolates sensitive datasets.

- Pedagogy and assessment

  - Redesign high-stakes assessments toward process evidence: staged deliverables, annotated AI prompt logs, reflective disclosures, and oral defenses.

  - Offer assignment exemplars that show acceptable vs. unacceptable AI use in submissions.

- Faculty development and support

  - Require or incentivize faculty to complete short, discipline-specific AI pedagogy modules before integrating AI into graded work.

  - Build an “AI-assessment playbook” and a shared repository of AI-aware assignments and rubrics.

- Student supports and equity

  - Provide low-bandwidth, asynchronous learning modules and device-lending programs for students with connectivity or device constraints.

  - Offer short, practical micro-credentials in prompt literacy, bias awareness, and verification workflows.

- Monitoring and measurement

  - Track KPIs: active users, student-perceived clarity of AI policies, DLP incidents, academic integrity incidents tied to AI, and learning outcomes over time.

  - Publish anonymized dashboards to demonstrate accountability and inform continuous improvement.
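To make the technical-controls item about keeping sensitive data types away from general-purpose models more concrete, here is a minimal Python sketch of a pre-send screen. It is a toy stand-in, not Microsoft Purview or any real DLP product: the pattern names, the regular expressions, and the `find_sensitive` and `safe_to_send` helpers are all assumptions made for illustration, and production DLP policies are far more thorough.

```python
import re

# Hypothetical, deliberately simple patterns for illustration only.
# Real DLP tooling uses validated detectors, context rules, and labels.
SENSITIVE_PATTERNS = {
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "card_number": re.compile(r"\b(?:\d[ -]?){13,16}\b"),
    "email": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
}


def find_sensitive(text: str) -> list[str]:
    """Return the sorted names of every pattern that matches the text."""
    return sorted(
        name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)
    )


def safe_to_send(text: str) -> bool:
    """True only when no sensitive pattern is detected in the text."""
    return not find_sensitive(text)


if __name__ == "__main__":
    draft = "Please review the record for SSN 123-45-6789."
    if safe_to_send(draft):
        print("No sensitive patterns detected.")
    else:
        print("Blocked; matched:", find_sensitive(draft))
```

A screen like this would sit in front of a prompt box or API call and block or warn before anything leaves the device. It complements tenant-level controls and does not replace them.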

Implementation and governance: what to watch for next

- How contractual terms evolve — Watch for published procurement summaries or FAQs that disclose whether vendor commitments include non-use of prompts for model training, access to telemetry/audit logs, and deletion rights. Without visible procurement clarity, privacy promises remain aspirational.

- Faculty adoption and assessment redesign at scale — The Center’s educational impact depends on faculty readiness and willingness to redesign assessments. Look for evidence of mandatory faculty modules, course-level policy templates, and exemplars.

- KPI transparency — Effective programs measure both operational outcomes (licenses, active users) and pedagogical outcomes (student learning gains, employer signal of skills). Publishing these metrics increases institutional credibility and guides iterative improvements.
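As a rough illustration of the KPI point above, the following Python sketch turns raw term-level counts into rates that are comparable across terms. The `TermSnapshot` fields, the choice of metrics, and the sample numbers are all hypothetical; they are not figures or definitions published by the University.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TermSnapshot:
    """Counts for one academic term. All field names are hypothetical."""
    licensed_users: int
    active_users: int
    dlp_incidents: int
    integrity_incidents: int


def per_thousand(count: int, population: int) -> float:
    """Incidents per 1,000 people; 0.0 when the population is empty."""
    return 1000 * count / population if population else 0.0


def summarize(snapshot: TermSnapshot) -> dict[str, float]:
    """Convert raw counts into rates suitable for an anonymized dashboard."""
    adoption = (
        snapshot.active_users / snapshot.licensed_users
        if snapshot.licensed_users
        else 0.0
    )
    return {
        "adoption_rate": adoption,
        "dlp_incidents_per_1000_active": per_thousand(
            snapshot.dlp_incidents, snapshot.active_users
        ),
        "integrity_incidents_per_1000_active": per_thousand(
            snapshot.integrity_incidents, snapshot.active_users
        ),
    }


if __name__ == "__main__":
    # Invented numbers, for illustration only.
    example = TermSnapshot(
        licensed_users=2000, active_users=500, dlp_incidents=5, integrity_incidents=10
    )
    print(summarize(example))
```

Normalizing by active users rather than reporting raw counts keeps a growing user base from making incident trends look worse than they are.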

Quick guide for students and instructors interacting with the Center

- For students:

  - Use Microsoft Copilot provided through your institutional Microsoft 365 account for coursework where permitted, but always verify factual claims and cite sources used or suggested by the tool.

  - Keep simple documentation of your AI interactions (prompt text, timestamps, and short notes on how output was used) for transparency and learning reflection.

  - Never paste personally identifiable or protected data (HIPAA-protected health information, financial records, or proprietary corporate data) into public or consumer-grade AI tools.

- For instructors:

  - Update your syllabus with explicit AI-use expectations and include an assignment-level disclosure template that students can use to state tool usage.

  - Prefer assessment formats that capture process: require drafts, annotated bibliographies, and short oral check-ins for high-stakes work.

  - Make the Center’s guidance and institutional policies an explicit resource on your course main page.
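The student habit described above (recording prompt text, timestamps, and short notes on how output was used) can be kept in something as simple as a spreadsheet. For readers who prefer a script, here is a minimal Python sketch; the `AIInteraction` fields, the helper names, and the JSON Lines format are assumptions chosen for illustration, not a format the Center prescribes.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Iterable, Optional


@dataclass
class AIInteraction:
    """One logged use of an AI tool. Field names are hypothetical."""
    timestamp: str
    tool: str
    prompt: str
    how_used: str


def record_interaction(
    tool: str, prompt: str, how_used: str, when: Optional[datetime] = None
) -> AIInteraction:
    """Build a log entry, stamping it with the current UTC time by default."""
    when = when or datetime.now(timezone.utc)
    return AIInteraction(when.isoformat(timespec="seconds"), tool, prompt, how_used)


def to_jsonl(entries: Iterable[AIInteraction]) -> str:
    """Serialize entries as JSON Lines, one interaction per line."""
    return "\n".join(json.dumps(asdict(entry)) for entry in entries)


if __name__ == "__main__":
    entry = record_interaction(
        tool="Microsoft Copilot",
        prompt="Suggest an outline for a report on supply-chain risk.",
        how_used="Used as a starting outline; rewrote all sections myself.",
    )
    print(to_jsonl([entry]))
```

A log in this shape could be attached to an assignment alongside an instructor's disclosure template, since each line already states the tool, the prompt, and how the output was used.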

Final assessment

University of Phoenix’s Center for AI Resources is a timely, pragmatic move that recognizes two realities: generative AI is already a student study tool, and higher education must shift from bans to taught, governed adoption. The Center’s strengths are its integration into the student experience, alignment with enterprise tooling (Copilot via M365), and its intention to connect AI literacy with career-relevant skills. These features make the Center a useful model for working adult learners who need both practical guidance and employer-aligned competencies.

That said, the initiative’s long-term success will depend on the durability of its governance measures, the clarity and enforceability of procurement terms, the scale and quality of faculty development, and the ability to redesign assessments so they reward learning processes rather than final polish. The Center is a necessary, but not sufficient, element of responsible AI adoption in higher education. Institutions that combine tool access with rigorous procurement, transparent data governance, assessment redesign, and continuous measurement will realize the most durable educational gains.

Closing thoughts

By centralizing AI guidance and coupling it to the institution’s technology ecosystem and skills framework, University of Phoenix has taken a defensible step toward delivering AI literacy to working learners at scale. The Center aligns with a sector trend favoring managed adoption, an approach that balances access, pedagogy, and governance. The immediate priorities now are operational transparency, robust faculty support for assessment redesign, and continued focus on equity so that AI becomes a skill-building partner, not a shortcut or source of inequity.

If the Center is maintained as a living resource, one that publishes governance decisions, measures outcomes, and adapts to tool and regulatory changes, it can be a practical blueprint for how universities translate AI capability into measurable student and workforce value while managing real privacy, integrity and equity risks.