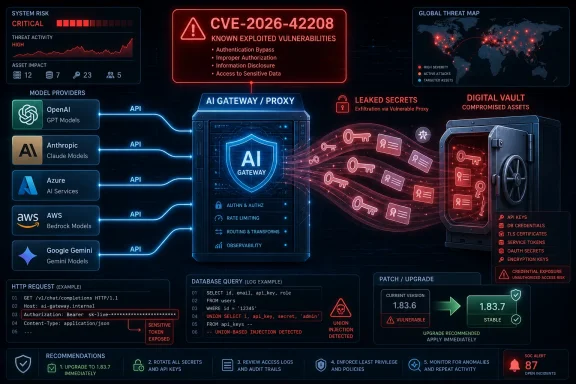

CISA on May 8, 2026, added CVE-2026-42208, a critical SQL injection flaw in BerriAI’s LiteLLM AI proxy, to its Known Exploited Vulnerabilities Catalog after evidence showed attackers were actively exploiting the bug against systems that broker access to large language model services. The entry is only one line in the federal catalog, but it points to a much larger shift in enterprise risk. AI gateways are no longer experimental glue code sitting at the edge of a lab project; they are becoming credential brokers, policy engines, billing chokepoints, and now high-value targets.

CISA’s Small Update Lands on a Large New Attack Surface

The federal government’s Known Exploited Vulnerabilities catalog has always been a blunt instrument by design. CISA does not add every severe bug, every scary proof-of-concept, or every vulnerability with a dramatic CVSS score. It adds vulnerabilities when there is evidence of exploitation and when the agency believes the weakness presents material risk to federal civilian networks.

That is what makes the LiteLLM addition notable. CVE-2026-42208 is not a Windows kernel bug, an Exchange flaw, a VPN appliance zero-day, or one of the familiar enterprise perimeter failures that have dominated KEV updates for years. It is a vulnerability in an open-source AI proxy, the kind of software many organizations adopted quickly because they needed a single way to route traffic to OpenAI, Anthropic, Azure OpenAI, AWS Bedrock, Google Gemini, Cohere, and other model providers.

LiteLLM’s appeal is obvious. It gives developers and platform teams an OpenAI-compatible interface, centralizes provider routing, tracks spending, manages virtual keys, and reduces the chaos of every app team hard-coding its own model credentials. That same design also means a vulnerable LiteLLM instance can become a vault door with a web API on the front.

The CISA alert says the agency added one vulnerability to KEV based on evidence of active exploitation. The affected issue, CVE-2026-42208, is a BerriAI LiteLLM SQL injection vulnerability. Under Binding Operational Directive 22-01, federal civilian executive branch agencies must remediate cataloged vulnerabilities by the due date listed in KEV; in practice, private-sector defenders should treat the catalog as a triage signal rather than a federal-only compliance artifact.

The Bug Is Old-School SQL Injection in a Very New Place

The uncomfortable part of CVE-2026-42208 is how familiar the underlying class of bug is. SQL injection is not a novel AI-era exploit technique. It is one of the oldest and most preventable web application failures: untrusted input is mixed into a database query as text instead of being handled safely through parameterized queries or prepared statements.

In this case, the input was not a search box or a customer name field. It was the bearer token used during proxy API key verification. Reports and advisories describe a vulnerable code path in which LiteLLM checked incoming authorization data against its database and, under certain error-handling conditions, allowed attacker-controlled token content to influence the SQL query.

That matters because the path was reachable before authentication. An attacker did not need a valid LiteLLM key, an account, or prior access to the target organization. A crafted Authorization header sent to an LLM API route could reach the vulnerable query path, making this a pre-authentication SQL injection against software that often stores secrets by design.

Affected versions have been reported as LiteLLM 1.81.16 through versions before 1.83.7, with 1.83.7-stable identified as the fixed release. Security databases and vendor-linked advisories rate the vulnerability as critical, with scores reported in the 9.x range depending on the scoring source. The exact decimal matters less than the shape of the risk: network reachable, no authentication required, high confidentiality impact, and potential integrity impact against the proxy database.
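To make the bug class described in this section concrete, here is a minimal sketch of the difference between text-built SQL and a parameterized query. It is not LiteLLM's code: the in-memory SQLite database, the `tokens` table, and the function names are all assumptions made for illustration, and the real proxy typically sits on PostgreSQL.

```python
import sqlite3


def build_demo_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with one hypothetical token row."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE tokens (token TEXT PRIMARY KEY, owner TEXT)")
    conn.execute("INSERT INTO tokens VALUES ('sk-valid-123', 'app-team')")
    return conn


def lookup_unsafe(conn: sqlite3.Connection, bearer_token: str) -> list:
    # UNSAFE: the token is pasted into the SQL text, so quote characters
    # inside it are parsed as SQL rather than treated as data.
    query = f"SELECT owner FROM tokens WHERE token = '{bearer_token}'"
    return conn.execute(query).fetchall()


def lookup_safe(conn: sqlite3.Connection, bearer_token: str) -> list:
    # SAFE: the token travels as a bound parameter and is only ever compared
    # as a value, whatever characters it contains.
    query = "SELECT owner FROM tokens WHERE token = ?"
    return conn.execute(query, (bearer_token,)).fetchall()


conn = build_demo_db()
crafted = "x' UNION SELECT token FROM tokens --"

unsafe_rows = lookup_unsafe(conn, crafted)  # the stored token leaks out
safe_rows = lookup_safe(conn, crafted)      # no row matches the literal string

print("unsafe:", unsafe_rows)
print("safe:  ", safe_rows)
```

In the unsafe path the crafted value rewrites the query and returns the stored token. In the parameterized path the same string is just a token that matches nothing.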

The AI Proxy Has Become the New Credential Choke Point

For years, defenders have been trained to think about identity providers, password managers, CI/CD systems, and cloud control planes as crown-jewel infrastructure. AI proxies now belong in that conversation. A proxy such as LiteLLM is not merely forwarding chat completions; it can sit between every internal application and every external model provider the organization uses.

That position is operationally convenient. It lets teams centralize logging, enforce policy, apply model allowlists, manage rate limits, track cost, and abstract one provider away from another. It also creates an aggregation point for credentials that were previously scattered across applications, environment variables, CI secrets, and developer laptops.

A successful SQL injection against that layer can therefore expose more than one application’s token. Depending on deployment choices, it may reveal virtual proxy keys, master keys, provider API credentials, database connection strings, and configuration values. In a poorly segmented environment, the attacker does not merely get a way into the proxy; the attacker may get the keys needed to go around it.

That is the architectural sting. Many organizations introduced AI gateways to increase control over model access. CVE-2026-42208 shows that centralizing control without treating the gateway as a high-value secrets system can simply move risk into a more attractive pile.

Exploitation Arrived on the Same Clock as Modern Vulnerability Intelligence

The timeline around this bug follows a pattern defenders now know too well. A patch appears, an advisory becomes public, vulnerability databases index the issue, and automated watchers on both sides of the line begin their work. Legitimate scanners alert defenders; opportunistic attackers assemble payloads.

Reporting from security researchers described exploitation attempts within roughly 36 hours of public advisory indexing. The observed activity reportedly used UNION-based SQL injection payloads and targeted database areas associated with LiteLLM tokens, credentials, and configuration. That is not the behavior of someone randomly spraying apostrophes at every endpoint and hoping for an error message.

The speed matters because it compresses the useful life of traditional patch planning. In older enterprise rhythms, a critical open-source component might have been scheduled into the next sprint, the next maintenance window, or the next quarterly platform update. For internet-facing AI infrastructure, that rhythm is increasingly untenable.

The adversary does not need the same inventory discipline that defenders need. Attackers can scan for exposed services, test a small number of recognizable routes, and move on to the next host if a payload fails. Defenders must know where LiteLLM is deployed, which version is running, whether it is reachable from the internet, which credentials it holds, and who owns the business process that can rotate those credentials.

Federal Deadlines Are a Floor, Not a Strategy

BOD 22-01 gives CISA’s KEV catalog its government teeth. Federal civilian executive branch agencies are required to remediate listed vulnerabilities by the catalog deadline, and the directive was created precisely because actively exploited vulnerabilities are a bad place for discretionary patch timing. Once a CVE is in KEV, “we will get to it eventually” is not a defensible federal plan.

Private organizations are not bound by BOD 22-01, but ignoring KEV because it is a federal requirement misses the point. CISA’s catalog is one of the few widely recognized vulnerability lists filtered by known exploitation rather than theoretical severity alone. In a world where every scanner produces endless critical findings, KEV helps answer the more urgent question: which bugs are attackers already using?

For LiteLLM, the federal due date should be treated as the slowest acceptable outer boundary, not the target. Internet-facing instances should already be patched or isolated. Internal-only instances deserve attention too, because “internal” increasingly includes contractors, CI jobs, developer workstations, service meshes, test harnesses, and compromised identities.

Organizations also need to resist the comforting fiction that patching alone ends the incident. If a vulnerable LiteLLM proxy was exposed during the exploitation window, the safer assumption is that stored secrets may have been accessible. The operational work then becomes more painful: rotate keys, revoke virtual tokens, review provider usage, inspect database logs where available, and verify that model-provider credentials were scoped narrowly enough that a leak would not become a blank check.

Windows Shops Are in the Blast Radius Even When the Bug Is Not in Windows

WindowsForum readers might reasonably ask why an AI proxy vulnerability belongs in a Windows community publication. The answer is that modern Windows estates rarely end at Windows. The same organizations running Windows Server, Entra ID-integrated desktops, SQL Server workloads, PowerShell automation, Azure DevOps pipelines, and Microsoft 365 Copilot pilots are also stitching AI services into internal tools at speed.

LiteLLM is often deployed as Linux-based container infrastructure, but its consumers may be Windows applications, .NET services, internal helpdesk bots, Power Platform connectors, developer tools, or Azure-hosted workloads. A compromise of the proxy can affect Windows users even if no Windows host is directly exploited. The boundary that matters is not the operating system boundary; it is the credential boundary.

This is especially relevant for shops standardizing on Azure OpenAI while still experimenting with other providers. A proxy layer can make that multi-provider strategy manageable, but it can also obscure where credentials live and who is responsible for rotating them. If the AI platform team owns LiteLLM and the Windows infrastructure team owns the applications consuming it, the response can fall between org chart boxes.

The right lesson is not that Windows admins must become AI framework specialists overnight. It is that AI infrastructure has become part of enterprise infrastructure. It belongs in asset inventories, vulnerability management scopes, network diagrams, backup plans, incident response exercises, and privileged access reviews.

The Patch Is Simple; the Cleanup May Not Be

The direct remediation path is straightforward: upgrade LiteLLM to version 1.83.7-stable or later. Advisories describe the fix as eliminating the unsafe query construction in the API key verification path. If an organization can patch immediately, it should.

The harder question is what to do after patching. SQL injection against a credential-bearing database is not like a denial-of-service bug where service restoration is the main task. The meaningful impact is what an attacker may have read or changed before the patch landed.

That means defenders should treat exposed vulnerable deployments as possible secret exposure events. LiteLLM virtual keys should be revoked and regenerated. Provider API keys stored in the proxy should be rotated. Master keys deserve special scrutiny because they can often create or manage other tokens. If the proxy stored database connection strings, cloud credentials, or environment-derived secrets, those should be reviewed as well.

Logs may help, but they should not become an excuse for inaction. Application logs might show suspicious authorization headers, SQL metacharacters, UNION keywords, or anomalous requests to common LLM routes. Network and reverse-proxy logs may show probes from unfamiliar infrastructure. PostgreSQL logs, if sufficiently detailed, may reveal odd SELECT patterns against credential or configuration tables. But many organizations will discover that the logs they wish they had were not enabled before the incident.
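As a rough illustration of the log review described above, the following sketch flags access-log lines whose bearer value contains common SQL injection markers. The log format is an assumption: it presumes the reverse proxy records the header as `authorization="Bearer <value>"`, which many deployments deliberately redact. The patterns are simple heuristics that URL-encoded or obfuscated payloads would evade.

```python
import re

# Hypothetical log format: the header is recorded as authorization="Bearer <value>".
BEARER_VALUE = re.compile(r'authorization="Bearer\s+([^"]*)"', re.IGNORECASE)

# Heuristic markers only: single quotes, SQL comment openers, UNION ... SELECT.
SQL_MARKERS = re.compile(r"'|--|/\*|\bunion\s+(?:all\s+)?select\b", re.IGNORECASE)


def flag_suspicious_lines(lines: list[str]) -> list[int]:
    """Return 1-based numbers of lines whose bearer value looks like SQL."""
    flagged = []
    for number, line in enumerate(lines, start=1):
        match = BEARER_VALUE.search(line)
        if match and SQL_MARKERS.search(match.group(1)):
            flagged.append(number)
    return flagged


# Made-up sample lines; the addresses come from documentation/private ranges.
sample_log = [
    '10.0.0.5 POST /chat/completions authorization="Bearer sk-live-abc123" 200',
    '203.0.113.9 POST /chat/completions '
    'authorization="Bearer x\' UNION SELECT token FROM tokens --" 401',
    "10.0.0.7 GET /health 200",
]

flagged = flag_suspicious_lines(sample_log)
print("suspicious line numbers:", flagged)
```

A hit is a reason to look closer, not proof of compromise. An empty result proves little if the relevant headers were never logged in the first place.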

Workarounds Are Risk Reduction, Not Exoneration

Some advisories and research notes have described configuration-level mitigations that reduce exposure to the vulnerable error-handling path. Those may be useful for emergency containment when patching cannot happen immediately. They are not a substitute for upgrading.

This distinction matters because AI infrastructure is often owned by teams that move faster than central IT. A platform engineer may be tempted to apply a configuration workaround, close the ticket, and wait for the next container rebuild. That is risky when the vulnerability is already in CISA KEV and exploitation has been observed.

Network-layer controls are similarly valuable but incomplete. Placing LiteLLM behind a VPN, private API gateway, identity-aware proxy, or allowlisted ingress path reduces opportunistic scanning. It does not remove the vulnerable code, and it may not protect against compromised internal accounts, malicious insiders, exposed CI runners, or adjacent workloads.

The broader mitigation is to reduce what the proxy can lose. Provider keys should be scoped to the smallest practical set of models, projects, budgets, and permissions. Credentials should be rotated on a schedule that assumes eventual exposure. Administrative functions should be separated from runtime request forwarding. The proxy database should not become a general-purpose dumping ground for every secret an AI application might need.

Open Source Is Not the Villain, but Velocity Is the Problem

It would be easy, and wrong, to turn this incident into a morality play about open-source AI tools. Open source did not invent SQL injection, and commercial AI gateways are not magically immune to authentication-path bugs. The advantage of open source is that fixes, advisories, and independent analysis can move quickly in public.

The problem is the mismatch between adoption velocity and operational maturity. Many organizations adopted AI proxies during a period of intense experimentation. Developers needed model access immediately, finance wanted spend visibility, security wanted some centralized control, and platform teams wanted to avoid a dozen bespoke integrations. A tool like LiteLLM solved a real coordination problem.

But software that begins as an accelerant can quietly become a dependency. Once it holds production credentials and sits in the path of business workflows, it must be governed like infrastructure rather than a developer convenience. That transition often happens without ceremony, budget, or ownership changes.

CVE-2026-42208 is therefore less a referendum on one project than a warning about how quickly AI middleware is being promoted into critical roles. The security model has to catch up with the deployment model. If a proxy can authorize users, spend money, store provider keys, and route sensitive prompts, it deserves the same scrutiny as an API gateway, identity broker, and secrets manager combined.

The Old Vulnerability Classes Still Win in the AI Era

The AI security conversation often gravitates toward prompt injection, model poisoning, jailbreaks, data leakage through retrieval systems, and agentic tool abuse. Those are real issues. But CVE-2026-42208 is a reminder that attackers will happily use boring bugs in exciting systems.

A SQL injection in an AI proxy does not require the attacker to understand transformer architectures. It does not require tricking a model into revealing system prompts. It does not require adversarial examples, malicious embeddings, or a zero-click agent workflow. It requires a reachable endpoint, unsafe input handling, and something valuable in the database.

That should shape defensive priorities. AI-specific threat modeling is necessary, but it cannot replace web application fundamentals. Parameterized queries, input handling, least privilege, logging, segmentation, dependency updates, and secret rotation remain the controls that decide whether a vulnerability becomes a breach.

The novelty is where those old controls now need to be applied. The AI gateway is not just another microservice. It may be the place where every app’s model traffic, every provider’s key, and every governance promise converges. When an old bug lands there, the impact looks new.

Microsoft-Centric Enterprises Need to Inventory the Invisible AI Layer

For organizations standardized on Microsoft infrastructure, the obvious AI inventory starts with Copilot, Azure OpenAI, Entra ID permissions, Purview controls, Defender alerts, and Azure networking. That inventory may miss the shadow layer: open-source proxies, SDK wrappers, internal gateways, and developer-run containers that connect Microsoft environments to non-Microsoft model providers.

LiteLLM is exactly the kind of component that can appear in that shadow layer. A team might deploy it to normalize API calls from a .NET application. A data science group might use it to compare model output across Azure OpenAI and Anthropic. A support automation project might run it in Kubernetes with a handful of provider keys and minimal oversight because it started as a pilot.

The danger is not experimentation itself. The danger is production value accumulating inside experimental infrastructure. Once a tool stores real provider credentials, handles real customer prompts, or becomes embedded in business workflows, it should be pulled into the same governance loop as any other production service.

Windows and Microsoft administrators can help by asking practical questions. Are any internal apps calling a LiteLLM endpoint? Are container registries, Kubernetes clusters, Azure Container Apps, developer VMs, or Linux hosts running LiteLLM images or Python packages? Are API keys for Azure OpenAI or other providers stored in a proxy database rather than a managed secret store? Are those keys scoped, logged, and rotated?
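One of those questions, whether a given Python environment has the LiteLLM package installed, can be answered with the standard library alone. The sketch below is a starting point under narrow assumptions: it inspects only the interpreter it runs in, so container images, other virtual environments, and remote hosts each need their own check or a proper software inventory tool. The helper name `installed_version` is made up for this example.

```python
from importlib import metadata
from typing import Optional


def installed_version(package: str = "litellm") -> Optional[str]:
    """Return the installed version of a distribution, or None if it is absent."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


found = installed_version()
if found is None:
    print("litellm is not installed in this Python environment")
else:
    print(f"litellm {found} is installed; compare it against the advisory's fixed release")
```

Run from a scheduled job or configuration-management hook, this kind of check can at least surface developer VMs and build agents that quietly picked up the package.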

CISA’s Alert Is Really About Prioritization Discipline

The security industry has made vulnerability management both more data-rich and more exhausting. Organizations now receive CVSS scores, EPSS estimates, vendor advisories, GitHub alerts, scanner findings, exploit chatter, package manager warnings, and cloud security posture recommendations. The result is not always clarity. Often it is a queue no human team can drain.

KEV cuts through that by emphasizing exploitation. When CISA adds a vulnerability, the message is not that every other bug can be ignored. The message is that this one has crossed from possibility into observed attacker behavior, and the remediation clock should move accordingly.

CVE-2026-42208 deserves that treatment because the target class is expanding. AI gateways are increasingly common, often internet reachable, and frequently connected to valuable credentials. Even organizations that do not run LiteLLM should use the alert as a prompt to examine equivalent systems.

The important distinction is between vendor positioning and operational reality. A product may describe itself as a proxy, gateway, router, observability layer, cost manager, or developer platform. Defenders should describe it in terms of what it can access. If it can read or mint keys, spend money at provider APIs, access sensitive prompts, or alter routing policy, it is critical infrastructure.

The LiteLLM KEV Entry Turns AI Middleware Into a Board-Level Risk Conversation

The concrete response to CVE-2026-42208 is not complicated, but the implications are broader than one upgrade command. CISA’s addition should push organizations to treat AI middleware as part of the security perimeter, even when it runs deep inside a cloud environment or behind a developer-friendly abstraction.

- Organizations running LiteLLM versions from 1.81.16 through versions before 1.83.7 should upgrade to 1.83.7-stable or later immediately.

- Any vulnerable LiteLLM instance that was reachable during the disclosure and exploitation window should be treated as a possible credential exposure event.

- Teams should rotate LiteLLM virtual keys, master keys, and upstream model-provider credentials stored in affected deployments.

- Security teams should review logs for suspicious authorization headers, SQL injection syntax, unusual LLM route traffic, and abnormal provider API usage after the likely exposure period.

- AI proxies should be placed behind strong network and identity controls, but those controls should supplement patching rather than replace it.

- Enterprises should scope model-provider credentials narrowly so that a proxy compromise does not become an organization-wide AI account compromise.
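The affected range named above (1.81.16 up to, but not including, 1.83.7) can be checked mechanically when triaging an inventory. This is a minimal sketch that compares only the three numeric components and ignores suffixes such as `-stable` or release-candidate tags, so borderline builds should still be confirmed against the advisory. The function names are illustrative.

```python
import re

FIRST_AFFECTED = (1, 81, 16)  # first version reported as affected
FIRST_FIXED = (1, 83, 7)      # 1.83.7-stable is reported as the fixed release


def parse_version(text: str) -> tuple:
    """Extract (major, minor, patch) from strings like '1.83.7-stable' or 'v1.82.0'."""
    match = re.match(r"v?(\d+)\.(\d+)\.(\d+)", text.strip())
    if match is None:
        raise ValueError(f"unrecognized version string: {text!r}")
    return tuple(int(part) for part in match.groups())


def is_affected(version_text: str) -> bool:
    """True when the version falls in the reported range [1.81.16, 1.83.7)."""
    return FIRST_AFFECTED <= parse_version(version_text) < FIRST_FIXED


for candidate in ["1.81.15", "1.81.16", "1.83.6", "1.83.7-stable", "1.84.0"]:
    status = "affected" if is_affected(candidate) else "outside reported range"
    print(f"{candidate}: {status}")
```

Tuple comparison keeps the logic dependency-free. A version outside the range says nothing about whether an instance was exposed while it was still running an affected build.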

CISA’s May 8 KEV update is small enough to skim past and serious enough to deserve a change in posture. CVE-2026-42208 shows that the next major enterprise AI incident does not need to begin with a science-fiction prompt attack; it can begin with a decades-old SQL injection in the system everyone trusted to make AI access manageable. The organizations that come out ahead will be the ones that inventory those gateways now, patch them on exploitation timelines, and design them so the next bug leaks as little power as possible.

Source: CISA, “CISA Adds One Known Exploited Vulnerability to Catalog”