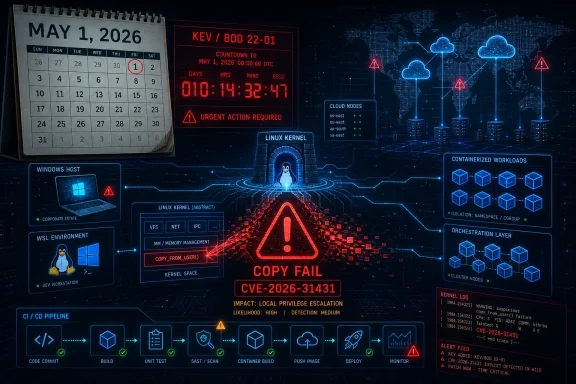

CISA added CVE-2026-31431, a Linux kernel local privilege escalation flaw known as “Copy Fail,” to its Known Exploited Vulnerabilities Catalog on May 1, 2026, after evidence of active exploitation, triggering mandatory remediation for U.S. federal civilian agencies under BOD 22-01. The move turns a fast-moving Linux security story into an operational deadline. It also reminds Windows-heavy IT shops that Linux risk is now woven through their estates: WSL, containers, appliances, cloud images, CI runners, hypervisors, and security tools all carry kernels somewhere. This is not a remote worm headline; it is the kind of privilege-escalation bug that makes every foothold more dangerous.

CISA Turns a Kernel Bug Into a Clock

CISA’s KEV Catalog is not a vulnerability encyclopedia. It is a triage instrument, and that distinction matters. When a CVE lands there, the government is saying the vulnerability is not merely plausible, not merely severe on paper, but known to be exploited in the wild.

For federal civilian agencies, that changes the conversation from “track and evaluate” to “fix by the due date.” Binding Operational Directive 22-01 created that machinery in 2021, and it has become one of the more useful signals in a noisy vulnerability market because it collapses theoretical severity into observed attacker behavior.

CVE-2026-31431 fits that model awkwardly and perfectly. Awkwardly, because it is a local privilege escalation rather than a remote code execution flaw. Perfectly, because modern intrusions are chains, and local root is often the step that converts an annoying compromise into full system control.

The catalog entry is also a shot across the bow for organizations that still rank Linux patching below Windows patching by habit. In 2026, few enterprises are “Windows shops” or “Linux shops” in any clean sense. They are mixed estates where Windows administrators may own identity, endpoint, and productivity, while Linux quietly powers the build systems, scanners, appliances, workloads, clusters, edge boxes, and management platforms.

Copy Fail Is Dangerous Because It Is Boringly Practical

The public name, “Copy Fail,” has the theatrical quality modern vulnerability branding tends to acquire. The underlying issue is less theatrical and more troubling: a logic flaw in the Linux kernel’s cryptographic interface that can reportedly let an unprivileged local user corrupt the page cache of readable files and climb to root.

That description should make sysadmins sit up for one reason: it does not depend on a glamorous network-facing daemon. The attacker needs local code execution first, but local code execution is not rare. A compromised web app account, a malicious dependency running in a build job, a low-privilege SSH credential, a vulnerable containerized workload, or an abused automation account can all provide that first step.

The technical path runs through the kernel’s `algif_aead` code, part of the interface that exposes authenticated encryption with associated data (AEAD) operations to user space. Kernel maintainers resolved the issue by reverting an old in-place processing optimization, effectively acknowledging that the clever performance shortcut had created dangerous complexity around memory mappings.

The important operational detail is that exploitation is described as reliable. Researchers and security vendors have characterized the proof of concept as small, portable, and not dependent on winning a race condition. That puts it in a different mental category from kernel bugs that are theoretically severe but practically temperamental.

A Local Bug Still Belongs on the Front Page

Security teams often treat local privilege escalation as second-tier risk because it requires a prior foothold. That instinct is understandable and increasingly obsolete. Attackers rarely need one spectacular bug when two ordinary ones will do.

A local privilege escalation bug is an accelerant. If an attacker compromises a low-privilege Linux service account and then immediately becomes root, every other control on the machine gets thinner. Logs can be altered, credentials can be harvested, agents can be tampered with, lateral movement tooling can be staged, and persistence can be installed below the level most application teams inspect.

This is especially important in environments that rely on Linux as shared infrastructure. Multi-tenant systems, research clusters, CI/CD runners, developer jump boxes, container hosts, and SaaS platforms that execute customer or user-supplied code all turn “local user” into a much broader category than it sounds. On those systems, unprivileged code execution may be a feature, not a breach.

The risk is not evenly distributed. A single-user laptop with full-disk encryption and little exposed service surface is less urgent than a shared runner that builds untrusted pull requests. But the direction of travel is clear: wherever low-privilege Linux code can run, this bug can potentially erase the privilege boundary that made that arrangement tolerable.

The Nine-Year Lesson Is About Complexity, Not Neglect

One of the more uncomfortable details around CVE-2026-31431 is the age of the vulnerable path. Reporting and technical analysis point to a lineage involving kernel changes that accumulated over years, with the problematic optimization dating back to 2017. That does not mean maintainers were asleep for nine years. It means subtle kernel interactions can remain quiet until someone composes the right sequence.

That matters because Linux’s strength is also its weakness. It is a vast, portable, high-performance kernel used in almost every computing context. Features that are safe in one mental model can become risky when combined with another subsystem, another syscall, another memory mapping assumption, or another optimization.

The flaw’s classification as an “Incorrect Resource Transfer Between Spheres” sounds like taxonomy soup, but it captures the deeper failure. Data or authority crosses a boundary it should not cross. In this case, the dangerous boundary is not between two network zones; it is between what user space is allowed to read, what the kernel is allowed to transform, and what should never be modified without write permission.

This is why the fix is not a grand redesign. It is a retreat from cleverness. The kernel patch removes complexity from the AEAD operation path and returns to a safer model where the source and destination handling is less susceptible to confused ownership.

The Exploit Chain Starts Where Enterprises Are Messiest

For WindowsForum readers, the temptation is to file this as “Linux news.” That would be a mistake. The modern Windows estate is surrounded by Linux in ways that are easy to overlook because they do not always appear in endpoint dashboards.

WSL 2 runs a real Linux kernel in a lightweight virtual machine. Containers on developer workstations and build hosts often use Linux images. Kubernetes nodes are typically Linux. Many backup, monitoring, EDR, SIEM, vulnerability scanning, storage, firewall, and identity appliances run Linux under the hood. Cloud workloads may be Linux even when the corporate desktop is not.

The practical question is not “Do we run Linux?” It is “Where do we allow untrusted or semi-trusted code to run on Linux kernels?” That includes CI systems that build external pull requests, data science notebooks, shared bastion hosts, container platforms, managed hosting environments, security sandboxes, and developer machines with broad repository access.

Those are precisely the places where local privilege escalation can have disproportionate blast radius. A compromised CI runner can become a credential theft platform. A rooted container host can threaten workloads that teams assumed were isolated. A rooted developer Linux VM can expose signing keys, cloud tokens, package publishing credentials, or private source code.

The Container Angle Deserves More Than a Footnote

Container security often rests on a layered bargain: containers are not virtual machines, but namespaces, cgroups, seccomp, capabilities, AppArmor, SELinux, and runtime hardening can make them good enough for many workloads. Kernel privilege escalation bugs strain that bargain because all containers on a host share the same kernel.

That does not mean every containerized workload is automatically exploitable. Runtime configuration matters. Seccomp profiles may block relevant syscalls. The vulnerable kernel functionality may be unavailable or restricted. The attacker still needs code execution in a container and a path from that environment to the vulnerable interface.
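A quick way to check that path on a given host or inside a given container is to ask whether an unprivileged process can open the AEAD side of AF_ALG at all. The Python sketch below assumes Linux and a Python build that exposes `socket.AF_ALG`; the function name, the status labels, and the `gcm(aes)` algorithm choice are illustrative assumptions. It only creates a socket and binds a transform. It processes no data and does not test for the vulnerability, so “reachable” means the interface is available, not that the kernel is unpatched.

```python
import errno
import socket


def probe_af_alg_aead(algorithm: str = "gcm(aes)") -> str:
    """Classify whether this process can reach the AF_ALG AEAD interface.

    Hypothetical helper. Returns "unsupported", "blocked", "no-aead", or
    "reachable". Opens a socket and binds a transform only; no data is
    encrypted and the vulnerability itself is not tested.
    """
    family = getattr(socket, "AF_ALG", None)
    if family is None:
        return "unsupported"  # non-Linux platform or Python build without AF_ALG
    try:
        sock = socket.socket(family, socket.SOCK_SEQPACKET, 0)
    except OSError as exc:
        if exc.errno == errno.EAFNOSUPPORT:
            return "unsupported"  # kernel does not offer the AF_ALG family
        return "blocked"  # e.g. EPERM from a seccomp filter or security module
    with sock:
        try:
            # Binding selects the transform type ("aead") and algorithm name.
            sock.bind(("aead", algorithm))
        except OSError:
            return "no-aead"  # AEAD type or this algorithm is unavailable here
    return "reachable"


if __name__ == "__main__":
    print(probe_af_alg_aead())
```

Running it on the host and again inside a container started with the production runtime profile shows whether seccomp or other policy actually stands between workloads and the interface.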

But the broad strategic point remains: container isolation is only as strong as the kernel boundary underneath it. A local root exploit that works from an unprivileged account is exactly the kind of bug that makes cluster operators revisit assumptions about tenant separation.

This is where cloud-native environments should prioritize. If a fleet includes internet-facing containers, customer-executed workloads, shared build infrastructure, or internal platforms where many teams deploy code, patching those nodes should outrank low-risk single-purpose servers. The patch order should follow exposure and trust boundaries, not asset age or convenience.

KEV Is a Signal, Not a Substitute for Engineering Judgment

CISA’s catalog is valuable because it is conservative. It does not list every severe vulnerability. It lists vulnerabilities for which exploitation evidence has changed the risk equation. That makes it a useful forcing function, especially for organizations drowning in scanner output.

But KEV should not become a substitute for architecture-aware prioritization. The same CVE can demand emergency treatment on one host and routine treatment on another. A shared Linux shell server, an exposed web application host, a Kubernetes worker, and a single-purpose offline appliance do not carry the same risk just because they share a kernel lineage.

The right response is to combine CISA’s signal with asset context. Which vulnerable systems allow local users? Which run containers? Which execute untrusted jobs? Which hold credentials that unlock cloud control planes? Which are difficult to rebuild if compromised? Which sit in management networks?

This is where many vulnerability programs still struggle. They know CVEs and package versions but not trust relationships. Copy Fail is a reminder that the position of a vulnerable kernel in the enterprise often matters more than the CVSS score attached to it.

The Patch Story Is Simple Until It Meets Reality

At the kernel level, the remediation path is straightforward: update to a fixed kernel or a vendor backport that includes the relevant fix. The complication is that enterprise Linux patching rarely means pulling vanilla upstream kernels. It means waiting for Red Hat, Canonical, SUSE, Debian, Amazon, Oracle, appliance vendors, cloud image maintainers, and embedded platform suppliers to ship tested packages.

That lag is not always negligence. Kernel updates can affect drivers, security agents, storage stacks, live patching systems, and compliance baselines. Reboots require windows. Cluster nodes need draining. Appliances may require vendor-certified firmware. In regulated environments, even an urgent kernel patch can become a change-control event.

Still, the existence of change control is not an excuse for paralysis. Local privilege escalation with public exploit code and KEV status is exactly the class of vulnerability that justifies emergency procedures. If the organization’s emergency kernel patch process cannot move quickly for this, it likely cannot move quickly for the next one either.

Temporary mitigations deserve attention but not overconfidence. Blocking or restricting AF_ALG access through seccomp policies, hardening container profiles, or disabling the affected user-space crypto interface where feasible can reduce exposure. Those controls may be excellent short-term measures, but they should be treated as compensating controls on the way to kernel remediation, not a permanent landing zone.
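For container runtimes that accept Docker/OCI-style seccomp JSON, one way to express the AF_ALG restriction is to stop allowing `socket` unconditionally and allow it only when the first argument (the address family) is not AF_ALG, which is 38 on Linux. The Python sketch below assumes a deny-by-default profile using the `defaultAction`/`syscalls`/`names`/`action`/`args` schema; the function name and sample profile are hypothetical, and the output should be tested against the runtime actually in use before rollout. It does not cover the legacy 32-bit `socketcall` multiplexer, whose arguments seccomp cannot inspect.

```python
import copy
import json

AF_ALG = 38  # Linux address-family number for AF_ALG


def restrict_af_alg(profile: dict) -> dict:
    """Return a copy of a deny-by-default seccomp profile that no longer
    permits socket(AF_ALG, ...). Hypothetical helper; input is not modified."""
    if profile.get("defaultAction") in ("SCMP_ACT_ALLOW", "SCMP_ACT_LOG"):
        raise ValueError("expected a deny-by-default profile")
    new = copy.deepcopy(profile)
    rules, socket_was_allowed = [], False
    for rule in new.get("syscalls", []):
        names = rule.get("names", [])
        if "socket" in names and rule.get("action") == "SCMP_ACT_ALLOW":
            if rule.get("args") or rule.get("includes") or rule.get("excludes"):
                # Conditional socket rules need a human decision, not a rewrite.
                raise ValueError("conditional socket rule present; review manually")
            socket_was_allowed = True
            names = [n for n in names if n != "socket"]
            if not names:
                continue  # the rule only covered socket; drop it entirely
            rule["names"] = names
        rules.append(rule)
    if socket_was_allowed:
        # Re-allow socket only when arg 0 (the address family) is not AF_ALG,
        # so AF_ALG requests fall through to the profile's default deny action.
        rules.append({
            "names": ["socket"],
            "action": "SCMP_ACT_ALLOW",
            "args": [{"index": 0, "value": AF_ALG, "op": "SCMP_CMP_NE"}],
        })
    new["syscalls"] = rules
    return new


if __name__ == "__main__":
    # Hypothetical, heavily trimmed profile for illustration only.
    sample = {
        "defaultAction": "SCMP_ACT_ERRNO",
        "syscalls": [{"names": ["read", "write", "socket"], "action": "SCMP_ACT_ALLOW"}],
    }
    print(json.dumps(restrict_af_alg(sample), indent=2))
```

Replacing the unconditional allow, rather than adding a separate deny rule beside it, avoids depending on how a given seccomp library resolves two rules with different actions for the same syscall. Starting from a copy of the runtime's existing default profile also matters: a hand-written allow-by-default profile would quietly drop every other restriction the default provides.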

Windows Administrators Have Linux Homework Now

The center of gravity for Windows administrators has shifted. A decade ago, a Windows admin could plausibly focus on domain controllers, Group Policy, WSUS, SCCM, Exchange, file servers, and endpoint images. Today, the same team may be accountable for Entra ID integrations, Defender telemetry, Azure workloads, GitHub or Azure DevOps pipelines, Kubernetes-backed applications, and developer environments full of Linux tooling.

That does not mean Windows admins must become kernel engineers. It means they need enough Linux situational awareness to ask sharper questions. Which business services depend on Linux? Which security tools deploy Linux collectors? Which developer workflows use WSL? Which cloud images are blessed? Who maintains which container base images and hosts?

WSL deserves special mention because it sits at the cultural intersection of Windows convenience and Linux attack surface. WSL 2 uses a Microsoft-provided Linux kernel, and enterprises that allow it broadly should track kernel servicing as part of endpoint governance. A developer workstation that can build production code, access internal repos, hold cloud credentials, and run Linux workloads is not a casual endpoint.

For many organizations, the bigger risk is not that every Windows admin forgot about Linux. It is that Linux ownership is split across platform teams, app teams, cloud teams, security engineering, vendors, and individual developers. Copy Fail is the kind of incident that exposes those seams.

Detection Will Be Noisy, But Silence Is Worse

Runtime detection for this class of bug is challenging because the exploit path uses legitimate kernel interfaces. AF_ALG sockets exist for a reason. Crypto tooling, disk encryption utilities, and specialized applications may use them legitimately. Blocking or alerting on every use can generate noise.

Even so, organizations should not wait for perfect detection before acting. Suspicious creation of AEAD-related AF_ALG sockets by unexpected processes is a useful signal. So is unusual execution of setuid binaries following strange syscall patterns. So is untrusted code spawning low-level crypto interface activity from containers or build jobs.
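As a starting point for the AF_ALG signal, Linux audit can record `socket` calls whose first argument is AF_ALG, and a small parser can flag callers outside an allowlist. The Python sketch below assumes x86-64 (audit `arch=c000003e`, where `socket` is syscall 41) and the classic `key=value` SYSCALL record format, in which syscall arguments are printed in hex, so AF_ALG (38) appears as `a0=26`. How those records are generated (for example, an audit rule filtering `socket` on `a0`) is left to local policy, and the function names and allowlist contents are hypothetical. Socket creation alone does not show whether the caller later bound an AEAD transform, so this flags all AF_ALG use and inherits the noise trade-off described above.

```python
import re

# Assumptions: x86-64 audit records in the classic key=value format.
X86_64_ARCH = "c000003e"  # audit's arch token for x86-64
SOCKET_SYSCALL = "41"     # socket(2) syscall number on x86-64
AF_ALG_HEX = "26"         # audit prints arguments in hex; 0x26 == 38 == AF_ALG

FIELD = re.compile(r'(\w+)=("[^"]*"|\S+)')


def parse_fields(record: str) -> dict:
    """Split one audit record into key=value pairs, dropping surrounding quotes."""
    return {key: value.strip('"') for key, value in FIELD.findall(record)}


def af_alg_socket_events(lines, allowed_exes=frozenset()):
    """Yield (exe, uid, pid) for SYSCALL records showing socket(AF_ALG, ...)
    from an executable not on the allowlist. Failed attempts (success=no)
    are included, since a blocked attempt is also worth a look."""
    for line in lines:
        fields = parse_fields(line)
        if (fields.get("type") == "SYSCALL"
                and fields.get("arch") == X86_64_ARCH
                and fields.get("syscall") == SOCKET_SYSCALL
                and fields.get("a0") == AF_ALG_HEX
                and fields.get("exe") not in allowed_exes):
            yield fields.get("exe"), fields.get("uid"), fields.get("pid")
```

The parser expects raw records, such as the lines of the audit log file; interpreted output (for example from `ausearch -i`) rewrites numeric fields into names and would not match.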

The goal is not to build a magical exploit detector that catches every variation. The goal is to raise the cost of post-exploitation. If an attacker lands in a low-privilege service account and immediately begins interacting with obscure kernel crypto interfaces, that should look strange in most environments.

Security teams should also revisit forensic assumptions. Some reporting suggests exploitation may alter page cache state without changing the file on disk, which weakens file integrity monitoring as a sole defense. That is a useful reminder that runtime behavior and memory-backed state can matter as much as hashes on disk.

The CVSS Number Is Less Important Than the Exploit Shape

CVE-2026-31431 carries a high CVSS rating from kernel.org, but the number is not the most interesting part. CVSS treats the bug as local, low-complexity, requiring low privileges, requiring no user interaction, and potentially affecting confidentiality, integrity, and availability. That is a fair mechanical summary.

But defenders should focus on exploit shape. Is public code available? Is exploitation reliable? Does it require a race? Does it work across common distributions? Does it need special configuration? Does it give root? Can it be chained after common initial-access bugs? Those answers drive urgency more than a decimal score.
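The CVSS properties listed above map onto the base-score formula, and writing the arithmetic out shows what the number does and does not capture. The Python sketch below assumes CVSS v3.1, Scope: Unchanged, and high confidentiality, integrity, and availability impact; kernel.org's exact vector is not quoted here, so the result is illustrative rather than the published score. Under those assumptions, AV:L/AC:L/PR:L/UI:N/C:H/I:H/A:H evaluates to 7.8, in the High band.

```python
import math

# CVSS v3.1 base-metric weights (Scope: Unchanged values for PR).
AV = {"N": 0.85, "A": 0.62, "L": 0.55, "P": 0.2}
AC = {"L": 0.77, "H": 0.44}
PR_UNCHANGED = {"N": 0.85, "L": 0.62, "H": 0.27}
UI = {"N": 0.85, "R": 0.62}
CIA = {"H": 0.56, "L": 0.22, "N": 0.0}


def roundup(value: float) -> float:
    """CVSS v3.1 Roundup: smallest one-decimal number >= value, float-safe."""
    scaled = round(value * 100_000)
    if scaled % 10_000 == 0:
        return scaled / 100_000
    return (math.floor(scaled / 10_000) + 1) / 10


def base_score_unchanged(av, ac, pr, ui, c, i, a) -> float:
    """Base score for a Scope:Unchanged vector."""
    iss = 1 - (1 - CIA[c]) * (1 - CIA[i]) * (1 - CIA[a])
    impact = 6.42 * iss
    if impact <= 0:
        return 0.0
    exploitability = 8.22 * AV[av] * AC[ac] * PR_UNCHANGED[pr] * UI[ui]
    return roundup(min(impact + exploitability, 10))


if __name__ == "__main__":
    # Assumed vector: AV:L/AC:L/PR:L/UI:N/S:U/C:H/I:H/A:H
    print(base_score_unchanged("L", "L", "L", "N", "H", "H", "H"))  # 7.8
```

None of those inputs records whether a public exploit exists, whether it needs a race, or whether it works across distributions. The score encodes preconditions; the exploit shape has to be assessed separately.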

This is why CISA’s KEV decision matters. The catalog is not saying “this scored high.” It is saying exploitation has crossed from possibility into operational reality. That distinction should override the familiar internal debate about whether local bugs deserve emergency patching.

A local root exploit is especially valuable to attackers who already have phishing, credential theft, web shell, CI abuse, or supply-chain footholds. It shortens the path from “we got in” to “we own the box.” In incident response, that difference can determine whether containment is a password reset or a rebuild.

The Federal Deadline Should Become a Private-Sector Drill

BOD 22-01 formally applies to Federal Civilian Executive Branch agencies. Everyone else can ignore the mandate but not the lesson. CISA’s KEV additions are among the clearest public indicators of what attackers are actually using, and private-sector organizations should treat them as a standing patch-priority feed.

The best organizations will use this event as a drill. They will identify vulnerable kernels, rank systems by exposure, apply vendor updates, verify kernel versions after reboot, tighten runtime controls where patching lags, and document exceptions. They will also measure how long that process takes.

The mediocre organizations will paste the CVE into a scanner, wait for the next authenticated scan cycle, and discover two weeks later that half the affected systems were appliances, containers, or cloud images outside the scanner’s view. The worst will assume that because the word “Linux” appears in the advisory, the Windows team has no action item.

This is the larger operational divide. Vulnerability management is no longer about matching CVEs to servers in a spreadsheet. It is about understanding where code runs, who controls the kernel underneath it, how quickly that layer can be changed, and what compensating controls exist when it cannot.
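The “verify kernel versions after reboot” step is easy to automate per host. The Python sketch below compares the running kernel with the newest `vmlinuz-*` image installed under `/boot`, using a numeric sort so that `-100` ranks above `-45`. It assumes a distribution that installs kernels that way, and the function names are hypothetical. It confirms only that a host booted its newest installed kernel; because vendors backport fixes without matching upstream version numbers, whether that kernel contains the fix still has to come from the vendor's advisory. WSL 2 kernels (release strings containing `microsoft`) are reported separately, since Microsoft services that kernel from the Windows side rather than through `/boot` inside the distribution.

```python
import os
import re
from pathlib import Path


def version_key(release: str) -> tuple:
    """Sortable key built from every number in a kernel release string, so that
    '6.8.0-100-generic' sorts above '6.8.0-45-generic'. Only meaningful when
    comparing releases from the same distribution kernel stream."""
    return tuple(int(n) for n in re.findall(r"\d+", release))


def installed_kernels(boot_dir: str = "/boot") -> list:
    """Kernel releases with a vmlinuz-<release> image in boot_dir (rescue images skipped)."""
    return [p.name[len("vmlinuz-"):] for p in Path(boot_dir).glob("vmlinuz-*")
            if "rescue" not in p.name]


def reboot_check(running: str, installed: list) -> str:
    """Return 'wsl', 'unknown', 'current', or 'stale' for one host."""
    if "microsoft" in running.lower():
        return "wsl"      # WSL kernels are serviced from Windows, not /boot
    if not installed:
        return "unknown"  # nothing installed to compare against
    newest = max(installed, key=version_key)
    return "current" if version_key(running) >= version_key(newest) else "stale"


if __name__ == "__main__":
    running = os.uname().release
    print(running, reboot_check(running, installed_kernels()))
```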

The Patch Queue Needs a New Order

The instinctive patch order in many enterprises follows business visibility: domain controllers, externally facing servers, executive endpoints, production databases, then everything else. Copy Fail argues for a different lens. Prioritize systems where privilege boundaries are part of the service model.

That means multi-user Linux hosts first. It means CI/CD runners and build agents early. It means Kubernetes and container hosts early, especially where teams deploy their own workloads. It means internet-facing Linux servers where a web exploit could become root in the same step. It means developer machines that hold production credentials.
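That ordering is easier to apply consistently when it is written down as data rather than carried in people's heads. The Python sketch below is a hypothetical model: the `Host` fields and the four tiers are one reading of the order described here, not a standard, and in practice the flags would be populated from asset inventory, cloud, and cluster metadata.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Host:
    """Hypothetical exposure flags for one Linux system."""
    name: str
    multi_user: bool = False              # shared shells, research clusters, jump boxes
    runs_untrusted_jobs: bool = False     # CI/CD runners, build agents
    container_host: bool = False          # Kubernetes workers, container hosts
    internet_facing: bool = False         # exposed apps under restricted accounts
    holds_prod_credentials: bool = False  # developer machines with production access


def patch_tier(h: Host) -> int:
    """Lower tier means patch sooner; mirrors the order described in the text."""
    if h.multi_user:
        return 1
    if h.runs_untrusted_jobs or h.container_host:
        return 2
    if h.internet_facing or h.holds_prod_credentials:
        return 3
    return 4


def patch_queue(hosts):
    """Sort by tier, then by name so the queue is stable and reviewable."""
    return sorted(hosts, key=lambda h: (patch_tier(h), h.name))


if __name__ == "__main__":
    fleet = [
        Host("db01"),
        Host("web02", internet_facing=True),
        Host("runner-3", runs_untrusted_jobs=True),
        Host("shell01", multi_user=True),
    ]
    for h in patch_queue(fleet):
        print(patch_tier(h), h.name)  # shell01, runner-3, web02, db01 in tiers 1-4
```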

Appliances are the wild card. Many security and network products run Linux but expose customers only to a vendor update channel. If those systems are affected, customers may need vendor advisories, hotfixes, or mitigations rather than conventional package updates. Procurement teams should notice when vendors cannot answer kernel exposure questions quickly.

Cloud users face their own split responsibility. Managed services may be patched by the provider, but self-managed VM images, autoscaling groups, node pools, and custom AMIs are customer problems. Golden images need refreshes, not just running hosts. Otherwise, today’s fixed fleet becomes tomorrow’s vulnerable replacement fleet.

The Small Facts That Should Drive the Next Maintenance Window

The operational story is narrow enough to summarize and serious enough to act on, but it should not be reduced to panic. Treat this as a fast patching and exposure-reduction exercise, especially on systems where untrusted code and shared kernels meet.

- CISA added CVE-2026-31431 to the KEV Catalog on May 1, 2026, after evidence of active exploitation.

- The vulnerability is a Linux kernel local privilege escalation flaw in the `algif_aead` area and is widely referred to as Copy Fail.

- The practical danger is not remote exploitation by itself, but reliable escalation from a low-privilege foothold to root on vulnerable systems.

- The highest-priority systems are multi-tenant Linux hosts, CI/CD runners, container and Kubernetes nodes, cloud workloads, and servers where exposed applications run under restricted accounts.

- Kernel updates or vendor backports are the real fix, while AF_ALG restrictions, seccomp hardening, and module-level mitigations should be treated as temporary risk reduction.

- Windows-centric organizations should inventory WSL, Linux-based appliances, developer tooling, cloud images, and container infrastructure rather than assuming the issue lives outside their estate.

Source: CISA, “CISA Adds One Known Exploited Vulnerability to Catalog”