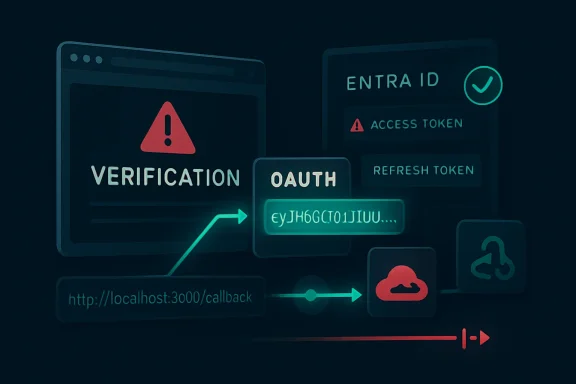

ConsentFix v3 is a newly reported phishing toolkit and attack method that targets Microsoft Azure and Entra ID accounts by automating OAuth authorization-code theft, using services such as Cloudflare Pages and Pipedream to collect codes and exchange them for usable Microsoft access and refresh tokens. The point is not that Microsoft’s login page has been spoofed better than before. The point is that attackers have learned to industrialize a workflow in which the legitimate Microsoft login page does most of the trust-building for them. For Windows shops, this is another reminder that the post-password era did not abolish phishing; it moved the battlefield from passwords to tokens.

App governance will not stop every ConsentFix-style attack, especially where first-party apps are involved. But it reduces the blast radius of OAuth abuse and forces the organization to understand its own identity surface.

After a suspected token theft, responders should disable or contain the account if necessary, revoke user sessions, reset credentials, review authentication methods, examine sign-in and audit logs, and inspect mailbox rules, forwarding settings, file access, and app consent records. If privileged accounts are involved, assume the blast radius extends beyond the mailbox.

The timeline matters. Automation can exchange codes quickly and begin resource access almost immediately. Waiting for a daily report or a user complaint gives attackers time to search mail, download files, create persistence, or pivot into additional services.

This is why identity incidents need rehearsed playbooks. Security teams should know where to revoke tokens, how long revocation takes to propagate, which logs show token and app activity, and which business owners must be involved when data access is suspected. They should also know which emergency access accounts are exempt from conditional access and whether those exemptions create their own risk.
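The first two steps of such a playbook can be written down ahead of time. The sketch below only describes the Microsoft Graph requests for disabling an account and revoking its sign-in sessions; it does not send them. The function name and the dictionary layout are illustrative assumptions, and a real playbook would also need an authenticated client with suitable directory permissions.

```python
from urllib.parse import quote

GRAPH = "https://graph.microsoft.com/v1.0"


def containment_requests(user_id: str) -> list[dict]:
    """Describe, in order, the Graph calls for the first containment steps.

    Nothing is sent here; the caller decides how and when to execute them.
    """
    user_url = f"{GRAPH}/users/{quote(user_id, safe='')}"
    return [
        {
            "step": "disable account",
            "method": "PATCH",
            "url": user_url,
            "json": {"accountEnabled": False},
        },
        {
            "step": "revoke sign-in sessions",
            "method": "POST",
            "url": f"{user_url}/revokeSignInSessions",
            "json": None,
        },
    ]


for request in containment_requests("11111111-2222-3333-4444-555555555555"):
    print(request["step"], request["method"], request["url"])
```

Revoking sessions invalidates refresh tokens, but access tokens that were already issued can remain usable until they expire, which is one reason the propagation question above belongs in the rehearsal.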

ConsentFix v3 is not merely a phishing problem for awareness teams. It is an identity incident-response problem for the whole Microsoft cloud stack.

Triage has to change when the attack leaves little or nothing on the endpoint. A user reporting “I pasted a URL into a verification page” should trigger identity investigation even if antivirus logs are quiet. A suspicious OAuth sign-in should be treated seriously even if EDR shows no process injection, no PowerShell abuse, and no persistence on disk.

The modern Windows estate is not just laptops and servers. It is Entra ID, Intune, Defender, Exchange Online, SharePoint, Teams, Azure subscriptions, Power Platform, and a constellation of OAuth-connected SaaS tools. The control plane is the new crown jewel.

Attackers understand this. They are not always trying to own the device because the device may be hardened, monitored, and short-lived. Owning the token can be faster. Owning the delegated cloud access can be quieter. Owning the mailbox can be enough to launch the next compromise.

This is not an argument to abandon endpoint security. It is an argument to stop treating endpoint security as the center of gravity. In Microsoft 365 and Azure environments, identity telemetry is now endpoint telemetry’s equal.

ConsentFix v3 is not the final form of OAuth phishing, but it is a useful preview of where the pressure is moving. Attackers are learning to automate the abuse of legitimate cloud flows, and defenders will have to answer with identity systems that understand context, bind tokens to devices and networks where possible, and treat first-party trust as something to monitor rather than something to assume. The next phishing page may not ask for a password at all, and that is precisely why Microsoft shops need to start defending the moments after login with the same seriousness they brought to the login screen itself.

Source: SC Media, “New ConsentFix v3 attack automates Microsoft Azure account hijacking”

The Password Was Not the Prize

The old phishing story was easy to explain to users and auditors: someone built a fake login page, the victim typed a password, and the attacker tried to reuse it before MFA or risk scoring got in the way. ConsentFix v3 is more uncomfortable because it lives in the gray zone between user consent, delegated access, and legitimate OAuth plumbing. The victim may authenticate to Microsoft itself, pass MFA normally, and still hand the attacker the one thing needed to impersonate the session.

That makes the technique feel less like a bug and more like a design tradeoff being turned inside out. OAuth exists so applications can obtain delegated access without collecting user passwords. In Microsoft’s cloud, first-party tooling such as Azure CLI and Azure PowerShell must be able to request tokens in ways that support administrators, developers, automation jobs, and real-world device flows. Attackers are exploiting the fact that these flows are familiar to identity systems but obscure to ordinary users.

ConsentFix is therefore not a password bypass in the cinematic sense. It is a consent and token-capture con wrapped around legitimate authorization behavior. MFA can still happen, the login can still be real, and the tenant can still issue a token because, from the identity provider’s vantage point, the user participated in a valid authorization flow.

The v3 twist is automation. Earlier variants already demonstrated that a victim could be tricked into copying a localhost redirect URL containing an authorization code back into a malicious page. The newer reporting says the kit adds target discovery, employee profiling, disposable account setup, webhook collection, and token exchange automation, allowing the operation to scale beyond artisanal phishing.

ConsentFix Turns the Browser Into the Redirect Endpoint

The essential trick is brutally simple once stripped of the branding. An attacker sends a victim to a phishing page that mimics a Microsoft or Azure-related experience. That page initiates a real OAuth authorization flow against Microsoft, often abusing trust associated with first-party Microsoft applications rather than asking the victim to approve a transparently malicious third-party app.

If the victim is already signed in, the experience can look mundane. If the victim is not signed in, they may be taken through Microsoft’s actual authentication process. This is precisely what makes the lure dangerous: the part of the process that users have been trained to trust may indeed be genuine.

The redirect then lands on a localhost URL containing an OAuth authorization code. In legitimate scenarios, that code is meant to be consumed by a local application, such as a command-line client, that is listening for the callback. In the attack, the user is instructed to paste or drag that URL back into the phishing page under some pretext — to “fix” an issue, complete a verification, or satisfy a fake interface step.
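The artifact itself is easy to recognize mechanically, even if users cannot. The sketch below is a minimal heuristic, not a vetted detection: it flags any string that parses as a loopback URL carrying a non-empty `code` query parameter. The function name, the host list, and the idea of running it in paste-handling or user-report tooling are assumptions made for illustration.

```python
from urllib.parse import parse_qs, urlsplit

# Hostnames treated as "local" for this heuristic (an assumption, not an exhaustive list).
LOOPBACK_HOSTS = {"localhost", "127.0.0.1", "::1"}


def looks_like_oauth_code_redirect(text: str) -> bool:
    """Return True if text parses as a loopback URL with a non-empty `code` parameter."""
    try:
        parts = urlsplit(text.strip())
    except ValueError:
        return False  # malformed URL, e.g. an unbalanced IPv6 bracket
    if parts.scheme not in ("http", "https"):
        return False
    if parts.hostname not in LOOPBACK_HOSTS:
        return False
    # parse_qs drops parameters with empty values by default.
    params = parse_qs(parts.query)
    return bool(params.get("code"))


# The code value here is a made-up placeholder, not a real authorization code.
print(looks_like_oauth_code_redirect("http://localhost:8400/?code=EXAMPLE&state=abc"))
```

A check like this says nothing about intent. A developer's legitimate callback matches too, so it is better suited to prompting a warning or a triage question than to blocking.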

Once the attacker has the code, the clock starts. OAuth authorization codes are short-lived, but automation collapses the timeline. ConsentFix v3 reportedly uses Pipedream as a webhook endpoint and automation layer to receive the captured URL, extract the code, exchange it for tokens, and store or forward the result for follow-on access.

That is the important leap from clever trick to operational platform. A manual attacker has to move quickly and consistently. A serverless workflow does not get tired, does not mistype, and does not pause between victims.

Cloud Trust Became the Camouflage

The services named in reporting (Cloudflare Pages, Pipedream, and other legitimate SaaS infrastructure) are not exotic. They are exactly the sort of platforms defenders expect to see somewhere in enterprise traffic. That is part of the appeal.

Cloudflare Pages gives an attacker a clean, TLS-protected hosting surface with the visual credibility of modern web infrastructure. Pipedream gives them a programmable glue layer for receiving webhook data and calling APIs. Other services can be used for enrichment, staging, disposable identities, and data handling. None of this requires the attacker to run an obvious criminal command-and-control server in the old sense.

This is the SaaS-abuse era of phishing. The attacker’s infrastructure increasingly looks like a badly governed startup stack: serverless endpoints, workflow automation, web hosting, enrichment tools, and cloud storage. The payload is not always a binary. Sometimes the payload is a workflow.

That matters for defenders because blocking “bad infrastructure” becomes harder when the infrastructure is also used by legitimate teams. A blanket block on every automation or web-hosting platform may be operationally impossible. But allowing everything because it is “just SaaS” leaves identity teams blind to the places where authorization artifacts are being collected and replayed.

ConsentFix v3 is a case study in that tension. The attack does not need to defeat Microsoft’s TLS, crack passwords, or compromise MFA servers. It needs to persuade a user to bridge two legitimate systems in a way the user does not understand.

MFA Still Works, Which Is Exactly Why This Is Awkward

It is tempting to describe ConsentFix as bypassing MFA, and in one practical sense that is how many defenders will experience it. A victim with MFA enabled can still be compromised. Password resets may not immediately solve the problem. The attacker may end up with refresh tokens that permit continuing delegated access until revoked, constrained, or detected.

But saying MFA “failed” misses the more useful lesson. MFA answered the question it was asked: is the user completing an authentication event? ConsentFix asks a different question: can the attacker steal the authorization artifact produced after that event?

That distinction is not academic. Many enterprises have spent years hardening the front door: passwordless sign-in, number matching, phishing-resistant factors for admins, impossible-travel alerts, and conditional access at authentication time. Those controls remain valuable. They simply do not cover the entire lifecycle of a token once issued.

The uncomfortable phrase here is token replay. If a refresh token can be used from somewhere other than the device or context in which it was issued, an attacker can turn a short social-engineering success into durable access. That is why Microsoft’s own guidance around token protection, compliant devices, network-based controls, and continuous access evaluation has become more central to identity defense.

For many WindowsForum readers, this will feel familiar from the evolution of browser-cookie theft and adversary-in-the-middle phishing. As password controls improved, attackers moved to session artifacts. ConsentFix applies the same strategic pressure to OAuth flows and Microsoft cloud tooling.

First-Party Trust Is the Soft Underbelly

One reason ConsentFix has attracted attention is its reported abuse of first-party Microsoft application IDs, including tooling such as Azure CLI. These applications occupy a privileged mental and technical space. Users do not see them as suspicious, and tenants often treat them as part of the expected Microsoft cloud fabric.

That trust is necessary. Azure CLI cannot be useful if every legitimate developer and administrator login is treated as a crisis. But the same trust gives attackers an attractive cover story: they can make the victim interact with a Microsoft flow that looks normal enough and produces tokens associated with capabilities the attacker wants.

The problem is not that Azure CLI is malicious. The problem is that enterprise identity often struggles to distinguish “Alice the cloud engineer using Azure CLI from her managed workstation” from “Alice the finance employee somehow producing Azure CLI tokens after visiting a fake verification page.” Those events are radically different in business context, even if they share pieces of the same authentication machinery.

This is where identity telemetry has to become more opinionated. App identity, user role, device state, network location, token redemption source, and subsequent resource access all matter. A user who has never touched administrative tooling suddenly authorizing a command-line client should not be treated as just another successful sign-in.
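What “opinionated” might mean in practice can be sketched in a few lines. The example below assumes sign-in records have already been enriched with a role label and a first-use flag, both of which are hypothetical fields a team would have to build. The two client IDs are the ones commonly documented for Azure CLI and Azure PowerShell, but they should be verified against the tenant's own sign-in logs before anything depends on them.

```python
from dataclasses import dataclass

# Client IDs commonly documented for Microsoft first-party tools; verify them
# against your own tenant's sign-in logs before relying on this list.
ADMIN_CLIENT_APP_IDS = {
    "04b07795-8ddb-461a-bbee-02f9e1bf7b46": "Microsoft Azure CLI",
    "1950a258-227b-4e31-a9cf-717495945fc2": "Microsoft Azure PowerShell",
}

# Hypothetical role labels; a real deployment would derive these from HR or group data.
ROLES_EXPECTED_TO_USE_ADMIN_CLIENTS = {"cloud-engineer", "platform-admin"}


@dataclass
class SignIn:
    user: str
    role: str
    app_id: str
    device_compliant: bool
    first_time_for_app: bool


def assess(sign_in: SignIn) -> list[str]:
    """Return human-readable reasons this sign-in deserves review (empty if none)."""
    reasons = []
    app_name = ADMIN_CLIENT_APP_IDS.get(sign_in.app_id)
    if app_name is None:
        return reasons  # not an administrative client; out of scope for this check
    if sign_in.role not in ROLES_EXPECTED_TO_USE_ADMIN_CLIENTS:
        reasons.append(f"{app_name} used by role '{sign_in.role}'")
    if sign_in.first_time_for_app:
        reasons.append(f"first recorded use of {app_name} by {sign_in.user}")
    if not sign_in.device_compliant:
        reasons.append("device not marked compliant")
    return reasons


finance_alice = SignIn("alice@contoso.example", "finance",
                       "04b07795-8ddb-461a-bbee-02f9e1bf7b46", False, True)
for reason in assess(finance_alice):
    print(reason)
```

The same sign-in from a user labelled `cloud-engineer` on a compliant device with prior Azure CLI history returns no reasons at all, which is the point: the decision rests on business context rather than on whether authentication succeeded.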

There is a larger Microsoft ecosystem lesson here. First-party applications are not magic exceptions to security governance. They are high-value paths that deserve monitoring precisely because attackers prefer trusted roads.

The Phish Is Becoming a Wizard, Not a Web Page

Classic phishing pages were imitations. ConsentFix-style pages are more like malicious setup wizards. They guide the user through a multi-step process in which each step feels plausible enough to keep going.

This is the same psychological territory exploited by ClickFix campaigns, fake CAPTCHA prompts, and bogus Cloudflare Turnstile checks. Users are told that something is broken, blocked, or incomplete, and that a strange manual action will restore normality. The novelty is not that users can be tricked into copying and pasting things. The novelty is that the copied object can be an OAuth redirect URL whose meaning is invisible to most humans.

A long localhost URL looks like technical noise. To a developer, it may signal a callback. To an accountant, project manager, or even many IT generalists, it is just a string the page told them to move. The attacker benefits from the fact that modern authentication already contains enough redirects, consent screens, and browser handoffs to make weirdness feel normal.

Security awareness training has not caught up to that reality. Many programs still emphasize fake login domains, misspelled brands, and suspicious attachments. Those remain useful lessons, but they do not teach users that a legitimate Microsoft login followed by a request to paste a URL can be malicious.

The training message needs to be narrower and sharper: no legitimate verification system should ask a user to copy a browser address, paste a command, drag a URL, or transfer an authorization code into another page. That is not a normal human step in cloud authentication. It is a red flare.

Automation Changes the Defender’s Clock

The reported use of Pipedream is more than a colorful detail. It changes the timing assumptions. A manually operated phishing campaign leaves gaps: an operator has to notice the submission, redeem the code, store the token, and begin exploitation. Automated token exchange turns that into a near-real-time pipeline.

That matters because short-lived authorization codes are often treated as inherently low-risk if intercepted. They expire quickly, and in a normal application they are exchanged by the intended client. ConsentFix v3 makes expiration less comforting. If the code is delivered directly into a webhook-driven workflow, the useful lifetime of the code is more than enough.

Automation also supports scale before and after compromise. Target discovery can identify valid tenant IDs and collect employee information. Lure infrastructure can be cloned. Accounts across different services can be created to host pages, receive data, and move artifacts. Token capture can feed directly into exfiltration workflows.

This is the same industrial logic that transformed ransomware from opportunistic malware into a service economy. The technical move is not always revolutionary. The operational packaging is.

For defenders, the response has to be similarly operational. It is not enough to know that OAuth abuse exists. Teams need detections that fire quickly, playbooks that revoke tokens decisively, and policy baselines that reduce the number of users and apps capable of producing dangerous surprises.

The Logs Will Tell a Story, If Anyone Is Reading the Right Ones

ConsentFix is hard to prevent perfectly, but it is not invisible. The challenge is that the signal may not appear where security teams are used to looking. A successful interactive sign-in from the user’s device may look legitimate because it was legitimate. The suspicious activity may appear in token redemption, non-interactive sign-ins, unfamiliar application usage, anomalous IP addresses, or unusual access to Exchange, SharePoint, OneDrive, or Graph resources.

That means defenders need to move beyond “did MFA pass?” as the decisive question. They need to ask which client application received tokens, from where the authorization code was redeemed, whether the device was compliant, whether the user normally uses that app, and what resources were accessed afterward.

In Microsoft environments, this points toward Entra sign-in logs, audit logs, workload logs, Defender XDR signals, and SIEM correlation. It also points toward an uncomfortable governance task: deciding which first-party Microsoft applications are actually needed by which populations. Not every user needs Azure CLI. Not every department should be able to produce tokens for administrative tooling without scrutiny.

A practical detection strategy should treat unexpected use of developer or administrative clients as high-signal, especially when paired with unfamiliar locations or rapid access to mail and files. Security teams should also watch for patterns that suggest automation infrastructure is involved, such as token-related events tied to unexpected hosting, serverless, or integration-platform endpoints.

The best detections will be contextual rather than absolute. Azure CLI usage from a cloud engineer’s managed workstation during business hours may be routine. The same pattern from a sales user after interacting with a suspicious page is a different story.
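One contextual pattern worth sketching is an interactive sign-in followed shortly by background token use for the same user and app from a different address. The example below assumes events have already been exported and parsed into dictionaries; the key names loosely mirror Entra sign-in log fields, while the ten-minute window and the function name are arbitrary illustrative choices.

```python
from datetime import datetime, timedelta


def find_offsite_token_use(events, window=timedelta(minutes=10)):
    """Pair each interactive sign-in with later non-interactive activity for the
    same user and app that comes from a different IP within `window`."""
    findings = []
    interactive = [e for e in events if e["isInteractive"]]
    background = [e for e in events if not e["isInteractive"]]
    for first in interactive:
        for later in background:
            same_subject = (
                later["userPrincipalName"] == first["userPrincipalName"]
                and later["appId"] == first["appId"]
            )
            delay = later["createdDateTime"] - first["createdDateTime"]
            if (
                same_subject
                and timedelta(0) <= delay <= window
                and later["ipAddress"] != first["ipAddress"]
            ):
                findings.append((first, later))
    return findings


t0 = datetime(2025, 1, 1, 9, 0, 0)
sample = [
    {"userPrincipalName": "a@contoso.example", "appId": "cli", "isInteractive": True,
     "ipAddress": "198.51.100.10", "createdDateTime": t0},
    {"userPrincipalName": "a@contoso.example", "appId": "cli", "isInteractive": False,
     "ipAddress": "203.0.113.7", "createdDateTime": t0 + timedelta(seconds=20)},
]
for first, later in find_offsite_token_use(sample):
    print(first["ipAddress"], "->", later["ipAddress"])
```

Address changes also happen for innocent reasons such as VPN reconnects and mobile networks, so a match is a prompt to look at role, device state, and subsequent resource access rather than a verdict on its own.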

Token Binding Is the Right Idea With Real-World Edges

Microsoft’s Token Protection in Conditional Access is the obvious strategic answer because it tries to make stolen refresh tokens less useful by binding them to the device that received them. If a token cannot be replayed from an attacker’s infrastructure, the ConsentFix payoff shrinks dramatically.

But the phrase “turn on token protection” can be too glib. Microsoft’s own documentation describes scope limits, platform differences, and application support boundaries. Token protection is strongest where supported native applications, compliant devices, and Entra-joined or registered endpoints are part of the environment. Browser-based and unmanaged-device scenarios complicate the picture.

That does not make the control unimportant. It makes piloting important. Enterprises should identify high-risk users, privileged roles, and sensitive resources where token replay would be especially damaging, then test token protection and device compliance requirements against real workflows.
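The general idea of binding is simple enough to show in a toy model. The sketch below makes no claim about how Entra implements token protection. It uses a per-device HMAC key purely as a stand-in for a device-held secret, and every class and function name in it is hypothetical. What it illustrates is only the property that matters: a copied token is not enough without the key that never leaves the device.

```python
import hashlib
import hmac
import secrets


class ToyIssuer:
    """Toy token issuer that remembers which device each token was issued to."""

    def __init__(self):
        self._device_keys = {}  # device_id -> key registered at enrollment
        self._tokens = {}       # token -> device_id it was issued to

    def enroll(self, device_id: str) -> bytes:
        key = secrets.token_bytes(32)
        self._device_keys[device_id] = key
        return key  # stays on the device; never sent along with requests

    def issue(self, device_id: str) -> str:
        token = secrets.token_urlsafe(16)
        self._tokens[token] = device_id
        return token

    def accept(self, token: str, request: bytes, proof: str) -> bool:
        device_id = self._tokens.get(token)
        if device_id is None:
            return False
        expected = prove(self._device_keys[device_id], token, request)
        return hmac.compare_digest(expected, proof)


def prove(device_key: bytes, token: str, request: bytes) -> str:
    """Client side: tie this request to the token using the device-held key."""
    return hmac.new(device_key, token.encode() + request, hashlib.sha256).hexdigest()


issuer = ToyIssuer()
laptop_key = issuer.enroll("laptop-1")
token = issuer.issue("laptop-1")
request = b"GET /me/messages"

print(issuer.accept(token, request, prove(laptop_key, token, request)))
print(issuer.accept(token, request, prove(secrets.token_bytes(32), token, request)))
```

The first call succeeds because the proof comes from the enrolled device's key. The second fails even though the token is identical, which is the behavior a replaying attacker would run into.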

Network-based enforcement can also help. If refresh tokens or sign-in session artifacts are only useful from compliant networks or approved egress paths, attackers face more friction. The downside is familiar to anyone who has managed conditional access at scale: policies can break edge cases, contractors, mobile users, legacy tooling, and emergency access patterns.

The answer is not paralysis. The answer is staged enforcement, good reporting, and clear exceptions. Token theft is now a mainstream identity threat, so token controls have to become mainstream identity engineering rather than a premium afterthought.

App Consent Governance Cannot Stay on Autopilot

ConsentFix v3 sits adjacent to a broader problem: organizations often have weak governance over OAuth app consent and delegated permissions. Over the last decade, attackers have repeatedly abused consent grants to maintain access to mailboxes, files, and Graph data even when passwords were changed. ConsentFix’s use of first-party flows complicates the story, but it does not reduce the need for consent hygiene.

Tenants should restrict user consent to applications where possible, require admin approval for risky permissions, and review existing grants. That is not glamorous work. It is the identity equivalent of patching forgotten servers and cleaning stale local admins. It also prevents small mistakes from becoming durable access paths.

The hard part is cultural. Many organizations adopted SaaS by letting users connect tools freely, then tried to add governance later. OAuth permission prompts became background noise, and “Sign in with Microsoft” became an everyday convenience. Attackers are now feeding on that convenience.

IT teams should inventory which apps have delegated access, which users granted it, and which permissions are excessive. They should pay special attention to mail, files, offline access, directory read permissions, and administrative APIs. They should also separate consumer convenience from enterprise trust: the fact that an app can ask for access does not mean the tenant should allow it.
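
As a rough sketch of that inventory step, the Python below filters a list of delegated grants for scopes that deserve a second look. It assumes the grants have already been exported as dictionaries shaped like Microsoft Graph `oauth2PermissionGrant` objects (with `clientId`, `principalId`, `consentType`, and a space-separated `scope` string). The set of "sensitive" scopes is an illustrative choice that mirrors the categories above, not an authoritative list, and the sample IDs are placeholders.

```python
# Illustrative assumption: which delegated scopes count as sensitive. Adjust
# to your own tenant's risk model.
SENSITIVE_SCOPES = {
    "Mail.Read", "Mail.ReadWrite", "Mail.Send",
    "Files.Read.All", "Files.ReadWrite.All",
    "offline_access", "Directory.Read.All",
}


def flag_grants(grants):
    """Return one finding per grant that includes at least one sensitive scope."""
    findings = []
    for grant in grants:
        # `scope` is a space-separated string; tolerate missing or null values.
        scopes = set((grant.get("scope") or "").split())
        risky = sorted(scopes & SENSITIVE_SCOPES)
        if risky:
            findings.append({
                "clientId": grant["clientId"],
                "principalId": grant.get("principalId"),
                "consentType": grant.get("consentType"),
                "risky_scopes": risky,
            })
    return findings


# Placeholder sample data, not real application or user IDs.
sample_grants = [
    {"clientId": "app-1", "principalId": "user-1", "consentType": "Principal",
     "scope": "User.Read Mail.Read offline_access"},
    {"clientId": "app-2", "principalId": None, "consentType": "AllPrincipals",
     "scope": "User.Read"},
]

findings = flag_grants(sample_grants)
```

Here only `app-1` is flagged, for `Mail.Read` and `offline_access`. The output is a review queue, not a verdict: a human still has to decide whether each grant is justified.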

App governance will not stop every ConsentFix-style attack, especially where first-party apps are involved. But it reduces the blast radius of OAuth abuse and forces the organization to understand its own identity surface.

Incident Response Starts With Revocation, Not Password Resets

When a ConsentFix compromise is suspected, the reflex to reset the password is understandable and insufficient. If the attacker’s valuable asset is a refresh token or delegated session, response must focus on revoking sessions, invalidating refresh tokens, removing malicious grants, and investigating post-compromise access.

That means responders should disable or contain the account if necessary, revoke user sessions, reset credentials, review authentication methods, examine sign-in and audit logs, and inspect mailbox rules, forwarding settings, file access, and app consent records. If privileged accounts are involved, assume the blast radius extends beyond the mailbox.

The timeline matters. Automation can exchange codes quickly and begin resource access almost immediately. Waiting for a daily report or a user complaint gives attackers time to search mail, download files, create persistence, or pivot into additional services.

This is why identity incidents need rehearsed playbooks. Security teams should know where to revoke tokens, how long revocation takes to propagate, which logs show token and app activity, and which business owners must be involved when data access is suspected. They should also know which emergency access accounts are exempt from conditional access and whether those exemptions create their own risk.
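
One way to make "know where to revoke tokens" concrete is to have the call written down before the incident. The sketch below only builds the HTTP request for Microsoft Graph's `revokeSignInSessions` action on a user and deliberately does not send it. The helper name, the all-zero user ID, and the token value are placeholders, and the sketch assumes the caller already holds a Graph access token with sufficient privileges.

```python
import urllib.request
from urllib.parse import quote

GRAPH_BASE = "https://graph.microsoft.com/v1.0"


def build_revoke_request(user_id, access_token):
    """Build (but do not send) a POST to the user's revokeSignInSessions action.

    `build_revoke_request` is a hypothetical helper name for this sketch.
    """
    url = f"{GRAPH_BASE}/users/{quote(user_id, safe='')}/revokeSignInSessions"
    return urllib.request.Request(
        url,
        data=b"",  # the action takes no request body
        method="POST",
        headers={"Authorization": f"Bearer {access_token}"},
    )


# Placeholder values for illustration only.
request = build_revoke_request(
    "00000000-0000-0000-0000-000000000000", "PLACEHOLDER_TOKEN"
)
# Sending would be: urllib.request.urlopen(request)
```

Revocation is the first step rather than the whole response. Access tokens that were already issued may remain usable for some time, which is one reason the playbook should also record how long propagation takes in practice.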

ConsentFix v3 is not merely a phishing problem for awareness teams. It is an identity incident-response problem for the whole Microsoft cloud stack.

Windows Shops Need a New Suspicion Model

For Windows enthusiasts and sysadmins, the instinct is often to look for malware on the endpoint. That instinct is still useful, but it is incomplete. ConsentFix can succeed without dropping a traditional payload on the victim’s machine. The endpoint may be clean while the cloud session is compromised.

That changes triage. A user reporting “I pasted a URL into a verification page” should trigger identity investigation even if antivirus logs are quiet. A suspicious OAuth sign-in should be treated seriously even if EDR shows no process injection, no PowerShell abuse, and no persistence on disk.

The modern Windows estate is not just laptops and servers. It is Entra ID, Intune, Defender, Exchange Online, SharePoint, Teams, Azure subscriptions, Power Platform, and a constellation of OAuth-connected SaaS tools. The control plane is the new crown jewel.

Attackers understand this. They are not always trying to own the device because the device may be hardened, monitored, and short-lived. Owning the token can be faster. Owning the delegated cloud access can be quieter. Owning the mailbox can be enough to launch the next compromise.

This is not an argument to abandon endpoint security. It is an argument to stop treating endpoint security as the center of gravity. In Microsoft 365 and Azure environments, identity telemetry is now endpoint telemetry’s equal.

The Concrete Work Starts Before the Next Lure Lands

The practical response to ConsentFix v3 is not one magic toggle. It is a set of identity-security habits that reduce the chance of token theft, shorten the useful life of stolen tokens, and make strange OAuth behavior noisy enough to investigate.

- Organizations should pilot Token Protection in Conditional Access for supported platforms and applications, starting with privileged users and high-value departments.

- Security teams should monitor for unexpected use of Azure CLI, Azure PowerShell, and other first-party clients by users whose roles do not normally require them.

- Administrators should restrict app consent, review existing OAuth grants, and require approval for applications requesting sensitive delegated permissions.

- Detection engineering should correlate successful sign-ins with token redemption location, non-interactive activity, device compliance, client application, and subsequent access to mail or files.

- User training should explicitly warn that legitimate services do not ask people to paste browser URLs, authorization codes, or commands into verification pages.

- Incident-response playbooks should prioritize session revocation, refresh-token invalidation, consent review, and cloud data-access investigation, not just password resets.
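
The correlation item in that list is the easiest to illustrate. The sketch below pairs each interactive sign-in with later non-interactive activity by the same user from a different IP address inside a short window. The record fields (`user`, `ip`, `time`, `resource`), the ten-minute window, and the sample data are all hypothetical. A real pipeline would map these from whatever the SIEM exposes and would add signals such as device compliance and client application.

```python
from datetime import datetime, timedelta


def correlate(sign_ins, activity, window=timedelta(minutes=10)):
    """Flag activity that follows a sign-in from a different IP within `window`.

    Returns tuples of (user, sign-in IP, activity IP, resource accessed).
    """
    alerts = []
    for s in sign_ins:
        for a in activity:
            same_user = a["user"] == s["user"]
            different_ip = a["ip"] != s["ip"]
            in_window = timedelta(0) <= a["time"] - s["time"] <= window
            if same_user and different_ip and in_window:
                alerts.append((s["user"], s["ip"], a["ip"], a["resource"]))
    return alerts


# Hypothetical sample events using documentation-reserved IP ranges.
sign_ins = [
    {"user": "sales@contoso.example", "ip": "203.0.113.10",
     "time": datetime(2025, 1, 6, 9, 0)},
]
activity = [
    {"user": "sales@contoso.example", "ip": "198.51.100.7",
     "time": datetime(2025, 1, 6, 9, 2), "resource": "mail"},
    {"user": "sales@contoso.example", "ip": "203.0.113.10",
     "time": datetime(2025, 1, 6, 9, 3), "resource": "files"},
]

alerts = correlate(sign_ins, activity)
```

Only the mail access from the second address is flagged. The file access from the user's own address is ignored, which keeps the rule focused on the pattern that matters here: a token redeemed somewhere other than where the human logged in.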

ConsentFix v3 is not the final form of OAuth phishing, but it is a useful preview of where the pressure is moving. Attackers are learning to automate the abuse of legitimate cloud flows, and defenders will have to answer with identity systems that understand context, bind tokens to devices and networks where possible, and treat first-party trust as something to monitor rather than something to assume. The next phishing page may not ask for a password at all, and that is precisely why Microsoft shops need to start defending the moments after login with the same seriousness they brought to the login screen itself.

Source: SC Media, “New ConsentFix v3 attack automates Microsoft Azure account hijacking”