Microsoft’s Copilot has crossed a threshold from assistant to active collaborator, and the implications now reach well beyond Microsoft 365. With Copilot Cowork, Claude models inside the Microsoft stack, and a new Agent 365 control plane, Microsoft is signaling that enterprise AI is no longer about drafting text or summarizing meetings; it is about executing multi-step work with governance, auditability, and human oversight. At the same time, the latest Claude Code security-review features, Google’s Gemma 4, and the growing OpenClaw ecosystem show that AI competition is fragmenting into specialized lanes: coding, office work, open-weight models, and local agent runtimes.

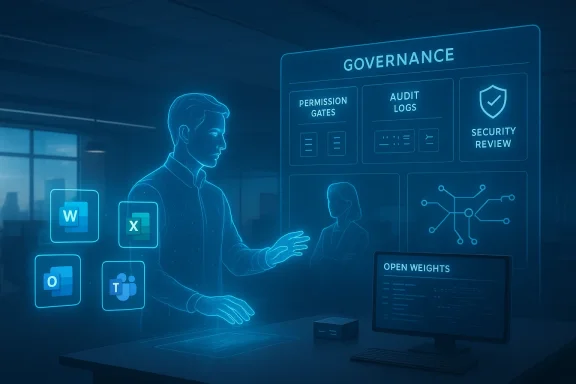

Source: Fathom Journal Fathom - For a deeper understanding of Israel, the region, and global antisemitism

Overview

The broader story here is not a single product launch, but a market-wide redefinition of what an AI tool is supposed to do. For much of 2023 and 2024, the headline promise was conversational intelligence: ask a model, get an answer, then copy the result somewhere useful. In 2025 and now 2026, the center of gravity has moved to systems that can operate inside real workflows, manage permissions, and remain useful over hours or days rather than seconds. Microsoft’s own public framing makes that shift explicit, describing a new era of productivity where AI “executes multi-step tasks with clear user control points,” rather than merely suggesting code or drafting copy.

That shift explains why Microsoft is now willing to embrace a multi-model posture. The company’s March 2026 Copilot Cowork announcement emphasized a Critique layer built on Anthropic’s Claude to review output generated by OpenAI models, plus a Model Council for side-by-side comparison. That is a striking admission that no single model family has won every layer of enterprise work, and that the best product may be the one that orchestrates multiple systems rather than worships a single brand. In practical terms, Microsoft is turning model diversity into a feature, not an accident.

The coding side of the market is undergoing a similar evolution. Anthropic has now pushed Claude Code beyond plain coding assistance and into automated security review, with a /security-review command and GitHub Actions support that are designed to surface vulnerabilities such as SQL injection, XSS, auth flaws, and insecure data handling. That matters because software teams increasingly need a tool that does not merely write code quickly, but helps them police the quality and safety of the code it writes. The more agentic the development workflow becomes, the more the review layer matters.

Open models are also getting more serious. Google’s Gemma 4 is being framed as Google DeepMind’s most capable open model family to date, with larger variants positioned prominently on open-model leaderboards and released under Apache 2.0. That is not just an open-source flourish. It is a strategic signal that open weights are becoming viable infrastructure for teams that want control, lower cost, and deployment flexibility without surrendering to a closed API.

Meanwhile, OpenClaw and related local-agent tooling are showing how quickly the industry is moving toward automation at the edge. OpenClaw’s documentation and ecosystem make clear that users are now asking whether subscriptions, APIs, and third-party harnesses are interchangeable or billable in different ways, which is exactly the kind of operational messiness that appears when an experimental agent becomes real infrastructure. Its existence is evidence of demand; its billing and security questions are evidence of maturity.

Why this news matters now

A useful way to read the current AI cycle is to separate novelty from procurement reality. Consumer excitement still gravitates toward demos, but enterprise buyers now care about control planes, review workflows, permissions, and identity boundaries. Microsoft, Anthropic, Google, and the OpenClaw ecosystem are all converging on the same conclusion: the future is not one chatbot, but a stack of specialized agents and governance layers.

Microsoft’s Copilot Becomes a Work Engine

Microsoft’s Copilot strategy is no longer merely about embedding generative AI into Word, Excel, PowerPoint, Outlook, and Teams. With Copilot Cowork, the company is now positioning AI as a system that can plan, execute, and return completed work across Microsoft 365. The emphasis on longer-running tasks is important because it marks a departure from the original Copilot pitch, which was largely about speed and convenience. The new pitch is autonomy, but with corporate guardrails.

This is a meaningful commercial turn. Microsoft is not just selling productivity assistance; it is selling operational labor in software form. That shifts the buying conversation from “Is this useful?” to “What business process can this replace or compress?” For CIOs and enterprise architects, that is a bigger question because it touches governance, auditability, and the cost of failure.

Multi-model intelligence as a product strategy

The most notable part of the Copilot Cowork move is Microsoft’s willingness to bake Anthropic into the stack. The company’s press materials describe Claude participating in Critique and Model Council workflows, which means Microsoft is using another frontier model not as a side integration but as part of the product’s core reasoning path. That is a strong indication that model quality is now being judged in context, not by brand loyalty.

For customers, this is potentially good news. It suggests Microsoft is optimizing for outcomes rather than exclusivity, and that should improve robustness in tasks where one model’s blind spots are another model’s strengths. The downside is obvious too: more models also mean more complexity, more policy work, and more things that can fail in deployment.

Key implications for Microsoft customers:

- Less dependence on one model vendor

- More room for task-specific optimization

- Higher governance and compliance complexity

- Greater potential for accuracy through critique loops

- More bargaining power for enterprise buyers

- A stronger moat for Microsoft’s workflow layer, not just its model layer
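The critique pattern described in this section, where one model drafts and a second model reviews, can be illustrated with a small sketch. Everything here is an assumption made for illustration: `drafter`, `critic`, and `revise` are hypothetical stubs standing in for calls to two different model families, and nothing below reflects how Copilot Cowork is actually implemented.

```python
from dataclasses import dataclass
from typing import List


def drafter(prompt: str) -> str:
    """Hypothetical stub for the drafting model."""
    return f"Draft answer to: {prompt}"


def critic(draft: str) -> List[str]:
    """Hypothetical stub for the reviewing model: flags drafts with no source."""
    issues = []
    if "source:" not in draft.lower():
        issues.append("no source cited")
    return issues


def revise(draft: str, issues: List[str]) -> str:
    """Hypothetical stub for the revision step: addresses each flagged issue."""
    if "no source cited" in issues:
        draft += " (source: internal wiki)"
    return draft


@dataclass
class CritiqueResult:
    text: str
    rounds: int            # number of critic passes that were run
    unresolved: List[str]  # issues still open when the loop stopped


def critique_loop(prompt: str, max_rounds: int = 3) -> CritiqueResult:
    """Draft once, then alternate critique and revision until clean or out of rounds."""
    draft = drafter(prompt)
    for round_no in range(1, max_rounds + 1):
        issues = critic(draft)
        if not issues:
            return CritiqueResult(draft, round_no, [])
        draft = revise(draft, issues)
    # Out of rounds: report whatever the critic still objects to.
    return CritiqueResult(draft, max_rounds, critic(draft))


result = critique_loop("Q3 summary")
print(result.text, result.rounds, result.unresolved)
```

The round cap is the design choice worth noting: it bounds cost and latency, and the `unresolved` field keeps leftover objections visible instead of hiding them, which is where the extra governance work mentioned above shows up.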

Agent 365 and the governance problem

The introduction of Agent 365 is just as important as the model work itself. Microsoft is clearly trying to create a control plane that can inventory, manage, and supervise agents inside the enterprise. That is the logical next step once agents begin taking actions rather than merely drafting text. In an environment where AI can trigger tasks, manipulate documents, or touch shared data, governance is no longer optional decoration; it is the product.

That same logic explains the broader commercial packaging. Microsoft is tying agentic capabilities to enterprise bundles and preview programs because the company understands that autonomy without controls will trigger resistance. The pitch to IT departments is therefore as much about restraint as about power. It is an attempt to say: yes, the agent can act, but only within a framework you can monitor.
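To make the idea of a control plane concrete, here is a minimal sketch of what "inventory, manage, and supervise" could look like in code. It is not Agent 365's API: the `ControlPlane` and `AgentRecord` classes, their methods, and the decision strings are all hypothetical names chosen for this example.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Set, Tuple


@dataclass
class AgentRecord:
    owner: str
    allowed_actions: Set[str]
    enabled: bool = True


@dataclass
class ControlPlane:
    """Hypothetical registry: every agent is inventoried and every request is logged."""
    agents: Dict[str, AgentRecord] = field(default_factory=dict)
    audit_log: List[Tuple[str, str, str]] = field(default_factory=list)

    def register(self, agent_id: str, owner: str, allowed_actions: Set[str]) -> None:
        self.agents[agent_id] = AgentRecord(owner, set(allowed_actions))

    def disable(self, agent_id: str) -> None:
        self.agents[agent_id].enabled = False

    def authorize(self, agent_id: str, action: str) -> bool:
        """Decide whether an agent may act, recording the decision either way."""
        record = self.agents.get(agent_id)
        if record is None:
            decision = "denied:unknown-agent"
        elif not record.enabled:
            decision = "denied:disabled"
        elif action not in record.allowed_actions:
            decision = "denied:not-permitted"
        else:
            decision = "allowed"
        self.audit_log.append((agent_id, action, decision))
        return decision == "allowed"


plane = ControlPlane()
plane.register("report-bot", owner="finance", allowed_actions={"read_docs"})
print(plane.authorize("report-bot", "read_docs"))    # permitted action
print(plane.authorize("report-bot", "delete_docs"))  # not in the allow list
print(plane.audit_log)
```

Denied requests are logged alongside allowed ones, since an audit trail that only records successes cannot answer the question of what an agent tried to do.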

Claude Code and the New Code Review Era

Anthropic’s Claude Code update is one of the most important signals in the coding market because it addresses the part of AI development that most demos skip: review. It is easy to impress people with generated code; it is harder to help teams catch mistakes before they become incidents. Anthropic’s automated security review features show that the company understands the next competitive battleground is not just code generation but code assurance.

That matters because software teams are quickly discovering that the real value of AI coding tools is not the first draft. It is compression of the entire software lifecycle: scaffolding, refactoring, documentation, vulnerability scanning, and PR triage. Claude Code’s new security review workflow fits that pattern neatly. It turns the assistant into a gatekeeper, not just a helper.

Security review becomes a native workflow

Anthropic says the feature can be used from the terminal or through GitHub Actions, which is the right move for enterprise adoption. Security review must happen where the code lives, not in a separate dashboard that developers ignore. By integrating with the normal development loop, Anthropic is acknowledging a simple truth: if safety is inconvenient, people will skip it.

The company also warns that automated review should supplement, not replace, manual review. That warning is wise and necessary. AI can catch common flaws, but it can also normalize overconfidence, and there is a danger that teams start trusting a green checkmark as a proxy for actual security diligence. That would be a serious mistake.

Why security review matters to developers

For developers, automated review reduces one of the biggest hidden costs of AI adoption: the cognitive burden of verifying machine-generated code. If a model can produce more code than a team can reasonably inspect, then the bottleneck shifts from writing to verifying. That is exactly where Anthropic is now trying to compete.

The practical payoff is obvious:

- Faster code turns into faster PRs.

- Faster PRs require faster review.

- Faster review demands more automation.

- More automation increases the need for trust controls.

- Trust controls become a product feature.

Gemma 4 and the Open-Model Pressure Campaign

Google’s Gemma 4 release is another reminder that open-weight models are no longer being treated as second-class citizens. Google DeepMind’s announcement frames the family as its most capable open models to date, with the larger variants positioned as high performers for their size. That matters because many enterprise customers want the practical benefits of open systems without sacrificing modern reasoning quality.

The competitive significance is straightforward. If open-weight models get better, the pricing power of closed frontier APIs gets weaker at the margin. Enterprises do not have to switch all workloads to open models for the shift to matter; they only need enough internal or regulated workloads to make open deployment strategically attractive. That puts pressure on every major vendor to prove that its closed model is worth the premium.

Open weights as enterprise infrastructure

The market has moved from “open vs closed” as an ideology to “open vs closed” as a deployment decision. Open weights are increasingly attractive where data residency, customization, or long-term cost control matters. Gemma 4’s Apache 2.0 positioning makes that easier to justify for teams that need internal flexibility and compliance comfort.

There is also a broader ecosystem effect. When a major lab ships an open family with strong capability, it raises the baseline for everyone else. Smaller vendors, local-model platforms, and enterprise AI teams can all build more ambitious products because the underlying open stack is better. That benefits innovation even when customers never deploy the model directly.

What it means for rivals

For Anthropic and Microsoft, Gemma 4 is a reminder that model quality is becoming more democratized. For OpenAI, it reinforces the need to keep improving agentic reliability and developer tooling. For enterprise IT teams, it widens the option set. The result is not a single winner but a more contested, more modular market.

That contest is healthy, but it is also messy. Once open models become “good enough” for a growing share of tasks, vendors can no longer rely on benchmark prestige alone. They need distribution, tooling, governance, and service reliability. In other words, the business of AI is becoming more like the business of enterprise software. That is probably where it was always headed.

OpenClaw and the Agent Runtime Problem

OpenClaw is a good example of what happens when AI agents move from theory to daily use. The ecosystem has enough traction to attract documentation, provider notes, update commands, and billing questions about whether Claude subscriptions count as third-party harness usage. That sort of complexity only emerges when a tool starts sitting between a model and a real workflow.

The central lesson is that agent software is becoming infrastructure, not novelty. Once agents are allowed to touch apps, local files, APIs, and long-running workflows, the key question is no longer “Can it do it?” but “Who controls it, who pays for it, and what happens when it misbehaves?” That is the real OpenClaw story.

Billing, identity, and trust

OpenClaw’s documentation makes clear that the line between subscription usage and external harness usage can be blurry. Anthropic’s apparent position that OpenClaw-based Claude login traffic is counted differently is more than a billing note; it is a sign that vendors are trying to define where product ends and platform begins. That distinction matters because it affects both economics and compliance.

It also hints at a coming wave of policy fights. If a local agent wrapper sits on top of a vendor’s consumer or subscription access, then the vendor may decide that the wrapper is no longer “native” usage. That is a likely point of friction across the whole agent market, not just OpenClaw. Distribution without licensing clarity is not a stable business model.

The security angle

There is a darker side too. Local agents are appealing because they promise privacy, speed, and control. But the more autonomous they become, the more dangerous they are if compromised. An agent that can act on a user’s behalf is also an agent that can be abused on a user’s behalf. That is why runtime security, identity boundaries, and explicit permissions have become central design issues.

The industry is learning that an agent runtime is not just a productivity feature. It is a new kind of attack surface. If OpenClaw helped popularize local agents, the next phase will likely be about hardening them so enterprises can trust them at scale.

The Enterprise AI Market Is Going Multi-Model

The biggest strategic shift in this wave is the normalization of multi-model AI. Microsoft is making it explicit in Copilot Cowork. Anthropic is building tooling that assumes code review and safety layers. Google is pushing open weights harder. OpenClaw is pushing local-first autonomy. Each move reflects the same realization: no single model family will own every use case, and the best products will increasingly route work across multiple systems.

That matters because it changes the economics of platform competition. In the earlier phase of the AI race, companies competed to prove they had the best frontier model. In the current phase, they are competing to prove they can operationalize AI safely inside real environments. That means distribution, orchestration, observability, and trust are becoming as important as raw benchmark performance.

From chatbot to system of systems

The old chatbot model was simple: ask, answer, maybe search. The new model is closer to a workflow orchestrator: inspect context, consult tools, ask for approval, act, and report back. Microsoft’s Copilot Cowork and Anthropic’s Claude Code updates both reflect this shift. The product is now an operating layer, not a conversation box.

That also explains why the market is talking more about governance. As soon as an AI can take action, it becomes a software actor, not just a text generator. The enterprise question becomes whether that actor can be inventoried, restricted, reviewed, and audited. If the answer is no, procurement will stall.
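The orchestrator loop named above (inspect context, consult tools, ask for approval, act, report back) can be sketched in a few lines. This is a toy illustration under stated assumptions: `run_task`, the keyword-based planning, and the `approve` callback are hypothetical and do not describe any vendor's runtime.

```python
from typing import Callable, Dict, List


def run_task(
    task: str,
    tools: Dict[str, Callable[[str], str]],
    approve: Callable[[str], bool],
) -> List[str]:
    """Run one task through an approval-gated loop and return a step-by-step report."""
    report: List[str] = []

    # 1. Inspect context (here: just normalize the request text).
    context = task.strip().lower()
    report.append(f"context: {context}")

    # 2. Consult tools: plan to use every tool whose name appears in the request.
    plan = [name for name in tools if name in context]
    report.append(f"plan: {plan}")

    for name in plan:
        # 3. Ask for approval before acting; the human control point.
        if not approve(name):
            report.append(f"skipped: {name}")
            continue
        # 4. Act.
        output = tools[name](context)
        report.append(f"done: {name} -> {output}")

    # 5. Report back.
    return report


tools = {
    "summarize": lambda ctx: "summary ready",
    "email": lambda ctx: "email sent",
}
# Hypothetical policy: read-only work is approved, outbound email is not.
for line in run_task("Summarize the notes and email the team", tools, lambda n: n != "email"):
    print(line)
```

The report records skipped actions as well as completed ones, which is the difference between a conversation box and a software actor that can be reviewed after the fact.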

Consumer vs Enterprise: Two Different Battles

The consumer market and the enterprise market are now diverging in visible ways. Consumers still want convenience, novelty, and low friction. Enterprises want control, policy alignment, cost predictability, and audit trails. The same model family may serve both, but the product demands are increasingly different.

That split is visible in Microsoft’s rollout posture, Anthropic’s review tooling, and OpenClaw’s local-first approach. A consumer might tolerate a quirky agent that saves time. An enterprise buyer usually will not, especially if the system can touch internal data or trigger actions without a clear trail. The winner in enterprise AI will be the vendor that can make autonomy feel boring, controlled, and supportable.

Why enterprises will move more slowly

Enterprises move slowly because failure is expensive. A model that hallucinates a summary is annoying. A model that misroutes approvals, edits the wrong document, or exposes sensitive data can create real financial and legal consequences. That is why features like security review, control planes, and permissioned execution matter so much.

The result is that enterprise AI adoption will likely be uneven. Some teams will move quickly on low-risk tasks like drafting, research, or code review. Others will stay cautious until governance and observability improve further. That caution is not a sign of failure; it is a sign that the products are finally touching real business processes.

Strengths and Opportunities

The current wave of AI updates is impressive because it shows the market maturing in several directions at once. The strongest players are no longer chasing novelty for its own sake; they are trying to build durable systems that fit how people actually work. That creates a lot of opportunity for vendors, integrators, and enterprise customers alike.

- Microsoft can turn Copilot into the default orchestration layer for office work.

- Anthropic can deepen trust in coding workflows through review and safety tooling.

- Google can use Gemma 4 to strengthen the open-model ecosystem.

- OpenClaw can help define the shape of local, user-controlled agents.

- Enterprise buyers get more choice and less lock-in.

- Developers get faster iteration with better guardrails.

- IT teams can align AI deployment with policy and governance.

Risks and Concerns

The most obvious risk is that the market will move faster than the control systems around it. Agentic AI is becoming powerful enough to be useful, but not yet mature enough to be fully trusted. That gap creates room for operational mistakes, security failures, and overconfident deployment decisions.

- Permission fatigue may cause users to approve actions too quickly.

- Automation bias may lead teams to overtrust model outputs.

- Billing ambiguity may create disputes around third-party harnesses.

- Security drift may leave local agents underprotected.

- Vendor fragmentation may increase integration overhead.

- Compliance gaps may appear if logs and audits are incomplete.

- Model routing complexity may make failures harder to diagnose.

Looking Ahead

The next phase of the AI race will probably be less about who has the cleverest demo and more about who can run the most dependable systems at scale. That favors companies that can combine strong models with enterprise controls, integration depth, and a realistic understanding of how work actually gets done. Microsoft’s Copilot pivot and Anthropic’s Claude Code updates both suggest that the market is moving in that direction quickly.

It is also likely that the market will become more pluralistic, not less. Open weights will keep improving, local runtimes will keep proliferating, and multi-model orchestration will become normal in enterprise products. The real question is not whether AI will be everywhere; it already is. The question is whether the systems around AI will become trustworthy enough for people to let them do more than talk.

What to watch next:

- Further expansion of Copilot Cowork beyond preview workflows

- More formal enterprise rollout of Agent 365

- Additional Claude Code review and safety capabilities

- Broader adoption of Gemma 4 in open-model deployment stacks

- New policy and billing rules for OpenClaw-style agent runtimes
