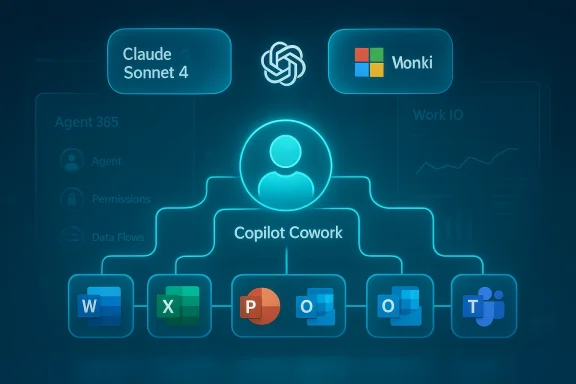

Microsoft’s Copilot has moved decisively from conversational helper to working teammate: this week the company unveiled Copilot Cowork, a Claude‑powered agent designed to plan, execute and return finished work across Microsoft 365, alongside a new Agent 365 control plane and a higher‑tier enterprise bundle for organizations.

Background

Microsoft’s Copilot program has been evolving for more than two years from a chat‑first assistive layer into a platform for agentic automation inside Windows and Microsoft 365. Early Copilot releases emphasized drafting, summarization and inline help; recent waves moved toward multi‑turn planning, document creation, and connectors that let Copilot act on user content across accounts. Those building blocks set the stage for the next step: agents that don’t just suggest, but do.

At the same time Microsoft has been deliberately unbundling model choice inside Copilot, adding Anthropic’s Claude family as selectable backends alongside existing providers. That multi‑model approach allows specific workloads to be routed to Claude models when the task or enterprise policy calls for it. The Copilot Cowork announcement formalizes a closer, research‑preview collaboration with Anthropic to deliver agentic, long‑running task automation.

What Copilot Cowork is — and what it promises

From helper to coworker

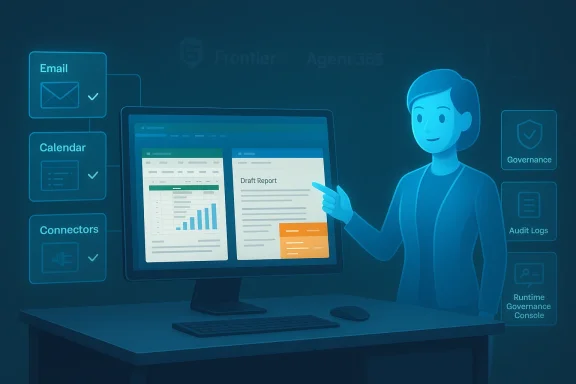

Copilot Cowork is explicitly framed as a coworker rather than an assistant. That means the agent is built to accept responsibility for multi‑step workflows (scheduling, assembling reports, building spreadsheets, researching topics, and returning finished outputs), not just to return suggestions or text snippets. Microsoft positions this as the practical next stage for workplace automation: hand the agent a goal, grant explicit, permissioned access, and receive a completed deliverable.

Key user‑facing capabilities Microsoft describes include:

- Permissioned access to calendar, mail, files and apps so the agent can act with context.

- Long‑running task orchestration — agents that can continue work beyond a single chat interaction.

- Outputs returned as finished artifacts (documents, spreadsheets, schedules) rather than ephemeral suggestions.

- Integration with Copilot Studio and the Agent 365 control plane to manage, govern and instrument agent behavior at scale.

Why Claude?

Microsoft’s selection of Anthropic’s Claude models for Cowork follows the company’s broader decision to offer model choice inside Copilot. Claude’s capabilities, particularly in multi‑step reasoning and agentic behavior in Anthropic’s Cowork experiments, made it a natural fit for this kind of task‑oriented agent. Microsoft’s approach is additive rather than a replacement of its existing models: customers can route specific workloads to Claude when that model’s traits are desired.

Architecture and product surfaces

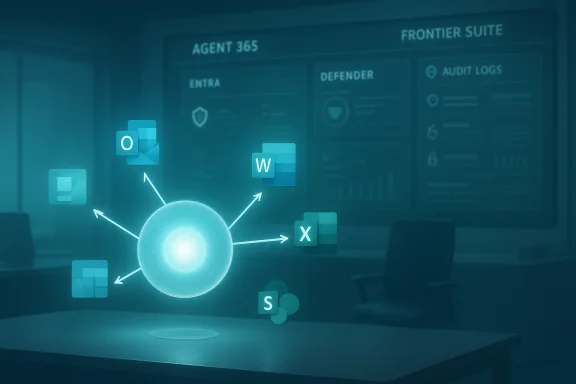

Agent 365 control plane

Copilot Cowork will be managed through a new Agent 365 control plane, a governance and orchestration layer intended to let IT and admin teams provision agents, control data flows, and monitor agent activity across the enterprise. Agent 365 is presented as the instrument for enforcing policies, audit trails, and operational settings necessary for deploying agentic AI in regulated environments. Microsoft has signaled that this control plane will be central to how Cowork is adopted in large organizations.

Copilot Studio and “Computer Use”

Copilot Studio now includes capabilities often described as “computer use”: a set of tools that let agents perform UI‑level interactions on desktop and web apps. That is, agents can operate mouse and keyboard actions in a controlled way to interact with legacy systems and web portals that have no API. This is a crucial enabler for real‑world automation where backend integrations are unavailable. It also raises important security and reliability questions that IT teams must manage.

Multi‑model orchestration

Copilot is becoming an orchestration layer for multiple LLM backends. The Researcher agent and Copilot Studio can now select between OpenAI models, Microsoft‑hosted models, and Anthropic Claude variants depending on workload, policy, or developer configuration. For Cowork, Anthropic’s Claude engines are used in a research‑preview context to run long‑running, agentic tasks. Microsoft emphasizes opt‑in selection and tenant admin controls rather than an automatic or forced rerouting of prompts to third‑party models.

Enterprise packaging and licensing: the E7 signal

Microsoft’s announcements include a commercial framing that bundles agent management and agentic capabilities into a premium enterprise offering, referenced in internal materials as a higher‑tier E7 bundle. The E7 positioning signals that Microsoft intends Copilot Cowork and Agent 365 to be a seat‑based, auditable enterprise product rather than a simple add‑on for consumer subscribers. That packaging will affect procurement, licensing costs, and rollout strategies for IT organizations.

Be cautious, however: at the time of the preview, pricing and final general availability (GA) dates had not been finalized in Microsoft’s public briefings. Enterprises should treat any commercial commitments as subject to change until Microsoft posts formal pricing and terms. Where specific dates or price points are not included in Microsoft’s preview materials, those items remain unverifiable and should be validated with Microsoft sales channels.

Security, privacy and governance: the hard questions

Permissioned access is necessary, not optional

Microsoft highlights permissioned access as a critical design requirement: Cowork agents act only when a user or tenant explicitly grants them access to mail, calendar, files, or apps. That model is meant to reduce accidental exposure while enabling automation. But permissioned access alone does not eliminate risk: misconfigured permissions, over‑broad scopes, or lingering tokens can still create exposure vectors that IT must monitor.

Data handling and third‑party model hosting

Because Copilot Cowork uses Anthropic’s Claude models in preview, enterprises must pay attention to where data is processed and stored. Microsoft’s multi‑model approach includes options that may route workloads to third‑party model hosts. Microsoft has indicated opt‑in behavior and admin controls, but the exact boundaries of data residency, logging, and third‑party retention policies depend on contract terms and implementation choices. Organizations in regulated sectors should insist on concrete, written guarantees before routing sensitive data through third‑party models.

Auditability and explainability

Copilot Cowork is designed to return finished artifacts, which raises two audit requirements:

- A clear provenance trail showing which agent steps produced each part of an output.

- Verifiable logs that capture agent actions (what it accessed, what it changed, and when).
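One way to picture the two requirements above is a hash‑chained action log, where each entry records what the agent touched and also commits to the entry before it. The sketch below is illustrative only: the `AuditLog` class, its field names, and the example resource strings are assumptions made for this article, not the Agent 365 log format, which Microsoft has not published in the preview materials.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import List

GENESIS = "0" * 64  # placeholder hash that anchors the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous entry's hash together with this entry's payload."""
    body = json.dumps(payload, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Hypothetical append-only log of agent actions (not a Microsoft API)."""
    entries: List[dict] = field(default_factory=list)

    def record(self, agent_id: str, step: int, resource: str,
               action: str, timestamp: str) -> dict:
        # Payload answers: which agent step, what it accessed or changed, when.
        payload = {
            "agent_id": agent_id,
            "step": step,
            "resource": resource,
            "action": action,
            "timestamp": timestamp,
        }
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = dict(payload, prev_hash=prev_hash,
                     hash=_digest(prev_hash, payload))
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS
        for entry in self.entries:
            payload = {k: v for k, v in entry.items()
                       if k not in ("hash", "prev_hash")}
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != _digest(prev_hash, payload):
                return False
            prev_hash = entry["hash"]
        return True
```

A reviewer could call `verify()` on an exported log before trusting it as evidence; tampering with any recorded action makes the check fail. Whether a real deployment gets equivalent guarantees depends on what the vendor's log export actually provides.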

UI automation and brittle automations

The “computer use” and UI‑level automation features are practical but brittle by nature: agents that click through web pages or emulate desktop interactions can break when interfaces change. Organizations must expect maintenance overhead and define guardrails:

- Use UI automation only where APIs are unavailable and monitor for failures.

- Combine UI interactions with log‑driven health checks and fallback workflows.

- Limit UI automation to narrowly scoped tasks with robust error handling.
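The guardrails above can be sketched as a small dispatcher: use the API when one exists, fall back to UI automation only when it does not, log every UI failure, and hand off to a fallback workflow after a bounded number of attempts. Everything here is hypothetical (`run_task`, `UIAutomationError`, and the callables passed in); it does not reflect how Copilot Studio implements computer use.

```python
from typing import Callable, Optional


class UIAutomationError(Exception):
    """Raised by a UI-driven step, e.g. when an expected control is missing."""


def run_task(
    api_call: Optional[Callable[[], str]],
    ui_call: Callable[[], str],
    fallback: Callable[[], str],
    log: Callable[[str], None],
    max_ui_attempts: int = 2,
) -> str:
    # Guardrail 1: UI automation is used only when no API is available.
    if api_call is not None:
        return api_call()

    # Guardrail 2: every UI failure is logged so health checks can see it.
    for attempt in range(1, max_ui_attempts + 1):
        try:
            return ui_call()
        except UIAutomationError as exc:
            log(f"ui attempt {attempt} failed: {exc}")

    # Guardrail 3: after bounded retries, use the fallback workflow
    # (for example, queue the task for a human) instead of looping.
    return fallback()
```

Keeping the retry count small is a deliberate choice in this sketch: a UI that changed will usually keep failing, so escalating quickly is cheaper than retrying.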

Operational and business impact

Productivity gains — real but variable

If Copilot Cowork works as marketed, teams will see meaningful reductions in repetitive knowledge‑work: meeting scheduling that reconciles complex calendars, multi‑document research briefs, or spreadsheet construction from natural‑language prompts. In practice the productivity delta will vary by task complexity, data quality, and the amount of human supervision retained. Early adopters should pick high‑value, low‑risk workflows for pilots.

Cost and governance tradeoffs

Agentic automation shifts budget from manual labor to platform and governance costs. Organizations will need to weigh:

- License and seat costs (E7 tier and agent seats).

- Model consumption costs if routing to external backends like Claude.

- Engineering and SRE effort to maintain automation reliability.

- Compliance and legal review costs for data flows.

IT and security team roles

Successful rollouts depend on tight collaboration between product teams and IT/security. Practical actions include:

- Creating a pilot governance policy and a whitelist of allowed agent tasks.

- Establishing least‑privilege permissions for Cowork agents.

- Enabling comprehensive auditing via Agent 365 and validating log exports.

- Running red‑team tests to simulate agent misuse or credential leakage.

Risks and recommended mitigations

1. Hallucinations and incorrect outputs

Risk: Agents may synthesize plausible but incorrect facts or spreadsheets with erroneous formulas.

Mitigation:

- Require human review for any outputs used in decision‑making.

- Configure Copilot Cowork to annotate outputs with source citations and provenance metadata where available.

- Use the Agent 365 control plane to enable verification and automated sanity checks.

2. Over‑privileged access and data leakage

Risk: An agent with excessive permission could expose sensitive mail, calendars, or files.

Mitigation:

- Apply least privilege; grant access just long enough for the task and revoke tokens automatically.

- Use conditional access and session limits tied to Agent 365 policies.

- Monitor agent sessions in near real time and configure alerting for anomalous access patterns.
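As a minimal sketch of the first mitigation, a grant can carry both an explicit scope set and an expiry, so that access lapses on its own when the task window closes. The `Grant` type, `issue_grant`, `is_allowed`, and the scope strings are made up for illustration; they are not Agent 365 or Microsoft Graph identifiers.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable


@dataclass(frozen=True)
class Grant:
    """Hypothetical time-bound permission grant for one agent."""
    agent_id: str
    scopes: FrozenSet[str]
    expires_at: float  # seconds since the epoch


def issue_grant(agent_id: str, scopes: Iterable[str],
                now: float, ttl_seconds: float) -> Grant:
    # Least privilege: only the scopes the task needs, for only as long
    # as the task is expected to run.
    return Grant(agent_id, frozenset(scopes), now + ttl_seconds)


def is_allowed(grant: Grant, scope: str, now: float) -> bool:
    # Both conditions must hold: the grant is still live and the scope
    # was explicitly granted. Expiry acts as automatic revocation.
    return now < grant.expires_at and scope in grant.scopes
```

Passing `now` in explicitly, rather than reading the clock inside the functions, keeps the check easy to test and to replay against logged timestamps.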

3. Third‑party model data residency and retention

Risk: Routing data to Anthropic or other model hosts may violate data residency or contractual obligations.

Mitigation:

- Validate model hosting locations and retention policies in procurement.

- Keep high‑sensitivity workflows on models with strictly controlled data flow or on‑prem/enterprise‑hosted options when available.

- Require data minimization and redaction where appropriate.

4. Automation brittleness

Risk: UI automation breaks when interfaces change.

Mitigation:

- Prefer APIs where possible.

- Implement automated test suites that exercise UI automations on a schedule.

- Use feature flags to disable agents rapidly if errors spike.
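The last mitigation, disabling an agent when errors spike, can be sketched as a flag driven by a rolling window of recent outcomes. The class name, window size, and threshold below are illustrative assumptions, not settings exposed by Agent 365.

```python
from collections import deque


class AgentKillSwitch:
    """Hypothetical feature flag that turns itself off when errors spike."""

    def __init__(self, window: int = 20, max_error_rate: float = 0.3):
        self.results = deque(maxlen=window)  # most recent outcomes only
        self.max_error_rate = max_error_rate
        self.enabled = True

    def record(self, success: bool) -> None:
        self.results.append(success)
        # Wait for a full window so one early failure does not trip the flag.
        if len(self.results) < self.results.maxlen:
            return
        error_rate = self.results.count(False) / len(self.results)
        if error_rate > self.max_error_rate:
            # Stays off until an operator re-enables it deliberately.
            self.enabled = False
```

In this sketch the flag never re-enables itself, on the assumption that a human should confirm the underlying interface or data problem is fixed before the agent resumes.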

How this compares to competitive moves

Google, Anthropic, and other cloud vendors are pursuing similar visions: agents as workflow partners embedded inside productivity suites. Google’s Workspace has been evolving toward AI co‑authoring and agentic features inside Docs and Sheets, while Anthropic has been experimenting with Cowork‑style desktop agents that act on files in user‑designated folders. Microsoft’s differentiator is its tight integration with Microsoft 365, the Agent 365 governance plane, and the orchestration layer that offers model choice inside a single enterprise product. That matters for enterprises that already run critical workflows on Office apps and need centralized governance.

Practical rollout checklist for IT leaders

- Identify low‑risk, high‑value pilot workflows (e.g., recurring reporting, calendar triage).

- Define a permissions and provisioning policy for Cowork agents (least privilege, time‑bound tokens).

- Validate Agent 365 auditing capabilities and log export formats against compliance requirements.

- Test failure modes for UI automations and implement monitoring and rollback mechanisms.

- Conduct a legal and privacy review for model routing and third‑party processing.

- Budget for license, consumption, and ongoing SRE/maintenance costs.

- Train end users on when to trust agent outputs and how to escalate uncertain results.

Strengths and opportunities

- Real productivity uplift: Automating multi‑step, repetitive workflows can unlock substantial time savings and let knowledge workers focus on higher‑value tasks. Early previews suggest Copilot Cowork can produce finished deliverables rather than drafts, which is a meaningful change in outcome.

- Enterprise governance first: The introduction of Agent 365 as a control plane demonstrates Microsoft’s awareness that agentic AI needs centralized management, which is crucial for regulated customers.

- Model choice: Offering Anthropic’s Claude as an option reduces single‑vendor risk and lets organizations route workloads to the model best suited for a task. This is a pragmatic approach that can accelerate adoption across diverse enterprise needs.
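The opt‑in model routing described in the last item can be pictured as a per‑tenant policy lookup: a workload goes to a third‑party backend only if an admin has both mapped it there and opted the tenant in. The sketch below is a conceptual illustration; `TenantPolicy`, `select_backend`, and the backend and workload names are hypothetical and do not correspond to real Copilot configuration keys.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet

DEFAULT_BACKEND = "default-model"  # placeholder name for the tenant's default


@dataclass
class TenantPolicy:
    """Hypothetical admin-controlled routing policy for one tenant."""
    third_party_opt_in: bool = False
    routes: Dict[str, str] = field(default_factory=dict)  # workload -> backend
    third_party_backends: FrozenSet[str] = frozenset({"claude"})


def select_backend(policy: TenantPolicy, workload: str) -> str:
    backend = policy.routes.get(workload, DEFAULT_BACKEND)
    # Without an explicit opt-in, nothing is routed to a third-party host,
    # even if a route has been configured for the workload.
    if backend in policy.third_party_backends and not policy.third_party_opt_in:
        return DEFAULT_BACKEND
    return backend
```

Failing closed, back to the default backend, mirrors the article's point that routing to third‑party models is opt‑in rather than automatic.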

Key limitations and unresolved questions

- Final commercial terms and GA timing remain unclear. Microsoft’s preview materials and research‑preview timelines leave pricing and availability subject to later announcements; organizations should not assume immediate general availability or fixed pricing based on preview messaging alone.

- Exact data residency guarantees for third‑party model routing are not public in preview materials. Enterprises with strict residency requirements will need to secure contractual commitments before routing sensitive workloads through third‑party models. This is a material, verifiable risk until Microsoft publishes firm contractual terms.

- Operational maintenance overhead for UI automations. While “computer use” unlocks legacy automation, it also inherits the classic brittleness of RPA‑style approaches. Expect a nontrivial maintenance burden.

Recommendations for buyers and decision makers

- Start small and measure: run pilots that have clearly measurable KPIs (hours saved, time to completion, error rate).

- Insist on strong audit visibility from Agent 365 before expanding agent scopes.

- Bake security into procurement: require model hosting locality, retention policies, and incident response SLAs in writing.

- Train staff on agent behavior expectations and keep humans in the loop for high‑risk outputs.

- Maintain an internal register of agent tasks and prescriptive runbooks for when agents fail or produce unexpected results.
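The KPIs named in the first recommendation (hours saved, time to completion, error rate) reduce to simple arithmetic over timed runs of the same workflow before and during a pilot. The sketch below assumes each run is recorded with its duration and whether it needed correction; the `Run` type and `summarize` function are illustrative, and any figures fed in would come from an organization's own measurements.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class Run:
    minutes: float   # time to completion for one execution of the workflow
    had_error: bool  # True if the output needed correction or rework


def summarize(baseline: List[Run], pilot: List[Run]) -> Dict[str, float]:
    """Compare manual baseline runs against agent-assisted pilot runs."""
    base_avg = mean(r.minutes for r in baseline)
    pilot_avg = mean(r.minutes for r in pilot)
    return {
        "avg_minutes_baseline": base_avg,
        "avg_minutes_pilot": pilot_avg,
        "hours_saved_per_run": (base_avg - pilot_avg) / 60,
        "error_rate_pilot": sum(r.had_error for r in pilot) / len(pilot),
    }
```

Tracking the error rate alongside time saved matters here because human review time spent fixing agent output should count against the savings.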

Conclusion

Copilot Cowork marks a meaningful inflection point: Microsoft is moving Copilot from a conversational assistant to an agentic coworker capable of taking responsibility for end‑to‑end tasks. The research preview, built with Anthropic’s Claude models and managed through the Agent 365 control plane, combines promising productivity gains with significant governance and operational challenges.

For enterprises, the opportunity is real: automate repetitive, multi‑step workflows and reclaim knowledge worker time. But the risks are equally tangible: data residency, auditability, automation brittleness, and commercial uncertainties demand careful piloting, strict governance, and legal scrutiny before broad rollouts. Microsoft’s multi‑model orchestration and Agent 365 acknowledge these tradeoffs, but the burden falls on IT and security teams to translate preview promises into safe, reliable production practice.

Adopt with discipline, instrument with auditability, and treat agents as new organizational teammates that must be hired, managed, and offboarded with the same rigor as any human coworker.

Source: Windows Report https://windowsreport.com/microsoft...lot-cowork-agent-to-automate-workplace-tasks/