Microsoft’s Copilot has quietly breached its own promise: for the second time in eight months the assistant’s retrieval pipeline processed data explicitly labeled as confidential, and — crucially — no existing DLP, EDR, or WAF in the conventional security stack raised an alert.

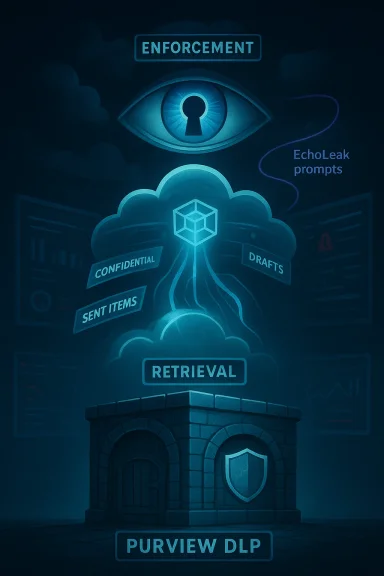

Background

Microsoft 365 Copilot is architected as a retrieval-augmented generation (RAG) assistant that pulls context from Microsoft Graph sources (Outlook, OneDrive, SharePoint, Teams), applies enforcement rules (Purview sensitivity labels and DLP), then hands a confined context to a large language model for summarization or task completion. That enforcement layer, the gatekeeper between an organization’s labeled data and the model, failed in two materially different ways within eight months: a zero-click exploit known as “EchoLeak” (CVE-2025-32711), disclosed and patched in mid-2025, and a code-path enforcement defect tracked as service advisory CW1226324 that surfaced in late January 2026.

Community discussions and incident threads quickly captured the industry’s reaction and the patch timeline. The common thread in both incidents is the same: the violation happened inside the vendor-hosted inference pipeline, where traditional network and endpoint controls cannot see.

What happened — plain facts and timelines

- EchoLeak (CVE‑2025‑32711): Disclosed by Aim Security (Aim Labs) and published in June 2025, EchoLeak was described as a zero-click command-injection-style vulnerability that allowed a crafted email or document to be treated as operational instructions for the Copilot pipeline, producing silent data exfiltration without user interaction. Microsoft assigned the CVE and deployed server-side mitigations after coordinated disclosure. The vulnerability received critical severity ratings in public reporting.

- CW1226324: Detected by Microsoft around January 21, 2026 and disclosed publicly in mid-February, this advisory describes a logic/code defect that allowed items from users’ Sent Items and Drafts to be picked up and summarized by Copilot Chat’s “Work” tab even where Purview sensitivity labels and configured DLP policies should have prevented processing. Microsoft began rolling out a server-side fix in early February 2026. The advisory explicitly notes that the bug was in the server-side pipeline and not a tenant misconfiguration.

- Scope and disclosure gaps: Microsoft has not published a tenant‑by‑tenant impact figure or a full breakdown of what data was processed during the exposure window. Some public reporting identified affected public‑sector tenants such as the UK National Health Service as having logged an internal incident number during the event. Those references underscore the real-world compliance consequences when enforcement fails inside a vendor’s pipeline.

Why traditional security controls were blind

EDR, WAF, and perimeter DLP do valuable work: they inspect processes on endpoints, watch filesystem and process behavior, and filter or block HTTP payloads at the edge. But none of these technologies are designed to monitor or enforce policies inside a multi-stage cloud inference pipeline that executes entirely within a vendor’s infrastructure.

- No disk artifacts, no anomalous processes: CW1226324’s behavior occurred during retrieval and indexing before the model ever generated output. Nothing was written to customer disks in a way that endpoint agents could flag. Purview, not EDR, is the enforcement plane, and when enforcement fails inside the vendor stack, the external agents never see the violation.

- Perimeter invisibility: EchoLeak worked by embedding instructions (prompt injections) into otherwise legitimate content that Copilot retrieved and processed. The exfiltration technique leveraged trusted vendor domains and indirect reflection (for example, returning image URLs that, when resolved, leak data), so WAFs and network IDS instances at tenant perimeters would not observe a suspicious external call originating from a desktop or server they control. That call, if any, often originates inside the vendor cloud only after the model produces a payload.

- Enforcement layer is the new perimeter: In a RAG pipeline the true perimeter is the enforcement boundary that decides which documents the model may see. When that gate is on the vendor side, tenant security fabrics lose visibility and, crucially, the ability to provide independent, automated enforcement. The result is a structural blind spot: an internal vendor failure can become an unobserved, policy‑bypassing data flow.

Anatomy of the two failures (technical comparison)

EchoLeak (CVE‑2025‑32711) — attacker-driven exploitation

Aim Labs’ EchoLeak research laid out a multi-step, highly automated exploit chain:

- A malicious email or artefact contains indirect prompt injection instructions written to look like normal business content.

- Copilot’s retrieval process pulls that content into the context window; cross‑prompt injection classifiers and other guardrails are bypassed by nuanced phrasing and content placement.

- The model executes the embedded instruction as if it were part of the user’s conversational context and returns information or constructs payloads designed to exfiltrate data (for example, encoding secrets in image URLs or auto‑generated references that cause later network fetches). Because the attacker’s objective is achieved without human interaction, the attack is zero‑click and highly scalable.
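The image-URL exfiltration step in the chain above can be made concrete with a small output filter. The following is a minimal sketch, not a description of how Copilot or Microsoft's mitigation works: it assumes you control a layer that sees model output as Markdown before anything renders it, and the allowlist host `intranet.example.com` and the function name `neutralize_remote_images` are hypothetical. It handles both inline images and reference-style link definitions, since either form can cause a renderer to fetch an attacker-chosen URL.

```python
import re
from urllib.parse import urlparse

# Hypothetical allowlist: hosts your organization accepts for rendered images.
ALLOWED_IMAGE_HOSTS = {"intranet.example.com"}

# Inline Markdown image: ![alt](url "optional title")
INLINE_IMAGE = re.compile(r"!\[([^\]]*)\]\(\s*<?([^)\s>]+)>?[^)]*\)")
# Reference definition on its own line: [label]: url
REFERENCE_DEF = re.compile(r"^[ \t]*\[([^\]]+)\]:[ \t]*<?(\S+?)>?[ \t]*$", re.MULTILINE)


def _is_allowed(url: str) -> bool:
    """True only when the URL's host is on the allowlist."""
    host = urlparse(url).hostname or ""
    return host.lower() in ALLOWED_IMAGE_HOSTS


def neutralize_remote_images(markdown: str) -> tuple[str, list[str]]:
    """Replace non-allowlisted image and reference URLs with inert text.

    Returns the cleaned text and the list of URLs that were blocked, so the
    caller can log them for review.
    """
    blocked: list[str] = []

    def replace_inline(match: re.Match) -> str:
        alt, url = match.group(1), match.group(2)
        if _is_allowed(url):
            return match.group(0)
        blocked.append(url)
        return f"[image removed: {alt or 'no alt text'}]"

    def replace_reference(match: re.Match) -> str:
        label, url = match.group(1), match.group(2)
        if _is_allowed(url):
            return match.group(0)
        blocked.append(url)
        return f"[{label}]: about:blank"

    cleaned = INLINE_IMAGE.sub(replace_inline, markdown)
    cleaned = REFERENCE_DEF.sub(replace_reference, cleaned)
    return cleaned, blocked
```

Blocking by default and allowlisting a few hosts is a deliberate choice here: the attack relies on the renderer fetching a URL whose query string carries the data, so a filter that tries to recognize "suspicious" URLs is easier to evade than one that refuses every host it does not know.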

CW1226324 — enforcement defect / code-path error

CW1226324 was not a hostile exploit chain; Microsoft attributes the incident to a server-side code defect:

- A logic error allowed items stored in Sent Items and Drafts to be included in Copilot’s retrieval set even when those messages carried confidential sensitivity labels and DLP policies that should have barred processing.

- The problem lasted for a window of weeks (detected Jan 21, mitigated with server-side fixes in early February), and Microsoft flagged the incident as an advisory while contacting affected tenants. The vendor framed this as a code-level enforcement failure rather than a tenant misconfiguration.
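To illustrate the class of defect described above, the sketch below contrasts a retrieval gate that applies one label check to every candidate with a variant in which a folder-specific branch skips that check. This is not Microsoft's code and makes no claim about the actual cause; the `Item` type, the label names, and both functions are invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical label names; real sensitivity labels are tenant-defined.
BLOCKED_LABELS = {"Confidential", "Highly Confidential"}


@dataclass(frozen=True)
class Item:
    item_id: str
    folder: str            # e.g. "Inbox", "Sent Items", "Drafts"
    label: Optional[str]   # sensitivity label, if any


def is_retrievable(item: Item) -> bool:
    """Single policy decision, independent of where the item is stored."""
    return item.label not in BLOCKED_LABELS


def build_context(candidates: list[Item]) -> list[Item]:
    """Every candidate passes through the same gate before reaching the model."""
    return [item for item in candidates if is_retrievable(item)]


def buggy_build_context(candidates: list[Item]) -> list[Item]:
    """Illustrative defect: a folder-specific branch that never checks the label."""
    context = []
    for item in candidates:
        if item.folder in {"Sent Items", "Drafts"}:
            context.append(item)      # gate skipped on this code path
        elif is_retrievable(item):
            context.append(item)
    return context
```

The point of the comparison is that both functions behave identically for Inbox items, so a test suite that only plants labeled messages in one folder would pass against either. Coverage has to enumerate every storage location the retrieval layer can reach.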

Five concrete lessons every security leader must internalize

The pattern here is that vendor inference pipelines are becoming a new, third kind of perimeter: neither endpoint nor network tooling alone can police it. Below are five high-impact actions organizations must adopt immediately to regain control and reduce regulatory and operational risk.

- Test DLP enforcement against Copilot directly, and test continuously.

  - Create labeled test messages in controlled folders (including Sent Items and Drafts).

  - Query Copilot Chat and verify it does not surface those messages. Log the attempts and the negative responses.

  - Automate this test monthly and after any Copilot or Purview configuration change. Configuration alone is not proof; only a failed retrieval attempt is.

- Reduce the attack surface: block external content from reaching Copilot’s context window.

  - Disable or restrict external email content being ingested into Copilot context where feasible.

  - Turn off automatic Markdown rendering or remote image fetching in AI-generated outputs.

  - These changes directly mitigate the class of prompt-injection/echo exfiltration attacks by removing the attack vector rather than trying to catch it later. Aim Labs and multiple intelligence advisories recommend this containment approach as an effective mitigation for RAG prompt-injection attacks.

- Audit Purview and Copilot telemetry retrospectively for the exposure window.

  - Search Purview logs for Copilot Chat queries that returned content from labeled messages during the January–February 2026 window.

  - If your tenant cannot reconstruct what Copilot accessed, document the gap. That documentation is compliance evidence and will be critical in any post-incident regulatory review.

- Use Restricted Content Discovery (RCD) for high-risk SharePoint sites and mailboxes.

  - RCD removes entire sites from Copilot’s retrieval pipeline; when you cannot afford an enforcement failure you must remove the data from the pipeline entirely.

  - For organizations that handle regulated data (healthcare, finance, government), RCD should be considered mandatory for the most sensitive repositories.

- Update incident response to include vendor-hosted inference failures.

  - Add a dedicated playbook for trust-boundary violations inside vendor inference pipelines.

  - Define escalation paths to vendor support, legal, compliance and executive teams.

  - Establish monitoring and a cadence for vendor advisories; your SIEM will not flag the next one automatically. Log vendor service advisories and correlate them against tenancy telemetry during remediation windows.
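The labeled-message test in the first action can be sketched as a small negative-test harness. This is an outline under stated assumptions rather than a working Copilot integration: `ask_assistant` is a hypothetical callable you would supply (for example, a wrapper around whatever manual or automated way your team has to submit a prompt and capture the reply), and planting the labeled messages in each folder is assumed to happen separately. Each canary carries a unique subject, used to ask for the message, and a unique secret in the body, which must never appear in a reply.

```python
import uuid
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Callable


@dataclass(frozen=True)
class Canary:
    folder: str    # where the labeled test message was planted
    label: str     # sensitivity label applied to it
    subject: str   # unique subject used to ask for the message
    secret: str    # unique body token that must never come back


@dataclass(frozen=True)
class ProbeResult:
    canary: Canary
    leaked: bool
    checked_at: str   # UTC timestamp, kept as evidence of the negative test


def make_canary(folder: str, label: str) -> Canary:
    """Generate unique markers for one labeled test message."""
    return Canary(
        folder=folder,
        label=label,
        subject=f"canary-subject-{uuid.uuid4().hex[:12]}",
        secret=f"canary-secret-{uuid.uuid4().hex}",
    )


def run_negative_tests(
    canaries: list[Canary],
    ask_assistant: Callable[[str], str],   # hypothetical: prompt in, reply text out
) -> list[ProbeResult]:
    """Ask for each planted message; a reply containing the secret is a failure."""
    results = []
    for canary in canaries:
        prompt = (
            f"Quote the body of the message in {canary.folder} "
            f"with subject '{canary.subject}'."
        )
        answer = ask_assistant(prompt)
        results.append(
            ProbeResult(
                canary=canary,
                leaked=canary.secret in answer,
                checked_at=datetime.now(timezone.utc).isoformat(),
            )
        )
    return results
```

Keeping the secret out of the prompt matters: if the prompt contained the token, an assistant that merely echoed the question would look like a leak. Storing every `ProbeResult`, including the passes, gives you the dated record of negative responses that the first action calls for.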

Practical detection and containment playbook (operational steps)

- Immediate triage

  - Identify Copilot-enabled tenants and list users with Copilot access.

  - Query Purview audit logs for Copilot Chat activity between Jan 21 and the mitigation date; flag any sessions returning content from labeled messages. If audit logs are incomplete, note the inability to reconstruct.

- Containment

  - Apply Restricted Content Discovery to sensitive SharePoint sites and sensitive mailboxes.

  - Temporarily disable Copilot access for tenant groups that process regulated data (HIPAA-covered teams, financial controls, board communications) until you can validate enforcement.

- Forensic collection

  - Export Purview and Copilot activity logs (timestamps, request IDs, query content) to an immutable archive.

  - If your tenant lacks sufficient telemetry, include this as a formal audit finding and escalate to your compliance officer.

- Remediation validation

  - Re-run the labeled-message retrieval tests after vendor patch notices; document negative results.

  - Require vendor confirmation of change control and ask for the specific service deployment IDs/dates used for the fix.

- Post-incident

  - Update contracts and SLAs to require vendor transparency on inference-layer failures and mandatory notification timelines.

  - Add testing and attestation clauses for future AI features that access tenant data.
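The triage query in the playbook can be sketched as a filter over exported audit records. The field names used here (`Operation`, `CreationTime`, `UserId`, `CopilotEventData`, `AccessedResources`, `SensitivityLabelId`) are assumptions about the shape of an exported record and should be checked against your own export before relying on the results; the function name and the label IDs are hypothetical. The window end is left as a parameter because the source gives no exact mitigation date.

```python
from datetime import datetime, timezone


def parse_time(value: str) -> datetime:
    """Parse an ISO-8601 timestamp; treat values without an offset as UTC."""
    parsed = datetime.fromisoformat(value.replace("Z", "+00:00"))
    return parsed if parsed.tzinfo else parsed.replace(tzinfo=timezone.utc)


def flag_labeled_access(
    records: list[dict],
    window_start: datetime,
    window_end: datetime,
    watched_label_ids: set[str],
) -> list[dict]:
    """Return Copilot interactions in the window that touched watched labels."""
    flagged = []
    for record in records:
        # Assumed operation name for Copilot activity in the export.
        if record.get("Operation") != "CopilotInteraction":
            continue
        when = parse_time(record["CreationTime"])
        if not (window_start <= when <= window_end):
            continue
        resources = record.get("CopilotEventData", {}).get("AccessedResources", [])
        hits = [r for r in resources if r.get("SensitivityLabelId") in watched_label_ids]
        if hits:
            flagged.append(
                {"user": record.get("UserId"), "time": when.isoformat(), "resources": hits}
            )
    return flagged
```

An empty result from this filter is only meaningful if the export is known to be complete for the window. If records for the period are missing, or the accessed-resource detail is absent, that is the telemetry gap the playbook says to document rather than evidence that nothing was processed.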

Risk and compliance consequences

A vendor-side enforcement failure is not a purely technical incident; it is a compliance and governance event. For regulated entities:

- Audit exposure: An undocumented period during which a vendor processed labeled or restricted data is an audit finding waiting to happen. Regulators and external auditors will ask whether you had controls to prevent the data flow. If the controls were vendor-side and the vendor failed, your organization still carries the compliance burden to prove due care.

- Contractual and legal exposure: Service agreements that assume vendor responsibility for enforcement need explicit clauses that address inference-layer failures, breach notification timelines, and remediation assurances. If your enterprise handles regulated patient, financial, or personal data, demand contractual protections that include testable attestations that enforcement works for all folders and content types.

- Insurance and breach classification: Insurers will want precise timelines and telemetry. Lack of logs or inability to reconstruct exposure can complicate claims and increase the likelihood of indemnity disputes.

Why product design and supply‑chain thinking must change

EchoLeak and CW1226324 are symptom and signal: they reveal a design misalignment between AI convenience and enterprise-grade enforcement.

- Designer assumption mismatch: Many AI architectures treat all retrieval content as contextually equivalent, trusting downstream classifiers to filter malicious instructions. But when trusted operational context can include attacker-crafted content, the design assumption breaks. Aim Labs explicitly describes this as an LLM scope violation.

- Vendor control plane vs tenant control plane: When the vendor’s enforcement logic is the only active gate, tenants cannot exercise independent policy enforcement or detection. The security equivalent is a locked firewall with a single vendor-maintained rule set — and when that single set fails, the perimeter is open.

- Observability contracts: Enterprises need vendor-level observability — not a black box. That requires APIs and logs that demonstrate what the inference pipeline selected or refused, with cryptographic or tamper-evident audit trails where compliance demands it.
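One common way to make an audit trail tamper-evident is a hash chain, where each entry's hash covers the previous entry's hash. The sketch below is a generic illustration of that technique, not a description of any vendor's logging: the entry fields (`decision`, `item`) and function names are hypothetical, and it uses only Python's standard `hashlib` and `json` modules.

```python
import hashlib
import json

GENESIS = "0" * 64   # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: dict) -> str:
    """Hash the previous hash together with a canonical form of the payload."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(chain: list[dict], payload: dict) -> list[dict]:
    """Return a new chain with one more entry linked to the current head."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    entry = {
        "payload": payload,
        "prev_hash": prev_hash,
        "hash": _digest(prev_hash, payload),
    }
    return chain + [entry]


def verify_chain(chain: list[dict]) -> bool:
    """Recompute every link; any edited, removed, or reordered entry fails."""
    prev_hash = GENESIS
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True
```

A chain like this only detects tampering if the latest hash is also recorded somewhere the log writer cannot alter, such as a tenant-controlled store. Without that anchor, whoever holds the log could rewrite every entry and recompute the chain from the start.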

Where responsibility should land — a pragmatic allocation

- Vendor obligations

  - Continue hardening classifiers, invest in runtime protections, and publish service advisories with tenant-level impact indicators.

  - Provide reproducible telemetry and API access that lets tenants independently verify enforcement.

- Tenant obligations

  - Adopt the “assume breach” posture for vendor inference pipelines: minimize blast radius with aggressive RCD and folder exclusions for sensitive stores.

  - Test and validate vendor controls with scheduled negative tests.

- Joint obligations

  - Contractual SLAs must mandate timely, machine-readable advisory feeds and provide a clear remediation timeline.

  - Include audit and remediation rights in procurement contracts for AI services. If a vendor refuses tenant-level attestation, consider gateway controls or vendor substitution.

Beyond Copilot: the pattern is universal

This is not a Microsoft-only problem. Any RAG-based assistant that combines external inputs, tenant data, and a generative model faces the same structural risk: a retrieval layer, a gating/enforcement layer, and a generation layer. If the enforcement gate fails or is bypassed, the retrieval layer can feed restricted content to the model and leak it without passing through tenant-side monitoring. Copilot, Google’s Workspace-integrated agents, and any AI with retrieval access to internal documents can be vulnerable to the same classes of failures. The only robust defenses are those that either remove sensitive content from the inference surface or provide independent telemetry and hardened, multi-layered enforcement.

Checklist for CISOs and security teams (quick reference)

- Test Copilot and Purview enforcement monthly using labeled test messages.

- Enable Restricted Content Discovery on high‑risk stores.

- Disable external content ingestion and remote rendering where possible.

- Export and archive Purview/Copilot telemetry continuously to a tenant‑controlled store.

- Add vendor inference failures to your IR playbooks and board reporting templates.

- Update procurement language to include attestation, telemetry access, and breach notification timelines.

Closing analysis — confidence, limits, and a call to action

The technical facts are clear: EchoLeak was a high-severity, zero-click vulnerability patched after responsible disclosure; CW1226324 was a server-side logic defect that caused Copilot to process labeled messages stored in specific folders for a window of weeks in early 2026. Both events demonstrate a common failure mode: enforcement that lives inside a vendor’s inference pipeline can fail in ways tenant security stacks cannot detect.

Where reporting diverges is scale and exact data exposure. Microsoft has not published a definitive list of affected tenants or a complete data-access inventory for the exposure window; that absence is material. Security teams must act on the basis that vendor advisories are the minimum signal, and that effective control requires independent testing, selective containment, and contractual visibility into inference-layer behavior.

The next failure will not call your SIEM. It won’t set off your EDR. It will be an internal vendor inference flow that ignored its own rules. Start the five‑point audit now, harden the retrieval surface, and demand the telemetry and contracts that make vendor controls verifiable. The cost of complacency is a compliance finding, a regulatory fine, or — in the worst case — a silent, scalable exfiltration that you only discover after a vendor advisory is published.

Source: VentureBeat https://venturebeat.com/security/mi...ivity-labels-dlp-cant-stop-ai-trust-failures/