Microsoft’s new Copilot Health reframes the company’s approach to medical conversations by explicitly separating clinically focused interactions from everyday Copilot chats — a design choice that promises clearer signal, tighter controls, and better provenance for health guidance, but that also raises thorny questions about liability, data governance, and how useful a consumer-facing medical assistant can be without clinical oversight.

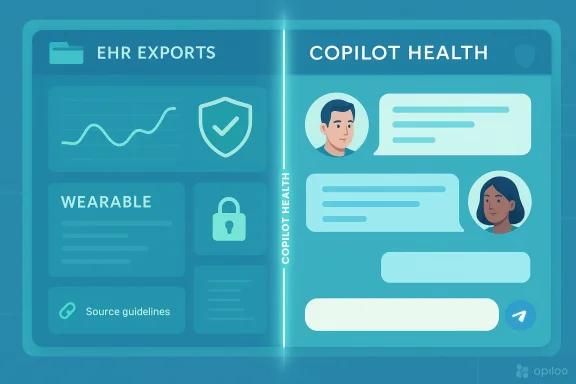

Source: Axios Copilot Health separates medical chats from general AI

Background

Microsoft has steadily expanded Copilot from a productivity assistance layer into a family of specialized assistants. Over the last year the product line has grown to include enterprise-focused tools, a consumer Copilot integrated across Windows and Edge, a voice- and ambient-driven Dragon Copilot for clinical settings, and now Copilot Health, a service designed to handle health queries and personal medical data in a distinct workflow from general-purpose chat.

This distinction matters because health-related conversations are not ordinary information queries: they can influence decisions about care, medications, and urgent actions. Microsoft’s public materials and recent reporting indicate Copilot Health will let users upload electronic health records (EHRs) along with wearable and fitness data, and ask sustained medical questions, while routing those conversations through systems and content sources intended to be more clinically credible than generic large language model (LLM) outputs.

Overview: What Copilot Health is positioned to do

- Provide a separate health-focused experience that isolates medical chats from general Copilot interactions.

- Accept medical records and wearable data so Copilot Health can synthesize an individual’s history and ongoing readings when answering questions.

- Surface trusted, editorially reviewed content as provenance for its answers, including licensed consumer health content from established publishers.

- Lean on retrieval and grounding techniques (rather than freeform generation alone) to reduce hallucinations and show the origins of medical guidance.

- Offer a continuum from consumer-facing explanations to enterprise clinical tools (e.g., Dragon Copilot), while keeping patient safety disclaimers prominent.

Why Microsoft is separating medical chats from general AI

Trust, safety, and user expectations

Medical queries carry higher stakes. People expect health information to be evidence-based, cited, and conservative in tone. By creating a distinct Copilot Health pathway, Microsoft is signaling a recognition that the usual Copilot conversational surface, optimized for productivity, creativity, and speed, should not be the default place for potentially life-impacting advice.

Technical and product benefits

Segregating medical interactions allows Microsoft to:

- Use specialist retrieval systems and curated knowledge bases for health queries.

- Apply stricter guardrails and disclaimers tailored to clinical risk.

- Route conversations to different model stacks or safety layers (for example, a retrieval-augmented system that prefers cited, peer-reviewed content).

- Limit data flows: medical data can be kept under more restrictive governance, with different retention and export rules.
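
The routing idea in the list above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of Microsoft's implementation: the keyword set, the `Route` type, and the retention numbers are all hypothetical, and a production router would more plausibly use a trained classifier than keyword matching.

```python
from dataclasses import dataclass

# Hypothetical keyword list; a production router would likely use a trained classifier.
HEALTH_TERMS = {"medication", "dose", "symptom", "blood", "glucose", "diagnosis", "pain"}


@dataclass(frozen=True)
class Route:
    name: str                 # which pipeline handles the message
    retention_days: int       # illustrative retention window, not a real policy
    requires_citations: bool  # whether answers on this route must cite sources


GENERAL = Route("general", retention_days=365, requires_citations=False)
HEALTH = Route("health", retention_days=30, requires_citations=True)


def route_message(text: str) -> Route:
    """Send a message to the health route if it mentions any health term."""
    words = {w.strip(".,?!:;").lower() for w in text.split()}
    return HEALTH if words & HEALTH_TERMS else GENERAL
```

The point of the sketch is that the route, not the message, carries the stricter governance settings, so retention and citation rules follow from classification automatically.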

Business and regulatory incentives

Healthcare is an enormous market, and big tech companies are racing to establish trusted footholds. For Microsoft, positioning Copilot as a bridge between everyday users and clinical-grade information (and licensable content) opens commercial opportunities while also pushing the company into territories where regulation, vendor liability, and institutional procurement matter.

How Copilot Health likely works (what’s stated and what’s inferred)

Stated capabilities

Microsoft has outlined, in product pages and research summaries, that Copilot Health will:

- Accept uploads of EHR exports and wearable data.

- Use licensed content and peer-reviewed sources to ground answers.

- Provide user-facing explanations with provenance: indicating which statements are drawn from the user’s records and which are from external content.

Probable architecture (inferred)

While Microsoft has not disclosed full technical details, the separation model most vendors adopt for high-risk domains suggests Copilot Health will use layered techniques:

- Retrieval-augmented generation (RAG): queries first search a curated, indexed set of trusted health documents (publisher content, guidelines, clinical literature). The model synthesizes answers citing that retrieved material.

- Model routing / specialist ensembles: a health-specific model or a set of specialist components verify and rephrase responses generated by a general model.

- Identity and permission checks: the system verifies consent and may require explicit opt-ins for any upload of medical records or device data.

- Data isolation: medical data is stored, encrypted, and processed under separate policies and potentially different physical or logical controls than consumer chat logs.
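
To make the inferred layering concrete, here is a rough sketch of the consent check plus the retrieval-and-citation half of a retrieval-augmented pipeline. The generation step is deliberately omitted: the function returns retrieved passages with their sources rather than model-written text. Everything here is an assumption for illustration, including the corpus entries (invented placeholders), the word-overlap ranking, and the function names; none of it reflects Microsoft's actual architecture.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    source: str  # named guideline or publisher piece (placeholder names here)
    text: str


# Toy curated corpus; the titles and text are invented placeholders.
CORPUS = [
    Document("Example Guideline A", "Adults should have blood pressure checked regularly."),
    Document("Example Publisher B", "Regular sleep supports recovery after exercise."),
]


def tokenize(text: str) -> set[str]:
    """Lowercase the words and strip basic punctuation."""
    return {w.strip(".,?!:;").lower() for w in text.split()}


def retrieve(query: str, corpus: list[Document], k: int = 1) -> list[Document]:
    """Rank documents by word overlap with the query; drop zero-overlap documents."""
    q = tokenize(query)
    scored = [(len(q & tokenize(d.text)), d) for d in corpus]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: s[0], reverse=True)
    return [d for _, d in ranked[:k]]


def answer(query: str, has_consent: bool) -> dict:
    """Return retrieved passages with their sources, or refuse without consent."""
    if not has_consent:
        return {"status": "consent_required", "citations": []}
    hits = retrieve(query, CORPUS)
    if not hits:
        # Nothing in the curated set supports an answer, so say so instead of guessing.
        return {"status": "no_grounded_answer", "citations": []}
    return {
        "status": "ok",
        "passages": [d.text for d in hits],
        "citations": [d.source for d in hits],
    }
```

A real system would swap the overlap score for an embedding or search index and pass the retrieved passages to a model, but the control flow (check consent, retrieve, refuse when nothing is grounded, attach sources) would stay recognizably the same.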

The strengths: What Copilot Health brings that matters

1. Grounded answers and provenance

One of the clearest advances is the emphasis on sourcing and provenance. Answers that show “this recommendation is based on guideline X and your blood pressure readings from last month” are dramatically more useful and scrutinizable than free-floating LLM assertions.

Benefits:

- Users and clinicians can inspect the underlying evidence.

- Reduces the risk of confident misinformation by tying claims to documents.

2. Integration of personal health data

Allowing uploads of EHRs and wearable data can make advice contextually relevant. Instead of generic, one‑size‑fits‑all responses, Copilot Health can potentially:

- Summarize trends (e.g., weight, steps, heart rate variability).

- Flag data points that suggest escalation (e.g., dangerously high glucose).

- Provide tailored reminders or checklists aligned to a patient’s medications and conditions.
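
The trend-and-flag behaviour described above reduces to simple arithmetic over a series of readings. The sketch below is a hypothetical illustration only: the function name and the half-versus-half trend heuristic are assumptions, and the alert limit is supplied by the caller because choosing a clinically meaningful threshold is outside the scope of a code example.

```python
from statistics import mean


def summarize_readings(readings: list[float], alert_above: float) -> dict:
    """Summarize a series of readings and flag values above a caller-supplied limit."""
    if not readings:
        raise ValueError("readings must not be empty")
    half = len(readings) // 2
    if half == 0:
        # A single reading has no trend.
        trend = "flat"
    else:
        # Compare the mean of the later half with the earlier half as a crude trend signal.
        earlier, later = mean(readings[:half]), mean(readings[half:])
        trend = "rising" if later > earlier else "falling" if later < earlier else "flat"
    return {
        "mean": mean(readings),
        "trend": trend,
        "flagged": [r for r in readings if r > alert_above],
    }
```

Any value in `flagged` would be a candidate for the escalation messaging the article describes, subject to clinician-defined limits rather than a hard-coded number.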

3. Licensing and editorial scaffolding

Licensing vetted consumer health content from reputable publishers, and surfacing it inside answers, strengthens credibility and offers a path to monetize trustworthy content rather than relying on opaque model memorization.

Advantages:

- Editorial oversight can catch errors and update guidance.

- Publishers gain control over how their content is presented and monetized.

4. Product-level separation reduces accidental exposure

By isolating health chats from general Copilot history and social features (such as group chats or cross-app memory), Microsoft can better prevent accidental sharing of sensitive health data.

This design helps align with user expectations and basic privacy hygiene.

The risks and unresolved questions

1. Clinical liability and regulatory ambiguity

A central unresolved issue is where responsibility lies when Copilot Health provides incorrect or harmful guidance. Microsoft can state in disclaimers that Copilot is not a medical device and is not a substitute for professional care, but in practice users may act on conversational advice.

Regulatory landscapes (FDA in the U.S., MHRA in the U.K., EU rules) have been evolving. Whether Copilot Health crosses the line into regulated medical advice, especially when integrated with EHRs, will depend on functionality, claims made about the product, and how it’s marketed to consumers vs. institutions.

2. Accuracy vs. immediacy trade-offs

Relying on retrieved, editorial content can reduce hallucinations but introduces latency and complexity. More importantly, not all clinical questions map cleanly to consumer-facing articles. Diagnostic nuance, rare conditions, and comorbidity interactions are difficult to capture with canned guidance.

Potential outcome:

- Safer, but more conservative answers that may under‑recommend interventions.

- Or, conversely, insufficient caution when encountering edge cases.

3. Data security and HIPAA-like protections

Accepting EHR uploads and device data raises immediate questions about storage, access controls, and third‑party data sharing. Enterprises often require Business Associate Agreements (BAAs) and strict HITRUST-level assurances for HIPAA compliance. For consumer deployments that straddle both personal and institutional contexts, Microsoft must clarify:

- Which features are covered by enterprise agreements and which are consumer-level.

- Data retention policies and the ability for users to delete uploaded records.

- Whether de-identified data is used for model training and, if so, with what opt-in mechanisms.

4. Trust vs. commercial incentives

Licensing deals with publishers and a broader push to multiply trusted sources are good on the surface. But these arrangements can also create perverse incentives:

- Will Microsoft prioritize licensed publisher content over open clinical guidelines where they conflict?

- How transparent will the company be about ranking and weighting of sources?

- Could pay-to-play dynamics skew which guidance appears more prominently?

5. Overreliance by users and clinicians

There is a risk that both patients and overburdened clinicians might lean on Copilot Health for triage or decision support beyond its intended scope. If clinicians use consumer Copilot Health outputs to inform care without independent verification, patient safety could suffer.

6. Equity and data representativeness

Health models trained or tuned on published content can inadvertently reflect biases present in the literature and in clinical practice. Device data, such as readings from wearables, is unevenly distributed across socioeconomic groups and skin tones, which may affect accuracy for underrepresented populations.

Practical implications for IT teams, clinicians, and end users

For IT and security teams

- Treat Copilot Health as a distinct service: design network segmentation, separate logging, and explicit access controls for health data.

- Review Microsoft’s contractual terms carefully — clarify whether medical data is covered by enterprise compliance frameworks (e.g., BAAs).

- Plan for data deletion and export workflows; ensure users can revoke consent and that admins can audit uploads.

For clinicians and health systems

- Evaluate Copilot Health in controlled pilot scenarios before wide deployment. Test edge cases and failure modes, particularly around medication guidance and triage recommendations.

- Maintain human-in-the-loop policies: AI-generated summaries or suggestions should be verified by licensed clinicians before they inform care.

- Use Copilot Health as a productivity tool (summaries, paperwork reduction) rather than an independent diagnostic engine.

For consumers and patients

- Share medical data only when necessary and understand retention rules.

- Treat Copilot Health as informational rather than definitive medical advice. For urgent symptoms, seek immediate medical attention.

- Use provenance features — if a Copilot response cites a guideline or publisher piece, read the source or consult a clinician.

How regulators and policymakers should respond

- Define clear criteria for when an AI assistant becomes a medical device. Guidance should treat functionality, not marketing, as the deciding factor.

- Require transparent provenance for health suggestions: users should be able to trace claims back to named guidelines or editorial sources.

- Mandate opt-in and granular consent for the use of personal health data in model training, with auditing requirements.

- Encourage standards for interoperability and secure EHR ingestion so data flows are auditable and reversible.

Where Copilot Health fits in the broader healthcare AI landscape

Copilot Health is the latest manifestation of a larger trend: mainstream AI tools are moving from novelty chatbots to contextualized assistants that combine personal data, curated knowledge, and task automation. Competitors from cloud providers and AI platforms are pursuing similar verticalized assistants for triage, clinical documentation, and patient engagement.

What’s distinctive about Microsoft’s approach:

- Deep integration across desktop and productivity surfaces (Word, Outlook, Windows).

- A dual track for consumer and enterprise — a consumer-facing Copilot Health and an enterprise Dragon Copilot for clinical workflows.

- Emphasis on licensed editorial content and provenance as a core safety mechanism.

Best practices Microsoft should publish (and what to watch for)

If Microsoft wants Copilot Health to be both useful and responsible, readers should look for these commitments:

- Explicit data governance: clear documentation of storage, encryption, access, retention, and deletion policies for uploaded health data.

- BAA and regulatory mapping: clear statements about what features fall under enterprise compliance agreements and what features are consumer-only.

- Transparency about training: definitive answers about whether user-uploaded, de-identified data will ever be used to improve models, and how opt-in is obtained.

- Versioned provenance: the ability to see the exact source and timestamp of the guidance used to produce an answer, with links to the underlying guideline or article.

- Robust error reporting: simple ways for clinicians and users to flag incorrect or harmful outputs, and a public dashboard of safety incidents and remediation steps.
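
As an illustration of what "versioned provenance" could mean in practice, the sketch below models one record per claim, carrying a named source, a source version, and a retrieval timestamp. The field names and helper functions are hypothetical; Microsoft has not published a schema, so this is only one plausible shape for such a record.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ProvenanceRecord:
    claim: str           # the statement shown to the user
    source_title: str    # named guideline or article
    source_version: str  # edition or revision identifier of that source
    retrieved_at: str    # ISO 8601 UTC timestamp of retrieval


def make_record(claim: str, source_title: str, source_version: str,
                now: Optional[datetime] = None) -> ProvenanceRecord:
    """Build a record, stamping it with the current UTC time unless one is given."""
    stamp = (now or datetime.now(timezone.utc)).astimezone(timezone.utc)
    return ProvenanceRecord(claim, source_title, source_version, stamp.isoformat())


def is_auditable(record: ProvenanceRecord) -> bool:
    """A record is auditable only if every field is non-empty."""
    return all(value.strip() for value in asdict(record).values())
```

An auditor could then reject any answer whose records fail `is_auditable`, which turns the "named source and timestamp" commitment into something checkable rather than aspirational.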

A realistic assessment: promise tempered by prudence

Copilot Health is a sensible, necessary attempt to reconcile two competing trends: the public’s appetite for instant, conversational health guidance and the imperative that medical advice be evidence-based and safely delivered. The architectural move to separate health chats from general AI is a practical recognition that one-size-fits-all conversational models are inadequate for medical contexts.

But separation alone does not eliminate risk. The real measure will be operational details: how Microsoft governs uploads of sensitive data, whether provenance is meaningful and searchable, whether content licensing preserves editorial independence, and how legal and regulatory liabilities are addressed.

For clinicians and IT leaders, the right stance is cautious experimentation. Pilot Copilot Health where the tasks are well-scoped — medication reconciliation, summarizing patient histories, drafting patient-facing educational material — and keep clinicians in the loop. For consumers, Copilot Health can be a helpful starting point for information, but it should never replace direct clinical contact for urgent or complex problems.

Seven concrete recommendations for safe adoption

- Require explicit patient consent and make data deletion obvious and immediate.

- Use Copilot Health first for non-decision-critical tasks: summaries, information retrieval, and patient education.

- Keep human review mandatory for any AI-generated clinical recommendation that could change treatment.

- Audit provenance: require that every clinical assertion include a named source and timestamp.

- Amend contract terms to include BAAs and evidence of technical safeguards for any institutional use.

- Track model performance by condition and population subgroup to detect bias and coverage gaps.

- Report safety incidents publicly and iteratively improve the system — transparency builds trust.
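
The recommendation to track performance by population subgroup can be sketched as a small evaluation helper. This is an assumed, simplified setup: each evaluation case is reduced to a subgroup label and a correct/incorrect flag, and the `max_gap` tolerance is a hypothetical parameter that an evaluation team would need to choose for itself.

```python
from collections import defaultdict


def accuracy_by_group(records: list[tuple[str, bool]]) -> dict[str, float]:
    """Map each subgroup label to the fraction of its cases answered correctly."""
    totals: dict[str, int] = defaultdict(int)
    correct: dict[str, int] = defaultdict(int)
    for group, is_correct in records:
        totals[group] += 1
        correct[group] += int(is_correct)
    return {g: correct[g] / totals[g] for g in totals}


def coverage_gaps(scores: dict[str, float], max_gap: float) -> list[str]:
    """List subgroups whose accuracy trails the best subgroup by more than max_gap."""
    if not scores:
        return []
    best = max(scores.values())
    return sorted(g for g, s in scores.items() if best - s > max_gap)
```

Running the same breakdown per condition as well as per population group would surface the coverage gaps the list above asks adopters to watch for.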

Conclusion

Microsoft’s Copilot Health represents a pragmatic step forward: it acknowledges the unique demands of medical conversations and attempts to engineer a separate pathway designed for higher trust and better provenance. The design choices reflect lessons learned across the AI sector about the dangers of mixing casual conversation and clinical guidance.

Yet the product’s promise depends on execution. Technical safeguards, contractual clarity, rigorous clinical validation, and transparent governance will determine whether Copilot Health is a genuinely useful tool for patients and clinicians, or another high‑profile experiment whose limits are only apparent after lives are affected.

For technologists, administrators, clinicians, and curious users, the correct posture is engaged skepticism: test the tool, demand clear evidence about safety and data protections, and insist on the human oversight and regulatory clarity that medical applications require. If Microsoft follows through with the operational discipline its public messaging promises, Copilot Health could materially improve access to trustworthy health information. If it doesn’t, the cost could be confusion, misplaced trust, and legal headaches — outcomes no one in healthcare or technology wants to repeat.
