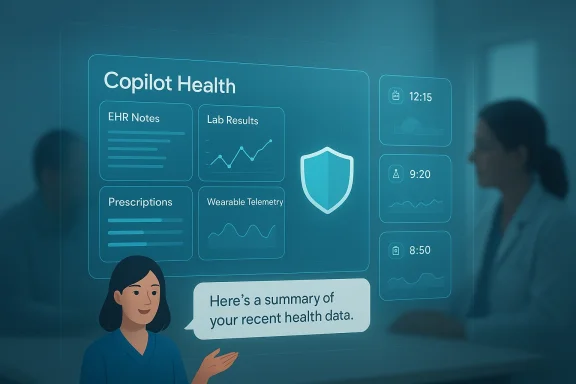

Microsoft’s consumer Copilot just walked into the most intimate ledger most people keep: their medical record, wearable streams and lab results — and it did so with a single, public preview called Copilot Health that promises to pull those fragments together into plain‑language summaries, trend detection and appointment‑ready guidance.

Source: reclaimthenet.org Microsoft Copilot Health Centralizes Personal Medical Records

Source: Fitt Insider Microsoft Launches AI Health Copilot

Background / Overview

Microsoft’s Copilot family has evolved rapidly from productivity helpers to a collection of verticalized assistants aiming to own everyday workflows. What began as writing and scheduling help has expanded into domain copilots for finance, coding, and now health; Copilot Health is the company’s clearest push to make Copilot the hub for personal medical data.

The preview, announced in mid‑March 2026, is initially available only to adults in the United States as an early access program. Microsoft frames the product as an aid to understanding, not a replacement for clinicians, and highlights privacy segmentation, grounding with curated health content, and a multi‑source design that spans clinical systems and consumer devices.

This launch sits on a foundation Microsoft has been building for years: clinical products such as Dragon Copilot for clinicians, Azure Health Data Services for standardized clinical data, and licensing deals to surface medically reviewed publisher content in consumer replies. Those pieces are now being stitched together to support a consumer‑oriented feature set that is both consequential and fragile.

What Copilot Health Says It Does

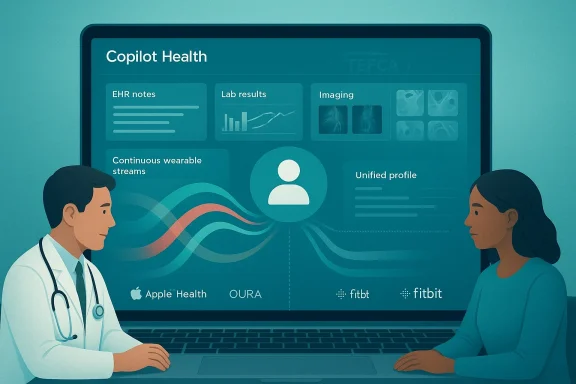

Single pane for fragmented health data

At its core, Copilot Health promises to aggregate three classes of personal data:

- Electronic health records (EHRs) — visit notes, problem lists, medications, imaging reports and lab results stored by hospitals and clinics.

- Laboratory and imaging results — discrete lab values and imaging interpretations that are often hard for patients to interpret alone.

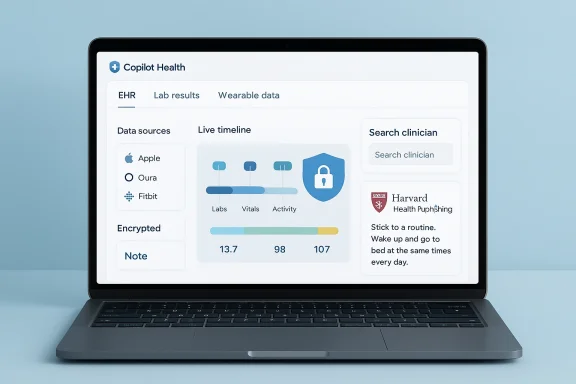

- Wearable telemetry — continuous biometric streams from consumer devices; specifically cited integrations include Apple Health, Oura and Fitbit.

Grounding, provenance and user empowerment

Microsoft has said Copilot Health will pair user data with grounded, medically reviewed guidance to explain abnormal values, highlight trends, and generate actionable next steps such as appointment prep or questions to ask a clinician. The company has pursued partnerships and licensing to improve provenance, notably by licensing consumer‑facing content from trusted publishers to anchor answers, and it highlights tools that will show where guidance came from.

Interoperability: FHIR, TEFCA and identity

On the technical side, Copilot Health leans on the same industry standards and policy frameworks that have dominated U.S. interoperability work. The product reportedly uses FHIR (Fast Healthcare Interoperability Resources) endpoints and leverages Individual Access Services (IAS) under TEFCA (the Trusted Exchange Framework and Common Agreement) through partners such as HealthEx to let individuals bring verifiable clinical records into the Copilot workspace. Vendor messaging describes the design as explicitly consent‑driven and intended to allow links to tens of thousands of provider records without bespoke integrations.

Technical Anatomy (what Microsoft is likely deploying)

Data ingestion and normalization

Aggregating data from EHRs, labs and wearables requires robust pipelines:

- FHIR APIs and DICOM for imaging allow structured clinical content to be pulled into a standardized store.

- Azure Health Data Services or Microsoft’s healthcare data stack is an obvious backend candidate to normalize disparate clinical payloads into unified profiles.
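To make the normalization step concrete, here is a minimal Python sketch that flattens a FHIR R4 Observation (the resource type FHIR uses for lab values) into a simple record and flags the value against the lab's own reference range. It assumes the result arrives as standard FHIR JSON with a `valueQuantity` and `referenceRange`. The `LabResult` class, the `normalize_observation` helper and the sample resource are hypothetical illustrations, not Microsoft's schema or pipeline.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LabResult:
    """Hypothetical flattened record for one lab value; not a Microsoft schema."""
    code: str
    display: str
    value: float
    unit: str
    low: Optional[float]
    high: Optional[float]
    effective: Optional[str]

    @property
    def flag(self) -> str:
        # Compare against the reference range the lab itself reported.
        if self.low is not None and self.value < self.low:
            return "low"
        if self.high is not None and self.value > self.high:
            return "high"
        return "normal"


def normalize_observation(resource: dict) -> LabResult:
    """Flatten a FHIR R4 Observation that carries a valueQuantity."""
    if resource.get("resourceType") != "Observation":
        raise ValueError("expected a FHIR Observation resource")
    coding = resource["code"]["coding"][0]      # first coding, e.g. a LOINC code
    quantity = resource["valueQuantity"]
    ranges = resource.get("referenceRange") or [{}]
    first_range = ranges[0]
    return LabResult(
        code=coding["code"],
        display=coding.get("display", coding["code"]),
        value=float(quantity["value"]),
        unit=quantity.get("unit", ""),
        low=first_range.get("low", {}).get("value"),
        high=first_range.get("high", {}).get("value"),
        effective=resource.get("effectiveDateTime"),
    )


# Made-up example resource: a serum/plasma glucose result (LOINC 2345-7).
sample = {
    "resourceType": "Observation",
    "status": "final",
    "code": {"coding": [{"system": "http://loinc.org", "code": "2345-7",
                         "display": "Glucose [Mass/volume] in Serum or Plasma"}]},
    "effectiveDateTime": "2026-03-01T08:30:00Z",
    "valueQuantity": {"value": 112, "unit": "mg/dL",
                      "system": "http://unitsofmeasure.org", "code": "mg/dL"},
    "referenceRange": [{"low": {"value": 70, "unit": "mg/dL"},
                        "high": {"value": 99, "unit": "mg/dL"}}],
}

result = normalize_observation(sample)
print(result.display, result.value, result.unit, result.flag)
```

The flag is tied to the range the lab reported rather than a hard-coded table because reference ranges differ between labs and assay methods.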

Identity, consent and TEFCA

To connect a patient’s record across multiple providers, Copilot Health appears to rely on TEFCA‑style individual access flows and identity verification provided by partners such as HealthEx. This design spares Microsoft from building connector code for each health system while creating a verified, auditable path for data transfer. That pathway is central to any claim that Microsoft can stitch together a “complete” personal health record from multiple provider silos.

Model behavior, grounding and content provenance

Microsoft is attempting to reduce unmoored generative outputs by pairing model responses with licensed editorial content and by building provenance surfaces — explicit statements or citations linking claims to underlying clinical notes, lab values or publisher guidance. This is a pragmatic move; it signals recognition that trust in health AI depends less on rhetoric than on the ability to show why the assistant made a recommendation.

Why this matters: benefits and immediate use cases

- Patient understanding: For many patients, lab reference ranges and visit notes are opaque. An assistant that summarizes key changes and flags actionable items can improve health literacy and appointment efficiency.

- Care prep and adherence: Copilot Health could produce appointment checklists, medication question lists, and simplified explanations of imaging or lab trends — practical outputs that clinicians and patients both value.

- Longitudinal trend detection: Continuous device data (sleep, heart rate variability, step counts) combined with clinical labs could highlight meaningful trends earlier than episodic care does. This is particularly valuable for chronic disease monitoring where moderation and early detection matter. ([axios.com](https://www.axios.com/2026/03/12/microsoft-copilot-healt))

- Consumer convenience and platform lock‑in: If Copilot Health succeeds at consolidating health data, Microsoft gains a powerful consumer relationship; the company’s broader ecosystem incentives (email, calendar, cloud storage) make Copilot a natural place for users to centralize personal information.
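Longitudinal detection on wearable data depends on handling device artifacts before looking for trends. The Python sketch below assumes a daily series such as resting heart rate. It applies a trailing rolling median to damp one-off spikes, then compares the latest week with the two weeks before it using a simple z-score. The function names, window sizes and thresholds are illustrative assumptions, not anything Microsoft has described, and none of them are clinical cutoffs.

```python
from statistics import mean, median, stdev


def rolling_median(values, window=3):
    """Trailing rolling median: damps isolated spikes such as a loose-strap reading."""
    smoothed = []
    for i in range(len(values)):
        start = max(0, i - window + 1)
        smoothed.append(median(values[start:i + 1]))
    return smoothed


def flag_trend(daily_values, baseline_days=14, recent_days=7,
               z_threshold=2.0, min_spread=1.0):
    """Compare the recent window of a smoothed daily series with the window before it.

    Returns (flagged, z). Thresholds are illustrative placeholders, not clinical cutoffs.
    """
    if len(daily_values) < baseline_days + recent_days:
        raise ValueError("not enough history to build a personal baseline")
    smoothed = rolling_median(daily_values)
    baseline = smoothed[-(baseline_days + recent_days):-recent_days]
    recent = smoothed[-recent_days:]
    # A floor on the spread stops a very flat baseline from turning noise into alarms.
    spread = max(stdev(baseline), min_spread)
    z = (mean(recent) - mean(baseline)) / spread
    return abs(z) >= z_threshold, z


# Made-up resting heart rate (bpm): a stable fortnight containing one artifact
# spike, followed by a sustained week-long rise.
history = [58, 59, 58, 60, 59, 58, 95, 59, 60, 58, 59, 60, 58, 59,
           66, 67, 66, 68, 67, 66, 67]
flagged, z = flag_trend(history)
print(flagged, round(z, 1))
```

In this made-up history, the median absorbs the single 95 bpm reading, and the sustained rise in the final week is what gets flagged. A production pipeline would also need per-device validation, which a sketch like this cannot supply.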

The risks and failure modes Microsoft must manage

No single paragraph captures the combination of safety, privacy and usability risks here. Below are the most important, high‑impact concerns.

1) Accuracy and "hallucinations"

Generative models can invent plausible but false statements. In health contexts, plausible falsehoods can be dangerous. Even with grounding and licensed content, the assistant may misinterpret a lab trend, conflate similar conditions, or produce an overconfident next step that a clinician would never endorse. Microsoft’s emphasis on provenance reduces but does not eliminate this risk. Independent validation and clinician oversight remain essential.

2) Data quality

Wearable streams are noisy, device‑dependent and vary in clinical validity. Combining high‑precision clinical labs with consumer heart‑rate streams risks over‑interpreting device artifacts as medical signals. Academic work shows LLMs can add value to wearable interpretation, but only within carefully validated pipelines; conversion and denoising steps are essential to reliable outputs (arxiv.org, “AI on the Pulse: Real-Time Health Anomaly Detection with Wearable and Ambient Intelligence”).

3) Privacy, consent and default settings

Microsoft says health data will be “segmented” and governed separately from general Copilot memory, but prior reports show Copilot memory has begun using signals from other Microsoft services unless users opt out. Any default sharing behavior or hidden toggle creates real privacy risk if users do not understand the scope of sharing. The stakes here are higher than for calendar or email: medical data carries legal protections (HIPAA for covered entities) and can cause serious social harm if leaked.

4) Liability and clinical responsibility

Who is responsible if Copilot Health suggests an inappropriate next step and a patient acts on it? Microsoft frames the product as educational, but the line between advice and medical decision support is thin. Regulators and litigators will want to know where the company drew that line and how it documents provenance and disclaimers.

5) Interoperability gaps and false completeness

TEFCA/IAS and FHIR endpoints cover many providers but not every practice, especially small clinics or non‑participating hospitals. Users may get a false sense of completeness: a Copilot‑generated “comprehensive” chart can still omit entire episodes of care if the underlying providers are not connected or the identity link fails. That false completeness is dangerous in clinical contexts.

6) Platform concentration and data governance

If consumers entrust a single platform with their aggregated medical histories, that platform becomes a high‑value target for attackers and an influential gatekeeper in care decisions. Consumers must weigh convenience against centralization risk; regulators will be watching for anti‑competitive lock‑in effects as well.

Cross‑checking the most load‑bearing claims

- Launch timing and scope — Microsoft previewed Copilot Health in mid‑March 2026 as a U.S. early access program. This was widely reported in press coverage and Microsoft’s Copilot updates.

- Device and data integrations — Apple Health, Oura and Fitbit were explicitly cited as wearable sources Microsoft intends to ingest. That detail appears in multiple news reports and internal previews.

- TEFCA and FHIR usage — Partners such as HealthEx have stated they will connect TEFCA IAS and FHIR endpoints to power individual access flows into Copilot Health. This was described in partner announcements and reporting focused on the integration path.

- Grounding and publisher licensing — Microsoft has negotiated licensing and editorial relationships to surface medically reviewed content (for example, Harvard Health Publishing content has been discussed as a licensed grounding source in the context of Microsoft’s health strategy). Independent reporting and earlier threads corroborate that licensing strategy.

Critical analysis: strengths, gaps and what Microsoft must prove

Strengths

- Unified user experience: Microsoft uniquely controls multiple consumer touchpoints (desktop, mobile, cloud) and has the scale to make a consolidated health hub convenient and discoverable.

- Standards‑based integration plan: Building on FHIR, DICOM and TEFCA IAS is the correct approach to avoid brittle point‑to‑point connectors and to rely on emergent national interoperability infrastructure.

- Editorial grounding: Licensing medically reviewed content and surfacing provenance will materially reduce some categories of hallucination and improve user trust compared with free‑form chatbots that lack source attribution.
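Provenance surfacing can be enforced mechanically before an answer is shown to the user. This minimal Python sketch assumes each sentence of a draft answer arrives with a list of source identifiers, either FHIR-style references such as `Observation/...` or licensed publisher items. The `Statement` class, the `check_provenance` function and all IDs are hypothetical. The check only confirms that every citation resolves to something real. Judging whether the source actually supports the sentence would require a separate step.

```python
from dataclasses import dataclass, field


@dataclass
class Statement:
    """One sentence of assistant output plus the sources it claims to rest on."""
    text: str
    sources: list = field(default_factory=list)  # hypothetical IDs, e.g. "Observation/glu-1"


def check_provenance(statements, record_ids, publisher_ids):
    """Split statements into (surfaceable, needs_review).

    A statement is surfaceable only if it cites at least one source and every
    cited source resolves to a known record or licensed publisher item. This
    checks that citations exist; it does not check that they support the claim.
    """
    known = set(record_ids) | set(publisher_ids)
    surfaceable, needs_review = [], []
    for s in statements:
        if s.sources and all(src in known for src in s.sources):
            surfaceable.append(s)
        else:
            needs_review.append(s)
    return surfaceable, needs_review


records = {"Observation/glu-2026-03-01"}
publishers = {"publisher:hypothetical-guide/blood-sugar"}
draft = [
    Statement("Your March 1 glucose result was above the lab's reference range.",
              ["Observation/glu-2026-03-01"]),
    Statement("High glucose can have several causes; ask your clinician about retesting.",
              ["publisher:hypothetical-guide/blood-sugar"]),
    Statement("This confirms you have diabetes.", []),
    Statement("Your last A1c was normal.", ["Observation/a1c-missing"]),
]
ok, review = check_provenance(draft, records, publishers)
print(len(ok), len(review))
```

Statements that cite nothing, or that cite a record the system cannot find, are routed for review instead of being shown with an implied citation.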

Gaps and skepticism

- Operational safety: Microsoft must show real‑world, cross‑vendor validation demonstrating that model outputs track clinician judgment and that false positives/negatives are understood and minimized.

- Privacy defaults: Segmentation language is necessary but insufficient; the company needs clear, user‑facing controls, immutable audit logs, and simple ways to revoke access and export data.

- Regulatory posture: Consumers and clinicians need clarity about HIPAA exposure, whether Microsoft will act as a business associate in some flows, and how responsibility is allocated when clinical advice is requested.

- Provenance realism: Citing a publisher or a lab value is good; showing the exact note or record that supports a conclusion, and making that accessible to clinicians, is better. The company must replace hand‑waving provenance with verifiable, linked evidence.
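The privacy controls listed above (clear user-facing controls, simple revocation, data export) can be modeled with a very small data structure. This Python sketch is a hypothetical consent model, not Microsoft's. Each `ConsentGrant` names a source, an expiry and explicit scopes. It shares nothing by default, and it can be revoked or exported as JSON. Scope names such as `labs` and `notes` are assumptions for illustration.

```python
import json
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional


@dataclass
class ConsentGrant:
    """One user-approved data connection (hypothetical model, not Microsoft's)."""
    source: str                        # hypothetical provider or device identifier
    expires: datetime                  # grants lapse unless the user renews them
    scopes: frozenset = frozenset()    # default-deny: nothing is shared unless listed
    revoked_at: Optional[datetime] = None

    def allows(self, scope: str, now: datetime) -> bool:
        """Return True only for a listed scope on an unexpired, unrevoked grant."""
        if self.revoked_at is not None and now >= self.revoked_at:
            return False
        return scope in self.scopes and now < self.expires

    def revoke(self, now: datetime) -> None:
        """Revocation takes effect immediately and is recorded with a timestamp."""
        self.revoked_at = now


def export_grants(grants) -> str:
    """Serialize grants so a user can inspect them or take them elsewhere."""
    return json.dumps(
        [
            {
                "source": g.source,
                "scopes": sorted(g.scopes),
                "expires": g.expires.isoformat(),
                "revoked_at": g.revoked_at.isoformat() if g.revoked_at else None,
            }
            for g in grants
        ],
        indent=2,
    )


now = datetime(2026, 3, 20, tzinfo=timezone.utc)
grant = ConsentGrant("hypothetical-clinic", now + timedelta(days=90), frozenset({"labs"}))
print(grant.allows("labs", now), grant.allows("notes", now))  # True False
grant.revoke(now)
print(grant.allows("labs", now))  # False
print(export_grants([grant]))
```

Because of the expiry field, forgotten connections lapse by themselves. Because of the default-empty scope set, a new connection shares nothing until the user selects what it may read.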

Practical guidance for users, clinicians and IT teams

For consumers (patients)

- Treat Copilot Health as a preparation and interpretation tool — use it to organize questions, grasp high‑level trends, and prepare for visits, not as a substitute for professional medical advice.

- Confirm which providers are included in your linked record and verify that nothing critical is missing before making decisions.

- Check and lock down privacy settings. If in doubt, limit what you upload or connect and prefer manual export/import for sensitive records.

- Keep copies: export or print important summaries and share them with clinicians through established channels, rather than relying on the assistant alone.
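A user or app could run a simple check against the false-completeness risk before trusting a “comprehensive” chart. The sketch below assumes the user can list the providers they have seen and that the app reports how many records each connected source returned. The function and provider names are hypothetical.

```python
def coverage_report(expected_providers, linked_sources):
    """Compare where a user has received care with what the linked record returned.

    expected_providers: names the user lists from memory, bills, or insurer statements.
    linked_sources: provider name -> number of records retrieved (0 means the link
    failed or came back empty).
    """
    expected = set(expected_providers)
    returned = {name for name, count in linked_sources.items() if count > 0}
    return {
        "covered": sorted(expected & returned),
        "empty_or_failed": sorted(name for name, count in linked_sources.items() if count == 0),
        "not_linked": sorted(expected - set(linked_sources)),
    }


report = coverage_report(
    ["Clinic A", "Hospital B", "Urgent Care C"],   # hypothetical providers
    {"Clinic A": 42, "Hospital B": 0},
)
for key, names in report.items():
    print(f"{key}: {', '.join(names) or '-'}")
```

Anything in `empty_or_failed` or `not_linked` marks a gap to raise with a clinician before relying on a summary.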

For clinicians and care organizations

- Expect more prepared patients but plan for new kinds of pre‑visit disclosures and potential misinterpretations; set clinic workflows to validate patient‑generated summaries.

- Work with your EHR vendor and IT team to understand how FHIR access is executed and what TEFCA IAS flows will mean for inbound patient requests.

- Advocate for audit trails and consent receipts that can be reconciled into the patient’s legal record when necessary.

For IT and security leaders

- Evaluate vendor attestation and compliance — request HIPAA, SOC and penetration test reports where applicable.

- Require role‑based access, granular consent captures, and short retention windows for any Copilot‑generated artifacts you allow into enterprise systems.

- Prepare incident response playbooks for accidental disclosures involving Copilot Health, including logs of who accessed what and when.
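For the audit-trail and incident-response items, a hash-chained log is one common way to make access records tamper-evident. In this Python sketch, a hypothetical `AccessLog` (not a Microsoft or Azure API) stores the SHA-256 hash of the previous entry in each new entry, so altering an earlier entry breaks verification. The log is tamper-evident rather than immutable. Detecting a truncated tail or a wholesale rewrite would also require anchoring the latest hash somewhere the log's operator cannot change.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AccessLog:
    """Append-only log where each entry commits to the hash of the previous one."""

    def __init__(self):
        self.entries = []

    def append(self, actor, resource, action, when=None):
        when = when or datetime.now(timezone.utc)
        body = {
            "actor": actor,
            "resource": resource,
            "action": action,
            "when": when.isoformat(),
            "prev": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Recompute the chain; editing, reordering, or removing an earlier entry breaks it."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True

    def who_accessed(self, resource):
        """Answer the incident-response question: who touched this, how, and when?"""
        return [(e["actor"], e["action"], e["when"])
                for e in self.entries if e["resource"] == resource]


log = AccessLog()
t = datetime(2026, 3, 20, 12, 0, tzinfo=timezone.utc)
log.append("support-agent-17", "Observation/glu-2026-03-01", "read", t)  # hypothetical actor/ID
log.append("export-job", "DocumentReference/note-7", "export", t)
print(log.verify(), log.who_accessed("Observation/glu-2026-03-01"))
```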

Competition and the broader landscape

Copilot Health does not arrive in isolation; competitors from cloud providers and AI startups are racing to offer consumer health companions and clinical decision support. OpenAI introduced a health capability in early 2026, and Amazon has pursued health AI in retail and cloud contexts as well. The strategic play is straightforward: whichever platform becomes the primary aggregator of health data gains a persistent engagement advantage and potentially a monetizable pathway into clinical services. Microsoft’s advantage is integration with office productivity workflows and enterprise relationships with health systems, but competitors are agile and well‑funded.

What to watch next (short and medium term)

- Will Microsoft publish a detailed technical whitepaper describing data flows, retention policies, model evaluation metrics, and third‑party audit results? That will be the clearest signal of readiness.

- How robust are identity and consent receipts produced via TEFCA IAS flows? The operational reliability of identity verification will determine how comprehensive the aggregated records become.

- Will Microsoft commit to explicit limitations on uses of Copilot Health data for model training, and will those commitments be verifiable via audits or cryptographic proofs?

- Will regulators characterize a consumer Copilot that provides actionable health guidance as a medical device or a decision‑support tool subject to medical device rules? Regulatory treatment will shape both product design and risk management obligations.

Conclusion: a measured opportunity, not a done deal

Copilot Health is a consequential and logical next step for a company that already sits at many of the touchpoints people use to manage life. The promise — a single, private workspace that translates tests and wearable streams into clear, actionable guidance — could genuinely increase health literacy and reduce friction in care.

But promise is not proof. The high bar for safety, privacy and clinical reliability demands transparent technical documentation, robust consent engineering, independent evaluation and clear regulatory alignment. Microsoft’s stated use of standards such as FHIR and TEFCA, partnerships for identity and provenance, and editorial licensing are good design choices. Yet the outcome will depend on rigorous operational execution and whether the company demonstrates how it prevents hallucinations, enforces privacy by default, and reconciles liability with clinicians and patients.

For users: be curious, stay cautious, and use Copilot Health as an assistant to prepare for clinical care — not as a clinical authority. For organizations and regulators: insist on documentation, audits and enforceable controls before equating convenience with safety. If Microsoft can answer those questions with concrete evidence and independent validation, Copilot Health may become a helpful bridge between fragmented data and meaningful care; until then, the tool is an enormous convenience with equally enormous responsibilities attached.
