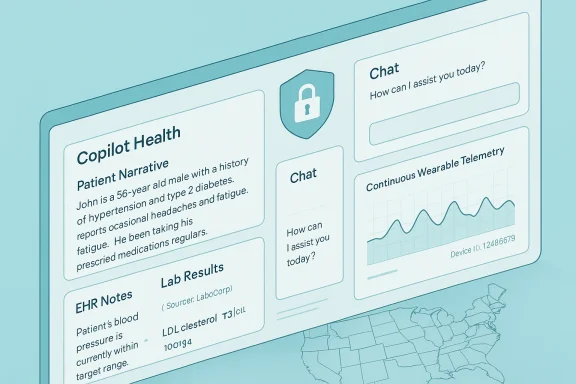

Microsoft’s new Copilot Health preview is the clearest sign yet that the cloud giants intend to make consumer-facing AI the default front door to personal healthcare. It is a privacy‑segmented Copilot workspace that ingests electronic health records, lab results and wearable telemetry, explains findings in plain language, and promises actionable next steps while stressing that it is not a replacement for a clinician. (www.axios.com/2026/03/12/microsoft-copilot-health)

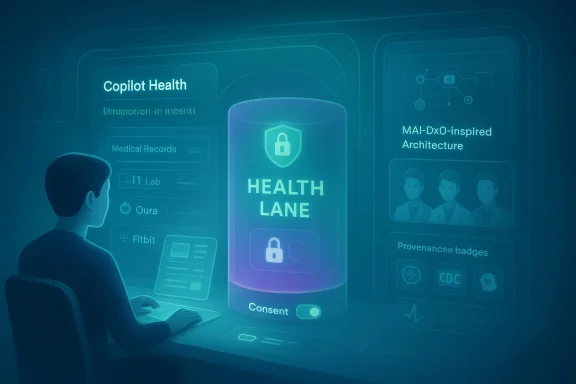

Background / Overview

Microsoft has spent the last several years layering AI into both enterprise and consumer products. Its health efforts, from clinical workflow tools like Dragon and DAX Copilot to consumer-facing features inside Bing and Copilot, have been iterative building blocks toward a larger ambition: put an “intelligence layer” on top of fragmented health data and make it useful for patients and clinicians alike. That ambition is now visible in Copilot Health, a preview launched by Microsoft in March 2026 that is initially available in English to U.S. adults through an early access waitlist. (axios.com) (microsoft.com)

Microsoft positions Copilot Health as a separate, secure space within Copilot, a design intended to keep clinical interactions distinct from general Copilot conversations, encrypt data in transit and at rest, and avoid mixing consumer health data into the company’s broader model‑training pipelines. The company also points to a series of prior research efforts and internal tools, most notably the Microsoft AI Diagnostic Orchestrator (MAI‑DxO), as technical foundations for the product’s reasoning capabilities. These research artifacts and benchmarks are not hypothetical: Microsoft has published internal results showing MAI‑DxO solving complex, staged diagnostic cases from the New England Journal of Medicine at rates materially higher than the individual physicians tested in the same experiments. However, Microsoft’s research documents also include explicit caveats and limitations, an important detail that must temper how these claims are read and used. (microsoft.ai) (microsoft.com)

What Copilot Health promises to do

At launch, Microsoft says, Copilot Health will be able to:

- Aggregate user medical records and lab results from tens of thousands of U.S. providers.

- Ingest continuous telemetry and biometric streams from consumer wearables (Microsoft specifically cited Apple Health, Oura and Fitbit among examples) and synthesize those signals with clinical data. (axios.com)

- Provide plain‑language explanations of results, highlight trends over time (sleep, activity, vitals), and generate appointment prep notes or suggested questions for clinicians.

- Let users search for local healthcare providers and filter by insurance coverage when available.

- Keep the “health lane” separate and encrypted from general Copilot content and explicitly state that health data will not be used to train Microsoft’s general AI models. (axios.com)

How Copilot Health works — the technical picture

Microsoft’s public materials and research papers make clear that Copilot Health is not a single monolithic model but a system of components: connectors that pull and normalize structured clinical data (likely via standards such as FHIR, though Microsoft’s public summary focuses on capabilities rather than implementation details), device integrations for consumer telemetry, retrieval and grounding systems that attach authoritative guidance to answers, and orchestration layers that sequence reasoning steps.

Two technical elements deserve emphasis:

- MAI‑DxO and orchestrated reasoning. Microsoft’s MAI‑DxO is described as a system that orchestrates multiple models or reasoning agents to act like a virtual panel of clinicians, able to ask follow‑ups, order tests in a simulated benchmark, and verify its own reasoning. In Microsoft’s Sequential Diagnosis Benchmark (which converts 304 NEJM case records into stepwise challenges), MAI‑DxO paired with a top-performing model achieved a correct‑diagnosis rate reported at roughly 85.5%, compared with a mean accuracy of about 20% for the small cohort of practicing physicians evaluated in the study. Microsoft presents this as evidence that properly orchestrated AI can match or exceed individual clinicians on constrained diagnostic benchmarks — while noting important experimental limitations. (microsoft.ai) (microsoft.com)

- Retrieval‑augmented generation (RAG) and provenance. Microsoft describes Copilot Health as linking answers to “credible health organizations” spanning many countries and putting medically reviewed content in front of users to reduce hallucination risk. In practice this means the assistant will combine generative reasoning with retrieval from curated, licensed sources and label outputs to indicate whether a recommendation is grounded in medical guidance or is a probabilistic inference. Microsoft has also reported internal usage metrics — claiming Copilot already handles tens of millions of health-related sessions per day — and has used that data to prioritize product design and safety mechanisms. (microsoft.ai) (axios.com)
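The provenance labelling described above can be illustrated with a toy sketch. This is not Microsoft's implementation, which has not been published; the corpus contents, the publisher names, the keyword-overlap retriever, and the `grounded` / `inference` labels are all assumptions made purely for illustration. The idea shown is only that an answer is labelled as grounded when a curated passage supports it, and as an unverified inference otherwise.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Passage:
    source: str  # hypothetical publisher name, not a real licensed source
    text: str


# Tiny stand-in for a curated, medically reviewed corpus (illustrative only).
CORPUS = [
    Passage("Example Health Org",
            "Adults generally need seven or more hours of sleep per night."),
    Passage("Example Cardiology Society",
            "A resting heart rate between 60 and 100 beats per minute is typical for adults."),
]


def tokenize(text):
    """Lowercase the text and keep words longer than three characters."""
    return {w.strip(".,?!").lower() for w in text.split() if len(w.strip(".,?!")) > 3}


def retrieve(question, corpus, min_overlap=2):
    """Return passages sharing at least `min_overlap` keywords, best match first."""
    q = tokenize(question)
    scored = [(len(q & tokenize(p.text)), p) for p in corpus]
    scored = [(score, p) for score, p in scored if score >= min_overlap]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [p for _, p in scored]


def answer(question, corpus=CORPUS):
    """Attach a provenance label: 'grounded' if a curated passage matched, else 'inference'."""
    hits = retrieve(question, corpus)
    if hits:
        return {"label": "grounded",
                "sources": [h.source for h in hits],
                "text": hits[0].text}
    return {"label": "inference",
            "sources": [],
            "text": "No curated source matched; treat any answer as unverified."}


if __name__ == "__main__":
    print(answer("How many hours of sleep do adults need?"))
    print(answer("Is my mole cancerous?"))
```

A production system would replace the keyword overlap with a proper retriever and would pass the retrieved passages to a generative model, but the labelling decision would sit at the same point in the flow.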

What the research actually shows (and what it does not)

Microsoft’s benchmark work is striking and useful, yet it must be read in context.

- The MAI‑DxO results come from an experimental benchmark modeled on particularly complex NEJM case records; participants, both AI and human, were evaluated under the constraints of that benchmark. The research notes that clinicians in the study did not have access to colleagues, textbooks, or outside tools that they would ordinarily use in practice, and that further testing is needed to assess performance on common, everyday presentations. In short: high performance on a difficult, well‑defined benchmark is a strong signal but not proof that the system will perform equally well in the messy, incomplete, and social reality of clinical practice. (microsoft.ai) (microsoft.com)

- Benchmarks measure a narrow, measurable slice of capability. Diagnostic accuracy in a staged case series does not directly equate to safe triage advice, correct medication adjustments, or legal responsibility in real‑world care pathways. Microsoft’s own documents highlight these limits and call for more research and clinical validation before translating experimental capabilities directly into consumer medical advice. (microsoft.com)

Clinical validation, governance, and Microsoft’s safety claims

Microsoft has repeatedly framed Copilot Health as a product that will ship new capabilities only after “rigorous clinical evaluations” and with “clear labelling.” The company has emphasized several governance features:

- A separate, encrypted “health lane” to isolate clinical conversations.

- Explicit statements that health data processed in Copilot Health will not be used to train Microsoft’s broader models.

- Use of curated content and licensed medical publisher material to anchor consumer responses.

- Ongoing clinical evaluations and promises to publish research findings. (axios.com) (microsoft.ai)

Strengths — why Copilot Health could matter

- Data consolidation at consumer scale. Many patients lack a single consolidated view of their labs, notes and device telemetry; Copilot Health’s ability to synthesize these disparate inputs into a single, comprehensible narrative is a major usability win if executed correctly. (axios.com)

- Actionable, appointment‑readiness features. Generating question lists, highlighting trends and translating medical jargon into plain language can materially improve clinician–patient interactions and may reduce misunderstandings during visits.

- Advanced diagnostic research feeding product design. Microsoft’s MAI‑DxO and sequential benchmark experiments demonstrate how orchestration and ensemble reasoning can improve measured diagnostic outcomes in controlled settings. Those technical improvements — paired with retrieval and provenance mechanisms — are a meaningful step beyond simple chatbot responses. (microsoft.ai)

- Ecosystem leverage. Microsoft already serves many health customers with enterprise cloud, data and analytics products and has relationships across payer and provider ecosystems; those integrations can help scale feature parity with clinical workflows when privacy and interoperability are handled correctly.

Risks and failure modes — what keeps clinicians and privacy experts awake

No single paragraph can exhaust the risks, but the most consequential categories are these:

- Incorrect or misleading medical guidance. Even a low rate of incorrect triage or diagnostic suggestions can lead to patient harm, delayed care, or unnecessary testing. Generative models can be confidently wrong; grounding and provenance reduce but do not eliminate this risk. Microsoft’s MAI‑DxO research acknowledges boundaries and emphasizes further validation, a responsible admission that also underscores ongoing uncertainty. (microsoft.ai)

- Data provenance and privacy leakage. Consolidating EHRs, labs and device telemetry is valuable — and also concentrates risk. Microsoft states Copilot Health will not use health data to train its models and that health conversations are isolated and encrypted, but those technical protections require continuous audit, third‑party verification, and transparent policies that users can operationally understand. Past incidents in the industry (and even within large vendors) show that technical promises need constant verification, not just initial design intent. (axios.com)

- Regulatory and liability complexity. Health care is highly regulated; different jurisdictions have different standards for medical devices, clinical decision support, and patient privacy. What qualifies as information versus medical advice can change legal obligations. Microsoft will need to navigate HIPAA, FDA guidance on clinical decision support, state medical practice rules, and consumer protection regimes — and that complexity will multiply in future expansions outside the U.S. Microsoft’s public comments promise clinical evaluations and labeling, but regulatory engagement is the next, critical step. (microsoft.com)

- User misunderstanding and overreliance. Consumers often prefer simple, reassuring narratives; the danger is that they may treat Copilot Health outputs as definitive medical verdicts rather than one data point among many. Clear, persistent UI signals, friction when appropriate (e.g., “seek urgent care” flags), and explicit instructions to consult a clinician are necessary but not sufficient to prevent misuse. (microsoft.com)

- Commercial conflicts of interest and access equity. If Copilot Health later becomes a paid tier (a direction Microsoft has signaled), access disparities could emerge, especially if premium features provide more support. Meanwhile, integration choices (which providers and devices are supported) can privilege certain ecosystems and create network effects that entrench particular vendors. (axios.com)

Claims to verify — and a note on uncertain or unsupported details

Microsoft’s public materials and research clearly support several load‑bearing claims: the MAI‑DxO benchmark results, the existence of a privacy‑segmented health lane, explicit promises about not using health data for model training, and the initial U.S. preview and waitlist. These are documented in Microsoft’s AI pages and the company’s research report, and they are echoed by independent reporting. (microsoft.ai)

Additional assertions are circulating in early press and social summaries, for example references to an external panel of “over 230 physicians across 24 countries” conducting clinical safety reviews, or independent ISO certifications mentioned in some third‑party writeups. Those specific claims appear in several community and news summaries but are not prominently documented in Microsoft’s central public post or research briefings as of the initial preview announcement. They could be accurate, but because they are not yet clearly substantiated in Microsoft’s official materials, they should be treated as claims requiring verification until Microsoft publishes more explicit documentation or a third‑party audit confirms them.

Competitive context

Copilot Health launches into an increasingly crowded field. OpenAI released a consumer health product earlier in the year, and Amazon has expanded its own health chatbot offerings and partnerships. Each major cloud or AI company is racing to be the “front door” for health questions; the differences will come down to integration depth with clinical systems, regulatory posture, data governance, and trust. Microsoft’s advantages are its enterprise healthcare footprint, its cloud relationships with hospitals and payers, and the academic‑grade research work it is publishing. Its disadvantages are the same as any platform ambition: concentrated risk and the need to earn patient trust in a new role. (axios.com)

Recommendations: what Microsoft should show next

To turn promising research and a glossy preview into a genuinely safe and useful product, Microsoft should prioritize the following:

- Publish an independent audit plan. Invite third‑party security and privacy auditors to verify the “health lane” isolation, encryption, and the claim that health data will not be used for model training.

- Provide granular consent controls. Users must be able to see, export, and delete records ingested by Copilot Health; they should also control which device streams (sleep, activity, heart rate) are included and how long telemetry is retained.

- Open a clinical governance dashboard. Describe the clinical review process, the composition and credentials of advisory panels, and the exact nature of clinical evaluations — including negative results or failure modes discovered during testing.

- Publish regulatory engagement roadmaps. Clarify interactions with FDA guidance (or its equivalents outside the U.S.), HIPAA applicability, and how liability is allocated when Copilot Health is integrated into care pathways.

- Build conservative default behaviors for high‑risk outputs. For example, when the assistant’s confidence is low or when serious red‑flag symptoms are detected, force escalation paths that direct users to emergency services or clinician contact rather than offering tentative home‑care advice.
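The last recommendation, conservative defaults for high‑risk outputs, can be made concrete with a minimal sketch. Everything here is hypothetical: the red‑flag phrases, the 0.8 confidence threshold, and the `route` function are illustrative assumptions, not clinical guidance and not a description of how Copilot Health behaves. The point is the ordering of the checks, in which escalation is evaluated before any informational answer is allowed.

```python
from enum import Enum


class Action(Enum):
    EMERGENCY = "direct the user to emergency services"
    CLINICIAN = "advise contacting a clinician"
    INFORM = "offer general information"


# Illustrative red-flag phrases only; a real list would need clinical review.
RED_FLAGS = ("chest pain", "trouble breathing", "sudden weakness")

# Illustrative threshold below which the assistant declines to advise.
MIN_CONFIDENCE = 0.8


def route(message: str, confidence: float) -> Action:
    """Pick the most conservative applicable action for a user message."""
    text = message.lower()
    # Red flags win regardless of how confident the model claims to be.
    if any(flag in text for flag in RED_FLAGS):
        return Action.EMERGENCY
    # Low confidence escalates to a human instead of tentative home-care advice.
    if confidence < MIN_CONFIDENCE:
        return Action.CLINICIAN
    return Action.INFORM


if __name__ == "__main__":
    print(route("I have chest pain and feel dizzy", confidence=0.95))
    print(route("What does this lab value mean?", confidence=0.55))
    print(route("How much sleep should I get?", confidence=0.92))
```

Checking red flags first means a confident but wrong model cannot talk itself out of an escalation, which is the conservative behavior the recommendation asks for.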

Practical guidance for users and clinicians

- If you are a consumer: Treat Copilot Health as a tool, not an arbiter. Use it to prepare for visits, translate medical jargon, and consolidate records, but always validate clinical recommendations with a trusted healthcare professional. Pay close attention to consent flows and data‑sharing controls during sign‑up, and take advantage of export/delete features if you later decide to remove records. (axios.com)

- If you are a clinician: Expect patients to arrive with AI‑generated summaries and trend charts. Develop a workflow for verifying patient‑provided AI outputs (for example, quickly checking the EHR source and ordering confirmatory testing when appropriate) and be explicit with patients about the assistant’s limits. Consider participating in vendor evaluations to help shape product behavior in clinical contexts. (microsoft.com)

- If you are an IT or privacy officer at a healthcare organization: Demand contractual clarity about data flows, encryption and incident response. Even if a consumer product does not intend to use patient data for model training, contractual and technical safeguards must ensure data isolation and clear governance boundaries.

Final appraisal: bold ambition, heavy responsibility

Copilot Health is a consequential product launch because it makes explicit what many in the industry have been building toward: AI that touches the full arc of a person’s medical life, from historical records and lab signals to the constantly streaming telemetry from wearables. Microsoft’s research work, particularly the MAI‑DxO experiments, shows that orchestrated AI can deliver impressive results on carefully designed benchmarks; its enterprise connections and engineering resources give it a real shot at addressing interoperability and scale. (microsoft.ai)

At the same time, the stakes could not be higher. The technical and social problems are not just engineering challenges but questions about clinical responsibility, legal accountability, and public trust. Microsoft’s public commitments around isolation, clinical evaluation, and provenance are necessary first steps, but the company will need sustained transparency, independent verification, and conservative product behavior to justify asking users to hand over their most sensitive medical records.

Copilot Health is not just another feature update; it is a test of whether the industry can design consumer AI that helps without harming. Its early promise is real; its pitfalls are equally real. For patients, clinicians and regulators, the next months should not be a watching brief — they should be an active period of validation, audit and governance. Only then will the product’s ambitions for “medical superintelligence” translate into safe, equitable, and reliable improvements in care. (microsoft.ai)

Source: Phandroid, Microsoft's "Copilot Health" is Designed to Answer Medical Queries Online