Microsoft’s new Copilot Health preview is not a science‑fiction cure. It is a consumer‑facing, privacy‑segmented space that promises to stitch your scattered medical records, lab reports and wearable streams into plain‑language summaries and actionable next steps, and a recent first‑person account shows how that kind of assistance can change the course of care when everything else has failed.

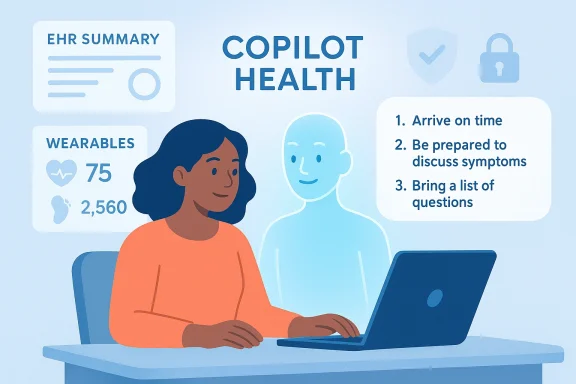

Background / Overview

In mid‑March 2026 Microsoft opened a U.S.‑only preview of Copilot Health, pitching it as a separate, secure lane inside Copilot that aggregates electronic health records (EHRs), lab results, prescriptions and continuous telemetry from consumer wearables, and then explains trends, highlights anomalies, and helps people prepare for clinical appointments. Microsoft frames the product as a conversation aid, explicitly saying it is not a replacement for clinicians but a way to make clinical visits more productive.

The company’s own usage research (the Copilot Usage Report 2025 and follow‑on analysis) shows why the product exists: people already use Copilot and related Microsoft AI tools for health questions in very large numbers. Microsoft reports handling over 50 million health‑related sessions per day across its consumer AI surfaces, and documents that nearly one in five health conversations involve personal symptom descriptions or interpretation of test results. That scale is both the justification for a dedicated, privacy‑segmented health workspace and the central risk driver for any system that touches medical information at that volume.

A Windows Central reporter offered a clear, grounded example of what this consumer assistance can do in practice: after two decades of intermittent upper‑right abdominal pain that clinicians repeatedly attributed to hormonal causes, the author used Copilot to develop better wording and a focused e‑consult request. That request led to an ultrasound that revealed multiple gallstones and a chronic digestive dysfunction; the author is now on a surgical waiting list. Importantly, the author stresses that Copilot did not diagnose them. It helped them ask the right question and get the right test.

What Copilot Health claims to do (and what the product actually is)

Aggregation and synthesis, not autonomous diagnosis

Copilot Health is described as a workspace that:

- Ingests EHRs, imaging and lab reports from participating providers.

- Pulls in continuous telemetry from consumer wearables (Apple Health, Fitbit, Oura and similar).

- Synthesizes that data into plain‑language summaries, trend visualizations, and suggested “next steps” to discuss with a clinician.

- Provides appointment‑prep materials and suggested phrasings to help users convey their symptoms and medical history clearly.

A separate, “privacy‑segmented” lane

Microsoft says Copilot Health conversations and the underlying data will be isolated from general Copilot and governed by dedicated controls, which the company calls a “privacy‑segmented” health lane inside Copilot. The pledge includes technical controls like encryption and permissioned data access, and promises that users control sharing and permissions. Those are necessary but not sufficient conditions for trustworthy handling of sensitive health data. Implementation details and independent audits will determine whether “privacy‑segmented” is meaningful in practice.

The personal case: how an AI conversation changed a care pathway

The Windows Central account is instructive because it describes a common health‑care frustration and a replicable use case for consumer AI.

- The author experienced upper‑right abdominal pain for roughly 20 years, with intermittent flares and scant investigation.

- Clinicians repeatedly attributed symptoms to hormonal causes; the abdominal ultrasound the author requested was not initially ordered.

- Using Copilot, the author iterated on questions, provided symptom history, and adopted Copilot’s suggested phrasing when submitting an e‑consult to their GP.

- That e‑consult produced an ultrasound that revealed multiple gallstones and a chronic digestive dysfunction; an operation was scheduled within weeks.

Why that matters: many diagnostic delays are the result of incomplete histories, poor documentation, or clinicians applying heuristics too broadly. Tools that help patients prepare succinct, evidence‑oriented narratives can shift triage and testing decisions in meaningful ways.

Quantifying demand: the 50 million figure and what it means

Microsoft’s consumer analytics indicate enormous demand for health advice through AI. The Copilot Usage Report and associated Microsoft.ai posts state that Copilot and related Microsoft AI services handle over 50 million health questions per day, with mobile being the locus of the most personal conversations and an observed rise in symptom queries late at night. That level of engagement explains why Microsoft is carving out a dedicated health product: users already treat AI as a front line for health inquiries.

Practical implication: even small gains in triage accuracy or patient‑clinician communication, when multiplied across millions of daily interactions, can materially affect health outcomes at population scale. But scale also magnifies risk: bad advice, model errors, or data breaches at such volume would have broad consequences.
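A back‑of‑the‑envelope calculation makes the scale argument concrete. The sketch below multiplies the reported floor of 50 million daily health queries by an effect rate. The 0.1% rate in the usage line is purely hypothetical and chosen for illustration (the reporting cited here gives no such rate), and the function name is an assumption of this sketch.

```python
# Reported floor from Microsoft's usage research: "over 50 million" per day.
DAILY_HEALTH_QUERIES = 50_000_000


def affected_per_day(rate: float, daily_queries: int = DAILY_HEALTH_QUERIES) -> int:
    """Return how many daily interactions an effect occurring at `rate` would touch.

    `rate` is a fraction between 0 and 1. It can stand for a benefit
    (better-prepared consults) or a harm (erroneous advice); the
    arithmetic is the same either way.
    """
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    return round(daily_queries * rate)


# Hypothetical: an effect present in 0.1% of interactions.
print(affected_per_day(0.001))  # 50000
```

The same multiplication applies to errors as to improvements, which is why the volume figure cuts both ways.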

Trust, privacy and the tradeoffs

Microsoft’s “Secure by Design” pitch — merits and limits

Microsoft repeatedly frames Copilot Health as “Safe and Secure by Design,” pointing to encryption, isolation of health conversations from general Copilot, and explicit permission controls. Those are important controls and represent the baseline any consumer health product must meet. Microsoft’s public materials also describe partnerships with established clinical content providers (for example, Harvard Health) and cite governance efforts to ground answers in credible sources.

But technical controls are not the whole story. Security is a system property: it requires not only those technical controls but also rigorous identity controls, auditability, software supply‑chain hygiene, independent verification, and robust incident response. Microsoft has faced high‑profile incidents in the past (for example, logic bugs in enterprise Copilot features that led to confidential emails being incorrectly processed), and those incidents demonstrate how implementation and operational errors can undermine policy promises. Any “secure by design” product should be judged on the totality of engineering and operational controls, not marketing language.

Other custodians of health data have also suffered lapses

Concerns about third‑party risk are reasonable, but they are not unique to Big Tech. Public health systems and vendors alike have experienced disruptive cyber incidents that affected care and exposed patient data. Notably, ransomware and software incidents affecting NHS suppliers have forced service disruptions and prompted fines and regulatory scrutiny, underscoring that handing data to any single steward entails tradeoffs. These historical incidents are good reason to insist on high standards of governance, not a categorical rejection of new approaches.

A personal calculus

For some people, including the Windows Central author, the immediate clinical benefit (a long‑missed diagnosis and a plan for treatment) outweighs abstract fears about hypothetical breaches. That calculus will vary by individual, condition, and trust in the vendor or institution involved. As a matter of public policy and product design, however, one person’s tradeoff should not be the default for everyone; robust defaults and clear, enforceable protections are essential.

Clinical accuracy and model behavior: the risk of “helpful” hallucinations

Generative models can be persuasive. They can synthesize patient narratives, suggest reasonable next steps, and even recommend tests that fit the symptom pattern. But these same models can also hallucinate, producing plausible‑sounding but incorrect clinical assertions, unsupported causal links, or overconfident recommendations.

Key mitigations include:

- Grounding answers in cited, vetted clinical sources and surfacing those sources clearly.

- Flagging uncertain or speculative outputs and recommending escalation to a clinician.

- Logging the provenance of any diagnosis‑adjacent suggestion (recording which data and model reasoning produced it).

- Using human‑in‑the‑loop review for high‑risk outputs and preferring conservative, safety‑oriented language.
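The mitigations above can be sketched as a small data structure. This is a minimal illustration under stated assumptions, not a description of how Copilot Health works: the `SuggestionRecord` type, its field names, the `needs_clinician_review` helper, and the 0.7 threshold are all hypothetical. The idea is that each suggestion carries the identifiers of the records it drew on and the sources it cites, and anything uncited or low‑confidence is routed to a human.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical cutoff: below this confidence a suggestion is treated as speculative.
REVIEW_THRESHOLD = 0.7


@dataclass(frozen=True)
class SuggestionRecord:
    """Provenance for one diagnosis-adjacent suggestion (illustrative schema)."""

    suggestion: str
    input_record_ids: tuple[str, ...]  # which patient records fed the suggestion
    cited_sources: tuple[str, ...]     # vetted clinical references surfaced to the user
    confidence: float                  # model-reported score in [0, 1]
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc)
    )


def needs_clinician_review(record: SuggestionRecord) -> bool:
    """Flag suggestions that are uncited or below the confidence cutoff."""
    return record.confidence < REVIEW_THRESHOLD or not record.cited_sources


record = SuggestionRecord(
    suggestion="Ask your GP whether an abdominal ultrasound is appropriate.",
    input_record_ids=("symptom-note-1",),
    cited_sources=(),  # nothing cited, so this should be escalated
    confidence=0.9,
)
print(needs_clinician_review(record))  # True
```

Making the record immutable (`frozen=True`) reflects the audit goal: a provenance entry should not change after it is written.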

What clinicians should expect (and how they should prepare)

- More patients will arrive with AI‑generated summaries and suggested next steps. That changes the dynamic of consultations: some encounters will be shorter and better prepared, others may require clinicians to spend time correcting misinformation.

- Clinicians should demand clarity about provenance and consent. If a patient submits an AI‑prepared symptom history, the clinician should be able to see what data was used to construct that narrative and whether the patient consented to share specific records. Systems should expose audit trails that integrate into the clinical record.

- Workflow integration matters. The most useful AI outputs are those that slot into existing workflows, e.g., structured summaries that import directly into EHR problem lists, or suggested test orders that match local formularies and referral pathways.

- Liability and documentation. Clinicians and institutions will need policies for when and how to rely on patient‑provided AI outputs. It should be straightforward to document that a patient asked for a particular test and to record why a clinician agreed or declined.

Practical guidance for patients who want to use Copilot Health or similar tools

- Treat Copilot as a prep tool, not a definitive diagnostic authority. Use it to summarize symptoms, collate test results, and prepare concise notes for your clinician.

- Keep a local copy of important records. Even when you grant access to an AI product, maintain your own archive of key lab results, imaging reports and medications — this reduces dependence on any single vendor.

- Understand and control permissions. Before importing records or linking provider portals, read the permission screens and set only the minimum necessary access. Ask for audit logs if possible.

- Get a second clinical opinion for any major decision or invasive treatment; use AI to prepare for the conversation but rely on qualified clinicians for final judgment.

- Consider the privacy calculus: for some users and conditions, the convenience and clarity Copilot offers may be worth the tradeoff; others may choose to avoid sharing certain records with third parties.

Regulatory and governance issues Microsoft (and regulators) must address

- Independent audits and third‑party attestations of the data controls, model safety testing, and clinical accuracy.

- Clear, enforceable data retention and deletion policies for health data, with defaults that minimize sharing and require explicit patient consent for broader uses.

- Auditability and provenance: every output that influences care should carry a transparent chain of custody and a human‑readable explanation of the model’s reasoning and data inputs.

- Certification pathways: regulators should consider certification standards for consumer AI health assistants, similar to medical device pathways when the tool informs clinical decisions.

- Incident response and breach notification: Copilot Health must integrate with healthcare incident reporting frameworks and provide timely, transparent notifications to affected users and regulators if anything goes wrong.

Strengths, opportunities and clear risks

Strengths

- Empowerment and access: Tools like Copilot Health can lower the barrier for patients to explain their symptoms clearly and request appropriate tests. The Windows Central case is a concrete example of this benefit in action.

- Scale and reach: With tens of millions of health queries daily, Copilot has the reach to affect triage and information access at population scale. If responsibly implemented, the product could reduce delays in diagnosis and improve health literacy.

- User preparation for clinical visits: Better‑phrased histories and structured summaries can make appointments more efficient and targeted.

Opportunities

- Clinician augmentation: The best outcomes will come when systems augment clinicians by surfacing structured, source‑anchored information that saves time and supports decision‑making.

- Public health insights: Aggregated, de‑identified trends could surface unmet needs and guide resource allocation — but only with rigorous privacy protection and oversight.

- Improved adherence and navigation: Personalized follow‑up suggestions and navigation assistance could help patients adhere to treatment plans and find appropriate local care.

Risks

- Privacy and breach risk: Centralizing EHRs and continuous telemetry in a commercial consumer product increases the attack surface and raises questions about secondary uses of data.

- Erroneous medical advice and hallucination: Model errors can mislead patients and clinicians, creating clinical risk if suggestions are acted upon uncritically.

- Regulatory and liability uncertainty: Who is responsible if an AI‑suggested line of inquiry leads to harm? Clear legal frameworks are needed.

- Equity and access: Not everyone will trust or be able to use these tools equally — the benefits could disproportionately accrue to those who are already digitally literate.

Concrete recommendations for Microsoft and for health systems

- Microsoft should publish independent model validation results and subject Copilot Health to third‑party safety audits and clinical trials that are open to peer review.

- Offer granular, easily reversible consent controls and a straightforward way to export and permanently delete health data.

- Provide tightly integrated audit logs that clinicians and patients can view, showing provenance for each suggestion or summary.

- Health systems should map Copilot Health’s outputs into existing governance and legal frameworks (for example, HIPAA compliance in the U.S.) and negotiate Business Associate Agreements (BAAs) where necessary for enterprise deployments.

- Regulators should define clear thresholds for when consumer health AI crosses into regulated medical device territory.
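The recommendation for granular, easily reversible consent can be illustrated with a deny‑by‑default ledger. This is a sketch under stated assumptions: the `ConsentLedger` class, its method names, and the scope strings are hypothetical and do not describe any Microsoft API. Nothing is shared unless a scope has been explicitly granted, revocation takes effect immediately, and every change is appended to a history that could back an audit view.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentLedger:
    """Per-user consent state with an append-only change history (illustrative)."""

    granted: set[str] = field(default_factory=set)
    history: list[tuple[str, str]] = field(default_factory=list)

    def grant(self, scope: str) -> None:
        """Allow access to one data scope, e.g. 'lab_results'."""
        self.granted.add(scope)
        self.history.append(("grant", scope))

    def revoke(self, scope: str) -> None:
        """Withdraw access; revoking a scope that was never granted is a no-op on state."""
        self.granted.discard(scope)
        self.history.append(("revoke", scope))

    def allows(self, scope: str) -> bool:
        """Deny by default: only explicitly granted scopes are allowed."""
        return scope in self.granted


ledger = ConsentLedger()
ledger.grant("lab_results")
ledger.revoke("lab_results")
print(ledger.allows("lab_results"))  # False
print(ledger.history)  # [('grant', 'lab_results'), ('revoke', 'lab_results')]
```

Keeping scopes narrow (one per record type rather than a single all‑or‑nothing switch) is what lets a user follow the minimum‑necessary advice given to patients earlier.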

Conclusion

The Windows Central reporter’s account, in which Copilot helped them ask the right question and finally get an ultrasound that identified decades‑old gallstones, is persuasive evidence that consumer AI can close gaps in patient‑clinician communication and shorten diagnostic odysseys. It is concrete, human, and replicable: better questions lead to better tests, and better tests can reveal answers that simple web searches or hurried consultations miss.

At the same time, the scale of demand (over 50 million health queries per day) and the sensitivity of the data involved mean that Copilot Health must be held to exceptionally high standards. “Secure by design” promises and privacy‑segmented lanes are necessary first steps, but they are not a substitute for independent verification, strong operational controls, transparent provenance, and robust regulatory oversight. Microsoft’s early positioning and stated safeguards are encouraging, but history, from enterprise Copilot logic errors to disruptive supplier ransomware incidents affecting public health systems, shows why vigilant oversight and conservative, safety‑first rollouts are essential.

For patients, Copilot Health and similar tools are best understood as aids to agency: they can help you prepare clearer histories, request specific tests, and translate medical jargon, but they do not replace the need for professional clinical judgment. For clinicians and health systems, these tools will be a new part of the landscape to govern, validate, and incorporate into workflow. Done right, they can reduce diagnostic delay and make clinical time more productive; done poorly, they risk undermining trust and privacy at scale.

The Windows Central story provides a real‑world data point: an AI assistant didn’t lay out a diagnosis, but it helped a patient win the single thing that matters most in medicine — the right test at the right time. That outcome should inform the debate — not to blind us with optimism, but to remind us why getting the governance, engineering and clinical validation right is so important.

Source: Windows Central Copilot helped me find the problem my doctors missed