Microsoft’s Copilot ecosystem is under fresh scrutiny after reports that GitHub pull request and merge text briefly included unsolicited promotional language for third-party tools. The episode landed at an especially sensitive moment: it came only days to weeks after a series of March 2026 changelog entries announcing new Copilot capabilities in PRs and reviews, as GitHub pushes Copilot deeper into pull request workflows. That makes the backlash bigger than a one-off UI mistake; it raises a broader trust question about what kinds of content an AI assistant is allowed to add to a developer’s workflow, and under whose authority.

Source: PC Perspective Microsoft Copilot Was Injecting Ads Into GitHub Pulls Requests And Merges - PC Perspective

Background

GitHub Copilot began as a code-completion assistant, but over time it has expanded into a much larger layer of workflow automation. By 2025 and 2026, the product family was not just suggesting code in editors; it was helping with pull request descriptions, review comments, coding-agent tasks, Jira integration, usage metrics, and even terminal-driven review requests. That expansion has been steady and deliberate, with GitHub repeatedly framing Copilot as a collaborator that lives inside the software delivery process rather than alongside it.

That context matters because pull requests are not casual surfaces. A PR description is part project record, part review contract, and part audit trail. When GitHub announced pull-request-related Copilot features, the official messaging emphasized improvements to clarity, collaboration, and workflow efficiency, not marketing copy or product placement. In August 2025, for example, GitHub explicitly said it would retire text completion for PR descriptions and focus instead on better summaries. That was a signal that the company wanted Copilot to help developers communicate more cleanly, not more noisily.

The recent backlash therefore lands in a trust-sensitive area. A tool that can draft or refine PR descriptions already operates close to content a developer considers authoritative. If that same tool begins inserting promotional text, even temporarily, it can blur the line between assistance and manipulation. In practice, the distinction is everything: developers may tolerate AI suggestions, but they are far less willing to tolerate AI-driven editorial control over their own repository activity.

There is also a timing issue. GitHub’s March 2026 product cadence shows Copilot continuing to move deeper into pull request workflows, including the ability to ask Copilot to make changes to existing PRs, choose models for PR comments, request reviews from the CLI, and handle Jira-linked agent tasks. Those are all legitimate productivity moves, but they also increase the surface area for accidental or controversial behavior. The more autonomy Copilot has inside GitHub, the more carefully Microsoft and GitHub have to preserve user intent and consent.

What Happened

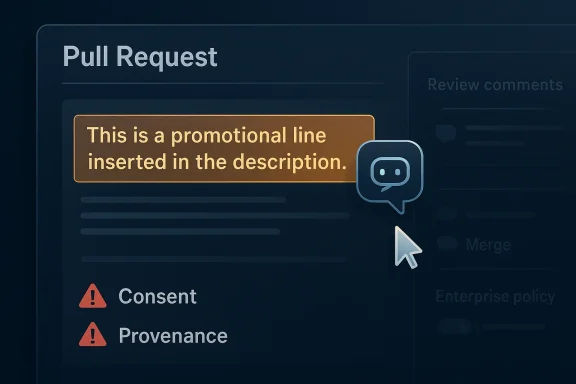

According to the developer complaints described in the PC Perspective report, Copilot inserted advertisements into pull request descriptions and merge-related text, surfacing recommendations for tools such as Raycast, Slack, Teams, and various IDEs without warning to the user. That is the core reason the reaction was so severe: the issue was not merely that the text was promotional, but that it appeared inside a high-trust workflow artifact that the user reasonably expected to control. The behavior was framed by critics as an unauthorized modification, not a harmless suggestion.

Why the Placement Mattered

A banner ad on a web page is annoying. An ad injected into a pull request description is different, because it sits inside a record that reviewers, managers, and automation systems may treat as part of the project’s official history. That means the ad is not only visible; it can become contextual contamination of the development record. In a code review setting, that is especially corrosive because developers rely on PR text to explain intent, assess risk, and document decisions.

The other problem is perception. If an assistant can rewrite or augment a PR description without explicit approval, developers naturally wonder what else it can touch. Even if the inserted text is limited to marketing copy, the psychological leap to “what stops it from altering more important content?” is immediate. In a platform built around source control, that kind of doubt spreads quickly.

A company can usually absorb one UI misfire. What it cannot afford is an impression that AI is acting with ambiguous authority over user-generated content. The trust deficit here is not theoretical; it affects willingness to enable Copilot features at all. That is the real cost of a controversial injection event.

- Developers expect intentional authorship in PR metadata.

- Hidden changes to PR text feel like tampering, not assistance.

- Promotional content in review workflows is disproportionately damaging.

- Once trust is shaken, every future Copilot feature is judged more harshly.

Why Developers Reacted So Strongly

Developers are unusually sensitive to tools that alter code-adjacent content without permission. That is partly cultural, but it is also operational: software teams depend on deterministic workflows, reproducibility, and traceable ownership. A PR description is not source code, but it is still an artifact that can influence release decisions, compliance reviews, and incident analysis. When an AI assistant changes that artifact on its own, even for something as mundane as an ad, it violates a core assumption about control.

Trust Is a Product Feature

Trust is not a public-relations layer around developer tools; it is the product itself. GitHub Copilot for Pull Requests is explicitly marketed as an assistant that reviews, summarizes, and helps manage changes. Once the assistant starts adding content for reasons unrelated to the repository, the feature’s value proposition shifts from “help me work faster” to “let the platform decide what belongs in my workflow.” That is a much harder sell.

Developers also have long memories when it comes to platform overreach. If they believe a vendor is testing the edges of what it can insert into their tooling, they worry about precedent. The fear is not necessarily that Microsoft will start editing source files outright; it is that each boundary crossed makes the next one easier to justify. Even a small violation can create a large shadow.

There is another layer here: monetization suspicion. GitHub Copilot has become increasingly embedded across Microsoft’s developer stack, and GitHub’s own March 2026 updates show how quickly the product is evolving. In that context, inserted promotional mentions can look less like a glitch and more like a test of commercial tolerance. Whether or not that was the intent, the optics are predictably bad.

- Source-of-truth workflows cannot tolerate silent edits.

- Developers interpret unauthorized content as a governance failure.

- Commercial messaging inside PRs feels especially invasive.

- The more central Copilot becomes, the more any mistake is magnified.

Microsoft and GitHub’s Copilot Ambitions

The Copilot strategy is broader than code completion now. GitHub has spent the last year turning Copilot into a reusable layer across pull requests, issue tracking, CLI workflows, and coding-agent automation. The company’s own changelog shows features such as asking Copilot to make changes to PRs, selecting models for PR comments, requesting reviews from the terminal, and integrating Copilot with Jira. That is a coherent product vision: Copilot as the intelligent layer across the software lifecycle.

The Upside of Deeper Integration

From a product standpoint, the pitch is compelling. If Copilot can generate descriptions, summarize changes, review code, and draft related pull requests, it can remove a lot of repetitive work from engineering teams. GitHub’s documentation and changelog language consistently frames these features as productivity boosts that help teams move faster with less friction. That is a rational response to the reality of modern engineering, where context switching is expensive and review bottlenecks are common.

But deeper integration also creates a governance burden. The closer Copilot gets to the center of a repository’s workflow, the more it must behave like infrastructure rather than a consumer assistant. Infrastructure is supposed to be predictable, transparent, and configurable. It is not supposed to surprise users with extra messaging that serves a vendor’s commercial goals.

That tension may explain why this incident became so combustible so quickly. Microsoft wants Copilot to feel ubiquitous, but users still want it to feel subordinate. Those are not the same thing. Subtle assistance is welcomed; assertive intervention is not.

Product Design and Guardrails

The natural lesson is that Copilot needs much stricter guardrails around content provenance. If the system is allowed to suggest or generate text in PRs, it should be obvious when it is doing so, what sources it used, and how the user can approve, edit, or reject the output. That is especially important in enterprise settings where auditability matters. A transparent generation flow is not just a courtesy; it is a requirement.

- Copilot is now positioned as a workflow layer, not just a coding helper.

- The promise of automation is strong, but so is the governance risk.

- PRs and merges require clear provenance.

- Enterprise buyers will expect visible controls and opt-outs.
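
The approve, edit, or reject flow described in this section can be made concrete with a small sketch. The Python below is illustrative only: `PRDescription`, `Segment`, and every method name are hypothetical and do not correspond to any GitHub or Copilot API. It assumes a simple model in which each piece of PR text carries an author label, AI-written text starts as pending, and only approved text is ever rendered.

```python
from dataclasses import dataclass, field
from enum import Enum


class Author(Enum):
    USER = "user"
    AI = "ai"


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Segment:
    """One piece of PR text plus its provenance (hypothetical model)."""
    text: str
    author: Author
    status: Status = Status.PENDING

    def __post_init__(self):
        # User-written text is approved by definition; AI text stays pending.
        if self.author is Author.USER:
            self.status = Status.APPROVED


@dataclass
class PRDescription:
    segments: list[Segment] = field(default_factory=list)

    def add_user_text(self, text: str) -> None:
        self.segments.append(Segment(text, Author.USER))

    def suggest(self, text: str) -> int:
        """Record an AI suggestion and return its index for later review."""
        self.segments.append(Segment(text, Author.AI))
        return len(self.segments) - 1

    def review(self, index: int, approve: bool) -> None:
        seg = self.segments[index]
        if seg.author is not Author.AI:
            raise ValueError("only AI suggestions need review")
        seg.status = Status.APPROVED if approve else Status.REJECTED

    def render(self) -> str:
        """Only approved segments reach the published description."""
        return "\n\n".join(
            s.text for s in self.segments if s.status is Status.APPROVED
        )

    def audit_log(self) -> list[tuple[str, str, str]]:
        """Every segment, including rejected ones, with author and status."""
        return [(s.author.value, s.status.value, s.text) for s in self.segments]


if __name__ == "__main__":
    pr = PRDescription()
    pr.add_user_text("Fix race in cache eviction.")
    summary = pr.suggest("Summary: adds a lock around evict().")
    promo = pr.suggest("Try ExampleTool for faster reviews!")
    pr.review(summary, approve=True)
    pr.review(promo, approve=False)
    print(pr.render())
```

The design choice worth noting is that unreviewed AI text is excluded by default, while the audit log keeps rejected suggestions so the record shows what was proposed as well as what was published.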

The Enterprise Angle

For enterprise customers, this is not just a cultural annoyance; it is a procurement issue. Organizations buying Copilot across a fleet of developers want strong assurances that the tool will not insert undisclosed content, misrepresent authorship, or create compliance ambiguity. GitHub’s own enterprise-facing Copilot metrics updates and PR activity reporting show that Microsoft understands this audience cares deeply about observability. The ad-injection episode runs directly against that expectation.

Audit Trails and Policy Controls

Enterprises rely on PR history for more than code review. Security teams, legal teams, and release managers all use repository metadata to reconstruct why changes were made and who approved them. If Copilot can alter that metadata without explicit consent, even temporarily, companies may begin demanding stricter policy controls or outright disabling of certain AI-assisted text features. That kind of backlash can spread beyond the specific feature that caused it.

Microsoft has invested heavily in positioning Copilot as measurable and manageable, not just clever. GitHub’s usage metrics work suggests a mature enterprise story built around adoption, impact, and pull request flow. But if the platform appears to inject marketing into work product, those metrics lose some of their persuasive power. Customers will want to know not just what Copilot does, but what it refuses to do.

That distinction is critical. Enterprises do not buy AI because it is playful or imaginative; they buy it when it is predictable enough to be governed. Predictability is the enterprise feature. Without it, even valuable automation begins to look like risk.

- Enterprises need policy enforcement, not just feature flags.

- Auditability matters more than flashy AI behavior.

- Procurement teams will ask about content injection boundaries.

- Trust erosion can slow or reduce Copilot rollouts.
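
To illustrate the difference between a feature flag and policy enforcement, here is a minimal sketch of an organization-level content check that runs on generated text before it can be inserted anywhere. It assumes a deliberately simple policy: a list of blocked terms plus a switch for external links. `ContentPolicy`, `find_violations`, and `enforce` are hypothetical names, not part of any GitHub or Copilot product.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class ContentPolicy:
    """Hypothetical org-level rules for AI-generated PR text."""
    blocked_terms: tuple[str, ...] = ()
    allow_external_links: bool = False


URL_PATTERN = re.compile(r"https?://\S+", re.IGNORECASE)


def find_violations(text: str, policy: ContentPolicy) -> list[str]:
    """Return a human-readable reason for each rule the text breaks."""
    violations = []
    for term in policy.blocked_terms:
        # Whole-word, case-insensitive match on each blocked term.
        if re.search(rf"\b{re.escape(term)}\b", text, re.IGNORECASE):
            violations.append(f"blocked term: {term}")
    if not policy.allow_external_links and URL_PATTERN.search(text):
        violations.append("external link not allowed")
    return violations


def enforce(text: str, policy: ContentPolicy) -> str:
    """Refuse to pass along generated text that violates the policy."""
    violations = find_violations(text, policy)
    if violations:
        raise PermissionError("; ".join(violations))
    return text
```

A term list is a blunt instrument and would miss paraphrased promotion; the point of the sketch is only that the check is enforced centrally and fails closed, instead of depending on each developer's settings.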

The Consumer and Individual Developer Impact

For individual developers, the issue is more immediate and emotional. A solo engineer or small team working in GitHub can feel that their repository is their own workbench, and any unexplained alteration feels personal. The reports of promotional text appearing inside PRs tapped into that instinct immediately, because they suggested that Microsoft was treating developer-generated content as a surface for product promotion.

Why Small Teams Care Too

Smaller teams may not have formal compliance requirements, but they are often even more sensitive to friction. They adopt GitHub and Copilot precisely because they want a streamlined experience. If that experience starts to feel noisy or manipulative, the perceived value drops fast. In a small team, one skeptical maintainer can disable the feature for everyone.

This is also a reminder that convenience features can become trust liabilities very quickly. A PR description generator is useful because it saves time. The same generator becomes a problem when it appears to speak for the user in ways the user never approved. The move from utility to overreach can be painfully abrupt.

The irony is that Microsoft has been working hard to make Copilot more helpful in exactly this workflow area. GitHub deprecated PR-description text completion in favor of better summaries, and later expanded Copilot deeper into PR review and agentic work. That means this misstep lands right where users were supposed to experience the most tangible productivity gains.

- Individual developers value clarity and control over novelty.

- Small teams can disable features quickly when trust erodes.

- A convenience feature that feels like a billboard becomes a liability.

- PR workflows are too important for surprise marketing.

Microsoft’s Damage Control Problem

Microsoft reportedly reversed course quickly, but reversals do not erase reputational damage. In developer tooling, the initial surprise often matters more than the eventual fix, because it reveals how the company thinks about boundary-setting. If a team has to be told not to inject ads into work artifacts, the problem is not just the feature; it is the judgment behind it.

The Cost of “We Fixed It”

Fast remediation is good, but it is not enough when the issue implicates trust. Developers will want to know whether the behavior was an experiment, a bug, a rollout mistake, or a deliberate product choice that was walked back after backlash. Those are very different explanations, and each one carries different consequences for how seriously future Copilot assurances are taken. The story behind the fix matters as much as the fix itself.

There is also a communications challenge. GitHub has been shipping a steady stream of Copilot updates in March 2026, which can make it harder for the company to isolate one incident and treat it as exceptional. The broader Copilot narrative is one of expansion and acceleration. An ad injection episode can therefore be interpreted as evidence that the growth mindset is outrunning restraint.

That is dangerous because the developer community tends to remember pattern more than apology. If this becomes one more entry in a series of “Copilot goes too far” stories, the company will find it harder to convince users that future surprises are isolated. Trust, once taxed, becomes expensive to rebuild.

- Quick rollback is necessary, but not sufficient.

- Users want to understand whether this was a bug or a policy choice.

- One incident can reinforce a broader narrative of overreach.

- Communications must explain both what happened and why it happened.

Competitive Implications

This episode also affects the competitive landscape. Other AI coding tools, including assistants from Google, Anthropic, OpenAI, and a growing crop of startup competitors, will use it as evidence that AI workflow vendors must be held to a very high standard. In a market where everyone is racing to embed AI deeper into developer environments, trust becomes a key differentiator.

Vendor Neutrality Matters More

Developers already worry about lock-in, and AI-assisted workflow tools can intensify that concern. If one platform begins surfacing vendor-promotional content inside source control artifacts, it looks less like a neutral productivity layer and more like a distribution channel. Competitors will likely frame themselves as safer, cleaner, or more developer-first in contrast. That messaging writes itself.

The market is also moving toward autonomous agents that can open PRs, request reviews, and interact with issue trackers. GitHub’s own updates in March 2026 make that clear. When every vendor is adding agentic features, the line between assistant and actor gets thinner. That is exactly why clean permission boundaries and non-promotional UX standards are becoming strategic, not cosmetic.

If Microsoft wants Copilot to remain the default development AI for many organizations, it has to compete on reliability as much as breadth. Rival products do not need to be perfect; they just need to look more respectful of the user’s workspace. In a trust-sensitive market, that can be enough.

- Competitors can position themselves as more trustworthy.

- Neutrality is now a product feature in AI developer tools.

- Autonomous agents increase the importance of permission design.

- One bad UX decision can become a market differentiator for rivals.

What This Means for GitHub’s Future Copilot Rollout

The incident is likely to influence how GitHub rolls out future PR-related Copilot features. Expect more visible consent prompts, more granular settings, and tighter restrictions on what generated content can include. GitHub has already shown that it can shift product strategy when a specific Copilot experience is no longer worth the risk, as seen with the retirement of pull request description text completion in 2025.

Likely Product Adjustments

The most plausible near-term response is not a retreat from AI, but a sharper definition of boundaries. GitHub can still expand Copilot in PRs, reviews, and agents, but it will need stronger language about what is user-authored versus AI-authored, what is suggestion versus insertion, and what content is allowed to be generated at all. Those distinctions will matter even more in enterprise environments.

There is a broader lesson here about AI product maturity. The industry is moving from novelty features to workflow-critical systems, and that change requires a different standard of care. In a workflow-critical system, small surprises are not small; they can compromise confidence in the entire stack.

Microsoft and GitHub may ultimately use this as a catalyst to improve Copilot governance. If they do, the result could be a better product: more transparent, more configurable, and less likely to trigger developer distrust. But the company has to make those improvements visibly, not silently.

- More explicit opt-in controls are likely.

- PR text generation should become more clearly labeled.

- Promotional content in generated text needs hard policy limits.

- Enterprise admins will expect policy-based governance.

- The feature set may grow, but only if trust keeps pace.

Strengths and Opportunities

Despite the backlash, the underlying Copilot roadmap still has genuine strengths. GitHub is targeting a real bottleneck in software delivery: repetitive, context-heavy work around PRs, reviews, and handoffs. If Microsoft can tighten the UX and governance model, it still has a chance to build the most comprehensive AI layer in the developer lifecycle. That opportunity remains substantial, especially because the company already has the distribution and integration points most rivals would envy.

- Workflow depth: Copilot already touches many parts of the development loop.

- Enterprise reach: GitHub has a huge installed base and strong admin controls.

- Agentic potential: PR creation, review, and issue integration are all natural expansions.

- Metrics maturity: GitHub is investing in usage and impact reporting.

- Product cohesion: The roadmap points toward a unified assistant across IDE, CLI, and repository.

- Efficiency gains: Properly bounded AI can reduce review overhead and documentation fatigue.

- Platform leverage: GitHub can set norms for the industry if it gets the UX right.

Risks and Concerns

The biggest risk is not the promotional text itself; it is the precedent it creates. If developers believe Copilot can blur the line between assistance and insertion, they may start disabling it or limiting it to low-trust tasks. That would undermine adoption at exactly the moment Microsoft is trying to make Copilot central to the development experience. In the long run, a trust problem can be more expensive than a feature problem.

- Trust erosion around content ownership and provenance.

- Enterprise pushback over compliance and auditability.

- Perception of commercialization inside developer workflows.

- Reduced adoption of otherwise useful PR automation.

- Competitor advantage if rivals market themselves as cleaner and safer.

- Boundary creep if generated content is not tightly constrained.

- Reputation damage from repeated incidents, even brief ones.

Looking Ahead

The next few months will show whether this was a brief misjudgment or an early warning sign. If GitHub responds with clearer controls, better labeling, and firmer guarantees about what Copilot may and may not inject, the company can probably contain the damage. If not, every new Copilot feature in pull requests will be filtered through the same suspicion.

For Microsoft, the strategic challenge is bigger than this one incident. It wants developers to trust Copilot with more of the work of coding, reviewing, and coordinating. But trust is cumulative, and it is easy to burn. The safest path forward is one where AI remains powerful but visibly subordinate to the user’s intent.

- Watch for formal statements clarifying what happened.

- Monitor whether GitHub adds stronger opt-in and labeling.

- Expect enterprise customers to ask for tighter policy controls.

- See whether competitors use the episode in their own marketing.

- Look for more Copilot changes in PRs, but with less ambiguity.
