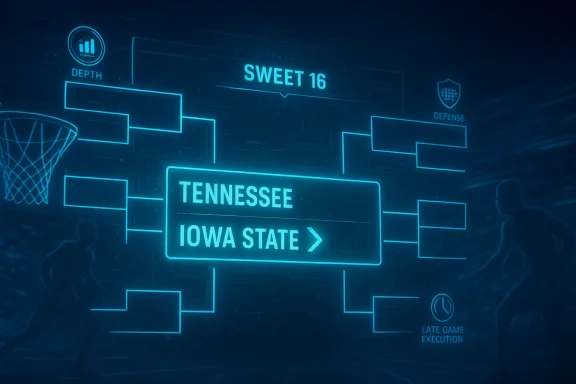

Sweet 16 predictions are where March Madness stops being a bracket exercise and starts becoming a stress test for algorithms, coaching, and nerve. In USA Today’s March 25, 2026 piece, Microsoft Copilot emerged as an early bracket geek’s favorite after correctly calling a major first-week upset and 12 of the 16 Sweet 16 teams. Its latest simulation is far less chaotic than the opening weekend, but it still leaves room for one meaningful upset: No. 6 Tennessee over No. 2 Iowa State.

That matters because the Sweet 16 is where predictive models often begin to diverge from the raw seed list. The field may look chalky on paper, but the matchups now tilt toward depth, size, discipline, and late-game execution rather than pure ranking. In other words, this is the round when AI predictions can look either impressively insightful or painfully overconfident.

Background

March Madness has always invited prediction, but the rise of generative AI has changed the tone. Fans used to compare bracket pools and analyst picks; now they can ask a chatbot to simulate the entire tournament and explain its reasoning in plain English. That makes March 2026 part basketball tournament, part public demonstration of how far consumer AI has come.

The NCAA tournament itself is built for surprise. The first weekend routinely punishes overreliance on seed lines because one poor shooting night, one foul-trouble stretch, or one matchup problem can swing everything. By the time the Sweet 16 arrives, the remaining teams are usually those with the most reliable two-way profiles, which is why a model that went 12-for-16 on the Sweet 16 field deserves attention even if it was not perfect.

This year’s Sweet 16 was especially fertile ground for AI chatter because the bracket still contained only a handful of so-called Cinderella stories. According to the USA Today report, No. 11 Texas and No. 9 Iowa were the only teams seeded lower than sixth to survive to the second weekend. That gives the regionals a different feel than the more chaotic years, when double-digit seeds can distort the entire predictive landscape.

The Copilot simulation also stands out because it was not blindly contrarian. It correctly forecast High Point’s upset over Wisconsin in the first round, and it pegged a Final Four of Houston, Duke, Arizona, and Michigan, all of which remained alive at the time of publication. That kind of performance encourages the tempting but dangerous conclusion that the model has “figured out” March Madness. It hasn’t. It has simply found a good set of cues in a small sample.

Why this matters now

A strong bracket model can be useful, but only if readers understand what it is and what it is not. AI can summarize roster quality, seed value, and recent form, yet it cannot experience pressure, sense fatigue, or detect the human volatility that makes the tournament famous. The Sweet 16 is where that limitation becomes most visible.

- AI can identify structure better than it can predict emotion.

- Seed-based logic still matters, but it is no longer sufficient.

- Upsets become more matchup-driven in the later rounds.

- Narrative confidence often exceeds mathematical certainty.

- Single-game samples are inherently fragile.
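
The fragility of single-game samples can be made concrete with a little arithmetic. The sketch below is illustrative only: it assumes every Sweet 16 pick wins independently with the same probability, and the per-game probabilities it loops over are hypothetical values, not figures from Copilot or USA Today. The function name `prob_all_correct` is likewise invented for this example.

```python
def prob_all_correct(win_prob: float, games: int) -> float:
    """Chance that every pick hits, assuming independent games
    that all share the same win probability (a simplification)."""
    if not 0.0 <= win_prob <= 1.0:
        raise ValueError("win_prob must be between 0 and 1")
    if games < 0:
        raise ValueError("games must be non-negative")
    return win_prob ** games


SWEET_16_GAMES = 8  # 16 teams play 8 games in this round

# Hypothetical per-game probabilities, chosen only for illustration.
for p in (0.6, 0.7, 0.8):
    perfect = prob_all_correct(p, SWEET_16_GAMES)
    print(f"per-game {p:.0%} -> perfect round {perfect:.1%}")
```

Even under a generous assumed 80 percent chance per game, a perfect eight-game round comes out to only about one chance in six, which is why a mostly chalk bracket can still be expected to miss somewhere.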

Overview

The USA Today story gives us a compact case study in how modern sports coverage has evolved. Instead of relying only on beat writers and handicappers, the article uses Microsoft Copilot as a transparent, conversational forecasting engine. That is a meaningful shift because the model’s reasoning is part of the product, not just the result.

The article’s core claim is simple: Copilot got the first weekend mostly right and now predicts just one Sweet 16 upset, Tennessee over Iowa State. That forecast is conservative by March standards, but it is also grounded in the idea that deeper, more physical teams tend to survive the tournament’s middle rounds. In that sense, the model is not trying to be flashy; it is trying to be credible.

What makes this more interesting than a one-off novelty is the broader strategic implication for both fans and media outlets. If AI can deliver a mostly sensible bracket with narrative explanations, then it becomes an additional layer of analysis rather than a gimmick. For readers, that means more ways to engage with the tournament; for publishers, it means a new kind of evergreen, high-interest content.

Still, the article also reveals the limits of sports AI coverage. Copilot’s explanations lean on familiar basketball descriptors: depth, physicality, defense, pace, and efficiency. Those are valid factors, but they are also the same factors human analysts have relied on for decades. The value is not that AI invented new basketball logic; it is that it can package familiar logic quickly and at scale.

The bracket logic beneath the headline

The headline promises an upset prediction, but the deeper story is about how conservative the model actually is. The best AI bracket predictors tend to favor teams that win possession battles, defend consistently, and avoid turnover-heavy styles. That naturally makes the Sweet 16 look more chalky than casual fans might expect.

- Duke and Houston are rewarded for depth and consistency.

- Purdue gets credit for interior size and efficiency.

- Arizona is framed as a pace-and-shot-creation team.

- Tennessee is treated as a grinder with late-game discipline.

- Texas is downgraded less for being a double-digit seed and more for having a less stable profile.

East Region

The East bracket is where Copilot leaned hardest into familiar blue-blood logic. It projected No. 1 Duke over No. 5 St. John’s and No. 2 UConn over No. 3 Michigan State, which would produce a regional final full of tradition, talent, and NBA-level personnel. That is not a bold computer prediction so much as a statement that the highest-ceiling teams are still the safest bets.

For Duke, the model’s reasoning emphasized depth and defensive efficiency, which are exactly the traits that travel well in the tournament. St. John’s, by contrast, was described as dangerous but less complete. That distinction matters because in the Sweet 16, a hot shooter or a buzzy upset story is rarely enough unless the underdog can also survive on the glass and defend for forty minutes.

UConn versus Michigan State is a different kind of matchup, one between a team with a high ceiling and a team with a reputation for toughness. Copilot sided with the Huskies because their defense is more consistent and their overall gear is higher. That is a classic AI move: trust the better all-around profile unless the underdog has a clear, repeatable path to controlling pace.

Why Duke and UConn fit the model

Both programs represent the kind of teams machine-learning systems tend to like. They combine brand recognition with roster quality, and they usually carry data profiles that look strong across multiple dimensions. In bracket terms, that translates into lower variance and fewer red flags.

- Duke tends to score well in depth and shot quality.

- UConn often grades out as a complete two-way team.

- St. John’s and Michigan State bring real toughness but more uncertainty.

- Elite defenses usually travel better than streaky offense.

- Talent concentration matters more as the bracket narrows.

South Region

The South regional is where the AI’s prediction gets more interesting. Copilot projected No. 4 Nebraska over No. 9 Iowa and No. 2 Houston over No. 3 Illinois, setting up a region that values steadiness, size, and physicality over flair. It is the kind of bracket where the model appears to be saying: the better team usually survives when the margin for error gets tiny.

Nebraska over Iowa is notable not because it defies the seed line (it does not) but because the reasoning behind it goes beyond seeding. Copilot cited Nebraska’s experience in high-level, tight games and its steadier defense. That kind of logic reflects a sophisticated appreciation for tournament context, where emotional resilience and defensive structure often decide late possessions.

Houston over Illinois is less surprising, but it reinforces the model’s identity. Houston is treated as a team that overwhelms opponents when locked in, especially through athleticism and physical play. That is a reminder that AI does not need every pick to be spicy; it needs the bracket to hang together internally.

The Nebraska-Iowa debate

The Nebraska-Iowa line is the clearest example of an AI model reading beyond seed numbers. Iowa may have the more obvious Cinderella aura, especially after upsetting defending national champion Florida, but Copilot leaned on Nebraska’s steadier profile instead. That is a useful distinction for readers who assume upsets automatically mean “momentum.”

- Nebraska is valued for stability in tight games.

- Iowa has the better upset story but also more volatility.

- Defense is the deciding lens in Copilot’s view.

- Experience in close games becomes a tiebreaker.

- Narrative momentum is not the same as predictive strength.

West Region

The West region is a showcase for the model’s willingness to reward pace and interior efficiency. Copilot picked No. 1 Arizona over No. 4 Arkansas and No. 2 Purdue over No. 11 Texas, which means the AI is not trying to manufacture drama where it does not see it. It simply sees a stronger structure on the favorite side.

Arizona is described as a team that can control the matchup with pace and shot creation. That phrasing matters because tournament teams often win not by “playing their game” in a vague sense, but by forcing the opponent into the wrong kinds of possessions. If Arizona can accelerate tempo while limiting Arkansas’s ability to impose inconsistency, the prediction becomes easier to defend.

Purdue over Texas is the most lopsided of Copilot’s regional calls in terms of profile logic. The model cited Purdue’s completeness, superior interior scoring, and efficiency, plus Texas’s status as a double-digit seed that is “not a Cinderella.” That line is more than a quip; it signals that the AI is making a distinction between seed position and volatility. Texas may be dangerous, but danger alone does not equal upset probability.

Arizona and Purdue as data-friendly favorites

Both teams fit the classic profile of computer-friendly contenders. Arizona brings pace and scoring variety; Purdue brings size and efficiency around the basket. Those are not identical strengths, but they are both easy for models to value because they translate into stable game outcomes across different opponents.

- Arizona can create shots before defenses set.

- Purdue can punish smaller lineups inside.

- Arkansas is talented but less consistent.

- Texas is dangerous but lower-seeded for a reason.

- Efficiency usually matters more than volatility in later rounds.

Midwest Region

The Midwest prediction is the only one that produces a mild surprise: No. 6 Tennessee over No. 2 Iowa State. Copilot framed this as a grinder’s game, one where Tennessee’s physicality and late-game execution give it a narrow edge. That is the kind of pick that separates a cautious model from a purely seed-driven one.

The Michigan-Alabama matchup, meanwhile, went to the Wolverines. Copilot cited Michigan’s balance and momentum, while acknowledging that Alabama can score. That tension is important because Alabama-style offensive firepower can flatten weaker opponents but may not be enough when the other side can answer on both ends.

This region feels like the AI’s most human part of the bracket. It does not simply praise the highest-seeded teams; it identifies a path for the lower seed based on style. Tennessee’s defense and toughness are treated as capable of shrinking possessions and changing the game’s texture. In the Sweet 16, that can matter more than the ranking next to the name.

Tennessee over Iowa State as the model’s upset

If there is a “smart upset” in the Copilot bracket, this is it. Tennessee is not being picked because the model thinks Iowa State is bad; it is being picked because the matchup likely compresses into a possession-by-possession fight. Those games often turn on discipline, rebounding, and who can score without panicking.

- Tennessee gets the nod for physicality.

- Iowa State is respected for defense but not enough to win the grinder.

- Late-game execution is treated as a decisive edge.

- Momentum is less important than style compatibility.

- Close games tend to favor the team with the cleaner plan.
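
The eight regional picks described above can be collected into one small structure to check the "one upset" claim mechanically. This is a minimal sketch: the `Pick` type and `is_upset` helper are invented for illustration, an upset is defined here simply as the picked winner carrying the numerically higher seed, and the Michigan and Alabama seeds are left as `None` because the text above does not state them.

```python
from typing import NamedTuple, Optional


class Pick(NamedTuple):
    region: str
    winner: str
    winner_seed: Optional[int]  # None when the seed is not stated in the article
    loser: str
    loser_seed: Optional[int]


# Copilot's Sweet 16 picks as reported in the sections above.
PICKS = [
    Pick("East", "Duke", 1, "St. John's", 5),
    Pick("East", "UConn", 2, "Michigan State", 3),
    Pick("South", "Nebraska", 4, "Iowa", 9),
    Pick("South", "Houston", 2, "Illinois", 3),
    Pick("West", "Arizona", 1, "Arkansas", 4),
    Pick("West", "Purdue", 2, "Texas", 11),
    Pick("Midwest", "Tennessee", 6, "Iowa State", 2),
    Pick("Midwest", "Michigan", None, "Alabama", None),  # seeds not given
]


def is_upset(pick: Pick) -> Optional[bool]:
    """True if the picked winner has the worse (higher-numbered) seed.

    Returns None when either seed is unknown, rather than guessing.
    """
    if pick.winner_seed is None or pick.loser_seed is None:
        return None
    return pick.winner_seed > pick.loser_seed


upsets = [p for p in PICKS if is_upset(p) is True]
unknown = [p for p in PICKS if is_upset(p) is None]

for p in upsets:
    print(f"Upset pick: No. {p.winner_seed} {p.winner} over No. {p.loser_seed} {p.loser}")
print(f"Picks with unstated seeds: {[p.winner for p in unknown]}")
```

Among the seven games with stated seeds, Tennessee over Iowa State is the only pick that goes against the seed line, which matches the article's description of a mostly chalk bracket.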

What the Model Got Right

One of the strongest parts of the USA Today story is the reminder that Copilot’s earlier bracket logic was not random. It correctly picked High Point to upset Wisconsin, and its projected Final Four of Houston, Duke, Arizona, and Michigan was still fully intact at the time of publication. That kind of hit rate matters because it suggests the model was not merely echoing consensus; it was identifying some real tournament tendencies.

The first weekend is where predictive credibility gets earned. If an AI system can successfully identify a few meaningful upsets while still keeping the overall bracket mostly intact, it starts to look more like a useful assistant and less like a novelty. That does not make it omniscient, but it does make it relevant.

The model’s 12-of-16 Sweet 16 hit rate also reflects a key reality about March Madness: the favorite usually survives more often than fans remember. Humans tend to overweight the dramatic upset and underweight the dozens of routine results that keep brackets on schedule. AI systems, at their best, correct for that bias by leaning on structure rather than spectacle.

Why accuracy in the first weekend matters

The first weekend is the most volatile part of the tournament, so early accuracy creates trust. Once a model successfully identifies an upset like High Point over Wisconsin, users are more likely to believe its later-round calls. But that trust can become a trap if the model’s success is really just a matter of matching common basketball logic with a few fortunate breaks.

- Correct upset calls raise perceived credibility.

- Early bracket survival improves narrative momentum.

- Consensus alignment can look like insight after the fact.

- Small samples are dangerous for declaring a model “hot.”

- Balanced picks can still be useful even when not dramatic.
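
To see why a 12-of-16 record is too small a sample to call a model "hot," it helps to put an uncertainty range around it. The sketch below uses the standard Wilson score interval for a binomial proportion. It treats each of the 16 Sweet 16 slots as an independent trial, which is a simplification (bracket picks are correlated), and the function name `wilson_interval` is just a label chosen for this example.

```python
import math


def wilson_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion.

    z = 1.96 corresponds to roughly 95% confidence.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    if not 0 <= hits <= n:
        raise ValueError("hits must be between 0 and n")
    p_hat = hits / n
    denom = 1 + z**2 / n
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Copilot's reported record: 12 of the 16 Sweet 16 teams called correctly.
low, high = wilson_interval(12, 16)
print(f"observed rate: {12 / 16:.2f}, plausible range: {low:.2f} to {high:.2f}")
```

Under these assumptions the range runs from roughly one half to about nine in ten, so the same record is compatible with a model that is barely better than a coin flip on each slot and with one that is very good.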

Why the One Upset Matters

Copilot’s lone Sweet 16 upset, Tennessee over Iowa State, is more interesting than a headline that simply shouted “AI picks a shocker.” It shows that the model still sees the later rounds as a place where one stylistic mismatch can matter more than bracket status. That is a more believable use of AI than a scattershot upset generator.

The value of that pick is also pedagogical. It teaches readers that a lower seed is not automatically a bad pick, but it should usually have a concrete path to victory. Here, that path is defense, physicality, and execution in a tight game. If the model had instead picked a lower seed because of vague momentum language, the prediction would have been weaker.

At the same time, one upset also reveals restraint. AI in sports writing can be tempted to chase novelty because novelty drives clicks. A more conservative model may be more boring, but it is often more useful. That’s especially true in the Sweet 16, where the talent gap narrows and the temptation to overreact grows.

The difference between upset logic and upset theater

Not all upset predictions are created equal. Some are built on style, some on emotion, and some on little more than wishful thinking. Copilot’s Tennessee call falls into the first category, which is why it feels more credible than a random bracket buster.

- Style-based upsets are easier to defend.

- Emotion-based upsets usually fade under scrutiny.

- Wishful picks make for fun content but weak analysis.

- The best upset calls explain the possession battle.

- One credible upset is better than five theatrical ones.

Enterprise vs. Consumer Implications

This story is bigger than basketball because it also serves as a product demonstration for Microsoft’s AI ecosystem. For consumers, Copilot becomes a fun, accessible bracket companion that can explain itself in everyday language. For Microsoft, it is a visibility play: the model is not just answering questions, it is participating in one of America’s biggest annual sports conversations.

The enterprise angle is subtler but more important. If a general-purpose chatbot can generate structured sports analysis on demand, it proves something about the model’s ability to summarize, compare, and explain under ambiguous conditions. Those are the same skills that matter in workplace knowledge tasks, from internal research to decision support to content drafting.

But there is also a branding risk. A visibly wrong sports prediction can become a joke, and public mistakes are more memorable than abstract technical competence. That means consumer-facing sports demos are valuable partly because they are risky. If the model looks smart under tournament pressure, it gains cultural credibility; if it misses spectacularly, it becomes just another chatbot with an opinion.

What sports predictions say about broader AI usability

Sports are a low-stakes but high-visibility arena for testing AI reasoning. The data is plentiful, the outcomes are immediate, and the audience understands uncertainty. That makes bracket prediction a useful sandbox for evaluating whether an AI system can be both fluent and restrained.

- Consumer users want fun, fast, explainable predictions.

- Enterprise buyers want structured reasoning under uncertainty.

- Sports content is a clean demonstration of probabilistic language.

- Public errors are more visible than quiet competence.

- Brand trust can rise or fall on a single viral miss.

The Competitive Landscape

March Madness prediction has long been a crowded field. Human pundits, statistical models, sportsbook odds, and millions of bracket-challenge entrants all try to answer the same question from different angles. Copilot’s entrance into that ecosystem does not replace existing methods; it adds another layer of interpretation.

For media companies, that is both an opportunity and a threat. An AI-generated bracket story can keep readers engaged, but it can also compress the space for traditional opinion coverage if publishers lean too heavily on automation. The winning strategy will likely be hybrid: use AI for speed and scale, then use journalists for judgment and context.

For rivals in the AI market, sports prediction is not really about sports. It is about proving that a chatbot can be useful in a popular, emotionally resonant setting without collapsing into nonsense. Microsoft gets to show off Copilot in a low-barrier environment where the audience immediately understands whether the output feels plausible. That is powerful marketing, even if it is not formal benchmarking.

Why bracket content is strategically valuable

Bracket content combines virality, repeat engagement, and easy explanation. It is one of the best consumer-facing demos for an AI model because nearly everyone has an opinion, but few people have certainty. That makes it ideal for showcasing probabilistic language and reasoning.

- High emotional engagement drives sharing.

- Simple outputs make the model easy to understand.

- Frequent updates keep the content timely.

- Direct comparisons with human picks are inevitable.

- Mistakes are memorable, which cuts both ways.

Strengths and Opportunities

The Copilot bracket story highlights how AI can add real value when it stays disciplined, explainable, and grounded in basketball logic. The opportunity is not to replace analysts, but to give fans a fast second opinion that is easy to interrogate and compare against other forecasts.

- Fast synthesis of a complex bracket into readable predictions.

- Transparent reasoning that explains why a team is favored.

- Good fit for casual fans who want a quick tournament lens.

- Useful as a comparison tool against expert picks.

- Strong content potential for publishers and platforms.

- Ability to highlight matchup factors beyond raw seed lines.

- Consumer familiarity that helps normalize AI use.

Risks and Concerns

The biggest danger is mistaking a few successful calls for broad predictive mastery. March Madness is a tiny sample environment, and even strong models can look brilliant or foolish depending on a few late shots. The other risk is that AI-generated sports content may be consumed too literally, without enough skepticism about variance and luck.

- Small sample size makes overconfidence easy.

- Overreliance on seed logic can flatten important context.

- Hallucinated confidence may sound convincing even when shaky.

- Public misses can undermine trust quickly.

- Automation bias may lead users to defer too much to the model.

- Narrative compression can strip away meaningful uncertainty.

- Sports outcomes are inherently too volatile for certainty.

Looking Ahead

The most important thing to watch after this Sweet 16 is not whether Copilot “wins” every game. It is whether its style of explanation remains useful when the bracket gets even smaller and the matchups become even more specific. Elite Eight games tend to reward roster flexibility, coaching adjustments, and foul management, which are all areas where generic phrasing can start to break down.

The other question is whether AI sports coverage settles into a stable editorial role. If the best use case is a quick prediction plus a readable rationale, then publishers may increasingly treat these models as assistants rather than authorities. That would be a healthy outcome because it preserves human judgment while still exploiting AI’s speed.

- How the AI handles the Elite Eight

- Whether more upsets emerge than predicted

- If the model stays consistent across rounds

- How fans react when the bracket inevitably breaks

- Whether media outlets deepen or dilute their AI integration

Source: USA Today Sweet 16 predictions: AI predicts a March Madness upset