Microsoft’s Copilot Usage Report 2025 is not a sleepy vendor marketing brief — it is a practical intelligence report that forces corporate compliance teams to rethink the scope, scale, and style of AI risk they manage. By analyzing 37.5 million de-identified Copilot conversations, Microsoft and independent observers found that users turn to Copilot not only for productivity tasks but for health, relationships, advice, and existential reflection — often on mobile devices and at hours when human judgment is most vulnerable. This behavioral, contextual reality transforms AI risk from a narrow “model validation” issue into a broad human-systems problem requiring new logging practices, explainability, escalation protocols, and organizational governance.

Compliance must stop treating AI as a purely technical problem and start governing the human relationship with intelligent systems. Doing so requires immediate, measurable changes: inventories, telemetry, escalation, and board-level oversight. The Copilot data makes the case unequivocal: the future of AI compliance will be about understanding people as much as understanding models.

Source: Compliance Week, “What the Copilot Usage Report 2025 Means for Corporate Compliance”

Background

What the report measured and why it matters

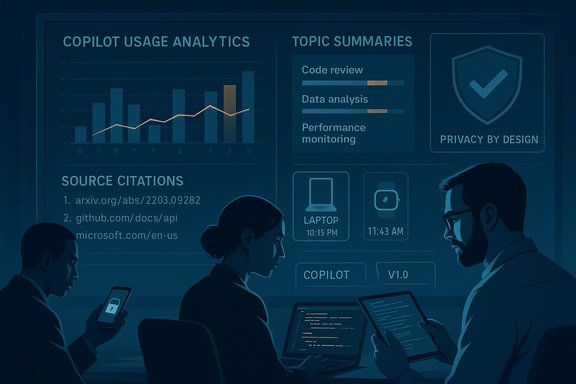

Microsoft’s Copilot Usage Report 2025 analyzed a sample of approximately 37.5 million de-identified interactions collected between January and September 2025, summarizing conversations to extract topics and intent rather than storing verbatim exchanges. The analysis surfaced clear device- and time-of-day patterns: health-related topics dominate mobile use across all hours, programming and work tasks dominate desktop use during the workweek, and advice-seeking behavior (asking Copilot what to do about relationships, life decisions, or personal dilemmas) is on the rise. These findings are important because they show where AI actually influences human decisions, not just where engineering teams expected it to be used.

How Compliance Week framed the implications

In an opinion piece dissecting the report’s implications, Compliance Week’s Tom Fox argued that the Copilot dataset should shift compliance thinking from hypothetical, model-centric risk to human-centered and contextual risk. Fox emphasizes that when AI acts as a trusted advisor, especially in personal or high-stakes contexts, failures are governance failures with regulatory and reputational consequences. The editorial contends that compliance must move beyond checklist audits and into shaping how people interact with AI in real time.

What the Copilot Usage Report 2025 reveals for corporate compliance

1) AI risk is behavioral — and time- and device-dependent

The single most consequential insight is simple but rarely operationalized: who uses AI, when, and on what device, changes the risk profile. Health queries on mobile at midnight are a different compliance problem from code reviews on a corporate desktop at 10 a.m. Compliance teams that assume consistent user behavior across contexts will miss these nuanced risk concentrations. That matters for monitoring, escalation, and remediation design.

2) Advice-seeking shifts liability and expectations

When employees treat enterprise AI tools as advisors (asking what to do about an HR situation, contract language, or regulatory interpretation), organizations could inherit responsibility for guidance that is inaccurate, biased, or unaligned with policy. The legal and regulatory expectation is increasingly that organizations can explain, test, and remediate how AI systems reach advice-like conclusions. That degree of auditability requires stronger logging, traceability, and documented human-in-the-loop (HITL) decision points.

3) Privacy-conscious analytics can and should inform governance

Microsoft’s approach, deriving topic and intent summaries from de-identified conversations, demonstrates that meaningful usage analytics are possible without retaining raw conversational content. From a compliance perspective, privacy preservation and operational insight are complementary: robust privacy controls enable the analysis that uncovers risk patterns while maintaining regulatory trust. Organizations that treat privacy as an impediment to monitoring will be strategically disadvantaged.

4) Context-aware policies beat one-size-fits-all rules

The report shows that context shapes not only the content of queries but also the state of the user (fatigue, emotional stress, commute). Policies designed for daytime office use that assume rational, policy-aware operators won’t capture the real-world environments where AI is used. Compliance must therefore adopt adaptive controls, for example different guardrails or escalation paths based on device, time, or detected user intent.

Regulatory and standards landscape: why the report raises the bar

EU AI Act: operational obligations for deployers and providers

The EU Artificial Intelligence Act introduces concrete obligations that map directly to the Copilot findings: transparency about AI interaction, detailed documentation and post-market monitoring for high-risk systems, and human oversight requirements. Crucially, the Act makes deployers responsible for certain operational duties (such as logs and monitoring), and it can treat a deployer as a provider if they substantially modify or brand a model. For multinational organizations, the behavioral insights in the Copilot report increase the likelihood that some deployments or use-cases will fall within “high-risk” definitions or draw regulator scrutiny under the Act’s transparency and human oversight rules.

NIST AI RMF: actionable structure for compliance programs

The NIST AI Risk Management Framework (AI RMF) prescribes four functions (Govern, Map, Measure, Manage) that provide a practical bridge between the Copilot report’s behavioral findings and compliance controls. NIST emphasizes traceability, continuous monitoring, and lifecycle governance: exactly the capabilities needed to detect context-dependent risk spikes, audit AI advice, and show regulators how decisions were governed. Compliance functions should use the AI RMF as scaffolding for building evidence-based controls aligned with the report’s real-world patterns.

US regulators: rising expectations though less prescriptive

U.S. federal regulators (consumer protection agencies, securities regulators, and sectoral supervisors) increasingly expect firms to manage AI risks proactively. While U.S. guidance is currently more fragmented than the EU’s, trends emphasize transparency, consumer protection, and governance, all areas where the Copilot report’s behavioral evidence increases scrutiny on how organizations prevent harm from advice-seeking or context-driven misuse. Organizations should assume that evidence of poor governance, not just technical failure, will attract regulatory interest.

Concrete compliance implications and what compliance officers should do now

The Copilot findings call for operational changes. Below are nine concrete actions prioritized by urgency and impact.

1. Build and maintain an AI use-case inventory (Map)

- Create a centralized registry of every AI instance, integration, and third-party tool in production or shadow IT.

- For each entry, record device profiles, typical user groups, data sensitivity, and plausible harms.

- Re-evaluate the classification quarterly and when models, connectors, or prompts change.
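A registry entry along these lines can be sketched as a small data structure. This is a minimal illustration, not a prescribed schema: the `UseCaseEntry` class, its field names, the sensitivity labels, and the 90-day stand-in for "quarterly" are all assumptions introduced here.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Assumption: "quarterly" is approximated as a fixed 90-day interval.
REVIEW_INTERVAL = timedelta(days=90)


@dataclass
class UseCaseEntry:
    """One row in a hypothetical AI use-case registry."""
    name: str
    device_profiles: list[str]   # e.g. ["desktop", "mobile"]
    user_groups: list[str]       # e.g. ["HR", "engineering"]
    data_sensitivity: str        # illustrative labels: "public", "internal", "restricted"
    plausible_harms: list[str]
    last_reviewed: date


def needs_review(entry: UseCaseEntry, today: date, changed: bool = False) -> bool:
    """Return True when the entry is due for re-classification.

    `changed` signals that a model, connector, or prompt was modified,
    which forces a review regardless of the calendar.
    """
    return changed or (today - entry.last_reviewed) >= REVIEW_INTERVAL
```

A scheduled job could call `needs_review` for every entry and open a ticket for each one that returns `True`; deployment pipelines could pass `changed=True` whenever a model, connector, or prompt is updated.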

2. Require contextual logging and conversation metadata (Measure)

- Log topic-level summaries, intent labels, timestamps, device type, and model version for each interaction.

- Retain logs in an immutable audit trail with appropriate access controls and retention policies.

- Ensure logs are queryable for investigations and regulatory requests.
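One common way to make a metadata log tamper-evident is to chain each entry to the previous one with a hash. The sketch below illustrates that idea only; the function names and the record fields in the usage note are assumptions, and a hash chain makes alteration detectable rather than impossible, so a real deployment would typically pair it with write-once storage and access controls.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry in the chain


def _digest(record: dict, prev_hash: str) -> str:
    """Hash the canonical JSON form of a record together with the previous hash."""
    payload = json.dumps(record, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(log: list, record: dict) -> dict:
    """Append a metadata record, linking it to the entry before it."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {
        "record": record,
        "prev_hash": prev_hash,
        "hash": _digest(record, prev_hash),
    }
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["record"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True
```

A record here might carry the fields listed above, for example `{"topic": "health", "intent": "advice", "timestamp": "2025-03-01T23:40:00Z", "device": "mobile", "model_version": "v1"}`, with no verbatim conversation text stored.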

3. Implement tiered guardrails and dynamic escalation (Manage)

- Define triggers (sensitive topics, legal/HR queries, health advice) that flag interactions for human review.

- Automate escalation to compliance, legal, or HR when AI advice could drive a material decision.

- Institute rate limits and stricter response templates for late-night or mobile-originated advice-seeking.
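The tiering described above can be sketched as a small routing function. Everything specific in it is an assumption made for illustration: the topic labels, the 22:00 to 06:00 definition of "late night", and the three tier names are not taken from the report or from any product.

```python
from datetime import time

# Assumption: topic labels that always warrant human review when advice is sought.
SENSITIVE_TOPICS = {"health", "legal", "hr"}


def is_late_night(local_time: time) -> bool:
    """Assumed late-night window: 22:00 up to, but not including, 06:00."""
    return local_time >= time(22, 0) or local_time < time(6, 0)


def route(topic: str, intent: str, device: str, local_time: time) -> str:
    """Pick a guardrail tier for one interaction.

    Returns one of three hypothetical tiers:
      "human_review"        - flag for compliance, legal, or HR
      "restricted_template" - answer only with a stricter response template
      "standard"            - no additional controls
    """
    if intent == "advice" and topic in SENSITIVE_TOPICS:
        return "human_review"
    if intent == "advice" and (device == "mobile" or is_late_night(local_time)):
        return "restricted_template"
    return "standard"
```

Keeping the rules in one pure function makes them easy to review with legal and HR, and easy to unit-test whenever a trigger is added or a threshold changes.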

4. Require explainability and source-attribution policies (Govern and Measure)

- For advice-like outputs, capture the model prompt, retrieval sources, citations (where possible), and the rationale generated by the system.

- Maintain versioned explanations and model cards that show training data summaries, limitations, and known biases.
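A provenance record for an advice-like output might look like the following sketch. The `AdviceProvenance` class and its field names are hypothetical; the fingerprint is simply a SHA-256 hash over the record's canonical JSON form, offered as one way to show later that a stored record has not been altered.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AdviceProvenance:
    """Hypothetical record of what produced one advice-like output."""
    model_version: str
    prompt: str
    retrieval_sources: tuple   # identifiers of the documents retrieved
    citations: tuple           # citations shown to the user, where available
    rationale: str             # rationale text generated by the system

    def fingerprint(self) -> str:
        """Stable SHA-256 over the canonical JSON form of the record."""
        canonical = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()
```

Storing the fingerprint alongside the record, or in a separate system, gives investigators a quick integrity check when an explanation is requested months after the interaction.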

5. Red-team advice-provisioning scenarios

- Run adversarial tests focused on advice flows: ask the system to generate HR counseling, compliance memos, or legal interpretations.

- Measure hallucination rates, harmful suggestions, and likelihood of policy contradiction.

- Feed red-team findings into prompt-design constraints and pre-approved response templates.

6. Tighten third-party and vendor governance

- Treat major LLM vendors and SaaS Copilot providers as critical vendors; require evidence of data handling, de-identification, and logging practices.

- Contractual SLAs must mandate audit rights, incident notification timelines, and minimum explainability features.

- Where the EU AI Act applies, assess whether the vendor or your organization assumes “provider” or “deployer” obligations.

7. Embed privacy-by-design into telemetry and analytics

- Use privacy-preserving techniques (topic/intent summaries, differential privacy, secure aggregation) to analyze usage patterns without exposing personal data.

- Align telemetry retention and access controls with GDPR/HIPAA/other applicable frameworks.
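As one illustration of the differential-privacy option, the sketch below adds Laplace noise to per-topic counts before they are reported. It rests on stated assumptions: each conversation carries exactly one topic label, the unit of protection is a single conversation (so each count has sensitivity 1), and the topic vocabulary is fixed in advance. The function names and the choice of `epsilon` are illustrative, not a recommendation.

```python
import random
from collections import Counter


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def noisy_topic_counts(topics, vocabulary, epsilon, rng=None):
    """Return a noisy count for every topic in a fixed vocabulary.

    Assumptions: one topic label per conversation, so adding or removing a
    conversation changes one count by at most 1 (sensitivity 1), and the
    noise scale is therefore 1 / epsilon. Topics outside the vocabulary are
    dropped, and zero counts are still reported with noise so that the set
    of output keys does not reveal which topics occurred.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    rng = rng or random.Random()
    counts = Counter(t for t in topics if t in vocabulary)
    scale = 1.0 / epsilon
    return {t: counts[t] + laplace_noise(scale, rng) for t in vocabulary}
```

Smaller values of `epsilon` add more noise and give stronger protection; repeated releases over the same data consume additional privacy budget, which this sketch does not track.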

8. Train employees and create clear user guidance

- Publish role-specific policies: what Copilot should and shouldn’t be used for, who to notify when AI gives risky advice, and how to document decisions that relied on AI.

- Provide scenario-based training (including late-night or emotionally charged situations) that reflects the behavioral patterns the report uncovered.

9. Elevate AI compliance governance to the board

- Integrate AI risk into enterprise risk management (ERM), assign executive ownership, and report usage trends and incidents to the board regularly.

- Consider a named AI compliance officer or committee to bridge compliance, legal, product, and security functions.

Technical controls that matter

Data access controls and secrets hygiene

The rise of advice-seeking increases the risk that prompts will inadvertently include PII, trade secrets, or other sensitive material. Enforce connector allow-lists, restrict document ingestion to approved sources, and apply real-time PII detectors to prevent exfiltration.

Prompt engineering and response templates

Pre-approved prompts and response templates reduce hallucination risk and ensure outputs include necessary disclaimers or steps for escalation. For enterprise deployments, embed business rules and policy-checking logic into the response pipeline.

Model versioning and provenance

Capture model version, retrieval index snapshot, and prompt template for every advice response. This is essential for replicability and audit, and it aligns with both NIST traceability principles and EU documentation requirements.

Monitoring for drift and behavior anomalies

Continuous monitoring should look beyond model metrics to user-behavior metrics: spikes in late-night advice-seeking, repeated dependence on AI for HR queries, and cross-border pattern changes that might trigger jurisdictional compliance issues.

Risk scenarios and playbook examples

- Health advice used to support an accommodation decision: an employee uses Copilot’s health guidance to support a leave request. Compliance must verify whether the AI output was admissible, whether the employer’s reliance on it violated health-privacy rules, and whether the guidance conflicted with occupational health expert opinion. Logs and escalation evidence are decisive in defending decisions.

- Legal/contract guidance relied upon by non-lawyers: if counsel is not engaged and employees act on contract language generated by Copilot, the organization can face liability for inaccurate legal advice. Policies should prohibit using AI-generated legal language without lawyer review.

- HR decisions influenced by AI advice: an AI suggestion to discipline or terminate can create procedural and discrimination risks. The deployer must show human oversight and documented rationale consistent with company policy and legal requirements.

Strengths, limitations, and cautionary notes

Strengths

- The Copilot Usage Report 2025 provides rare, large-scale behavioral data that surfaces real user patterns — an invaluable input for compliance program design. It demonstrates that privacy-preserving analytics can reveal risk signals without retaining raw conversational content.

- The report underlines that AI governance must be cross-disciplinary, merging compliance, product, security, legal, and HR perspectives — a recognition that will improve resilience when operationalized.

Limitations and cautionary notes

- The Microsoft analysis is based on de-identified, summarized conversation data. While topic and intent summaries provide strong signals, they do not capture the full content, nuance, or context of every high-risk exchange. Compliance teams should treat summarized telemetry as a complement to — not a replacement for — targeted forensic logs in cases of incident response.

- The report reflects a single vendor’s installed base and product design. Organizations must map findings to their own deployments: different models, prompt pipelines, integrations, and cultures will yield different risk profiles. Do not assume Microsoft’s usage distribution will exactly mirror your environment.

- The regulatory landscape is evolving rapidly. The EU AI Act and other frameworks impose new duties that may change with delegated acts and member-state enforcement. Compliance programs must be living processes with frequent re-assessment cycles.

A short roadmap for the next 90 days

- Launch an AI use-case registry and classify by potential harm and advice-likeness.

- Implement or require vendor telemetry that preserves privacy while delivering topic-level and metadata logs.

- Define escalation triggers and an advisory sign-off process for advice-like outputs (HITL for HR, legal, and health-related queries).

- Run a prioritized red-team exercise focused on advice-provision flows and remediate defects found in templates and guardrails.

- Brief the board and ERM owners on usage trends and the compliance program’s roadmap.

Conclusion

The Copilot Usage Report 2025 crystallizes a critical truth: AI risk is now human-first. Users turn to AI for help when they are tired, vulnerable, curious, and in contexts far removed from the tidy conditions imagined by tech teams. For corporate compliance, that elevates the role from post-facto auditor to real-time risk interpreter. Organizations that treat the report as a wake-up call, operationalizing context-aware logging, explainability, escalation protocols, and vendor governance, will convert a potential liability into a disciplined competitive advantage.
