Microsoft used Azure Cosmos DB Conf 2026 in late April to argue that AI-native applications are turning databases into flexible, globally distributed systems for memory, retrieval, reasoning, and cost-aware scale, with OpenAI, Vercel, Walmart, and Microsoft presenters showing production patterns built around Azure Cosmos DB and Azure DocumentDB. The message was not subtle: the database is no longer just where the application stores its state after the interesting work is done. In Microsoft’s telling, the data tier is becoming the place where AI applications remember, search, coordinate, and police their own economics. That is a big claim, and it deserves both attention and skepticism.

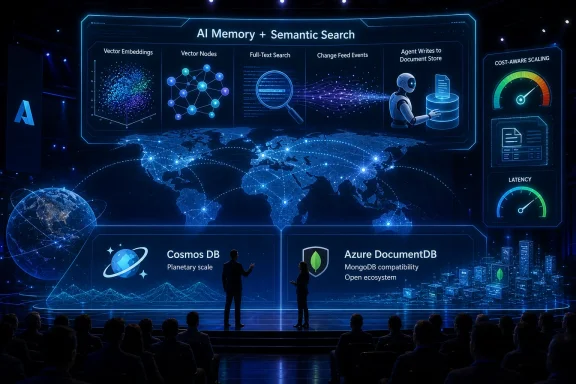

The split between Azure Cosmos DB and Azure DocumentDB is strategically important. Cosmos DB is powerful, but it has long carried a reputation for requiring careful design to avoid unpleasant cost outcomes. Microsoft knows that AI applications intensify that concern because retrieval, embeddings, agent memory, and rapid iteration can all multiply database activity.

By highlighting Azure DocumentDB and claiming significant cost advantages over alternatives, Microsoft is trying to cover a broader part of the document database market. Not every workload needs the full Cosmos DB value proposition. Some teams want MongoDB-style compatibility, independent scaling of compute and storage, and a more portable architecture.

There is also a competitive subtext. MongoDB remains a strong default mental model for document applications, while PostgreSQL, specialized vector databases, search platforms, and cloud-native NoSQL services all compete for AI-era workloads. Microsoft cannot win simply by saying Cosmos DB scales. It has to make a credible argument across cost, openness, developer familiarity, and integration with Azure’s AI stack.

DocumentDB gives Microsoft a second answer when a customer says Cosmos DB is too much platform for the job. That may make the Azure data portfolio more coherent, but it also creates a positioning challenge. Customers will need clear guidance on when to choose Cosmos DB, when to choose Azure DocumentDB, and when another Azure database is the better fit.

The most honest answer is workload-specific. If the application needs global distribution, aggressive elasticity, integrated multi-region availability, and Cosmos DB’s operational model, Cosmos DB remains the flagship. If the application prioritizes MongoDB compatibility, portability, and cost efficiency over the full planetary-scale feature set, Azure DocumentDB becomes easier to justify.

That phrase sounds like vendor poetry, but the pattern underneath is concrete. AI agents need memory, but memory is not just a transcript dumped into a table. It is a mix of recent context, durable facts, retrieved knowledge, coordination state, and safeguards against competing actions.

Semantic caching is especially compelling. If an AI system can recognize that a new request is meaningfully similar to a previous one, it may avoid redundant work, reduce model calls, and improve latency. But that only works if retrieval is fast enough and the cache is governed carefully enough not to return stale or inappropriate responses.
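A minimal sketch can make the two conditions in that paragraph concrete: a similarity threshold decides whether a new request is "meaningfully similar," and a time-to-live keeps stale answers from being served. Everything here is an assumption for illustration, including the `SemanticCache` class, the 0.95 threshold, the 300-second TTL, and the toy two-dimensional embeddings. It is not a Cosmos DB API.

```python
import math
import time
from typing import Optional


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class SemanticCache:
    """Hypothetical in-memory cache keyed by embedding similarity."""

    def __init__(self, threshold: float = 0.95, ttl_seconds: float = 300.0) -> None:
        self.threshold = threshold
        self.ttl_seconds = ttl_seconds
        # Each entry is (embedding, answer, stored_at).
        self._entries: list[tuple[list[float], str, float]] = []

    def put(self, embedding: list[float], answer: str, now: Optional[float] = None) -> None:
        stored_at = time.time() if now is None else now
        self._entries.append((embedding, answer, stored_at))

    def get(self, embedding: list[float], now: Optional[float] = None) -> Optional[str]:
        now = time.time() if now is None else now
        best_answer, best_score = None, self.threshold
        for stored, answer, stored_at in self._entries:
            if now - stored_at > self.ttl_seconds:
                continue  # expired entries are never served
            score = cosine(embedding, stored)
            if score >= best_score:
                best_answer, best_score = answer, score
        return best_answer


cache = SemanticCache()
cache.put([1.0, 0.0], "Refunds take 5 days.", now=0.0)
hit = cache.get([0.99, 0.05], now=10.0)      # close enough and still fresh
miss = cache.get([0.0, 1.0], now=10.0)       # unrelated request
expired = cache.get([1.0, 0.0], now=1000.0)  # identical, but past the TTL
print(hit, miss, expired)
```

The threshold and TTL are the governance knobs: set the threshold too low and the cache answers questions nobody asked, set the TTL too long and it answers with yesterday's facts.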

The change feed piece is equally important. Agentic systems often need to react when data changes: a document arrives, a user updates a profile, a workflow advances, or another agent writes state. Treating the database as an event source can keep these systems coordinated without constantly polling or building separate synchronization machinery.
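The core idea can be sketched as an ordered log of changes that each consumer reads from its own checkpoint. The `ChangeLog` class below is a hypothetical in-memory stand-in, not the Cosmos DB change feed API, and it ignores partitioning, leases, and delivery guarantees entirely.

```python
class ChangeLog:
    """In-memory stand-in for an ordered feed of document changes."""

    def __init__(self) -> None:
        self._changes: list[dict] = []

    def write(self, doc: dict) -> None:
        # Store a copy so later mutations by the caller do not rewrite history.
        self._changes.append(dict(doc))

    def read_from(self, checkpoint: int) -> tuple[list[dict], int]:
        """Return changes recorded after checkpoint, plus the new checkpoint."""
        batch = self._changes[checkpoint:]
        return batch, checkpoint + len(batch)


log = ChangeLog()
log.write({"id": "order-1", "status": "created"})
log.write({"id": "order-1", "status": "paid"})

checkpoint = 0
batch, checkpoint = log.read_from(checkpoint)
seen = [change["status"] for change in batch]

# A later write is picked up from the saved checkpoint, with no re-scan.
log.write({"id": "order-1", "status": "shipped"})
batch, checkpoint = log.read_from(checkpoint)
seen += [change["status"] for change in batch]
print(seen, checkpoint)
```

Because each agent keeps its own checkpoint, several consumers can react to the same stream of writes without coordinating with one another.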

Optimistic concurrency rounds out the production story. Multiple agents or services may try to update the same entity, and the system needs a way to detect and resolve conflicts. In ordinary applications this is already important; in AI applications, where automated actors may operate concurrently, it becomes essential.
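The detect-and-retry pattern described above can be sketched with a version tag that changes on every write. Cosmos DB exposes a comparable idea through a document's `_etag` and conditional writes, but the `Store` class, `PreconditionFailed` exception, and `add_item` helper below are hypothetical in-memory stand-ins rather than the SDK.

```python
import uuid
from typing import Optional


class PreconditionFailed(Exception):
    """Raised when the caller's etag no longer matches the stored document."""


class Store:
    def __init__(self) -> None:
        self._docs: dict[str, dict] = {}

    def read(self, doc_id: str) -> dict:
        return dict(self._docs[doc_id])

    def upsert(self, doc: dict, if_match: Optional[str] = None) -> dict:
        current = self._docs.get(doc["id"])
        if if_match is not None and (current is None or current["_etag"] != if_match):
            raise PreconditionFailed(doc["id"])
        stored = {**doc, "_etag": uuid.uuid4().hex}  # every write gets a new tag
        self._docs[doc["id"]] = stored
        return dict(stored)


def add_item(store: Store, cart_id: str, item: str, retries: int = 3) -> dict:
    """Read, modify, and conditionally write; re-read and retry on conflict."""
    for _ in range(retries):
        cart = store.read(cart_id)
        cart["items"] = cart["items"] + [item]
        try:
            return store.upsert(cart, if_match=cart["_etag"])
        except PreconditionFailed:
            continue  # another writer won; try again on fresh state
    raise RuntimeError("gave up after repeated conflicts")


store = Store()
store.upsert({"id": "cart-1", "items": []})
stale = store.read("cart-1")         # agent A reads
add_item(store, "cart-1", "milk")    # agent B writes first
try:
    store.upsert({**stale, "items": ["eggs"]}, if_match=stale["_etag"])
except PreconditionFailed:
    add_item(store, "cart-1", "eggs")  # agent A retries instead of overwriting
print(store.read("cart-1")["items"])
```

Without the `if_match` check, agent A's stale write would silently erase agent B's item, which is exactly the failure mode concurrent automated actors make more likely.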

This is the kind of architecture that makes Cosmos DB interesting beyond marketing. It uses database capabilities together rather than treating the product as a JSON bucket. It also demonstrates why AI applications are pushing databases toward a combined role: store, search engine, event hub, and coordination layer.

The Model Context Protocol has become a popular way to connect AI systems with tools and data sources, but it also creates a new attack and governance surface. If an AI agent can call tools, query data, or act on behalf of a user, then authentication and authorization must follow the user’s actual permissions rather than the developer’s optimism.

Storing per-user data in Cosmos DB and tying access to Entra ID is the sort of pattern enterprises will expect. Role-based access, least privilege, and auditability are not optional just because the interface is conversational. If anything, conversational interfaces make permission boundaries harder for users to see.

Microsoft’s advantage here is ecosystem gravity. Entra ID, Microsoft Graph, Azure services, GitHub Copilot, VS Code, and Cosmos DB can be presented as one integrated development and governance loop. That is appealing for organizations already standardized on Microsoft’s identity and productivity stack.

The caution is lock-in by convenience. The tighter the integration, the easier it is to build quickly inside Azure and the harder it may become to separate application logic from platform assumptions later. For many enterprises that tradeoff is acceptable, but it should be made consciously.

The broader point is that AI governance is becoming a data architecture issue. Where data lives, how it is partitioned, what identity is attached to it, how retrieval is filtered, and what tools can access it are all part of the application’s security posture. The prompt is not the perimeter. The data layer is.

Cosmos DB rewards good partitioning and punishes careless access patterns. It can scale impressively, but not if every query fights the data model. It can support flexible documents, but not if teams abdicate responsibility for validation, versioning, and lifecycle management.
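One cheap way to catch a poor partition key before production is to measure how unevenly a sample of documents spreads across candidate key values. The sketch below is an assumption-laden illustration: the `hottest_share` helper and the `tenant` and `user` fields are hypothetical, and a real service hashes key values onto physical partitions, so this only approximates skew.

```python
from collections import Counter


def hottest_share(docs: list[dict], key: str) -> float:
    """Fraction of documents that land on the single most common key value."""
    counts = Counter(doc[key] for doc in docs)
    return max(counts.values()) / len(docs)


# Eight documents belong to one large tenant; two belong to small tenants.
docs = [{"tenant": "acme", "user": f"u{i}"} for i in range(8)]
docs += [{"tenant": "globex", "user": "u8"}, {"tenant": "initech", "user": "u9"}]

print(hottest_share(docs, "tenant"), hottest_share(docs, "user"))
```

Here partitioning by `tenant` would send 80 percent of the sample to one key value, while `user` spreads it evenly. Distribution is only half the decision, though: the key also has to match how the application filters its queries.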

Cost visibility is the thread that ties these concerns together. Microsoft’s emphasis on real-time feedback and economic design is not cosmetic. Developers building AI applications need to understand how reads, writes, indexes, vector operations, caching, and regional distribution affect the bill.

This is where sysadmins and platform teams should pay attention. AI application development is often sold as a way for product teams to move faster without waiting on central infrastructure. But when those applications hit production, central teams inherit the reliability, security, observability, and cost consequences.

The answer is not to block AI adoption or force every project through a six-month architecture review. The answer is to create paved roads: approved patterns for agent memory, retrieval, identity, logging, cost controls, and deployment. Cosmos DB can be part of that road, but it cannot be the road by itself.

Microsoft’s strongest argument is that databases need to become more helpful in that process. If tools can suggest partition keys, explain query costs, flag inefficient designs, and integrate with coding agents, they can reduce the gap between prototype and production. The weakest version of that argument is “let the agent handle it.” The strongest version is “make the expert’s judgment easier to apply earlier.”

Source: Microsoft Azure Blog, “Build AI apps with Azure Cosmos DB: Key trends from Cosmos Conf 2026”

Microsoft Recasts Cosmos DB as the AI Application Substrate

Cosmos DB has always been easy to describe in ambitious terms and harder to evaluate in the abstract. It is Microsoft’s globally distributed NoSQL database, built for low latency, elastic scale, multiple APIs, and the sort of reliability promises that look wonderful in a slide deck and become very real when an outage hits a checkout cart. For years, its pitch was planetary scale.

At Cosmos DB Conf 2026, Microsoft sharpened that pitch for the AI era. Kirill Gavrylyuk, Microsoft’s vice president for Azure Cosmos DB, framed the conference around three shifts: AI workloads depend on flexible semi-structured data, AI accelerates development cycles, and semantic search is becoming a first-class database operation rather than an external service bolted on after the fact.

That framing matters because it moves Cosmos DB out of the traditional “cloud database” bucket and into the hotter, more contested category of AI application infrastructure. In that world, the workload is not merely a web app reading and writing JSON documents. It is an agent saving conversation memory, retrieving relevant context, applying semantic search, reacting to events, and serving a user who may have appeared five seconds ago because a generated app went viral.

The implication is that Microsoft wants developers to think of Cosmos DB less as a back-end store and more as a live substrate for AI systems. That is a natural vendor move, but it is also grounded in a real architectural shift. AI applications create messy data, they change quickly, and they often need retrieval and operational state in the same hot path.

The risk is that the industry hears “AI database” and forgets that the old database problems did not retire. Partition keys, data modeling, latency budgets, consistency tradeoffs, regional failover, indexing costs, and runaway queries remain stubbornly non-magical. Cosmos DB Conf’s most useful moments were the ones that admitted as much.

AI Makes Semi-Structured Data the Main Event

The first of Microsoft’s three shifts is the least flashy and probably the most important. AI systems generate and consume data that does not sit comfortably in a rigid schema: prompts, embeddings, message histories, retrieved passages, tool outputs, user preferences, policy decisions, agent plans, and traces of intermediate reasoning.

That does not mean relational databases are suddenly obsolete. It means that many AI-facing applications are accumulating state whose shape changes faster than a conventional schema governance process can comfortably absorb. The operational question becomes whether the database can tolerate that evolution without turning every product iteration into a migration event.

Cosmos DB’s document model fits neatly into this story. JSON documents can carry evolving application context, and teams can add fields as agents, prompts, and product features change. For fast-moving AI teams, that flexibility is not a luxury; it is a way to avoid freezing the application design while the underlying user behavior is still being discovered.

But “schema-less” is a dangerous term when spoken too casually. Production systems always have a schema; sometimes it lives in the database, sometimes in application code, sometimes in validation libraries, and sometimes in the heads of the engineers who are paged at 2 a.m. The absence of a rigid database schema can accelerate iteration, but it can also hide inconsistency until queries, indexes, and downstream consumers begin to disagree about what a document actually means.
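When the schema lives in application code, one common way to keep it honest is to stamp each document with a version and upgrade older shapes on read. The sketch below assumes a hypothetical `schemaVersion` field and a made-up change from a single `prompt` string (v1) to a `messages` list (v2). Neither comes from the conference material.

```python
from typing import Any

CURRENT_VERSION = 2  # hypothetical schema version for this sketch


def upgrade(doc: dict[str, Any]) -> dict[str, Any]:
    """Return a copy of doc upgraded to CURRENT_VERSION, or raise ValueError."""
    doc = dict(doc)  # never mutate the caller's document
    version = doc.get("schemaVersion", 1)  # unstamped documents are treated as v1
    if version == 1:
        # v1 stored one "prompt" string; v2 stores a list of messages.
        doc["messages"] = [{"role": "user", "content": doc.pop("prompt", "")}]
        version = 2
    if version != CURRENT_VERSION:
        raise ValueError(f"unsupported schemaVersion: {version}")
    doc["schemaVersion"] = version
    if not isinstance(doc.get("messages"), list):
        raise ValueError("messages must be a list")
    return doc


old = {"id": "c1", "prompt": "hello"}
new = upgrade(old)
print(new["schemaVersion"], new["messages"][0]["content"])
```

The database still accepts any shape, but every reader goes through one function that states what a document is supposed to mean, which is where the disagreement between queries and downstream consumers otherwise starts.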

That is where Cosmos DB’s AI pitch becomes interesting. Microsoft is not merely saying that documents are flexible. It is saying that flexibility, global distribution, integrated search, caching, serverless capacity, and agent-friendly interfaces are converging into a single application platform. The database is being asked to absorb the chaos of AI development while still behaving like infrastructure.

Coding Agents Turn Database Design Into a Faster Failure Mode

The second shift is speed. Microsoft and its conference speakers repeatedly returned to the idea that AI coding tools are shortening the distance between idea and deployed application. Developers are iterating faster, shipping more often, and increasingly relying on agents to generate code, infrastructure templates, and migration paths.

That has an uncomfortable consequence for databases. The more quickly code is generated, the more quickly bad data models can be created. A coding agent can scaffold an application in minutes, but it cannot repeal the physics of distributed systems or the economics of inefficient queries.

Microsoft’s answer is to make Cosmos DB more legible to both humans and agents. The conference material emphasized serverless deployment, instant scalability, integrated caching, and tools that give developers feedback about partitioning, performance, and cost. That is a practical recognition that an AI-generated application still needs a responsible data model if it is going to survive contact with users.

The Vercel portion of the conference made this point sharply. Guillermo Rauch has spent years arguing for serverless workflows that remove friction from deployment. At Cosmos DB Conf, the AI-era version of that argument was that software creation may expand from millions of developers to far more creators who generate applications through agents and prompts.

If that vision is even half right, the database layer will be under new pressure. Applications will appear, spike, mutate, and disappear faster than procurement committees or classic capacity planning models can track. A database that expects careful upfront provisioning and slow schema evolution will feel antique in that environment.

Yet the Vercel story also exposes a tension. Serverless economics are attractive precisely because they make small and bursty workloads viable. But AI-generated applications can be careless with resources, and a system that scales instantly can scale mistakes instantly too. The next phase of developer experience is not just “make it easy to deploy.” It is “make it hard to accidentally build a financially ridiculous system.”

Search Moves From Sidecar to Query Plane

The third shift is semantic retrieval. AI applications need to find information by meaning, not just by exact string match or key lookup. Vector search, full-text search, hybrid search, and semantic ranking are becoming baseline requirements for retrieval-augmented generation, agent memory, support copilots, document analysis, personalization, and a growing list of enterprise workflows.

For years, this often meant stitching together an operational database, a search engine, an embedding pipeline, and an orchestration layer. That architecture can be powerful, but it introduces duplication, latency, consistency questions, and more moving parts for teams that already struggle to operate conventional distributed systems.
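For readers who have not worked with vector retrieval, "finding information by meaning" reduces to ranking stored embeddings by their similarity to a query embedding. The sketch below is a brute-force illustration with toy three-dimensional vectors and hypothetical document ids. Production systems use approximate indexes rather than a full scan, and nothing here reflects a Cosmos DB API.

```python
import math


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def top_k(query: list[float], docs: list[dict], k: int = 2) -> list[str]:
    """Return ids of the k documents whose embeddings best match the query."""
    ranked = sorted(docs, key=lambda d: cosine(query, d["embedding"]), reverse=True)
    return [d["id"] for d in ranked[:k]]


docs = [
    {"id": "refund-policy", "embedding": [0.9, 0.1, 0.0]},
    {"id": "shipping-times", "embedding": [0.1, 0.9, 0.0]},
    {"id": "return-window", "embedding": [0.8, 0.2, 0.1]},
]
print(top_k([1.0, 0.0, 0.0], docs))
```

The full scan is what makes the cost question real: every query touches every embedding unless an index narrows the search first.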

Microsoft’s pitch is that Cosmos DB can collapse more of that into the operational database itself. If the same platform can store application state, support vector retrieval, handle full-text search, respond to change feed events, and scale globally, then teams can reduce architectural sprawl. In theory, that means fewer synchronization paths and faster application loops.

The practical appeal is obvious. AI applications often need current context, not stale search indexes updated through a slow pipeline. If a user adds information, an agent observes an event, or a transaction changes state, retrieval should reflect that quickly enough to matter. Bringing search closer to the operational store reduces the number of places where reality can drift.

But consolidation is not automatically simplification. A database that becomes the system of record, vector index, text search platform, event source, cache partner, and agent memory layer also becomes more critical and more complex. The fewer systems you operate, the more discipline you need in the one system left standing.

This is where the phrase “first-class query operator” carries real weight. Semantic search cannot remain a demo feature if developers are expected to build production AI workflows on it. It needs predictable performance, clear cost behavior, robust indexing choices, and operational observability. Otherwise, the architecture merely moves the complexity from the diagram into the monthly bill.

OpenAI Gives Microsoft the Planet-Scale Proof Point It Wanted

No cloud database conference is complete without a customer scale story, and OpenAI supplied the one Microsoft most wanted to showcase. Jon Lee of OpenAI discussed operating systems at massive scale, with Microsoft’s conference recap emphasizing trillions of transactions, petabytes of data, and the need to move from zero usage to enormous query volumes.

The OpenAI story is valuable because it connects two ideas that are often treated separately: scale and malleability. It is not enough for a database to be large if every schema adjustment becomes a platform negotiation. It is not enough to be flexible if the system falls over when adoption explodes.

For OpenAI-like workloads, the hard problem is that success does not arrive gradually. A product feature can move from experiment to global dependency very quickly. Thousands of developers may be building against shared infrastructure, and the database must absorb both the traffic and the organizational churn.

That makes Cosmos DB’s schema-flexible, globally distributed identity a strong fit for the story Microsoft wants to tell. The company can point to OpenAI and argue that the demands of frontier AI applications are not hypothetical. They are already punishing conventional assumptions about onboarding speed, capacity planning, and data evolution.

Still, most WindowsForum readers are not OpenAI. Most enterprise teams are not processing petabytes of rapidly evolving AI application state, and most line-of-business apps do not need to scale from zero to millions of queries per second overnight. The lesson is not that every application should be designed like OpenAI’s.

The better lesson is that the pressure felt first at the extreme end of the market often drifts downward. Today’s “planet scale” requirement becomes tomorrow’s expectation that a departmental AI tool can launch globally, survive a usage spike, and let a small team iterate without a database redesign every sprint. OpenAI is the proof point; the broader market is the target.

Vercel Shows Why the Database Has to Teach the Developer

The Vercel segment put a different face on the same shift. Where OpenAI represents massive scale from the top down, Vercel represents application creation from the edge outward. The developer or creator writes less boilerplate, relies more heavily on agents, and expects infrastructure to appear on demand.

That world changes the personality of a database. It cannot simply wait for a senior database engineer to bless every decision. It has to guide the developer in real time, surface cost consequences, and nudge applications toward workable models before usage makes mistakes expensive.

Rauch’s point about wanting a system where a developer writes a query and understands its cost gets to the center of the issue. Cost cannot remain a monthly surprise discovered by finance after a product launch. In cloud-native AI applications, cost has to become part of the development feedback loop.

That is especially true for agentic applications, where loops can be hidden. A human user clicks a button once, but the application may perform multiple retrievals, tool calls, writes, cache checks, and background updates. The developer needs to know not only whether the feature works, but whether the feature’s internal behavior scales economically.
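A rough sketch shows how quickly a single click can fan out. The `CostMeter` class and every charge below are illustrative assumptions, not measured request-unit figures. The point is only that tallying per-operation charges for one user action makes a hidden loop visible during development.

```python
class CostMeter:
    """Tallies illustrative per-operation charges for one user action."""

    def __init__(self, budget: float) -> None:
        self.budget = budget
        self.charges: dict[str, float] = {}

    def record(self, operation: str, charge: float) -> None:
        self.charges[operation] = self.charges.get(operation, 0.0) + charge

    @property
    def total(self) -> float:
        return sum(self.charges.values())

    def over_budget(self) -> bool:
        return self.total > self.budget


meter = CostMeter(budget=10.0)
for _ in range(3):  # one click, but the agent runs three retrieval rounds
    meter.record("vector_query", 3.0)
    meter.record("point_read", 1.0)
meter.record("write", 5.0)
print(meter.total, meter.over_budget())
```

In this made-up example the action costs 17 units against a budget of 10, and the per-operation breakdown in `meter.charges` shows that the repeated vector queries, not the final write, are where the budget goes.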

Microsoft’s challenge is to make that feedback comprehensible. Request units, indexing policies, partitioning strategies, and consistency levels are powerful but not always intuitive. If AI coding agents are going to produce Cosmos DB-backed applications, they will need guardrails that translate database mechanics into design consequences.

The irony is that AI may make database expertise more important, not less. The expert may no longer handcraft every query or schema migration, but someone must understand whether the generated architecture is sane. In the AI era, the database professional becomes part reviewer, part platform engineer, and part economic safety officer.

Walmart Reminds Everyone That Latency Still Wins the Checkout

The Walmart story served as the necessary antidote to conference futurism. AI may be changing application development, but retail systems still live or die by latency, availability, and user trust. A cart that disappears, stalls, or fails during a regional incident is not an abstract distributed systems lesson; it is abandoned revenue.

Technical Fellow Sid Anand’s comments about keeping cart and view-cart interactions available and low latency through regional failures cut through the AI gloss. The point is not that AI makes reliability less important. It makes reliable infrastructure more heavily loaded, more widely integrated, and more visible to users.

This is Cosmos DB’s older pitch reappearing inside the newer one. Global distribution, low latency, and high availability were selling points before the current AI wave. Walmart’s presence at the conference suggests Microsoft wants customers to understand that the AI story sits on top of the same operational foundation.

That is a smart move because many AI platform pitches currently sound like they were assembled in a lab far from production traffic. Enterprises do not get to choose between innovation and reliability. They need both, and they will usually sacrifice novelty before they sacrifice checkout, authentication, support, billing, or compliance.

For Windows and Azure administrators, this is the familiar part of the story. The enterprise does not care that an application is AI-native if it breaks failover, explodes latency, or bypasses governance. The AI era may change the workload shape, but it does not lower the bar for operations.

In fact, it raises it. AI applications often sit closer to user decision-making, customer support, internal automation, and business workflows. When they fail, hallucinate, retrieve stale information, or lose context, the impact can be broader than a conventional page error.

Azure DocumentDB Is the Cost Argument Wearing an Open Ecosystem Jacket

The conference also pushed Azure DocumentDB as a complementary option to Cosmos DB. Microsoft positioned Cosmos DB for global scale, serverless elasticity, and five-nines reliability, while presenting Azure DocumentDB as a lower-cost, flexible, open-ecosystem option with MongoDB compatibility and multi-cloud portability ambitions.

That split is strategically important. Cosmos DB is powerful, but it has long carried a reputation for requiring careful design to avoid unpleasant cost outcomes. Microsoft knows that AI applications intensify that concern because retrieval, embeddings, agent memory, and rapid iteration can all multiply database activity.

By highlighting Azure DocumentDB and claiming significant cost advantages over alternatives, Microsoft is trying to cover a broader part of the document database market. Not every workload needs the full Cosmos DB value proposition. Some teams want MongoDB-style compatibility, independent scaling of compute and storage, and a more portable architecture.

There is also a competitive subtext. MongoDB remains a strong default mental model for document applications, while PostgreSQL, specialized vector databases, search platforms, and cloud-native NoSQL services all compete for AI-era workloads. Microsoft cannot win simply by saying Cosmos DB scales. It has to make a credible argument across cost, openness, developer familiarity, and integration with Azure’s AI stack.

DocumentDB gives Microsoft a second answer when a customer says Cosmos DB is too much platform for the job. That may make the Azure data portfolio more coherent, but it also creates a positioning challenge. Customers will need clear guidance on when to choose Cosmos DB, when to choose Azure DocumentDB, and when another Azure database is the better fit.

The most honest answer is workload-specific. If the application needs global distribution, aggressive elasticity, integrated multi-region availability, and Cosmos DB’s operational model, Cosmos DB remains the flagship. If the application prioritizes MongoDB compatibility, portability, and cost efficiency over the full planetary-scale feature set, Azure DocumentDB becomes easier to justify.

The Agent Memory Pattern Is Becoming Real Architecture

One of the more useful signals from the conference was the move from keynote language to implementation patterns. Farah Abdou of SmartServe reportedly described rebuilding an architecture around Cosmos DB as an “agent memory fabric,” combining vector search for semantic caching, change feed for event-driven coordination, and optimistic concurrency to prevent conflicts.

That phrase sounds like vendor poetry, but the pattern underneath is concrete. AI agents need memory, but memory is not just a transcript dumped into a table. It is a mix of recent context, durable facts, retrieved knowledge, coordination state, and safeguards against competing actions.

Semantic caching is especially compelling. If an AI system can recognize that a new request is meaningfully similar to a previous one, it may avoid redundant work, reduce model calls, and improve latency. But that only works if retrieval is fast enough and the cache is governed carefully enough not to return stale or inappropriate responses.
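
The mechanics are simple enough to sketch. The Python below is an in-memory illustration under stated assumptions, not SmartServe's implementation or a Cosmos DB API: embeddings are toy vectors supplied by the caller, similarity is plain cosine similarity, and the `SemanticCache` class, its threshold, and its time-to-live are hypothetical. The threshold addresses "inappropriate" matches and the expiry addresses "stale" ones.

```python
import math
import time


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class SemanticCache:
    """Hypothetical cache keyed by embedding similarity rather than exact text."""

    def __init__(self, threshold=0.9, ttl_seconds=300.0, clock=time.monotonic):
        self.threshold = threshold      # minimum similarity that counts as a hit
        self.ttl_seconds = ttl_seconds  # entries older than this are discarded
        self.clock = clock              # injectable so expiry can be tested
        self._entries = []              # (embedding, response, stored_at)

    def put(self, embedding, response):
        self._entries.append((list(embedding), response, self.clock()))

    def get(self, embedding):
        now = self.clock()
        # Drop expired entries first so a stale answer can never be returned.
        self._entries = [e for e in self._entries if now - e[2] <= self.ttl_seconds]
        best_score, best_response = 0.0, None
        for stored, response, _ in self._entries:
            score = cosine_similarity(embedding, stored)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None


cache = SemanticCache(threshold=0.95)
cache.put([1.0, 0.0, 0.0], "Reset it from the account page.")

hit = cache.get([0.98, 0.05, 0.0])   # nearly the same direction as the stored vector
miss = cache.get([0.0, 1.0, 0.0])    # orthogonal to the stored vector
print(hit, miss)
```

A linear scan is fine for a sketch; the reason to push this into a database with vector indexing is that the lookup has to stay fast once the cache holds far more than a handful of entries.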

The change feed piece is equally important. Agentic systems often need to react when data changes: a document arrives, a user updates a profile, a workflow advances, or another agent writes state. Treating the database as an event source can keep these systems coordinated without constantly polling or building separate synchronization machinery.
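
To show the shape of the pattern, here is a small in-memory stand-in written in Python. It is not the Cosmos DB change feed API: `ChangeLogStore` and `Consumer` are hypothetical names, and the sketch assumes every write is appended to an ordered log, which may differ from the guarantees of a real change feed. The point is the checkpoint: a consumer asks only for what changed since it last looked, instead of rescanning current state.

```python
class ChangeLogStore:
    """Hypothetical store that appends every write to an ordered change log."""

    def __init__(self):
        self._docs = {}
        self._log = []  # (sequence number, document id, snapshot of the body)

    def upsert(self, doc_id, body):
        self._docs[doc_id] = dict(body)
        self._log.append((len(self._log) + 1, doc_id, dict(body)))

    def read_changes(self, after_sequence=0):
        """Return log entries with a sequence number above the given checkpoint."""
        return [entry for entry in self._log if entry[0] > after_sequence]


class Consumer:
    """Hypothetical consumer that remembers how far through the log it has read."""

    def __init__(self, store, handler):
        self.store = store
        self.handler = handler
        self.checkpoint = 0

    def poll_once(self):
        changes = self.store.read_changes(self.checkpoint)
        for sequence, doc_id, body in changes:
            self.handler(doc_id, body)
            # Advance only after the handler returns, so a failure is retried.
            self.checkpoint = sequence
        return len(changes)


store = ChangeLogStore()
seen = []
consumer = Consumer(store, lambda doc_id, body: seen.append((doc_id, body["status"])))

store.upsert("task-1", {"status": "queued"})
store.upsert("task-1", {"status": "running"})
consumer.poll_once()

store.upsert("task-2", {"status": "queued"})
consumer.poll_once()

print(seen)
```

Because the checkpoint advances only after the handler succeeds, a crash mid-batch means some changes are delivered again, so handlers in this style need to be idempotent.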

Optimistic concurrency rounds out the production story. Multiple agents or services may try to update the same entity, and the system needs a way to detect and resolve conflicts. In ordinary applications this is already important; in AI applications, where automated actors may operate concurrently, it becomes essential.
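
A rough Python sketch of the technique follows. It uses an integer version in an in-memory store, where a database would typically use an opaque version token checked on write; `VersionedStore`, `ConflictError`, and `update_with_retry` are hypothetical names for illustration. A write succeeds only if the item's version still matches the one the writer read.

```python
class ConflictError(Exception):
    """Raised when an item changed between a writer's read and its write."""


class VersionedStore:
    """Hypothetical store that rejects writes based on a stale version."""

    def __init__(self):
        self._items = {}  # key -> (version, value)

    def read(self, key):
        # Missing items are reported as version 0 with no value.
        return self._items.get(key, (0, None))

    def write(self, key, value, expected_version):
        current_version, _ = self._items.get(key, (0, None))
        if current_version != expected_version:
            raise ConflictError(
                f"{key}: expected version {expected_version}, found {current_version}"
            )
        self._items[key] = (current_version + 1, value)
        return current_version + 1


def update_with_retry(store, key, mutate, max_attempts=3):
    """Re-read and re-apply the change whenever another writer got there first."""
    for _ in range(max_attempts):
        version, value = store.read(key)
        try:
            return store.write(key, mutate(value), version)
        except ConflictError:
            continue
    raise ConflictError(f"{key}: gave up after {max_attempts} attempts")


store = VersionedStore()
store.write("order-7", {"notes": []}, expected_version=0)

# Two agents read the same version, then both try to write.
version_a, _ = store.read("order-7")
version_b, _ = store.read("order-7")
store.write("order-7", {"notes": ["agent A"]}, version_a)

conflict_detected = False
try:
    store.write("order-7", {"notes": ["agent B"]}, version_b)
except ConflictError:
    conflict_detected = True

# Agent B retries against the latest state instead of overwriting agent A.
update_with_retry(store, "order-7", lambda v: {"notes": v["notes"] + ["agent B"]})
print(conflict_detected, store.read("order-7"))
```

The retry helper re-reads before re-applying the change, which is what keeps agent A's note from being silently lost. Whether a blind retry is the right resolution depends on the workload; some conflicts need a merge rule or a human.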

This is the kind of architecture that makes Cosmos DB interesting beyond marketing. It uses database capabilities together rather than treating the product as a JSON bucket. It also demonstrates why AI applications are pushing databases toward a combined role: store, search engine, event hub, and coordination layer.

Governance Moves Into the Prompt Path

Security and governance also appeared in the conference material, notably through Pamela Fox’s work on secure, multi-user AI systems using the Model Context Protocol, Microsoft Entra ID, Microsoft Graph, Azure Cosmos DB, Visual Studio Code, and GitHub Copilot. The details matter because enterprise AI applications cannot treat identity as an afterthought.

The Model Context Protocol has become a popular way to connect AI systems with tools and data sources, but it also creates a new attack and governance surface. If an AI agent can call tools, query data, or act on behalf of a user, then authentication and authorization must follow the user’s actual permissions rather than the developer’s optimism.

Storing per-user data in Cosmos DB and tying access to Entra ID is the sort of pattern enterprises will expect. Role-based access, least privilege, and auditability are not optional just because the interface is conversational. If anything, conversational interfaces make permission boundaries harder for users to see.

Microsoft’s advantage here is ecosystem gravity. Entra ID, Microsoft Graph, Azure services, GitHub Copilot, VS Code, and Cosmos DB can be presented as one integrated development and governance loop. That is appealing for organizations already standardized on Microsoft’s identity and productivity stack.

The caution is lock-in by convenience. The tighter the integration, the easier it is to build quickly inside Azure and the harder it may become to separate application logic from platform assumptions later. For many enterprises that tradeoff is acceptable, but it should be made consciously.

The broader point is that AI governance is becoming a data architecture issue. Where data lives, how it is partitioned, what identity is attached to it, how retrieval is filtered, and what tools can access it are all part of the application’s security posture. The prompt is not the perimeter. The data layer is.
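
In code, that means the permission check sits in the retrieval step, before anything reaches the model. The Python below is a hedged illustration only: `Principal`, `Document`, `can_read`, and `retrieve` are hypothetical, the keyword scoring stands in for real search, and none of it reflects how Entra ID or Cosmos DB actually enforce access.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Principal:
    """Hypothetical caller identity: a user id plus group memberships."""
    user_id: str
    groups: frozenset = field(default_factory=frozenset)


@dataclass(frozen=True)
class Document:
    doc_id: str
    owner_id: str
    allowed_groups: frozenset
    text: str


def can_read(principal, doc):
    """Owner access, or any overlap between the caller's groups and the document's."""
    return doc.owner_id == principal.user_id or bool(principal.groups & doc.allowed_groups)


def retrieve(principal, documents, query_terms):
    """Filter by identity first, then rank what is left by naive keyword matches."""
    visible = [d for d in documents if can_read(principal, d)]
    scored = [(sum(term in d.text.lower() for term in query_terms), d) for d in visible]
    ranked = sorted(scored, key=lambda pair: (-pair[0], pair[1].doc_id))
    return [d.doc_id for score, d in ranked if score > 0]


docs = [
    Document("hr-1", "alice", frozenset({"hr"}), "Salary bands for 2026"),
    Document("eng-1", "bob", frozenset({"engineering"}), "Deployment runbook"),
    Document("note-1", "bob", frozenset(), "Personal salary negotiation notes"),
]

alice = Principal("alice", frozenset({"hr"}))
bob = Principal("bob", frozenset({"engineering"}))

# Same query, different callers, different context handed to the model.
print(retrieve(alice, docs, ["salary"]))
print(retrieve(bob, docs, ["salary"]))
```

Filtering before ranking matters: a document the caller cannot read never becomes a candidate, so no amount of prompt manipulation can surface it.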

The Old Cosmos DB Lessons Still Apply

The most dangerous takeaway from Cosmos DB Conf would be that AI changes everything so completely that established database discipline no longer matters. In reality, the conference reinforced the opposite. AI makes the old lessons more urgent.

Cosmos DB rewards good partitioning and punishes careless access patterns. It can scale impressively, but not if every query fights the data model. It can support flexible documents, but not if teams abdicate responsibility for validation, versioning, and lifecycle management.

Cost visibility is the thread that ties these concerns together. Microsoft’s emphasis on real-time feedback and economic design is not cosmetic. Developers building AI applications need to understand how reads, writes, indexes, vector operations, caching, and regional distribution affect the bill.

This is where sysadmins and platform teams should pay attention. AI application development is often sold as a way for product teams to move faster without waiting on central infrastructure. But when those applications hit production, central teams inherit the reliability, security, observability, and cost consequences.

The answer is not to block AI adoption or force every project through a six-month architecture review. The answer is to create paved roads: approved patterns for agent memory, retrieval, identity, logging, cost controls, and deployment. Cosmos DB can be part of that road, but it cannot be the road by itself.

Microsoft’s strongest argument is that databases need to become more helpful in that process. If tools can suggest partition keys, explain query costs, flag inefficient designs, and integrate with coding agents, they can reduce the gap between prototype and production. The weakest version of that argument is “let the agent handle it.” The strongest version is “make the expert’s judgment easier to apply earlier.”

The Cosmos Conf Signal Beneath the Azure Sales Pitch

The concrete lesson from Cosmos DB Conf 2026 is that Microsoft is aligning Cosmos DB, Azure DocumentDB, identity, developer tools, and AI retrieval around a single claim: AI applications need a data tier that can change shape quickly without giving up scale, security, or cost control.

- AI applications are pushing semi-structured data, memory, prompts, tool outputs, and retrieved context into the center of application architecture.

- Microsoft is positioning semantic, vector, full-text, and hybrid search as database-native capabilities rather than peripheral services.

- OpenAI supplied the extreme-scale validation story, while Vercel supplied the serverless developer-experience argument.

- Walmart’s reliability story showed that low latency, regional resilience, and operational discipline remain non-negotiable.

- Azure DocumentDB gives Microsoft a cost- and compatibility-oriented counterweight to Cosmos DB’s global-scale flagship pitch.

- The most practical production patterns combine storage, retrieval, change events, identity, and concurrency controls rather than treating AI memory as a simple log table.

Source: Microsoft Azure Blog, “Build AI apps with Azure Cosmos DB: Key trends from Cosmos Conf 2026”