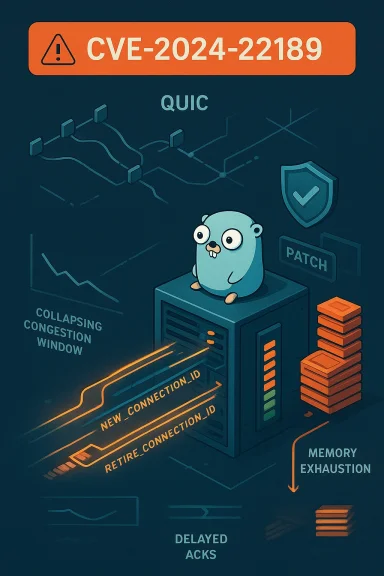

On April 4, 2024 the QUIC ecosystem faced a high‑severity availability risk when researchers disclosed CVE‑2024‑22189: a memory‑exhaustion flaw in the popular Go implementation quic‑go that lets a remote attacker force a peer to consume unbounded memory by abusing QUIC’s Connection ID management. The bug is not a cryptographic break or data theft vector — its damage is blunt and operational: sustained or persistent denial of service (DoS) by exhausting process memory and preventing normal connection management.

Source: MSRC Security Update Guide - Microsoft Security Response Center

Background / Overview

QUIC is a modern transport protocol used by HTTP/3 and other applications to replace TCP+TLS in many scenarios. A core feature of QUIC is its use of Connection IDs (CIDs) so endpoints can survive client IP/port changes and load‑balanced paths. Endpoints issue new CIDs with NEW_CONNECTION_ID frames, which can also ask the peer to retire older ones; the peer confirms each retirement with a RETIRE_CONNECTION_ID frame. That bookkeeping keeps a bounded set of active CIDs and prevents stale identifiers from accumulating. In quic‑go prior to version 0.42.0, an attacker could manipulate this process so that the RETIRE_CONNECTION_ID frames could not be transmitted, forcing the receiver to accumulate unbounded retirement state until the process ran out of memory.

This is an allocation‑without‑limits problem (CWE‑770), scored as high severity (CVSS 7.5 in published advisories). The practical outcome is availability loss: services using vulnerable quic‑go builds can be made unresponsive or crash under attack.

How the attack works — technical breakdown

1) Attack primitive: NEW_CONNECTION_ID flood

An attacker sends a large number of NEW_CONNECTION_ID frames to the target QUIC endpoint. Each NEW_CONNECTION_ID invites the peer to add an additional connection identifier to its bookkeeping and to schedule retirement of an older identifier. The receiver stores state for each retirement until it can emit the corresponding RETIRE_CONNECTION_ID frame.

2) Blocking the RETIRE_CONNECTION_IDs

Normally the peer sends RETIRE_CONNECTION_ID frames back to confirm retirements, which frees the stored retirement state. The clever part of this attack is that the attacker can prevent the receiver from sending most of those frames by manipulating the perceived network conditions: selectively acknowledging packets collapses the receiver’s congestion window, and carefully timed acknowledgements distort its RTT estimate, so outgoing frames are delayed or held. Over time the receiver’s queue of pending retirements grows because it cannot transmit the RETIRE_CONNECTION_ID frames fast enough, and the code in quic‑go did not limit how much memory that backlog could use.

3) Memory exhaustion and DoS

With the retirement backlog growing unbounded, the victim process allocates more memory to track pending retirements until it either crashes or becomes non‑functional due to high memory pressure. The consequence is a denial of service that can be either sustained (while the attacker continues) or persistent (if the process cannot recover cleanly). This is a resource exhaustion DoS rather than an authentication bypass or data exfiltration exploit.

Which code and versions are affected

- Affected component: the quic‑go library (the leading Go implementation of QUIC used in many server and client apps).

- Affected versions: all releases prior to v0.42.0, which introduced the fix.
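The version boundary above can be checked mechanically. The following is a minimal sketch, assuming plain `vMAJOR.MINOR.PATCH` version strings; the helper names `parseVersion` and `isAffected` are illustrative and not part of quic‑go. Pre‑release and build suffixes are simply ignored, so pseudo‑versions and release candidates still deserve a manual look.

```go
package main

import (
	"fmt"
	"strconv"
	"strings"
)

// parseVersion extracts major, minor and patch from strings such as
// "v0.41.0". Anything after "-" or "+" (pre-release, build metadata)
// is ignored, which is a simplification.
func parseVersion(v string) ([3]int, error) {
	var out [3]int
	v = strings.TrimPrefix(v, "v")
	if i := strings.IndexAny(v, "-+"); i >= 0 {
		v = v[:i]
	}
	parts := strings.Split(v, ".")
	if len(parts) != 3 {
		return out, fmt.Errorf("unexpected version format: %q", v)
	}
	for i, p := range parts {
		n, err := strconv.Atoi(p)
		if err != nil {
			return out, fmt.Errorf("non-numeric component %q: %w", p, err)
		}
		out[i] = n
	}
	return out, nil
}

// isAffected reports whether version is lower than v0.42.0, the release
// that the advisories name as fixed.
func isAffected(version string) (bool, error) {
	got, err := parseVersion(version)
	if err != nil {
		return false, err
	}
	fixed := [3]int{0, 42, 0}
	for i := range got {
		if got[i] != fixed[i] {
			return got[i] < fixed[i], nil
		}
	}
	return false, nil // exactly v0.42.0
}

func main() {
	for _, v := range []string{"v0.41.0", "v0.42.0", "v0.43.1"} {
		affected, err := isAffected(v)
		fmt.Println(v, "affected:", affected, "error:", err)
	}
}
```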

Risk assessment: Who should worry, and how badly?

- Developers and operators embedding quic‑go in production must assume high risk until they update. Services reachable by untrusted clients (public HTTP/3 endpoints, APIs, CDN edge services, load balancers) are particularly exposed because the attacker needs only to open QUIC connections and send crafted frames.

- The exploit requires packet‑level control and precise ACK manipulation to keep the receiver’s outgoing frames suppressed. That raises the bar compared with an off‑the‑shelf application layer exploit, but it is well within the capability of skilled attackers and automated exploit clients. In short: the attack is nontrivial but practical.

- The attack is pure availability damage — there is no direct confidentiality or integrity compromise reported for CVE‑2024‑22189. Even so, DoS can have cascading business impacts (service outages, failover storms, capacity exhaustion of upstream systems).

- There are no known reliable workarounds for vulnerable quic‑go deployments short of patching; public advisories emphasize that upgrading to v0.42.0 (or later) is the remediation. Until patches are deployed, organizations are left with operational mitigations only (isolating or quarantining QUIC‑exposed endpoints, rate‑limiting or filtering UDP/QUIC at network edges).

Mitigation and remediation guidance

Immediate actions (0–24 hours)

- Inventory: identify all services and binaries that embed quic‑go. Treat any custom Go services, edge proxies, or servers that list github.com/quic-go/quic-go in their dependencies as high priority.

- Apply network controls: restrict or rate‑limit incoming QUIC/UDP traffic at the perimeter and upstream DDoS scrubbing services where possible. These are temporary measures and do not resolve the underlying vulnerability.
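For the inventory step, compiled Go binaries usually embed their module list, which the standard library can read back. The sketch below assumes binaries built with module support; the function name `quicGoVersion` is illustrative. It also checks `github.com/lucas-clemente/quic-go`, the module path the project used before moving to the quic‑go organization, on the assumption that older builds may still record it.

```go
package main

import (
	"debug/buildinfo"
	"fmt"
	"os"
)

// Module paths to look for. The second entry is the project's earlier
// path and is included as an assumption about older builds.
var quicGoPaths = map[string]bool{
	"github.com/quic-go/quic-go":        true,
	"github.com/lucas-clemente/quic-go": true,
}

// quicGoVersion returns the quic-go version recorded in a compiled Go
// binary, or "" if the module is not listed among its dependencies.
func quicGoVersion(binaryPath string) (string, error) {
	info, err := buildinfo.ReadFile(binaryPath)
	if err != nil {
		return "", err // not a Go binary, or no embedded build info
	}
	for _, dep := range info.Deps {
		if !quicGoPaths[dep.Path] {
			continue
		}
		if dep.Replace != nil {
			// A replace directive means the recorded version may not
			// match the code that was actually compiled in.
			return dep.Version + " (replaced, verify manually)", nil
		}
		return dep.Version, nil
	}
	return "", nil
}

func main() {
	// Usage: pass one or more binary paths as arguments.
	for _, path := range os.Args[1:] {
		version, err := quicGoVersion(path)
		switch {
		case err != nil:
			fmt.Printf("%s: skipped (%v)\n", path, err)
		case version == "":
			fmt.Printf("%s: quic-go not found in build info\n", path)
		default:
			fmt.Printf("%s: quic-go %s\n", path, version)
		}
	}
}
```

The same information is available from the command line with `go version -m <binary>`; the programmatic form is mainly useful when scanning many artifacts or container layers in one pass.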

Patching (recommended)

- Upgrade quic‑go to v0.42.0 or later and rebuild/deploy dependent services. This is the authoritative fix shipped by the project.

- Test in staging: exercise typical QUIC workloads and run fault injections (controlled replays of NEW_CONNECTION_ID/RETIRE sequences) to confirm behavior and ensure the update does not regress performance.

Operational hardening

- Employ UDP/QUIC rate limiting and connection caps on edge routers and load balancers. Limit the number of simultaneous QUIC connection attempts per source IP where appropriate. These controls can raise the cost of attack but are insufficient alone.

- Monitor memory and socket state for unusual growth patterns on QUIC‑serving hosts; create alerts for sudden increases in per‑process memory usage or socket backlog. Early detection reduces blast radius.
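Where a connection cap has to live in application code rather than on an edge device, it can be sketched as a small counter keyed by source address. This is a hypothetical helper (`connLimiter` is not a quic‑go type), and it inherits the caveat above: this attack can run inside a single connection, so a per‑source cap raises the cost without closing the hole. UDP source addresses should also only be trusted after address validation.

```go
package main

import (
	"fmt"
	"net/netip"
	"sync"
)

// connLimiter caps the number of concurrent connections per key
// (for example, per source IP address).
type connLimiter[K comparable] struct {
	mu     sync.Mutex
	limit  int
	active map[K]int
}

func newConnLimiter[K comparable](limit int) *connLimiter[K] {
	return &connLimiter[K]{limit: limit, active: make(map[K]int)}
}

// acquire reports whether a new connection from src is allowed and,
// if so, counts it against the cap.
func (l *connLimiter[K]) acquire(src K) bool {
	l.mu.Lock()
	defer l.mu.Unlock()
	if l.active[src] >= l.limit {
		return false
	}
	l.active[src]++
	return true
}

// release must be called once per successful acquire when the
// connection closes.
func (l *connLimiter[K]) release(src K) {
	l.mu.Lock()
	defer l.mu.Unlock()
	if n := l.active[src]; n <= 1 {
		delete(l.active, src) // drop empty entries so the map stays small
	} else {
		l.active[src] = n - 1
	}
}

func main() {
	limiter := newConnLimiter[netip.Addr](2)
	src := netip.MustParseAddr("203.0.113.7") // documentation address
	for i := 1; i <= 3; i++ {
		fmt.Printf("connection %d allowed: %v\n", i, limiter.acquire(src))
	}
	limiter.release(src)
	fmt.Println("allowed after one release:", limiter.acquire(src))
}
```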

Long‑term design recommendations

- Implement bounded resource accounting for all protocol‑driven state (e.g., cap pending retirement entries, bound queue depth per connection). The fix for quic‑go follows this principle. Libraries and applications should never accept unbounded state driven by remote requests without local limits.

- Add fuzzing and protocol‑aware stress tests that specifically exercise corner cases of frame sequencing and congestion interactions. QUIC’s combinatorial state (frames, ACK timing, congestion control) benefits from protocol‑aware testing.
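The bounded‑accounting principle can be illustrated with a capped queue of pending retirements. This is a sketch of the general idea, not the code that shipped in quic‑go v0.42.0; the type `retirementQueue` and the limit value are assumptions. RFC 9000 (section 5.1.2) suggests tracking at least twice the `active_connection_id_limit` and permits treating an overflow as a connection error, which is the behavior modeled here.

```go
package main

import (
	"errors"
	"fmt"
)

// errTooManyRetirements signals that the peer has forced more pending
// retirements than the local limit allows. A caller would typically
// close the connection in response.
var errTooManyRetirements = errors.New("too many pending connection ID retirements")

// retirementQueue tracks sequence numbers of connection IDs that still
// need a RETIRE_CONNECTION_ID frame, with a hard upper bound on size.
type retirementQueue struct {
	limit   int
	pending []uint64
}

func newRetirementQueue(limit int) *retirementQueue {
	return &retirementQueue{limit: limit}
}

// add queues a retirement, or returns an error once the bound is reached
// instead of growing without limit.
func (q *retirementQueue) add(seq uint64) error {
	if len(q.pending) >= q.limit {
		return errTooManyRetirements
	}
	q.pending = append(q.pending, seq)
	return nil
}

// pop removes and returns up to n queued sequence numbers, modeling the
// frames that the congestion controller allows to be sent right now.
func (q *retirementQueue) pop(n int) []uint64 {
	if n < 0 {
		n = 0
	}
	if n > len(q.pending) {
		n = len(q.pending)
	}
	out := append([]uint64(nil), q.pending[:n]...)
	q.pending = q.pending[n:]
	return out
}

func main() {
	// Hypothetical limit: twice an active_connection_id_limit of 4.
	q := newRetirementQueue(8)
	for seq := uint64(0); seq < 10; seq++ {
		if err := q.add(seq); err != nil {
			fmt.Printf("seq %d rejected: %v\n", seq, err)
		}
	}
	fmt.Println("sendable now:", q.pop(3))
}
```

Failing the connection on overflow trades one misbehaving peer's session for the health of the whole process, which is usually the right trade for state that a remote party controls.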

Why this class of bug keeps reappearing in QUIC implementations

QUIC moves a lot of responsibility into the transport layer in user space: congestion control, packetization, frame management, connection migration and connection ID handling. That flexibility gives performance gains but also pushes complex state machines into application code rather than kernel TCP stacks. When a protocol allows remote actors to request the allocation of local state (new CIDs, streams, etc.), robust defenses require conservative local limits and defensive timeouts.

The quic‑go issue is an archetypal example: retirements were accepted without sufficient local throttling or any guarantee that the corresponding frames could be transmitted, which created a window where an attacker could fill up local resources while preventing the normal clearing mechanism from running. Similar problems were independently found in other QUIC libraries (for example Cloudflare’s quiche, tracked as CVE‑2024‑1410), showing that this is a systemic design and implementation challenge rather than a one‑off mistake.
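A toy model makes the imbalance concrete. It is a deliberately simplified sketch with made‑up numbers: each round the attacker triggers a fixed number of retirements, the victim manages to send a fixed number of RETIRE_CONNECTION_ID frames, and an optional cap stands in for a local limit. The function `simulateBacklog` and its parameters are illustrative only.

```go
package main

import "fmt"

// simulateBacklog models a pending-retirement queue over several rounds.
//
//	arrivals: retirements the attacker triggers per round
//	drained:  RETIRE_CONNECTION_ID frames the victim can send per round
//	limit:    maximum queue size; 0 means unbounded
//
// It returns the final backlog and whether the limit was exceeded.
func simulateBacklog(rounds, arrivals, drained, limit int) (backlog int, limitHit bool) {
	for i := 0; i < rounds; i++ {
		backlog += arrivals
		if limit > 0 && backlog > limit {
			// A bounded implementation would close the connection here.
			return limit, true
		}
		backlog -= drained
		if backlog < 0 {
			backlog = 0
		}
	}
	return backlog, false
}

func main() {
	healthy, _ := simulateBacklog(1000, 50, 50, 0)
	attacked, _ := simulateBacklog(1000, 50, 1, 0)
	_, hit := simulateBacklog(1000, 50, 1, 8)
	fmt.Println("healthy backlog:", healthy)
	fmt.Println("attacked backlog, unbounded:", attacked)
	fmt.Println("limit exceeded with a cap of 8:", hit)
}
```

With sending throttled to one frame per round, the unbounded queue grows by 49 entries every round, while the capped variant trips on the second parameter set almost immediately.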

Threat modeling and detection

What to watch for in logs and telemetry

- Unusual, persistent increases in memory usage on processes that serve QUIC traffic. Set hard alerts so operators can react before a crash.

- High rates of NEW_CONNECTION_ID frames or atypical sequences where the number of added CIDs far exceeds normal workload patterns. If available, collect QUIC frame statistics and produce baselines.

- Congestion window collapse artifacts: many repeated small packet ACKs from a client or highly irregular ACK timing could indicate manipulative acknowledgement behavior used by an attacker to suppress outgoing frames. These signals are subtle and require packet‑level visibility.
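For Go services, the memory signal can be collected in‑process. The sketch below assumes periodic sampling of heap usage and flags a run of strictly increasing samples; the helper names, window size, and threshold are illustrative and would need tuning against a real baseline. `runtime.ReadMemStats` briefly stops the world, so it is best called at a low frequency.

```go
package main

import (
	"fmt"
	"runtime"
)

// sustainedGrowth reports whether the last `window` samples are strictly
// increasing and grew by at least minIncrease bytes in total.
func sustainedGrowth(samples []uint64, window int, minIncrease uint64) bool {
	if window < 2 || len(samples) < window {
		return false
	}
	recent := samples[len(samples)-window:]
	for i := 1; i < len(recent); i++ {
		if recent[i] <= recent[i-1] {
			return false
		}
	}
	return recent[len(recent)-1]-recent[0] >= minIncrease
}

// heapInUse returns the bytes currently in use by the Go heap of this
// process.
func heapInUse() uint64 {
	var m runtime.MemStats
	runtime.ReadMemStats(&m)
	return m.HeapInuse
}

func main() {
	// Synthetic samples standing in for periodic heapInUse() readings.
	samples := []uint64{100 << 20, 140 << 20, 190 << 20, 260 << 20}
	if sustainedGrowth(samples, 4, 128<<20) {
		fmt.Println("alert: heap grew steadily across the last 4 samples")
	}
	fmt.Println("current heap in use (bytes):", heapInUse())
}
```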

Network‑level detection

- Monitor for high volumes of short‑lived QUIC handshakes from single or distributed sources. Use connection‑tracking to spot clusters of connections that create many NEW_CONNECTION_IDs.
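If frame‑level events are available (for example from qlog output or a packet decoder, both assumed here rather than shown), a per‑connection counter is enough to flag connections that create unusually many NEW_CONNECTION_IDs. The type `cidFrameMonitor`, the string connection key, and the thresholds are hypothetical.

```go
package main

import "fmt"

// windowCount holds the frame count for one connection in the current
// time window.
type windowCount struct {
	start int64 // window start, Unix seconds
	n     int
}

// cidFrameMonitor flags connections that receive more NEW_CONNECTION_ID
// frames within one window than the configured baseline allows.
type cidFrameMonitor struct {
	windowSeconds int64
	maxPerWindow  int
	perConn       map[string]*windowCount
}

func newCIDFrameMonitor(windowSeconds int64, maxPerWindow int) *cidFrameMonitor {
	return &cidFrameMonitor{
		windowSeconds: windowSeconds,
		maxPerWindow:  maxPerWindow,
		perConn:       make(map[string]*windowCount),
	}
}

// observe records one NEW_CONNECTION_ID frame seen on conn at time now
// (Unix seconds) and reports whether the connection exceeds the baseline.
func (m *cidFrameMonitor) observe(conn string, now int64) bool {
	wc, ok := m.perConn[conn]
	if !ok || now-wc.start >= m.windowSeconds {
		wc = &windowCount{start: now} // start a fresh window
		m.perConn[conn] = wc
	}
	wc.n++
	return wc.n > m.maxPerWindow
}

// forget drops state for a closed connection so the monitor's own
// memory stays bounded.
func (m *cidFrameMonitor) forget(conn string) {
	delete(m.perConn, conn)
}

func main() {
	m := newCIDFrameMonitor(10, 3)
	for i := 1; i <= 5; i++ {
		if m.observe("conn-a", 100) {
			fmt.Printf("frame %d on conn-a exceeds the baseline\n", i)
		}
	}
	m.forget("conn-a")
}
```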

Responsible disclosure and timeline (condensed)

The vulnerability was publicly documented in early April 2024 with a corresponding security advisory and a fix in quic‑go v0.42.0. The quic‑go maintainers and the original researcher(s) published detailed writeups and a presentation to the QUIC working group, describing the attack, exploitation vector, and the implemented mitigation approach in the library. Public advisories urged immediate updates because no reliable mitigations short of patching were available.

Strengths of the response and ongoing risks

Notable strengths

- The quic‑go project addressed the issue quickly with a clear fix and version bump, and maintainers published explanatory notes for operators. That rapid vendor response reduced the window of exposure for well‑maintained deployments.

- The public disclosures included technical writeups that help defenders understand detection signals and implement defensive measures beyond simple patching. Those educational artifacts are valuable for hardening other QUIC implementations.

Remaining risks and caveats

- The attack pattern requires fine‑grained control of ACK behavior and timing to collapse a victim’s congestion window; while this increases exploitation complexity, motivated attackers (or automated exploit toolkits) can achieve this with crafted clients and sufficient bandwidth. Treat the vulnerability as practical rather than purely theoretical.

- The underlying class of resource‑exhaustion in Connection ID management is not unique to quic‑go. Other implementations have had similar issues, so organizations should assume potential exposure across their QUIC stack and validate every implementation in use.

Practical checklist for administrators and engineers

- Inventory: locate every service, container image, and binary that depends on github.com/quic-go/quic-go.

- Patch: upgrade dependencies to quic‑go v0.42.0 or later and rebuild. Verify the updated version is in deployed artifacts.

- Network controls: temporarily rate‑limit QUIC/UDP traffic and implement source IP connection caps. These are stopgap mitigations.

- Monitor: add memory and frame‑level telemetry for QUIC services and set actionable alerts.

- Test: run protocol fuzzers and scripted NEW_CONNECTION_ID/RETIRE sequences in test environments to verify the fix and exercise edge behaviors.

Final assessment

CVE‑2024‑22189 is a high‑impact, availability‑focused vulnerability in quic‑go that demonstrates why protocol state‑management must be conservative and bounded. The vulnerability is fixed in quic‑go v0.42.0, and public advisories consistently recommend immediate upgrades. While exploitation requires protocol‑level sophistication (ACK manipulation and congestion control abuse), the attack is practical and can cause sustained service outages; therefore operators should prioritize patching, add temporary network controls, and harden detection. The broader lesson for the QUIC ecosystem is clear: when endpoints accept remote requests that create local state, defensive limits and careful transmission guarantees are non‑optional.

Quick reference (at a glance)

- Vulnerability: CVE‑2024‑22189 — memory exhaustion via Connection ID mechanism in quic‑go.

- Impact: Denial of Service (process memory exhaustion).

- Affected: quic‑go versions prior to v0.42.0.

- Remediation: Upgrade to quic‑go v0.42.0+ and apply network mitigations until patches are deployed.
