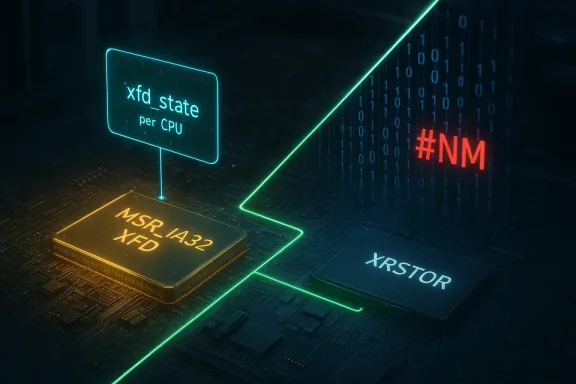

The Linux kernel bug tracked as CVE-2024-35801 lets a cached per‑CPU value (xfd_state) fall out of sync with the model‑specific register MSR_IA32_XFD, so that ordinary FP/SIMD context‑management operations can trigger a device‑not‑available exception (#NM) during XRSTOR and crash the kernel. The result is effectively a local denial of service, which vendors have since patched.

Source: MSRC Security Update Guide - Microsoft Security Response Center

Background / Overview

CVE-2024-35801 is a medium‑to‑high impact Linux kernel vulnerability rooted in the x86 floating‑point / XSAVE state path. The kernel introduced a per‑CPU cache named xfd_state to reduce writes to the MSR_IA32_XFD register. Under normal operation that cache mirrors the MSR; however, on CPU hotplug the hardware MSR is reset to a default (init_fpstate.xfd) while the kernel’s per‑CPU cache was not reset. That divergence can cause the kernel to fail to update the real MSR when required, and a subsequent XRSTOR instruction executed by kernel code can raise a #NM (device not available) exception inside the kernel, producing a crash or kernel panic.

This is not a remote arbitrary‑code exploit: it requires local code execution and specific CPU state transitions. The practical result is an availability impact (a kernel crash) that a local attacker, or a buggy driver or module, can trigger under certain system configurations. Vendors and distributions pushed kernel updates that change the state management code so the cached xfd_state is always kept in sync with MSR_IA32_XFD; the fix introduces a new setter routine that updates the model‑specific register and the cached value together.

Why this matters: the technical essentials

- Component affected: Linux kernel x86 FPU / XSAVE/XRSTOR handling and MSR state management.

- Root cause: A per‑CPU cached copy of the XFD MSR (xfd_state) was not always synchronized when the MSR was implicitly reset (for example during CPU hotplug), creating a stale cache that could prevent required writes to the MSR later.

- Trigger: When the kernel attempts to restore floating‑point extended state (XRSTOR) but the underlying MSR and the kernel cache disagree, XRSTOR can fault with a #NM exception in kernel space.

- Impact: Kernel crash / denial of service (availability). Not a direct confidentiality or integrity breach in the widely understood sense, but a serious availability risk for affected systems.

- Attack vector: Local (requires code running on the machine). Some severity scorings rate the flaw as exploitable by low‑privilege local users, but practical exploitation scenarios depend on kernel configuration and workload.

- Fix: Kernel code changes that introduce a unified xfd_set_state() to write both MSR and the per‑CPU cache together and ensure all MSR writes use this unified path.

How the defect works (detailed, but accessible)

XSAVE/XRSTOR and XFD: a very short primer

The XSAVE/XRSTOR family of instructions lets the processor and operating system save and restore extended processor state (AVX, MPX, and newer ISA extensions). Certain features are controlled by MSRs (model‑specific registers) that enable or configure the handling of those state components. On modern x86 CPUs one such register, MSR_IA32_XFD (extended feature disable), holds a bitmask of state components that are currently disabled, and the kernel must ensure the MSR reflects the intended state before executing XRSTOR or XSAVE sequences.

To avoid redundant MSR writes, the kernel maintainers added a cached per‑CPU value (xfd_state). The idea was performance: only write the MSR when the cached value implies a real change. But this cache becomes a liability when the hardware value can change for reasons the kernel didn’t account for (CPU hotplug being the concrete example). If the hardware MSR is reset but the kernel cache isn’t, subsequent kernel code may conclude that no MSR write is needed and skip it, leaving the MSR holding the wrong value. When XRSTOR later runs against that stale MSR value, the CPU can raise a #NM in kernel space.
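To make the idea of a per‑component control mask concrete, here is a small Python sketch that decodes an XFD‑style bitmask into component names. It is an illustration, not kernel code: the helper name `decode_xfd` is hypothetical, the table lists only a few XSAVE state components, and the choice of bit 18 (AMX tile data) as the example is an assumption about which component a given system manages through XFD.

```python
# Toy decoder for an XFD-style bitmask (illustrative only, not kernel code).
# Bit positions follow the XSAVE state-component numbering; only a few
# components are listed, and unknown bits are reported generically.
XSTATE_COMPONENTS = {
    0: "x87",
    1: "SSE",
    2: "AVX",
    5: "AVX-512 opmask",
    6: "AVX-512 ZMM_Hi256",
    7: "AVX-512 Hi16_ZMM",
    17: "AMX TILECFG",
    18: "AMX TILEDATA",
}


def decode_xfd(mask: int) -> list:
    """Return the names of the components whose bit is set in `mask`."""
    names = []
    bit = 0
    while mask >> bit:
        if (mask >> bit) & 1:
            names.append(XSTATE_COMPONENTS.get(bit, f"component {bit}"))
        bit += 1
    return names


# Assumed example value: only the AMX tile-data component is disabled.
print(decode_xfd(1 << 18))
```

A set bit means "disabled" in this model, which is why a stale mask matters: the kernel may believe a component is enabled while the hardware register still marks it as off.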

Sequence that leads to a crash (simplified)

- Kernel runs with xfd_state = A and MSR_IA32_XFD = A.

- CPU is hot‑unplugged or hot‑plugged — hardware resets MSR_IA32_XFD to the init value B while kernel xfd_state remains A.

- Later, kernel code calls xfd_update_state(), sees xfd_state == A and concludes no MSR write is necessary.

- XRSTOR runs while the MSR holds B — CPU raises #NM inside kernel context.

- Kernel does not gracefully handle the #NM in that code path, resulting in an OOPS/crash.
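The sequence above can be replayed with a toy Python model. This is a sketch under stated assumptions, not the kernel's implementation: `ToyCpu`, `hotplug_reset_buggy` and `replay` are hypothetical names, value A is taken to be 0 (nothing disabled), and value B is assumed to have bit 18 set.

```python
# Toy model of the stale-cache sequence; not kernel code.
XFD_INIT = 1 << 18  # assumed reset value "B": one component disabled


class ToyCpu:
    def __init__(self):
        self.msr_xfd = XFD_INIT     # stands in for MSR_IA32_XFD
        self.cached_xfd = XFD_INIT  # stands in for the per-CPU xfd_state

    def update_state(self, wanted):
        """Write the MSR only when the cache says the value would change."""
        if self.cached_xfd != wanted:
            self.msr_xfd = wanted
            self.cached_xfd = wanted

    def hotplug_reset_buggy(self):
        """The MSR goes back to its init value; the cache is left alone."""
        self.msr_xfd = XFD_INIT

    def xrstor(self, components):
        """Fail if a component being restored is still disabled in the MSR."""
        if self.msr_xfd & components:
            raise RuntimeError("#NM: component disabled by XFD")


def replay():
    cpu = ToyCpu()
    task_xfd = 0                 # value "A" for the running task
    cpu.update_state(task_xfd)   # step 1: cache == MSR == A
    cpu.hotplug_reset_buggy()    # step 2: MSR == B, cache still A
    cpu.update_state(task_xfd)   # step 3: write skipped, cache looks current
    try:
        cpu.xrstor(1 << 18)      # step 4: restore hits the stale MSR value
    except RuntimeError as exc:
        return str(exc)
    return "ok"


print(replay())
```

In the model, the only thing that goes wrong is step 2: one path changes the register without touching the cache, and every later "is a write needed?" check is then based on stale data.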

Vendor and distribution response

Major Linux distributions and vendors have acknowledged the issue and produced updates. The kernel project fixed the upstream code by introducing a dedicated setter routine that writes the MSR and the per‑CPU cached variable together; downstream maintainers have backported or rebased that fix into their stable kernels and published advisories or package updates.

Key operational notes for administrators:

- If you run commodity distributions (Ubuntu, Debian, RHEL, SUSE, Amazon Linux, Oracle Linux, etc.), expect a kernel package update that contains the x86/fpu fix. Install kernel updates from your vendor’s package repositories rather than trying to patch by hand.

- Cloud images and ISVs that ship custom kernels (for appliance, virtualization host, or embedded use) must ensure they carry the upstream fix or an equivalent synchronization change.

- The issue is most relevant for systems with CPU hotplug activity, unusual XSAVE usage patterns, or workloads that deliberately manipulate low‑level FP/SIMD state (hypervisors, KVM guests, VFIO passthrough, high‑performance scientific stacks). But it can also be triggered in less exotic environments by third‑party kernel modules.

Risk analysis: who should worry and why

High‑priority systems

- Virtualization hosts and cloud hypervisors: A kernel crash here affects many tenants; local guests or a misbehaving driver inside a VM could cause host instability if nested or paravirtual interfaces interact with XSAVE/MSR paths.

- Multi‑tenant shared machines: Any machine where unprivileged or low‑privileged users share kernel resources (CI runners, build hosts, container hosts with privileged capabilities) raises the risk that one tenant can crash the host.

- Systems that perform CPU hotplug frequently: Systems that programmatically add/remove CPUs (some cloud autoscale host shapes or dynamic NUMA reconfiguration) can create the hardware MSR-reset condition that exposes the bug.

Medium‑priority systems

- Endpoint desktops and laptops: Less likely to see an exploit in the wild because the attack requires local code execution. Still, a kernel panic can be impactful for single-user machines.

- Embedded devices and appliances: If they accept untrusted code or receive third‑party driver updates, they may be exposed. Many appliances also use custom kernels and may need vendor firmware updates.

Low‑priority systems

- Single‑purpose appliances with closed stacks and no local user access: If local code execution is impossible, the risk is effectively zero.

Detection and indicators

There is no standard remote indicator for CVE‑2024‑35801 beyond kernel crash logs and OOPS messages. Administrators should look for the following in system logs and crash traces:

- Kernel OOPS or panic messages that reference XRSTOR, XFD, or "device not available" (#NM) exceptions in the kernel stack.

- Call traces involving the x86/fpu code path, XSAVE/XRSTOR, or explicit references to MSR_IA32_XFD in debug output.

- Reproducible crashes after CPU hotplug operations, or after loading/unloading modules that touch FPU/XSAVE paths.

- Unexplained kernel crashes that coincide with specific workloads using AVX/AVX‑512/XSAVE features.
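A first pass over kernel logs for these indicators can be scripted. The Python sketch below is illustrative: the function name `suspicious_lines` is hypothetical, and the patterns are assumptions derived from the terms listed above, since the exact wording of kernel messages varies between versions. Expect false positives and tune the patterns for your environment.

```python
import re

# Illustrative patterns only; exact kernel message text varies by version.
PATTERNS = [
    re.compile(r"#NM"),
    re.compile(r"device not available", re.IGNORECASE),
    re.compile(r"\bxrstor", re.IGNORECASE),
    re.compile(r"MSR_IA32_XFD|\bxfd_", re.IGNORECASE),
]


def suspicious_lines(lines):
    """Return (line_number, text) for every line matching any pattern."""
    hits = []
    for number, line in enumerate(lines, start=1):
        if any(pattern.search(line) for pattern in PATTERNS):
            hits.append((number, line.rstrip("\n")))
    return hits


# Made-up sample input, for demonstration only.
sample = [
    "usb 1-1: new high-speed USB device number 2\n",
    "unexpected #NM exception: 0000 [#1]\n",
    "eth0: link up\n",
]
for number, text in suspicious_lines(sample):
    print(number, text)
```

In practice the input would be saved kernel log output, for example the text produced by `dmesg` or `journalctl -k`, passed in as a list of lines.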

Mitigation and remediation guidance

Immediate steps for sysadmins and operators:

- Patch promptly: Install the vendor kernel update that contains the x86/fpu synchronization fix. This is the correct and permanent remediation.

- Staged rollout: Test updated kernels in a staging environment before rolling them out broadly to production, because kernel upgrades require reboots and can affect driver and stack compatibility.

- If you cannot patch immediately: Reduce exposure by limiting untrusted local code execution:

  - Restrict user access and remove unnecessary privileges from untrusted users.

  - Disable or restrict kernel module loading where possible (module signing and secure boot help reduce the chance of untrusted modules).

- For virtualization hosts: Consider moving critical VMs to patched hosts before performing hotplug operations. Avoid live CPU hotplug in production until hosts are updated.

- Monitoring: Increase log monitoring for XRSTOR/#NM traces and consider temporary alerting rules for kernel panics while you complete patching.
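While patching is pending, a quick host check can show whether two of the exposure factors above apply: offline CPUs (a hint that hotplug is in use) and whether module loading has been locked. The sketch below reads the standard Linux paths `/sys/devices/system/cpu/offline` and `/proc/sys/kernel/modules_disabled`; the function names are hypothetical, and the interpretation of the results is an assumption that should be adapted to local policy.

```python
from pathlib import Path


def parse_cpu_list(text):
    """Expand a sysfs CPU list such as '0-3,8' into a sorted list of ints."""
    cpus = set()
    for part in text.strip().split(","):
        if not part:
            continue
        if "-" in part:
            first, last = part.split("-")
            cpus.update(range(int(first), int(last) + 1))
        else:
            cpus.add(int(part))
    return sorted(cpus)


def read_or_none(path):
    """Return the stripped file content, or None if it cannot be read."""
    try:
        return Path(path).read_text().strip()
    except OSError:
        return None


def exposure_report():
    """Summarize offline CPUs and the module-loading lock on this host."""
    offline = read_or_none("/sys/devices/system/cpu/offline")
    modules_disabled = read_or_none("/proc/sys/kernel/modules_disabled")
    return {
        "offline_cpus": parse_cpu_list(offline) if offline else [],
        "module_loading_locked": modules_disabled == "1",
    }


print(exposure_report())
```

An empty `offline_cpus` list does not prove that hotplug never happens; it only describes the moment the check ran.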

Patch details and verification (what maintainers did)

Kernel maintainers introduced a dedicated helper function, xfd_set_state(), that performs the MSR write and the per‑CPU cache update in a single code path. They then replaced the direct writes to MSR_IA32_XFD with calls to that setter so the cache and the hardware MSR stay in sync.

Operators should verify their deployed kernel includes equivalent changes. You can confirm the presence of the fix by checking kernel changelogs supplied with vendor packages or the stable kernel commit notes that reference the x86/fpu changes. Distributors that rebase or backport patches will include the functional fix inside their kernel update; confirm package changelogs rather than relying on version numbers alone.

When verifying, look for these behavioral outcomes:

- CPU hotplug no longer causes the observed divergence and reproducible XRSTOR/#NM kernel panics cease.

- XSAVE/XRSTOR operations run without kernel exceptions in workloads that previously triggered the problem.
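Checking a package changelog for the fix can also be scripted. The sketch below searches changelog text for the CVE identifier and for the setter name mentioned in this article; the function name `changelog_mentions_fix` is hypothetical, and a missing match does not prove the fix is absent, because vendors word their changelog entries differently.

```python
import re

# Markers taken from this article; vendors may describe the fix differently.
FIX_MARKERS = [
    re.compile(r"CVE-2024-35801"),
    re.compile(r"xfd_set_state"),
]


def changelog_mentions_fix(changelog_text):
    """Return the marker patterns that appear in the changelog text."""
    return [m.pattern for m in FIX_MARKERS if m.search(changelog_text)]


# Made-up changelog excerpt, for demonstration only.
example = "* kernel update\n- x86/fpu: keep cached XFD value in sync (CVE-2024-35801)\n"
print(changelog_mentions_fix(example))
```

The input could be, for instance, the output of `rpm -q --changelog kernel` on RPM‑based systems or the changelog file shipped under `/usr/share/doc/` for the kernel package on Debian‑based ones.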

Exploitability, public exploits, and attacker model

As of the kernel fixes and vendor advisories, there are no widely‑reported, reliable public exploit chains that turn CVE‑2024‑35801 into a remote attack. The practical attacker model is:

- Local: Attackers require the ability to run code on the target machine.

- Low complexity: The condition is not a complex timing race; the problem is a logic/state mismatch and can often be triggered by specific sequences of CPU hotplug or kernel operations.

- No privilege escalation implied: The vulnerability itself does not grant higher privileges; it allows a local actor to crash the kernel and deny service.

Any public exploit code would likely be limited to a proof of concept and should be handled with care; reliable reproduction requires detailed knowledge of local kernel builds, CPU microarchitecture, and module interactions. Organizations should still assume that a motivated attacker with local access could weaponize the condition on unpatched systems.

Strengths and limitations of the fix

Strengths

- The upstream fix addresses the root cause (state divergence) rather than applying superficial workarounds.

- It centralizes MSR writes so all code paths use a single canonical setter, reducing future regression risk.

- Backports into stable kernel branches allow vendors to distribute updates quickly to diverse deployments.
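The value of a single canonical setter can be shown with a toy Python model. This sketches the pattern only and is not the kernel's code: `ToyCpuFixed` and its methods are hypothetical names, and the reset value with bit 18 set is an assumption chosen for the example.

```python
# Toy model of the "one setter for MSR and cache" pattern; not kernel code.
class ToyCpuFixed:
    def __init__(self, init_xfd):
        self.init_xfd = init_xfd
        self.msr_xfd = init_xfd     # stands in for MSR_IA32_XFD
        self.cached_xfd = init_xfd  # stands in for the per-CPU xfd_state

    def set_state(self, value):
        """Single write path: the MSR and the cache always change together."""
        self.msr_xfd = value
        self.cached_xfd = value

    def update_state(self, wanted):
        """Skip redundant writes, but route real writes through set_state."""
        if self.cached_xfd != wanted:
            self.set_state(wanted)

    def hotplug_reset(self):
        """The reset path also goes through the setter, so nothing goes stale."""
        self.set_state(self.init_xfd)

    def in_sync(self):
        return self.msr_xfd == self.cached_xfd


cpu = ToyCpuFixed(init_xfd=1 << 18)  # assumed reset value
cpu.update_state(0)                  # a task with nothing disabled runs
cpu.hotplug_reset()                  # CPU goes through a hotplug cycle
cpu.update_state(0)                  # the write is no longer skipped
print(cpu.in_sync(), cpu.msr_xfd)
```

The write‑avoidance optimization is kept. What changes is that no path can modify the register without also updating the value the optimization relies on.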

Limitations and residual risks

- The fix relies on correct usage: if downstream or third‑party modules still write MSR_IA32_XFD directly (bypassing the setter), a divergence could still occur. That risk is low for well‑maintained systems but possible in custom kernels or out‑of‑tree modules.

- Systems that for operational reasons cannot be rebooted quickly will remain exposed until the kernel is updated and restarted.

- Because this is a local availability issue, organizations that permit untrusted local execution or have weak tenant isolation remain at higher risk.

Practical checklist for admins (quick actionable items)

- Ensure your inventory and patching pipeline identifies all Linux kernel instances across servers, cloud images, appliances, and containers that run a full kernel (not just userland).

- Apply vendor kernel updates that include the x86/fpu fix as soon as a tested maintenance window permits.

- For hypervisors and hosts that schedule live CPU hotplug or intensive XSAVE workload transitions, prioritize updates.

- Harden local user access policies and restrict the ability to compile or load unsigned kernel modules.

- Add temporary log alerts for XRSTOR/#NM traces while you complete patching.

- For custom kernel maintainers: audit out‑of‑tree modules and ensure they do not write MSR_IA32_XFD directly.
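The audit of out‑of‑tree modules in the last item can start with a simple source scan. The Python sketch below flags lines where a `wrmsr`‑family call names MSR_IA32_XFD as its first argument. It is a heuristic with hypothetical function names: writes made through a variable, a macro alias, or another helper will not be caught, so treat an empty result as a starting point rather than proof.

```python
import re
from pathlib import Path

# Matches wrmsr-family calls whose first argument is MSR_IA32_XFD.
DIRECT_XFD_WRITE = re.compile(r"\bwrmsr\w*\s*\(\s*MSR_IA32_XFD\b")


def find_direct_writes(source_text):
    """Return (line_number, line) pairs that write MSR_IA32_XFD directly."""
    return [
        (number, line.strip())
        for number, line in enumerate(source_text.splitlines(), start=1)
        if DIRECT_XFD_WRITE.search(line)
    ]


def scan_tree(root):
    """Scan *.c and *.h files under `root`; unreadable files are skipped."""
    findings = {}
    for path in Path(root).rglob("*.[ch]"):
        try:
            hits = find_direct_writes(path.read_text(errors="replace"))
        except OSError:
            continue
        if hits:
            findings[str(path)] = hits
    return findings


# Made-up module source, for demonstration only.
sample = "static void f(void)\n{\n\twrmsrl(MSR_IA32_XFD, 0);\n\txfd_set_state(0);\n}\n"
print(find_direct_writes(sample))
```

Calling `scan_tree()` on the directory that holds the module sources returns a mapping from file path to the flagged lines.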

Broader implications and lessons learned

CVE‑2024‑35801 is a textbook example of how small optimizations (here, a cached per‑CPU copy of an MSR to avoid unnecessary writes) can introduce correctness bugs when hardware state changes (CPU hotplug, for instance) are not fully accounted for. The incident reinforces several enduring lessons for kernel developers and system engineers:

- Cached replicas of hardware state must be refreshed on all events that can mutate the hardware independently of the cache.

- Centralized accessors and encapsulation (single setter functions that update both cache and hardware) reduce the chance of subtle, distributed inconsistencies.

- Low‑level state management code is unforgiving: logic errors often result in panics rather than graceful failure, so tests and invariants around state synchronization are essential.

- Vendors and distributors play a crucial role in pushing timely fixes; automated, auditable patch pipelines reduce exposure for multi‑tenant and critical infrastructure.

Conclusion

CVE‑2024‑35801 is not the most dramatic vulnerability you’ll read about this year (it does not leak secrets or open a remote shell), but it exemplifies how subtle state management bugs at the kernel/MSR level can produce high‑impact availability failures. The upstream kernel fix is targeted and correct: unify MSR writes and the per‑CPU cache so they cannot diverge, and ensure all code paths use the new setter. Operators should apply vendor kernel updates without undue delay, harden systems against untrusted local execution, and monitor for XRSTOR/#NM kernel traces as a temporary defensive measure.

In short: patch your kernels, prioritize hosts that serve many tenants or provide virtualization services, and treat this as a serious availability bug that deserves immediate operational attention.
