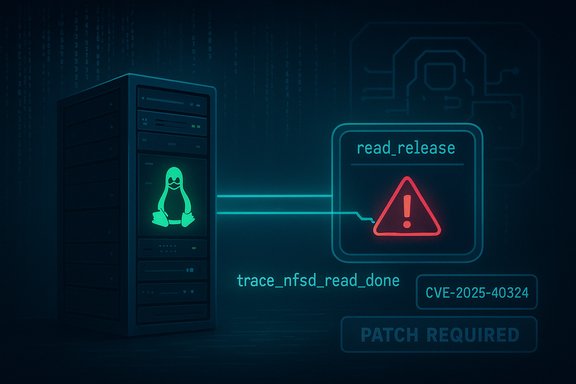

A harmless-looking tracepoint in the Linux NFS server (nfsd) could crash a system: CVE-2025-40324 patches a fault in nfsd4_read_release that caused the trace_nfsd_read_done tracepoint to crash during the pynfs read.testNoFh test when kernel tracing was enabled, turning a test scenario into an availability problem for real-world NFS servers.

Source: MSRC Security Update Guide - Microsoft Security Response Center

Background / Overview

The Linux kernel's NFS server (nfsd) is a complex, long-lived subsystem that tracks client state, read/write streams, and pNFS layout interactions. A recent upstream disclosure, assigned CVE-2025-40324, describes a crash in the nfsd read-release path when tracing/tracepoints are enabled. The failure manifests in the tracepoint handler for read completion (trace_nfsd_read_done) during the pynfs test suite's read.testNoFh case, leading to an oops/crash in impacted kernels. This is fundamentally an availability vulnerability: the observable effect is a kernel oops or crash, not data disclosure or immediate privilege escalation. Upstream maintainers issued a surgical patch that corrects the handling in nfsd4_read_release, and the fix has been merged into stable kernel branches and referenced by public vulnerability trackers and advisories.

What happened: technical anatomy

The failing tracepoint and the test case

- The failing code path is in the NFSv4 read-release sequence inside the nfsd server.

- When kernel tracing is enabled, the tracepoint trace_nfsd_read_done is invoked at read completion.

- Under the pynfs test case read.testNoFh (a pynfs NFSv4 test, commonly run against the kernel server, that sends a READ with no current filehandle), this tracepoint invocation can crash the kernel because the trace callback dereferences read state that the release path is tearing down or that the failed READ never set up.

Why tracing matters

Tracepoints are intended to be lightweight instrumentation hooks, but they execute callbacks in kernel context and must respect the lifetimes of the objects they observe. If the release path frees or alters structures while the trace callback dereferences them, or the callback dereferences state that was never initialized, a NULL-pointer dereference or use-after-free can occur. The crash described in CVE-2025-40324 is a manifestation of that mismatch between the release sequence and the trace callback invocation.

Because tracing is often enabled for debugging or performance telemetry, or as part of some observability stacks, this kind of fault can surface unexpectedly on systems where observability controls are co-located with production workloads, such as debug-enabled cloud images or development/test clusters.
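Why the bug needs tracing to be switched on follows from how tracepoints behave: an unused tracepoint is close to a no-op, and the probe that actually reads the traced objects only runs once a consumer enables the event. The toy Python model below is a simplification, not kernel code; `Tracepoint`, `FileHandle`, `read_done_probe`, and `release` are hypothetical stand-ins. It shows a single call site that is harmless while the event is off and faults once it is on, when the traced object was never set up.

```python
from typing import Callable, List, Optional


class Tracepoint:
    """Toy tracepoint: the call site is cheap, probes run only when enabled."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.probes: List[Callable[..., None]] = []

    def enable(self, probe: Callable[..., None]) -> None:
        # Attaching a consumer (ftrace, perf, BPF, ...) arms the tracepoint.
        self.probes.append(probe)

    def __call__(self, *args) -> None:
        # With no consumers attached, the arguments are passed but never inspected.
        for probe in self.probes:
            probe(*args)


class FileHandle:
    def __init__(self, path: str) -> None:
        self.path = path


records: List[str] = []


def read_done_probe(fhp: Optional[FileHandle], offset: int, length: int) -> None:
    # Like a real probe, this dereferences its argument without a NULL check.
    records.append(f"read_done {fhp.path} {offset}+{length}")


trace_read_done = Tracepoint("read_done")


def release(fhp: Optional[FileHandle]) -> None:
    # The release path always reaches the tracepoint, even if the READ
    # failed before a file handle was attached (fhp is None).
    trace_read_done(fhp, 0, 4096)
```

Calling `release(None)` with no probe attached returns quietly; after `trace_read_done.enable(read_done_probe)` the same call raises `AttributeError`, the user-space analogue of the oops.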

The fix — what the patch changes

Upstream maintainers produced a minimal, targeted patch to nfsd4_read_release and the related tracepoint handling so the trace callback no longer observes already-freed or inconsistent state during the release flow.

Key characteristics of the fix:

- The change is surgical and limited to the read-release sequence and tracepoint invocation ordering, reducing regression risk.

- The patch ensures the trace callback observes a stable state or defers invocation until the read-related state is safe to access, preventing the immediate crash during the pynfs read.testNoFh sequence.

- The fix was merged into the kernel stable branches and referenced in public CVE trackers with links to the stable commits that implement the change.
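The upstream diff is not reproduced here, so the following is only a sketch of the ordering change the list describes, in user-space terms. It assumes the READ's state (file handle, open-file reference) is only valid once the operation has acquired a file, which a READ with no current filehandle never does; `ReadState`, `read_release_before`, `read_release_after`, and the other names are hypothetical. One plausible shape of such a fix is to emit the trace event inside the same check that guards releasing the file.

```python
from dataclasses import dataclass
from typing import List, Optional

TRACING_ENABLED = True  # pretend a consumer has enabled the read-done event
events: List[str] = []  # emitted trace records
puts: List[str] = []    # file references that were released


@dataclass
class FileHandle:
    path: str


@dataclass
class ReadState:
    # Hypothetical per-READ state; fh and nf stay None when the READ
    # fails early, e.g. because there is no current filehandle.
    fh: Optional[FileHandle] = None
    nf: Optional[str] = None  # stand-in for the open-file reference
    offset: int = 0
    length: int = 0


def trace_read_done(st: ReadState) -> None:
    if TRACING_ENABLED:
        # Dereferences state that only a successful READ initialises.
        events.append(f"read_done {st.fh.path} {st.offset}+{st.length}")


def file_put(nf: str) -> None:
    puts.append(nf)


def read_release_before(st: ReadState) -> None:
    """Unguarded ordering: the trace fires even for a READ that never got a file."""
    if st.nf is not None:
        file_put(st.nf)
    trace_read_done(st)


def read_release_after(st: ReadState) -> None:
    """Guarded ordering: trace and release only state the READ actually set up."""
    if st.nf is not None:
        trace_read_done(st)
        file_put(st.nf)
```

With the guarded ordering, a READ that failed early simply produces no read-done event, which is the "observe a stable state" property the fix aims for; whether the real patch is structured exactly this way should be confirmed against the stable commit.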

Affected range and practical exposure

At disclosure, vulnerability databases and distribution trackers mapped the issue to kernel trees where nfsd and the relevant pNFS/tracing code existed prior to the stable fix. Operators need to determine exposure by checking:

- Whether the running kernel includes nfsd (CONFIG_NFSD, NFSv4 support).

- Whether tracing/ftrace or other tracepoint consumers are enabled on hosts that run nfsd.

- Whether the host acts as an NFS server that can receive the specific read sequences used in the pynfs test or similar client behavior.

Quick ways to answer those questions:

- Check kernel config for nfsd: zcat /proc/config.gz | grep -i NFSD, or inspect /boot/config-$(uname -r).

- Check if the nfs server is running or nfsd modules are loaded: systemctl status nfs-server; lsmod | grep nfsd.

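- Inspect whether tracing is enabled: check for ftrace, perf, or SystemTap sessions and active tracepoint subscriptions (for ftrace, the nfsd events appear under events/nfsd/ in tracefs, usually mounted at /sys/kernel/tracing). Active tracing increases the immediate risk surface.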
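These checks can be rolled into a small script for inventory runs. This is a sketch under several assumptions: it runs as root, tracefs is mounted at /sys/kernel/tracing or /sys/kernel/debug/tracing, and the read-completion event is exposed as events/nfsd/nfsd_read_done. A built-in nfsd (CONFIG_NFSD=y) will not show up in /proc/modules, and perf or BPF consumers may not be reflected in the tracefs enable file, so a negative result is not proof of safety.

```python
"""Rough exposure check: is nfsd present, and is the nfsd_read_done event enabled?"""
import gzip
import os
import re
from pathlib import Path
from typing import Dict, Optional

# Assumed tracefs mount points (newer kernels use /sys/kernel/tracing).
TRACEFS_ROOTS = (Path("/sys/kernel/tracing"), Path("/sys/kernel/debug/tracing"))
READ_DONE_ENABLE = Path("events/nfsd/nfsd_read_done/enable")


def nfsd_options(config_text: str) -> Dict[str, str]:
    """Return CONFIG_NFSD* options that are assigned a value in a kernel config."""
    opts = {}
    for line in config_text.splitlines():
        # "# CONFIG_NFSD_xxx is not set" lines start with '#' and are skipped.
        m = re.match(r"(CONFIG_NFSD\w*)=(\S+)", line.strip())
        if m:
            opts[m.group(1)] = m.group(2)
    return opts


def nfsd_module_loaded(proc_modules: str) -> bool:
    """True if 'nfsd' is listed as a module name in /proc/modules text."""
    return any(line.split(" ", 1)[0] == "nfsd" for line in proc_modules.splitlines())


def event_enabled(enable_text: str) -> bool:
    """tracefs enable files read '1' when on, '0' when off (a '*' may be appended)."""
    return enable_text.strip().startswith("1")


def read_text(path: Path) -> Optional[str]:
    try:
        return path.read_text()
    except OSError:
        return None


def load_kernel_config() -> Optional[str]:
    try:
        with gzip.open("/proc/config.gz", "rt") as fh:
            return fh.read()
    except OSError:
        return read_text(Path(f"/boot/config-{os.uname().release}"))


if __name__ == "__main__":
    config = load_kernel_config() or ""
    print("nfsd config:", nfsd_options(config) or "not found (config unavailable?)")
    modules = read_text(Path("/proc/modules")) or ""
    print("nfsd module loaded:", nfsd_module_loaded(modules))
    for root in TRACEFS_ROOTS:
        state = read_text(root / READ_DONE_ENABLE)
        if state is not None:
            verdict = "ENABLED" if event_enabled(state) else "off"
            print(f"{root / READ_DONE_ENABLE}: {verdict}")
            break
    else:
        print("nfsd_read_done event not visible (no tracefs, no access, or no nfsd)")
```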

Detection and telemetry — what to look for

The vulnerability produces kernel-level failure modes that are best detected through kernel logs and crash artifacts. Primary indicators:

- Kernel oops/panic traces in dmesg or journalctl -k that reference nfsd, nfsd4_read_release, or trace_nfsd_read_done symbols.

- Repeated or reproducible crashes when NFS clients perform reads that match the pynfs test patterns.

- Core dumps or vmcore images captured by kdump that include stack traces through the read completion/release code paths.

Recommended detection steps:

- Add SIEM/EDR rules that flag repeated kernel OOPS events with nfsd stack traces. Public writeups show representative oops traces for similar nfsd list-management crashes and advise searching for function names such as nfsd4_free_ol_stateid, trace_nfsd_read_done, and other nfsd symbols.

- Preserve crash artifacts: enable kdump/vmcore if you need forensic analysis; kernel oops output is essential for mapping a crash to CVE-2025-40324 during incident triage.
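For triage, a short filter can pull oops reports that mention the nfsd symbols above out of the kernel log. This is a heuristic sketch: it assumes reports start with a `BUG:`, `Oops:`, `general protection fault`, or `kernel BUG at` line and end with the usual `---[ end trace` marker, and the symbol list is taken from the names in this section rather than from a verified crash signature for this CVE.

```python
"""Pull kernel oops reports that mention nfsd symbols out of kernel log text."""
import re
import subprocess
from typing import Iterable, List

# Symbol names taken from this section; extend as needed.
NFSD_SYMBOLS = ("nfsd4_read_release", "trace_nfsd_read_done", "nfsd4_free_ol_stateid")
# Lines that typically open a kernel oops/BUG report, and the closing marker.
REPORT_START = re.compile(r"BUG: |Oops: |general protection fault|kernel BUG at")
REPORT_END = re.compile(r"---\[ end trace")


def nfsd_reports(lines: Iterable[str]) -> List[List[str]]:
    """Group log lines into oops reports and keep those naming an nfsd symbol."""
    reports: List[List[str]] = []
    current = None
    for line in lines:
        if current is None:
            # Outside a report: only a start marker opens a new one.
            if REPORT_START.search(line):
                current = [line]
            continue
        current.append(line)
        if REPORT_END.search(line):
            reports.append(current)
            current = None
    if current is not None:
        reports.append(current)  # truncated report (log rotated or crash mid-write)
    return [r for r in reports if any(sym in text for text in r for sym in NFSD_SYMBOLS)]


if __name__ == "__main__":
    # journalctl -k shows kernel messages for the current boot.
    log = subprocess.run(["journalctl", "-k", "--no-pager"],
                         capture_output=True, text=True, check=False).stdout
    for report in nfsd_reports(log.splitlines()):
        print("\n".join(report))
        print("-" * 60)
```

`journalctl -k` only covers the current boot; if the crash panicked the host, look at the previous boot with `journalctl -k -b -1` (this needs a persistent journal) or at the kdump vmcore.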

Remediation — patching and mitigation guidance

The definitive remediation is to install vendor-supplied kernel packages that include the upstream stable commit(s) fixing nfsd4_read_release. Do not attempt to “hot-fix” kernel code in production without vendor guidance unless you maintain in-house kernels and have appropriate test coverage.

Recommended remediation checklist:

- Inventory

- Identify hosts that run nfsd: use the kernel config and running modules checks above.

- Flag multi-tenant file servers, storage appliances, and cloud images that would be high-priority for patching.

- Confirm vendor status

- Check your distribution’s security tracker and kernel package changelog for the CVE mapping and fixed package versions (Debian, Ubuntu, Red Hat, SUSE, etc.). Public trackers and upstream listings link to the stable commits.

- Patch and reboot

- Install the vendor kernel that lists the fix; schedule reboots as required by your maintenance policy.

- Stage updates: roll out to a test ring first, validate NFS functionality, and monitor for regressions.

- For appliances / embedded devices

- Contact the vendor for a backported image or patched firmware. Vendor kernels often lag upstream merges and require vendor-provided fixes.

- Interim mitigations (until patched)

- Disable or limit tracing on NFS servers until patched. Because the reproducible crash depends on tracepoint invocation, turning off ftrace/tracepoint consumers reduces the immediate trigger surface.

- Restrict which clients can perform pNFS/read operations; implement network-level access controls so untrusted clients cannot exercise problematic sequences.

- Isolate NFS services behind trusted networks or VPNs for the short term.

- Ingest vendor VEX/CSAF attestation feeds and automate triage — Microsoft has adopted product-scoped attestations for similar kernel CVEs, which accelerate automation when available. However, absence of an attestation does not imply safety; each artifact must be verified.
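Two parts of this checklist are easy to script once the vendor kernel is installed: confirming that the package changelog mentions the CVE, and confirming that the host has actually rebooted into the newest installed kernel. The sketch below assumes a `/boot/vmlinuz-<release>` layout (common on Debian, Ubuntu, and RHEL-family systems) and that you obtain the changelog text yourself, for example with `rpm -q --changelog` or `apt changelog` for your kernel package (package names vary by distribution). The natural-sort comparison is a heuristic that can misorder release-candidate or unusual vendor version strings.

```python
import os
import re
from pathlib import Path
from typing import Iterable, List, Tuple, Union

CVE = "CVE-2025-40324"


def changelog_mentions(changelog: str, cve: str = CVE) -> bool:
    """True if the package changelog text references the CVE identifier."""
    return cve.upper() in changelog.upper()


def version_key(release: str) -> Tuple[Tuple[int, Union[int, str]], ...]:
    """Natural-sort key, so '6.1.0-30-amd64' sorts after '6.1.0-28-amd64'."""
    parts = re.findall(r"\d+|\D+", release)
    # Tag numbers with 0 and text with 1 so ints and strs are never compared.
    return tuple((0, int(p)) if p.isdigit() else (1, p) for p in parts)


def installed_releases(boot: Path = Path("/boot")) -> List[str]:
    """Kernel releases that have a /boot/vmlinuz-<release> image."""
    return [p.name[len("vmlinuz-"):] for p in boot.glob("vmlinuz-*")]


def reboot_pending(running: str, installed: Iterable[str]) -> bool:
    """True if a kernel newer than the running one is installed."""
    newest = max(installed, key=version_key, default=running)
    return version_key(newest) > version_key(running)


if __name__ == "__main__":
    running = os.uname().release
    print("running kernel:", running)
    print("newer kernel installed, reboot pending:",
          reboot_pending(running, installed_releases()))
```

A changelog mention suggests that the package carries the fix; only the reboot check tells you whether the running kernel does.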

Exploitability and risk profile

- Attack vector: local or client-driven. The practical trigger is an NFS client performing particular read sequences while kernel tracing is enabled; it is not a remote, unauthenticated RCE in the classical sense.

- Privileges: low in many configurations — an unprivileged client that can perform NFS reads may be able to trigger the sequence, especially on multi-tenant or public export systems.

- Impact: high for availability — kernel oops/panic requiring reboot or causing service interruption.

- Exploit likelihood: low in mass remote-exploitation terms because the conditions require tracing and specific client sequences, but non-negligible for environments where those conditions exist (test clusters, observability-enabled servers, or multi-tenant hosts).

Operational checklist — prioritized actions

- Immediate (0–48 hours)

- Identify any hosts that run nfsd and also have tracing enabled, using the kernel config and lsmod/systemctl checks.

- If tracing is not required, disable ftrace/tracepoint consumers on those hosts.

- Prioritize multi-tenant and publicly reachable NFS exports for expedited patching.

- Short term (48 hours–7 days)

- Apply vendor-supplied patched kernel packages and reboot hosts in a staged fashion.

- Validate NFS correctness using test workloads; ensure no new OOPS traces appear.

- For appliances, contact vendors for backports or image updates if vendor kernels are used.

- Medium term (1–4 weeks)

- Integrate vendor VEX/CSAF attestations into SBOM and patch orchestration to automate triage for future kernel CVEs. Microsoft and other cloud vendors publish machine-readable attestations that can speed triage for attested product families, but those attestations are product-scoped and must be complemented by artifact-level verification.

- Rebuild and republish custom images built from vulnerable bases with patched kernels baked in.

- Ongoing

- Harden observability policies: treat full-system tracing as a privileged operation and avoid enabling kernel tracepoints on production servers unless required.

- Maintain an inventory of kernel configurations across images and VMs; differences in CONFIG_* options determine actual exposure.
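For the immediate step of disabling tracepoint consumers, ftrace events can be switched off through tracefs by writing 0 to an `enable` file, either for the whole nfsd subsystem or only for nfsd_read_done. This sketch assumes root privileges, a tracefs mounted at one of the two usual paths, and the events/nfsd/nfsd_read_done layout; it does not touch perf, BPF, or SystemTap consumers, which have to be stopped with their own tooling.

```python
from pathlib import Path
from typing import List, Optional, Sequence

# Assumed tracefs mount points; adjust for your hosts.
TRACEFS_CANDIDATES = (Path("/sys/kernel/tracing"), Path("/sys/kernel/debug/tracing"))


def enable_files(root: Path, whole_subsystem: bool = True) -> List[Path]:
    """tracefs 'enable' files that control nfsd events under a tracefs root."""
    events = root / "events" / "nfsd"
    if whole_subsystem:
        return [events / "enable"]  # toggles every nfsd event at once
    return [events / "nfsd_read_done" / "enable"]  # only the read-done event


def find_tracefs(candidates: Sequence[Path] = TRACEFS_CANDIDATES) -> Optional[Path]:
    """First candidate that looks like a mounted tracefs (has an 'events' dir)."""
    for root in candidates:
        if (root / "events").is_dir():
            return root
    return None


def disable_nfsd_events(root: Path, whole_subsystem: bool = True) -> List[Path]:
    """Write '0' to each nfsd enable file that exists; return the files written."""
    written = []
    for path in enable_files(root, whole_subsystem):
        if path.exists():
            path.write_text("0\n")
            written.append(path)
    return written


if __name__ == "__main__":
    root = find_tracefs()
    if root is None:
        raise SystemExit("tracefs not found; nothing to disable here")
    for path in disable_nfsd_events(root):
        print("disabled via", path)
```

The change does not persist across reboots, and any tracing tool that re-enables nfsd events will undo it, so it complements rather than replaces the observability policy changes in this checklist.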

Critical analysis — strengths of the response and remaining risks

Strengths

- The upstream fix is small and targeted, which makes it easy for distribution maintainers to backport into stable kernels and for administrators to accept vendor kernel updates with minimal functional risk. Public trackers refer to the stable commits and list the fix reliably, enabling straightforward mapping from CVE to fixed packages.

- The classification of the impact as availability-focused is accurate and helps triage prioritization: systems that do not run nfsd or do not enable tracing can be de-prioritized.

Remaining risks

- Vendor and embedded kernel lag: the long tail of vendor-supplied, OEM, or forked-kernel images may remain vulnerable until the vendor backports the fix or issues new images. Appliance vendors and custom kernels require vendor coordination.

- Observability in production: modern cloud and platform observability stacks increasingly enable tracing by default in development or testing images. If similar tracing is accidentally enabled in production, the vulnerability surface widens.

- Misinterpretation of vendor attestations: product-scoped attestations (for example, Microsoft’s early Azure Linux attestations in other kernel CVEs) are operationally helpful but should not be read as an assertion that other artifacts are safe — absence of an attestation is not proof of safety. Operators must verify images individually.

- There were no authoritative public reports of in-the-wild exploitation for CVE-2025-40324 at disclosure, and public trackers showed no weaponized proof of concept at that time; treat any later claim of active exploitation as requiring verification against reproducible evidence and kernel logs.

Practical Q&A (concise operational takeaways)

- Should a file server operator panic? No — but prioritize patching. The issue is availability-focused and requires tracing plus a specific read sequence; remediation is to install vendor kernel updates and, if necessary, disable tracing until patched.

- Can the bug be exploited remotely? Not in the typical remote, unauthenticated sense. The vector is client-driven or local and depends on tracepoint exposure. Multi-tenant hosts and public exports are higher risk.

- Is it safe to keep traces enabled in production? Observability is valuable, but full-system tracing increases attack surface for trace-linked races. Best practice is to restrict kernel tracing to trusted debug windows and to use sampling telemetry rather than broad tracepoints on production servers.

Conclusion

CVE-2025-40324 is a textbook example of how kernel instrumentation and object-lifetime bugs can convert a unit-test failure into a production availability issue. The vulnerability, a crash in nfsd4_read_release triggered via the trace_nfsd_read_done tracepoint during the pynfs read.testNoFh sequence, is addressed by a narrow upstream patch that prevents the trace callback from observing unstable release state. Operators should treat this as an availability-first CVE: identify NFS servers and whether tracing is enabled, apply vendor kernel updates promptly, and restrict tracing or client access as short-term mitigations. Public trackers and the upstream stable commits document the fix, but vendor backports and large image inventories remain the practical operational challenge; diligence, testing, and a prioritized patch rollout are the correct path forward.