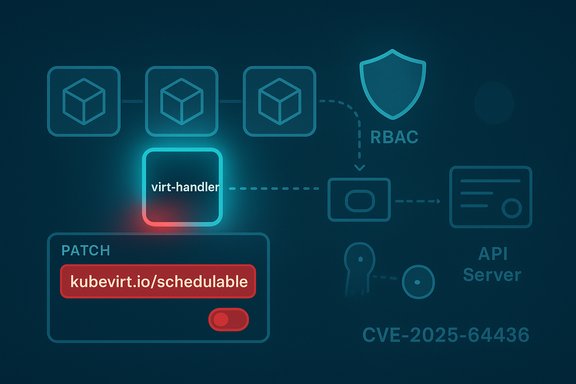

KubeVirt maintainers disclosed a privilege-management flaw, tracked as CVE-2025-64436, where excessive permissions granted to the virt-handler service account could be abused to force Virtual Machine Instance (VMI) migrations or otherwise concentrate VM workloads on attacker-controlled nodes — a defect fixed in KubeVirt 1.7.0 but one that demands immediate attention from Kubernetes operators running VM workloads.

Source: MSRC Security Update Guide - Microsoft Security Response Center

Background

KubeVirt is the de facto open-source extension for running full virtual machines inside Kubernetes clusters, exposing VirtualMachine and VirtualMachineInstance (VMI) APIs that integrate VMs with Kubernetes scheduling and lifecycle primitives. The project’s architecture includes a node agent (virt-handler), cluster-level components (virt-controller and virt-api), and an operator that reconciles KubeVirt CRs and RBAC objects. The virt-handler daemon runs on each node and performs host-level tasks such as marking its node schedulable for VMI workloads and interacting with local virtualization tooling. The newly disclosed CVE stems from the project’s RBAC and default permission model: certain ClusterRole/Role bindings and reconciliation behavior gave the virt-handler service account broader permissions than necessary, allowing it to patch Node objects and update arbitrary VMI resources across the cluster. Those excess permissions are the root cause of the issue described in the advisory.

What CVE-2025-64436 actually is

The short summary

- In affected KubeVirt releases (listed below), the virt-handler service account had permissions to patch Node resources and update VMI objects across the cluster.

- If a virt-handler instance, or the node it runs on, is compromised, an attacker could manipulate Node labels and VMI fields to steer VM scheduling decisions, concentrating VM placement on attacker-chosen nodes or triggering unintended re-creation or migration of workloads.

The mechanics (how it can be abused)

The advisory describes two complementary abuse paths:

- Patch node labels such as the KubeVirt-managed kubevirt.io/schedulable label to mark other nodes unschedulable for VMI workloads. Repeated patches can make the cluster converge to the attacker’s node as the only node marked schedulable for VM placement. When an operator restarts or re-creates a VMI, Kubernetes scheduling may place that VMI on the attacker-controlled node.

- Update arbitrary VMI objects cluster-wide. Because the virt-handler service account had rights to update VMI resources, a compromised handler could manipulate the VMI’s metadata (for example, nodeName labels or status) to cause termination or re-creation flows that result in migration to a chosen node.
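The label-based path is easiest to see in a toy model. The sketch below is not KubeVirt or Kubernetes code: it assumes, as the advisory's description implies, that VMI placement filters on `kubevirt.io/schedulable=true`, and it uses hypothetical node names. It shows how flipping that label on every other node leaves the attacker's node as the only placement candidate.

```python
from typing import Dict, List

SCHEDULABLE_LABEL = "kubevirt.io/schedulable"


def schedulable_nodes(nodes: Dict[str, Dict[str, str]]) -> List[str]:
    """Nodes whose labels would pass a kubevirt.io/schedulable=true node selector."""
    return sorted(name for name, labels in nodes.items()
                  if labels.get(SCHEDULABLE_LABEL) == "true")


def patch_label(nodes: Dict[str, Dict[str, str]], node: str, key: str, value: str) -> None:
    """Simulate a label PATCH on a Node object (in memory only, no API calls)."""
    nodes[node][key] = value


# Hypothetical three-node cluster; every node starts out schedulable for VMIs.
cluster = {
    "node-a": {SCHEDULABLE_LABEL: "true"},
    "node-b": {SCHEDULABLE_LABEL: "true"},
    "node-c": {SCHEDULABLE_LABEL: "true"},  # assume node-c is attacker-controlled
}
print(schedulable_nodes(cluster))  # ['node-a', 'node-b', 'node-c']

# An identity with cluster-wide Node patch rights flips the label everywhere else.
for name in cluster:
    if name != "node-c":
        patch_label(cluster, name, SCHEDULABLE_LABEL, "false")

print(schedulable_nodes(cluster))  # ['node-c']
```

The model omits any component that would re-assert the label on its own node, which is why the advisory describes the attack as relying on repeated patches rather than a single write.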

Affected versions and severity

- Affected KubeVirt versions: reported advisories list releases up to 1.5.3 on the 1.5 line and up to 1.6.1 on the 1.6 line; other listings mark versions up to 1.5.0 as impacted, depending on downstream packaging. Confirm the exact package mapping for your distribution.

- Patched in: KubeVirt 1.7.0 (the upstream advisory marks 1.7.0 as the release that contains the fix).

- Public severity: NVD and GitHub advisory (GHSA) metadata rate the issue Moderate, with a CVSS v4.0 base score of 6.9, describing a network-exploitable, low-complexity vector once the preconditions are met.

- Exploitation requires control of a virt-handler instance or the ability to act with the virt-handler service account token. In practice, an attacker must first compromise the node or pod where virt-handler runs (local compromise), or obtain the service-account token through another vulnerability or misconfiguration. Because virt-handler is a long-lived daemon with high privileges, however, such a compromise has high impact.

- As of disclosure, public tracking data showed no widely available, weaponized proof-of-concept and no evidence of exploitation at scale; community trackers showed modest EPSS/KEV signals. Nonetheless, because the attack relies on low-complexity label patches and legitimate API calls, it remains an urgent operational concern.

Why this matters to Kubernetes VM operators

KubeVirt intentionally integrates VMs with Kubernetes scheduling and node labeling. That integration is powerful but means that mistakes in RBAC scoping or default ClusterRole bindings can convert a single host compromise into a cross-node placement control problem.

This vulnerability illustrates a broader operational lesson: components that mediate scheduling decisions or that write Node objects become high-value control points. Mis-scoped service accounts in such components amplify the blast radius of a local compromise. Similar patterns have appeared across virtualization and hypervisor advisories in recent years: small privileges or host-level write access can have outsized downstream effects on availability and tenant isolation.

Immediate mitigation and remediation (what to do now)

- Patch first: upgrade to the fixed KubeVirt release (1.7.0) as soon as practical.

  - The upstream advisory and maintainers marked 1.7.0 as containing the resolution. Operators should test the release in staging and validate VMI lifecycle, live migration behavior, and node-heartbeat logic before global rollout.

- If you cannot upgrade immediately, apply compensating controls:

  - Reduce network exposure of KubeVirt management interfaces and the Kubernetes API server.

  - Limit who can access the kubevirt namespace and the virt-handler pods. Use network policies to restrict which pods or management hosts can reach the virt-handler pods.

  - Audit and rotate the virt-handler service account tokens. Treat any long-lived token material as suspect if you cannot guarantee pod or node integrity.

  - Temporarily harden RBAC bindings: inspect ClusterRoleBindings for kubevirt roles and remove unnecessary cluster-wide privileges, replacing them with finer-grained RoleBindings where operationally feasible (note: KubeVirt’s operator may reconcile RBAC, so coordinate carefully).

  - Enable or tighten the mechanism that limits virt-handler’s update scope. The advisory notes that a NodeRestriction-like mechanism exists but is gated behind a feature and may not be on by default; enabling that protection, or moving to per-node service accounts for virt-handler instances, reduces abuse potential.

- Detection and triage. Look for the following signals immediately:

  - Suspicious PATCH calls to Node API objects originating from virt-handler pods or nodes that should not be modifying other nodes’ labels (especially changes to kubevirt.io/schedulable). The advisory shows this as an explicit attack vector.

  - Unexpected updates to VMI objects (changes in nodeName, status transitions to Succeeded caused by label manipulation, or repeated VMI restarts that correlate with label changes).

  - Retrieve and examine Kubernetes audit logs for API requests authenticated by the virt-handler service account token. Configure alerts for cross-node node-label patches performed by virt-handler identities.

  - If you detect suspicious actions or evidence of token theft, isolate the affected node(s) and perform forensic triage (capture the pod filesystem, container image hashes, and node-level logs) before rotating tokens and re-creating compromised components.

- Operational checklist for safe patch rollout:

  - Inventory: enumerate clusters running KubeVirt and list KubeVirt version(s) and the nodes hosting virt-handler pods.

  - Test: upgrade a staging cluster to 1.7.0; validate migration, live-migration, device hotplug, and VMI lifecycle flows.

  - Patch: schedule maintenance windows and upgrade clusters methodically (control-plane → operators → virt-controller → virt-handler).

  - Verify: confirm the absence of suspicious node label PATCH operations post-upgrade and validate audit logs for expected behavior.
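For the RBAC review among the compensating controls, a small script can flag ClusterRole rules that grant Node patch/update or VMI patch/update rights. This is a sketch that reads the JSON produced by `kubectl get clusterroles -o json`; it honors the `*` wildcard, reports rules even when they are narrowed by `resourceNames` (review those by hand), and cannot tell which roles the KubeVirt operator will reconcile back. Role names in your output depend on the installation.

```python
import json
from typing import Dict, Iterable, List, Optional, Set, Tuple

# (apiGroup, resource) -> verbs considered sensitive in the context of this CVE.
SENSITIVE: Dict[Tuple[str, str], Set[str]] = {
    ("", "nodes"): {"patch", "update"},
    ("kubevirt.io", "virtualmachineinstances"): {"patch", "update"},
}


def _matches(values: Optional[Iterable[str]], wanted: str) -> bool:
    """An RBAC list matches a value literally or through the '*' wildcard."""
    items = list(values or [])
    return wanted in items or "*" in items


def risky_grants(role_list: dict) -> List[Tuple[str, str, str]]:
    """Return (role name, resource, verb) triples that grant sensitive verbs."""
    findings = []
    for role in role_list.get("items", []):
        name = role.get("metadata", {}).get("name", "<unnamed>")
        for rule in role.get("rules") or []:  # aggregated roles may have null rules
            for (group, resource), verbs in SENSITIVE.items():
                if not (_matches(rule.get("apiGroups"), group)
                        and _matches(rule.get("resources"), resource)):
                    continue
                for verb in sorted(verbs):
                    if _matches(rule.get("verbs"), verb):
                        findings.append((name, resource, verb))
    return findings


def report(path: str) -> None:
    """Print findings for a file saved from `kubectl get clusterroles -o json`."""
    with open(path) as fh:
        for name, resource, verb in risky_grants(json.load(fh)):
            print(f"{name}: {verb} {resource}")
```

Usage: save the roles with `kubectl get clusterroles -o json > clusterroles.json`, then call `report("clusterroles.json")`. Broad admin roles will also appear in the output; the entries worth acting on are the ones bound to the virt-handler service account.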

Configuration hardening: concrete recommendations

- Apply least privilege to KubeVirt roles:

  - Replace broad ClusterRole bindings with targeted Roles/RoleBindings where possible.

  - Use KubeVirt’s documented default ClusterRoles as a baseline and explicitly reduce create/update rights for non-admin users. The KubeVirt documentation and hardening notes describe the default RBAC model and the recommended migrate role to grant migration rights only to selected principals.

- Consider per-node virt-handler service accounts:

  - Embedding node identity in the service account username or leveraging node-specific identities allows the API server to tie requests back to the originating node. The advisory discusses per-node accounts as an operational alternative to a broad cluster-scoped virt-handler identity, though it increases operational complexity (rotation, reconciliation).

- Enable or backport the node-restriction style checks:

  - Similar to Kubernetes’ NodeRestriction admission controls, ensure that the KubeVirt mechanism that limits which nodes a virt-handler may act upon is enabled where available in your KubeVirt/Kubernetes version matrix. The advisory notes that the feature is gated by configuration and may not be on by default.

- Pod and node hardening:

  - Run virt-handler with minimal host privileges and restrict access to host namespaces unless required. Limit exec/attach capabilities and enforce strong container security policies (seccomp, SELinux/AppArmor, read-only root filesystem).

  - Use network policies to ensure only operator-approved hosts can reach virt-handler endpoints.

  - Keep host OS and container runtime patched; virt-handler compromise is often a downstream result of node-level exploitation.
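The per-node identity and node-restriction ideas above reduce to one decision rule: a handler may only modify its own Node, and only kubevirt.io-prefixed labels on it. The function below sketches that rule in plain Python. It is not KubeVirt's admission policy, and the per-node username scheme (`system:serviceaccount:kubevirt:virt-handler-<node>`) is a hypothetical convention chosen only to show why node identity matters: with a single shared service account, the first check has nothing to compare against.

```python
from typing import Dict, Optional, Set, Tuple

# Hypothetical per-node naming convention, for illustration only.
HANDLER_PREFIX = "system:serviceaccount:kubevirt:virt-handler-"


def requesting_node(username: str) -> Optional[str]:
    """Extract the node name from a per-node handler username, if it matches."""
    if username.startswith(HANDLER_PREFIX) and len(username) > len(HANDLER_PREFIX):
        return username[len(HANDLER_PREFIX):]
    return None


def is_kubevirt_label(key: str) -> bool:
    """True for label keys whose prefix is kubevirt.io or a subdomain of it."""
    prefix, sep, _ = key.partition("/")
    return bool(sep) and (prefix == "kubevirt.io" or prefix.endswith(".kubevirt.io"))


def changed_keys(old: Dict[str, str], new: Dict[str, str]) -> Set[str]:
    """Label keys that were added, removed, or modified."""
    return {k for k in old.keys() | new.keys() if old.get(k) != new.get(k)}


def admit_node_update(username: str, target_node: str,
                      old_labels: Dict[str, str],
                      new_labels: Dict[str, str]) -> Tuple[bool, str]:
    """Decide whether a Node label update from a handler identity should pass."""
    node = requesting_node(username)
    if node is None:
        return True, "not a per-node handler identity; other policies apply"
    if node != target_node:  # blocks the cross-node label-flipping path
        return False, f"handler on {node} may not modify node {target_node}"
    foreign = sorted(k for k in changed_keys(old_labels, new_labels)
                     if not is_kubevirt_label(k))
    if foreign:  # keeps a handler from rewriting unrelated labels on its own node
        return False, f"non-kubevirt.io labels changed: {', '.join(foreign)}"
    return True, "allowed"
```

In a real cluster this logic would live in an admission layer (a ValidatingAdmissionPolicy or webhook), not in client code; the sketch only makes the two checks explicit.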

Detection queries and SIEM rules (practical examples)

- Kubernetes audit rule: alert when a PATCH or UPDATE to a Node object is authenticated as the virt-handler service account and the action targets a node other than the one hosting that virt-handler instance.

- Alert on frequent changes to kubevirt.io/schedulable or kubevirt.io/heartbeat labels by non-system identities.

- Alert on cluster-wide VMI updates originating from virt-handler service accounts.

- Correlate anomalous VMI lifecycle transitions (Running → Succeeded → re-created on different node) with node label modifications in audit logs.
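The first two rules can be prototyped against Kubernetes audit events (audit.k8s.io/v1 JSON, one event per line). Several assumptions need checking against your cluster: the virt-handler identity is taken to be `system:serviceaccount:kubevirt:kubevirt-handler`; the caller's node is read from `user.extra["authentication.kubernetes.io/node-name"]`, which recent Kubernetes versions populate for pod-bound service account tokens (when it is absent, the cross-node rule stays silent); and the label rule needs Request-level auditing so that `requestObject` is recorded. The flip threshold is an arbitrary heuristic, since other components can legitimately mark nodes unschedulable.

```python
import json
from collections import Counter
from typing import Dict, Iterable, List, Optional

# Assumed identity and audit field names; verify both for your installation.
HANDLER_USER = "system:serviceaccount:kubevirt:kubevirt-handler"
NODE_EXTRA_KEY = "authentication.kubernetes.io/node-name"
SCHEDULABLE = "kubevirt.io/schedulable"
WRITE_VERBS = {"patch", "update"}


def origin_node(event: dict) -> Optional[str]:
    """Node the calling pod runs on, if the API server recorded it."""
    values = (event.get("user", {}).get("extra") or {}).get(NODE_EXTRA_KEY) or []
    return values[0] if values else None


def patched_labels(event: dict) -> Dict[str, str]:
    """Labels in a merge-style request body (JSON Patch lists are not handled)."""
    body = event.get("requestObject")
    if not isinstance(body, dict):
        return {}
    return (body.get("metadata") or {}).get("labels") or {}


def findings(events: Iterable[dict], flip_threshold: int = 3) -> List[str]:
    """Apply the cross-node and schedulable-flip rules to a batch of events."""
    alerts: List[str] = []
    flips: Counter = Counter()
    for ev in events:
        ref = ev.get("objectRef", {})
        if ref.get("resource") != "nodes" or ev.get("verb") not in WRITE_VERBS:
            continue
        target = ref.get("name")
        user = ev.get("user", {}).get("username")
        src = origin_node(ev)
        # Rule 1: the handler identity writes a Node other than the one it runs on.
        if user == HANDLER_USER and src is not None and src != target:
            alerts.append(f"cross-node write: {src} -> {target}")
        # Rule 2: count requests that mark a node unschedulable for VMIs.
        if patched_labels(ev).get(SCHEDULABLE) == "false":
            flips[target] += 1
    for node, count in sorted(flips.items()):
        if count >= flip_threshold:
            alerts.append(f"{SCHEDULABLE}=false set {count} times on {node}")
    return alerts


def read_audit_log(path: str) -> List[dict]:
    """Load a JSON-lines audit log written by the API server's log backend."""
    with open(path) as fh:
        return [json.loads(line) for line in fh if line.strip()]
```

Usage: `for alert in findings(read_audit_log(path)): print(alert)`, where `path` points at your audit log file. In production the same logic would normally be expressed as a SIEM query rather than a script.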

Risk assessment — attack scenarios and what’s realistic

- Realistic short-chain attack:

  - An attacker first obtains code execution inside a non-privileged pod or compromises a node (for example via a vulnerable daemon or misconfigured container). From there, they escalate to access the virt-handler pod or node context, retrieve the service account token, and call the Kubernetes API to patch Node labels and VMI metadata. Because the attack uses valid API operations, it tends to blend into normal-looking control-plane traffic.

- Real-world impact:

  - Confidentiality: limited in the direct sense; the bug’s primary immediate impact is control over scheduling and placement, which could be weaponized for lateral movement (by moving sensitive workloads to attacker-controlled nodes) or escalation if combined with other host-level vulnerabilities.

  - Integrity/availability: moderate risk. Forcing VM migration to a compromised node opens additional attack paths and may disrupt workloads. The advisory frames the impact in terms of the attacker being able to force migration/placement, not immediate remote code execution of arbitrary workloads on other nodes without additional conditions.

- Exploitability:

  - The GitHub advisory documents a low-complexity sequence to achieve the label patch and VMI update, making exploitation plausible after initial access to virt-handler credentials. However, the initial step still requires a containment failure or the compromise of a node or virt-handler process. Organizations that already strongly isolate node management and harden node surfaces therefore have a material defensive advantage.

Upstream and maintainer perspective — why did this happen?

The root cause sits squarely in default privilege scoping and reconciliation behavior: KubeVirt’s operator reconciles RBAC roles and ClusterRoles, and the default model historically granted virt-handler broad rights to update VMI resources and to modify Node labels used to indicate schedulability. Where an operator reconciles roles automatically, administrators cannot simply “deny” a permission: they must change the operator’s configuration or upstream role definitions, which is why default scoping changes and the introduction of a migrate-specific ClusterRole were part of the longer-term hardening trajectory of the project. The advisory also references historical work (ValidatingAdmissionPolicy and later admission-mode controls) to limit where virt-handler can write on Node objects and to confine label updates to kubevirt.io-prefixed labels.

Longer-term recommendations for operators and maintainers

- Adopt a strict least-privilege model for all control-plane agents. Treat daemons that write Node objects or that manipulate scheduling metadata as high-value privileges.

- Maintain a “zero-trust” posture for node-level agents: assume that any node-facing component may be compromised, and design RBAC and network controls to limit the blast radius of a local compromise.

- Add per-node identities or enforce admission checks that bind actions to the originating node’s identity (as described in the advisory’s per-node service-account or userInfo recommendations). While operationally heavier, this change improves auditability and makes cross-node label manipulation more difficult.

- Keep a staged test and rollback plan for KubeVirt upgrades: virtualization workloads are sensitive to migration code paths and live-migration semantics, so validate in a representative stage cluster before broad rollout.

What we verified and what remains uncertain

Verified facts:

- The vulnerability and advisory text were published by the KubeVirt project as a GitHub security advisory and are tracked under CVE-2025-64436. The advisory describes the virt-handler permission model and the abuse of Node and VMI update capabilities.

- NVD and multiple vulnerability trackers have recorded CVE-2025-64436 and list a moderate severity score and the general exploit narrative described above.

- Upstream fix released in KubeVirt 1.7.0 (operators and maintainers marked this as the patched release).

Remaining uncertainties:

- Public exploit activity: at the time of advisory disclosure, community trackers did not show wide in-the-wild exploit campaigns leveraging this specific CVE, but event feeds could change rapidly. Treat exploit telemetry as time-sensitive and validate live threat feeds for indicators referencing CVE-2025-64436.

- Distribution/package mappings: downstream distributions or managed Kubernetes vendors may have different package versions or backports. Confirm your provider’s advisory and the exact patched release for managed clusters before automating updates.

Conclusion — prioritized action list

- Inventory all clusters and nodes running KubeVirt; map exact KubeVirt versions and virt-handler locations.

- Upgrade to KubeVirt 1.7.0 (or the vendor-marked fixed package) after testing in staging.

- While you prepare upgrades, harden RBAC for kubevirt roles, restrict network access to virt-handler, rotate service account tokens, and enable monitoring and alerts for node label and VMI updates authenticated by virt-handler identities.

- Add SIEM rules and audit-watchers for kubevirt.io/schedulable label changes, cross-node node PATCHes from virt-handler identities, and cluster-wide VMI update anomalies.

- For longer-term resilience, adopt least-privilege ClusterRoles, consider per-node service accounts for virt-handler, and verify that admission controls reducing write scope are enabled in your platform configuration.
