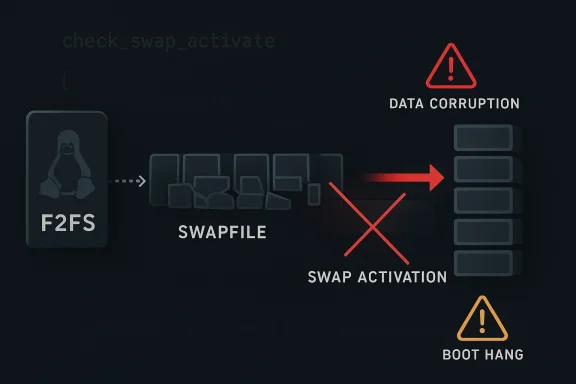

CVE-2026-23233 is a Linux kernel vulnerability in F2FS that can cause the filesystem to map the wrong physical blocks for a swapfile, potentially leading to data corruption, boot hangs, or dm-verity failures on affected systems. The issue was published through Microsoft’s vulnerability guidance and traced by NVD to the kernel.org fix titled “f2fs: fix to avoid mapping wrong physical block for swapfile.” In practical terms, this is not a generic “swap is broken” bug; it is a narrow but serious filesystem edge case involving fragmented swapfiles and extent handling on F2FS. (nvd.nist.gov)

Background

F2FS, short for Flash-Friendly File System, was designed to work well on flash storage by aligning its layout decisions with the realities of NAND behavior and wear patterns. That design matters because the same mechanisms that make F2FS efficient for mobile and embedded devices can also create sharp edges when the filesystem has to deal with unusual workloads such as swapfiles, fragmentation, and block migration. Linux kernel documentation describes F2FS as a filesystem that supports modern features such as encryption and inline encryption, which helps explain why it remains relevant in current kernels and device ecosystems.

Swapfiles are already more delicate than ordinary files because they are used as virtual memory backing store, which means the kernel expects the file’s physical mapping to be stable and correct. When a swapfile is fragmented, the kernel has to translate logical offsets into the right physical blocks with absolute precision, or memory pages end up being written to the wrong place. That can corrupt unrelated filesystem data rather than simply reducing swap performance, which is why swap-related bugs often look like general storage instability rather than a self-contained memory-management issue. (nvd.nist.gov)

This CVE appears to stem from a logic error in check_swap_activate() inside fs/f2fs/data.c, where the kernel misidentifies the final extent of a small swapfile under specific alignment conditions. NVD’s description says that when the first extent of a swapfile under 2 MB is not aligned to a section boundary, the function can treat it as the last extent, fail to continue mapping the rest of the file, and then create an incorrect swap extent record. That is a classic example of a low-level boundary bug with outsize consequences: one rounding mistake in extent handling can redirect future swap writes into someone else’s data. (nvd.nist.gov)

The CVE is especially interesting because it is not framed as an exploit in the traditional remote-code-execution sense. Instead, it is a corruption bug that can still be security-relevant because it can damage file integrity, destabilize systems, and in some scenarios trigger integrity-protection mechanisms such as dm-verity. NVD lists a High CVSS 3.1 score of 7.8 with local attack requirements, reflecting that the attacker needs some level of local control or the ability to exercise the swap path, but the impact can be severe once the condition is triggered. (nvd.nist.gov)

What the Bug Actually Does

The core of the problem is that swap activation on F2FS appears to have mishandled how it continues scanning extents after migrating blocks in the tail of a swapfile. According to NVD’s record of the kernel fix, the code path could decide that a rounded-up number of physical blocks effectively represented the last extent even when it did not, causing the remaining extents never to be processed. Once that happens, the swap subsystem believes it has a complete mapping when in reality only the first chunk is accounted for. (nvd.nist.gov)

Why that matters

If the kernel later writes swapped-out pages based on that incomplete extent map, those writes may go to the wrong physical location. That means the bug can overwrite unrelated data, which is much worse than simply wasting a little swap space. In effect, the filesystem and the memory manager lose synchronization on where a page really lives, and data integrity becomes the casualty. (nvd.nist.gov)

There is also a subtler operational risk: corruption in a swap-backed system may not appear immediately at the moment of activation. The system can run for some time before the corrupted write path is exercised, which makes the issue harder to diagnose. That delayed failure pattern is exactly why filesystem bugs often surface as “random” crashes, boot failures, or impossible-to-reproduce corruption reports. (nvd.nist.gov)

- The flaw affects F2FS swapfile extent handling.

- It is triggered by a fragmented swapfile under specific alignment conditions.

- The result is wrong physical block mapping.

- The downstream symptom can be data corruption, not just swap failure.

- Integrity layers such as dm-verity may detect the damage and react. (nvd.nist.gov)
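To make the points above concrete, here is a minimal Python sketch that models a swap extent map as a list of records. The block numbers and the SwapExtent and resolve names are invented for illustration and are not kernel structures. It only shows how a single record stretched over the whole file sends later pages to blocks the file does not own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SwapExtent:
    logical_start: int   # first page offset inside the swapfile
    physical_start: int  # first block on the device
    length: int          # number of blocks covered


def resolve(extents, page):
    """Translate a swapfile page offset into a physical block number."""
    for ext in extents:
        if ext.logical_start <= page < ext.logical_start + ext.length:
            return ext.physical_start + (page - ext.logical_start)
    raise ValueError(f"page {page} is not covered by any extent")


# Hypothetical on-disk layout: 256 blocks split into two non-contiguous runs.
actual_layout = [
    SwapExtent(logical_start=0, physical_start=1000, length=100),
    SwapExtent(logical_start=100, physical_start=5000, length=156),
]

# What an incomplete scan would record: the first run stretched over the file.
faulty_map = [SwapExtent(logical_start=0, physical_start=1000, length=256)]

# Every physical block that really belongs to the swapfile.
owned = {e.physical_start + i for e in actual_layout for i in range(e.length)}

page = 150
correct_block = resolve(actual_layout, page)  # lands inside the second run
wrong_block = resolve(faulty_map, page)       # lands past the end of the first run

print(correct_block, wrong_block, wrong_block in owned)
```

In this toy layout the faulty map resolves page 150 to a block outside both real runs, which is the "someone else's data" case described above.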

Reproduction Conditions

NVD’s description makes clear that this is not a universal F2FS problem. The reported scenario involves stress-ng’s swap stress test and a system with an F2FS-formatted userdata partition, with the issue showing up on kernel 6.6+ while 6.1 was unaffected in the cited report. That kind of version split strongly suggests a regression introduced by a later kernel change rather than a longstanding design flaw. (nvd.nist.gov)

The specific trigger pattern

The vulnerable pattern combines several conditions at once. The swapfile must be smaller than the F2FS section size (reported as 2 MB in the NVD description), and it must have a fragmented physical layout with multiple non-contiguous extents. In other words, this is the sort of scenario that ordinary desktop usage may never generate, but stress tests and certain storage behaviors absolutely can. (nvd.nist.gov)

The NVD write-up also includes a telling trace difference between affected and unaffected kernels. On kernel 6.6, the trace showed only the first extent being mapped during the second f2fs_map_blocks call, whereas on kernel 6.1 both extents were properly mapped. That contrast suggests the failure is not simply “fragmentation is bad,” but rather that the new code path failed to continue mapping after an initial migration step. (nvd.nist.gov)

- Kernel 6.6+ was implicated in the report.

- Kernel 6.1 reportedly behaved correctly.

- The swapfile had to be fragmented.

- The swapfile size had to be under 2 MB.

- The issue was reproduced with stress-ng swap pressure. (nvd.nist.gov)
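The layout-related conditions above can be expressed as a small predicate. This is a hedged sketch, not a detection tool: the 4 KiB block size, the (physical_start_block, length_in_blocks) extent tuples, and the function names are assumptions made for illustration, and the kernel-version condition is left out.

```python
BLOCK_SIZE = 4096                    # assumed block size in bytes
SECTION_SIZE = 2 * 1024 * 1024       # section size reported in the NVD description
BLOCKS_PER_SECTION = SECTION_SIZE // BLOCK_SIZE


def is_fragmented(extents):
    """True if any extent does not start where the previous one ended."""
    return any(
        prev_start + prev_len != next_start
        for (prev_start, prev_len), (next_start, _next_len)
        in zip(extents, extents[1:])
    )


def matches_reported_pattern(file_size_bytes, extents):
    """Check the layout conditions described in the report.

    extents: list of (physical_start_block, length_in_blocks) tuples in
    logical order (a hypothetical representation chosen for this sketch).
    """
    if not extents:
        return False
    smaller_than_section = file_size_bytes < SECTION_SIZE
    first_extent_unaligned = extents[0][0] % BLOCKS_PER_SECTION != 0
    return smaller_than_section and first_extent_unaligned and is_fragmented(extents)


one_mib = 1024 * 1024
print(matches_reported_pattern(one_mib, [(1000, 100), (5000, 156)]))
print(matches_reported_pattern(one_mib, [(1000, 256)]))
```

A file that fails any one of the three checks falls outside the reported pattern, which is why the bug does not show up under most ordinary workloads.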

Why stress tests matter

Stress tools often reveal bugs that ordinary workloads never hit because they intentionally push corner cases in allocation, migration, and reclaim. That is important for F2FS because it is a modern filesystem with complex allocation behavior and section-based rules that can interact badly with swap semantics. In other words, the bug may look niche, but the class of code involved is very much production-relevant. (nvd.nist.gov)

Technical Root Cause

The NVD description includes the heart of the logic failure: when the first extent is unaligned and roundup(nr_pblocks, blks_per_sec) overshoots the section max, the code subtracts a section’s worth of blocks and can end up with nr_pblocks = 0. At that point, the function assumes it has reached the last extent, sets the mapping length to the remainder of the file, and does not retry mapping the remaining extents. That is a dangerous combination of boundary math and control-flow inference. (nvd.nist.gov)

Why this is a filesystem-class bug

This kind of bug usually does not come from a lack of memory safety primitives or from malformed user input in the classic sense. It comes from the filesystem’s internal model diverging from the physical reality of the storage layout. Once the model becomes wrong, every subsequent I/O operation that depends on it becomes suspect, and the corruption can spread into areas that have nothing to do with the original swapfile. (nvd.nist.gov)

The most important takeaway is that swap activation is not just a bookkeeping step. On filesystems like F2FS, it may involve migration, validation, and remapping decisions that must all remain internally consistent. When the function decides it has seen “the last extent” too early, it is effectively cutting off the rest of the truth. That is the kind of failure mode that can survive code review and still slip into real-world kernels. (nvd.nist.gov)

- The bug is rooted in extent continuation logic.

- A rounding step can produce a misleading zero-length state.

- The function then takes a wrong control-flow branch.

- The result is an incomplete swap extent map.

- Later swap writes can hit unrelated physical blocks. (nvd.nist.gov)
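The rounding step summarized above can be walked through with a few lines of arithmetic. The sketch below is a simplified Python model built only from the NVD description, not the kernel source. The names nr_pblocks and blks_per_sec come from that description; cur_lblock, last_lblock, max_blocks, and plan_unaligned_extent are hypothetical names introduced for this example.

```python
def roundup(value, multiple):
    """Round value up to the next multiple (integer arithmetic)."""
    return -(-value // multiple) * multiple


def plan_unaligned_extent(nr_pblocks, cur_lblock, last_lblock,
                          blks_per_sec, max_blocks):
    """Model the decision described for an unaligned extent.

    Returns (blocks_to_handle, treated_as_last_extent).
    """
    rounded = roundup(nr_pblocks, blks_per_sec)
    if cur_lblock + rounded > max_blocks:
        # Overshoot: back off by one section's worth of blocks.
        rounded -= blks_per_sec
    if rounded == 0:
        # Zero is read as "this is the last extent", so the remainder of
        # the file is taken in one go and no further mapping is attempted.
        return last_lblock - cur_lblock, True
    return rounded, False


# Hypothetical 256-block swapfile (under one 512-block section) whose
# first extent is only 100 blocks long.
small = plan_unaligned_extent(nr_pblocks=100, cur_lblock=0, last_lblock=256,
                              blks_per_sec=512, max_blocks=256)

# Hypothetical 2048-block swapfile with the same 100-block first extent.
large = plan_unaligned_extent(nr_pblocks=100, cur_lblock=0, last_lblock=2048,
                              blks_per_sec=512, max_blocks=2048)

print(small, large)
```

In the small-file case the rounded value collapses to zero even though a second extent still exists, so the model reports the first extent as the last one. In the larger file the rounded value stays positive and the scan would continue.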

The significance of “wrong physical block”

“Wrong physical block” sounds abstract, but in filesystem terms it is one of the worst possible outcomes. It means data thought to belong to the swap subsystem is written to storage belonging to some other file or metadata region. If that happens, the damage can range from silent file corruption to unrecoverable boot failure, depending on what got overwritten. (nvd.nist.gov)

Impact on End Users

For consumers, the most visible effect is likely to be instability: crashes, boot hangs, or files that suddenly become corrupted after a swap-heavy workload. NVD’s description explicitly mentions dm-verity corruption errors and F2FS node corruption errors, which are the kinds of symptoms that can turn a seemingly healthy device into a machine that fails integrity checks or refuses to boot cleanly. (nvd.nist.gov)

Consumer devices are not immune

This matters because F2FS is widely used in Android and other flash-based environments where swap-like memory pressure scenarios can occur behind the scenes. Even if a typical user never manually creates a swapfile, vendor configurations, test images, or storage-maintenance activities may still exercise the affected code path. That makes the bug more relevant to device makers and OEMs than to most laptop owners, but the consequences can still surface as a customer-facing reliability problem. (nvd.nist.gov)

The “local” nature of the vulnerability should not lull anyone into thinking it is low risk. Many high-impact kernel bugs are local because the kernel itself is the thing being asked to perform a privileged operation, and the attack surface is often a legitimate administration or memory-management path. Here, the danger is not remote takeover; it is the filesystem being tricked into mis-placing its own writes. (nvd.nist.gov)

- Possible symptoms include boot loops.

- Integrity-checked systems may show verity failures.

- Users may see filesystem corruption after swap pressure.

- Reinstallation may not help if the underlying storage state is damaged.

- The problem may appear intermittent, which complicates support calls. (nvd.nist.gov)

Why this is hard to triage

From a support standpoint, corruption bugs like this are painful because the cause and effect are separated by time. A device may look fine until a specific combination of fragmentation and memory pressure happens, at which point the damage has already been done. That lag makes it easy to misdiagnose the issue as bad hardware, flaky flash, or unrelated kernel instability. (nvd.nist.gov)

Impact on Enterprises and OEMs

For enterprises, the most important issue is not whether employees are likely to handcraft F2FS swapfiles; it is whether managed images, embedded systems, or specialized Android-derived platforms do so. Systems with aggressive memory pressure, long uptimes, or automated test workloads are exactly where a latent storage-mapping bug can move from theoretical to expensive. In that sense, this CVE is a fleet-reliability problem as much as a security advisory. (nvd.nist.gov)

Where the risk concentrates

OEMs and device vendors should care because they often own the storage format, kernel configuration, and swap policy that determine whether the vulnerable path is reachable. Enterprise Linux teams should care when they support edge devices, kiosks, appliances, or mobile form factors where flash-optimized filesystems are common. The more customized the storage stack, the more likely it is that a bug like this will survive long enough to matter. (nvd.nist.gov)

There is also an operational angle around validation. If a vendor ships an image with F2FS and swap enabled, the fix is not just “apply a patch when convenient.” Teams need to retest suspend/resume, memory pressure, crash recovery, and integrity verification, because corruption bugs often manifest outside the exact trigger window. That is why filesystem regressions are so costly for device programs. (nvd.nist.gov)

- Vendor images may need kernel updates and regression testing.

- Storage layouts should be checked for fragmented swapfiles.

- Integrity tooling may need to be rerun after remediation.

- Field failures could resemble hardware faults.

- Recovery planning should assume possible on-disk corruption. (nvd.nist.gov)

The broader enterprise lesson

Enterprises often treat swap as a dull back-end concern, but bugs like this show that swap is part of the trusted compute path. When swap interacts with a filesystem that has its own allocation rules, the boundary between memory management and storage management gets thin very quickly. That is a reminder that kernel regressions can have infrastructure-wide consequences even when the vulnerable workload is rare. (nvd.nist.gov)

Security and Severity

NVD currently lists CVSS 3.1 Base Score 7.8 High for CVE-2026-23233, with a local attack vector and impacts rated high for confidentiality, integrity, and availability. Even though the bug is not a classic privilege-escalation or remote-code-execution flaw, the score reflects the seriousness of kernel-level corruption on a machine that may be trusted to protect data and maintain uptime. (nvd.nist.gov)

Why the rating is high

At first glance, a corruption bug may seem less dangerous than a code-execution bug, but the real-world damage profile can be just as severe. If swap writes go to the wrong physical blocks, the kernel can destroy data owned by unrelated files, trigger integrity errors, or create a boot-unfriendly storage state that requires recovery work. In environments that rely on dm-verity or similar integrity checks, the system may become unusable until repaired. (nvd.nist.gov)

The key security point is that integrity is the first casualty, but availability follows closely behind. In some environments, especially mobile and embedded systems, data corruption is effectively a service outage. If the corrupted area includes metadata or essential boot-linked content, a local bug becomes a platform-level recovery event. (nvd.nist.gov)

- Local attack vector

- High integrity impact

- High availability impact

- High confidentiality impact in the scoring model

- Severe consequences despite a narrow trigger condition (nvd.nist.gov)
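For readers who want to see how a 7.8 can arise from those ratings, the following Python sketch applies the published CVSS 3.1 base-score formula for unchanged scope. The article gives only the local attack vector and the three high impacts. The remaining metrics used here (low attack complexity, low privileges required, no user interaction, unchanged scope) are assumptions chosen for illustration, and the real NVD vector may differ.

```python
import math


def cvss_roundup(value):
    """CVSS 3.1 Roundup: smallest one-decimal number >= value."""
    scaled = round(value * 100000)
    if scaled % 10000 == 0:
        return scaled / 100000.0
    return (math.floor(scaled / 10000) + 1) / 10.0


def base_score_unchanged_scope(av, ac, pr, ui, c, i, a):
    """Base score for Scope: Unchanged, using the spec's metric weights."""
    iss = 1 - (1 - c) * (1 - i) * (1 - a)
    impact = 6.42 * iss
    exploitability = 8.22 * av * ac * pr * ui
    if impact <= 0:
        return 0.0
    return cvss_roundup(min(impact + exploitability, 10))


# Metric weights from the CVSS 3.1 specification.
AV_LOCAL = 0.55
AC_LOW = 0.77        # assumed, not stated in the article
PR_LOW = 0.62        # assumed, not stated in the article
UI_NONE = 0.85       # assumed, not stated in the article
IMPACT_HIGH = 0.56

score = base_score_unchanged_scope(AV_LOCAL, AC_LOW, PR_LOW, UI_NONE,
                                   IMPACT_HIGH, IMPACT_HIGH, IMPACT_HIGH)
print(score)
```

Under these assumed metrics the three high impact ratings contribute most of the total, which matches the article's point that the severity comes from the consequences rather than from ease of access.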

A note on attack surface

Because the issue is local, attackers need access to the system or a workflow that invokes the vulnerable swap path. That does not make it harmless; it means the exploitability depends on deployment style. Systems that expose more administrative flexibility, or that automatically create conditions for swap pressure, are naturally more exposed than tightly locked-down desktops. (nvd.nist.gov)

Fix Strategy and Remediation

The kernel fix, as summarized by NVD, is conceptually straightforward: after migrating all blocks in the tail of the swapfile, the code should look up block mapping information again instead of assuming the previous state correctly reflects the file’s remaining extents. That means the remedy is not a workaround layered on top of the problem, but a correction of the control flow that determines how mapping continues after migration. (nvd.nist.gov)

What administrators should do

The most important action is to install the vendor kernel update that includes the relevant fix for the affected kernel series. NVD lists patched ranges for several branches, including versions before the fixed builds in the 6.6, 6.9, 6.13, and 6.19 series. Administrators should verify the exact kernel flavor in use, because stable branch numbering and distro backports can make the practical fix version differ from the upstream point release. (nvd.nist.gov)

If a system depends on F2FS and swap, it is also wise to review whether swapfiles are fragmented or unusually small. That is not a substitute for patching, but it can reduce the likelihood of hitting the vulnerable path while maintenance windows are scheduled. Patch first, then validate the storage policy; that remains the safest sequence. (nvd.nist.gov)

- Deploy the vendor kernel update with the fix.

- Verify the exact kernel branch in production.

- Audit whether F2FS swapfiles are fragmented.

- Re-test boot, suspend, and memory pressure scenarios.

- Check for any prior filesystem corruption after remediation. (nvd.nist.gov)
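As a starting point for the audit items above, this Python sketch checks whether any active swapfile sits on an F2FS mount by parsing text in the format of /proc/swaps and /proc/mounts. The helper names and sample data are made up for illustration. The sketch only tells you whether a host's configuration is in scope; it says nothing about whether the running kernel already carries the fix.

```python
import posixpath


def parse_mounts(mounts_text):
    """Map mount point -> filesystem type from /proc/mounts-style text."""
    mounts = {}
    for line in mounts_text.splitlines():
        fields = line.split()
        if len(fields) >= 3:
            mounts[fields[1]] = fields[2]  # later entries override earlier ones
    return mounts


def filesystem_for(path, mounts):
    """Walk up from path until a known mount point is found."""
    current = posixpath.normpath(path)
    while True:
        if current in mounts:
            return mounts[current]
        parent = posixpath.dirname(current)
        if parent == current:
            return None
        current = parent


def swapfiles_on_f2fs(swaps_text, mounts_text):
    """Return paths of file-backed swap areas that live on an f2fs mount."""
    mounts = parse_mounts(mounts_text)
    hits = []
    for line in swaps_text.splitlines()[1:]:  # skip the header row
        fields = line.split()
        if len(fields) >= 2 and fields[1] == "file":
            if filesystem_for(fields[0], mounts) == "f2fs":
                hits.append(fields[0])
    return hits


# Made-up sample data in the same column layout as the real files.
sample_swaps = (
    "Filename\tType\tSize\tUsed\tPriority\n"
    "/data/swap/swapfile\tfile\t1020\t0\t-2\n"
    "/dev/zram0\tpartition\t2097148\t0\t100\n"
)
sample_mounts = (
    "/dev/root / ext4 rw,relatime 0 0\n"
    "/dev/block/dm-5 /data f2fs rw,nosuid,nodev 0 0\n"
)

print(swapfiles_on_f2fs(sample_swaps, sample_mounts))
```

On a live Linux system the two text arguments would come from reading /proc/swaps and /proc/mounts. Any path the function returns is a candidate for the fragmentation and size review described above.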

Why backports matter

Kernel CVEs rarely map one-to-one with a single upstream version, especially in long-term support distributions. The same functional fix may be backported into multiple stable branches with different build numbers, which is why simply memorizing “6.6.33” or “6.12.74” is not enough. Enterprises should rely on their distribution’s security tracker and update advisories rather than assuming upstream numbering tells the whole story. (nvd.nist.gov)

Broader Market and Competitive Implications

This bug is a reminder that Linux filesystem hardening remains a moving target, especially in environments where storage topology, flash behavior, and memory management intersect. Vendors that ship kernel-based mobile or embedded platforms compete not only on features but on how quickly they can absorb subtle storage fixes without destabilizing the rest of the system. That makes kernel maintenance a competitive differentiator, even if it is rarely marketed that way. (nvd.nist.gov)

Why rivals should care

Competing operating systems and platform vendors can draw a few lessons here. First, any filesystem that supports swap-like or block-remapping behavior must treat extent boundaries as security-sensitive. Second, stress testing needs to combine storage fragmentation with memory pressure, because that is where many latent bugs surface. Third, integrity-protected systems are especially unforgiving of swap-path corruption, which means testing has to go beyond basic boot success. (nvd.nist.gov)

The bug also illustrates why “niche” kernel advisories can have outsized ecosystem effects. A flaw in a flash-optimized filesystem can ripple into Android builds, appliance firmware, cloud edge devices, and OEM support channels. That wide potential footprint gives even a local-only bug strategic relevance, because it can affect release schedules, support costs, and certification cycles. (nvd.nist.gov)

- Kernel maintenance quality affects platform trust.

- Storage bugs can influence device certification timelines.

- OEMs may need more aggressive regression testing.

- Integrity failures can become customer-facing outages.

- Fast patching can be a market advantage. (nvd.nist.gov)

The competitive takeaway

In the long run, the vendors that win are often the ones that can absorb low-level fixes without creating new regressions. F2FS is still a practical choice for many flash-based systems, but this CVE reinforces that every filesystem feature carries operational tradeoffs. The lesson for the market is simple: performance optimizations must never outrun correctness. (nvd.nist.gov)

Strengths and Opportunities

The positive side of this story is that the issue was identified, documented, and assigned a fix path quickly enough for distributors and device makers to act on it. The vulnerability also highlights how mature Linux’s disclosure ecosystem has become: a bug reported through kernel channels can flow into NVD, distro trackers, and downstream security guidance with enough detail to support targeted remediation. That creates a better outcome than silent corruption ever could. (nvd.nist.gov)

- The fix appears to be surgical, not architectural.

- The bug was well characterized with reproducible conditions.

- Downstream vendors can likely backport the correction.

- The issue encourages better swap and stress testing.

- F2FS maintainers get a chance to harden extent logic further.

- Security teams can improve fleet hygiene around kernel updates.

- The event reinforces the value of integrity-aware testing. (nvd.nist.gov)

Risks and Concerns

The biggest concern is that corruption bugs can remain latent until they cause expensive field failures. Even if a given deployment is unlikely to use a small fragmented swapfile, the moment a vendor image, test harness, or recovery path creates that state, the risk becomes real. Because the failure may emerge later than the trigger, attribution can be difficult and recovery can be incomplete. (nvd.nist.gov)

- Silent data overwrite is possible.

- Corruption may be detected only after the fact.

- Boot-critical files can be affected.

- Devices with dm-verity may fail integrity checks.

- Support teams may misdiagnose the problem as hardware failure.

- Fragmented layouts are common enough to be a real risk.

- Delayed remediation increases the chance of fleet-wide exposure. (nvd.nist.gov)

What to Watch Next

The next thing to watch is how Linux distributions and device vendors map the upstream fix into their own stable branches. NVD already lists affected version ranges and references the patch set, but real-world risk declines only when the relevant vendor kernels are shipped and actually installed. For many organizations, the practical question is not “is there a fix?” but “is our build line included in it yet?” (nvd.nist.gov)

Watch these items closely

- Distro advisories that name specific backported kernel builds

- OEM firmware or Android image updates that include the F2FS fix

- Reports of reproducible corruption on 6.6-derived kernels

- Any follow-up analysis of the check_swap_activate() logic

- Additional filesystem bugs found by similar swap stress tests (nvd.nist.gov)

The final point is cultural: kernel bugs like this remind administrators that storage correctness is part of security, not just reliability. When a filesystem can map a swap write to the wrong block, the line between “bug” and “vulnerability” disappears very quickly. The best defense is disciplined patching, careful workload testing, and a willingness to treat integrity failures as first-class incidents. (nvd.nist.gov)

CVE-2026-23233 is a classic example of a small-looking kernel defect with a large blast radius: a subtle extent-mapping mistake in F2FS can turn ordinary swap activity into corruption, instability, and integrity failures. The fix is straightforward in principle, but the lesson is bigger than the patch itself. As Linux continues to power flash-heavy devices and specialized deployments, the systems that stay safest will be the ones that treat low-level correctness as a security requirement, not an implementation detail.

Source: MSRC Security Update Guide - Microsoft Security Response Center